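`model.eval()`: puts the model in evaluation mode, so Dropout is disabled and BatchNorm uses its running statistics instead of per-batch statistics.

`with torch.no_grad()`: turns off gradient tracking, so activations are no longer cached for the backward pass, which saves GPU memory.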
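The two switches are independent and are usually combined at inference time. A minimal sketch, assuming a small made-up `nn.Sequential` model (the layer sizes are illustrative, not from the notes):

```python
import torch
from torch import nn

# Hypothetical model containing both BatchNorm and Dropout.
model = nn.Sequential(nn.Linear(4, 4), nn.BatchNorm1d(4), nn.Dropout(p=0.5))
x = torch.randn(8, 4)

# Training mode: Dropout is active, BatchNorm uses batch statistics,
# and the autograd graph is recorded.
model.train()
train_out = model(x)
train_tracks_grad = train_out.requires_grad

# Evaluation mode plus no_grad: deterministic output, no graph recorded.
model.eval()
with torch.no_grad():
    eval_out_1 = model(x)
    eval_out_2 = model(x)

outputs_match = torch.equal(eval_out_1, eval_out_2)
eval_tracks_grad = eval_out_1.requires_grad
```

Note that `model.eval()` alone does not stop gradient tracking, and `torch.no_grad()` alone does not change how Dropout or BatchNorm behave.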

Matrix multiplication:

    y1 = tensor @ tensor.T
    y2 = tensor.matmul(tensor.T)
    y3 = torch.rand_like(y1)  # pre-allocated output buffer; its values are overwritten
    torch.matmul(tensor, tensor.T, out=y3)

Element-wise multiplication:

    z1 = tensor * tensor
    z2 = tensor.mul(tensor)
    z3 = torch.rand_like(tensor)  # pre-allocated output buffer; its values are overwritten
    torch.mul(tensor, tensor, out=z3)
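The difference is easiest to see in the shapes and values. A small sketch, assuming a 2x3 example tensor (the notes do not define `tensor`):

```python
import torch

# Assumed example input: [[0, 1, 2], [3, 4, 5]]
tensor = torch.arange(6, dtype=torch.float32).reshape(2, 3)

# Matrix product: (2, 3) @ (3, 2) -> (2, 2)
mat = tensor @ tensor.T

# Element-wise product: shape stays (2, 3)
elem = tensor * tensor

# out= writes the result into an existing buffer instead of allocating a new one.
buf = torch.empty(2, 2)
torch.matmul(tensor, tensor.T, out=buf)
```

Here `mat` is `[[5, 14], [14, 50]]` and `elem` is `[[0, 1, 4], [9, 16, 25]]`.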

A tensor that holds a single element can be converted to a plain Python value with `item()`:

    agg = tensor.sum()
    agg_item = agg.item()
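A short sketch of what `item()` returns, assuming a 2x3 tensor of ones as input (an illustrative choice):

```python
import torch

tensor = torch.ones(2, 3)  # assumed example input

agg = tensor.sum()       # 0-dimensional tensor
agg_item = agg.item()    # plain Python float

# Any single-element tensor works, whatever its shape, e.g. 1x1.
one_by_one = torch.tensor([[7]]).item()  # plain Python int

# For tensors with more than one element, tolist() gives nested Python lists.
values = tensor.tolist()
```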

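A CPU tensor and the NumPy array converted from it share the same memory, so changing one also changes the other:

    # Tensor -> NumPy array
    n = torch.ones(5).numpy()
    # NumPy array -> Tensor
    n = np.ones(5)
    t = torch.from_numpy(n)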
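The sharing can be checked with in-place updates in both directions. A minimal sketch (the variable names `n2`, `t2`, and `t3` are only for illustration):

```python
import numpy as np
import torch

# Tensor -> NumPy: n is a view on t's memory.
t = torch.ones(5)
n = t.numpy()
t.add_(1)                  # in-place update on the tensor; n now holds 2s as well

# NumPy -> Tensor: t2 is a view on n2's memory (and keeps float64).
n2 = np.ones(5)
t2 = torch.from_numpy(n2)
np.add(n2, 1, out=n2)      # in-place update on the array; t2 now holds 2s as well

# torch.tensor(...) copies, so t3 is independent of n2.
t3 = torch.tensor(n2)
n2[0] = 100
```

After the last line, `t2[0]` is 100 while `t3[0]` is still 2.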

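If the dataset already exists in the local `root` folder, it is read from there; otherwise, if `download=True` is specified, it is downloaded from the remote source.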

    import torch
    from torch.utils.data import Dataset
    from torchvision import datasets
    from torchvision.transforms import ToTensor, Lambda

    training_data = datasets.FashionMNIST(
        root="data",
        train=True,
        download=True,
        transform=ToTensor(),
        target_transform=Lambda(
            lambda y: torch.zeros(10, dtype=torch.float).scatter_(0, torch.tensor(y), value=1)
        ),
    )

`Dataset` class: given an index, returns one sample.

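The data can all stay on disk; each sample is loaded only when it is actually requested.

To define a custom dataset, subclass `Dataset` and override `__init__`, `__len__`, and `__getitem__`.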
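A minimal sketch of such a subclass. `SquaresDataset` is a hypothetical toy class that computes each sample on demand, standing in for reading one file from disk per index:

```python
import torch
from torch.utils.data import Dataset


class SquaresDataset(Dataset):
    """Hypothetical dataset: sample i is the pair (i, i * i)."""

    def __init__(self, size):
        # Store only metadata here; no sample is built yet.
        self.size = size

    def __len__(self):
        return self.size

    def __getitem__(self, idx):
        # Build (or, in a real dataset, load from disk) exactly one sample.
        if not 0 <= idx < self.size:
            raise IndexError(idx)
        x = torch.tensor([float(idx)])
        y = torch.tensor([float(idx * idx)])
        return x, y


dataset = SquaresDataset(10)
x, y = dataset[3]
```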

`DataLoader` class: batching, shuffling (the sampling strategy), multiprocess loading, pinned memory, ...
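These options map directly onto `DataLoader` arguments. A small sketch over an in-memory `TensorDataset` (the data and batch size are made up for illustration):

```python
import torch
from torch.utils.data import DataLoader, TensorDataset

# Assumed toy data: 10 samples with one feature each, labels 0..9.
features = torch.arange(10, dtype=torch.float32).unsqueeze(1)
labels = torch.arange(10)
dataset = TensorDataset(features, labels)

loader = DataLoader(
    dataset,
    batch_size=4,      # batching
    shuffle=True,      # random sampling order, reshuffled each epoch
    num_workers=0,     # 0 loads in the main process; > 0 uses worker processes
    pin_memory=False,  # True can speed up host-to-GPU copies
)

batch_sizes = []
seen_labels = []
for xb, yb in loader:
    batch_sizes.append(xb.shape[0])
    seen_labels.extend(yb.tolist())
```

With 10 samples and `batch_size=4`, one epoch yields batches of 4, 4, and 2, and every sample appears exactly once.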

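`ToTensor()`: converts a PIL `Image` into a Tensor, scaling pixel values to the range [0, 1].

`Lambda`: wraps a user-defined function; here it converts the integer label `y` into a 10-dimensional one-hot vector.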
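The one-hot conversion inside the `Lambda` can be tried on its own. A sketch that wraps the same `scatter_` call in a helper (`to_one_hot` is a hypothetical name) and compares it with `torch.nn.functional.one_hot`:

```python
import torch
import torch.nn.functional as F


def to_one_hot(y, num_classes=10):
    # Start from zeros and write a 1 at position y along dimension 0.
    return torch.zeros(num_classes, dtype=torch.float).scatter_(0, torch.tensor(y), value=1)


vec = to_one_hot(3)

# Equivalent result using the built-in helper (it returns int64, hence .float()).
alt = F.one_hot(torch.tensor(3), num_classes=10).float()
```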

All model layers inherit from `torch.nn.Module`.

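    class NeuralNetwork(nn.Module):
        ...  # class body omitted

The Module must be moved to the device:

    model = NeuralNetwork().to(device)

The input data must be on the same device as well:

    X = torch.rand(1, 28, 28, device=device)

Do not call `Module.forward` directly. Calling `Module(input)` instead ensures that the registered pre-forward and post-forward hooks run:

    logits = model(X)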
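The hook behavior can be observed with a forward hook. A sketch under stated assumptions: the layers inside `NeuralNetwork` (a `Flatten` followed by one `Linear`) are hypothetical, since the notes do not show the class body, and `device` is chosen with the usual CUDA check:

```python
import torch
from torch import nn

# Assumption: use the GPU when available, otherwise the CPU.
device = "cuda" if torch.cuda.is_available() else "cpu"


class NeuralNetwork(nn.Module):
    """Hypothetical body for illustration only."""

    def __init__(self):
        super().__init__()
        self.flatten = nn.Flatten()
        self.linear = nn.Linear(28 * 28, 10)

    def forward(self, x):
        return self.linear(self.flatten(x))


model = NeuralNetwork().to(device)
X = torch.rand(1, 28, 28, device=device)

# Record the output shape every time the hook fires.
calls = []
handle = model.register_forward_hook(
    lambda module, inputs, output: calls.append(tuple(output.shape))
)

logits = model(X)          # goes through __call__, so the hook fires
direct = model.forward(X)  # bypasses __call__, so the hook is skipped

handle.remove()
```

After both calls, `calls` contains a single entry, which shows that only `model(X)` triggered the hook even though both calls return the same values.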

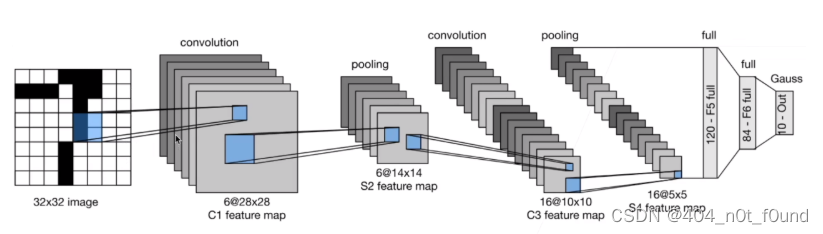
