【0】Resource configuration file

[root@mcwk8s03 mcwtest]# ls

mcwdeploy.yaml

[root@mcwk8s03 mcwtest]# cat mcwdeploy.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: mcwpython
  name: mcwtest-deploy
spec:
  replicas: 1
  selector:
    matchLabels:
      app: mcwpython
  template:
    metadata:
      labels:
        app: mcwpython
    spec:
      containers:
      - command:
        - sh
        - -c
        - echo 123 >>/mcw.txt && cd / && rm -rf /etc/yum.repos.d/* && curl -o /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-vault-8.5.2111.repo && yum install -y python2 && python2 -m SimpleHTTPServer 20000
        image: centos
        imagePullPolicy: IfNotPresent
        name: mcwtest
      dnsPolicy: "None"
      dnsConfig:
        nameservers:
        - 8.8.8.8
        - 8.8.4.4
        searches:
        #- namespace.svc.cluster.local
        - my.dns.search.suffix
        options:
        - name: ndots
          value: "5"
---
apiVersion: v1
kind: Service
metadata:
  name: mcwtest-svc
spec:
  ports:
  - name: mcwport
    port: 2024
    protocol: TCP
    targetPort: 20000
  selector:
    app: mcwpython
  type: NodePort

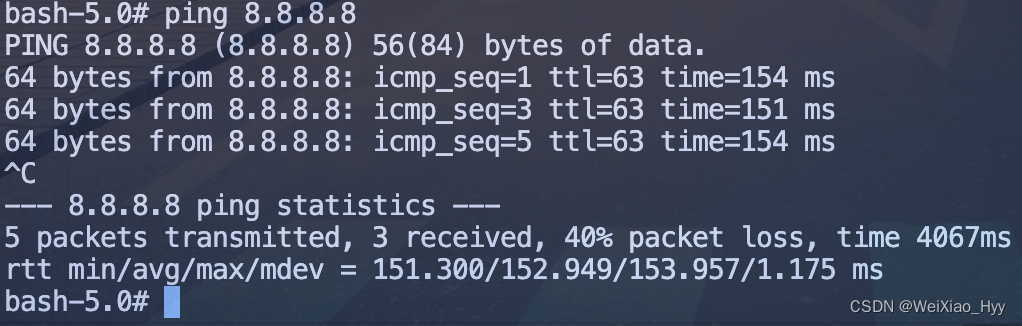

【1】Check the service: some addresses are reachable, some are not

[root@mcwk8s03 mcwtest]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.2.0.1 <none> 443/TCP 583d

mcwtest-svc NodePort 10.2.0.155 <none> 2024:41527/TCP 133m

nginx ClusterIP None <none> 80/TCP 413d

[root@mcwk8s03 mcwtest]# curl -I 10.2.0.155

curl: (7) Failed connect to 10.2.0.155:80; Connection timed out

[root@mcwk8s03 mcwtest]# curl -I 10.0.0.33:41527

curl: (7) Failed connect to 10.0.0.33:41527; Connection refused

[root@mcwk8s03 mcwtest]# curl -I 10.0.0.36:41527

curl: (7) Failed connect to 10.0.0.36:41527; Connection timed out

[root@mcwk8s03 mcwtest]# curl -I 10.0.0.35:41527

HTTP/1.0 200 OK

Server: SimpleHTTP/0.6 Python/2.7.18

Date: Tue, 04 Jun 2024 16:38:18 GMT

Content-type: text/html; charset=ANSI_X3.4-1968

Content-Length: 816
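
The addresses worth testing in this step follow from the `PORT(S)` column of `kubectl get svc`: the clusterIP listens on the Service port (2024) and every node on the nodePort. Note that the first `curl` above gives no port, so it goes to port 80 rather than 2024. A minimal Python sketch, assuming the `port:nodePort/protocol` format shown above; `parse_ports` and `curl_targets` are hypothetical helper names:

```python
def parse_ports(column):
    """Split a PORT(S) value such as '2024:41527/TCP' into its parts."""
    ports, protocol = column.split("/")
    port, node_port = (int(p) for p in ports.split(":"))
    return port, node_port, protocol


def curl_targets(cluster_ip, ports_column, node_ips):
    """List every address:port pair that should reach the Service."""
    port, node_port, _ = parse_ports(ports_column)
    targets = [f"{cluster_ip}:{port}"]                   # clusterIP uses the Service port
    targets += [f"{ip}:{node_port}" for ip in node_ips]  # each node uses the nodePort
    return targets


if __name__ == "__main__":
    for target in curl_targets("10.2.0.155", "2024:41527/TCP",
                               ["10.0.0.33", "10.0.0.35", "10.0.0.36"]):
        print("curl -I", target)
```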

【2】The one IP that works is the host the container runs on, so the next thing to check is whether containers can communicate across hosts

[root@mcwk8s03 mcwtest]# kubectl get pod -o wide|grep mcwtest

mcwtest-deploy-6465665557-g9zjd 1/1 Running 0 37m 172.17.98.13 mcwk8s05 <none> <none>

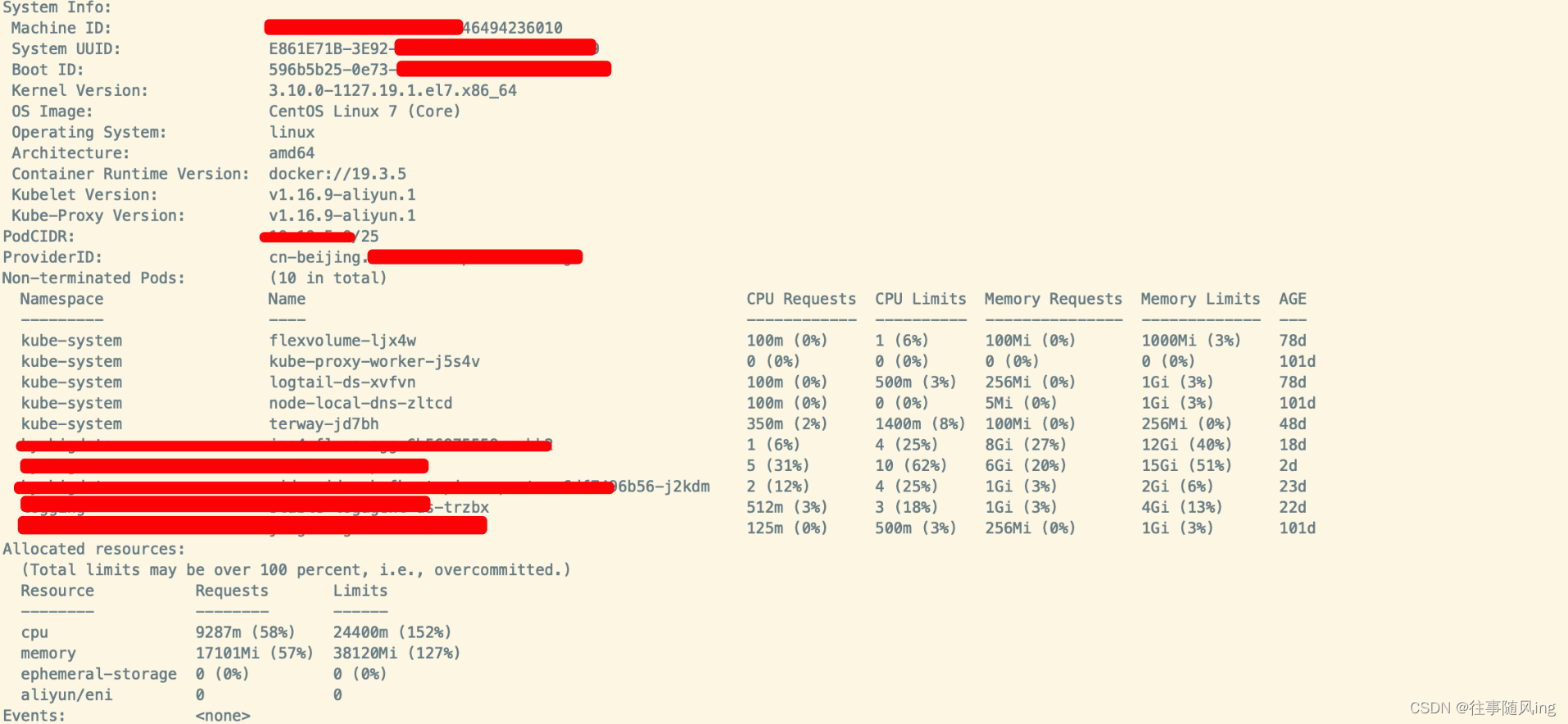

[root@mcwk8s03 mcwtest]# kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

mcwk8s05 Ready <none> 580d v1.15.12 10.0.0.35 <none> CentOS Linux 7 (Core) 3.10.0-693.el7.x86_64 docker://20.10.21

mcwk8s06 Ready <none> 580d v1.15.12 10.0.0.36 <none> CentOS Linux 7 (Core) 3.10.0-693.el7.x86_64 docker://20.10.21

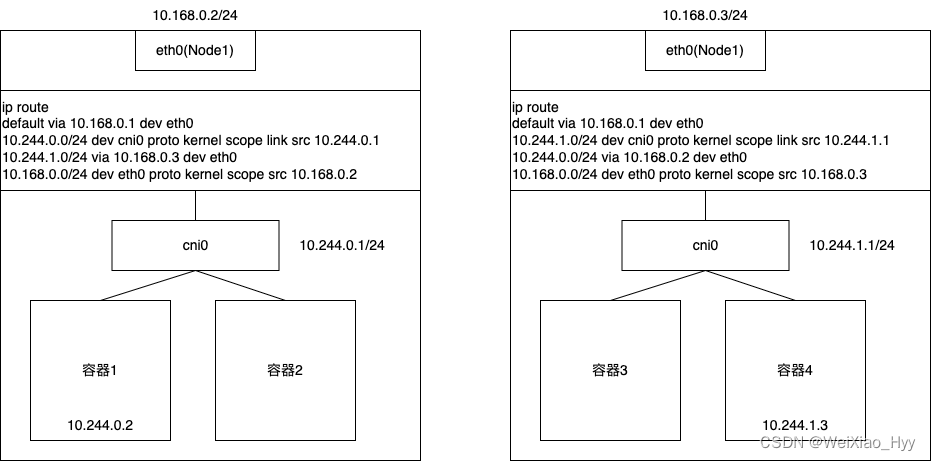
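
The reasoning in this step, matching the pod's node against the one node IP that answered, can be sketched in Python. This is a minimal sketch that assumes the whitespace-separated column layout shown above (sixth column is the IP in both listings, seventh column of the pod row is the node); `pod_location` and `node_ips` are hypothetical helper names, not part of kubectl.

```python
def pod_location(pod_row):
    """Return (pod_ip, node_name) from one `kubectl get pod -o wide` row."""
    fields = pod_row.split()
    return fields[5], fields[6]


def node_ips(node_rows):
    """Map node name to INTERNAL-IP from `kubectl get nodes -o wide` rows."""
    return {f[0]: f[5] for f in (row.split() for row in node_rows)}


if __name__ == "__main__":
    pod = "mcwtest-deploy-6465665557-g9zjd 1/1 Running 0 37m 172.17.98.13 mcwk8s05 <none> <none>"
    nodes = [
        "mcwk8s05 Ready <none> 580d v1.15.12 10.0.0.35 <none> CentOS Linux 7 (Core) 3.10.0-693.el7.x86_64 docker://20.10.21",
        "mcwk8s06 Ready <none> 580d v1.15.12 10.0.0.36 <none> CentOS Linux 7 (Core) 3.10.0-693.el7.x86_64 docker://20.10.21",
    ]
    pod_ip, node = pod_location(pod)
    # The node IP printed here is the only one where the nodePort answered.
    print(pod_ip, node, node_ips(nodes)[node])
```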

【3】Check cross-host container connectivity. The container runs on host 05, so first check whether that host can reach the docker0 IPs of the other machines

[root@mcwk8s03 mcwtest]# ifconfig docker

docker0: flags=4099<UP,BROADCAST,MULTICAST> mtu 1500

inet 172.17.83.1 netmask 255.255.255.0 broadcast 172.17.83.255

ether 02:42:e9:a4:51:4f txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@mcwk8s03 mcwtest]#

[root@mcwk8s05 /]# ifconfig docker

docker0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.98.1 netmask 255.255.255.0 broadcast 172.17.98.255

inet6 fe80::42:18ff:fee1:e8fc prefixlen 64 scopeid 0x20<link>

ether 02:42:18:e1:e8:fc txqueuelen 0 (Ethernet)

RX packets 548174 bytes 215033771 (205.0 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 632239 bytes 885330301 (844.3 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@mcwk8s05 /]#

[root@mcwk8s06 ~]# ifconfig docker

docker0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.9.1 netmask 255.255.255.0 broadcast 172.17.9.255

inet6 fe80::42:f0ff:fefa:133e prefixlen 64 scopeid 0x20<link>

ether 02:42:f0:fa:13:3e txqueuelen 0 (Ethernet)

RX packets 229 bytes 31724 (30.9 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 212 bytes 53292 (52.0 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@mcwk8s06 ~]#

As the pings below show, host 05 cannot reach docker0 on the other machines, while 03 and 06 can reach each other.

[root@mcwk8s05 /]# ping -c 1 172.17.83.1

PING 172.17.83.1 (172.17.83.1) 56(84) bytes of data.

^C

--- 172.17.83.1 ping statistics ---

1 packets transmitted, 0 received, 100% packet loss, time 0ms

[root@mcwk8s05 /]# ping -c 1 172.17.9.1

PING 172.17.9.1 (172.17.9.1) 56(84) bytes of data.

^C

--- 172.17.9.1 ping statistics ---

1 packets transmitted, 0 received, 100% packet loss, time 0ms

[root@mcwk8s05 /]#

[root@mcwk8s06 ~]# ping -c 1 172.17.83.1

PING 172.17.83.1 (172.17.83.1) 56(84) bytes of data.

64 bytes from 172.17.83.1: icmp_seq=1 ttl=64 time=0.246 ms

--- 172.17.83.1 ping statistics ---

1 packets transmitted, 1 received, 0% packet loss, time 0ms

rtt min/avg/max/mdev = 0.246/0.246/0.246/0.000 ms

【4】etcd has no entry for host 05's docker subnet

[root@mcwk8s03 mcwtest]# etcdctl ls /coreos.com/network/subnets

/coreos.com/network/subnets/172.17.9.0-24

/coreos.com/network/subnets/172.17.83.0-24

[root@mcwk8s03 mcwtest]# etcdctl get /coreos.com/network/subnets/172.17.9.0-24

{"PublicIP":"10.0.0.36","BackendType":"vxlan","BackendData":{"VtepMAC":"2a:2c:21:3a:58:21"}}

[root@mcwk8s03 mcwtest]# etcdctl get /coreos.com/network/subnets/172.17.83.0-24

{"PublicIP":"10.0.0.33","BackendType":"vxlan","BackendData":{"VtepMAC":"b2:83:33:7b:fd:37"}}
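
The comparison made in this step (each host's docker0 address against the subnets registered in etcd) can be automated. A minimal Python sketch, assuming the key format `<network>-<prefix>` shown above; `subnet_from_key` and `unregistered_hosts` are hypothetical helper names, and the docker0 addresses are the ones from the `ifconfig` output in step 3:

```python
import ipaddress


def subnet_from_key(key):
    """Turn '/coreos.com/network/subnets/172.17.9.0-24' into 172.17.9.0/24."""
    return ipaddress.ip_network(key.rsplit("/", 1)[-1].replace("-", "/"))


def unregistered_hosts(etcd_keys, docker0_ips):
    """Return hosts whose docker0 address is in no subnet registered in etcd."""
    subnets = [subnet_from_key(k) for k in etcd_keys]
    return [host for host, ip in docker0_ips.items()
            if not any(ipaddress.ip_address(ip) in net for net in subnets)]


if __name__ == "__main__":
    keys = ["/coreos.com/network/subnets/172.17.9.0-24",
            "/coreos.com/network/subnets/172.17.83.0-24"]
    docker0 = {"mcwk8s03": "172.17.83.1",
               "mcwk8s05": "172.17.98.1",
               "mcwk8s06": "172.17.9.1"}
    print(unregistered_hosts(keys, docker0))
```

With the data from this article, only mcwk8s05 is reported, which matches the host that could not ping the other docker0 addresses.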

【5】Restart the flannel service on 05. If docker is not restarted as well, the network stays broken: docker0 does not pick up an IP from the new subnet

[root@mcwk8s05 ~]# systemctl restart flanneld.service

[root@mcwk8s05 ~]# ifconfig flannel.1

flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.89.0 netmask 255.255.255.255 broadcast 0.0.0.0

inet6 fe80::3470:76ff:feea:39b8 prefixlen 64 scopeid 0x20<link>

ether 36:70:76:ea:39:b8 txqueuelen 0 (Ethernet)

RX packets 1 bytes 40 (40.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 9 bytes 540 (540.0 B)

TX errors 0 dropped 8 overruns 0 carrier 0 collisions 0

[root@mcwk8s05 ~]# ifconfig docker

docker0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.98.1 netmask 255.255.255.0 broadcast 172.17.98.255

inet6 fe80::42:18ff:fee1:e8fc prefixlen 64 scopeid 0x20<link>

ether 02:42:18:e1:e8:fc txqueuelen 0 (Ethernet)

RX packets 551507 bytes 216568663 (206.5 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 635860 bytes 891305864 (850.0 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@mcwk8s05 ~]# systemctl restart docker

[root@mcwk8s05 ~]# ifconfig docker

docker0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.89.1 netmask 255.255.255.0 broadcast 172.17.89.255

inet6 fe80::42:18ff:fee1:e8fc prefixlen 64 scopeid 0x20<link>

ether 02:42:18:e1:e8:fc txqueuelen 0 (Ethernet)

RX packets 552135 bytes 216658479 (206.6 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 636771 bytes 892057926 (850.7 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0
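
The mismatch visible above (flannel.1 already on 172.17.89.0 while docker0 still holds 172.17.98.1 until docker is restarted) is easy to test for. A minimal Python sketch, assuming each host gets a /24 as the `-24` suffix of the etcd keys suggests; `docker0_matches_flannel` is a hypothetical helper name:

```python
import ipaddress


def docker0_matches_flannel(flannel_addr, docker0_addr, prefix_len=24):
    """True if docker0 sits inside the subnet that flannel.1 was assigned."""
    # strict=False lets us pass any address of the subnet, not only its base.
    lease = ipaddress.ip_network(f"{flannel_addr}/{prefix_len}", strict=False)
    return ipaddress.ip_address(docker0_addr) in lease


if __name__ == "__main__":
    # After restarting flanneld only: docker0 is still on the old subnet.
    print(docker0_matches_flannel("172.17.89.0", "172.17.98.1"))
    # After restarting docker as well: docker0 moved to the new subnet.
    print(docker0_matches_flannel("172.17.89.0", "172.17.89.1"))
```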

【6】Check again: etcd now has the subnet for node 05

[root@mcwk8s03 mcwtest]# etcdctl ls /coreos.com/network/subnets

/coreos.com/network/subnets/172.17.83.0-24

/coreos.com/network/subnets/172.17.9.0-24

/coreos.com/network/subnets/172.17.89.0-24

[root@mcwk8s03 mcwtest]# etcdctl get /coreos.com/network/subnets/172.17.89.0-24

{"PublicIP":"10.0.0.35","BackendType":"vxlan","BackendData":{"VtepMAC":"36:70:76:ea:39:b8"}}

【7】Test again: the pod on node 05 is now reachable through the NodePort on both 05 and 06

[root@mcwk8s03 mcwtest]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.2.0.1 <none> 443/TCP 583d

mcwtest-svc NodePort 10.2.0.155 <none> 2024:33958/TCP 154m

nginx ClusterIP None <none> 80/TCP 413d

[root@mcwk8s03 mcwtest]# curl -I 10.2.0.155:2024

curl: (7) Failed connect to 10.2.0.155:2024; Connection timed out

[root@mcwk8s03 mcwtest]# curl -I 10.0.0.33:33958

curl: (7) Failed connect to 10.0.0.33:33958; Connection refused

[root@mcwk8s03 mcwtest]# curl -I 10.0.0.35:33958

HTTP/1.0 200 OK

Server: SimpleHTTP/0.6 Python/2.7.18

Date: Tue, 04 Jun 2024 16:59:03 GMT

Content-type: text/html; charset=ANSI_X3.4-1968

Content-Length: 816

[root@mcwk8s03 mcwtest]# curl -I 10.0.0.36:33958

HTTP/1.0 200 OK

Server: SimpleHTTP/0.6 Python/2.7.18

Date: Tue, 04 Jun 2024 16:59:12 GMT

Content-type: text/html; charset=ANSI_X3.4-1968

Content-Length: 816

【8】03 is still unreachable, probably because 03 is the master and does not seem to act as a node; it is a pure master, so its IP cannot be used as a node IP. But why is the cluster IP unreachable?

[root@mcwk8s03 mcwtest]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.2.0.1 <none> 443/TCP 583d

mcwtest-svc NodePort 10.2.0.155 <none> 2024:33958/TCP 157m

nginx ClusterIP None <none> 80/TCP 413d

[root@mcwk8s03 mcwtest]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

mcwk8s05 Ready <none> 580d v1.15.12

mcwk8s06 Ready <none> 580d v1.15.12

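【9】Routing: after flannel restarts, the new subnet is written to etcd and the other nodes get a route for it. Docker on that host presumably has to be restarted as well so that containers are assigned IPs from the new subnet.

Before, the 03 master had only one flannel route: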

[root@mcwk8s03 mcwtest]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 10.0.0.254 0.0.0.0 UG 100 0 0 eth0

10.0.0.0 0.0.0.0 255.255.255.0 U 100 0 0 eth0

172.17.9.0 172.17.9.0 255.255.255.0 UG 0 0 0 flannel.1

172.17.83.0 0.0.0.0 255.255.255.0 U 0 0 0 docker0

[root@mcwk8s03 mcwtest]#

After the restart on node 05, 03 gained a flannel route for node 05:

[root@mcwk8s03 mcwtest]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 10.0.0.254 0.0.0.0 UG 100 0 0 eth0

10.0.0.0 0.0.0.0 255.255.255.0 U 100 0 0 eth0

172.17.9.0 172.17.9.0 255.255.255.0 UG 0 0 0 flannel.1

172.17.83.0 0.0.0.0 255.255.255.0 U 0 0 0 docker0

172.17.89.0 172.17.89.0 255.255.255.0 UG 0 0 0 flannel.1

[root@mcwk8s03 mcwtest]#

The same happens on 06, the healthy node.

Before:

[root@mcwk8s06 ~]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 10.0.0.254 0.0.0.0 UG 100 0 0 eth0

10.0.0.0 0.0.0.0 255.255.255.0 U 100 0 0 eth0

172.17.9.0 0.0.0.0 255.255.255.0 U 0 0 0 docker0

172.17.83.0 172.17.83.0 255.255.255.0 UG 0 0 0 flannel.1

[root@mcwk8s06 ~]#

After the restart, there is one more route:

[root@mcwk8s06 ~]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 10.0.0.254 0.0.0.0 UG 100 0 0 eth0

10.0.0.0 0.0.0.0 255.255.255.0 U 100 0 0 eth0

172.17.9.0 0.0.0.0 255.255.255.0 U 0 0 0 docker0

172.17.83.0 172.17.83.0 255.255.255.0 UG 0 0 0 flannel.1

172.17.89.0 172.17.89.0 255.255.255.0 UG 0 0 0 flannel.1

[root@mcwk8s06 ~]#

Node 05 had two flannel routes before, but both were wrong:

[root@mcwk8s05 /]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 10.0.0.254 0.0.0.0 UG 100 0 0 eth0

10.0.0.0 0.0.0.0 255.255.255.0 U 100 0 0 eth0

172.17.59.0 172.17.59.0 255.255.255.0 UG 0 0 0 flannel.1

172.17.61.0 172.17.61.0 255.255.255.0 UG 0 0 0 flannel.1

172.17.98.0 0.0.0.0 255.255.255.0 U 0 0 0 docker0

[root@mcwk8s05 /]#

After the restart, the routes on 05 were updated to the correct ones:

[root@mcwk8s05 /]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 10.0.0.254 0.0.0.0 UG 100 0 0 eth0

10.0.0.0 0.0.0.0 255.255.255.0 U 100 0 0 eth0

172.17.9.0 172.17.9.0 255.255.255.0 UG 0 0 0 flannel.1

172.17.83.0 172.17.83.0 255.255.255.0 UG 0 0 0 flannel.1

172.17.89.0 0.0.0.0 255.255.255.0 U 0 0 0 docker0
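
The "wrong routes" on 05 can be spotted mechanically: a flannel.1 route is stale when its destination is not one of the subnets registered in etcd. A minimal Python sketch, assuming the eight-column `route -n` layout shown above and the etcd key format from step 4; `flannel_routes` and `stale_routes` are hypothetical helper names:

```python
def flannel_routes(route_output):
    """Return destinations of routes that leave through flannel.1."""
    dests = []
    for line in route_output.splitlines():
        fields = line.split()
        # Data rows have 8 columns; the last one is the interface.
        if len(fields) == 8 and fields[7] == "flannel.1":
            dests.append(fields[0])
    return dests


def stale_routes(route_output, etcd_keys):
    """Flannel routes whose destination is not a subnet registered in etcd."""
    registered = {k.rsplit("/", 1)[-1].split("-")[0] for k in etcd_keys}
    return [d for d in flannel_routes(route_output) if d not in registered]


if __name__ == "__main__":
    before = """Kernel IP routing table
Destination Gateway Genmask Flags Metric Ref Use Iface
0.0.0.0 10.0.0.254 0.0.0.0 UG 100 0 0 eth0
10.0.0.0 0.0.0.0 255.255.255.0 U 100 0 0 eth0
172.17.59.0 172.17.59.0 255.255.255.0 UG 0 0 0 flannel.1
172.17.61.0 172.17.61.0 255.255.255.0 UG 0 0 0 flannel.1
172.17.98.0 0.0.0.0 255.255.255.0 U 0 0 0 docker0"""
    keys = ["/coreos.com/network/subnets/172.17.9.0-24",
            "/coreos.com/network/subnets/172.17.83.0-24"]
    print(stale_routes(before, keys))
```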

【10】Next, why the cluster IP is unreachable. The direct cause appears to be that there are no ipvs rules.

[root@mcwk8s03 mcwtest]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.2.0.1 <none> 443/TCP 583d

mcwtest-svc NodePort 10.2.0.155 <none> 2024:33958/TCP 3h3m

nginx ClusterIP None <none> 80/TCP 413d

[root@mcwk8s03 mcwtest]# curl -I 10.2.0.155:2024

curl: (7) Failed connect to 10.2.0.155:2024; Connection timed out

[root@mcwk8s03 mcwtest]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

[root@mcwk8s03 mcwtest]# systemctl status kube-proxy

Unit kube-proxy.service could not be found.

[root@mcwk8s03 mcwtest]#

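The other nodes all have ipvs rules. In ipvs mode these rules are created by kube-proxy, and our 03 master has no kube-proxy deployed, which explains both the empty ipvs table and the unreachable cluster IP on the master. If that is right, traffic to cluster IPs depends on the ipvs rules and is not directly affected by whether the apiserver is down; this still needs to be verified when there is time.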

[root@mcwk8s05 /]# ipvsadm -Ln|grep -C 1 10.2.0.155

-> 172.17.89.4:9090 Masq 1 0 0

TCP 10.2.0.155:2024 rr

-> 172.17.89.10:20000 Masq 1 0 0

[root@mcwk8s05 /]#

[root@mcwk8s05 /]# curl -I 10.2.0.155:2024

HTTP/1.0 200 OK

Server: SimpleHTTP/0.6 Python/2.7.18

Date: Tue, 04 Jun 2024 17:34:45 GMT

Content-type: text/html; charset=ANSI_X3.4-1968

Content-Length: 816
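
To check a whole `ipvsadm -Ln` listing for one Service instead of grepping it, the output can be parsed. A minimal Python sketch, assuming the layout shown above (a `TCP`/`UDP` line per virtual service followed by `->` lines for its real servers); `ipvs_backends` is a hypothetical helper name:

```python
def ipvs_backends(ipvsadm_output, virtual):
    """Return the real servers listed under one virtual service."""
    backends, inside = [], False
    for line in ipvsadm_output.splitlines():
        fields = line.split()
        if not fields:
            continue
        if fields[0] in ("TCP", "UDP"):
            # A new virtual service starts; remember whether it is ours.
            inside = len(fields) > 1 and fields[1] == virtual
        elif fields[0] == "->" and inside:
            backends.append(fields[1])
    return backends


if __name__ == "__main__":
    node05 = """IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port Forward Weight ActiveConn InActConn
TCP 10.2.0.155:2024 rr
  -> 172.17.89.10:20000 Masq 1 0 0"""
    print(ipvs_backends(node05, "10.2.0.155:2024"))
```

An empty result, as on the 03 master, means nothing on that host forwards the cluster IP.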

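【Summary】

When the container network of a single node stops working through nodeIP:nodePort and the like, first check whether the docker0 gateways have become unreachable.

After flannel or a similar network service restarts, even when the network plugin is deployed as a pod, consider restarting docker as well so that containers are assigned addresses from the new subnet. For example, on one occasion flannel kept restarting because of OOM, which caused a network failure on kube001.

Every machine appears to have a route to each other node's flannel subnet through its own flannel.1 interface, plus a route for its own docker0 subnet. That docker0 route has gateway 0.0.0.0, which means the subnet is directly connected and needs no next hop; it does not mean the traffic takes the default route.

From the above, the Service has a port used together with the clusterIP (2024 here), and clusterIP traffic is forwarded by ipvs rules. Traffic to nodeIP:nodePort and to clusterIP:port is both forwarded by ipvs rules to the backend container IP. The current host has routes to every node's flannel subnet, so as long as all flannel gateways and docker0 bridges are healthy and on matching subnets, these two interfaces (flannel.1 and docker0) are what carry cross-host container traffic.

As for how a request to nodeIP:nodePort or clusterIP:port ends up at the right pod: the ipvs rules select the backend pod. When the packet is forwarded to the pod's IP and port, the host's routing table, which holds one entry per host subnet installed by flannel, sends it out through flannel.1. etcd records which subnet belongs to which host, together with that host's IP. Once the packet reaches that host, the pod is reachable there over docker0, because all containers on a single host communicate through docker0 and can reach each other. Only cross-host traffic follows the route to flannel.1, uses the subnet-to-host mapping from etcd to find the destination host, and is then delivered to the container.

This is how the flannel plugin works; other network plugins follow similar principles. The ipvs proxy mode plays the role described here, and the iptables proxy mode does much the same.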
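
The route selection described in this summary can be illustrated with a longest-prefix-match lookup. A minimal Python sketch, assuming the routing table of mcwk8s06 after the fix in step 9; `lookup` is a hypothetical helper that only mimics the kernel's choice and ignores metrics:

```python
import ipaddress

# (destination, netmask, gateway, interface) rows from `route -n` on mcwk8s06
ROUTES = [
    ("0.0.0.0", "0.0.0.0", "10.0.0.254", "eth0"),
    ("10.0.0.0", "255.255.255.0", "0.0.0.0", "eth0"),
    ("172.17.9.0", "255.255.255.0", "0.0.0.0", "docker0"),
    ("172.17.83.0", "255.255.255.0", "172.17.83.0", "flannel.1"),
    ("172.17.89.0", "255.255.255.0", "172.17.89.0", "flannel.1"),
]


def lookup(dest_ip, routes=ROUTES):
    """Pick the most specific matching route and return (interface, next hop)."""
    ip = ipaddress.ip_address(dest_ip)
    best = None
    for dest, mask, gateway, iface in routes:
        net = ipaddress.ip_network(f"{dest}/{mask}")
        if ip in net and (best is None or net.prefixlen > best[0].prefixlen):
            best = (net, gateway, iface)
    if best is None:
        raise LookupError(f"no route to {dest_ip}")
    _, gateway, iface = best
    # Gateway 0.0.0.0 means the destination is directly connected (on-link).
    return iface, (None if gateway == "0.0.0.0" else gateway)


if __name__ == "__main__":
    print(lookup("172.17.9.5"))    # a pod on this host
    print(lookup("172.17.89.10"))  # the mcwtest pod on mcwk8s05
    print(lookup("8.8.8.8"))       # anything else
```

A pod on the same host resolves to docker0 with no next hop, a pod on another host resolves to flannel.1, and only unrelated destinations fall through to the default route.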

Article reposted from: 马昌伟
