1. Prepare the dataset

1.1 Converting VOC data to YOLO format

VOC format

The directory hierarchy is as follows:

    VOCdevkit
    └── VOC2007
        ├── Annotations
        ├── ImageSets
        └── JPEGImages
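The conversion script in this section reads image IDs from ImageSets/Main/train.txt and ImageSets/Main/val.txt. If those files do not exist yet, something like the following could generate them. This is only a minimal sketch: the helper names `split_ids` and `write_split_files`, the 80/20 ratio, and the fixed seed are illustrative assumptions, not part of the original workflow.

```python
import random
from pathlib import Path


def split_ids(image_ids, val_ratio=0.2, seed=0):
    """Shuffle image IDs deterministically and split them into (train, val)."""
    ids = sorted(image_ids)  # sort first so the result does not depend on input order
    random.Random(seed).shuffle(ids)
    n_val = int(len(ids) * val_ratio)
    return ids[n_val:], ids[:n_val]


def write_split_files(voc_root, val_ratio=0.2, seed=0):
    """Write ImageSets/Main/train.txt and val.txt from the XML files in Annotations."""
    voc_root = Path(voc_root)  # e.g. .../VOCdevkit/VOC2007
    ids = [p.stem for p in (voc_root / "Annotations").glob("*.xml")]
    train_ids, val_ids = split_ids(ids, val_ratio, seed)
    main_dir = voc_root / "ImageSets" / "Main"
    main_dir.mkdir(parents=True, exist_ok=True)
    (main_dir / "train.txt").write_text("\n".join(train_ids) + "\n")
    (main_dir / "val.txt").write_text("\n".join(val_ids) + "\n")
    return train_ids, val_ids
```

Calling `write_split_files` on the VOC2007 folder would produce one image ID per line, which is the form the conversion script splits on whitespace.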

YOLO format

The conversion script, voc_to_yolo.py:

    import shutil
    import xml.etree.ElementTree as ET
    from pathlib import Path

    from tqdm import tqdm


    def convert_label(path, lb_path, year, image_id, names):
        """Convert one VOC XML annotation file into a YOLO label file."""

        def convert_box(size, box):
            # size is (width, height); box is (xmin, xmax, ymin, ymax) in pixels.
            # Returns normalized (x_center, y_center, width, height).
            dw, dh = 1. / size[0], 1. / size[1]
            x, y, w, h = (box[0] + box[1]) / 2.0 - 1, (box[2] + box[3]) / 2.0 - 1, box[1] - box[0], box[3] - box[2]
            return x * dw, y * dh, w * dw, h * dh

        # Use context managers so both files are closed (the label file is flushed to disk)
        with open(path / f'VOC{year}/Annotations/{image_id}.xml') as in_file:
            tree = ET.parse(in_file)
        root = tree.getroot()
        size = root.find('size')
        w = int(size.find('width').text)
        h = int(size.find('height').text)

        with open(lb_path, 'w') as out_file:
            for obj in root.iter('object'):
                cls = obj.find('name').text
                if cls in names:
                    xmlbox = obj.find('bndbox')
                    bb = convert_box((w, h), [float(xmlbox.find(x).text) for x in ('xmin', 'xmax', 'ymin', 'ymax')])
                    cls_id = names.index(cls)  # class id
                    out_file.write(" ".join(str(a) for a in (cls_id, *bb)) + '\n')
                else:
                    print("category error: ", cls)


    year = "2007"
    image_sets = ["train", "val"]
    path = Path("H:\\work\\daodan_move\\ultralytics-main\\ultralytics\\datasets\\VOCdevkit\\")
    class_names = ["call","dislike","fist","four","like","mute","ok","one","palm","1","2","3","4","5","6","7","8","9","10"]

    for image_set in image_sets:
        imgs_path = path / 'images' / image_set
        lbs_path = path / 'labels' / image_set
        imgs_path.mkdir(exist_ok=True, parents=True)
        lbs_path.mkdir(exist_ok=True, parents=True)

        with open(path / f'VOC{year}/ImageSets/Main/{image_set}.txt') as f:
            image_ids = f.read().strip().split()

        for image_id in tqdm(image_ids, desc=image_set):
            img_file = path / f'VOC{year}/JPEGImages/{image_id}.jpg'  # old img path
            lb_path = (lbs_path / img_file.name).with_suffix('.txt')  # new label path
            # img_file.rename(imgs_path / img_file.name)  # move image
            shutil.copyfile(img_file, imgs_path / img_file.name)  # copy image
            convert_label(path, lb_path, year, image_id, class_names)  # convert labels to YOLO format
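To make the box arithmetic in convert_box concrete: VOC stores corner coordinates in pixels, while YOLO expects a center point plus a width and height, each divided by the image size. The standalone sketch below repeats that calculation for a single box. The function name `voc_box_to_yolo` and the sample numbers are made up for illustration. It keeps the script's `- 1` offset on the center, which presumably compensates for VOC's 1-based pixel coordinates.

```python
def voc_box_to_yolo(img_w, img_h, xmin, ymin, xmax, ymax):
    """Convert VOC pixel corners to normalized YOLO (x_center, y_center, w, h)."""
    x_center = (xmin + xmax) / 2.0 - 1  # same -1 offset as convert_box in the script
    y_center = (ymin + ymax) / 2.0 - 1
    box_w = xmax - xmin
    box_h = ymax - ymin
    return x_center / img_w, y_center / img_h, box_w / img_w, box_h / img_h


# A 200x200 pixel box inside a 640x480 image (hypothetical values)
x, y, w, h = voc_box_to_yolo(640, 480, xmin=100, ymin=50, xmax=300, ymax=250)
print(f"0 {x:.6f} {y:.6f} {w:.6f} {h:.6f}")  # one YOLO label line for class 0
```

Each line of a YOLO label file has this shape: the class index followed by the four normalized values.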

1.2 Converting COCO format to YOLO format: conversion script

YOLO-format directory layout (the target of the conversion):

    VOCdevkit
    ├── images
    │   ├── train   # training images
    │   └── val     # validation images
    └── labels
        ├── train   # training label files
        └── val     # validation label files
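The images/labels split matters because, as far as I know, Ultralytics finds each label file by taking the image path, swapping the last `images` directory for `labels`, and changing the extension to `.txt`. The sketch below imitates that convention so you can check where a label is expected to live. `expected_label_path` is a hypothetical helper written for this post, not a library function.

```python
from pathlib import PurePosixPath


def expected_label_path(image_path):
    """Return the label path that pairs with an image under the images/labels layout."""
    parts = list(PurePosixPath(image_path).parts)
    # Walk the directory components from the end and replace the last "images" one
    for i in range(len(parts) - 2, -1, -1):
        if parts[i] == "images":
            parts[i] = "labels"
            break
    else:
        raise ValueError(f"no 'images' directory in {image_path!r}")
    return str(PurePosixPath(*parts).with_suffix(".txt"))


print(expected_label_path("VOCdevkit/images/train/000001.jpg"))
# VOCdevkit/labels/train/000001.txt
```

If an image has no file at the expected location, it is typically treated as having no objects, so a misplaced labels folder can silently produce an empty training set.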

    import json
    import os
    import shutil
    from collections import defaultdict

    from tqdm import tqdm

    coco_path = "F:/datasets/Apple_Detection_Swift-YOLO_192"
    output_path = "F:/vsCode/ultralytics/datasets/Apple"


    def convert_split(coco_split, yolo_split):
        """Convert one COCO split folder (e.g. "train") into YOLO images/labels folders."""
        os.makedirs(os.path.join(output_path, "images", yolo_split), exist_ok=True)
        os.makedirs(os.path.join(output_path, "labels", yolo_split), exist_ok=True)

        with open(os.path.join(coco_path, coco_split, "_annotations.coco.json"), "r") as f:
            coco = json.load(f)

        # Group annotations by image id once, instead of rescanning the whole list per image
        by_image = defaultdict(list)
        for annotation in coco["annotations"]:
            by_image[annotation["image_id"]].append(annotation)

        for image in tqdm(coco["images"], desc=yolo_split):
            scale_x = 1.0 / image["width"]
            scale_y = 1.0 / image["height"]
            label = ""
            for annotation in by_image[image["id"]]:
                # Convert the annotation to YOLO format:
                # COCO bbox is [x_min, y_min, width, height] in pixels,
                # YOLO wants normalized [x_center, y_center, width, height]
                x, y, w, h = annotation["bbox"]
                x_center = (x + w / 2.0) * scale_x
                y_center = (y + h / 2.0) * scale_y
                w *= scale_x
                h *= scale_y
                # category_id is written unchanged; YOLO expects indices starting at 0,
                # so remap here if the COCO file numbers its categories differently
                class_id = annotation["category_id"]
                label += "{} {} {} {} {}\n".format(class_id, x_center, y_center, w, h)

            # Save the image and label
            file_name = image["file_name"]
            shutil.copy(os.path.join(coco_path, coco_split, file_name),
                        os.path.join(output_path, "images", yolo_split, file_name))
            # splitext handles any image extension, not only ".jpg"
            label_name = os.path.splitext(file_name)[0] + ".txt"
            with open(os.path.join(output_path, "labels", yolo_split, label_name), "w") as f:
                f.write(label)


    convert_split("train", "train")  # training images
    convert_split("valid", "val")    # validation images
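Two details of the COCO script are worth isolating. A COCO `bbox` is `[x_min, y_min, width, height]` in pixels, and COCO `category_id` values are not guaranteed to start at 0 or to be contiguous, whereas YOLO class indices must run from 0 to n-1. The following is a rough sketch of both steps. `coco_bbox_to_yolo` and `build_class_map` are hypothetical helper names, and the sample categories and annotation are invented.

```python
def coco_bbox_to_yolo(bbox, img_w, img_h):
    """Convert a COCO [x_min, y_min, w, h] pixel box to normalized YOLO values."""
    x_min, y_min, w, h = bbox
    return ((x_min + w / 2.0) / img_w, (y_min + h / 2.0) / img_h, w / img_w, h / img_h)


def build_class_map(categories):
    """Map COCO category ids to contiguous 0-based YOLO class indices, ordered by id."""
    ids = sorted(c["id"] for c in categories)
    return {coco_id: index for index, coco_id in enumerate(ids)}


categories = [{"id": 1, "name": "apple"}, {"id": 3, "name": "leaf"}]  # made-up sample
class_map = build_class_map(categories)
annotation = {"image_id": 7, "category_id": 3, "bbox": [48, 24, 96, 48]}
values = coco_bbox_to_yolo(annotation["bbox"], img_w=192, img_h=192)
print(class_map[annotation["category_id"]], *values)
# 1 0.5 0.25 0.5 0.25
```

If a map like this is used, the names list in the dataset YAML has to follow the same order as the mapped indices.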

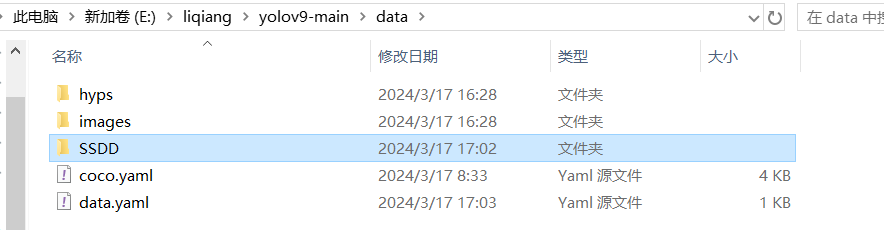

2. Training

Copy VOC.yaml to VOC_self.yaml, then edit it as follows:

    # Ultralytics YOLO 🚀, AGPL-3.0 license
    # PASCAL VOC dataset http://host.robots.ox.ac.uk/pascal/VOC by University of Oxford
    # Documentation: https://docs.ultralytics.com/datasets/detect/voc/
    # Example usage: yolo train data=VOC.yaml
    # parent
    # ├── ultralytics
    # └── datasets
    #     └── VOC  ← downloads here (2.8 GB)

    # Train/val/test sets as 1) dir: path/to/imgs, 2) file: path/to/imgs.txt, or 3) list: [path/to/imgs1, path/to/imgs2, ..]
    path: H:\\work\\daodan_move\\ultralytics-main\\ultralytics\\datasets\\VOCdevkit
    train: # train images (relative to 'path')
      - images/train
    val: # val images (relative to 'path')
      - images/val
    test: # test images (optional)
      - images/val

    # Classes (numeric names are quoted so that YAML reads them as strings)
    names:
      0: call
      1: dislike
      2: fist
      3: four
      4: like
      5: mute
      6: ok
      7: one
      8: palm
      9: '1'
      10: '2'
      11: '3'
      12: '4'
      13: '5'
      14: '6'
      15: '7'
      16: '8'
      17: '9'
      18: '10'
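Before training, it can save time to check that the generated label files agree with the names section of this YAML: every line should hold five fields, the class index should fall within the 19 classes (0 to 18), and the four box values should lie between 0 and 1. A small sketch of such a check follows. `check_label_lines` is a hypothetical helper written for this post, not part of Ultralytics.

```python
def check_label_lines(lines, num_classes):
    """Return a list of (line_number, message) for lines that are not valid YOLO labels."""
    problems = []
    for number, line in enumerate(lines, start=1):
        fields = line.split()
        if not fields:
            continue  # ignore blank lines
        if len(fields) != 5:
            problems.append((number, f"expected 5 fields, got {len(fields)}"))
            continue
        try:
            class_id = int(fields[0])
            box = [float(v) for v in fields[1:]]
        except ValueError:
            problems.append((number, "non-numeric value"))
            continue
        if not 0 <= class_id < num_classes:
            problems.append((number, f"class id {class_id} out of range"))
        if any(not 0.0 <= v <= 1.0 for v in box):
            problems.append((number, "box value outside [0, 1]"))
    return problems


sample = ["0 0.5 0.5 0.2 0.3", "19 0.5 0.5 0.2 0.3", "3 1.2 0.5 0.2 0.3", "4 0.1 0.1"]
for number, message in check_label_lines(sample, num_classes=19):
    print(f"line {number}: {message}")
# line 2: class id 19 out of range
# line 3: box value outside [0, 1]
# line 4: expected 5 fields, got 3
```

To check a real file, read it and pass its lines in, for example the result of `read_text().splitlines()` on a label path.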

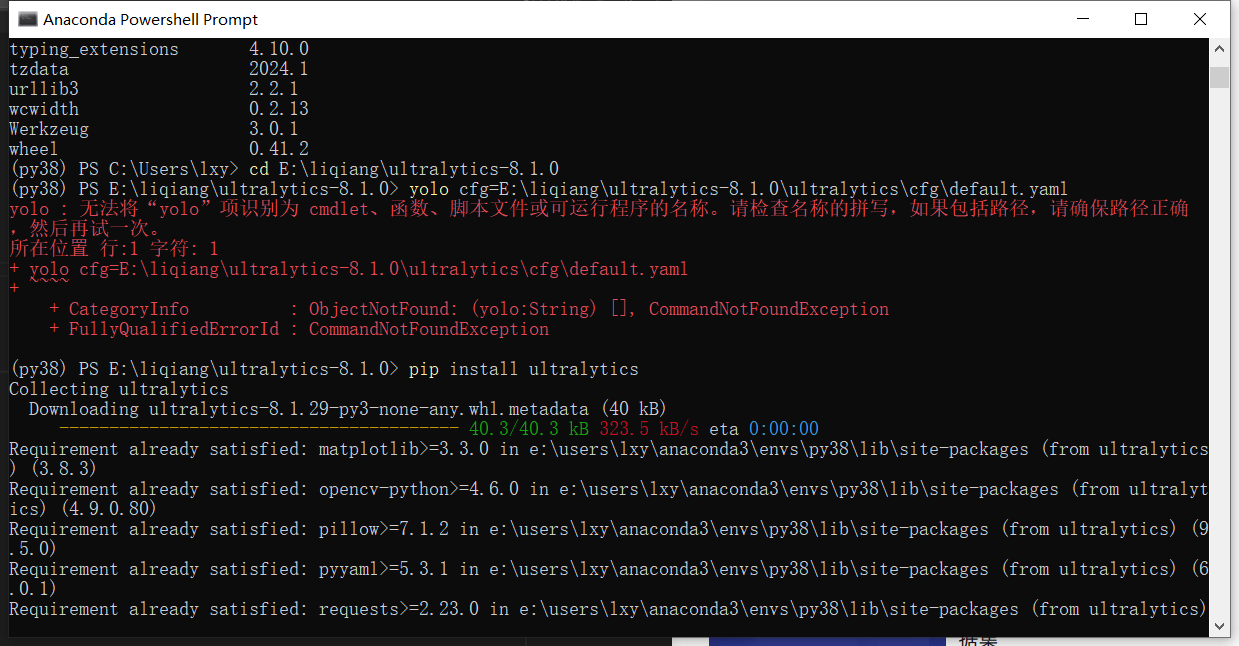

Start training from the command line:

    yolo task=detect mode=train model=yolov8n.pt data=H:\\work\\daodan_move\\ultralytics-main\\ultralytics\\cfg\\datasets\\VOC_self.yaml epochs=100 batch=4 device=0

or from Python:

    # from ultralytics import YOLO
    #
    # # Load a model
    # model = YOLO("yolov8n.pt")  # load a pretrained model (recommended for training)
    #
    # # Train the model on GPU 0
    # results = model.train(data="H:\\work\\daodan_move\\ultralytics-main\\ultralytics\\cfg\\datasets\\VOC_self.yaml", epochs=100, imgsz=640, device="0")

    from ultralytics import YOLO

    if __name__ == '__main__':
        # Three ways to create the model are shown below; use one and comment out the other two.

        # Option 1: pick a .yaml model file and train from scratch
        # model = YOLO('yolov8n.yaml')  # build a new model from YAML

        # Option 2: pick a .pt file and train starting from the pretrained model
        model = YOLO('yolov8n.pt')  # load a pretrained model (recommended for training)

        # Option 3: build from YAML and load the pretrained weights you want to use
        # model = YOLO('yolov8n.yaml').load('yolov8n.pt')  # build from YAML and transfer weights

        # Train the model. data= is the path to your dataset YAML (the file edited above).
        # The remaining arguments are training settings; you can add others, such as batch=16.
        model.train(data='H:\\work\\daodan_move\\ultralytics-main\\ultralytics\\cfg\\datasets\\VOC_self.yaml', epochs=100, imgsz=640, device="0")

![image 3](https://i-blog.csdnimg.cn/direct/3da983450460400cbee73a30af6e9c5e.png)