https://www.kaggle.com/code/vanpatangan/orders-forecasting-challenge

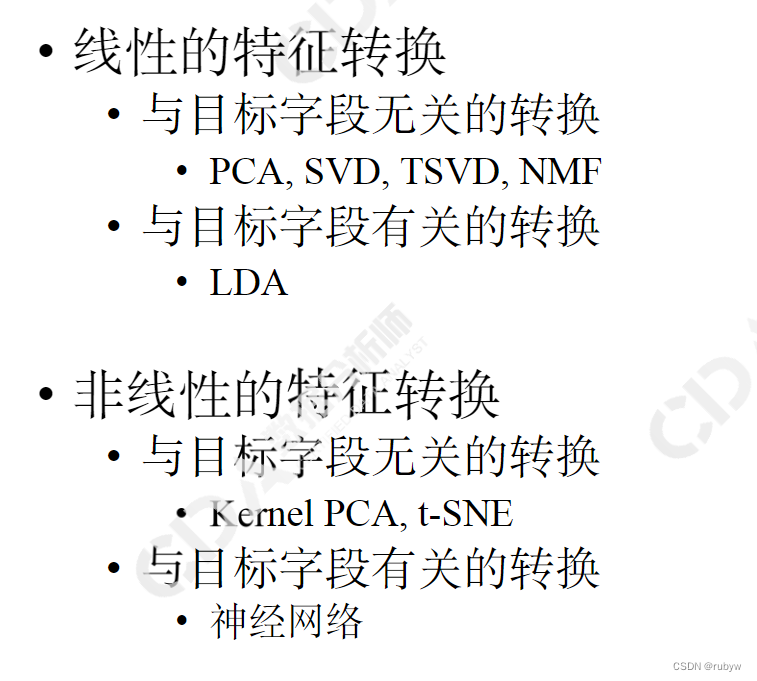

1 Cyclical transformation of time features and one-hot encoding

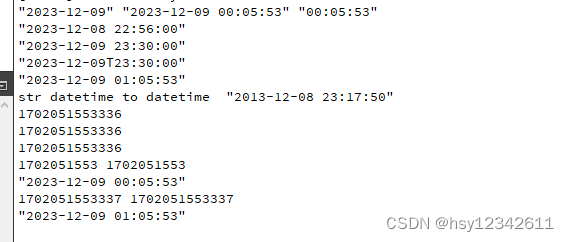

    import numpy as np
    import pandas as pd

    date_cols = ['date']

    for _col in date_cols:
        # Parse once; unparseable values become NaT and are filled with -1 below
        dates = pd.to_datetime(all_df[_col], errors='coerce')

        all_df[_col + "_year"] = dates.dt.year.fillna(-1)
        all_df[_col + "_month"] = dates.dt.month.fillna(-1)
        all_df[_col + "_day"] = dates.dt.day.fillna(-1)
        # isocalendar() returns a nullable UInt32 column; cast to float so
        # missing weeks can be filled with -1 like the other columns
        all_df[_col + "_week"] = dates.dt.isocalendar().week.astype(float).fillna(-1)
        all_df[_col + "_day_of_week"] = dates.dt.dayofweek.fillna(-1)
        all_df[_col + "_day_of_year"] = dates.dt.dayofyear.fillna(-1)

        # One-hot flags for month (1-12) and day of week (0 = Monday, 6 = Sunday)
        for m in range(1, 13):
            all_df[f'month_{m}'] = (all_df[_col + "_month"] == m)
        for d in range(7):
            all_df[f'dayofweek_{d}'] = (all_df[_col + "_day_of_week"] == d)

        # Apply sine and cosine transformations.
        # Each value is multiplied by its own sine/cosine; a plain cyclical
        # encoding would use sin/cos alone. The year uses period 1, so for
        # whole years the sine is ~0 and the cosine is ~1.
        periods = {"_year": 1, "_month": 12, "_day": 30,
                   "_day_of_week": 7, "_day_of_year": 365}
        for suffix, period in periods.items():
            values = all_df[_col + suffix]
            angle = 2 * np.pi * values / period
            all_df[_col + suffix + '_sin'] = values * np.sin(angle)
            all_df[_col + suffix + '_cos'] = values * np.cos(angle)

        # Label Saturdays and Sundays that are not already holidays as 'weekend'
        is_weekend = all_df[_col + "_day_of_week"].isin([5, 6])
        all_df.loc[is_weekend & (all_df['holiday_name'] == 'no_holiday'), 'holiday_name'] = 'weekend'

        # The raw date column is no longer needed
        all_df.drop(_col, axis=1, inplace=True)
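For comparison, the usual form of cyclical encoding places each value on the unit circle with sine and cosine alone, without multiplying by the value itself. The sketch below is only an illustration of that idea: `cyclical_encode` and `circle_distance` are hypothetical helpers that do not appear in the code above, and the standard library is used instead of pandas to keep it self-contained. It shows that December and January end up close together, while January and July sit on opposite sides of the circle.

```python
import math


def cyclical_encode(value, period):
    """Map a periodic value to (sin, cos) coordinates on the unit circle."""
    angle = 2 * math.pi * value / period
    return math.sin(angle), math.cos(angle)


def circle_distance(a, b, period):
    """Euclidean distance between the encodings of two values."""
    sin_a, cos_a = cyclical_encode(a, period)
    sin_b, cos_b = cyclical_encode(b, period)
    return math.hypot(sin_a - sin_b, cos_a - cos_b)


# As raw integers, months 12 and 1 are 11 apart; on the circle they are neighbours.
dec_jan = circle_distance(12, 1, period=12)
jan_jul = circle_distance(1, 7, period=12)

print(f"December vs January: {dec_jan:.3f}")  # 0.518
print(f"January vs July:     {jan_jul:.3f}")  # 2.000
```

The same pattern would apply to day of week (period 7) or day of year (period 365). Whether it helps a tree-based model such as XGBoost is an empirical question.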

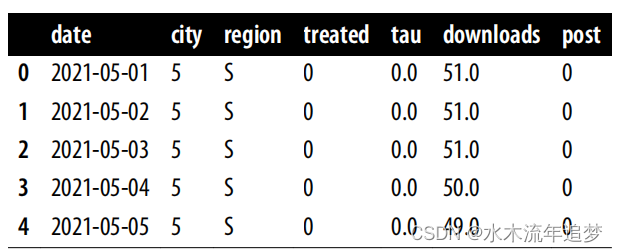

2 EDA

Get an overall picture of the data:

    import pandas as pd
    from IPython.display import display  # available by default in notebooks

    def check(df):
        """
        Generates a concise summary of DataFrame columns.
        """
        # Duplicate rows are counted over the whole frame, so the same
        # value is repeated on every row of the summary
        n_duplicates = df.duplicated().sum()

        # Use list comprehension to iterate over each column
        summary = [
            [col, df[col].dtype, df[col].count(), df[col].nunique(),
             df[col].isnull().sum(), n_duplicates]
            for col in df.columns]

        # Create a DataFrame from the list of lists
        df_check = pd.DataFrame(summary, columns=["column", "dtype", "instances", "unique", "sum_null", "duplicates"])
        return df_check

    print("Training Data Summary")
    display(check(train_df))

    print("Test Data Summary")
    display(check(test_df))

3 Parameter selection

    import xgboost as xgb
    from scipy.stats import randint, uniform
    from sklearn.model_selection import KFold, RandomizedSearchCV, cross_val_score

    # Define parameter distributions for Randomized Search.
    # scipy's uniform(loc, scale) samples from [loc, loc + scale];
    # randint(low, high) samples integers from low to high - 1.
    param_dist = {
        'eta': uniform(0.01, 0.1),              # learning rate in [0.01, 0.11]
        'max_depth': randint(3, 10),            # 3 to 9
        'subsample': uniform(0.7, 0.3),         # [0.7, 1.0]
        'colsample_bytree': uniform(0.7, 0.3),  # [0.7, 1.0]
        'n_estimators': randint(100, 500)       # 100 to 499
    }

    # Initialize RandomizedSearchCV
    random_search = RandomizedSearchCV(
        xgb.XGBRegressor(objective='reg:squarederror', n_jobs=-1),
        param_distributions=param_dist,
        n_iter=50,
        cv=5,
        scoring='neg_mean_absolute_percentage_error',
        n_jobs=-1,
        random_state=42,
        verbose=0
    )

    # Fit RandomizedSearchCV
    random_search.fit(X_train_scaled, y_train)

    # Get the best model
    best_model = random_search.best_estimator_

    # Perform cross-validation with the best model
    kf = KFold(n_splits=5, shuffle=True, random_state=42)
    cv_scores = cross_val_score(best_model, X_train_scaled, y_train,
                                cv=kf, scoring='neg_mean_absolute_percentage_error')

    # The scorer returns negated MAPE, so flip the sign to get the mean MAPE
    cv_mape = -cv_scores.mean()
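The sign flip in the last line follows from scikit-learn's convention that a higher score is always better, so error metrics are exposed in negated form (`neg_...`). As a rough illustration of what is being averaged, here is a minimal pure-Python sketch. The helper `mape` and the sample numbers are hypothetical, and scikit-learn's own implementation additionally guards against division by zero.

```python
def mape(y_true, y_pred):
    """Mean absolute percentage error as a fraction (0.1 means 10%)."""
    errors = [abs(t - p) / abs(t) for t, p in zip(y_true, y_pred)]
    return sum(errors) / len(errors)


# Made-up order counts, for illustration only
actual = [100.0, 200.0, 400.0]
predicted = [110.0, 180.0, 400.0]

neg_score = -mape(actual, predicted)  # what a "neg_..." scorer would report
example_mape = -neg_score             # flip the sign back, as cv_mape does

print(round(example_mape, 4))  # 0.0667
```

The per-sample errors here are 10%, 10% and 0%, so the mean is about 6.67%.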