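Linux NIC Modes

A Linux NIC can run in non-VLAN mode, VLAN mode, bond mode, or bridge mode. This article gives configuration examples for both the switch side and the server side.

Prerequisites:

- A physical switch; an H3C S5130 Layer 3 switch is used here

- A physical server; Ubuntu 22.04 LTS is used here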

On the switch, create two example VLANs, VLAN 10 and VLAN 20, together with their VLAN interfaces.

    <H3C>system-view
    [H3C]vlan 10 20
    [H3C]interface Vlan-interface 10
    [H3C-Vlan-interface10]ip address 172.16.10.1 24
    [H3C-Vlan-interface10]undo shutdown
    [H3C-Vlan-interface10]exit
    [H3C]interface Vlan-interface 20
    [H3C-Vlan-interface20]ip address 172.16.20.1 24
    [H3C-Vlan-interface20]undo shutdown
    [H3C-Vlan-interface20]exit

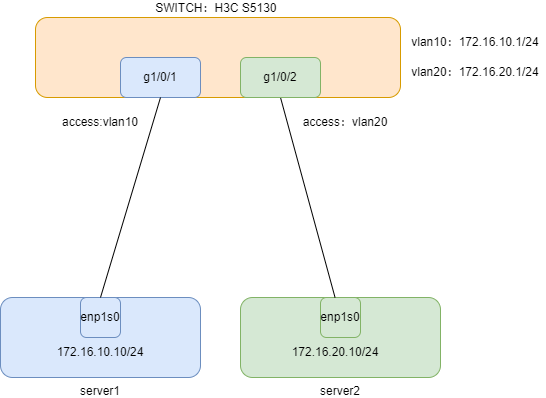

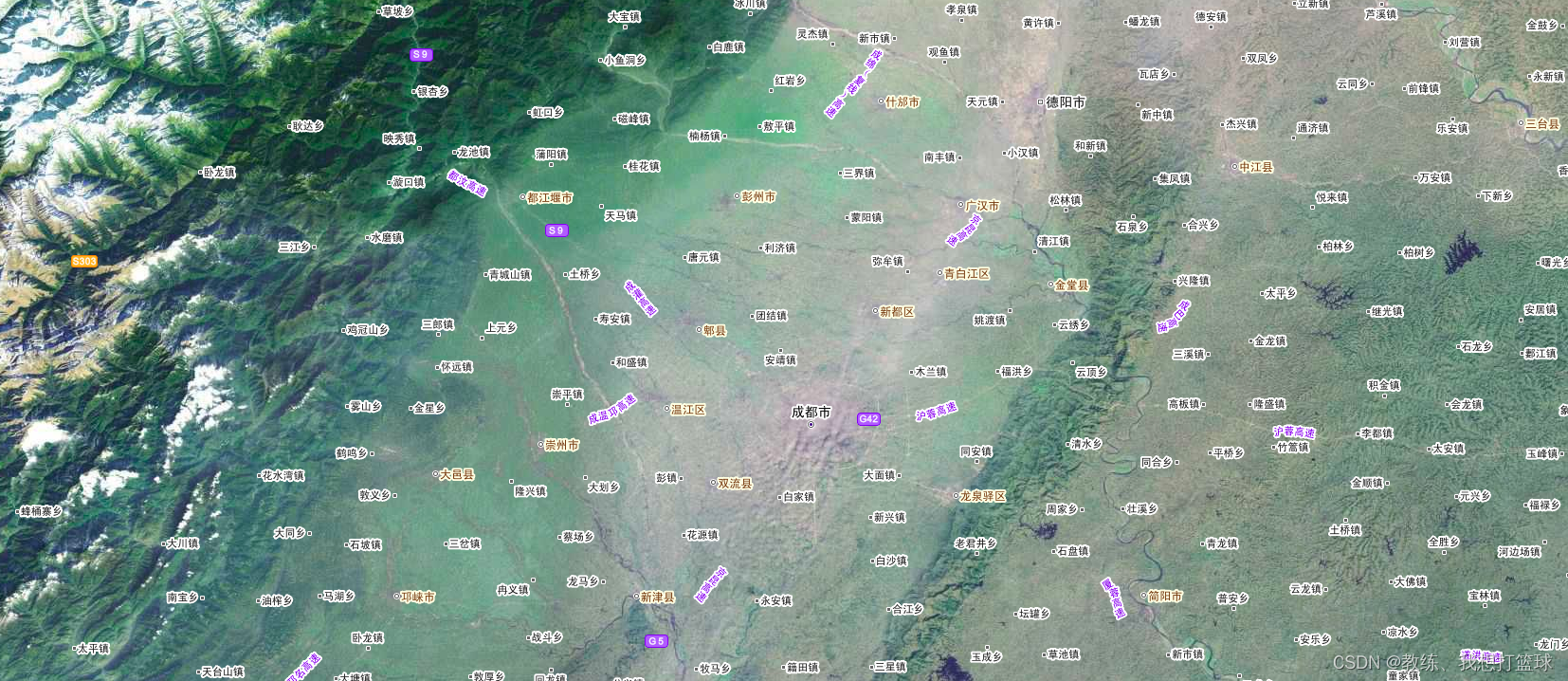

NIC non-VLAN mode

The topology is illustrated in the accompanying diagram.

Switch configuration: set the switch ports to access mode and assign each port to its VLAN.

    <H3C>system-view
    [H3C]interface GigabitEthernet 1/0/1
    [H3C-GigabitEthernet1/0/1]port link-type access
    [H3C-GigabitEthernet1/0/1]port access vlan 10
    [H3C-GigabitEthernet1/0/1]exit
    [H3C]interface GigabitEthernet 1/0/2
    [H3C-GigabitEthernet1/0/2]port link-type access
    [H3C-GigabitEthernet1/0/2]port access vlan 20
    [H3C-GigabitEthernet1/0/2]exit

Server 1 configuration: assign the IP address directly to the server NIC.

    root@server1:~# cat /etc/netplan/00-installer-config.yaml
    network:
      ethernets:
        enp1s0:
          dhcp4: false
          addresses:
          - 172.16.10.10/24
          nameservers:
            addresses:
            - 223.5.5.5
            - 223.6.6.6
          routes:
          - to: default
            via: 172.16.10.1
      version: 2

Server 2 configuration: assign the IP address directly to the server NIC.

    root@server2:~# cat /etc/netplan/00-installer-config.yaml
    network:
      ethernets:
        enp1s0:
          dhcp4: false
          addresses:
          - 172.16.20.10/24
          nameservers:
            addresses:
            - 223.5.5.5
            - 223.6.6.6
          routes:
          - to: default
            via: 172.16.20.1
      version: 2

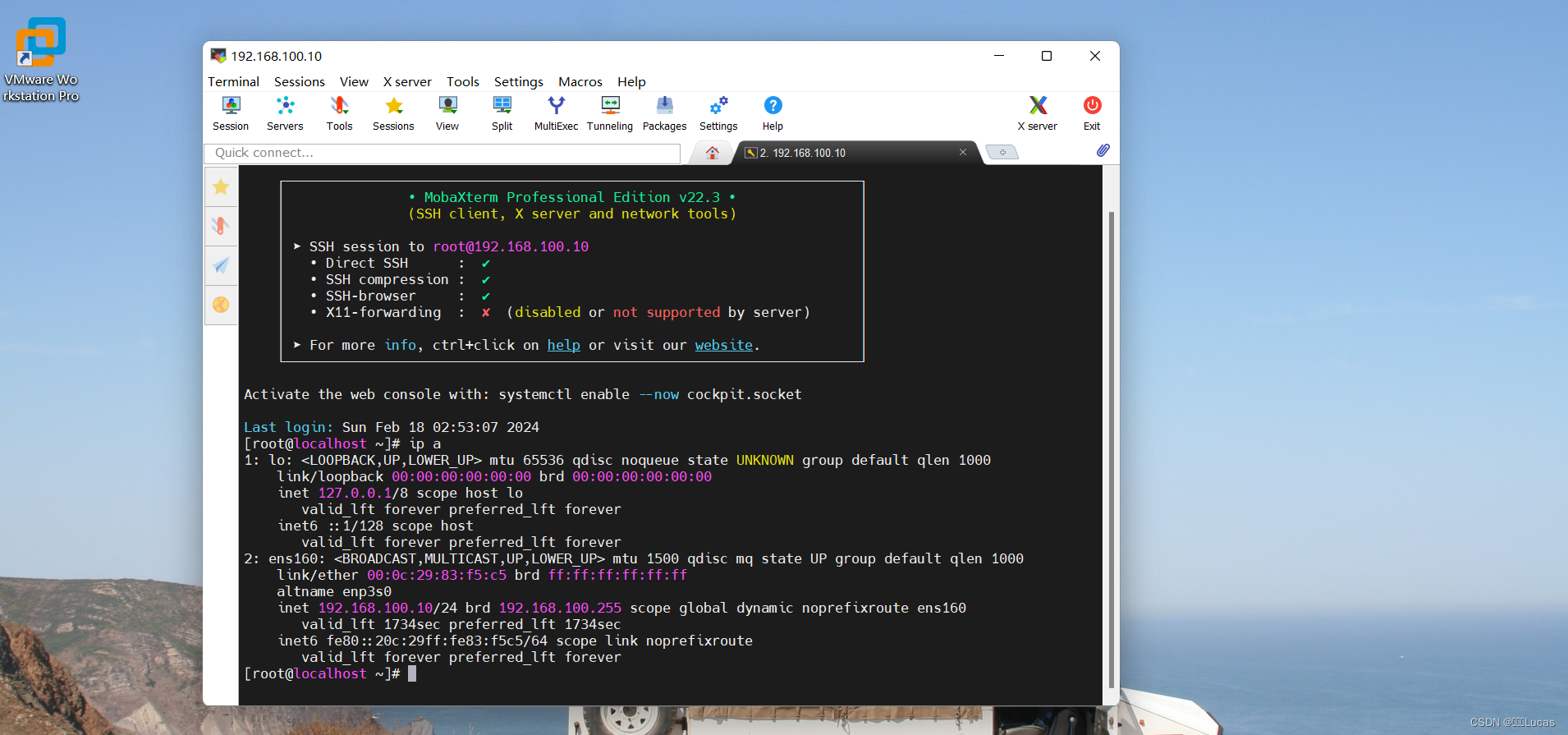

View the server's interface information.

    root@server1:~# ip a
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
        inet6 ::1/128 scope host
           valid_lft forever preferred_lft forever
    2: enp1s0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000
        link/ether 7c:b5:9b:59:0a:71 brd ff:ff:ff:ff:ff:ff
        inet 172.16.10.10/24 brd 172.16.10.255 scope global enp1s0
           valid_lft forever preferred_lft forever
        inet6 fe80::7eb5:9bff:fe59:a71/64 scope link
           valid_lft forever preferred_lft forever

Ping server2 from server1 to test connectivity. Because the Layer 3 switch routes between its VLAN interfaces, hosts in the two VLANs can reach each other even though the VLANs are isolated at Layer 2.

    root@server1:~# ping 172.16.20.10 -c 4
    PING 172.16.20.10 (172.16.20.10) 56(84) bytes of data.
    64 bytes from 172.16.20.10: icmp_seq=1 ttl=64 time=0.033 ms
    64 bytes from 172.16.20.10: icmp_seq=2 ttl=64 time=0.048 ms
    64 bytes from 172.16.20.10: icmp_seq=3 ttl=64 time=0.048 ms
    64 bytes from 172.16.20.10: icmp_seq=4 ttl=64 time=0.047 ms

    --- 172.16.20.10 ping statistics ---
    4 packets transmitted, 4 received, 0% packet loss, time 3061ms
    rtt min/avg/max/mdev = 0.033/0.044/0.048/0.006 ms
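The forwarding decision behind this test can be sketched in a few lines of Python. This is only an illustration built on the standard ipaddress module: the function name next_hop is made up for this example, and the addresses are the ones used in the non-VLAN configurations. A host compares the destination with its own subnet; an on-link destination is reached directly at Layer 2, and anything else is handed to the default gateway, which here is the switch's Vlan-interface 10.

```python
import ipaddress


def next_hop(local_cidr: str, gateway: str, destination: str) -> str:
    """Return the IP address the host resolves at Layer 2 for this destination.

    local_cidr  -- the host's own address with prefix, e.g. "172.16.10.10/24"
    gateway     -- the host's default gateway
    destination -- the address being pinged
    """
    iface = ipaddress.ip_interface(local_cidr)
    dst = ipaddress.ip_address(destination)
    if dst in iface.network:
        # Same subnet: the frame goes straight to the destination host.
        return str(dst)
    # Different subnet: the frame goes to the gateway, which routes it.
    return gateway


# server1 -> server2 crosses subnets, so the switch's VLAN interface routes it.
print(next_hop("172.16.10.10/24", "172.16.10.1", "172.16.20.10"))
# A hypothetical neighbour inside VLAN 10 would be reached directly.
print(next_hop("172.16.10.10/24", "172.16.10.1", "172.16.10.20"))
```

The first call returns the gateway address and the second returns the destination itself, which is why inter-VLAN traffic depends on the Layer 3 switch while intra-VLAN traffic does not.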

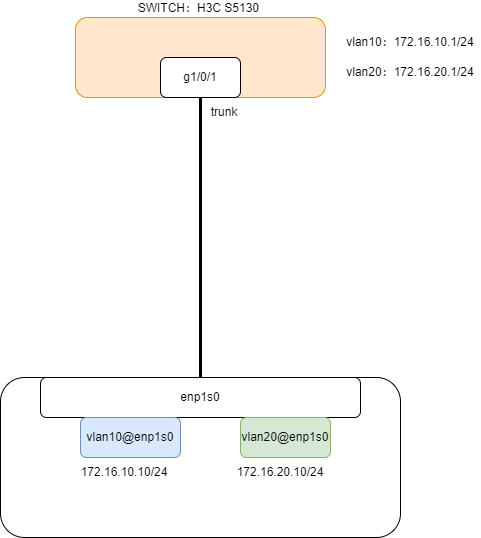

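NIC VLAN mode

The topology is illustrated in the accompanying diagram.

Switch configuration: the switch port must be configured as a trunk port that permits multiple VLANs.

    <H3C>system-view
    [H3C]interface GigabitEthernet 1/0/1
    [H3C-GigabitEthernet1/0/1]port link-type trunk
    [H3C-GigabitEthernet1/0/1]port trunk permit vlan 10 20
    [H3C-GigabitEthernet1/0/1]exit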

Server configuration: create VLAN subinterfaces on the server.

    root@server1:~# cat /etc/netplan/00-installer-config.yaml
    network:
      ethernets:
        enp1s0:
          dhcp4: true
      vlans:
        vlan10:
          id: 10
          link: enp1s0
          addresses: [ "172.16.10.10/24" ]
        vlan20:
          id: 20
          link: enp1s0
          addresses: [ "172.16.20.10/24" ]
      version: 2
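On the wire, a VLAN subinterface differs from a plain NIC by the 4-byte IEEE 802.1Q tag it inserts after the source MAC address. The Python sketch below builds that tag for the two VLAN IDs used here. The helper name dot1q_tag is hypothetical, the priority bits are left at zero, and it assumes the trunk port's PVID is not 10 or 20, so both VLANs are sent tagged.

```python
import struct

TPID_8021Q = 0x8100  # EtherType value that marks an 802.1Q-tagged frame


def dot1q_tag(vlan_id: int, pcp: int = 0, dei: int = 0) -> bytes:
    """Build the 4-byte 802.1Q tag: 16-bit TPID followed by 16-bit TCI.

    TCI layout: 3 bits priority (PCP), 1 bit drop eligible (DEI),
    12 bits VLAN ID.
    """
    if not 0 <= vlan_id <= 0xFFF:
        raise ValueError("VLAN ID must fit in 12 bits")
    if not 0 <= pcp <= 7:
        raise ValueError("PCP must fit in 3 bits")
    if dei not in (0, 1):
        raise ValueError("DEI is a single bit")
    tci = (pcp << 13) | (dei << 12) | vlan_id
    return struct.pack("!HH", TPID_8021Q, tci)


print(dot1q_tag(10).hex())  # tag carried by frames leaving vlan10
print(dot1q_tag(20).hex())  # tag carried by frames leaving vlan20
```

The switch uses the VLAN ID in this tag to decide which VLAN an incoming frame on the trunk port belongs to, which is why a single physical link can carry both subnets.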

View the interface information: two VLAN subinterfaces, vlan10 and vlan20, have been created.

    root@server1:~# ip a
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
        inet6 ::1/128 scope host
           valid_lft forever preferred_lft forever
    2: enp1s0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000
        link/ether 7c:b5:9b:59:0a:71 brd ff:ff:ff:ff:ff:ff
        inet6 fe80::7eb5:9bff:fe59:a71/64 scope link
           valid_lft forever preferred_lft forever
    10: vlan10@enp1s0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000
        link/ether 7c:b5:9b:59:0a:71 brd ff:ff:ff:ff:ff:ff
        inet 172.16.10.10/24 brd 172.16.10.255 scope global vlan10
           valid_lft forever preferred_lft forever
        inet6 fe80::7eb5:9bff:fe59:a71/64 scope link
           valid_lft forever preferred_lft forever
    11: vlan20@enp1s0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000
        link/ether 7c:b5:9b:59:0a:71 brd ff:ff:ff:ff:ff:ff
        inet 172.16.20.10/24 brd 172.16.20.255 scope global vlan20
           valid_lft forever preferred_lft forever
        inet6 fe80::7eb5:9bff:fe59:a71/64 scope link
           valid_lft forever preferred_lft forever

Test connectivity by pinging 172.16.20.10 from server1. Note that in the configuration above this address is assigned to server1's own vlan20 subinterface, so the reply is generated locally; pinging the gateway addresses 172.16.10.1 and 172.16.20.1 exercises the trunk link itself.

    root@server1:~# ping 172.16.20.10 -c 4
    PING 172.16.20.10 (172.16.20.10) 56(84) bytes of data.
    64 bytes from 172.16.20.10: icmp_seq=1 ttl=64 time=0.033 ms
    64 bytes from 172.16.20.10: icmp_seq=2 ttl=64 time=0.048 ms
    64 bytes from 172.16.20.10: icmp_seq=3 ttl=64 time=0.048 ms
    64 bytes from 172.16.20.10: icmp_seq=4 ttl=64 time=0.047 ms

    --- 172.16.20.10 ping statistics ---
    4 packets transmitted, 4 received, 0% packet loss, time 3061ms
    rtt min/avg/max/mdev = 0.033/0.044/0.048/0.006 ms

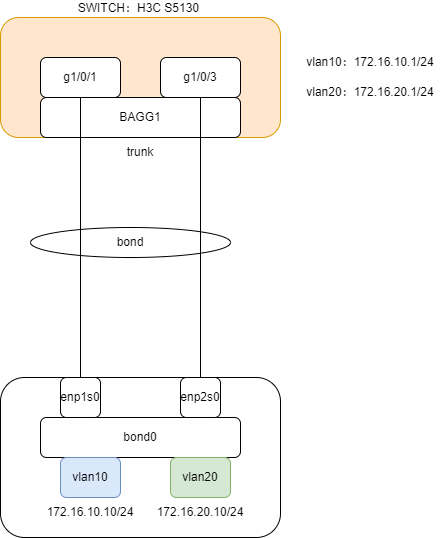

NIC bond mode

The topology is illustrated in the accompanying diagram.

Switch configuration: configure dynamic link aggregation and add ports GigabitEthernet 1/0/1 and GigabitEthernet 1/0/3 to the aggregation group, then set the aggregate interface to trunk mode.

    <H3C>system-view
    [H3C]interface Bridge-Aggregation 1
    [H3C-Bridge-Aggregation1]link-aggregation mode dynamic
    [H3C-Bridge-Aggregation1]quit
    [H3C]interface GigabitEthernet 1/0/1
    [H3C-GigabitEthernet1/0/1]port link-aggregation group 1
    [H3C-GigabitEthernet1/0/1]exit
    [H3C]interface GigabitEthernet 1/0/3
    [H3C-GigabitEthernet1/0/3]port link-aggregation group 1
    [H3C-GigabitEthernet1/0/3]exit
    [H3C]interface Bridge-Aggregation 1
    [H3C-Bridge-Aggregation1]port link-type trunk
    [H3C-Bridge-Aggregation1]port trunk permit vlan 10 20
    [H3C-Bridge-Aggregation1]exit

Server configuration: bond the two NICs with LACP (802.3ad) and create the VLAN subinterfaces on top of bond0.

    root@server1:~# cat /etc/netplan/00-installer-config.yaml
    network:
      version: 2
      ethernets:
        enp1s0:
          dhcp4: no
        enp2s0:
          dhcp4: no
      bonds:
        bond0:
          interfaces:
          - enp1s0
          - enp2s0
          parameters:
            mode: 802.3ad
            lacp-rate: fast
            mii-monitor-interval: 100
            transmit-hash-policy: layer2+3
      vlans:
        vlan10:
          id: 10
          link: bond0
          addresses: [ "172.16.10.10/24" ]
        vlan20:
          id: 20
          link: bond0
          addresses: [ "172.16.20.10/24" ]

View the NIC information: a bond0 interface has been created, with two VLAN subinterfaces, vlan10 and vlan20, on top of it. enp1s0 and enp2s0 show master bond0, which means both NICs are member interfaces of bond0.

    root@server1:~# ip a
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
        inet6 ::1/128 scope host
           valid_lft forever preferred_lft forever
    2: enp1s0: <BROADCAST,MULTICAST,SLAVE,UP,LOWER_UP> mtu 1500 qdisc fq_codel master bond0 state UP group default qlen 1000
        link/ether ae:fd:60:48:84:1a brd ff:ff:ff:ff:ff:ff permaddr 7c:b5:9b:59:0a:71
    3: enp2s0: <BROADCAST,MULTICAST,SLAVE,UP,LOWER_UP> mtu 1500 qdisc fq_codel master bond0 state UP group default qlen 1000
        link/ether ae:fd:60:48:84:1a brd ff:ff:ff:ff:ff:ff permaddr e4:54:e8:dc:e5:88
    7: bond0: <BROADCAST,MULTICAST,MASTER,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000
        link/ether ae:fd:60:48:84:1a brd ff:ff:ff:ff:ff:ff
        inet6 fe80::acfd:60ff:fe48:841a/64 scope link
           valid_lft forever preferred_lft forever
    8: vlan10@bond0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000
        link/ether ae:fd:60:48:84:1a brd ff:ff:ff:ff:ff:ff
        inet 172.16.10.10/24 brd 172.16.10.255 scope global vlan10
           valid_lft forever preferred_lft forever
        inet6 fe80::acfd:60ff:fe48:841a/64 scope link
           valid_lft forever preferred_lft forever
    9: vlan20@bond0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000
        link/ether ae:fd:60:48:84:1a brd ff:ff:ff:ff:ff:ff
        inet 172.16.20.10/24 brd 172.16.20.255 scope global vlan20
           valid_lft forever preferred_lft forever
        inet6 fe80::acfd:60ff:fe48:841a/64 scope link
           valid_lft forever preferred_lft forever

View the bond status: Bonding Mode is reported as IEEE 802.3ad Dynamic link aggregation, and the Slave Interface sections below it list the details of the two member interfaces.

    root@server1:~# cat /proc/net/bonding/bond0
    Ethernet Channel Bonding Driver: v5.15.0-60-generic

    Bonding Mode: IEEE 802.3ad Dynamic link aggregation
    Transmit Hash Policy: layer2+3 (2)
    MII Status: up
    MII Polling Interval (ms): 100
    Up Delay (ms): 0
    Down Delay (ms): 0
    Peer Notification Delay (ms): 0

    802.3ad info
    LACP active: on
    LACP rate: fast
    Min links: 0
    Aggregator selection policy (ad_select): stable
    System priority: 65535
    System MAC address: ae:fd:60:48:84:1a
    Active Aggregator Info:
        Aggregator ID: 1
        Number of ports: 2
        Actor Key: 9
        Partner Key: 1
        Partner Mac Address: fc:60:9b:35:ad:18

    Slave Interface: enp1s0
    MII Status: up
    Speed: 1000 Mbps
    Duplex: full
    Link Failure Count: 2
    Permanent HW addr: 7c:b5:9b:59:0a:71
    Slave queue ID: 0
    Aggregator ID: 1
    Actor Churn State: none
    Partner Churn State: none
    Actor Churned Count: 0
    Partner Churned Count: 0
    details actor lacp pdu:
        system priority: 65535
        system mac address: ae:fd:60:48:84:1a
        port key: 9
        port priority: 255
        port number: 1
        port state: 63
    details partner lacp pdu:
        system priority: 32768
        system mac address: fc:60:9b:35:ad:18
        oper key: 1
        port priority: 32768
        port number: 2
        port state: 61

    Slave Interface: enp2s0
    MII Status: up
    Speed: 1000 Mbps
    Duplex: full
    Link Failure Count: 3
    Permanent HW addr: e4:54:e8:dc:e5:88
    Slave queue ID: 0
    Aggregator ID: 1
    Actor Churn State: none
    Partner Churn State: none
    Actor Churned Count: 0
    Partner Churned Count: 0
    details actor lacp pdu:
        system priority: 65535
        system mac address: ae:fd:60:48:84:1a
        port key: 9
        port priority: 255
        port number: 2
        port state: 63
    details partner lacp pdu:
        system priority: 32768
        system mac address: fc:60:9b:35:ad:18
        oper key: 1
        port priority: 32768
        port number: 1
        port state: 61
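The port state values in this output (63 for the server, 61 for the switch) are bit fields defined by LACP. As a reading aid, the following Python sketch decodes them into flag names. The function name decode_port_state and the short flag labels are chosen for this example; the bit positions follow the LACP actor/partner state octet.

```python
from typing import List

# Flag names for the LACP state octet, least significant bit first.
LACP_STATE_BITS = (
    "activity",         # bit 0: set = active LACP, clear = passive
    "timeout",          # bit 1: set = short timeout, clear = long timeout
    "aggregation",      # bit 2: link may be aggregated
    "synchronization",  # bit 3: link is in sync with its aggregator
    "collecting",       # bit 4: receiving frames on this link is enabled
    "distributing",     # bit 5: sending frames on this link is enabled
    "defaulted",        # bit 6: using default partner info (no LACPDU seen)
    "expired",          # bit 7: partner information has expired
)


def decode_port_state(value: int) -> List[str]:
    """Return the names of the flags that are set in an LACP port state."""
    return [name for bit, name in enumerate(LACP_STATE_BITS) if value & (1 << bit)]


print("actor   63:", decode_port_state(63))
print("partner 61:", decode_port_state(61))
```

The two values differ only in the timeout bit: the server advertises the short timeout, consistent with lacp-rate: fast, while the switch side advertises the long timeout. Both ends report synchronization, collecting and distributing, so the aggregation is passing traffic on both links.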

Test connectivity to the gateway address on the switch:

    root@server1:~# ping 172.16.10.1 -c 4
    PING 172.16.10.1 (172.16.10.1) 56(84) bytes of data.
    64 bytes from 172.16.10.1: icmp_seq=1 ttl=255 time=1.64 ms
    64 bytes from 172.16.10.1: icmp_seq=2 ttl=255 time=1.59 ms
    64 bytes from 172.16.10.1: icmp_seq=3 ttl=255 time=1.95 ms
    64 bytes from 172.16.10.1: icmp_seq=4 ttl=255 time=1.93 ms

    --- 172.16.10.1 ping statistics ---
    4 packets transmitted, 4 received, 0% packet loss, time 3006ms
    rtt min/avg/max/mdev = 1.589/1.776/1.953/0.165 ms

Bring one member interface down and test connectivity again; the ping still succeeds.

    root@server1:~# ip link set dev enp2s0 down
    root@server1:~# ip link show enp2s0
    3: enp2s0: <BROADCAST,MULTICAST,SLAVE> mtu 1500 qdisc fq_codel master bond0 state DOWN mode DEFAULT group default qlen 1000
        link/ether ae:fd:60:48:84:1a brd ff:ff:ff:ff:ff:ff permaddr e4:54:e8:dc:e5:88
    root@server1:~# ping 172.16.10.1 -c 4
    PING 172.16.10.1 (172.16.10.1) 56(84) bytes of data.
    64 bytes from 172.16.10.1: icmp_seq=1 ttl=255 time=1.54 ms
    64 bytes from 172.16.10.1: icmp_seq=2 ttl=255 time=1.64 ms
    64 bytes from 172.16.10.1: icmp_seq=3 ttl=255 time=2.73 ms
    64 bytes from 172.16.10.1: icmp_seq=4 ttl=255 time=1.47 ms

    --- 172.16.10.1 ping statistics ---
    4 packets transmitted, 4 received, 0% packet loss, time 3006ms
    rtt min/avg/max/mdev = 1.470/1.844/2.732/0.516 ms
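To confirm which member links the bond still considers up after a failover test like this, the status file /proc/net/bonding/bond0 can be summarized programmatically. The Python sketch below is one way to do it. The function name slave_mii_status is hypothetical, and the SAMPLE text is a shortened, hand-written illustration of the file's layout with enp2s0 down, not output captured from the server.

```python
from typing import Dict


def slave_mii_status(bonding_text: str) -> Dict[str, str]:
    """Map each slave interface name to its MII status.

    Only the first "MII Status" line after each "Slave Interface" line is
    used; the bond-level "MII Status" near the top of the file is skipped.
    """
    status: Dict[str, str] = {}
    current = None
    for raw in bonding_text.splitlines():
        line = raw.strip()
        if line.startswith("Slave Interface:"):
            current = line.split(":", 1)[1].strip()
        elif line.startswith("MII Status:") and current is not None:
            status.setdefault(current, line.split(":", 1)[1].strip())
    return status


# Illustrative excerpt (assumed layout, abbreviated by hand).
SAMPLE = """\
Bonding Mode: IEEE 802.3ad Dynamic link aggregation
MII Status: up

Slave Interface: enp1s0
MII Status: up

Slave Interface: enp2s0
MII Status: down
"""

print(slave_mii_status(SAMPLE))

# On the server itself the real file would be read instead:
# with open("/proc/net/bonding/bond0") as f:
#     print(slave_mii_status(f.read()))
```

With one slave down and the other up, traffic continues over the remaining link, which matches the successful ping in the test.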

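Bridge mode

The topology is illustrated in the accompanying diagram.

Switch configuration: in this example the switch port is set to access mode and assigned to the corresponding VLAN.

    <H3C>system-view
    [H3C]interface GigabitEthernet 1/0/1
    [H3C-GigabitEthernet1/0/1]port link-type access
    [H3C-GigabitEthernet1/0/1]port access vlan 10
    [H3C-GigabitEthernet1/0/1]exit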

Server configuration: add the physical NIC to the bridge and assign the IP address to the bridge interface br0.

    root@server1:~# cat /etc/netplan/00-installer-config.yaml
    network:
      version: 2
      ethernets:
        enp1s0:
          dhcp4: no
          dhcp6: no
      bridges:
        br0:
          interfaces: [enp1s0]
          addresses: [172.16.10.10/24]
          routes:
          - to: default
            via: 172.16.10.1
            metric: 100
            on-link: true
          mtu: 1500
          nameservers:
            addresses:
            - 223.5.5.5
            - 223.6.6.6
          parameters:
            stp: true
            forward-delay: 4

View the NIC information.

    root@server1:~# ip a
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
        inet6 ::1/128 scope host
           valid_lft forever preferred_lft forever
    2: enp1s0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel master br0 state UP group default qlen 1000
        link/ether 7c:b5:9b:59:0a:71 brd ff:ff:ff:ff:ff:ff
    12: br0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000
        link/ether 0e:d0:7e:31:9c:74 brd ff:ff:ff:ff:ff:ff
        inet 172.16.10.10/24 brd 172.16.10.255 scope global br0
           valid_lft forever preferred_lft forever
        inet6 fe80::cd0:7eff:fe31:9c74/64 scope link
           valid_lft forever preferred_lft forever

View the bridge and its ports. At this point the bridge has only one physical port, enp1s0.

    root@server1:~# apt install -y bridge-utils
    root@server1:~# brctl show
    bridge name     bridge id               STP enabled     interfaces
    br0             8000.0ed07e319c74       yes             enp1s0
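The bridge id column packs two pieces of information: the STP bridge priority in hexadecimal, then the bridge MAC address. The small Python sketch below splits the value shown above; parse_bridge_id is a name invented for this example.

```python
from typing import Tuple


def parse_bridge_id(bridge_id: str) -> Tuple[int, str]:
    """Split a brctl-style bridge id into (STP priority, MAC address)."""
    prio_hex, mac_hex = bridge_id.split(".")
    if len(mac_hex) != 12:
        raise ValueError("expected 12 hex digits for the MAC address")
    priority = int(prio_hex, 16)
    mac = ":".join(mac_hex[i:i + 2] for i in range(0, 12, 2))
    return priority, mac


priority, mac = parse_bridge_id("8000.0ed07e319c74")
print(priority, mac)
```

The decoded MAC address is the same as the link/ether value of br0 in the ip a output, and the priority is the usual STP default of 32768.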

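In a KVM virtualization environment, a virtual machine attached to this bridge can then be given an IP address in the same subnet as the physical NIC, so the virtual machine can be reached just as easily as a physical host.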
