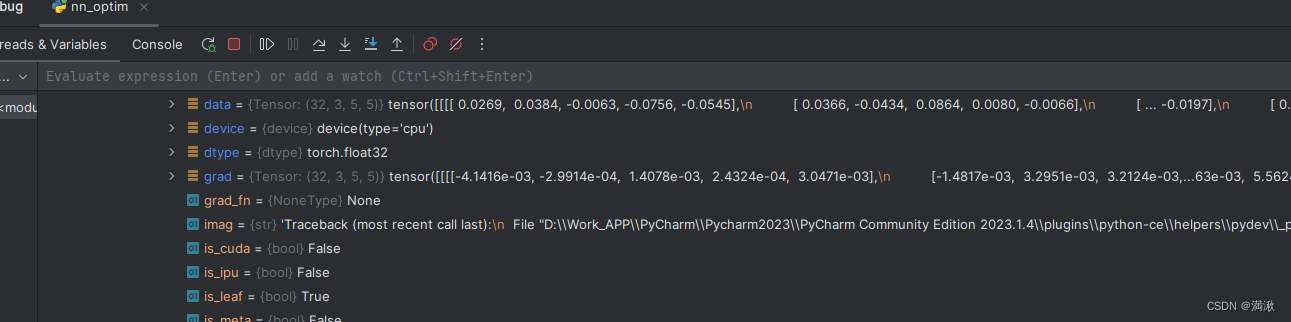

Notes I took a long time ago while learning PyTorch, tidied up a little.

Installation

pip3 install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu118

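Tensors

A scalar is a 0-D tensor, a vector is a 1-D tensor, and a matrix is a 2-D tensor. An RGB image array is a 3-D tensor: the first dimension is the image height, the second is the width, and the third is the color channel.

torch.Tensor is a class, while torch.tensor is a function. The tensor is the core data structure in PyTorch, and gradient recording is implemented on it as well.

Model inputs, outputs, and parameters are all tensors.

A CPU tensor can share memory with a NumPy array (for example via torch.from_numpy), whereas torch.tensor always copies the data.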
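To make the two points above concrete (gradients are recorded on tensors, and memory is shared with NumPy only through certain constructors), here is a minimal sketch. The variable names and values are illustrative and not from the original notes.

```python
import numpy as np
import torch

# Gradient recording: y = x^2 + 3x, so dy/dx = 2x + 3
x = torch.tensor(2.0, requires_grad=True)
y = x ** 2 + 3 * x
y.backward()
print(x.grad)  # gradient of y with respect to x, evaluated at x = 2

# Copy vs. shared memory
arr = np.zeros(3)
copied = torch.tensor(arr)      # copies the data
shared = torch.from_numpy(arr)  # shares the underlying buffer
arr[0] = 1.0
print(copied[0].item(), shared[0].item())
```

Only `shared` sees the change made to `arr`; `copied` keeps its own data.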

# Create a tensor directly

import torch

import numpy as np

l = [[1.,-1.],[1.,-1.]]

tensor_from_list = torch.tensor(l)

print(tensor_from_list,tensor_from_list.dtype)

"""

tensor([[ 1., -1.],

[ 1., -1.]]) torch.float32

"""

# Create a tensor from a list; the dtype is inferred automatically

data = [[1, 2],[3, 4]]

print(type(data))

x_data = torch.tensor(data)

print(type(x_data))

print(x_data)

# Check the dtype

print(x_data.dtype)

"""

<class 'list'>

<class 'torch.Tensor'>

tensor([[1, 2],

[3, 4]])

torch.int64

"""

# Create a tensor from a NumPy array (torch.tensor copies the data and keeps the array's dtype;

# int32 in the output below, int64 on many other platforms)

arr = np.array([[1, 2, 3], [4, 5, 6]])

t_from_array = torch.tensor(arr)

print(t_from_array, t_from_array.dtype)

"""

tensor([[1, 2, 3],

[4, 5, 6]], dtype=torch.int32) torch.int32

"""

# Second way to create from NumPy: share memory with the original array

# When a value in the array changes, the value in the tensor changes too

arr = np.array([[1,2,3],[4,5,6]])

print(arr)

tensor_from_numpy = torch.from_numpy(arr)

print(tensor_from_numpy)

arr[0,0] = 10

print(arr)

print(tensor_from_numpy)

"""

[[1 2 3]

[4 5 6]]

tensor([[1, 2, 3],

[4, 5, 6]], dtype=torch.int32)

[[10 2 3]

[ 4 5 6]]

tensor([[10, 2, 3],

[ 4, 5, 6]], dtype=torch.int32)

"""

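# Note: (2,3) is passed as loc (the means), not as a shape, so this draws two samples;

# use np.random.normal(size=(2,3)) to get a 2x3 array instead

a = np.random.normal((2,3))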

print(a)

a = torch.tensor(a)

a

"""

[2.03773753 0.70605461]

tensor([2.0377, 0.7061], dtype=torch.float64)

"""

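# The out argument: the returned tensor is also written into the tensor passed as out

# torch.zeros() creates an all-zero tensor of the given size; the default dtype is torch.float32 (also called torch.float).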

o_t = torch.tensor([1])

print(o_t)

t = torch.zeros((3, 3), out=o_t)

print(t, '\n', o_t)

print(id(t), id(o_t))

# The tensor created by torch.zeros is bound to both t and o_t: the two names refer to the same object (same id), and therefore to the same memory.

"""

tensor([1])

tensor([[0, 0, 0],

[0, 0, 0],

[0, 0, 0]])

tensor([[0, 0, 0],

[0, 0, 0],

[0, 0, 0]])

2804923738192 2804923738192

"""

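# Initialize from another tensor, keeping its shape and dtype

# All-ones tensor

print(torch.ones_like(a))

# Similarly: rand_like, zeros_like

# Use a shape tuple with random or constant values; a list also works, and a trailing comma is allowed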

shape = (2,3,)

rand_tensor = torch.rand(shape)

ones_tensor = torch.ones(shape)

zeros_tensor = torch.zeros(shape)

# Create a tensor with the same size as a given tensor

t1 = torch.tensor([[1., -1.], [1., -1.]])

t2 = torch.zeros_like(t1)

print(t2)

# Like the all-zero constructors, there are all-one versions: torch.ones(), torch.ones_like()

# torch.full(size, fill_value, out=None, dtype=None, layout=torch.strided, device=None, requires_grad=False)

# Purpose: create a tensor of the given size with every element set to fill_value.

print(torch.full((2, 3), 3.141592))

# There is also full_like

print(torch.arange(1,6,2))  # 1-D arithmetic sequence of length ceil((end - start) / step); note the half-open interval [start, end).

"""

tensor([[0., 0.],

[0., 0.]])

tensor([[3.1416, 3.1416, 3.1416],

[3.1416, 3.1416, 3.1416]])

tensor([1, 3, 5])

"""
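The length of an arange result is the quotient rounded up, which the example above relies on: (6 - 1) / 2 = 2.5, yet three elements come back. A small sketch to check this, with variable names chosen only for illustration:

```python
import math
import torch

start, end, step = 1, 6, 2
t = torch.arange(start, end, step)

# Length is the ceiling of (end - start) / step; end itself is excluded
expected_len = math.ceil((end - start) / step)
print(t, len(t), expected_len)
```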

print(f"Random Tensor: \n {rand_tensor} \n")

print(f"Ones Tensor: \n {ones_tensor} \n")

print(f"Zeros Tensor: \n {zeros_tensor}")

"""

tensor([1., 1.], dtype=torch.float64)

Random Tensor:

tensor([[0.4611, 0.4579, 0.1990],

[0.7588, 0.5500, 0.7764]])

Ones Tensor:

tensor([[1., 1., 1.],

[1., 1., 1.]])

Zeros Tensor:

tensor([[0., 0., 0.],

[0., 0., 0.]])

"""

# Attributes

tensor = torch.rand(3,4)

print(f"Shape of tensor: {tensor.shape}")

print(f"Datatype of tensor: {tensor.dtype}")

print(f"Device tensor is stored on: {tensor.device}")

"""

Shape of tensor: torch.Size([3, 4])

Datatype of tensor: torch.float32

Device tensor is stored on: cpu

"""

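# Evenly spaced 1-D tensor of length steps

print(torch.linspace(3, 10, steps=5))

print(torch.linspace(1, 5, steps=3))

# Logarithmically spaced 1-D tensor of length steps, with base `base` (default 10)

torch.logspace(start=0.1, end=1.0, steps=5)

torch.logspace(start=2, end=2, steps=1, base=2)

# Identity matrix

print(torch.eye(3))  # if the number of columns is omitted, a square matrix is created

print(torch.eye(3,4))

# "Empty" tensor: "empty" means the values are not initialized; there is also torch.empty_like()

print(torch.empty((2,3)))

# stride (tuple of ints): how many elements to step over in memory to move by one along each dimension, i.e. how the tensor is laid out in storage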

print(torch.empty_strided((2,5),(1,2)))

"""

tensor([ 3.0000, 4.7500, 6.5000, 8.2500, 10.0000])

tensor([1., 3., 5.])

tensor([[1., 0., 0.],

[0., 1., 0.],

[0., 0., 1.]])

tensor([[1., 0., 0., 0.],

[0., 1., 0., 0.],

[0., 0., 1., 0.]])

tensor([[0.0000, 1.8750, 0.0000],

[1.8750, 0.0000, 1.8750]])

tensor([[0.0000e+00, 1.4013e-45, 0.0000e+00, 1.4013e-45, 0.0000e+00],

[0.0000e+00, 0.0000e+00, 0.0000e+00, 0.0000e+00, 0.0000e+00]])

"""
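Two of the constructors above are easier to read through their relationships to other calls. The sketch below, with illustrative values not taken from the notes, checks that logspace matches raising the base to a linspace of exponents, and prints the strides of a few layouts.

```python
import torch

# logspace(start, end, steps) should match base ** linspace(start, end, steps)
exponents = torch.linspace(0, 2, steps=3)
via_pow = 10 ** exponents
via_logspace = torch.logspace(start=0, end=2, steps=3)
print(via_logspace)

# Strides: elements to skip in memory per step along each dimension
x = torch.zeros(2, 5)
print(x.stride())      # row-major (contiguous) layout
print(x.t().stride())  # transposing swaps the strides without copying data
custom = torch.empty_strided((2, 5), (1, 2))
print(custom.stride())
```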

mean = torch.arange(1,11.)

print(mean)

std = torch.arange(1,0,-0.1)

print(std)

torch.normal(mean = mean,std = std)

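# Each element is drawn from its own Gaussian: in one run, 1.3530 was sampled from mean 1 and standard deviation 1,

# -1.3498 was sampled from mean 2 and standard deviation 0.9, and so on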
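The per-element behaviour of torch.normal can be checked empirically. The sketch below is only an illustration: the means, standard deviations, sample count, and seed are assumptions chosen to make the two columns easy to tell apart.

```python
import torch

torch.manual_seed(0)  # fixed seed so repeated runs draw the same samples

mean = torch.tensor([0.0, 100.0])
std = torch.tensor([1.0, 0.1])

# Draw 1000 independent pairs; column k uses mean[k] and std[k]
samples = torch.stack([torch.normal(mean=mean, std=std) for _ in range(1000)])

print(samples.shape)
print(samples.mean(dim=0))  # should be close to mean
print(samples.std(dim=0))   # should be close to std
```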

# Uniform distribution on the interval [0, 1).

print(torch.rand((2,3)))

# torch.rand_like is to torch.rand what torch.zeros_like is to torch.zeros

# Uniformly distributed integers on the interval [low, high).

print(torch.randint(3,10,(3,3)))

# See also torch.randint_like

# Standard normal tensor of shape size; see also torch.randn_like


print(torch.randn((2,3)))

torch.randperm(n, out=None, dtype=torch.int64, layout=torch.strided, device=None, requires_grad=False)

Purpose: generate a random permutation of the integers 0 to n-1. perm == permutation
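A random permutation is often used as an index to shuffle another tensor. A minimal sketch, where `data` is a made-up example tensor:

```python
import torch

perm = torch.randperm(5)  # each of 0..4 appears exactly once, in random order
print(perm)

data = torch.tensor([10, 20, 30, 40, 50])
shuffled = data[perm]     # index with the permutation to shuffle
print(shuffled)
```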

torch.bernoulli(input, *, generator=None, out=None)

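Purpose: draw from a Bernoulli distribution (0-1 distribution, two-point distribution), using the values of input as probabilities.

Main parameters:

input (Tensor) - the probabilities of the distribution; every value in this tensor must lie in [0, 1]

Example: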

p = torch.empty(3, 3).uniform_(0, 1)

b = torch.bernoulli(p)

print("probability: \n{}, \nbernoulli_tensor:\n{}".format(p, b))

"""

probability:

tensor([[0.8256, 0.6711, 0.1326],

[0.8749, 0.7119, 0.6666],

[0.8028, 0.8902, 0.2548]]),

bernoulli_tensor:

tensor([[0., 1., 0.],

[1., 1., 1.],

[0., 1., 1.]])

"""
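Two quick checks make the role of the probabilities clearer: probabilities of exactly 0 or 1 give deterministic results, and the fraction of ones over many draws approaches p. The value 0.3 and the sample count below are arbitrary choices for illustration.

```python
import torch

# Probabilities of 0 and 1 leave no randomness
edge = torch.bernoulli(torch.tensor([0.0, 1.0, 0.0, 1.0]))
print(edge)

# With many draws, the fraction of ones approaches p
draws = torch.bernoulli(torch.full((10000,), 0.3))
print(draws.mean())
```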

Tensor operations

# Move to the GPU

if torch.cuda.is_available():

    tensor = tensor.to("cuda")

print(torch.is_tensor(tensor))  # Returns True if obj is a PyTorch tensor.

# Related checks: torch.is_complex and torch.is_floating_point

#Returns True if the input is a single element tensor which is not equal to zero after type conversions.

test = torch.tensor(1.0)

print(torch.is_nonzero(test))

"""

True

True

"""
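The other predicates mentioned above behave as follows. This is a small sketch with example tensors that are not part of the original notes.

```python
import torch

f = torch.tensor([1.0, 2.0])  # float32
i = torch.tensor([1, 2])      # int64
c = torch.tensor([1 + 2j])    # complex

float_checks = (torch.is_floating_point(f), torch.is_floating_point(i))
complex_checks = (torch.is_complex(c), torch.is_complex(f))
zero_check = torch.is_nonzero(torch.tensor(0.0))  # single-element input only

print(float_checks, complex_checks, zero_check)
```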

# Slicing and indexing

tensor = torch.rand(4, 4)

print(f"First row: {tensor[0]}")

print(f"First column: {tensor[:, 0]}")

print(f"Last column: {tensor[..., -1]}")

tensor[:,1] = 0

print(tensor)

"""

First row: tensor([0.5492, 0.0567, 0.2490, 0.5438])

First column: tensor([0.5492, 0.8558, 0.5434, 0.5295])

Last column: tensor([0.5438, 0.7757, 0.2420, 0.6499])

tensor([[0.5492, 0.0000, 0.2490, 0.5438],

[0.8558, 0.0000, 0.8031, 0.7757],

[0.5434, 0.0000, 0.0919, 0.2420],

[0.5295, 0.0000, 0.7761, 0.6499]])

"""

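# Concatenate a sequence of tensors along the given dimension. With torch.cat((A, B), dim), every dimension except dim must have the same size so that the tensors line up.

A, B = torch.ones(2, 3), torch.zeros(2, 3)  # example inputs (not in the original notes)

C = torch.cat( (A,B),0 )  # concatenate along dim 0 (stack vertically)

C = torch.cat( (A,B),1 )  # concatenate along dim 1 (side by side)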

t1 = torch.cat([tensor, tensor, tensor], dim=1)

print(t1)
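The shape rule for torch.cat can be seen by trying one concatenation that lines up and one that does not. The tensors `top` and `bottom` are hypothetical examples.

```python
import torch

top = torch.ones(2, 3)
bottom = torch.zeros(4, 3)

# Only dim 0 differs (2 vs 4), so concatenating along dim 0 works
rows = torch.cat((top, bottom), dim=0)
print(rows.shape)

# Along dim 1 the row counts (2 vs 4) would have to match, so this fails
try:
    torch.cat((top, bottom), dim=1)
    mismatch_rejected = False
except RuntimeError:
    mismatch_rejected = True
print(mismatch_rejected)
```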

# torch.arange returns a 1-D tensor; the deprecated torch.range includes end, so its result can be one element longer

print(torch.arange(5))

print(torch.arange(1,5,3))

"""

tensor([0, 1, 2, 3, 4])

tensor([1, 4])

"""

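# eye: ones on the diagonal

# Fill

torch.full([2,2],5)  # same values as torch.ones([2,2])*5; in recent PyTorch versions the dtype is inferred from fill_value, so this one is int64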

torch.ones([2,2])*5

"""

tensor([[5., 5.],

[5., 5.]])

"""
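One detail worth checking is the dtype. The sketch below assumes a reasonably recent PyTorch release, where torch.full infers the dtype from fill_value; older releases behaved differently.

```python
import torch

a = torch.full([2, 2], 5)    # integer fill value
b = torch.ones([2, 2]) * 5   # ones() defaults to float32
c = torch.full([2, 2], 5.0)  # float fill value

print(a.dtype, b.dtype, c.dtype)
same_values = bool((a == b).all())  # comparison promotes dtypes
print(same_values)
```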

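# chunk attempts to split a tensor into the specified number of chunks.

b = torch.rand([3,2,])

print(b)

torch.chunk(b,chunks = 2)

# There are many other stacking and splitting functions as well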

"""

tensor([[0.2334, 0.3184],

[0.6157, 0.6722],

[0.3690, 0.3402]])

(tensor([[0.2334, 0.3184],

[0.6157, 0.6722]]),

tensor([[0.3690, 0.3402]]))

"""
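The word "attempts" matters: chunk picks a chunk size by rounding up, so it can return fewer chunks than requested. torch.split is the alternative when exact sizes are needed. A short sketch with made-up inputs:

```python
import torch

b = torch.arange(10).reshape(5, 2)

# 5 rows into 2 chunks: chunk size is ceil(5 / 2) = 3, leaving 2 for the last one
chunk_sizes = [c.shape[0] for c in torch.chunk(b, chunks=2)]

# Asking for 4 chunks of 6 elements gives chunk size 2, hence only 3 chunks
fewer = torch.chunk(torch.arange(6), chunks=4)

# split takes explicit sizes instead of a chunk count
split_sizes = [p.shape[0] for p in torch.split(b, [1, 4])]

print(chunk_sizes, len(fewer), split_sizes)
```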

# gather: with dim=1, out[i][j] = t[i][index[i][j]]

t = torch.tensor([[1,2],[3,4]])

torch.gather(t,1,torch.tensor([[0,0],[1,0]]))

"""

tensor([[1, 1],

[4, 3]])

"""
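For a 2-D input and dim=1, gather picks, for every output position, the element of the same row at the column given by the index tensor. The explicit loop below is only a reference for comparison, not how PyTorch implements it.

```python
import torch

t = torch.tensor([[1, 2], [3, 4]])
index = torch.tensor([[0, 0], [1, 0]])

out = torch.gather(t, 1, index)

# Reference loop: out[i][j] = t[i][index[i][j]]
manual = torch.empty_like(index)
for i in range(index.shape[0]):
    for j in range(index.shape[1]):
        manual[i, j] = t[i, index[i, j]]

print(out)
print(torch.equal(out, manual))
```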

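# reshape: the element order is unchanged

# scatter: the in-place variant (scatter_) modifies the tensor itself, so its memory location does not change

# squeeze: compress redundant dimensions,

# i.e. remove dimensions of size 1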

b = torch.rand([3,2])

print(b.shape)

b = torch.reshape(b,[3,1,2])  # any shape works as long as the total number of elements stays the same

print(b)

b = torch.squeeze(b)

print(b)

b = torch.reshape(b,[3,2,1,1,1])

print(b)

b = torch.squeeze(b,dim = 2)  # dim is counted from 0

print(b.shape)

"""

torch.Size([3, 2])

tensor([[[0.5230, 0.6472]],

[[0.1827, 0.7827]],

[[0.3485, 0.8408]]])

tensor([[0.5230, 0.6472],

[0.1827, 0.7827],

[0.3485, 0.8408]])

tensor([[[[[0.5230]]],

[[[0.6472]]]],

[[[[0.1827]]],

[[[0.7827]]]],

[[[[0.3485]]],

[[[0.8408]]]]])

torch.Size([3, 2, 1, 1])

"""
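A compact way to see the rules for squeeze, together with its inverse unsqueeze, is to print the shapes. The input shape is an arbitrary example.

```python
import torch

b = torch.rand(3, 1, 2, 1)

all_removed = torch.squeeze(b)           # drops every size-1 dimension
one_removed = torch.squeeze(b, dim=1)    # drops only dim 1
unchanged = torch.squeeze(b, dim=0)      # dim 0 has size 3, so nothing happens
restored = torch.unsqueeze(all_removed, dim=1)  # inserts a size-1 dim at position 1

print(all_removed.shape, one_removed.shape, unchanged.shape, restored.shape)
```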

# stack: join tensors along a new dimension; all tensors must have the same shape

a = torch.rand([3,2])

b = torch.rand([3,2])

print(torch.stack([a,b],dim = 0).shape)

print(torch.stack([a,b],dim = 1).shape)

print(torch.stack([a,b],dim = 2).shape)

"""

torch.Size([2, 3, 2])

torch.Size([3, 2, 2])

torch.Size([3, 2, 2])

"""
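The difference between stack and cat can be summarised as: stack adds a new dimension, cat extends an existing one. A minimal sketch showing that stacking is equivalent to unsqueezing each tensor and then concatenating:

```python
import torch

a = torch.rand(3, 2)
b = torch.rand(3, 2)

stacked = torch.stack([a, b], dim=0)                          # new leading dimension
via_cat = torch.cat([a.unsqueeze(0), b.unsqueeze(0)], dim=0)  # same result by hand
plain_cat = torch.cat([a, b], dim=0)                          # no new dimension

print(stacked.shape, plain_cat.shape)
print(torch.equal(stacked, via_cat))
```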