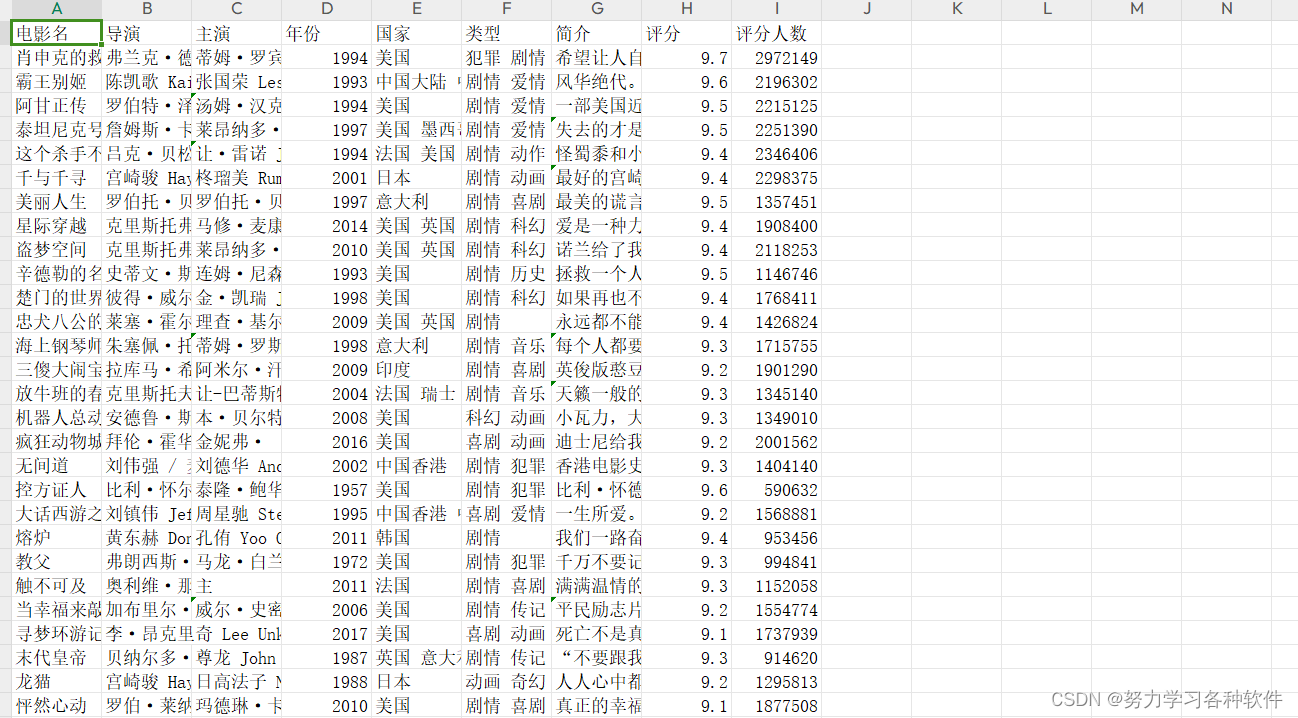

I. Scrape the data and save it to a CSV file

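import csv
from time import sleep

import numpy as np
import requests
import xlwt
from lxml import etree

url = 'https://movie.douban.com/top250'
# Send a browser-like User-Agent so the request is not rejected as a bot
headers = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/86.0.4240.198 Safari/537.36'
}

# One list per column, filled while scraping
titles_cn = []
titles_en = []
links = []
director = []
actors = []
years = []
nations = []
types = []
scores = []
rating_nums = []

# newline='' keeps the csv module from writing blank rows on Windows
fp = open('./douban_top250.csv', 'w', encoding='utf-8', newline='')
writer = csv.writer(fp)
# Header row: Chinese title, English title, detail-page link, director,
# actors, release year, country, genre, score, number of ratings
writer.writerow(
    ['电影中文名', '电影英文名', '电影详情页链接', '导演', '演员', '上映年份', '国家', '类型', '评分', '评分人数']
)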

for i in range(0,226,25):

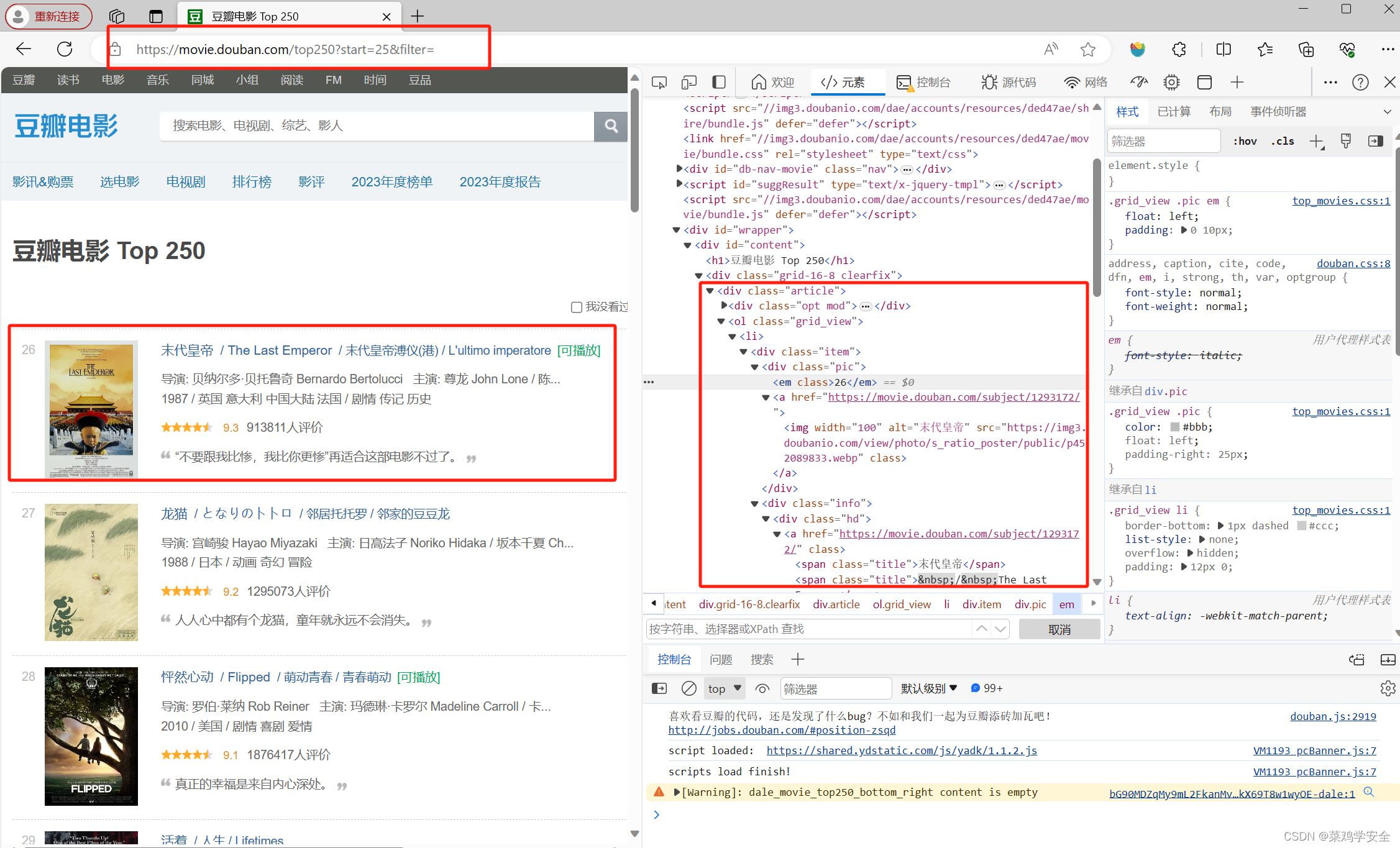

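    # Each page lists 25 films; `start` is the offset of the first film on the page
    url = f'https://movie.douban.com/top250?start={i}&filter='
    # The query string already carries the parameters, so no request body is sent
    response = requests.get(url, headers=headers, timeout=10)
    sleep(1)  # pause between pages to go easy on the server
    # print(response.status_code)
    # print(response.encoding)
    # print(response.text)
    html = response.text
    tree = etree.HTML(html)
    # One <li> element per film
    li_list = tree.xpath('//*[@id="content"]/div/div[1]/ol/li')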

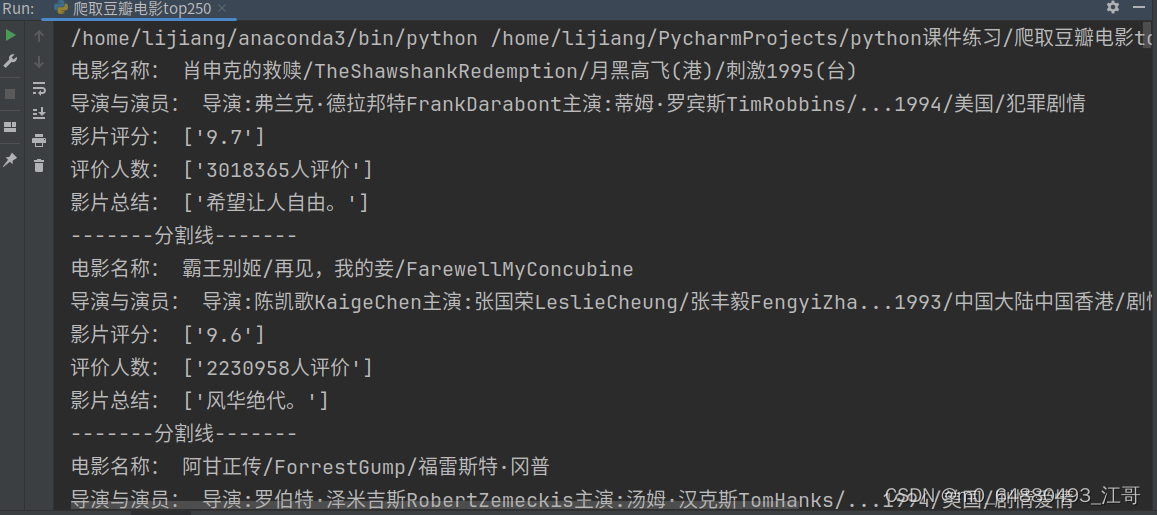

    for each in li_list:
        # Titles and link to the detail page
        title1 = each.xpath('./div/div[2]/div[1]/a/span[1]/text()')[0]
        titles_cn.append(title1)
        title2 = each.xpath('./div/div[2]/div[1]/a/span[2]/text()')[0].strip('\xa0/\xa0')
        titles_en.append(title2)
        link = each.xpath('./div/div[2]/div[1]/a/@href')[0]
        links.append(link)

        # First info line: "导演: ...<nbsp><nbsp><nbsp>主演: ..."
        info1 = each.xpath('./div/div[2]/div[2]/p[1]/text()[1]')[0].strip()
        split_info1 = info1.split('\xa0\xa0\xa0')
        # replace() removes the label itself; strip('导演: ') would strip a set of characters
        dirt = split_info1[0].replace('导演: ', '', 1).strip()
        director.append(dirt)
        if len(split_info1) == 2:
            ac = split_info1[1].replace('主演: ', '', 1).strip()
        else:
            # No actors listed; reset ac so the previous film's value is not written again
            ac = np.nan
        actors.append(ac)

        # Second info line: "year<nbsp>/<nbsp>country<nbsp>/<nbsp>genre"
        info2 = each.xpath('./div/div[2]/div[2]/p[1]/text()[2]')[0].strip()
        split_info2 = info2.split('\xa0/\xa0')
        # print(split_info2)
        year = split_info2[0]
        nation = split_info2[1]
        ftype = split_info2[2]
        years.append(year)
        nations.append(nation)
        types.append(ftype)

        # Score and number of ratings
        score = each.xpath('./div/div[2]/div[2]/div/span[2]/text()')[0]
        scores.append(score)
        num = each.xpath('./div/div[2]/div[2]/div/span[4]/text()')[0].strip('人评价')
        rating_nums.append(num)

        writer.writerow([title1, title2, link, dirt, ac, year, nation, ftype, score, num])
    print(f'------------ Page {i // 25 + 1} scraped! ------------')

fp.close()

print('------------------------------------------ Scraping finished! ---------------------------------------------')
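
One detail worth keeping in mind when cleaning the director and actor fields: `str.strip` takes a set of characters, not a prefix, so it can eat into a name that happens to begin with one of the label's characters. The sketch below shows one possible way to remove the labels safely. The helper names `strip_prefix` and `parse_people` are hypothetical (they are not part of the script), and the sample name `演员甲` is made up purely to trigger the problem.

```python
def strip_prefix(text, prefix):
    """Remove `prefix` once from the start of `text`, if it is there."""
    text = text.strip()
    if text.startswith(prefix):
        return text[len(prefix):].strip()
    return text


def parse_people(info_line):
    """Split '导演: A<nbsp><nbsp><nbsp>主演: B' into (director, actors).

    Returns None for actors when the line lists none.
    """
    parts = info_line.strip().split('\xa0\xa0\xa0')
    director = strip_prefix(parts[0], '导演:')
    actors = strip_prefix(parts[1], '主演:') if len(parts) > 1 else None
    return director, actors


# strip() treats its argument as a character set, so the leading '演' of the name is lost
print('导演: 演员甲'.strip('导演: '))
# strip_prefix() only removes the label
print(strip_prefix('导演: 演员甲', '导演:'))
```

`parse_people` returns `None` when no actors are listed; swap in `np.nan` if the missing value should match what the scraper stores in its lists.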
