RAGFlow 学习笔记

- 0. 引言

- 1. RAGFlow 支持的文档格式

- 2. 嵌入模型选择后不再允许改变

- 3. 干预文件解析

- 4. RAGFlow 与其他 RAG 产品有何不同?

- 5. RAGFlow 支持哪些语言?

- 6. 哪些嵌入模型可以本地部署?

- 7. 为什么RAGFlow解析文档的时间比LangChain要长?

- 8. 为什么RAGFlow比其他项目需要更多的资源?

- 9. RAGFlow 支持哪些架构或设备?

- 10. 可以通过URL分享对话吗?

- 11. 为什么我的 pdf 解析在接近完成时停止,而日志没有显示任何错误?

- 12. 为什么我无法将 10MB 以上的文件上传到本地部署的 RAGFlow?

- 13. 如何增加RAGFlow响应的长度?

- 14. Empty response(空响应)是什么意思?怎么设置呢?

- 15. 如何配置 RAGFlow 以 100% 匹配的结果进行响应,而不是利用 LLM?

- 16. 使用 DataGrip 连接 ElasticSearch

- 99. 功能扩展

0. 引言

这篇文章记录一下学习 RAGFlow 是一些笔记,方便以后自己查看和回忆。

1. RAGFlow 支持的文档格式

RAGFlow 支持的文件格式包括文档(PDF、DOC、DOCX、TXT、MD)、表格(CSV、XLSX、XLS)、图片(JPEG、JPG、PNG、TIF、GIF)和幻灯片(PPT、PPTX)。

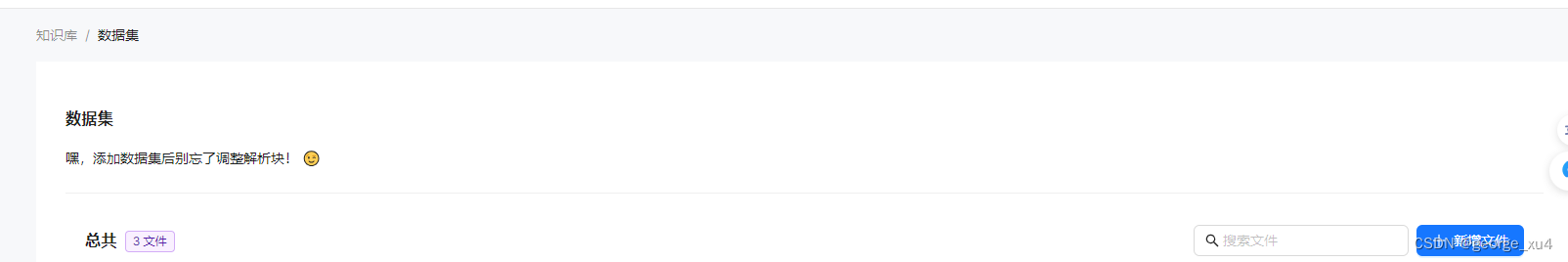

2. 嵌入模型选择后不再允许改变

一旦您选择了嵌入模型并使用它来解析文件,您就不再允许更改它。明显的原因是我们必须确保特定知识库中的所有文件都使用相同的嵌入模型进行解析(确保它们在相同的嵌入空间中进行比较)。

3. 干预文件解析

RAGFlow 具有可见性和可解释性,允许您查看分块结果并在必要时进行干预。

4. RAGFlow 与其他 RAG 产品有何不同?

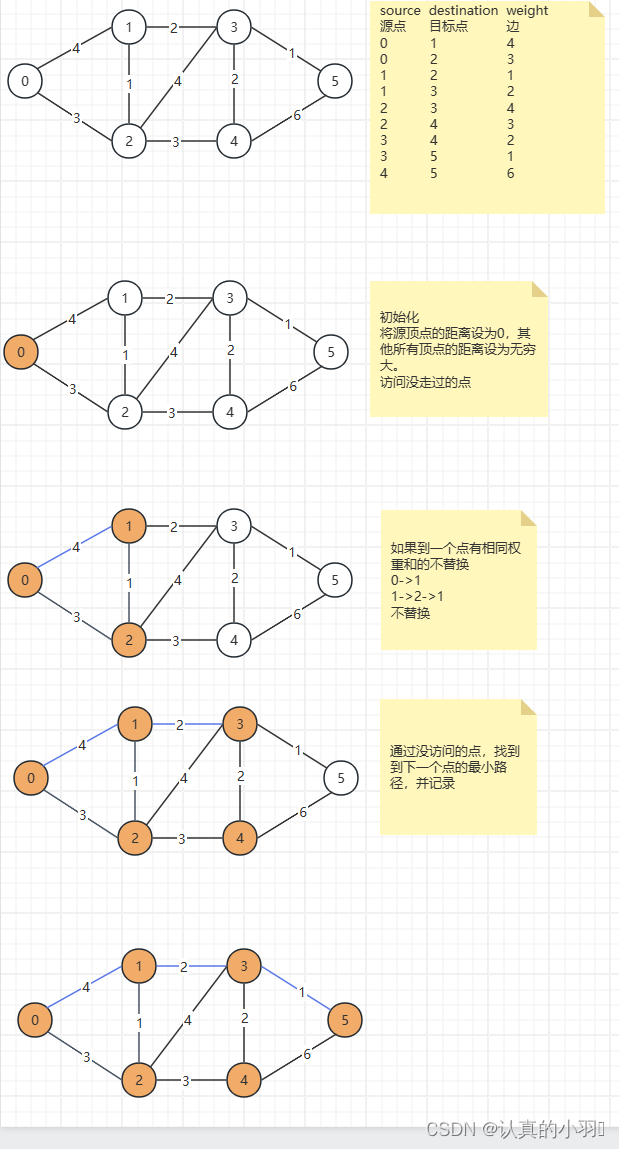

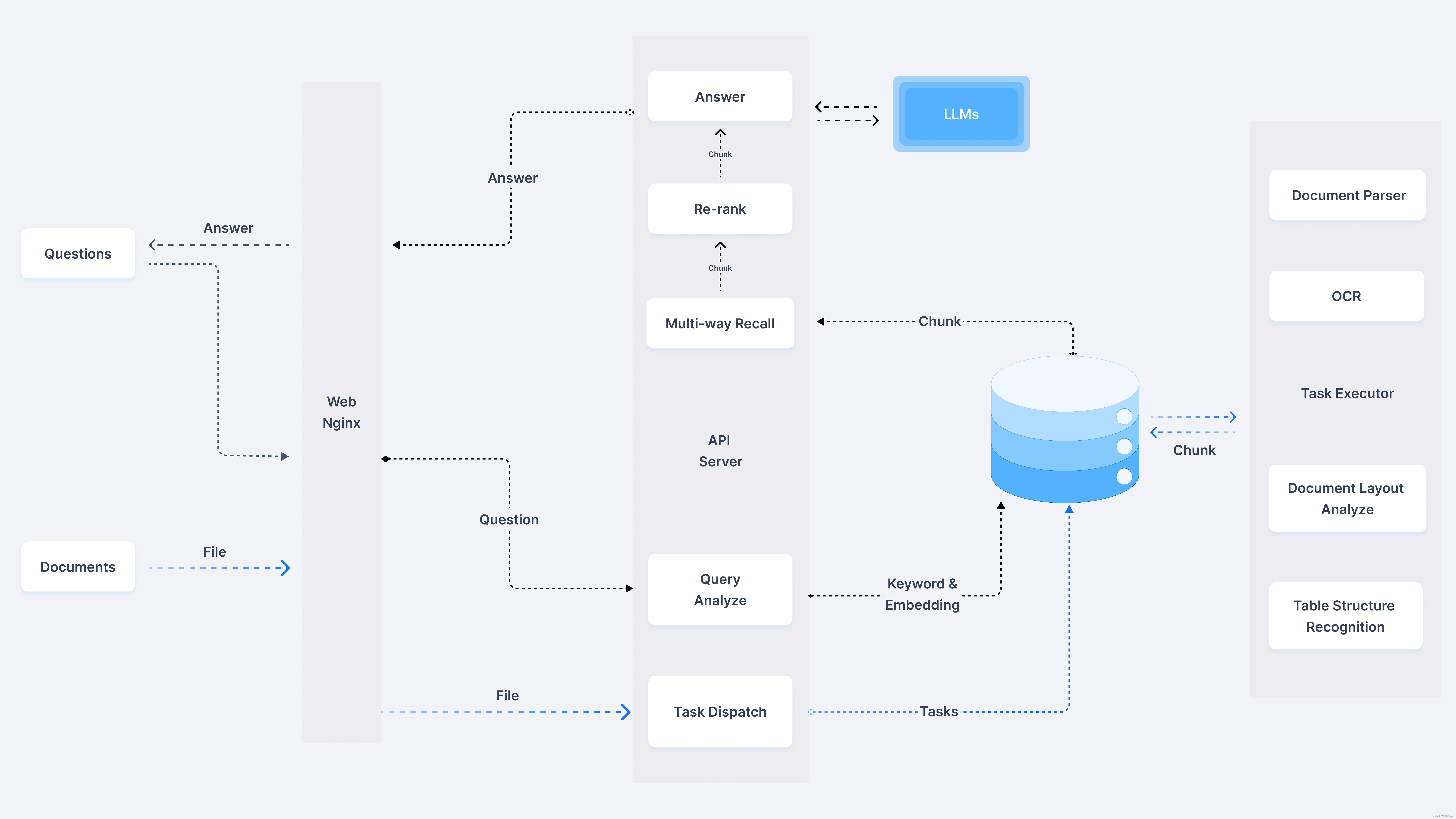

尽管 LLMs 显着推进了自然语言处理 (NLP),但“垃圾进垃圾出”的现状仍然没有改变。为此,RAGFlow 引入了与其他检索增强生成 (RAG) 产品相比的两个独特功能。

- 细粒度文档解析:文档解析涉及图片和表格,您可以根据需要灵活干预。

- 可追踪的答案,减少幻觉:您可以信任 RAGFlow 的答案,因为您可以查看支持它们的引文和参考文献。

5. RAGFlow 支持哪些语言?

目前有英文、简体中文、繁体中文。

6. 哪些嵌入模型可以本地部署?

- BAAI/bge-large-zh-v1.5

- BAAI/bge-base-en-v1.5

- BAAI/bge-large-en-v1.5

- BAAI/bge-small-en-v1.5

- BAAI/bge-small-zh-v1.5

- jinaai/jina-embeddings-v2-base-en

- jinaai/jina-embeddings-v2-small-en

- nomic-ai/nomic-embed-text-v1.5

- sentence-transformers/all-MiniLM-L6-v2

- maidalun1020/bce-embedding-base_v1

7. 为什么RAGFlow解析文档的时间比LangChain要长?

RAGFlow 使用了视觉模型,在布局分析、表格结构识别和 OCR(光学字符识别)等文档预处理任务中投入了大量精力。这会增加所需的额外时间。

8. 为什么RAGFlow比其他项目需要更多的资源?

RAGFlow 有许多用于文档结构解析的内置模型,这些模型占用了额外的计算资源。

9. RAGFlow 支持哪些架构或设备?

目前,我们仅支持 x86 CPU 和 Nvidia GPU。

10. 可以通过URL分享对话吗?

是的,此功能现已可用。

11. 为什么我的 pdf 解析在接近完成时停止,而日志没有显示任何错误?

如果您的 RAGFlow 部署在本地,则解析进程可能会因 RAM 不足而被终止。尝试通过增加 docker/.env 中的 MEM_LIMIT 值来增加内存分配。

12. 为什么我无法将 10MB 以上的文件上传到本地部署的 RAGFlow?

您可能忘记更新 MAX_CONTENT_LENGTH 环境变量:

将环境变量 MAX_CONTENT_LENGTH 添加到 ragflow/docker/.env:

MAX_CONTENT_LENGTH=100000000

更新 docker-compose.yml:

environment:

- MAX_CONTENT_LENGTH=${MAX_CONTENT_LENGTH}

重新启动 RAGFlow 服务器:

docker compose up ragflow -d

现在您应该能够上传大小小于 100MB 的文件。

13. 如何增加RAGFlow响应的长度?

右键单击所需的对话框以显示“Chat Configuration(聊天配置)”窗口。

切换到Model Setting(模型设置)选项卡并调整Max Tokens(最大令牌)滑块以获得所需的长度。

单击“确定”确认您的更改。

14. Empty response(空响应)是什么意思?怎么设置呢?

如果从您的知识库中未检索到任何内容,则您可以将系统的响应限制为您在“Empty response(空响应)”中指定的内容。如果您没有在空响应中指定任何内容,您就可以让您的 LLM 即兴创作,给它一个产生幻觉的机会。

15. 如何配置 RAGFlow 以 100% 匹配的结果进行响应,而不是利用 LLM?

单击页面中间顶部的知识库。

右键单击所需的知识库以显示配置对话框。

选择“Q&A(问答)”作为块方法,然后单击“保存”以确认您的更改。

16. 使用 DataGrip 连接 ElasticSearch

curl -X POST -u "elastic:infini_rag_flow" -k "http://localhost:1200/_license/start_trial?acknowledge=true&pretty"

99. 功能扩展

99-1. 扩展支持本地 LLM 功能

vi rag/utils/__init__.py

---

# encoder = tiktoken.encoding_for_model("gpt-3.5-turbo")

encoder = tiktoken.encoding_for_model("gpt-4-128k")

---

vi api/settings.py

---

"Local-OpenAI": {

"chat_model": "gpt-4-128k",

"embedding_model": "",

"image2text_model": "",

"asr_model": "",

},

---

vi api/db/init_data.py

---

factory_infos = [{

"name": "OpenAI",

"logo": "",

"tags": "LLM,TEXT EMBEDDING,SPEECH2TEXT,MODERATION",

"status": "1",

}, {

"name": "Local-OpenAI",

"logo": "",

"tags": "LLM",

"status": "1",

},

---

# ---------------------- Local-OpenAI ------------------------

{

"fid": factory_infos[0]["name"],

"llm_name": "gpt-4-128k",

"tags": "LLM,CHAT,128K",

"max_tokens": 128000,

"model_type": LLMType.CHAT.value

},

vi rag/llm/__init__.py

---

ChatModel = {

"OpenAI": GptTurbo,

"Local-OpenAI": GptTurbo,

---

vi web/src/pages/user-setting/setting-model/index.tsx

---

const IconMap = {

'Tongyi-Qianwen': 'tongyi',

Moonshot: 'moonshot',

OpenAI: 'openai',

'Local-OpenAI': 'openai',

'ZHIPU-AI': 'zhipu',

文心一言: 'wenxin',

Ollama: 'ollama',

Xinference: 'xinference',

DeepSeek: 'deepseek',

VolcEngine: 'volc_engine',

BaiChuan: 'baichuan',

Jina: 'jina',

};

---

vi web/src/pages/user-setting/setting-model/api-key-modal/index.tsx

---

{llmFactory === 'Local-OpenAI' && (

<Form.Item<FieldType>

label={t('baseUrl')}

name="base_url"

tooltip={t('baseUrlTip')}

>

<Input placeholder="https://api.openai.com/v1" />

</Form.Item>

)}

---

连接 MySQL 数据库,

1. 向llm_factories表插入数据

Local-OpenAI,1717812204952,2024-06-08 10:03:24,1717812204952,2024-06-08 10:03:24,"",LLM,1

2. 向llm表插入数据

gpt-4-128k,1717812204975,2024-06-08 10:03:24,1717812204975,2024-06-08 10:03:24,chat,Local-OpenAI,128000,"LLM,CHAT,128K",1

99-2. 扩展支持 OCI Cohere Embedding 功能

连接 MySQL 数据库,

1. 向llm_factories表插入数据

OCI-Cohere,1717812204967,2024-06-08 10:03:24,1717812204967,2024-06-08 10:03:24,"",TEXT EMBEDDING,1

2. 向llm表插入数据

cohere.embed-multilingual-v3.0,1717812204979,2024-06-08 10:03:24,1717812204979,2024-06-08 10:03:24,embedding,OCI-Cohere,512,"TEXT EMBEDDING,",1

vi api/apps/llm_app.py

---

fac = LLMFactoriesService.get_all()

return get_json_result(data=[f.to_dict() for f in fac if f.name not in ["Youdao", "FastEmbed", "BAAI"]])

# return get_json_result(data=[f.to_dict() for f in fac if f.name not in ["Youdao", "FastEmbed", "BAAI", "OCI-Cohere"]])

---

---

for m in llms:

m["available"] = m["fid"] in facts or m["llm_name"].lower() == "flag-embedding" or m["fid"] in ["Youdao","FastEmbed", "BAAI"]

# m["available"] = m["fid"] in facts or m["llm_name"].lower() == "flag-embedding" or m["fid"] in ["Youdao","FastEmbed", "BAAI", "OCI-Cohere"]

---

vi api/settings.py

---

"OCI-Cohere": {

"chat_model": "",

"embedding_model": "cohere.embed-multilingual-v3.0",

"image2text_model": "",

"asr_model": "",

},

---

vi api/db/init_data.py

---

factory_infos = [{

"name": "OpenAI",

"logo": "",

"tags": "LLM,TEXT EMBEDDING,SPEECH2TEXT,MODERATION",

"status": "1",

}, {

"name": "OCI-Cohere",

"logo": "",

"tags": "TEXT EMBEDDING",

"status": "1",

},

---

# ---------------------- OCI-Cohere ------------------------

{

"fid": factory_infos[0]["name"],

"llm_name": "cohere.embed-multilingual-v3.0",

"tags": "TEXT EMBEDDING,512",

"max_tokens": 512,

"model_type": LLMType.EMBEDDING.value

},

vi rag/llm/__init__.py

---

EmbeddingModel = {

"OCI-Cohere": OCICohereEmbed,

---

vi rag/llm/embedding_model.py

---

class OCICohereEmbed(Base):

def __init__(self, key, model_name="cohere.embed-multilingual-v3.0",

base_url="https://inference.generativeai.us-chicago-1.oci.oraclecloud.com"):

if not base_url:

base_url = "https://inference.generativeai.us-chicago-1.oci.oraclecloud.com"

CONFIG_PROFILE = "DEFAULT"

config = oci.config.from_file('~/.oci/config', CONFIG_PROFILE)

self.client = oci.generative_ai_inference.GenerativeAiInferenceClient(config=config, service_endpoint=base_url,

retry_strategy=oci.retry.NoneRetryStrategy(),

timeout=(10, 240))

self.model_name = model_name

self.compartment = key

def encode(self, texts: list, batch_size=1):

token_count = 0

texts = [truncate(t, 512) for t in texts]

for t in texts:

token_count += num_tokens_from_string(t)

embed_text_detail = oci.generative_ai_inference.models.EmbedTextDetails()

embed_text_detail.serving_mode = oci.generative_ai_inference.models.OnDemandServingMode(

model_id=self.model_name)

embed_text_detail.inputs = texts

embed_text_detail.truncate = "NONE"

embed_text_detail.compartment_id = self.compartment

res = self.client.embed_text(embed_text_detail)

print(f"{res.data=}")

return res.data.embeddings, token_count

def encode_queries(self, text):

text = truncate(text, 512)

token_count = num_tokens_from_string(text)

res = self.encode(texts=[text])

return res[0], token_count

在服务器上设置好 ~/.oci/config。

添加的模型时,OCI-Cohere输入使用的OCI CompartmentID。

制作图标,访问 https://brandfetch.com/oracle.com 下载 oracle svg 图标,保存到 web/src/assets/svg/llm 目录下面。

vi web/src/pages/user-setting/setting-model/index.tsx

---

const IconMap = {

'OCI-Cohere': 'oracle',

---

99-3. 扩展支持 OCI Cohere Command-r 功能

连接 MySQL 数据库,

1. 向llm_factories表插入数据

OCI-Cohere,1717812204967,2024-06-08 10:03:24,1717812204967,2024-06-08 10:03:24,"",LLM,TEXT EMBEDDING,1

2. 向llm表插入数据

cohere.embed-multilingual-v3.0,1717812204979,2024-06-08 10:03:24,1717812204979,2024-06-08 10:03:24,embedding,OCI-Cohere,512,"TEXT EMBEDDING,",1

vi api/apps/llm_app.py

---

fac = LLMFactoriesService.get_all()

return get_json_result(data=[f.to_dict() for f in fac if f.name not in ["Youdao", "FastEmbed", "BAAI"]])

# return get_json_result(data=[f.to_dict() for f in fac if f.name not in ["Youdao", "FastEmbed", "BAAI", "OCI-Cohere"]])

---

---

for m in llms:

m["available"] = m["fid"] in facts or m["llm_name"].lower() == "flag-embedding" or m["fid"] in ["Youdao","FastEmbed", "BAAI"]

# m["available"] = m["fid"] in facts or m["llm_name"].lower() == "flag-embedding" or m["fid"] in ["Youdao","FastEmbed", "BAAI", "OCI-Cohere"]

---

vi api/settings.py

---

"OCI-Cohere": {

"chat_model": "cohere.command-r-16k",

"embedding_model": "cohere.embed-multilingual-v3.0",

"image2text_model": "",

"asr_model": "",

},

---

vi api/db/init_data.py

---

factory_infos = [{

"name": "OpenAI",

"logo": "",

"tags": "LLM,TEXT EMBEDDING,SPEECH2TEXT,MODERATION",

"status": "1",

}, {

"name": "OCI-Cohere",

"logo": "",

"tags": "LLM,TEXT EMBEDDING",

"status": "1",

},

---

# ---------------------- OCI-Cohere ------------------------

{

"fid": factory_infos[0]["name"],

"llm_name": "cohere.embed-multilingual-v3.0",

"tags": "TEXT EMBEDDING,512",

"max_tokens": 512,

"model_type": LLMType.EMBEDDING.value

},

{

"fid": factory_infos[0]["name"],

"llm_name": "cohere.command-r-16k",

"tags": "LLM,CHAT,16K",

"max_tokens": 16385,

"model_type": LLMType.CHAT.value

},

vi rag/llm/__init__.py

---

ChatModel = {

"OCI-Cohere": OCICohereChat,

---

vi rag/llm/chat_model.py

---

class OCICohereChat(Base):

def __init__(self, key, model_name="cohere.command-r-16k",

base_url="https://inference.generativeai.us-chicago-1.oci.oraclecloud.com"):

if not base_url:

base_url = "https://inference.generativeai.us-chicago-1.oci.oraclecloud.com"

CONFIG_PROFILE = "DEFAULT"

config = oci.config.from_file('~/.oci/config', CONFIG_PROFILE)

self.client = oci.generative_ai_inference.GenerativeAiInferenceClient(config=config, service_endpoint=base_url,

retry_strategy=oci.retry.NoneRetryStrategy(),

timeout=(10, 240))

self.model_name = model_name

self.compartment = key

@staticmethod

def _format_params(params):

return {

"max_tokens": params.get("max_tokens", 3999),

"temperature": params.get("temperature", 0),

"frequency_penalty": params.get("frequency_penalty", 0),

"top_p": params.get("top_p", 0.75),

"top_k": params.get("top_k", 0),

}

def chat(self, system, history, gen_conf):

chat_detail = oci.generative_ai_inference.models.ChatDetails()

chat_request = oci.generative_ai_inference.models.CohereChatRequest()

params = self._format_params(gen_conf)

chat_request.max_tokens = params.get("max_tokens")

chat_request.temperature = params.get("temperature")

chat_request.frequency_penalty = params.get("frequency_penalty")

chat_request.top_p = params.get("top_p")

chat_request.top_k = params.get("top_k")

chat_detail.serving_mode = oci.generative_ai_inference.models.OnDemandServingMode(

model_id=self.model_name)

chat_detail.chat_request = chat_request

chat_detail.compartment_id = self.compartment

chat_request.is_stream = False

if system:

chat_request.preamble_override = system

print(f"{history[-1]=}")

chat_request.message = history[-1]['content']

chat_response = self.client.chat(chat_detail)

# Print result

print("**************************Chat Result**************************")

print(vars(chat_response))

chat_response = vars(chat_response)

chat_response_data = chat_response['data']

chat_response_data_chat_response = chat_response_data.chat_response

ans = chat_response_data_chat_response.text

token_count = 0

for t in history[-1]['content']:

token_count += num_tokens_from_string(t)

for t in ans:

token_count += num_tokens_from_string(t)

return ans, token_count

def chat_streamly(self, system, history, gen_conf):

chat_detail = oci.generative_ai_inference.models.ChatDetails()

chat_request = oci.generative_ai_inference.models.CohereChatRequest()

params = self._format_params(gen_conf)

chat_request.max_tokens = params.get("max_tokens")

chat_request.temperature = params.get("temperature")

chat_request.frequency_penalty = params.get("frequency_penalty")

chat_request.top_p = params.get("top_p")

chat_request.top_k = params.get("top_k")

chat_detail.serving_mode = oci.generative_ai_inference.models.OnDemandServingMode(

model_id=self.model_name)

chat_detail.chat_request = chat_request

chat_detail.compartment_id = self.compartment

chat_request.is_stream = True

if system:

chat_request.preamble_override = system

print(f"{history[-1]=}")

token_count = 0

for t in history[-1]['content']:

token_count += num_tokens_from_string(t)

chat_request.message = history[-1]['content']

chat_response = self.client.chat(chat_detail)

# Print result

print("**************************Chat Result**************************")

chat_response = vars(chat_response)

chat_response_data = chat_response['data']

# for msg in chat_response_data.iter_content(chunk_size=None):

for event in chat_response_data.events():

token_count += 1

yield json.loads(event.data)["text"]

yield token_count

在服务器上设置好 ~/.oci/config。

添加的模型时,OCI-Cohere输入使用的OCI CompartmentID。

99-4. 扩展支持 Cohere Rerank 功能

连接 MySQL 数据库,

1. 向llm_factories表插入数据

Cohere,1717812204971,2024-06-08 10:03:24,1717812204971,2024-06-08 10:03:24,"",TEXT RE-RANK,1

2. 向llm表插入数据

rerank-multilingual-v3.0,1717812205057,2024-06-08 10:03:25,1717812205057,2024-06-08 10:03:25,rerank,Cohere,4096,"RE-RANK,4k",1

vi api/settings.py

---

"Cohere": {

"chat_model": "",

"embedding_model": "",

"image2text_model": "",

"asr_model": "",

"rerank_model": "rerank-multilingual-v3.0",

},

---

vi api/db/init_data.py

---

factory_infos = [{

"name": "OpenAI",

"logo": "",

"tags": "LLM,TEXT EMBEDDING,SPEECH2TEXT,MODERATION",

"status": "1",

}, {

"name": "Cohere",

"logo": "",

"tags": "TEXT RE-RANK",

"status": "1",

}

---

# ---------------------- Cohere ------------------------

{

"fid": factory_infos[12]["name"],

"llm_name": "rerank-multilingual-v3.0",

"tags": "RE-RANK,4k",

"max_tokens": 4096,

"model_type": LLMType.RERANK.value

},

vi rag/llm/__init__.py

---

RerankModel = {

"Cohere": CohereRerank,

---

vi rag/llm/rerank_model.py

---

def similarity(self, query: str, texts: list):

token_count = 1

texts = [truncate(t, 4096) for t in texts]

for t in texts:

token_count += num_tokens_from_string(t)

for t in query:

token_count += num_tokens_from_string(t)

response = self.co.rerank(

model=self.model,

query=query,

documents=texts,

top_n=len(texts),

return_documents=False,

)

return np.array([r.relevance_score for r in response.results]), token_count

添加的模型时,Cohere输入使用的Cohere 的 API Key。

制作图标,访问 https://brandfetch.com/cohere.com 下载 cohere svg 图标,保存到 web/src/assets/svg/llm 目录下面。

vi web/src/pages/user-setting/setting-model/index.tsx

---

const IconMap = {

'Cohere': 'cohere',

---

未完待续!

![[图解]企业应用架构模式2024新译本讲解12-领域模型5](https://img-blog.csdnimg.cn/direct/16f5a61e5a094747bb6ee1d0c368e7f1.png)