
Introduction

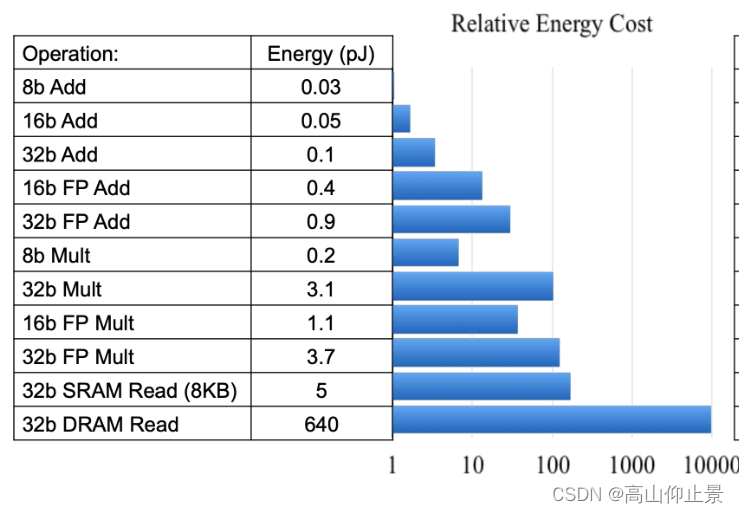

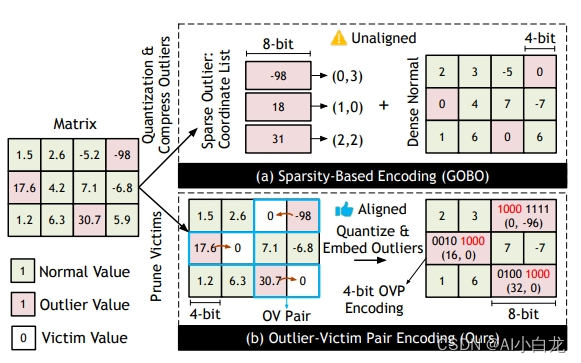

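Quantization converts a model's weights and activations from floating point to integers. This shrinks the model and speeds up inference, with only a small impact on accuracy.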
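To make the float-to-integer conversion concrete, here is a minimal sketch of affine int8 quantization on a handful of values. The helper names `quantize_int8` and `dequantize_int8` are hypothetical, and the single scale and zero point per tensor is an assumption made for illustration; it is not necessarily the exact scheme PyTorch uses internally.

```python
import torch

QMIN, QMAX = -128, 127  # representable range of a signed 8-bit integer


def quantize_int8(x):
    """Map a float tensor onto int8 with one scale and zero point (illustrative helper)."""
    # Include 0.0 in the range so that zero is represented exactly.
    x_min = min(x.min().item(), 0.0)
    x_max = max(x.max().item(), 0.0)
    scale = (x_max - x_min) / (QMAX - QMIN)
    if scale == 0.0:  # all-zero input: any positive scale works
        scale = 1.0
    zero_point = int(round(QMIN - x_min / scale))
    q = torch.clamp(torch.round(x / scale) + zero_point, QMIN, QMAX).to(torch.int8)
    return q, scale, zero_point


def dequantize_int8(q, scale, zero_point):
    """Recover approximate float values from the int8 representation."""
    return (q.to(torch.float32) - zero_point) * scale


weights = torch.tensor([-1.0, -0.25, 0.0, 0.4, 1.5])
q, scale, zero_point = quantize_int8(weights)
restored = dequantize_int8(q, scale, zero_point)
max_error = (weights - restored).abs().max().item()
print(q, scale, zero_point, max_error)
```

Each value is stored in one byte instead of four, and the round-trip error stays within about half a quantization step (`scale / 2`), which is why the accuracy impact tends to be small.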

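In this tutorial, we apply the simplest form of quantization, dynamic quantization, to an LSTM-based next-word prediction model that closely follows the word language model from the PyTorch examples.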

# imports
import os
from io import open
import time

import torch
import torch.nn as nn
import torch.nn.functional as F

Define the model

Here we define the LSTM model architecture, following the model from the word language model example.

class LSTMModel(nn.Module):
    """Container module with an encoder, a recurrent module, and a decoder."""

    def __init__(self, ntoken, ninp, nhid, nlayers, dropout=0.5):
        super(LSTMModel, self).__init__()
        self.drop = nn.Dropout(dropout)
        self.encoder = nn.Embedding(ntoken, ninp)
        self.rnn = nn.LSTM(ninp, nhid, nlayers, dropout=dropout)
        self.decoder = nn.Linear(nhid, ntoken)

        self.init_weights()

        self.nhid = nhid
        self.nlayers = nlayers

    def init_weights(self):
        initrange = 0.1
        self.encoder.weight.data.uniform_(-initrange, initrange)
        self.decoder.bias.data.zero_()
        self.decoder.weight.data.uniform_(-initrange, initrange)

    def forward(self, input, hidden):
        emb = self.drop(self.encoder(input))
        output, hidden = self.rnn(emb, hidden)
        output = self.drop(output)
        decoded = self.decoder(output)
        return decoded, hidden

    def init_hidden(self, bsz):
        # Reuse an existing parameter so the new tensors share its dtype and device.
        weight = next(self.parameters())
        return (weight.new_zeros(self.nlayers, bsz, self.nhid),
                weight.new_zeros(self.nlayers, bsz, self.nhid))

Load the text data

Next, we load the Wikitext-2 dataset into a Corpus, again following the preprocessing from the word language model example.

class Dictionary(object):
    def __init__(self):
        self.word2idx = {}
        self.idx2word = []

    def add_word(self, word):
        if word not in self.word2idx:
            self.idx2word.append(word)
            self.word2idx[word] = len(self.idx2word) - 1
        return self.word2idx[word]

    def __len__(self):
        return len(self.idx2word)


class Corpus(object):
    def __init__(self, path):
        self.dictionary = Dictionary()
        self.train = self.tokenize(os.path.join(path, 'train.txt'))
        self.valid = self.tokenize(os.path.join(path, 'valid.txt'))
        self.test = self.tokenize(os.path.join(path, 'test.txt'))

    def tokenize(self, path):
        """Tokenizes a text file."""
        print(path)
        assert os.path.exists(path), f"Error: The path {path} does not exist."
        # Add words to the dictionary
        with open(path, 'r', encoding="utf8") as f:
            for line in f:
                words = line.split() + ['<eos>']
                for word in words:
                    self.dictionary.add_word(word)

        # Tokenize file content
        with open(path, 'r', encoding="utf8") as f:
            idss = []
            for line in f:
                words = line.split() + ['<eos>']
                ids = []
                for word in words:
                    ids.append(self.dictionary.word2idx[word])
                idss.append(torch.tensor(ids).type(torch.int64))
            ids = torch.cat(idss)

        return ids


# Forward slashes avoid the invalid "\d" escape and work on Windows and POSIX alike.
model_data_filepath = "./data/"

corpus = Corpus(model_data_filepath + 'wikitext-2')

Load the pretrained model

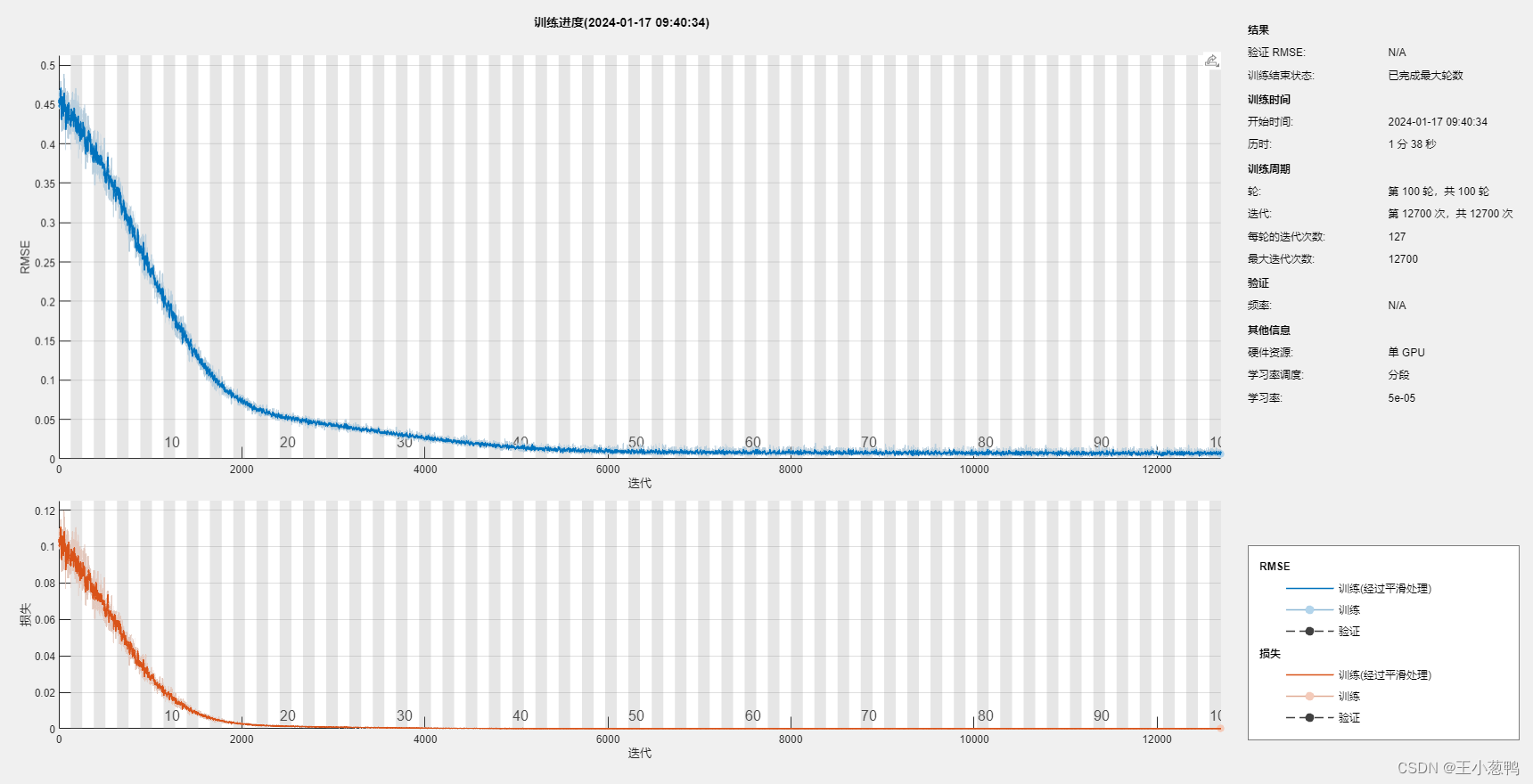

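This is a tutorial on dynamic quantization, a quantization technique that is applied after a model has been trained. We therefore simply load some pretrained weights into this model architecture. These weights were obtained by training for five epochs with the default settings of the word language model example.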
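As a rough illustration of loading trained weights into a freshly constructed architecture, the sketch below saves and restores a `state_dict`. The small `nn.Sequential` network and the in-memory buffer are stand-ins chosen for this example (assumptions, not the tutorial's model or weights file), so the snippet runs without any files on disk.

```python
import io

import torch
import torch.nn as nn


def make_model():
    # Small stand-in network; the layer sizes are arbitrary.
    return nn.Sequential(nn.Linear(4, 8), nn.ReLU(), nn.Linear(8, 2))


trained = make_model()  # pretend these weights came out of training

# An in-memory buffer stands in for a .pth file on disk.
buffer = io.BytesIO()
torch.save(trained.state_dict(), buffer)
buffer.seek(0)

# Build the same architecture again and load the saved weights onto the CPU.
restored = make_model()
state = torch.load(buffer, map_location=torch.device("cpu"))
restored.load_state_dict(state)
restored.eval()

x = torch.randn(3, 4)
with torch.no_grad():
    same = torch.allclose(trained(x), restored(x))
print(same)
```

The architecture has to match the one the weights were saved from; `load_state_dict` raises an error if parameter names or shapes differ.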

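ntokens = len(corpus.dictionary)

model = LSTMModel(
    ntoken=ntokens,
    ninp=512,
    nhid=256,
    nlayers=5,
)

# Loading of the pretrained weights is commented out here. While it stays
# disabled, the model runs with its randomly initialized weights.
# model.load_state_dict(
#     torch.load(
#         model_data_filepath + 'word_language_model_quantize.pth',
#         map_location=torch.device('cpu')
#     )
# )

model.eval()
print(model)

Now let's generate some text to make sure the pretrained model works properly. As before, we follow the word language model example.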

input_ = torch.randint(ntokens, (1, 1), dtype=torch.long)
hidden = model.init_hidden(1)
temperature = 1.0
num_words = 1000

with open(model_data_filepath + 'out.txt', 'w') as outf:
    with torch.no_grad():  # no tracking history
        for i in range(num_words):
            output, hidden = model(input_, hidden)
            # Turn the scores into unnormalized sampling weights.
            word_weights = output.squeeze().div(temperature).exp().cpu()
            word_idx = torch.multinomial(word_weights, 1)[0]
            input_.fill_(word_idx)

            word = corpus.dictionary.idx2word[word_idx]

            outf.write(str(word.encode('utf-8')) + ('\n' if i % 20 == 19 else ' '))

            if i % 100 == 0:
                print('| Generated {}/{} words'.format(i, num_words))

with open(model_data_filepath + 'out.txt', 'r') as outf:
    all_output = outf.read()
    print(all_output)

It's no GPT-2, but it looks like the model has started to learn the structure of language!
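The sampling step in the generation loop above can be looked at in isolation. The sketch below draws words from a made-up set of scores for a four-word vocabulary at two temperatures. The scores and the helper name `sample_words` are assumptions for illustration only.

```python
import torch

torch.manual_seed(0)

# Made-up scores for a 4-word vocabulary; index 0 is the most likely word.
logits = torch.tensor([2.0, 1.0, 0.1, -1.0])


def sample_words(logits, temperature, n):
    """Draw n word indices using temperature-scaled multinomial sampling."""
    weights = logits.div(temperature).exp()  # unnormalized sampling weights
    return torch.multinomial(weights, n, replacement=True)


# A low temperature concentrates samples on the top word,
# a high temperature spreads them across the vocabulary.
sharp = torch.bincount(sample_words(logits, 0.1, 2000), minlength=4)
flat = torch.bincount(sample_words(logits, 10.0, 2000), minlength=4)
print(sharp.tolist(), flat.tolist())
```

With `temperature = 1.0`, as in the loop above, the weights are simply the exponentiated scores, so words are drawn in proportion to the model's softmax probabilities.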

We're almost ready to demonstrate dynamic quantization. We just need to define a few more helper functions:

bptt = 25
criterion = nn.CrossEntropyLoss()
eval_batch_size = 1

# create test data set
def batchify(data, bsz):
    # Work out how cleanly we can divide the dataset into ``bsz`` parts.
    nbatch = data.size(0) // bsz
    # Trim off any extra elements that wouldn't cleanly fit (remainders).
    data = data.narrow(0, 0, nbatch * bsz)
    # Evenly divide the data across the ``bsz`` batches.
    return data.view(bsz, -1).t().contiguous()

test_data = batchify(corpus.test, eval_batch_size)

# Evaluation functions
def get_batch(source, i):
    seq_len = min(bptt, len(source) - 1 - i)
    data = source[i:i + seq_len]
    target = source[i + 1:i + 1 + seq_len].reshape(-1)
    return data, target

def repackage_hidden(h):
    """Wraps hidden states in new Tensors, to detach them from their history."""
    if isinstance(h, torch.Tensor):
        return h.detach()
    else:
        return tuple(repackage_hidden(v) for v in h)

def evaluate(model_, data_source):
    # Turn on evaluation mode which disables dropout.
    model_.eval()
    total_loss = 0.
    hidden = model_.init_hidden(eval_batch_size)
    with torch.no_grad():
        for i in range(0, data_source.size(0) - 1, bptt):
            data, targets = get_batch(data_source, i)
            output, hidden = model_(data, hidden)
            hidden = repackage_hidden(hidden)
            output_flat = output.view(-1, ntokens)
            total_loss += len(data) * criterion(output_flat, targets).item()
    return total_loss / (len(data_source) - 1)
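To see what `batchify` and `get_batch` do to the data, the following sketch runs them on a tiny stand-in corpus of eleven consecutive integers. The short `bptt = 3` window and the toy tensor are assumptions chosen to keep the output readable.

```python
import torch

bptt = 3  # short window for readability (the helpers above use 25)


def batchify(data, bsz):
    nbatch = data.size(0) // bsz
    data = data.narrow(0, 0, nbatch * bsz)      # drop tokens that do not fit evenly
    return data.view(bsz, -1).t().contiguous()  # one column per batch element


def get_batch(source, i):
    seq_len = min(bptt, len(source) - 1 - i)
    data = source[i:i + seq_len]
    target = source[i + 1:i + 1 + seq_len].reshape(-1)  # inputs shifted by one step
    return data, target


tokens = torch.arange(11)      # stand-in for a tokenized corpus: 0, 1, ..., 10
batched = batchify(tokens, 2)  # 5 rows x 2 columns; token 10 is dropped
data, target = get_batch(batched, 0)

print(batched.tolist())  # [[0, 5], [1, 6], [2, 7], [3, 8], [4, 9]]
print(data.tolist())     # [[0, 5], [1, 6], [2, 7]]
print(target.tolist())   # [1, 6, 2, 7, 3, 8]
```

Each column is an independent stream of text, and every target is the token that follows the corresponding input one step later, which is what next-word prediction is evaluated on.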

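Test dynamic quantization

Finally, we can call torch.quantization.quantize_dynamic on the model! Specifically:

- We specify that the nn.LSTM and nn.Linear modules in the model should be quantized
- We specify that the weights should be converted to int8 values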

import torch.quantization

quantized_model = torch.quantization.quantize_dynamic(
    model, {nn.LSTM, nn.Linear}, dtype=torch.qint8
)
print(quantized_model)
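The same call can be tried on a much smaller network to see what it changes. The sketch below uses a hypothetical `TinyLSTM` module with arbitrary layer sizes (both are assumptions for this example) and compares the float and quantized outputs on a random input.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)


class TinyLSTM(nn.Module):
    """Hypothetical small model with one LSTM layer and one linear decoder."""

    def __init__(self):
        super().__init__()
        self.rnn = nn.LSTM(16, 32)
        self.decoder = nn.Linear(32, 10)

    def forward(self, x):
        out, _ = self.rnn(x)
        return self.decoder(out)


float_model = TinyLSTM().eval()

# Returns a converted copy by default; float_model itself is left unchanged.
quantized = torch.quantization.quantize_dynamic(
    float_model, {nn.LSTM, nn.Linear}, dtype=torch.qint8
)

x = torch.randn(5, 1, 16)  # (sequence length, batch size, input features)
with torch.no_grad():
    diff = (float_model(x) - quantized(x)).abs().max().item()

print(type(quantized.decoder))  # a dynamically quantized replacement for nn.Linear
print(diff)                     # small, but generally not exactly zero
```

The listed module types are swapped for dynamically quantized counterparts that store int8 weights, while activations are quantized on the fly at inference time. The exact output difference depends on the weights and the quantization backend.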

The printed model looks much the same, so how has this helped us? First, we see a significant reduction in model size:

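def print_size_of_model(model):
    # Serialize the weights to a temporary file and report its size.
    torch.save(model.state_dict(), "temp.p")
    print('Size (MB):', os.path.getsize("temp.p") / 1e6)
    os.remove('temp.p')

print_size_of_model(model)
print_size_of_model(quantized_model)

Second, we see faster inference time, with no difference in evaluation loss: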

Note: we set the number of threads to one for a single-threaded comparison, since quantized models run single-threaded.

torch.set_num_threads(1)

def time_model_evaluation(model, test_data):
    s = time.time()
    loss = evaluate(model, test_data)
    elapsed = time.time() - s
    print('''loss: {0:.3f}\nelapsed time (seconds): {1:.1f}'''.format(loss, elapsed))

time_model_evaluation(model, test_data)
time_model_evaluation(quantized_model, test_data)

Running this locally on a MacBook Pro, inference takes about 200 seconds without quantization and only about 100 seconds with quantization.
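Wall-clock timings like these vary from run to run, so it can help to repeat a measurement and report a ratio. The sketch below shows one way to do that. The helpers `time_call` and `speedup` are hypothetical names, and the timed workload is a placeholder rather than the model evaluation itself.

```python
import time


def time_call(fn, repeats=3):
    """Return the best wall-clock time of fn() over several runs (illustrative helper)."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        best = min(best, time.perf_counter() - start)
    return best


def speedup(baseline_seconds, candidate_seconds):
    """How many times faster the candidate is than the baseline."""
    return baseline_seconds / candidate_seconds


# Placeholder workload; in practice this would wrap the evaluation call.
elapsed = time_call(lambda: sum(i * i for i in range(100_000)))
print(f"elapsed: {elapsed:.4f} s")

# Using the approximate figures quoted above: 200 s versus 100 s.
print(speedup(200.0, 100.0))  # 2.0
```

Taking the best of several runs reduces the influence of background load on the comparison.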

Conclusion

Dynamic quantization is an easy way to reduce model size while having only a limited effect on accuracy.
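As a quick check of the size reduction on a model small enough to build anywhere, the sketch below serializes a float network and its dynamically quantized copy to memory and compares the byte counts. The two-layer network and the helper name `state_dict_megabytes` are assumptions for this example.

```python
import io

import torch
import torch.nn as nn


def state_dict_megabytes(model):
    """Serialize the model's state_dict to memory and return its size in MB."""
    buffer = io.BytesIO()
    torch.save(model.state_dict(), buffer)
    return buffer.getbuffer().nbytes / 1e6


# Stand-in network; the layer sizes are arbitrary.
float_model = nn.Sequential(
    nn.Linear(512, 512), nn.ReLU(), nn.Linear(512, 512)
).eval()

quantized = torch.quantization.quantize_dynamic(
    float_model, {nn.Linear}, dtype=torch.qint8
)

float_mb = state_dict_megabytes(float_model)
quant_mb = state_dict_megabytes(quantized)
print(f"float32: {float_mb:.2f} MB, int8: {quant_mb:.2f} MB")
```

An int8 weight takes one byte where a float32 weight takes four, so the quantized layers come out at roughly a quarter of the size, plus some overhead for scales, zero points, and biases that stay in floating point.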

Thanks for reading! As always, we welcome any feedback. If you have any questions, please open an issue.