Basic Video/Image Data Formats

References:

- Dr. Lei Xiaohua's blog:

- http://t.csdnimg.cn/kl2jL

- http://t.csdnimg.cn/pMLLE

- http://t.csdnimg.cn/FjtOK

- http://t.csdnimg.cn/K95yN

- The file tests/utils.c in ffmpeg-7.0

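1. Overview

Video/image data formats are what video and image processing operates on. They are the most basic unit of the whole processing stack, so it is worth knowing how they are stored. The main formats are yuv420p, yuv422p, yuv444p, bmp and rgb24. Video encoders mostly take yuv420p as input, because at 8 bits per sample it needs only 1.5 bytes per pixel (versus 3 bytes for yuv444p or rgb24), which keeps the raw data small and easy to store.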
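To make the storage comparison concrete, here is a minimal sketch that computes the raw size of one 8-bit frame in each layout. The enum `PixFmt` and the function `frame_bytes` are hypothetical names chosen for illustration, and even width and height are assumed.

```c
#include <stddef.h>

/* Hypothetical helper types (not from any library): the layouts discussed here. */
typedef enum { FMT_YUV420P, FMT_YUV422P, FMT_YUV444P, FMT_RGB24 } PixFmt;

/* Bytes needed for one frame with 8-bit samples; assumes w and h are even. */
size_t frame_bytes(PixFmt fmt, size_t w, size_t h)
{
    switch (fmt) {
    case FMT_YUV420P: return w * h * 3 / 2; /* Y + U/4 + V/4      */
    case FMT_YUV422P: return w * h * 2;     /* Y + U/2 + V/2      */
    case FMT_YUV444P: return w * h * 3;     /* Y + U + V          */
    case FMT_RGB24:   return w * h * 3;     /* R, G, B per pixel  */
    }
    return 0; /* unknown format */
}
```

For a 1920x1080 frame this gives 3,110,400 bytes for yuv420p against 6,220,800 bytes for rgb24.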

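The video/image processing stack can be roughly divided into several layers:

- Protocol layer (http, rtmp, file…)

- Container layer (mkv, mp4, flv, mpegts, avi…)

- Codec layer (h264, h265, mpeg2…)

- Pixel layer (yuv420p, yuv422p, yuv444p, rgb24…)

This article focuses only on the data formats of the pixel layer (the raw content of video frames and images).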

2. Video/Image Data Formats

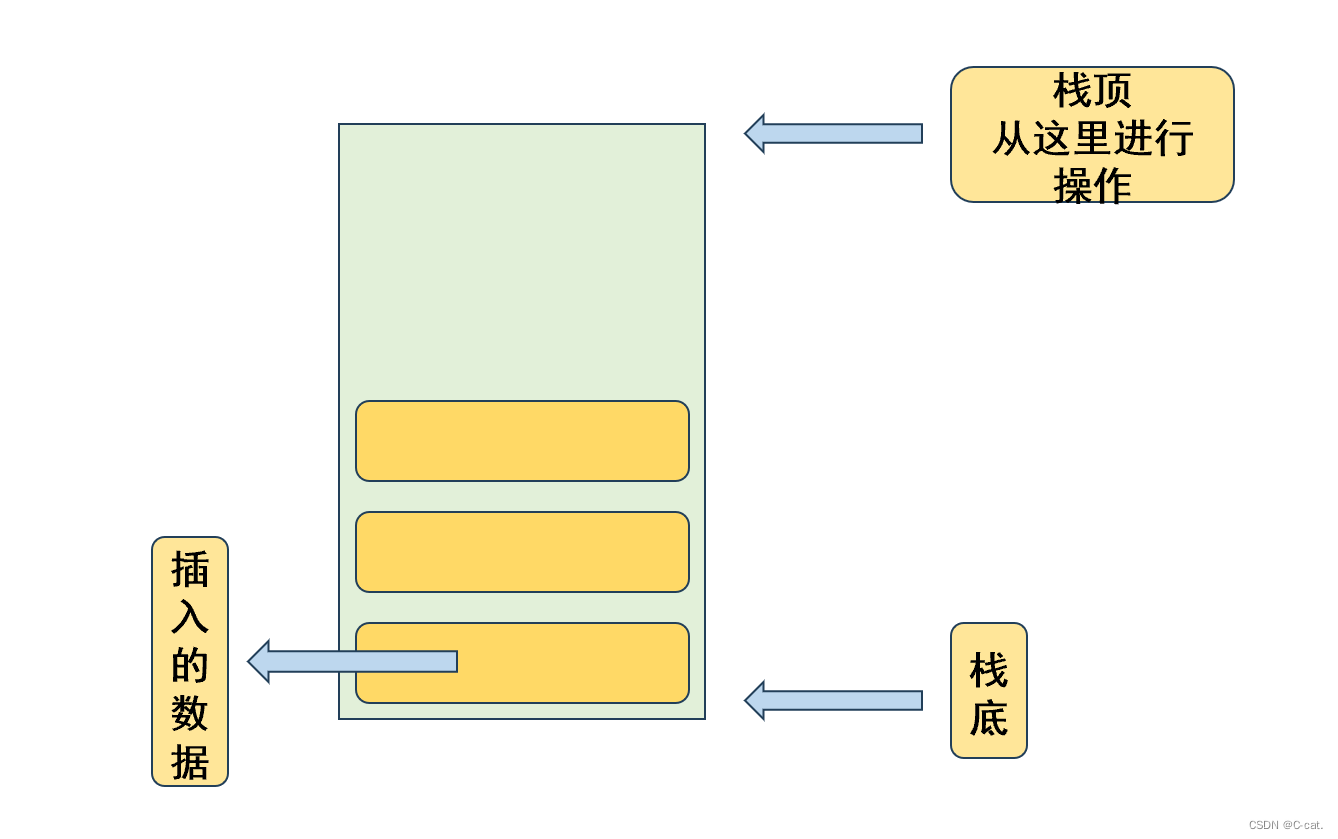

2.1 yuv420p

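This is the most common pixel format for video encoding. In yuv420p, the p stands for planar: the three components Y, U and V are stored as separate planes, with all of Y first in memory, then U, then V. In 420p, the U and V planes are each 1/4 the size of the Y plane, because their width and height are both 1/2 of the Y plane's.

In memory, YUV420P is laid out as Y0 Y1 Y2 … U0 U1 U2 … V0 V1 V2 …, and the Y plane is 4 times as long as each of the U and V planes. Non-planar formats may instead be stored interleaved; those are not discussed here. YUV420P data can be read and split into its planes as follows: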

    int video_yuv420_split(char *url, int w, int h, int num){
        FILE *fp = fopen(url, "rb");                 // the input file is only read
        FILE *fp_y = fopen("output_420_y.y", "wb+");
        FILE *fp_u = fopen("output_420_u.y", "wb+");
        FILE *fp_v = fopen("output_420_v.y", "wb+");

        // 3/2 = 1(Y) + 1/4(U) + 1/4(V)
        unsigned char *pic = (unsigned char *) malloc(w * h * 3 / 2);

        for(int i = 0; i < num; i++){
            fread(pic, 1, w * h * 3 / 2, fp);                 // Read one YUV frame from the .yuv file
            fwrite(pic, 1, w * h, fp_y);                      // Write Y component into fp_y
            fwrite(pic + w * h, 1, w * h / 4, fp_u);          // Write U component into fp_u
            fwrite(pic + w * h * 5 / 4, 1, w * h / 4, fp_v);  // Write V component into fp_v
        }

        free(pic);
        fclose(fp);
        fclose(fp_y);
        fclose(fp_u);
        fclose(fp_v);

        return 0;
    }
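The same plane offsets can be used to look up the samples that belong to a single pixel. The following is a minimal sketch, assuming an 8-bit frame with even width and height held in one contiguous buffer; `YuvSample` and `yuv420p_at` are hypothetical names.

```c
#include <stddef.h>

typedef struct { unsigned char y, u, v; } YuvSample;

/* Fetch the Y, U and V samples that cover pixel (x, y) of a planar 4:2:0 frame. */
YuvSample yuv420p_at(const unsigned char *pic, int w, int h, int x, int y)
{
    const unsigned char *plane_y = pic;                  /* w * h bytes           */
    const unsigned char *plane_u = pic + (size_t)w * h;  /* (w/2) * (h/2) bytes   */
    const unsigned char *plane_v = plane_u + (size_t)(w / 2) * (h / 2);
    /* One chroma sample is shared by each 2x2 block of luma samples. */
    size_t chroma_index = (size_t)(y / 2) * (w / 2) + (x / 2);
    YuvSample s;
    s.y = plane_y[(size_t)y * w + x];
    s.u = plane_u[chroma_index];
    s.v = plane_v[chroma_index];
    return s;
}
```

Every pixel has its own Y sample, while the four pixels of a 2x2 block return the same U and V values.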

2.2 yuv422p

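This format is similar to yuv420p; the difference is the proportion of the U and V components. In yuv422p the Y plane is still stored first, then U, then V, but the U and V planes are each half the size of the Y plane (chroma is halved horizontally only), e.g. Y0 Y1 Y2 Y3 U0 U1 V0 V1. Reading and writing differ from yuv420p as follows: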

    unsigned char *pic = (unsigned char *) malloc (w * h * 2);

    for(int i = 0; i < num; i++){
        fread(pic, 1, w * h * 2, fp);                     // Read YUV data from .yuv file
        fwrite(pic, 1, w * h, fp_y);                      // Write Y component into fp_y
        fwrite(pic + w * h, 1, w * h / 2, fp_u);          // Write U component into fp_u
        fwrite(pic + w * h * 3 / 2, 1, w * h / 2, fp_v);  // Write V component into fp_v
    }

2.3 yuv444p

In yuv444p, the Y, U and V planes all have the same size, e.g. Y0 Y1 Y2 Y3 U0 U1 U2 U3 V0 V1 V2 V3. It is read and written as follows:

    unsigned char *pic = (unsigned char *) malloc (w * h * 3);

    for(int i = 0; i < num; i++){
        fread(pic, 1, w * h * 3, fp);             // Read YUV data from .yuv file
        fwrite(pic, 1, w * h, fp_y);              // Write Y component into fp_y
        fwrite(pic + w * h, 1, w * h, fp_u);      // Write U component into fp_u
        fwrite(pic + w * h * 2, 1, w * h, fp_v);  // Write V component into fp_v
    }
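The three planar layouts differ only in how strongly the chroma planes are subsampled, so their plane sizes can be derived from two shift values. The sketch below works under that assumption (8-bit samples, dimensions divisible by the subsampling factor). `PlaneSizes` and `planar_yuv_sizes` are hypothetical names, although the idea resembles the log2 chroma shift fields that FFmpeg keeps in its pixel format descriptors.

```c
#include <stddef.h>

/* Plane sizes in bytes for an 8-bit planar YUV frame. */
typedef struct { size_t y, u, v; } PlaneSizes;

/* shift_w / shift_h: log2 of the horizontal / vertical chroma subsampling.
 * yuv420p -> (1, 1), yuv422p -> (1, 0), yuv444p -> (0, 0). */
PlaneSizes planar_yuv_sizes(int w, int h, int shift_w, int shift_h)
{
    PlaneSizes s;
    s.y = (size_t)w * h;
    s.u = (size_t)(w >> shift_w) * (h >> shift_h);
    s.v = s.u; /* U and V are always subsampled identically */
    return s;
}
```

With these sizes, the U plane starts at byte offset `s.y` of a frame and the V plane at `s.y + s.u`, which reproduces the offsets used in the three listings above.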

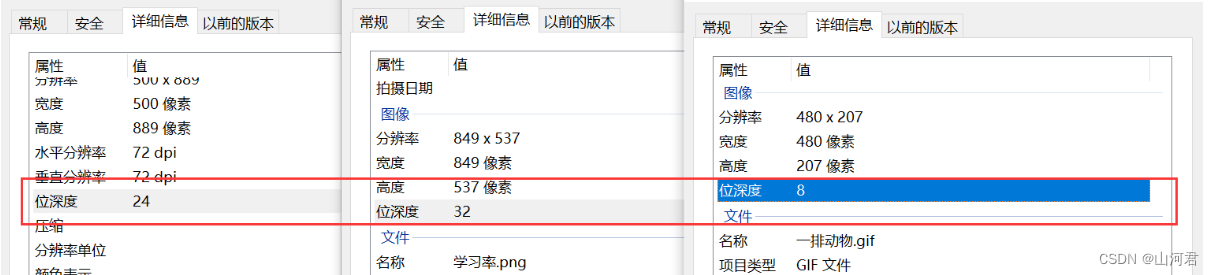

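2.4 RGB format

Files with the .rgb suffix store their data differently from the planar YUV formats above. There, the Y, U and V channels are stored separately, whereas RGB24 stores the three channels interleaved, e.g. r0 g0 b0 r1 g1 b1 r2 g2 b2…, so the data is also read and written differently.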

    unsigned char *pic = (unsigned char *) malloc (w * h * 3);

    // fp_y, fp_u and fp_v are reused here as the output files for R, G and B
    for(int i = 0; i < num; i++){
        fread(pic, 1, w * h * 3, fp); // read .rgb file
        for(int j = 0; j < w * h * 3; j = j + 3){
            fwrite(pic + j, 1, 1, fp_y);      // write r component
            fwrite(pic + j + 1, 1, 1, fp_u);  // write g component
            fwrite(pic + j + 2, 1, 1, fp_v);  // write b component
        }
    }

The RGB values of 8 common colors are:

| Color | RGB |
|---|---|
| White | (255, 255, 255) |
| Yellow | (255, 255, 0) |
| Cyan | (0, 255, 255) |
| Green | (0, 255, 0) |
| Magenta | (255, 0, 255) |
| Red | (255, 0, 0) |
| Blue | (0, 0, 255) |
| Black | (0, 0, 0) |

In addition, gray is (128, 128, 128).
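As a usage example for these values, the sketch below fills an RGB24 buffer with eight vertical bars in the order of the table. The function name `fill_rgb24_colorbars` is hypothetical, and the width is assumed to be a multiple of 8 so that the bars are equally wide.

```c
#include <stddef.h>

/* Fill a packed RGB24 buffer (w * h * 3 bytes) with 8 vertical color bars. */
void fill_rgb24_colorbars(unsigned char *rgb, int w, int h)
{
    static const unsigned char bars[8][3] = {
        {255, 255, 255}, /* white   */
        {255, 255,   0}, /* yellow  */
        {  0, 255, 255}, /* cyan    */
        {  0, 255,   0}, /* green   */
        {255,   0, 255}, /* magenta */
        {255,   0,   0}, /* red     */
        {  0,   0, 255}, /* blue    */
        {  0,   0,   0}, /* black   */
    };
    for (int y = 0; y < h; y++) {
        for (int x = 0; x < w; x++) {
            int bar = x * 8 / w; /* index of the bar that column x falls in */
            unsigned char *px = rgb + ((size_t)y * w + x) * 3;
            px[0] = bars[bar][0]; /* R */
            px[1] = bars[bar][1]; /* G */
            px[2] = bars[bar][2]; /* B */
        }
    }
}
```

Writing the resulting buffer to a file with fwrite() produces a .rgb file in exactly the interleaved layout described in this section.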

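2.5 BMP format

BMP is a format obtained by wrapping RGB data with a header, and it can be opened with an ordinary image viewer. RGB data can be wrapped into a BMP file as follows: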

    #include <stdint.h>
    #include <stdio.h>
    #include <stdlib.h>

    /**
     * Convert RGB24 file to BMP file
     * @param rgb24path Location of input RGB file.
     * @param width     Width of input RGB file.
     * @param height    Height of input RGB file.
     * @param bmppath   Location of output BMP file.
     */
    int video_rgb24_to_bmp(const char *rgb24path, int width, int height, const char *bmppath){
        // The BMP header fields are 32 bits wide. Fixed-width types are used
        // because long is 64 bits on many platforms, which would corrupt the header.
        typedef struct
        {
            int32_t imageSize;
            int32_t blank;
            int32_t startPosition;
        }BmpHead;

        typedef struct
        {
            int32_t  Length;
            int32_t  width;
            int32_t  height;
            uint16_t colorPlane;
            uint16_t bitColor;
            int32_t  zipFormat;
            int32_t  realSize;
            int32_t  xPels;
            int32_t  yPels;
            int32_t  colorUse;
            int32_t  colorImportant;
        }InfoHead;

        int i = 0;
        int j = 0;
        BmpHead m_BMPHeader = { 0 };
        InfoHead m_BMPInfoHeader = { 0 };
        char bfType[2] = {'B', 'M'};
        int header_size = sizeof(bfType) + sizeof(BmpHead) + sizeof(InfoHead);
        unsigned char *rgb24_buffer = NULL;
        FILE* fp_rgb24 = NULL;
        FILE* fp_bmp = NULL;

        if((fp_rgb24 = fopen(rgb24path, "rb")) == NULL){
            printf("Error: Cannot open input RGB24 file.\n");
            return -1;
        }
        if((fp_bmp = fopen(bmppath, "wb")) == NULL){
            printf("Error: Cannot open output BMP file.\n");
            fclose(fp_rgb24);
            return -1;
        }

        rgb24_buffer = (unsigned char *)malloc(width * height * 3);
        if(rgb24_buffer == NULL ||
           fread(rgb24_buffer, 1, width * height * 3, fp_rgb24) != (size_t)width * height * 3){
            printf("Error: Cannot read RGB24 data.\n");
            free(rgb24_buffer);
            fclose(fp_rgb24);
            fclose(fp_bmp);
            return -1;
        }

        m_BMPHeader.imageSize = 3 * width * height + header_size;
        m_BMPHeader.startPosition = header_size;

        m_BMPInfoHeader.Length = sizeof(InfoHead);
        m_BMPInfoHeader.width = width;
        // With a positive height, BMP stores rows bottom-up. A negative height
        // marks a top-down bitmap, which matches the row order of the RGB24 input.
        m_BMPInfoHeader.height = -height;
        m_BMPInfoHeader.colorPlane = 1;
        m_BMPInfoHeader.bitColor = 24;
        // Note: BMP rows must be padded to a multiple of 4 bytes;
        // this code assumes width * 3 already is.
        m_BMPInfoHeader.realSize = 3 * width * height;

        fwrite(bfType, 1, sizeof(bfType), fp_bmp);
        fwrite(&m_BMPHeader, 1, sizeof(m_BMPHeader), fp_bmp);
        fwrite(&m_BMPInfoHeader, 1, sizeof(m_BMPInfoHeader), fp_bmp);

        // BMP saves R1|G1|B1,R2|G2|B2 as B1|G1|R1,B2|G2|R2,
        // so the R and B bytes of every pixel are swapped.
        for(j = 0; j < height; j++){
            for(i = 0; i < width; i++){
                unsigned char temp = rgb24_buffer[(j * width + i) * 3 + 2];
                rgb24_buffer[(j * width + i) * 3 + 2] = rgb24_buffer[(j * width + i) * 3 + 0];
                rgb24_buffer[(j * width + i) * 3 + 0] = temp;
            }
        }

        fwrite(rgb24_buffer, 3 * width * height, 1, fp_bmp);
        fclose(fp_rgb24);
        fclose(fp_bmp);
        free(rgb24_buffer);
        printf("Finish generate %s!\n", bmppath);
        return 0;
    }

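The code performs the following tasks:

- Writing the BMP header information

- Converting the RGB data to BGR, because BMP stores pixel data in little-endian order, so the bytes of each pixel are stored as B, G, R

A BMP file consists of three parts: BITMAPFILEHEADER, BITMAPINFOHEADER and the RGB pixel data, as shown below. BITMAPFILEHEADER corresponds to BmpHead above (without the 2-byte bfType field, which the code writes separately), and BITMAPINFOHEADER corresponds to InfoHead.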

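    // These follow the Windows definitions, where short is 16 bits and long is 32 bits.
    typedef struct tagBITMAPFILEHEADER
    {
        unsigned short int bfType;       // file type, must be "BM"
        unsigned long      bfSize;       // file size in bytes
        unsigned short int bfReserved1;  // reserved, must be 0
        unsigned short int bfReserved2;  // reserved, must be 0
        unsigned long      bfOffBits;    // offset in bytes from the start of the file to the pixel data
    }BITMAPFILEHEADER;

    typedef struct tagBITMAPINFOHEADER
    {
        long               biSize;          // size of this structure in bytes
        long               biWidth;         // image width in pixels
        long               biHeight;        // image height in pixels
        unsigned short int biPlanes;        // number of planes for the target device, must be 1
        unsigned short int biBitcount;      // color depth: bits per pixel
        unsigned long      biCompression;   // compression type (32-bit field; 0 = uncompressed)
        long               biSizeImage;     // size of the pixel data in bytes
        long               biXPelsPermeter; // horizontal resolution in pixels per meter
        long               biYPelsPermeter; // vertical resolution in pixels per meter
        long               biClrUsed;       // number of palette colors actually used
        long               biClrImportant;  // number of colors important for display
    }BITMAPINFOHEADER;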
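Because struct padding and the width of long vary between compilers and platforms, one portable way to produce the 54-byte header is to serialize each field explicitly in little-endian order. The following is a sketch of that alternative, not the method used in the listing above. `put_le16`, `put_le32` and `bmp_build_header` are hypothetical helpers, and an uncompressed 24-bit image whose rows need no padding (width * 3 divisible by 4) is assumed.

```c
#include <stdint.h>
#include <string.h>

/* Store a 16-bit / 32-bit value in little-endian byte order. */
static void put_le16(unsigned char *p, uint16_t v)
{
    p[0] = v & 0xFF;
    p[1] = (v >> 8) & 0xFF;
}

static void put_le32(unsigned char *p, uint32_t v)
{
    p[0] = v & 0xFF;
    p[1] = (v >> 8) & 0xFF;
    p[2] = (v >> 16) & 0xFF;
    p[3] = (v >> 24) & 0xFF;
}

/* Build the 14-byte file header plus the 40-byte info header into out[54]. */
void bmp_build_header(unsigned char out[54], int32_t width, int32_t height)
{
    uint32_t image_size = (uint32_t)width * (uint32_t)height * 3;

    memset(out, 0, 54);                       /* reserved and unused fields stay 0 */
    out[0] = 'B';
    out[1] = 'M';                             /* bfType                            */
    put_le32(out + 2, 54 + image_size);       /* bfSize                            */
    put_le32(out + 10, 54);                   /* bfOffBits                         */
    put_le32(out + 14, 40);                   /* biSize                            */
    put_le32(out + 18, (uint32_t)width);      /* biWidth                           */
    put_le32(out + 22, (uint32_t)(-height));  /* biHeight, negative = top-down     */
    put_le16(out + 26, 1);                    /* biPlanes                          */
    put_le16(out + 28, 24);                   /* biBitCount                        */
    put_le32(out + 34, image_size);           /* biSizeImage                       */
}
```

The buffer can then be written with a single fwrite() call, followed by the BGR pixel data. The result does not depend on how the compiler lays out structs or on the byte order of the host.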

3. Format conversion

3.1 RGB24 to YUV420P

The formulas for converting RGB24 to YUV are:

Y = 0.299 * R + 0.587 * G + 0.114 * B

U = -0.147 * R - 0.289 * G + 0.436 * B

V = 0.615 * R - 0.515 * G - 0.100 * B
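As a worked example of these formulas, the sketch below converts a single pixel in floating point. `YuvPixel` and `rgb_to_yuv_pixel` are hypothetical names. The blue coefficient of U is taken as 0.436, the standard BT.601 value, so that the U coefficients sum to zero. U and V come out as signed values centered on 0, not as the 128-offset bytes stored in a file.

```c
typedef struct { double y, u, v; } YuvPixel;

/* Apply the three conversion equations to one RGB pixel (components 0..255). */
YuvPixel rgb_to_yuv_pixel(double r, double g, double b)
{
    YuvPixel p;
    p.y =  0.299 * r + 0.587 * g + 0.114 * b;
    p.u = -0.147 * r - 0.289 * g + 0.436 * b;
    p.v =  0.615 * r - 0.515 * g - 0.100 * b;
    return p;
}
```

Pure white (255, 255, 255) yields Y = 255 and U = V = 0, since gray levels carry no chroma. Pure red (255, 0, 0) yields roughly Y = 76.2, U = -37.5, V = 156.8.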

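The code below is taken from tests/utils.c in ffmpeg-7.0. It is written differently from Dr. Lei Xiaohua's version and is perhaps easier to follow. Note that it uses full-range YCbCr coefficients (0.16874, 0.33126, 0.5, …) with a +128 offset on the chroma samples, not the U and V coefficients listed above.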

    #include <errno.h>
    #include <stdio.h>
    #include <stdlib.h>
    #include <string.h>

    #define SCALEBITS 8
    #define ONE_HALF  (1 << (SCALEBITS - 1))
    // Scale a float coefficient by 256 (1 << SCALEBITS) and round it, giving a
    // fixed-point integer so that the conversion needs integer arithmetic only
    #define FIX(x)    ((int) ((x) * (1 << SCALEBITS) + 0.5))

    #define err_if(expr) do {                                  \
        if (expr) {                                            \
            fprintf(stderr, "%s\n", strerror(errno));          \
            exit(1);                                           \
        }                                                      \
    } while (0)

    // lum: Y plane, cb: U plane, cr: V plane, src: packed RGB24 input
    static void rgb24_to_yuv420p(unsigned char *lum, unsigned char *cb,
                                 unsigned char *cr, const unsigned char *src,
                                 int width, int height)
    {
        int wrap, wrap3, x, y;
        int r, g, b, r1, g1, b1;
        const unsigned char *p;

        wrap  = width;      // length of one row in the Y plane, in bytes
        wrap3 = width * 3;  // length of one row in the RGB24 source, in bytes
        p     = src;        // p walks through the RGB source image

        // Process 2x2 pixel blocks, because the U and V planes have half the
        // width and half the height of the Y plane
        for (y = 0; y < height; y += 2) {
            for (x = 0; x < width; x += 2) {
                // top-left pixel
                r       = p[0];
                g       = p[1];
                b       = p[2];
                r1      = r;
                g1      = g;
                b1      = b;
                lum[0]  = (FIX(0.29900) * r + FIX(0.58700) * g +
                           FIX(0.11400) * b + ONE_HALF) >> SCALEBITS;
                // top-right pixel
                r       = p[3];
                g       = p[4];
                b       = p[5];
                r1     += r;
                g1     += g;
                b1     += b;
                lum[1]  = (FIX(0.29900) * r + FIX(0.58700) * g +
                           FIX(0.11400) * b + ONE_HALF) >> SCALEBITS;
                p      += wrap3; // move down to the bottom-left pixel of the current 2x2 block
                lum    += wrap;  // same move in the Y plane

                // bottom-left pixel
                r       = p[0];
                g       = p[1];
                b       = p[2];
                r1     += r;
                g1     += g;
                b1     += b;
                lum[0]  = (FIX(0.29900) * r + FIX(0.58700) * g +
                           FIX(0.11400) * b + ONE_HALF) >> SCALEBITS;
                // bottom-right pixel
                r       = p[3];
                g       = p[4];
                b       = p[5];
                r1     += r;
                g1     += g;
                b1     += b;
                lum[1]  = (FIX(0.29900) * r + FIX(0.58700) * g +
                           FIX(0.11400) * b + ONE_HALF) >> SCALEBITS;

                // Each 2x2 block yields one Cb and one Cr sample, computed from the
                // summed RGB of its 4 pixels (hence the shift by SCALEBITS + 2)
                cb[0]   = ((- FIX(0.16874) * r1 - FIX(0.33126) * g1 +
                            FIX(0.50000) * b1 + 4 * ONE_HALF - 1) >> (SCALEBITS + 2)) + 128;
                cr[0]   = ((FIX(0.50000) * r1 - FIX(0.41869) * g1 -
                            FIX(0.08131) * b1 + 4 * ONE_HALF - 1) >> (SCALEBITS + 2)) + 128;

                cb++;
                cr++;
                p      += -wrap3 + 2 * 3; // back up one row and advance 2 pixels (3 bytes each) to the R of the next block's top-left pixel
                lum    += -wrap + 2;      // back up one row and advance 2 samples to the next block's top-left pixel
            }
            // skip the second row of the block row that was just processed
            p   += wrap3;
            lum += wrap;
        }
    }

    static void pgmyuv_save(const char *filename, int w, int h,
                            const unsigned char *rgb_tab)
    {
        FILE *f;
        int i, h2, w2;
        unsigned char *cb, *cr;
        unsigned char *lum_tab, *cb_tab, *cr_tab;

        lum_tab = malloc(w * h);
        cb_tab  = malloc(w * h / 4);
        cr_tab  = malloc(w * h / 4);

        rgb24_to_yuv420p(lum_tab, cb_tab, cr_tab, rgb_tab, w, h);

        if (filename) {
            f = fopen(filename, "wb");
            fprintf(f, "P5\n%d %d\n%d\n", w, h * 3 / 2, 255);
        } else {
            f = stdout;
        }

        err_if(fwrite(lum_tab, 1, w * h, f) != w * h); // write the Y plane
        h2 = h / 2;
        w2 = w / 2;
        cb = cb_tab;
        cr = cr_tab;

        if (filename) {
            // PGM file: each row holds one row of U followed by one row of V
            for (i = 0; i < h2; i++) {
                err_if(fwrite(cb, 1, w2, f) != w2); // write one row of U
                err_if(fwrite(cr, 1, w2, f) != w2); // write one row of V
                cb += w2;
                cr += w2;
            }
            fclose(f);
        } else {
            // stdout: raw planar output, the whole U plane and then the whole V plane
            for (i = 0; i < h2; i++) {
                err_if(fwrite(cb, 1, w2, f) != w2);
                cb += w2;
            }
            for (i = 0; i < h2; i++) {
                err_if(fwrite(cr, 1, w2, f) != w2);
                cr += w2;
            }
        }

        free(lum_tab);
        free(cb_tab);
        free(cr_tab);
    }
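To see what the FIX macro and the shifts do in isolation, here is a single-pixel version of the same fixed-point arithmetic. It is a sketch, not part of the FFmpeg file: `rgb_to_ycbcr_fixed` is a hypothetical function that reuses the coefficients above but handles one pixel, not a 2x2 block, so the chroma values are shifted by SCALEBITS and not by SCALEBITS + 2.

```c
#define SCALEBITS 8
#define ONE_HALF  (1 << (SCALEBITS - 1))
#define FIX(x)    ((int) ((x) * (1 << SCALEBITS) + 0.5)) /* coefficient scaled by 256 */

/* Convert one RGB pixel to full-range YCbCr using integer arithmetic only. */
void rgb_to_ycbcr_fixed(int r, int g, int b,
                        unsigned char *y, unsigned char *cb, unsigned char *cr)
{
    /* Adding ONE_HALF before the shift rounds to nearest, not down. */
    *y  = (FIX(0.29900) * r + FIX(0.58700) * g +
           FIX(0.11400) * b + ONE_HALF) >> SCALEBITS;
    /* Chroma is centered on 128 so that it fits an unsigned byte. */
    *cb = ((-FIX(0.16874) * r - FIX(0.33126) * g +
            FIX(0.50000) * b + ONE_HALF - 1) >> SCALEBITS) + 128;
    *cr = ((FIX(0.50000) * r - FIX(0.41869) * g -
            FIX(0.08131) * b + ONE_HALF - 1) >> SCALEBITS) + 128;
}
```

Here FIX(0.299), FIX(0.587) and FIX(0.114) evaluate to 77, 150 and 29, which sum to exactly 256, so white maps to Y = 255 and any gray maps to Cb = Cr = 128. Like the original code, the sketch relies on the compiler performing an arithmetic right shift on negative intermediate values.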

4. Video/image quality metrics

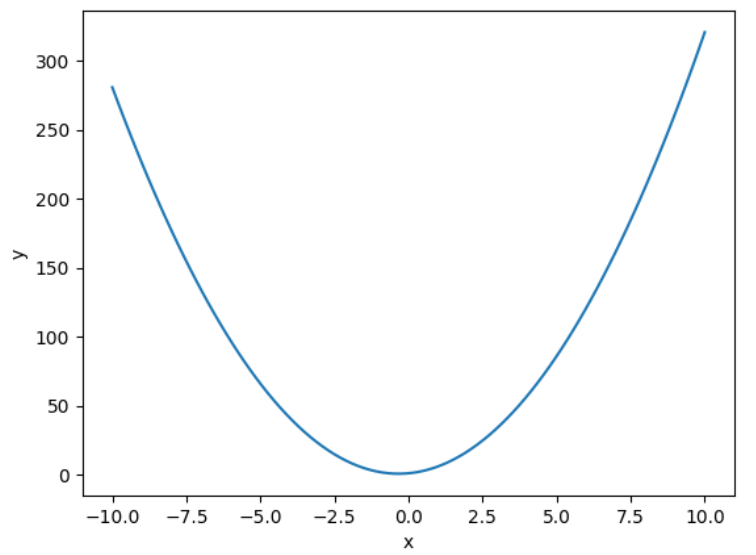

4.1 MSE

MSE stands for Mean Square Error. It is computed as follows:

    int width = WIDTH;
    int height = HEIGHT;
    double mse = 0.0;

    for(int j = 0; j < width * height; j++){
        mse += pow((double)(src[j] - dst[j]), 2);
    }
    mse = mse / (width * height);
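Wrapped into a function, the loop might look like the sketch below. `plane_mse` is a hypothetical name. It assumes two 8-bit planes of equal size and squares the difference with a multiplication, so pow() and the math library are not needed.

```c
#include <stddef.h>

/* Mean squared error between two 8-bit planes of `count` samples each. */
double plane_mse(const unsigned char *src, const unsigned char *dst, size_t count)
{
    double sum = 0.0;
    for (size_t j = 0; j < count; j++) {
        double diff = (double)src[j] - (double)dst[j];
        sum += diff * diff;
    }
    /* An empty plane has no error by convention here. */
    return count ? sum / (double)count : 0.0;
}
```

For the planes {10, 20, 30, 40} and {12, 20, 27, 40}, the squared differences are 4, 0, 9 and 0, so the MSE is 13 / 4 = 3.25.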

4.2 PSNR

PSNR is computed on top of the MSE, as follows:

    double psnr = 0.0;
    psnr = 10 * log10(255.0 * 255.0 / mse);

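PSNR describes how much two images differ. Its unit is dB: the higher the value, the closer the two images are, and the lower the value, the larger the difference. In video coding standards, PSNR is an important metric for evaluating coding tools, and it is usually combined with the bitrate to judge the quality of a coding algorithm.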

CSDN:https://blog.csdn.net/weixin_42877471

Github:https://github.com/DoFulangChen