About Hadoop

The Apache™ Hadoop® project develops open-source software for reliable, scalable, distributed computing.

The Apache Hadoop software library is a framework that allows for the distributed processing of large data sets across clusters of computers using simple programming models.

It is designed to scale up from single servers to thousands of machines, each offering local computation and storage. Rather than rely on hardware to deliver high-availability, the library itself is designed to detect and handle failures at the application layer, so delivering a highly-available service on top of a cluster of computers, each of which may be prone to failures.

- Official site: https://hadoop.apache.org

- Official tutorial (Chinese, r1.0.4): https://hadoop.apache.org/docs/r1.0.4/cn/

- W3Cschool tutorial: https://www.w3cschool.cn/hadoop/

- Runoob tutorial: https://www.runoob.com/w3cnote/hadoop-tutorial.html

Distributions

- Apache open-source community edition: https://hadoop.apache.org

- Commercial distributions

- Cloudera: https://www.cloudera.com

Hortonworks has merged with Cloudera.

Architecture evolution: 1.0 --> 2.0 --> 3.0

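Installation and configuration

Official installation and configuration guide:

https://hadoop.apache.org/docs/r1.0.4/cn/quickstart.html

Installation

Installing Hadoop on macOS with brew

For how to install and use brew, see: https://blog.csdn.net/lovechris00/article/details/121613647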

brew install hadoop

If you see this error:

Error: Cannot install hadoop because conflicting formulae are installed.

yarn: because both install `yarn` binaries

Please `brew unlink yarn` before continuing.

Run the command it suggests:

brew unlink yarn

Once the installation succeeds, check the version:

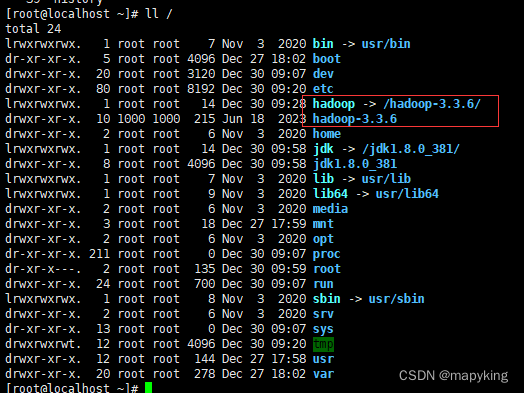
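The screenshot is not reproduced as text, so the exact command behind it is an assumption; `hadoop version` is the usual way to print the installed version. A minimal sketch that also covers the case where brew has not put `hadoop` on PATH (the function name `show_hadoop_version` is only illustrative):

```shell
# Print the installed Hadoop version, or a hint if the launcher is missing.
# show_hadoop_version is a hypothetical helper name, not part of Hadoop.
show_hadoop_version() {
  if command -v hadoop >/dev/null 2>&1; then
    hadoop version
  else
    echo "hadoop not found on PATH"
  fi
}

show_hadoop_version
```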

# Show the hadoop install directory

brew info hadoop

Configure environment variables

Edit whichever shell profile file you use:

vim ~/.zshrc

# vim ~/.bash_profile

# Hadoop

export HADOOP_HOME=/usr/local/Cellar/hadoop/3.3.4/libexec

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

Apply the environment variables to the current terminal window.

Alternatively, open a new terminal window or tab.

source ~/.zshrc

# source ~/.bash_profile
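After reloading the profile, it may be worth confirming that the variable really points at an existing directory, since the Cellar path contains a version number that differs between installs. A small sketch; `check_hadoop_home` is a hypothetical helper, not a Hadoop command:

```shell
# Report whether HADOOP_HOME is set and points at an existing directory.
# check_hadoop_home is an illustrative name, not part of Hadoop.
check_hadoop_home() {
  if [ -z "${HADOOP_HOME:-}" ]; then
    echo "HADOOP_HOME is not set"
    return 1
  fi
  if [ ! -d "$HADOOP_HOME" ]; then
    echo "HADOOP_HOME points to a missing directory: $HADOOP_HOME"
    return 1
  fi
  echo "HADOOP_HOME OK: $HADOOP_HOME"
}
```

Running `check_hadoop_home` in the same terminal should print the resolved path if the export took effect.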

Configuration

cd $HADOOP_HOME/etc/hadoop

ls

Edit the configuration files

core-site.xml

vim core-site.xml

Add the following to the file:

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:8020</value>

</property>

<!-- Directory for the files Hadoop generates at runtime; create it yourself -->

<property>

<name>hadoop.tmp.dir</name>

<value>file:/usr/local/Cellar/hadoop/tmp</value>

</property>

</configuration>

hdfs-site.xml

Set the replication factor

vim hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<!-- Allow non-root users to write files to HDFS -->

<property>

<name>dfs.permissions</name>

<value>false</value> <!-- Disable HDFS permission checking -->

</property>

<!-- Replace this path with the local location of your NameNode directory -->

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/usr/local/Cellar/hadoop/tmp/dfs/name</value>

</property>

<!-- Create a local folder to hold the Hadoop data, then set its path here -->

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/usr/local/Cellar/hadoop/tmp/dfs/data</value>

</property>

</configuration>
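The comments in core-site.xml and hdfs-site.xml ask you to create the runtime and data directories yourself. One possible way to do that in a single step is sketched below; `make_hadoop_dirs` is a hypothetical helper, and the layout (`dfs/name` and `dfs/data` under one base directory) simply mirrors the paths used in the configuration above:

```shell
# Create the base directory plus the NameNode and DataNode directories.
# make_hadoop_dirs is an illustrative helper, not part of Hadoop.
make_hadoop_dirs() {
  base_dir="$1"
  # mkdir -p also creates base_dir itself and is harmless if it exists.
  mkdir -p "$base_dir/dfs/name" "$base_dir/dfs/data"
}
```

For the paths in this article the call would be `make_hadoop_dirs /usr/local/Cellar/hadoop/tmp`; adjust the argument if you configured a different location.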

mapred-site.xml

vim mapred-site.xml

<configuration>

<property>

<!-- Run MapReduce on YARN -->

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapred.job.tracker</name>

<value>localhost:9010</value>

</property>

<!-- Newly added -->

<!-- The path below is your Hadoop distribution directory -->

<property>

<name>yarn.app.mapreduce.am.env</name>

<value>HADOOP_MAPRED_HOME=/usr/local/Cellar/hadoop/3.3.3/libexec</value>

</property>

<property>

<name>mapreduce.map.env</name>

<value>HADOOP_MAPRED_HOME=/usr/local/Cellar/hadoop/3.3.3/libexec</value>

</property>

<property>

<name>mapreduce.reduce.env</name>

<value>HADOOP_MAPRED_HOME=/usr/local/Cellar/hadoop/3.3.3/libexec</value>

</property>

</configuration>

yarn-site.xml

vim yarn-site.xml

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>localhost:9000</value>

</property>

<property>

<name>yarn.scheduler.capacity.maximum-am-resource-percent</name>

<value>100</value>

</property>

</configuration>
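A stray or unclosed tag in any of these files usually only surfaces later as a startup failure, so a quick well-formedness check can save time. The sketch below assumes `xmllint` is available (it normally ships with macOS) and that the MapReduce file is named mapred-site.xml as in the Hadoop distribution; `check_site_xml` is a hypothetical helper name:

```shell
# Check that the four site files in a config directory are well-formed XML.
# check_site_xml is an illustrative helper; it relies on xmllint being installed.
check_site_xml() {
  conf_dir="$1"
  rc=0
  for f in core-site.xml hdfs-site.xml mapred-site.xml yarn-site.xml; do
    if xmllint --noout "$conf_dir/$f" 2>/dev/null; then
      echo "OK   $f"
    else
      # Reported for a missing file, malformed XML, or a missing xmllint.
      echo "FAIL $f"
      rc=1
    fi
  done
  return "$rc"
}
```

A typical call would be `check_site_xml "$HADOOP_HOME/etc/hadoop"`. This only checks XML syntax; it does not validate property names or values.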

Configure hadoop-env

Set JAVA_HOME in $HADOOP_HOME/etc/hadoop/hadoop-env.sh:

export JAVA_HOME=/Library/Java/JavaVirtualMachines/jdk1.8.0_331.jdk/Contents/Home
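The JDK path above is specific to one machine. On macOS, the `/usr/libexec/java_home` utility can report where a given JDK version lives, which may help you find the right value for your own system. A rough sketch, assuming Java 1.8 as in the line above (`find_java_home` is a hypothetical helper name):

```shell
# Print the home directory of an installed Java 1.8 JDK on macOS.
# find_java_home is an illustrative helper; /usr/libexec/java_home is macOS-only.
find_java_home() {
  if [ -x /usr/libexec/java_home ]; then
    /usr/libexec/java_home -v 1.8 2>/dev/null || echo "no Java 1.8 JDK found"
  else
    echo "/usr/libexec/java_home is not available on this system"
  fi
}

find_java_home
```

The printed path is what would go on the right-hand side of `export JAVA_HOME=` in hadoop-env.sh.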

Starting/stopping the Hadoop services

Initialize the HDFS NameNode

hdfs namenode -format

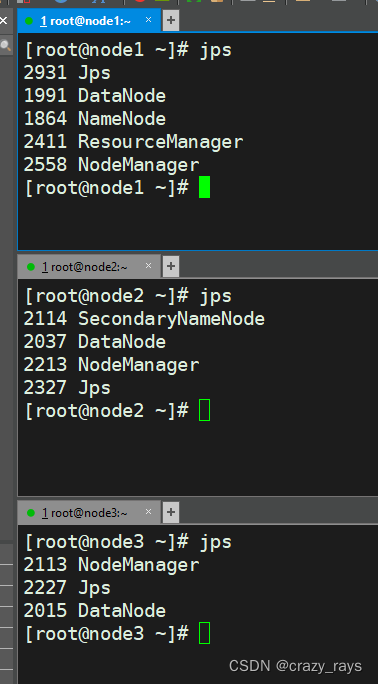

1. Start HDFS

cd $HADOOP_HOME/sbin

./start-dfs.sh

2. Open either of these URLs in a browser:

http://localhost:9870/dfshealth.html#tab-overview

http://localhost:9870

If the NameNode overview page appears, HDFS started successfully.
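The same check can be done from the terminal, which is handy when no browser is at hand. A minimal sketch using `curl`; `probe_url` is a hypothetical helper, and the 3-second timeout is an arbitrary choice:

```shell
# Report whether an HTTP endpoint answers successfully within a few seconds.
# probe_url is an illustrative helper, not part of Hadoop.
probe_url() {
  if curl -sf -o /dev/null --max-time 3 "$1"; then
    echo "up: $1"
  else
    echo "down: $1"
  fi
}

# NameNode web UI address used in this article.
probe_url http://localhost:9870
```

If this prints `down`, running `jps` (shipped with the JDK) shows whether the NameNode and DataNode Java processes are actually running.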

If the page does not open even though the services have started, add the setting below after line 52 of etc/hadoop/hadoop-env.sh under the Hadoop directory:

cd $HADOOP_HOME/etc/hadoop

export HADOOP_OPTS="-Djava.library.path=${HADOOP_HOME}/lib/native"

3. Stop the HDFS service

$HADOOP_HOME/sbin/stop-dfs.sh

4. Start the YARN service

cd $HADOOP_HOME/sbin

./start-yarn.sh

Open http://localhost:8088/cluster in a browser; if the cluster page appears, YARN started successfully.

5. Stop the YARN service

./stop-yarn.sh

View the HDFS report

hdfs dfsadmin -report
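Beyond the report, a quick write-and-read round trip gives more confidence that the NameNode and DataNode are working together. The sketch below is one possible smoke test; `hdfs_smoke_test` is a hypothetical helper, and the `/user/<your login>` target directory and the file name `hello.txt` are assumptions for illustration:

```shell
# Write a small file into HDFS and read it back.
# hdfs_smoke_test is an illustrative helper; the target path is an assumption.
hdfs_smoke_test() {
  if ! command -v hdfs >/dev/null 2>&1; then
    echo "hdfs not found on PATH"
    return 1
  fi
  target_dir="/user/$(whoami)"
  # "-put -f -" reads the file content from stdin and overwrites any old copy.
  hdfs dfs -mkdir -p "$target_dir" &&
    echo "hello hdfs" | hdfs dfs -put -f - "$target_dir/hello.txt" &&
    hdfs dfs -cat "$target_dir/hello.txt"
}
```

Call `hdfs_smoke_test` while HDFS is running; it should echo back the line that was written.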

Start YARN

cd $HADOOP_HOME

./sbin/start-yarn.sh

Open http://localhost:8088 in a browser; if the cluster page appears, YARN started successfully.
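With both HDFS and YARN running, submitting one of the bundled example jobs is a common end-to-end check. The sketch below assumes the examples jar sits under `share/hadoop/mapreduce` inside HADOOP_HOME, as in the standard Apache layout; whether the brew package keeps it there is an assumption, and `run_pi_example` is a hypothetical helper name:

```shell
# Submit the bundled "pi" example job (2 maps, 5 samples per map) to YARN.
# run_pi_example is an illustrative helper; the jar location is an assumption.
run_pi_example() {
  jar_file=""
  for candidate in "${HADOOP_HOME:-}"/share/hadoop/mapreduce/hadoop-mapreduce-examples-*.jar; do
    if [ -f "$candidate" ]; then
      jar_file="$candidate"
      break
    fi
  done
  if [ -z "$jar_file" ]; then
    echo "examples jar not found under ${HADOOP_HOME:-}"
    return 1
  fi
  hadoop jar "$jar_file" pi 2 5
}
```

Call `run_pi_example` after the services are up; the submitted application should then also show up on the YARN page at http://localhost:8088.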