https://developer.horizon.ai/forumDetail/112555512834430484

OriginBot智能机器人开源套件|23.视觉巡线(AI深度学习) - 哔哩哔哩

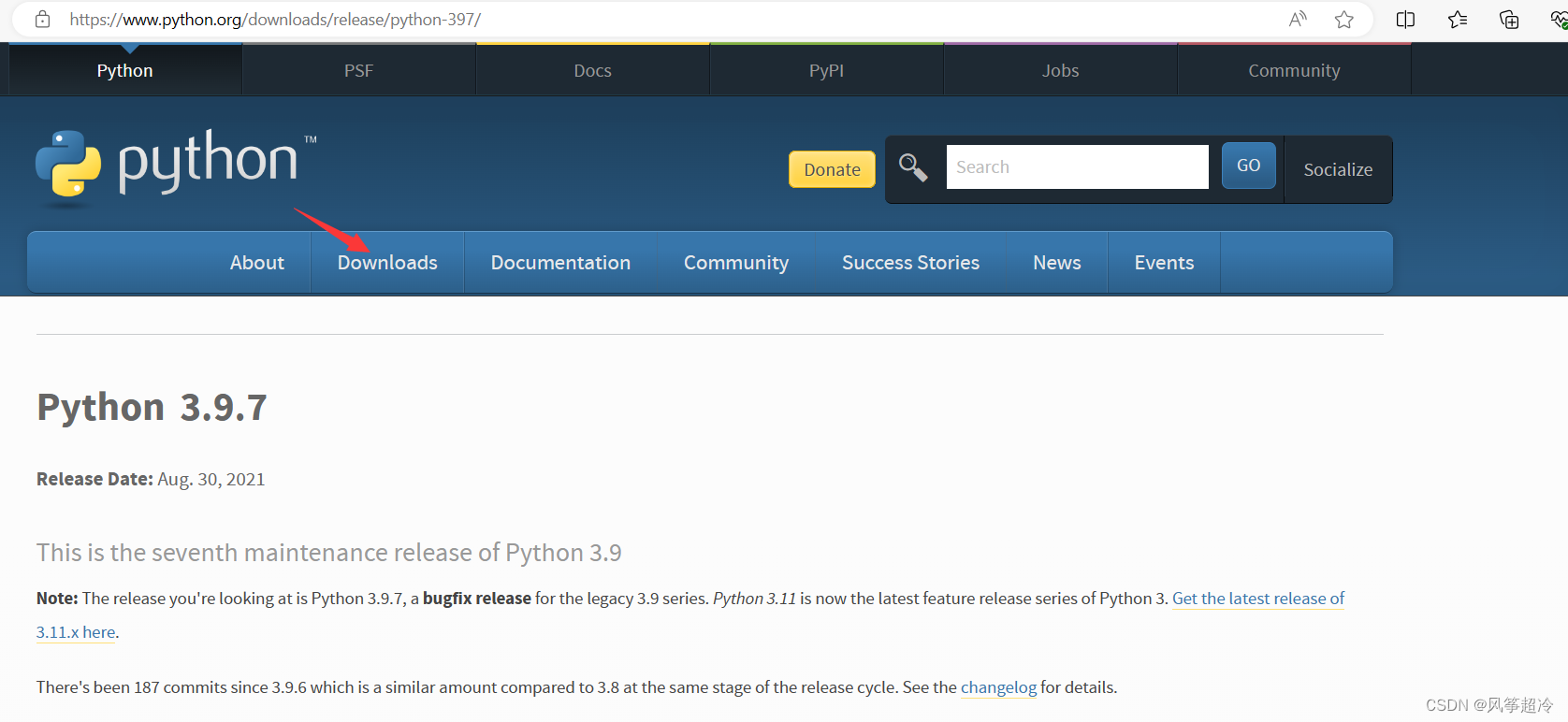

origincar在执行以下命令部署模型时

ros2 run line_follower_perception line_follower_perception --ros-args -p model_path:=model/resnet18_224x224_nv12.bin -p model_name:=resnet18_224x224_nv12

报错:

root@ubuntu:~/dev_ws/src/origincar/origincar_deeplearning/line_follower_perception# ros2 run line_follower_perception line_follower_perception --ros-args -p model_path:=model/resnet18_224x224_nv12.bin -p model_name:=resnet18_224x224_nv12

[INFO] [1712136670.826876413] [dnn]: Node init.

[INFO] [1712136670.827445897] [LineFollowerPerceptionNode]: path:model/resnet18_224x224_nv12.bin

[INFO] [1712136670.827541602] [LineFollowerPerceptionNode]: name:resnet18_224x224_nv12

[INFO] [1712136670.827679473] [dnn]: Model init.

[EasyDNN]: EasyDNN version = 1.6.1_(1.18.6 DNN)

[BPU_PLAT]BPU Platform Version(1.3.3)!

[HBRT] set log level as 0. version = 3.15.25.0

[DNN] Runtime version = 1.18.6_(3.15.25 HBRT)

[A][DNN][packed_model.cpp:234][Model](2024-04-03,17:31:11.265.176) [HorizonRT] The model builder version = 1.9.9

[INFO] [1712136671.404482752] [dnn]: The model input 0 width is 224 and height is 224

[INFO] [1712136671.404657414] [dnn]: Task init.

[INFO] [1712136671.406786852] [dnn]: Set task_num [4]

[INFO] [1712136671.435160111] [LineFollowerPerceptionNode]: image_height:480

[INFO] [1712136671.435210859] [LineFollowerPerceptionNode]: image_width:640

[E][DNN][validate_util.cpp:641][Util](2024-04-03,17:31:11.436.85) left: 0 and right: 959, should be in [0, 640)

[E][DNN][hb_dnn.cpp:595][Task](2024-04-03,17:31:11.436.287) taskHandle is invalid

[ERROR] [1712136671.436338327] [LineFollowerPerceptionNode]: hbDNNWaitTaskDone failed!

[E][DNN][hb_dnn.cpp:615][Task](2024-04-03,17:31:11.436.645) taskHandle is invalid

[ERROR] [1712136671.436727024] [LineFollowerPerceptionNode]: release task failed!

[ERROR]["LOG"][src/utils/mem_log.c:108] [ERROR][16322.79688][680329:680329][MEM_ALLOCATOR] <hb_mem_free_buf_with_vaddr:1506> Invalid NULL virt_addr.

[ERROR]["LOG"][src/utils/mem_log.c:108] [ERROR][16322.79692][680329:680329][HBMEM] <hbmem_free:149> Fail to free buffer(ret=-16777214).

[ERROR]["LOG"][src/utils/mem_log.c:108] [ERROR][16322.79695][680329:680329][MEM_ALLOCATOR] <hb_mem_free_buf_with_vaddr:1506> Invalid NULL virt_addr.

[ERROR]["LOG"][src/utils/mem_log.c:108] [ERROR][16322.79697][680329:680329][HBMEM] <hbmem_free:149> Fail to free buffer(ret=-16777214).

root@ubuntu:~/dev_ws/src/origincar/origincar_deeplearning/line_follower_perception# ls

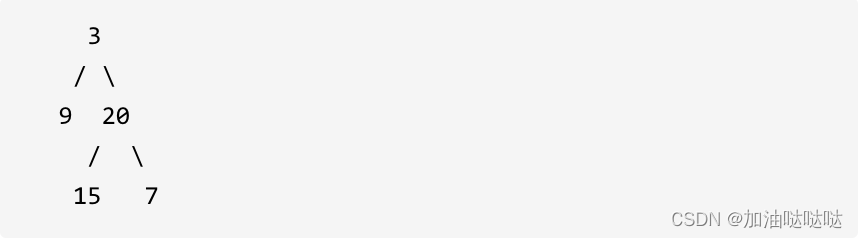

这里有一个错误提示如下:

[E][DNN][validate_util.cpp:641][Util](2024-04-03,17:31:11.436.85) left: 0 and right: 959, should be in [0, 640)

也就是 image_width 是0~959,超过了范围640

为什么会报这个错呢?

原来,OriginBot的摄像头是MIPI接口,而OriginCar的摄像头是USB接口,

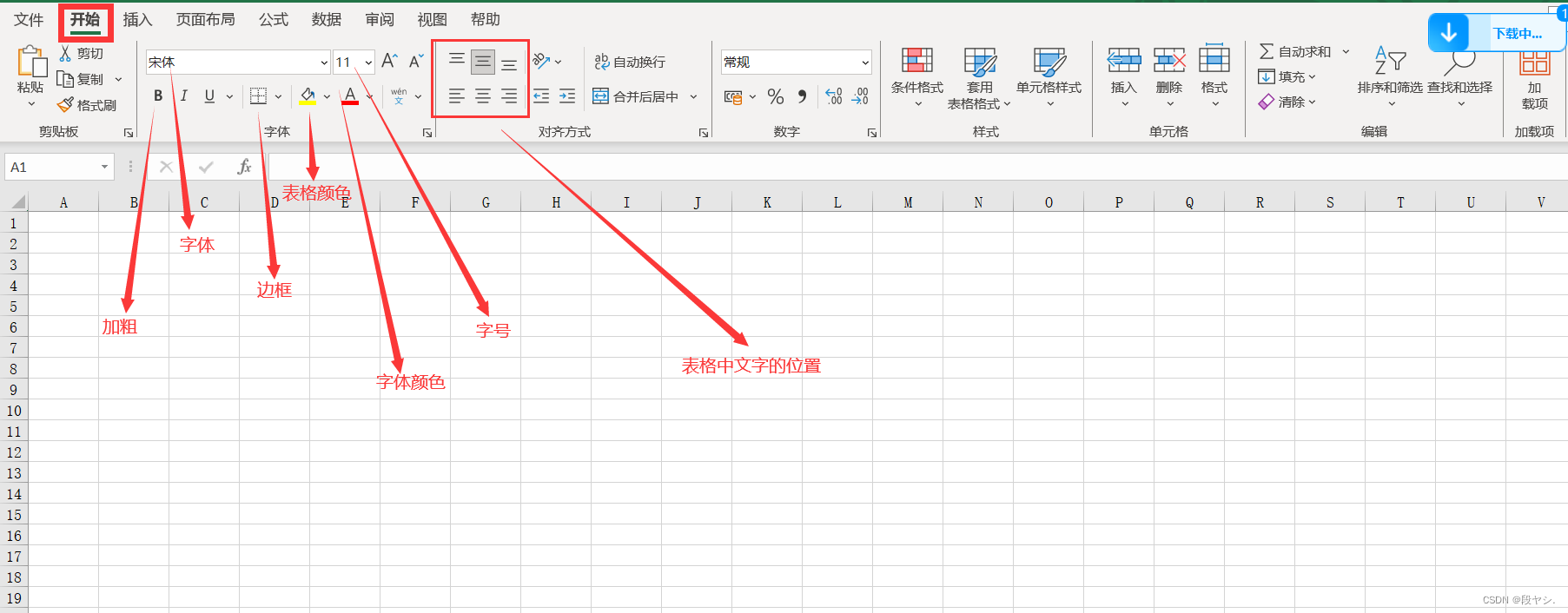

对应受到影响的文件有以下几个:

OriginBot:

~/dev_ws/src/originbot/originbot_bringup/launch/camera.launch.py

def generate_launch_description():

return LaunchDescription([

Node(

package='mipi_cam',

executable='mipi_cam',

output='screen',

parameters=[

{"mipi_camera_calibration_file_path": "/opt/tros/lib/mipi_cam/config/GC4663_calibration.yaml"},

{"out_format": "bgr8"},

{"image_width": 960}, # 960

{"image_height": 544},

{"io_method": "ros"},

{"mipi_video_device": "GC4663"}

],

arguments=['--ros-args', '--log-level', 'error']

),

Node(

package='originbot_demo',

executable='transport_img',

arguments=['--ros-args', '--log-level', 'error']

),

])~/dev_ws/src/originbot/originbot_bringup/launch/camera_websoket_display.launch.py

def generate_launch_description():

mipi_cam_device_arg = DeclareLaunchArgument(

'device',

default_value='GC4663',

description='mipi camera device')

mipi_node = IncludeLaunchDescription(

PythonLaunchDescriptionSource(

os.path.join(

get_package_share_directory('mipi_cam'),

'launch/mipi_cam.launch.py')),

launch_arguments={

'mipi_image_width': '960', # 960

'mipi_image_height': '544',

'mipi_io_method': 'shared_mem',

'mipi_video_device': LaunchConfiguration('device')

}.items()

)

~/dev_ws/src/originbot_desktop/originbot_deeplearning/line_follower_model/line_follower_model/annotation_member_function.py

OriginBot上的MIPI摄像头发布的话题是/image_raw,图像格式是bgr8,而OriginCar上的USB摄像头发布的话题是/image,图像格式是JPEG

def __init__(self):

super().__init__('ImageSubscriber')

# 创建sub节点,订阅image_raw话题

self.subscription = self.create_subscription(

Image,

'image_raw', ## image_raw 在OriginCar上应该改为image

self.listener_callback,

1)

# 创建CvBridge实例

self.bridge = CvBridge()

self.x = -1

self.y = -1

self.uuid = -1

self.image = np.zeros((960, 224, 3)) # 960

self.initialize = False

# 检查用户存放标定数据的image_dataset文件夹是否存在,如果不存在则创建

if not os.path.exists('./image_dataset'):

os.makedirs('./image_dataset')

# 设置opecv展示窗属性

cv.namedWindow("capture image", cv.WINDOW_NORMAL)

cv.resizeWindow("capture image", 960, 224) # 960

self.subscription

~/dev_ws/src/originbot_desktop/originbot_deeplearning/line_follower_model/line_follower_model/training_member_function.py

# 创建一个torch.utils.data.Dataset的实现。因为模型输入为224*224,图像分辨率为960*224所以X方向坐标需要缩放

def get_x(path, width):

"""Gets the x value from the image filename"""

return (float(int(path.split("_")[1])) * 224.0 / 960.0 - width/2) / (width/2) # 960

~/dev_ws/src/orginbot/originbot_deeplearning/line_follower_perception/src/line_follower_perception.cpp

void LineFollowerPerceptionNode::subscription_callback(

const hbm_img_msgs::msg::HbmMsg1080P::SharedPtr msg) {

...

hbDNNRoi roi;

roi.left = 0;

roi.top = 160;

roi.right = 960 - 1; // 960

roi.bottom = 384 - 1;

hbDNNTensor input_tensor;

prepare_nv12_tensor_without_padding(reinterpret_cast<const char*>(msg->data.data()),

msg->height,

msg->width,

&input_tensor);

// Prepare output tensor

hbDNNTensor output_tensor;

prepare_nv12_tensor_without_padding(224, 224, &output_tensor);

// resize

hbDNNResizeCtrlParam ctrl = {

HB_BPU_CORE_0, 0, HB_DNN_RESIZE_TYPE_BILINEAR, 0, 0, 0, 0};

hbDNNTaskHandle_t task_handle = nullptr;

hbDNNResize(&task_handle, &output_tensor, &input_tensor, &roi, &ctrl);

}

int32_t LineCoordinateParser::Parse(

std::shared_ptr<LineCoordinateResult> &output,

std::vector<std::shared_ptr<InputDescription>> &input_descriptions,

std::shared_ptr<OutputDescription> &output_description,

std::shared_ptr<DNNTensor> &output_tensor) {

...

...

hbSysFlushMem(&(tensor.sysMem[0]), HB_SYS_MEM_CACHE_INVALIDATE);

float x = reinterpret_cast<float *>(tensor.sysMem[0].virAddr)[0];

float y = reinterpret_cast<float *>(tensor.sysMem[0].virAddr)[1];

result->x = (x * 112 + 112) * 960.0 / 224.0; // 960

result->y = 224 - (y * 112 + 112) + 272 - 112;

RCLCPP_INFO(rclcpp::get_logger("LineFollowerPerceptionNode"),

"coor rawx: %f, rawy:%f, x: %f y:%f", x, y, result->x, result->y);

return 0;

}

OriginCar:

~/dev_ws/src/origincar/origincar_deeplearning/line_follower_perception/src/line_follower_perception.cpp

~/dev_ws/src/origincar/origincar_bringup/launch/usb_websocket_display.launch.py usb_node = IncludeLaunchDescription(PythonLaunchDescriptionSource(get_package_share_directory('hobot_usb_cam') + '/launch/hobot_usb_cam.launch.py'),

launch_arguments={'usb_image_width': '640', 'usb_image_height': '480',

'usb_video_device': LaunchConfiguration('device')}.items())