Replace the yum repositories

wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

wget -O /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo

yum clean all

yum makecache

yum -y update

Install Docker

wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

yum -y install docker-ce-19.03.8-3.el7

yum update xfsprogs -y

rm -rf /etc/docker/daemon.json

vi /etc/docker/daemon.json

{
  "exec-opts": ["native.cgroupdriver=systemd"],
  "registry-mirrors": [
    "https://1nj0zren.mirror.aliyuncs.com",
    "https://kfwkfulq.mirror.aliyuncs.com",
    "https://2lqq34jg.mirror.aliyuncs.com",
    "https://pee6w651.mirror.aliyuncs.com",
    "http://hub-mirror.c.163.com",
    "https://docker.mirrors.ustc.edu.cn",
    "http://f1361db2.m.daocloud.io",
    "https://registry.docker-cn.com"
  ]
}
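A syntax error in daemon.json (for example a trailing comma after the last mirror) keeps Docker from starting, so it can help to check the file before restarting. The following is a minimal Go sketch under that assumption; the `daemonConfig` type and `parseDaemonConfig` helper are illustrative names and model only the two keys used above.

```go
package main

import (
	"encoding/json"
	"fmt"
	"os"
)

// daemonConfig models only the two keys used in the daemon.json above.
// It is an illustrative type, not Docker's own configuration struct.
type daemonConfig struct {
	ExecOpts        []string `json:"exec-opts"`
	RegistryMirrors []string `json:"registry-mirrors"`
}

// parseDaemonConfig decodes the JSON and returns an error for malformed
// input such as a trailing comma.
func parseDaemonConfig(data []byte) (daemonConfig, error) {
	var cfg daemonConfig
	err := json.Unmarshal(data, &cfg)
	return cfg, err
}

func main() {
	data, err := os.ReadFile("/etc/docker/daemon.json")
	if err != nil {
		fmt.Println("cannot read file:", err)
		return
	}
	cfg, err := parseDaemonConfig(data)
	if err != nil {
		fmt.Println("invalid JSON:", err)
		return
	}
	fmt.Println("exec-opts:", cfg.ExecOpts)
	fmt.Println("number of mirrors:", len(cfg.RegistryMirrors))
}
```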

Restart Docker

systemctl daemon-reload

systemctl restart docker

View the Docker logs (run the daemon in the foreground)

dockerd

Install Kubernetes

docker pull registry.aliyuncs.com/google_containers/kube-proxy:v1.19.0

docker pull registry.aliyuncs.com/google_containers/kube-apiserver:v1.19.0

docker pull registry.aliyuncs.com/google_containers/kube-controller-manager:v1.19.0

docker pull registry.aliyuncs.com/google_containers/kube-scheduler:v1.19.0

docker pull registry.aliyuncs.com/google_containers/etcd:3.4.9-1

docker pull registry.aliyuncs.com/google_containers/coredns:1.7.0

docker pull registry.aliyuncs.com/google_containers/pause:3.2

systemctl stop firewalld && systemctl disable firewalld

swapoff -a && sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab
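The sed expression above comments out every /etc/fstab line that contains " swap ", so swap stays off after a reboot. As a rough illustration of that transformation, here is a small Go sketch; `commentOutSwap` is a hypothetical helper and the sample fstab entries are made up.

```go
package main

import (
	"fmt"
	"strings"
)

// commentOutSwap prefixes every line containing " swap " with "#",
// mirroring what the sed expression does to /etc/fstab.
func commentOutSwap(fstab string) string {
	lines := strings.Split(fstab, "\n")
	for i, line := range lines {
		if strings.Contains(line, " swap ") {
			lines[i] = "#" + line
		}
	}
	return strings.Join(lines, "\n")
}

func main() {
	// Hypothetical fstab content, for illustration only.
	sample := "/dev/mapper/centos-root / xfs defaults 0 0\n" +
		"/dev/mapper/centos-swap swap swap defaults 0 0\n"
	fmt.Print(commentOutSwap(sample))
}
```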

setenforce 0 && sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

yum -y install ntpdate

ntpdate time.windows.com

hwclock --systohc

cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF

sysctl --system

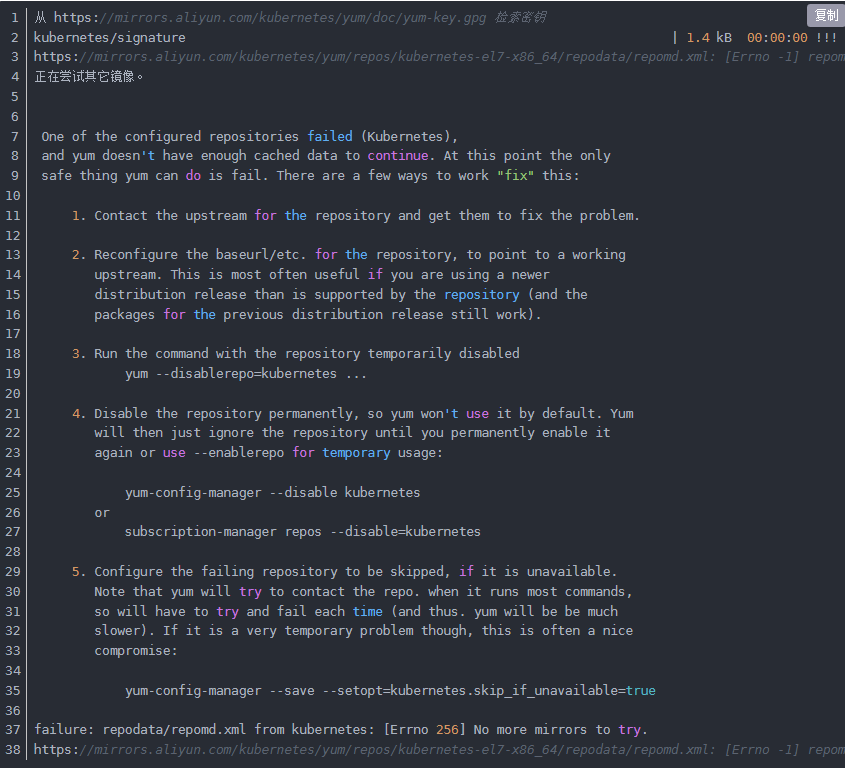

cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

yum remove -y kubelet-1.19.0 kubeadm-1.19.0 kubectl-1.19.0

yum install -y kubelet-1.19.0 kubeadm-1.19.0 kubectl-1.19.0

systemctl enable kubelet

Edit the configuration file

/usr/lib/systemd/system/kubelet.service.d/10-kubeadm.conf

ExecStart=/usr/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_CONFIG_ARGS $KUBELET_KUBEADM_ARGS $KUBELET_EXTRA_ARGS --feature-gates SupportPodPidsLimit=false --feature-gates SupportNodePidsLimit=false

systemctl daemon-reload

systemctl restart docker

systemctl restart kubelet

kubeadm init --kubernetes-version=1.19.0 \
  --apiserver-advertise-address=$(ifconfig | grep eno -A 1 | grep inet | awk '{ print $2 }') \
  --image-repository=registry.aliyuncs.com/google_containers \
  --service-cidr=10.1.0.0/16 \
  --pod-network-cidr=10.244.0.0/16 \
  --ignore-preflight-errors=all \
  --v=5
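The advertise address above is taken from the first `inet` line of an interface whose name starts with `eno`. The same lookup can be sketched in Go with the standard library, which may help when checking which address would be picked on a given host. `firstIPv4` is an illustrative helper name, and the `eno` prefix is carried over from the command above.

```go
package main

import (
	"fmt"
	"net"
	"strings"
)

// firstIPv4 returns the first IPv4 address among the given "ip/prefix"
// strings, or "" if there is none.
func firstIPv4(cidrs []string) string {
	for _, c := range cidrs {
		ip, _, err := net.ParseCIDR(c)
		if err != nil {
			continue
		}
		if ip4 := ip.To4(); ip4 != nil {
			return ip4.String()
		}
	}
	return ""
}

func main() {
	ifaces, err := net.Interfaces()
	if err != nil {
		fmt.Println("cannot list interfaces:", err)
		return
	}
	for _, iface := range ifaces {
		// Same interface-name filter as the grep in the kubeadm command.
		if !strings.HasPrefix(iface.Name, "eno") {
			continue
		}
		addrs, err := iface.Addrs()
		if err != nil {
			continue
		}
		var cidrs []string
		for _, a := range addrs {
			cidrs = append(cidrs, a.String())
		}
		if ip := firstIPv4(cidrs); ip != "" {
			fmt.Println(iface.Name, ip)
		}
	}
}
```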

kubeadm join --token 7q9hvo.e65aim12as1a0v98 --discovery-token-ca-cert-hash sha256:76e68e8ac68af391e3f406a5ea451ea44b0f67a63ac44754a43f8e483d613e14 192.168.116.27:6443 --ignore-preflight-errors=all

Install flannel

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

kubectl apply -f kube-flannel.yml

kubectl get pods -n kube-system

Remove the taint

kubectl describe node localhost.localdomain | grep Taints

kubectl taint nodes localhost.localdomain node.kubernetes.io/not-ready:NoSchedule-

[root@localhost k8s]

NAME                    STATUS   ROLES    AGE   VERSION
localhost.localdomain   Ready    master   49m   v1.19.0

[root@localhost k8s]

NAMESPACE      NAME                                            READY   STATUS    RESTARTS   AGE
default        nginx1-6978dd5678-66wc7                         1/1     Running   0          15m
kube-flannel   kube-flannel-ds-rvdqq                           1/1     Running   1          29m
kube-system    coredns-6d56c8448f-mcbkv                        1/1     Running   0          28m
kube-system    coredns-6d56c8448f-z49bb                        1/1     Running   0          41m
kube-system    etcd-localhost.localdomain                      1/1     Running   1          41m
kube-system    kube-apiserver-localhost.localdomain            1/1     Running   2          41m
kube-system    kube-controller-manager-localhost.localdomain   1/1     Running   3          41m
kube-system    kube-proxy-jcbcq                                1/1     Running   1          41m
kube-system    kube-scheduler-localhost.localdomain            1/1     Running   3          41m
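The cluster is usable once every pod in a listing like the one above reports Running. A small Go sketch of that check follows; it assumes the six-column layout shown (NAMESPACE, NAME, READY, STATUS, RESTARTS, AGE), and `notRunning` is an illustrative helper rather than anything provided by kubectl.

```go
package main

import (
	"fmt"
	"strings"
)

// notRunning returns "namespace/name" for every pod row whose STATUS
// column is not "Running". The header row is skipped.
func notRunning(table string) []string {
	var bad []string
	for _, line := range strings.Split(table, "\n") {
		f := strings.Fields(line)
		if len(f) < 4 || f[0] == "NAMESPACE" {
			continue
		}
		if f[3] != "Running" {
			bad = append(bad, f[0]+"/"+f[1])
		}
	}
	return bad
}

func main() {
	// A few rows copied from the listing above.
	table := `NAMESPACE      NAME                       READY   STATUS    RESTARTS   AGE
default        nginx1-6978dd5678-66wc7    1/1     Running   0          15m
kube-flannel   kube-flannel-ds-rvdqq      1/1     Running   1          29m
kube-system    coredns-6d56c8448f-mcbkv   1/1     Running   0          28m`
	fmt.Println("pods not running:", notRunning(table))
}
```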

[root@localhost k8s]

gRPC service demo

// protoc --go_out=plugins=grpc:. demo.proto
syntax = "proto3";

package demo;

option go_package = "demo";

service demo {
  rpc hello(helloReq) returns (helloResp);
}

message helloReq {
  string req = 1;
}

message helloResp {
  string msg = 1;
}

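package main

import (
	"context"
	"flag"
	"fmt"
	"log"
	"net"
	"os"

	"google.golang.org/grpc"
	"google.golang.org/grpc/reflection"

	pb "gg/demo"
)

// server implements the Demo service generated from demo.proto.
type server struct{}

var (
	// flag.String takes the flag name, the default value, then the usage text.
	listen = flag.String("listen", ":80", "address to listen on")
)

func main() {
	flag.Parse()
	fmt.Println(os.Args)

	s := grpc.NewServer()
	pb.RegisterDemoServer(s, &server{})
	// Register reflection so that clients can discover the service at runtime.
	reflection.Register(s)

	l, err := net.Listen("tcp", *listen)
	if err != nil {
		log.Printf("Failed to listen %s, err %v", *listen, err)
		return
	}
	log.Println("server run")
	if err := s.Serve(l); err != nil {
		log.Printf("Failed to serve, err %v", err)
	}
}

// Hello echoes the request string back in the response message.
func (s *server) Hello(ctx context.Context, in *pb.HelloReq) (*pb.HelloResp, error) {
	log.Printf("Hello req:%+v", in)
	return &pb.HelloResp{Msg: in.Req}, nil
}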
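The server takes its listen address from a `--listen` flag. `flag.String` expects its arguments in the order name, default value, usage text, so the default is only correct when the address is the second argument. The sketch below isolates that parsing with a separate `flag.FlagSet` so it can be run without the gRPC dependencies; `parseListen` is an illustrative helper, not part of the server above.

```go
package main

import (
	"flag"
	"fmt"
)

// parseListen parses args the way the server's main does and returns the
// resulting listen address.
func parseListen(args []string) (string, error) {
	fs := flag.NewFlagSet("main", flag.ContinueOnError)
	// Argument order: flag name, default value, usage text.
	listen := fs.String("listen", ":80", "address to listen on")
	if err := fs.Parse(args); err != nil {
		return "", err
	}
	return *listen, nil
}

func main() {
	// No arguments: the default value is used.
	addr, err := parseListen(nil)
	fmt.Println(addr, err)

	// Explicit value, as passed by a container command line.
	addr, err = parseListen([]string{"--listen=:8080"})
	fmt.Println(addr, err)
}
```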

Write the Dockerfile

FROM ubuntu

ADD ./main /

EXPOSE 80

EXPOSE 8078

Build the image

docker build -t helloworld:1.0 .

Deployment and Service

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: helloworld
  name: helloworld
spec:
  replicas: 1
  selector:
    matchLabels:
      app: helloworld
  template:
    metadata:
      labels:
        app: helloworld
    spec:
      containers:
      - image: helloworld:1.0
        imagePullPolicy: Never
        name: helloworld
        command:
        - /main
        - --listen=:80
---
apiVersion: v1
kind: Service
metadata:
  labels:
    app: helloworld
  name: helloworld
spec:
  selector:
    app: helloworld
  ports:
  - name: http-admin
    port: 8078
    protocol: TCP
    targetPort: 8078
  - name: grpc
    port: 80
    protocol: TCP
    targetPort: 80
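The Service reaches the Deployment's pods only because its selector (`app: helloworld`) matches the labels in the pod template. The equality-based matching rule can be sketched in a few lines of Go; `selects` is an illustrative function and not the Kubernetes implementation.

```go
package main

import "fmt"

// selects reports whether every key/value pair of selector is present
// in labels with the same value.
func selects(selector, labels map[string]string) bool {
	for k, want := range selector {
		got, ok := labels[k]
		if !ok || got != want {
			return false
		}
	}
	return true
}

func main() {
	selector := map[string]string{"app": "helloworld"}
	podLabels := map[string]string{"app": "helloworld"}
	// The label value below is made up to show a non-matching pod.
	otherLabels := map[string]string{"app": "nginx1"}

	fmt.Println("helloworld pod selected:", selects(selector, podLabels))
	fmt.Println("other pod selected:", selects(selector, otherLabels))
}
```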

Test

[root@localhost ~]

NAME         TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)           AGE
helloworld   ClusterIP   10.1.173.212   <none>        8078/TCP,80/TCP   58s
kubernetes   ClusterIP   10.1.0.1       <none>        443/TCP           4h42m
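Both ClusterIPs listed here should fall inside the `--service-cidr=10.1.0.0/16` range given to kubeadm init, and that range should not overlap `--pod-network-cidr=10.244.0.0/16`. A minimal Go sketch of both checks using only the standard library; the helper names `inCIDR` and `overlaps` are illustrative.

```go
package main

import (
	"fmt"
	"net"
)

// inCIDR reports whether ip lies inside the given CIDR block.
func inCIDR(ip, cidr string) (bool, error) {
	_, block, err := net.ParseCIDR(cidr)
	if err != nil {
		return false, err
	}
	addr := net.ParseIP(ip)
	if addr == nil {
		return false, fmt.Errorf("invalid IP %q", ip)
	}
	return block.Contains(addr), nil
}

// overlaps reports whether two CIDR blocks share any address. Two CIDR
// blocks are either nested or disjoint, so it is enough to test whether
// one contains the other's network address.
func overlaps(a, b string) (bool, error) {
	_, na, err := net.ParseCIDR(a)
	if err != nil {
		return false, err
	}
	_, nb, err := net.ParseCIDR(b)
	if err != nil {
		return false, err
	}
	return na.Contains(nb.IP) || nb.Contains(na.IP), nil
}

func main() {
	ok, err := inCIDR("10.1.173.212", "10.1.0.0/16")
	fmt.Println("ClusterIP in service CIDR:", ok, err)

	clash, err := overlaps("10.1.0.0/16", "10.244.0.0/16")
	fmt.Println("service and pod CIDRs overlap:", clash, err)
}
```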

[root@localhost ~]

{
  "msg": "aa"
}

[root@localhost ~]

NAME                          READY   STATUS    RESTARTS   AGE
helloworld-6fcfff47d5-srdsr   1/1     Running   0          5m31s
nginx1-6978dd5678-66wc7       1/1     Running   1          4h2m

[root@localhost ~]

[/main --listen=:80]

2024/04/04 13:56:54 server run

2024/04/04 14:01:37 Hello req:req:"aa"

2024/04/04 14:02:17 Hello req:req:"aa"