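The attention mechanism is one of the key techniques in deep learning and is attracting growing interest. It was proposed as a way to filter information by its contribution: it helps a neural network focus on task-relevant features and reduces the influence of information that contributes little to the task. Using attention can therefore improve both the learning capacity and the interpretability of a neural network. The Transformer is the representative model built purely on attention: it learns global dependencies directly from the raw input using attention alone, without being paired with networks such as CNNs or LSTMs. Compared with conventional deep learning networks, the Transformer parallelizes better and captures long-range dependencies in sequences more effectively. As a powerful force in the modern deep learning stack, the Transformer has already produced a number of research results.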
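To make the idea of weighting inputs by relevance concrete, here is a minimal sketch of scaled dot-product attention, the form of attention used in the Transformer. The function name `scaled_dot_product_attention`, the tensor shapes, and the boolean `mask` convention (True means "may attend") are assumptions chosen for this example; they are not taken from the code later in this post.

```python
import math
import torch


def scaled_dot_product_attention(q, k, v, mask=None):
    """Compute softmax(q k^T / sqrt(d_k)) v over the last two dimensions."""
    d_k = q.size(-1)
    # Similarity of every query position to every key position.
    scores = q @ k.transpose(-2, -1) / math.sqrt(d_k)
    if mask is not None:
        # Positions where mask is False receive zero weight after softmax.
        scores = scores.masked_fill(~mask, float("-inf"))
    weights = torch.softmax(scores, dim=-1)  # each row sums to 1
    return weights @ v, weights


torch.manual_seed(0)
x = torch.randn(2, 5, 8)  # (batch, sequence length, feature dimension)
out, weights = scaled_dot_product_attention(x, x, x)  # self-attention
print(out.shape)        # torch.Size([2, 5, 8])
print(weights.sum(-1))  # every entry is 1 (up to rounding)
```

Each output position is a weighted average of all value vectors, so features that score low against the query contribute little to the result.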

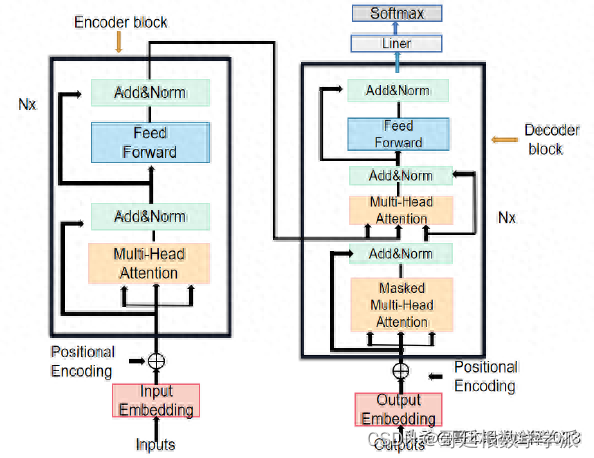

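The Transformer is an encoder-decoder model. Unlike a conventional convolutional neural network, it is built mainly from multi-head attention and feed-forward networks, as shown in the figure.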

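The left side of the figure is a stack of N encoder blocks and the right side is a stack of N decoder blocks. Each encoder block has two sublayers, multi-head attention and a feed-forward network, and each sublayer is wrapped in a residual connection. Compared with the encoder block, the decoder block adds a masked multi-head attention sublayer, which stops each position from attending to later positions so that the model can predict its output one step at a time. The Transformer was originally designed for text translation, so the encoder and decoder inputs pass through an Embedding step; to retain the order of the sequence, positional encoding is also added to the inputs.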
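The structure described above can be sketched in PyTorch as follows. This is an illustrative sketch, not the model used later in this post: `sinusoidal_positions` and `EncoderBlock` are hypothetical names, the default sizes simply reuse values from the training script (64, 4, 256, 0.1), and the layer normalization after each residual connection follows the original Transformer design rather than anything stated here. The last lines show the kind of mask a decoder's masked attention would use.

```python
import math
import torch
import torch.nn as nn


def sinusoidal_positions(seq_len, d_model):
    """Fixed sine/cosine position table of shape (seq_len, d_model); d_model must be even."""
    pos = torch.arange(seq_len, dtype=torch.float32).unsqueeze(1)
    div = torch.exp(
        torch.arange(0, d_model, 2, dtype=torch.float32) * (-math.log(10000.0) / d_model)
    )
    table = torch.zeros(seq_len, d_model)
    table[:, 0::2] = torch.sin(pos * div)  # even feature indices
    table[:, 1::2] = torch.cos(pos * div)  # odd feature indices
    return table


class EncoderBlock(nn.Module):
    """Multi-head self-attention and a feed-forward network, each with a residual connection."""

    def __init__(self, d_model=64, heads=4, d_ff=256, dropout=0.1):
        super().__init__()
        self.attn = nn.MultiheadAttention(d_model, heads, dropout=dropout, batch_first=True)
        self.ff = nn.Sequential(nn.Linear(d_model, d_ff), nn.ReLU(), nn.Linear(d_ff, d_model))
        self.norm1 = nn.LayerNorm(d_model)
        self.norm2 = nn.LayerNorm(d_model)
        self.drop = nn.Dropout(dropout)

    def forward(self, x, attn_mask=None):
        attn_out, _ = self.attn(x, x, x, attn_mask=attn_mask, need_weights=False)
        x = self.norm1(x + self.drop(attn_out))    # residual around attention
        x = self.norm2(x + self.drop(self.ff(x)))  # residual around feed-forward
        return x


torch.manual_seed(0)
x = torch.randn(2, 16, 64) + sinusoidal_positions(16, 64)  # add order information
block = EncoderBlock().eval()
y = block(x)
# Decoder-style causal mask: True marks positions that must NOT be attended to.
causal = torch.triu(torch.ones(16, 16, dtype=torch.bool), diagonal=1)
y_masked = block(x, attn_mask=causal)
print(y.shape, y_masked.shape)
```

Stacking N such blocks gives the encoder side of the figure; the block keeps the input shape unchanged, which is what makes stacking possible.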

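A few things to get clear:

What the Transformer is for

What the Transformer takes as input

What the Transformer produces as output

What the Transformer is and what it looks like

How the Transformer can be further optimized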

How to understand the Transformer step by step? - answer by 麦克船长 - Zhihu

https://www.zhihu.com/question/471328838/answer/2864224369

How to understand the Transformer step by step? - answer by Gordon Lee - Zhihu

https://www.zhihu.com/question/4713

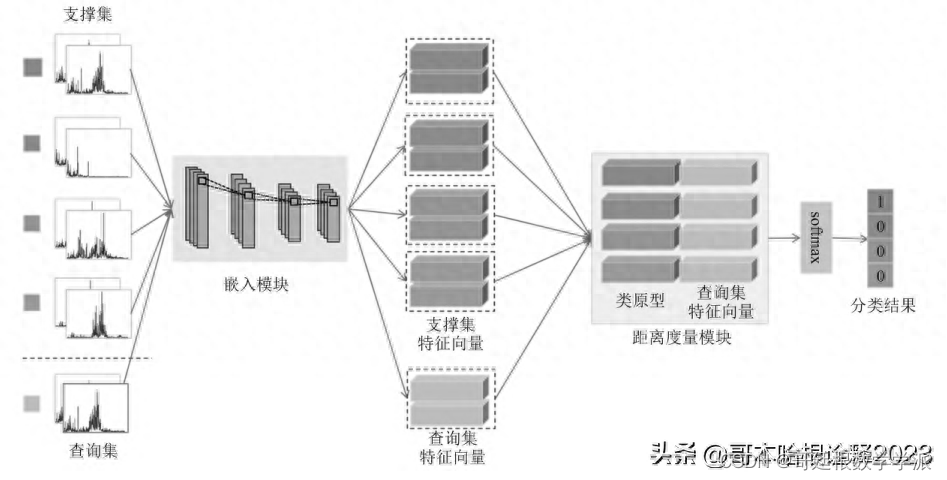

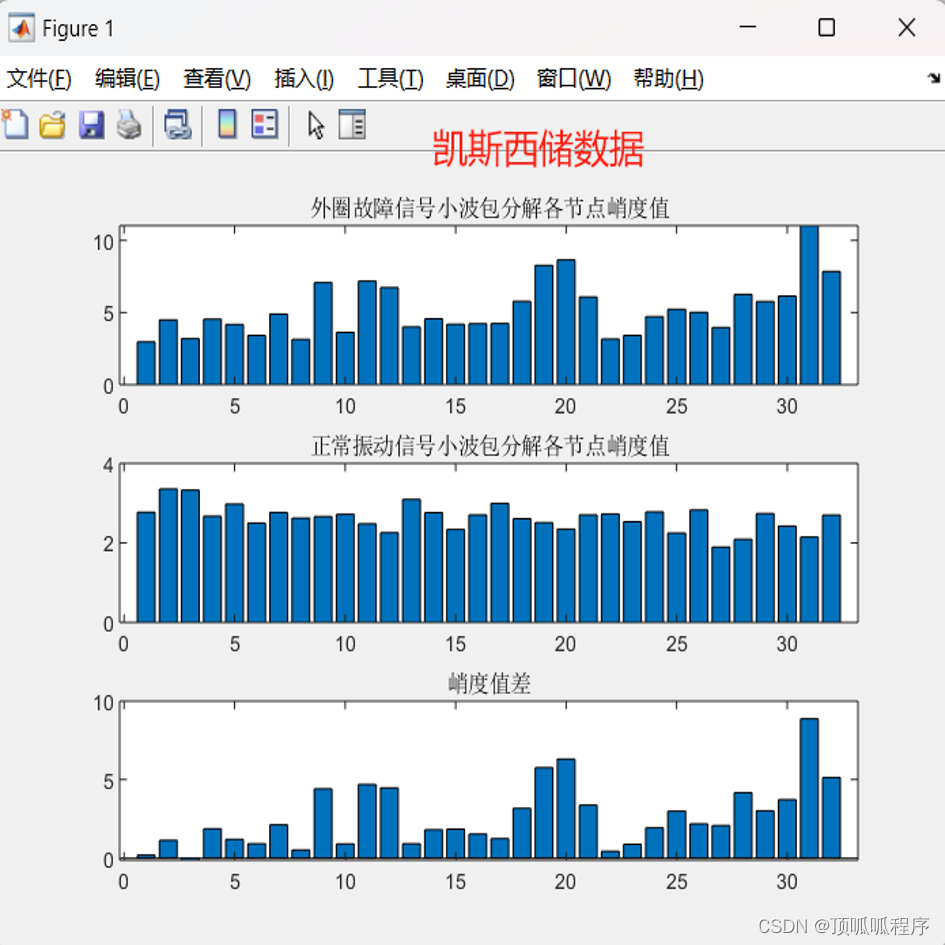

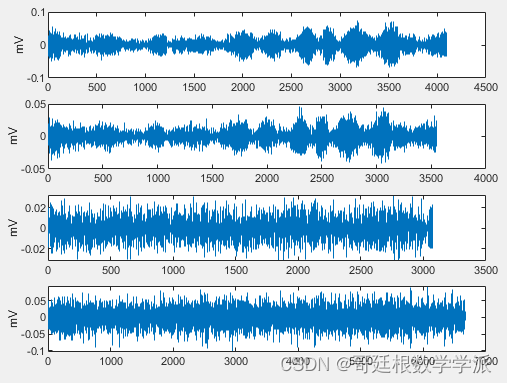

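In view of this, a simple Transformer-based method for rolling bearing fault diagnosis is proposed. It runs in Python with the PyTorch deep learning library and uses the Case Western Reserve University bearing dataset. The main code is as follows: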

import torch

import torch.nn as nn

import torch.optim as optim

from model import DSCTransformer

from tqdm import tqdm

from data_set import MyDataset

from torch.utils.data import DataLoader

def train(epochs=100):

#定义参数

N = 4 #编码器个数

input_dim = 1024

seq_len = 16 #句子长度

d_model = 64 #词嵌入维度

d_ff = 256 #全连接层维度

head = 4 #注意力头数

dropout = 0.1

lr = 5E-5 #学习率

batch_size = 32

#加载数据

train_path = r'data\train\train.csv'

val_path = r'data\val\val.csv'

train_dataset = MyDataset(train_path, 'fd')

val_dataset = MyDataset(val_path, 'fd')

train_loader = DataLoader(dataset=train_dataset, batch_size=batch_size, shuffle=True, drop_last=True)

val_loader = DataLoader(dataset=val_dataset, batch_size=batch_size, shuffle=True, drop_last=True)

#定义模型

model = DSCTransformer(input_dim=input_dim, num_classes=10, dim=d_model, depth=N,

heads=head, mlp_dim=d_ff, dim_head=d_model, emb_dropout=dropout, dropout=dropout)

criterion = nn.CrossEntropyLoss()

params = [p for p in model.parameters() if p.requires_grad]

optimizer = optim.Adam(params, lr=lr)

device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')

print("using {} device.".format(device))

model.to(device)

#训练

for epoch in range(epochs):

#train

train_loss = []

train_acc = []

model.train()

train_bar = tqdm(train_loader)

for datas, labels in train_bar:

optimizer.zero_grad()

outputs = model(datas.float().to(device))

loss = criterion(outputs, labels.type(torch.LongTensor).to(device))

loss.backward()

optimizer.step()

# torch.argmax(dim=-1), 求每一行最大的列序号

acc = (outputs.argmax(dim=-1) == labels.to(device)).float().mean()

# Record the loss and accuracy

train_loss.append(loss.item())

train_acc.append(acc)

train_bar.desc = "train epoch[{}/{}] loss:{:.3f}".format(epoch + 1, epochs, loss.item())

#val

model.eval()

valid_loss = []

valid_acc = []

val_bar = tqdm(val_loader)

for datas, labels in val_bar:

with torch.no_grad():

outputs = model(datas.float().to(device))

loss = criterion(outputs, labels.type(torch.LongTensor).to(device))

acc = (outputs.argmax(dim=-1) == labels.to(device)).float().mean()

# Record the loss and accuracy

valid_loss.append(loss.item())

valid_acc.append(acc)

val_bar.desc = "valid epoch[{}/{}]".format(epoch + 1, epochs)

print(f"[{epoch + 1:02d}/{epochs:02d}] train loss = "

f"{sum(train_loss) / len(train_loss):.5f}, train acc = {sum(train_acc) / len(train_acc):.5f}", end=" ")

print(f"valid loss = {sum(valid_loss) / len(valid_loss):.5f}, valid acc = {sum(valid_acc) / len(valid_acc):.5f}")

if __name__ == '__main__':

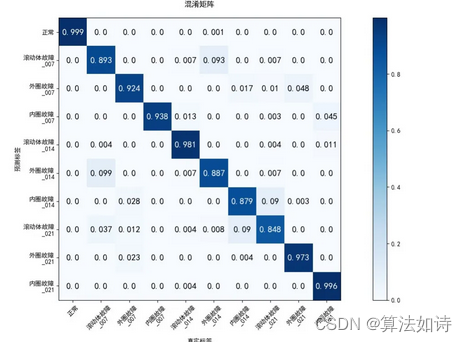

train()运行结果如下:

train epoch[80/100] loss:0.014: 100%|██████████| 37/37 [00:01<00:00, 24.50it/s]

valid epoch[80/100]: 100%|██████████| 12/12 [00:00<00:00, 90.47it/s]

[80/100] train loss = 0.01458, train acc = 1.00000 valid loss = 0.03555, valid acc = 0.99479

train epoch[81/100] loss:0.013: 100%|██████████| 37/37 [00:01<00:00, 25.19it/s]

valid epoch[81/100]: 100%|██████████| 12/12 [00:00<00:00, 78.13it/s]

[81/100] train loss = 0.01421, train acc = 1.00000 valid loss = 0.03513, valid acc = 0.99479

train epoch[82/100] loss:0.012: 100%|██████████| 37/37 [00:01<00:00, 24.34it/s]

valid epoch[82/100]: 100%|██████████| 12/12 [00:00<00:00, 80.21it/s]

[82/100] train loss = 0.01436, train acc = 1.00000 valid loss = 0.03407, valid acc = 0.99479

train epoch[83/100] loss:0.012: 100%|██████████| 37/37 [00:01<00:00, 24.44it/s]

valid epoch[83/100]: 100%|██████████| 12/12 [00:00<00:00, 83.56it/s]

[83/100] train loss = 0.01352, train acc = 1.00000 valid loss = 0.03477, valid acc = 0.99479

train epoch[84/100] loss:0.012: 100%|██████████| 37/37 [00:01<00:00, 25.02it/s]

valid epoch[84/100]: 100%|██████████| 12/12 [00:00<00:00, 81.85it/s]

[84/100] train loss = 0.01425, train acc = 1.00000 valid loss = 0.03413, valid acc = 0.99219

train epoch[85/100] loss:0.016: 100%|██████████| 37/37 [00:01<00:00, 24.03it/s]

valid epoch[85/100]: 100%|██████████| 12/12 [00:00<00:00, 77.63it/s]

[85/100] train loss = 0.01345, train acc = 1.00000 valid loss = 0.03236, valid acc = 0.99479

train epoch[86/100] loss:0.017: 100%|██████████| 37/37 [00:01<00:00, 24.00it/s]

valid epoch[86/100]: 100%|██████████| 12/12 [00:00<00:00, 78.13it/s]

[86/100] train loss = 0.01273, train acc = 1.00000 valid loss = 0.02760, valid acc = 0.99479

train epoch[87/100] loss:0.011: 100%|██████████| 37/37 [00:01<00:00, 24.83it/s]

valid epoch[87/100]: 100%|██████████| 12/12 [00:00<00:00, 81.28it/s]

[87/100] train loss = 0.01185, train acc = 1.00000 valid loss = 0.02206, valid acc = 0.99740

train epoch[88/100] loss:0.010: 100%|██████████| 37/37 [00:01<00:00, 24.75it/s]

valid epoch[88/100]: 100%|██████████| 12/12 [00:00<00:00, 89.79it/s]

[88/100] train loss = 0.01135, train acc = 1.00000 valid loss = 0.02629, valid acc = 0.99479

train epoch[89/100] loss:0.012: 100%|██████████| 37/37 [00:01<00:00, 24.39it/s]

valid epoch[89/100]: 100%|██████████| 12/12 [00:00<00:00, 82.98it/s]

[89/100] train loss = 0.01156, train acc = 1.00000 valid loss = 0.02901, valid acc = 0.99479

train epoch[90/100] loss:0.011: 100%|██████████| 37/37 [00:01<00:00, 24.67it/s]

valid epoch[90/100]: 100%|██████████| 12/12 [00:00<00:00, 85.94it/s]

[90/100] train loss = 0.01108, train acc = 1.00000 valid loss = 0.02793, valid acc = 0.99479

train epoch[91/100] loss:0.010: 100%|██████████| 37/37 [00:01<00:00, 24.38it/s]

valid epoch[91/100]: 100%|██████████| 12/12 [00:00<00:00, 87.82it/s]

[91/100] train loss = 0.01050, train acc = 1.00000 valid loss = 0.02961, valid acc = 0.99479

train epoch[92/100] loss:0.013: 100%|██████████| 37/37 [00:01<00:00, 24.75it/s]

valid epoch[92/100]: 100%|██████████| 12/12 [00:00<00:00, 81.30it/s]

[92/100] train loss = 0.01256, train acc = 0.99916 valid loss = 0.04156, valid acc = 0.98177

train epoch[93/100] loss:0.011: 100%|██████████| 37/37 [00:01<00:00, 24.88it/s]

valid epoch[93/100]: 100%|██████████| 12/12 [00:00<00:00, 82.98it/s]

[93/100] train loss = 0.01328, train acc = 0.99916 valid loss = 0.03718, valid acc = 0.99479

train epoch[94/100] loss:0.010: 100%|██████████| 37/37 [00:01<00:00, 24.14it/s]

valid epoch[94/100]: 100%|██████████| 12/12 [00:00<00:00, 78.13it/s]

[94/100] train loss = 0.01258, train acc = 0.99916 valid loss = 0.03206, valid acc = 0.99479

train epoch[95/100] loss:0.009: 100%|██████████| 37/37 [00:01<00:00, 24.30it/s]

valid epoch[95/100]: 100%|██████████| 12/12 [00:00<00:00, 81.30it/s]

[95/100] train loss = 0.01152, train acc = 0.99916 valid loss = 0.04057, valid acc = 0.99219

train epoch[96/100] loss:0.008: 100%|██████████| 37/37 [00:01<00:00, 24.36it/s]

valid epoch[96/100]: 100%|██████████| 12/12 [00:00<00:00, 81.30it/s]

[96/100] train loss = 0.01059, train acc = 1.00000 valid loss = 0.03764, valid acc = 0.99479

train epoch[97/100] loss:0.012: 100%|██████████| 37/37 [00:01<00:00, 24.98it/s]

valid epoch[97/100]: 100%|██████████| 12/12 [00:00<00:00, 83.54it/s]

[97/100] train loss = 0.00955, train acc = 1.00000 valid loss = 0.03798, valid acc = 0.99479

train epoch[98/100] loss:0.008: 100%|██████████| 37/37 [00:01<00:00, 24.23it/s]

valid epoch[98/100]: 100%|██████████| 12/12 [00:00<00:00, 80.75it/s]

[98/100] train loss = 0.00969, train acc = 1.00000 valid loss = 0.03981, valid acc = 0.99219

train epoch[99/100] loss:0.010: 100%|██████████| 37/37 [00:01<00:00, 24.06it/s]

valid epoch[99/100]: 100%|██████████| 12/12 [00:00<00:00, 87.83it/s]

[99/100] train loss = 0.00962, train acc = 1.00000 valid loss = 0.04668, valid acc = 0.98698

train epoch[100/100] loss:0.008: 100%|██████████| 37/37 [00:01<00:00, 24.92it/s]

valid epoch[100/100]: 100%|██████████| 12/12 [00:00<00:00, 82.98it/s]

[100/100] train loss = 0.00876, train acc = 1.00000 valid loss = 0.03714, valid acc = 0.99479
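The accuracies in this log can be turned back into sample counts. Since the script uses `batch_size = 32` with `drop_last=True`, every batch holds exactly 32 samples, so the mean of the per-batch accuracies equals the overall accuracy; the progress bars show 37 training batches and 12 validation batches per epoch. The helper `misclassified` below is a hypothetical name used only for this arithmetic sketch.

```python
batch_size = 32
train_batches, val_batches = 37, 12  # batch counts read from the progress bars


def misclassified(acc, batches, batch_size=batch_size):
    """Return (number of wrong predictions, number of samples) for a logged accuracy."""
    total = batches * batch_size
    return round((1.0 - acc) * total), total


print(misclassified(0.99479, val_batches))    # (2, 384)
print(misclassified(0.98177, val_batches))    # (7, 384)
print(misclassified(0.99916, train_batches))  # (1, 1184)
```

Read this way, the typical validation accuracy of 0.99479 corresponds to 2 wrong predictions out of 384 samples, and the dip to 0.98177 at epoch 92 corresponds to 7.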

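Full code:

Rolling bearing fault diagnosis based on the Transformer model in a PyTorch environment

Areas of expertise: modern signal processing, machine learning, deep learning, digital twins, time series analysis, equipment defect detection, equipment anomaly detection, and intelligent equipment fault diagnosis with prognostics and health management (PHM).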