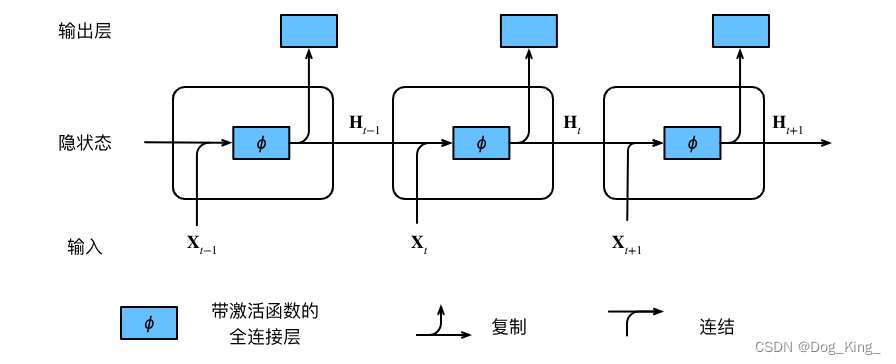

The LSTM code follows essentially the same flow as the RNN code, with a few improvements.

1. Data preparation

From loading the data through word segmentation, the steps are the same as before:

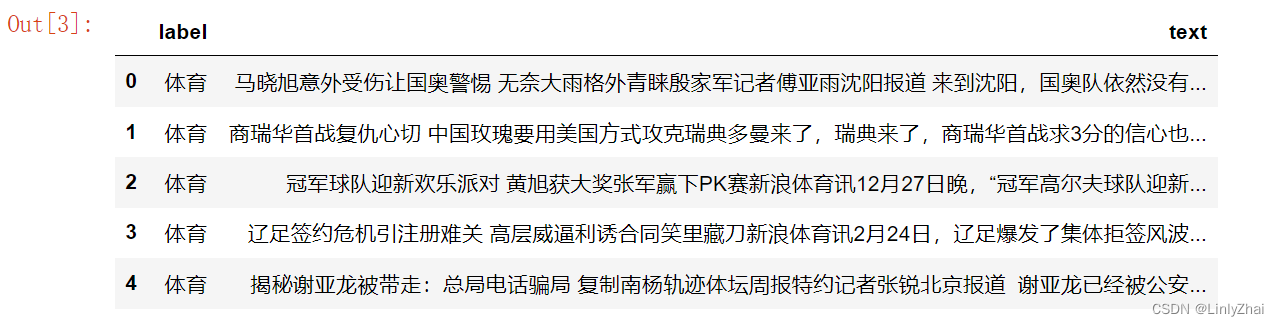

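```python
import re

import jieba
import pandas as pd
from sklearn.model_selection import train_test_split

# Load the data
file_path = './data/news.csv'
data = pd.read_csv(file_path)

# Show the first few rows
data.head()

# Split into training and test sets
X_train, X_test, y_train, y_test = train_test_split(
    data['text'], data['label'], test_size=0.2, random_state=42)
X_train.shape, X_test.shape

# Text cleaning and word segmentation
def clean_and_cut(text):
    # Keep only Latin letters and CJK characters
    # (digits, punctuation and whitespace are all removed)
    text = re.sub(r'[^a-zA-Z\u4e00-\u9fff]', '', text)
    # Segment the text into words with jieba
    words = jieba.cut(text)
    return ' '.join(words)

# Apply the function to the training and test sets
X_train_cut = X_train.apply(clean_and_cut)
X_test_cut = X_test.apply(clean_and_cut)

# Show the processed text
X_train_cut.head()
```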
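The check below shows exactly what the cleaning regex keeps. It runs only the `re.sub` step from `clean_and_cut`. jieba is left out so the snippet needs no extra dependency, and the sample strings are made up. One side effect is worth knowing: the pattern removes whitespace too, so English words are glued together before segmentation.

```python
import re

# Same pattern as in clean_and_cut: keep Latin letters and CJK Unified Ideographs only
PATTERN = re.compile(r'[^a-zA-Z\u4e00-\u9fff]')

def clean(text: str) -> str:
    """Remove digits, punctuation, whitespace and any other non-letter characters."""
    return PATTERN.sub('', text)

print(clean('2023年,AI基金涨了5%!'))  # -> 年AI基金涨了
print(clean('LSTM model'))           # -> LSTMmodel (the space is removed as well)
```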

2. Word embeddings with Word2Vec

In the RNN code we built our own Vocabulary. Here we change that and use the Word2Vec model from the gensim library to create the embeddings.

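```python
import numpy as np
from gensim.models import Word2Vec
from sklearn.feature_extraction.text import CountVectorizer

# Get a vocabulary with CountVectorizer (for inspection only; it is not used below,
# and its default token_pattern ignores single-character words)
vectorizer = CountVectorizer().fit(X_train_cut)
vocabulary = vectorizer.get_feature_names_out()

# Convert the segmented text into lists of tokens
X_train_cut_list = X_train_cut.apply(lambda x: x.split()).tolist()
X_test_cut_list = X_test_cut.apply(lambda x: x.split()).tolist()

# Train the word2vec model
# (the test texts are included here as well, so the embeddings have seen the test vocabulary)
word2vec_model = Word2Vec(sentences=X_train_cut_list + X_test_cut_list,
                          vector_size=100, window=5, min_count=5, workers=4)

# Convert each text into one vector: the mean of its word vectors
def text_to_word2vec(text_list):
    vectors = []
    for text in text_list:
        # Keep only words that are in the word2vec vocabulary (count >= min_count)
        vector = [word2vec_model.wv[word] for word in text
                  if word in word2vec_model.wv.key_to_index]
        if len(vector) > 0:
            vectors.append(np.mean(vector, axis=0))
        else:
            # No known word in this text: use a zero vector instead
            vectors.append(np.zeros(word2vec_model.vector_size, dtype=np.float32))
    return vectors
```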
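`text_to_word2vec` performs mean pooling. Each text becomes the average of the vectors of its in-vocabulary words, and a text with no known words becomes a zero vector. The sketch below reproduces that logic so the result can be checked by hand. It uses a tiny hand-made lookup table, a plain dict standing in for `word2vec_model.wv`. The words and 3-dimensional vectors are invented for illustration.

```python
import numpy as np

# Hypothetical toy embedding table standing in for word2vec_model.wv (dimension 3)
toy_wv = {
    '基金': np.array([1.0, 0.0, 2.0], dtype=np.float32),
    '上涨': np.array([3.0, 2.0, 0.0], dtype=np.float32),
}
DIM = 3

def mean_pool(tokens, wv, dim):
    """Average the vectors of known tokens; return zeros if none are known."""
    known = [wv[t] for t in tokens if t in wv]
    if known:
        return np.mean(known, axis=0)
    return np.zeros(dim, dtype=np.float32)

print(mean_pool(['基金', '上涨', '未知词'], toy_wv, DIM))  # the unknown word is skipped
print(mean_pool(['未知词'], toy_wv, DIM))                  # all unknown: zero vector
```

Because an average does not depend on the order of its terms, `['基金', '上涨']` and `['上涨', '基金']` give the same vector.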

2.1 Inspect the vocabulary

```python
word2vec_model.wv.key_to_index
```

```
{'的': 0,
 '在': 1,
 '了': 2,
 '是': 3,
 '和': 4,
 '也': 5,
 '有': 6,
 '基金': 7,
 '都': 8,
 '我': 9,
 '他': 10,
 ……
```
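In gensim's `key_to_index`, index 0 is the most frequent word. By default, Word2Vec sorts its vocabulary by descending frequency, which is why function words such as 的, 在 and 了 come first. Words seen fewer than `min_count=5` times are dropped. The pure-Python sketch below imitates that ordering on a made-up corpus. It illustrates the idea and is not gensim's internal code.

```python
from collections import Counter

def build_vocab(sentences, min_count):
    """Map each word with count >= min_count to an index, most frequent first."""
    counts = Counter(word for sentence in sentences for word in sentence)
    kept = [(w, c) for w, c in counts.most_common() if c >= min_count]
    return {word: idx for idx, (word, _) in enumerate(kept)}

# Made-up segmented corpus
sentences = [['的', '基金', '的'], ['在', '的', '基金'], ['在', '的', '健康']]
vocab = build_vocab(sentences, min_count=2)
print(vocab)  # '的' (4 occurrences) gets index 0; '健康' (1 occurrence) is dropped
```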

2.2 Inspect a word's embedding

```python
word2vec_model.wv['健康']
```

```
array([-0.01810974, 0.27138963, 0.22368671, 0.15925884, 0.00260239,
       -0.6498546 , 0.04772706, 0.6372838 , -0.0666943 , -0.19555964,
       -0.29694465, -0.7099566 , -0.07624389, 0.20403604, 0.01061103,
       0.0839119 , -0.04758683, -0.22259666, 0.00257784, -0.6900303 ,
       0.10259973, -0.0222414 , 0.19873343, -0.32307413, -0.14821231,
       -0.05052961, -0.40442178, 0.01868534, -0.2937809 , 0.0507537 ,
       0.40648073, -0.10833946, -0.00197444, -0.3136729 , -0.32454553,
       0.22438885, -0.11383332, -0.11252628, -0.20691326, -0.22707234,
       0.2116262 , -0.31386453, -0.30298904, -0.01941648, 0.4644694 ,
       -0.24589385, -0.30516517, -0.21708779, 0.21309872, 0.29561085,
       -0.16977915, -0.08319842, 0.10486396, -0.2473325 , -0.28935805,
       0.34872615, 0.1478753 , 0.05210855, -0.23518556, 0.16296415,
       0.04608041, -0.00315166, 0.09013587, -0.01028902, -0.2992899 ,
       0.19127987, 0.0816482 , 0.2817147 , -0.24951911, 0.40776795,
       -0.03947425, 0.15177304, 0.27037534, -0.04361408, 0.33221805,
       0.06377248, -0.03019366, -0.1347892 , -0.11624625, -0.08422591,
       -0.14234477, -0.14741378, -0.3383527 , 0.44758153, -0.12425947,
       -0.10724418, 0.03614402, 0.3065817 , 0.41921914, 0.11192655,
       0.27908325, -0.02369861, -0.21884899, 0.10293698, 0.37389368,
       0.28169003, 0.13951117, -0.10917827, -0.00591412, 0.25471288],
      dtype=float32)
```

2.3 Check similarity

```python
word2vec_model.wv.most_similar("健康")
```

```
[('作用', 0.9974547028541565),
 ('之间', 0.9972665309906006),
 ('环境', 0.9970947504043579),
 ('符合', 0.9970938563346863),
 ('设计师', 0.9966321587562561),
 ('程度', 0.9965812563896179),
 ('特点', 0.9964777827262878),
 ('优秀', 0.9964598417282104),
 ('所', 0.9962416887283325),
 ('关系', 0.9958899617195129)]
```

Cosine similarity between two words:

```python
word2vec_model.wv.similarity('思想', '健康')
```

```
0.99308807
```
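`similarity` returns the cosine similarity cos(a, b) = a·b / (‖a‖ ‖b‖), which ranges from -1 to 1. A minimal numpy version, checked on hand-picked vectors, is sketched below.

Scores around 0.99 for loosely related pairs such as 健康/设计师 suggest that the vectors are not yet well separated. That is likely a sign of a small corpus or too few training epochs. This is an interpretation, not something the post measured.

```python
import numpy as np

def cosine_similarity(a, b):
    """Cosine of the angle between vectors a and b."""
    a = np.asarray(a, dtype=np.float64)
    b = np.asarray(b, dtype=np.float64)
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))

print(cosine_similarity([1, 0], [2, 0]))    # same direction: 1.0
print(cosine_similarity([1, 0], [0, 3]))    # orthogonal: 0.0
print(cosine_similarity([1, 1], [-1, -1]))  # opposite directions: about -1
```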

2.4 Save the embeddings

```python
word2vec_model.wv.save('word_vector')
```

2.5 Load the embeddings

```python
from gensim.models import KeyedVectors

# Load the saved word vectors; mmap='r' memory-maps the arrays read-only
loaded_wv = KeyedVectors.load('word_vector', mmap='r')
loaded_wv
```

```
<gensim.models.keyedvectors.KeyedVectors at 0x7fb0c125b310>
```

2.6 Visualization

```python
# Visualization
import matplotlib.pyplot as plt
from sklearn.decomposition import PCA

# Font that can render Chinese labels (a macOS font; use an installed CJK font elsewhere)
plt.rcParams['font.sans-serif'] = ['Hiragino Sans GB']
# Render minus signs correctly with this font
plt.rcParams['axes.unicode_minus'] = False

words = list(word2vec_model.wv.key_to_index.keys())
X = word2vec_model.wv[words]

# Project the 100-dimensional vectors onto 2 dimensions
pca = PCA(n_components=2)
result = pca.fit_transform(X)

# Plot only the first 20 words (the most frequent ones)
plt.scatter(result[:20, 0], result[:20, 1])
for i, word in enumerate(words[:20]):
    plt.annotate(word, xy=(result[i, 0], result[i, 1]))
plt.show()
```
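PCA here projects the 100-dimensional word vectors onto the two directions of largest variance. The numpy sketch below does the same thing via an SVD of the centered data, with random data standing in for `word2vec_model.wv[words]`. sklearn's `PCA` should give the same coordinates up to the sign of each component.

```python
import numpy as np

def pca_2d(X):
    """Project the rows of X onto their first two principal components."""
    X_centered = X - X.mean(axis=0)
    # Rows of Vt are the principal directions, sorted by decreasing singular value
    _, _, Vt = np.linalg.svd(X_centered, full_matrices=False)
    return X_centered @ Vt[:2].T

rng = np.random.default_rng(0)
X = rng.normal(size=(50, 100))   # 50 stand-in "word vectors" of dimension 100
coords = pca_2d(X)
print(coords.shape)              # (50, 2)
```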

3. Data processing

```python
import numpy as np
import torch
from sklearn.preprocessing import LabelEncoder
from torch.utils.data import DataLoader, TensorDataset

# Turn every text into its averaged word2vec vector
X_train_word2vec = text_to_word2vec(X_train_cut_list)
X_test_word2vec = text_to_word2vec(X_test_cut_list)

# Convert the lists of vectors into numpy arrays
X_train_word2vec = np.array(X_train_word2vec)
X_test_word2vec = np.array(X_test_word2vec)

# Encode the labels as integers
label_encoder = LabelEncoder()
y_train_encoded = label_encoder.fit_transform(y_train)
y_test_encoded = label_encoder.transform(y_test)

# Shape (num_samples, seq_len=1, input_dim) for an LSTM with batch_first=True
input_dim = 100
X_train_tensor = torch.tensor(X_train_word2vec, dtype=torch.float32).view(-1, 1, input_dim)
y_train_tensor = torch.tensor(y_train_encoded, dtype=torch.long)
X_test_tensor = torch.tensor(X_test_word2vec, dtype=torch.float32).view(-1, 1, input_dim)
y_test_tensor = torch.tensor(y_test_encoded, dtype=torch.long)

# Create the TensorDatasets and DataLoaders
train_dataset = TensorDataset(X_train_tensor, y_train_tensor)
test_dataset = TensorDataset(X_test_tensor, y_test_tensor)
train_loader = DataLoader(dataset=train_dataset, batch_size=32, shuffle=True)
test_loader = DataLoader(dataset=test_dataset, batch_size=32, shuffle=False)
```

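This step is straightforward, so I won't go into detail. One consequence is worth noting, though. Each text is reduced to a single averaged vector, so every sample is a sequence of length 1. The LSTM therefore processes just one time step per text, and word order is not used.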
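The shapes can be checked without the real data. The sketch below uses numpy in place of torch, with `reshape(-1, 1, input_dim)` playing the role of `.view(-1, 1, input_dim)`. It uses `np.unique` to mimic `LabelEncoder`, which assigns integers to the sorted unique labels. The labels and sizes are invented.

```python
import numpy as np

input_dim = 100
num_texts = 4
# Stand-in for X_train_word2vec: one averaged 100-dim vector per text
X = np.zeros((num_texts, input_dim), dtype=np.float32)

# (num_texts, input_dim) -> (batch, seq_len=1, input_dim)
X_seq = X.reshape(-1, 1, input_dim)
print(X_seq.shape)  # (4, 1, 100)

# Hypothetical string labels: classes are sorted, then each label is replaced by its index
y = np.array(['sports', 'finance', 'sports', 'tech'])
classes, y_encoded = np.unique(y, return_inverse=True)
print(classes, y_encoded)
```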

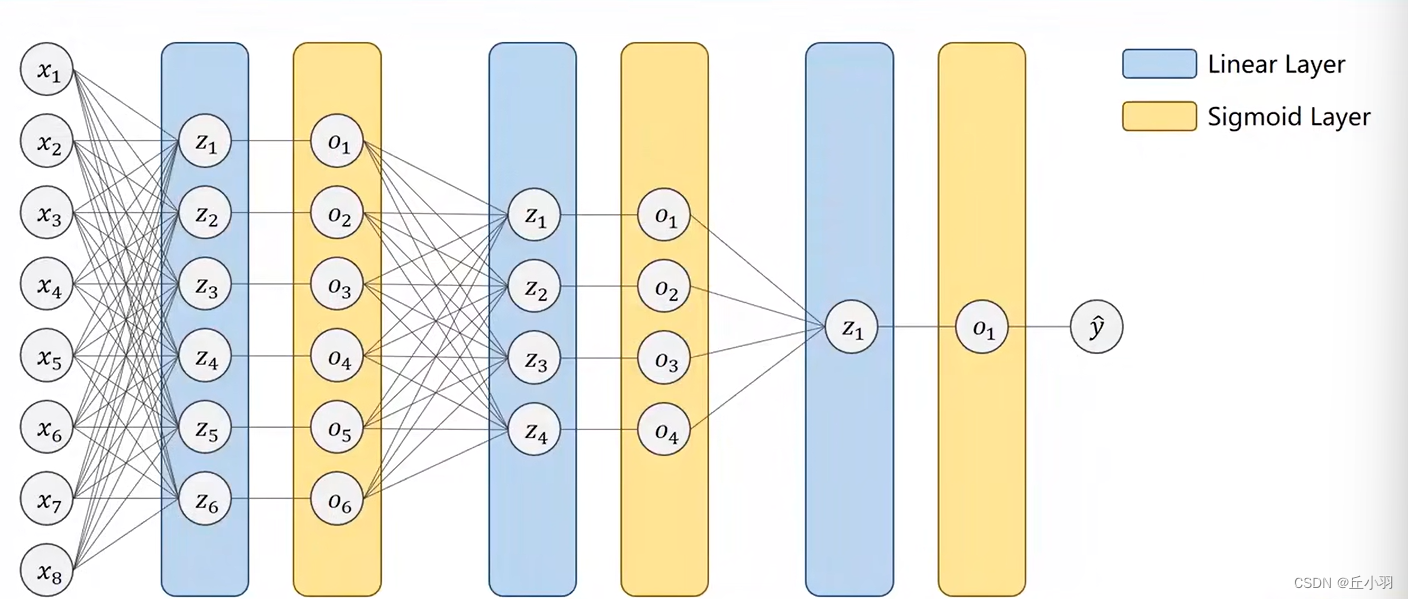

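4. Model definition

```python
import torch
import torch.nn as nn

# Define the LSTM model
class LSTMClassifier(nn.Module):
    def __init__(self, input_dim, hidden_dim, output_dim, n_layers, bidirectional, dropout):
        super().__init__()
        # nn.LSTM applies its own dropout only between stacked layers,
        # so it only has an effect (and does not warn) when n_layers > 1
        self.rnn = nn.LSTM(input_dim, hidden_dim, num_layers=n_layers,
                           bidirectional=bidirectional, dropout=dropout, batch_first=True)
        # A bidirectional LSTM concatenates the forward and backward states
        self.fc = nn.Linear(hidden_dim * 2 if bidirectional else hidden_dim, output_dim)
        self.dropout = nn.Dropout(dropout)

    def forward(self, x):
        # x: (batch, seq_len, input_dim); dropout is applied directly to the input vectors
        embedded = self.dropout(x)
        # Unlike nn.RNN, nn.LSTM also returns the cell state
        output, (hidden, cell) = self.rnn(embedded)
        if self.rnn.bidirectional:
            # hidden[-2]: last layer, forward; hidden[-1]: last layer, backward
            hidden = self.dropout(torch.cat((hidden[-2, :, :], hidden[-1, :, :]), dim=1))
        else:
            hidden = self.dropout(hidden[-1, :, :])
        return self.fc(hidden)
```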

For a detailed walkthrough, see the RNN code explanation.

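The only difference worth pointing out is the line `output, (hidden, cell) = self.rnn(embedded)`.

A plain RNN has no cell state. `nn.LSTM`, by contrast, returns its final hidden state and cell state as a tuple `(hidden, cell)`, so `cell` has to be unpacked here.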
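To make the extra `cell` output concrete, here is a single LSTM time step written out in numpy. It follows the standard LSTM equations. The input, forget, cell-candidate and output gates are stacked in the same i, f, g, o order that PyTorch documents for the `nn.LSTM` weights. The weights are random and the sizes are made up. This is a sketch for intuition, not PyTorch's implementation.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def lstm_step(x, h_prev, c_prev, W_ih, W_hh, b):
    """One LSTM time step. W_ih: (4H, D), W_hh: (4H, H), b: (4H,)."""
    gates = W_ih @ x + W_hh @ h_prev + b
    i, f, g, o = np.split(gates, 4)   # input, forget, candidate, output
    i, f, o = sigmoid(i), sigmoid(f), sigmoid(o)
    g = np.tanh(g)
    c = f * c_prev + i * g            # new cell state (a plain RNN has no equivalent)
    h = o * np.tanh(c)                # new hidden state
    return h, c

D, H = 100, 8                         # input size and hidden size (illustrative)
rng = np.random.default_rng(0)
W_ih = rng.normal(scale=0.1, size=(4 * H, D))
W_hh = rng.normal(scale=0.1, size=(4 * H, H))
b = np.zeros(4 * H)

x = rng.normal(size=D)
h, c = lstm_step(x, np.zeros(H), np.zeros(H), W_ih, W_hh, b)
print(h.shape, c.shape)  # (8,) (8,)
```

A plain RNN step would compute only `h = np.tanh(W_ih @ x + W_hh @ h_prev + b)` (with `W_ih` of shape `(H, D)`) and carry no `c`. That is why only the LSTM version unpacks `(hidden, cell)`.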

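In the model, `self.dropout(hidden[-1,:,:])` applies dropout regularization to the hidden state of the recurrent layer (`self.rnn`) at the last time step.

Let's go through this line piece by piece: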

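- `hidden`: one of the outputs of the LSTM layer, returned together with `cell`.
  - It holds the hidden state at the last time step only, one entry for each layer and direction.
  - Its shape is therefore `(num_layers * num_directions, batch_size, hidden_dim)`, where `num_directions` is 1 (unidirectional) or 2 (bidirectional).
  - The hidden states of the top layer at every time step are in `output`, not in `hidden`.
  - `batch_first=True` changes the layout of the input and of `output`, but not the shape of `hidden`.
- `hidden[-1,:,:]`: index `-1` selects the last entry along the first dimension.
  - For a unidirectional LSTM, this is the final hidden state of the last (top) layer for every sample in the batch, with shape `(batch_size, hidden_dim)`.
  - For a bidirectional LSTM, `hidden[-1]` is the backward direction of the last layer and `hidden[-2]` is its forward direction. This is why the bidirectional branch concatenates the two.
- `self.dropout(...)`: applies dropout, a regularization technique that helps prevent overfitting.
  - During training, it randomly sets elements of its input to 0 and scales the remaining elements by 1/(1-p), which reduces the model's reliance on individual features.
  - In evaluation mode (`model.eval()`), it does nothing.
  - `self.dropout` is the `nn.Dropout(dropout)` instance defined in `__init__`, where `dropout` is the probability p that each element is zeroed.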
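The indexing can be checked with a dummy array of the documented shape `(num_layers * num_directions, batch_size, hidden_dim)`. PyTorch documents that `h_n` can be viewed as `(num_layers, num_directions, batch, hidden_size)`, with direction 0 being forward. This numpy sketch fills each slice with its own index so the selected slices are easy to identify. The sizes are arbitrary.

```python
import numpy as np

num_layers, num_directions, batch_size, hidden_dim = 2, 2, 3, 4

# Dummy h_n in which every element of slice k equals k.
# Slice k belongs to layer k // num_directions and direction k % num_directions (0 = forward).
hidden = np.repeat(np.arange(num_layers * num_directions, dtype=float),
                   batch_size * hidden_dim).reshape(num_layers * num_directions,
                                                    batch_size, hidden_dim)
print(hidden.shape)                        # (4, 3, 4)

forward_top = hidden[-2, :, :]             # slice 2: last layer, forward direction
backward_top = hidden[-1, :, :]            # slice 3: last layer, backward direction
features = np.concatenate((forward_top, backward_top), axis=1)
print(features.shape)                      # (3, 8): hidden_dim * 2 inputs for self.fc

# The same slices through the (num_layers, num_directions, batch, hidden) view
by_layer = hidden.reshape(num_layers, num_directions, batch_size, hidden_dim)
assert np.array_equal(by_layer[-1, 0], forward_top)
assert np.array_equal(by_layer[-1, 1], backward_top)
```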

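In summary, `self.dropout(hidden[-1,:,:])` applies dropout to the hidden state of the last time step to reduce overfitting. The resulting hidden state is fed into the fully connected layer `self.fc`, which produces the final classification.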
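The two modes of `nn.Dropout` can be sketched in numpy as "inverted dropout". During training, each element is zeroed with probability p and the survivors are scaled by 1/(1-p), so the expected value is unchanged. In evaluation mode, the input passes through untouched. This is a simplified stand-in for illustration, not PyTorch's code.

```python
import numpy as np

def dropout(x, p, training, rng):
    """Inverted dropout: zero each element with prob. p, scale the rest by 1/(1-p)."""
    if not training or p == 0.0:
        return x
    keep = rng.random(x.shape) >= p          # True with probability 1 - p
    return x * keep / (1.0 - p)

rng = np.random.default_rng(0)
x = np.ones((1000, 8))
out_train = dropout(x, p=0.5, training=True, rng=rng)
out_eval = dropout(x, p=0.5, training=False, rng=rng)
print(np.unique(out_train))       # only 0.0 and 2.0 occur
print(out_train.mean())           # close to 1.0
print(np.array_equal(out_eval, x))
```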

5. Training

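```python
import torch
import torch.nn as nn

# model, criterion and optimizer come from the RNN post; the values below are
# illustrative assumptions, not necessarily the ones that produced the log below
model = LSTMClassifier(input_dim=input_dim, hidden_dim=128,
                       output_dim=len(label_encoder.classes_),
                       n_layers=2, bidirectional=True, dropout=0.5)
criterion = nn.CrossEntropyLoss()
optimizer = torch.optim.Adam(model.parameters(), lr=0.001)

# Train the model
num_epochs = 100
for epoch in range(num_epochs):
    model.train()  # enables dropout
    for inputs, labels in train_loader:
        # Clear the gradients
        optimizer.zero_grad()
        # Forward pass
        outputs = model(inputs)
        # Compute the loss
        loss = criterion(outputs, labels)
        # Backward pass
        loss.backward()
        # Update the parameters
        optimizer.step()
    # This is the loss of the last mini-batch of the epoch only, hence the fluctuation
    print(f'Epoch [{epoch+1}/{num_epochs}], Loss: {loss.item()}')

# Evaluate the model on the test set
model.eval()  # disables dropout
with torch.no_grad():
    correct = 0
    total = 0
    for inputs, labels in test_loader:
        outputs = model(inputs)
        # The predicted class is the index of the largest logit
        _, predicted = torch.max(outputs, 1)
        total += labels.size(0)
        correct += (predicted == labels).sum().item()
    print(f'Accuracy of the model on the test set: {100 * correct / total}%')
```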
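The evaluation loop takes the index of the largest logit along dimension 1 as the predicted class. `torch.max(..., 1)` returns both the maximum values and their indices. The loop then counts how many predictions match the labels. Here is the same arithmetic in numpy on a made-up batch of logits.

```python
import numpy as np

# Hypothetical logits for 4 samples and 3 classes, plus their true labels
logits = np.array([[2.0, 0.1, -1.0],
                   [0.3, 0.2, 1.5],
                   [0.0, 3.0, 0.5],
                   [1.0, 1.2, 0.9]])
labels = np.array([0, 2, 1, 0])

predicted = logits.argmax(axis=1)   # plays the role of the indices from torch.max(outputs, 1)
correct = int((predicted == labels).sum())
total = labels.shape[0]
print(predicted, f'{100 * correct / total}%')
```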

```
……
Epoch [95/100], Loss: 0.6946447491645813
Epoch [96/100], Loss: 0.5245355367660522
Epoch [97/100], Loss: 0.9825029969215393
Epoch [98/100], Loss: 0.6163020730018616
Epoch [99/100], Loss: 0.6698494553565979
Epoch [100/100], Loss: 0.48705795407295227
Accuracy of the model on the test set: 80.5%
```
