The full PyTorch error is:

RuntimeError: one of the variables needed for gradient computation has been modified by an inplace operation: [torch.FloatTensor [128, 1]], which is output 0 of AsStridedBackward0, is at version 2; expected version 1 instead. Hint: enable anomaly detection to find the operation that failed to compute its gradient, with torch.autograd.set_detect_anomaly(True).

I ran into this while implementing MADDPG, a reinforcement learning algorithm that uses two networks, an actor and a critic.

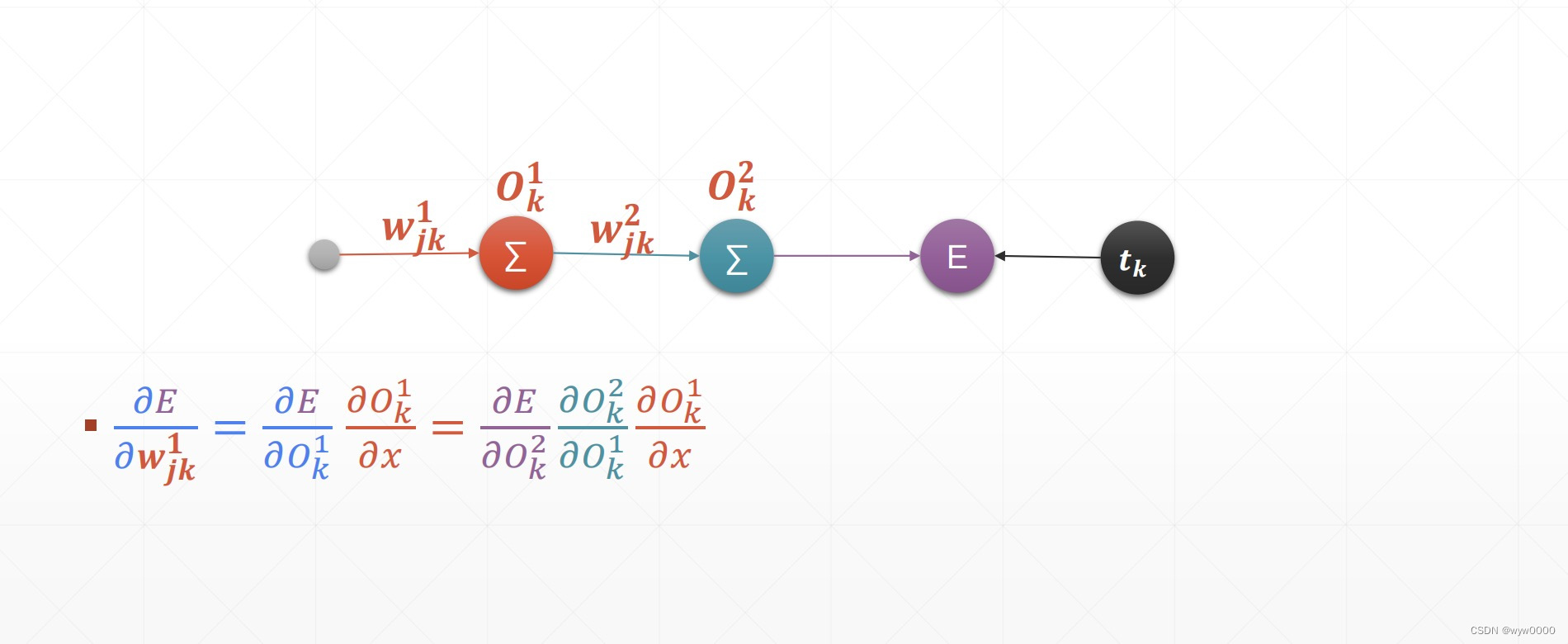

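After some debugging, I found that once the critic loss was computed I did not run the critic's backward pass; instead I went straight on to computing the actor loss. Starting backpropagation only after both losses had been computed triggers the error above.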
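A minimal sketch of one way this ordering can fail. The network sizes, the SGD optimizers, and the random data are all hypothetical stand-ins, not the original MADDPG code, and it assumes the critic's optimizer step runs between the two backward calls. Because the actor loss graph was built before that step, it still expects the old critic weights, which `step()` has since modified in place.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)

# Hypothetical toy networks and data; sizes are illustrative only.
actor = nn.Linear(4, 2)
critic = nn.Sequential(nn.Linear(6, 128), nn.ReLU(), nn.Linear(128, 1))
actor_opt = torch.optim.SGD(actor.parameters(), lr=0.01)
critic_opt = torch.optim.SGD(critic.parameters(), lr=0.01)

states = torch.randn(32, 4)
actions = torch.randn(32, 2)
targets = torch.randn(32, 1)

# Both losses are built first, so both graphs save the current critic weights.
critic_loss = F.mse_loss(critic(torch.cat([states, actions], dim=1)), targets)
actor_loss = -critic(torch.cat([states, actor(states)], dim=1)).mean()

critic_opt.zero_grad()
critic_loss.backward()
critic_opt.step()  # updates the critic weights in place

error_message = None
try:
    actor_opt.zero_grad()
    actor_loss.backward()  # this graph still expects the pre-step critic weights
except RuntimeError as exc:
    error_message = str(exc)

print(error_message)
```

The `[128, 1]` tensor named in the error has the same shape as the transposed weight of the critic's final `Linear(128, 1)` layer in this sketch, which is consistent with the optimizer step being the in-place modification.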

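The fix is to handle the two networks separately: wrap each network's loss computation and its backward call in its own self-contained step, so that one network's update is finished before the other network's loss is computed.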
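The fix can be sketched as follows. The helper names `update_critic` and `update_actor`, the toy networks, and the data are assumptions for illustration, not the original code. Each function computes its loss, runs backward, and steps its optimizer before the other network's loss is built.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)

# Hypothetical toy networks and data; sizes are illustrative only.
actor = nn.Linear(4, 2)
critic = nn.Sequential(nn.Linear(6, 128), nn.ReLU(), nn.Linear(128, 1))
actor_opt = torch.optim.SGD(actor.parameters(), lr=0.01)
critic_opt = torch.optim.SGD(critic.parameters(), lr=0.01)

states = torch.randn(32, 4)
actions = torch.randn(32, 2)
targets = torch.randn(32, 1)


def update_critic(states, actions, targets):
    """Compute the critic loss, backpropagate, and step, all in one place."""
    critic_loss = F.mse_loss(critic(torch.cat([states, actions], dim=1)), targets)
    critic_opt.zero_grad()
    critic_loss.backward()
    critic_opt.step()
    return critic_loss.item()


def update_actor(states):
    """Build the actor loss after the critic step, then backpropagate and step."""
    actor_loss = -critic(torch.cat([states, actor(states)], dim=1)).mean()
    actor_opt.zero_grad()
    actor_loss.backward()
    actor_opt.step()
    return actor_loss.item()


critic_loss_value = update_critic(states, actions, targets)
actor_loss_value = update_actor(states)
print(critic_loss_value, actor_loss_value)
```

Note that `actor_loss.backward()` also accumulates gradients into the critic's parameters; in this sketch they are cleared by `critic_opt.zero_grad()` at the start of the next critic update.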