ok,在上一张中,重点针对processIMU这个函数进行了讲解

实际上

if(USE_IMU)

{

if(!initFirstPoseFlag)

initFirstIMUPose(accVector);///IMU重力对齐,与世界坐标系对齐

for(size_t i = 0; i < accVector.size(); i++)

{

///实际上得到的时间戳的顺序是 t0<accVector[0]<accVector[end]<t1

double dt;

if(i == 0)

dt = accVector[i].first - prevTime;///第一个惯性数据与图像之间的时间差

else if (i == accVector.size() - 1)///最后个惯性数据与图像之间的时间差

dt = curTime - accVector[i - 1].first;

else

dt = accVector[i].first - accVector[i - 1].first;///中间的惯性数据之间的时间差

///预积分首先看下该函数的入参

//

// t : 为当前数据的时间戳

// dt : 为相邻两个IMU数据之间的时间间隔

// linear_acceleration : 加速度

// angular_velocity : 角速度

processIMU(accVector[i].first, dt, accVector[i].second, gyrVector[i].second);

}

}

实际上它处于while(1)的循环当中,对 for(size_t i = 0; i < accVector.size(); i++) 这个accvector里面的长度进行积分,所以得到的预计分实际上是针对两帧图像之间的时间,但是里面包含的惯性的数据仍然在作世界坐标系下的累积积分

Rs[j] *= Utility::deltaQ(un_gyr * dt).toRotationMatrix();

Vector3d un_acc_1 = Rs[j] * (linear_acceleration - Bas[j]) - g;

Vector3d un_acc = 0.5 * (un_acc_0 + un_acc_1);

Ps[j] += dt * Vs[j] + 0.5 * dt * dt * un_acc;

Vs[j] += dt * un_acc;

此时的 RS[j] 还表示的是上一个时刻的旋转,还没有更新,注意这里的 j 表示的图像帧的数量。

、、

今天

在这里插入代码片///2.1.7

void Estimator::processMeasurements()

{

while (1)

{

//printf("process measurments\n");

pair<double, map<int, vector<pair<int, Eigen::Matrix<double, 7, 1> > > > > feature;

vector<pair<double, Eigen::Vector3d>> accVector, gyrVector;

if(!featureBuf.empty())

{

feature = featureBuf.front();

curTime = feature.first + td;

while(1)

{

if ((!USE_IMU || IMUAvailable(feature.first + td)))

break;

else

{

printf("wait for imu ... \n");

if (! MULTIPLE_THREAD)

return;

std::chrono::milliseconds dura(5);

std::this_thread::sleep_for(dura);///睡眠5ms

}

}

mBuf.lock();

if(USE_IMU)得到图像时间之间的IMU

getIMUInterval(prevTime, curTime, accVector, gyrVector);

///prevTime为上一帧图像的时间,curTime为当前帧图像的时间,

///accVector与gyrVector为获取图像帧之间的IMU数据

featureBuf.pop();///删掉上一帧图像的数据

mBuf.unlock();

if(USE_IMU)

{

if(!initFirstPoseFlag)

initFirstIMUPose(accVector);///IMU重力对齐,与世界坐标系对齐

for(size_t i = 0; i < accVector.size(); i++)

{

///实际上得到的时间戳的顺序是 t0<accVector[0]<accVector[end]<t1

double dt;

if(i == 0)

dt = accVector[i].first - prevTime;///第一个惯性数据与图像之间的时间差

else if (i == accVector.size() - 1)///最后个惯性数据与图像之间的时间差

dt = curTime - accVector[i - 1].first;

else

dt = accVector[i].first - accVector[i - 1].first;///中间的惯性数据之间的时间差

///预积分首先看下该函数的入参

//

// t : 为当前数据的时间戳

// dt : 为相邻两个IMU数据之间的时间间隔

// linear_acceleration : 加速度

// angular_velocity : 角速度

processIMU(accVector[i].first, dt, accVector[i].second, gyrVector[i].second);

}

}

processImage(feature.second, feature.first);

prevTime = curTime;

printStatistics(*this, 0);

std_msgs::Header header;

header.frame_id = "world";

header.stamp = ros::Time(feature.first);

pubOdometry(*this, header);

pubKeyPoses(*this, header);

pubCameraPose(*this, header);

pubPointCloud(*this, header);

pubKeyframe(*this);

pubTF(*this, header);

}

if (! MULTIPLE_THREAD)

break;

std::chrono::milliseconds dura(2);

std::this_thread::sleep_for(dura);

}

}

首先是processImage(feature.second, feature.first);

///2.1.12

void Estimator::processImage(const map<int, vector<pair<int, Eigen::Matrix<double, 7, 1>>>> &image, const double header)

{

ROS_DEBUG("new image coming ------------------------------------------");

ROS_DEBUG("Adding feature points %lu", image.size());

if (f_manager.addFeatureCheckParallax(frame_count, image, td))

{

marginalization_flag = MARGIN_OLD;

//printf("keyframe\n");

}

else

{

marginalization_flag = MARGIN_SECOND_NEW;

//printf("non-keyframe\n");

}

ROS_DEBUG("%s", marginalization_flag ? "Non-keyframe" : "Keyframe");

ROS_DEBUG("Solving %d", frame_count);

ROS_DEBUG("number of feature: %d", f_manager.getFeatureCount());

Headers[frame_count] = header;

ImageFrame imageframe(image, header);

imageframe.pre_integration = tmp_pre_integration;

all_image_frame.insert(make_pair(header, imageframe));

tmp_pre_integration = new IntegrationBase{

acc_0, gyr_0, Bas[frame_count], Bgs[frame_count]};

if(ESTIMATE_EXTRINSIC == 2)

{

ROS_INFO("calibrating extrinsic param, rotation movement is needed");

if (frame_count != 0)

{

vector<pair<Vector3d, Vector3d>> corres = f_manager.getCorresponding(frame_count - 1, frame_count);

Matrix3d calib_ric;

if (initial_ex_rotation.CalibrationExRotation(corres, pre_integrations[frame_count]->delta_q, calib_ric))

{

ROS_WARN("initial extrinsic rotation calib success");

ROS_WARN_STREAM("initial extrinsic rotation: " << endl << calib_ric);

ric[0] = calib_ric;

RIC[0] = calib_ric;

ESTIMATE_EXTRINSIC = 1;

}

}

}

if (solver_flag == INITIAL)

{

// monocular + IMU initilization

if (!(STEREO || DEPTH) && USE_IMU)

{

if (frame_count == WINDOW_SIZE)

{

bool result = false;

if(ESTIMATE_EXTRINSIC != 2 && (header - initial_timestamp) > 0.1)

{

result = initialStructure();

initial_timestamp = header;

}

if(result)

{

solver_flag = NON_LINEAR;

optimization();

slideWindow();

ROS_INFO("Initialization finish!");

}

else

slideWindow();

}

}

// stereo + IMU initilization

else if((STEREO || DEPTH) && USE_IMU)

{

f_manager.initFramePoseByPnP(frame_count, Ps, Rs, tic, ric);

// f_manager.triangulate(frame_count, Ps, Rs, tic, ric);

if (frame_count == WINDOW_SIZE)

{

map<double, ImageFrame>::iterator frame_it;

int i = 0;

for (frame_it = all_image_frame.begin(); frame_it != all_image_frame.end(); frame_it++)

{

frame_it->second.R = Rs[i];

frame_it->second.T = Ps[i];

i++;

}

bool result = false;

if(ESTIMATE_EXTRINSIC != 2 && (header - initial_timestamp) > 0.1)

{

result = 1;//visualInitialAlignWithDepth();

initial_timestamp = header;

}

if(result)

{

solveGyroscopeBias(all_image_frame,Bgs);

for (int i = 0; i <= WINDOW_SIZE; i++)

{

pre_integrations[i]->repropagate(Vector3d::Zero(), Bgs[i]);

}

solver_flag = NON_LINEAR;

optimization();

slideWindow();

ROS_INFO("Initialization finish!");

}

else

slideWindow();

}

}

// stereo only initilization

else if((STEREO || DEPTH) && !USE_IMU)

{

f_manager.initFramePoseByPnP(frame_count, Ps, Rs, tic, ric);

f_manager.triangulate(frame_count, Ps, Rs, tic, ric);

optimization();

if(frame_count == WINDOW_SIZE)

{

solver_flag = NON_LINEAR;

slideWindow();

ROS_INFO("Initialization finish!");

}

}

if(frame_count < WINDOW_SIZE)

{

frame_count++;

int prev_frame = frame_count - 1;

Ps[frame_count] = Ps[prev_frame];

Vs[frame_count] = Vs[prev_frame];

Rs[frame_count] = Rs[prev_frame];

Bas[frame_count] = Bas[prev_frame];

Bgs[frame_count] = Bgs[prev_frame];

}

}

else

{

TicToc t_solve;

if(!USE_IMU)

f_manager.initFramePoseByPnP(frame_count, Ps, Rs, tic, ric);

f_manager.triangulate(frame_count, Ps, Rs, tic, ric);

optimization();

set<int> removeIndex;

outliersRejection(removeIndex);

f_manager.removeOutlier(removeIndex);

if (! MULTIPLE_THREAD)

{

featureTracker.removeOutliers(removeIndex);

predictPtsInNextFrame();

}

ROS_DEBUG("solver costs: %fms", t_solve.toc());

if (failureDetection())

{

ROS_WARN("failure detection!");

failure_occur = 1;

clearState();

setParameter();

ROS_WARN("system reboot!");

return;

}

slideWindow();

f_manager.removeFailures();

// prepare output of VINS

key_poses.clear();

for (int i = 0; i <= WINDOW_SIZE; i++)

key_poses.push_back(Ps[i]);

last_R = Rs[WINDOW_SIZE];

last_P = Ps[WINDOW_SIZE];

last_R0 = Rs[0];

last_P0 = Ps[0];

updateLatestStates();

}

}

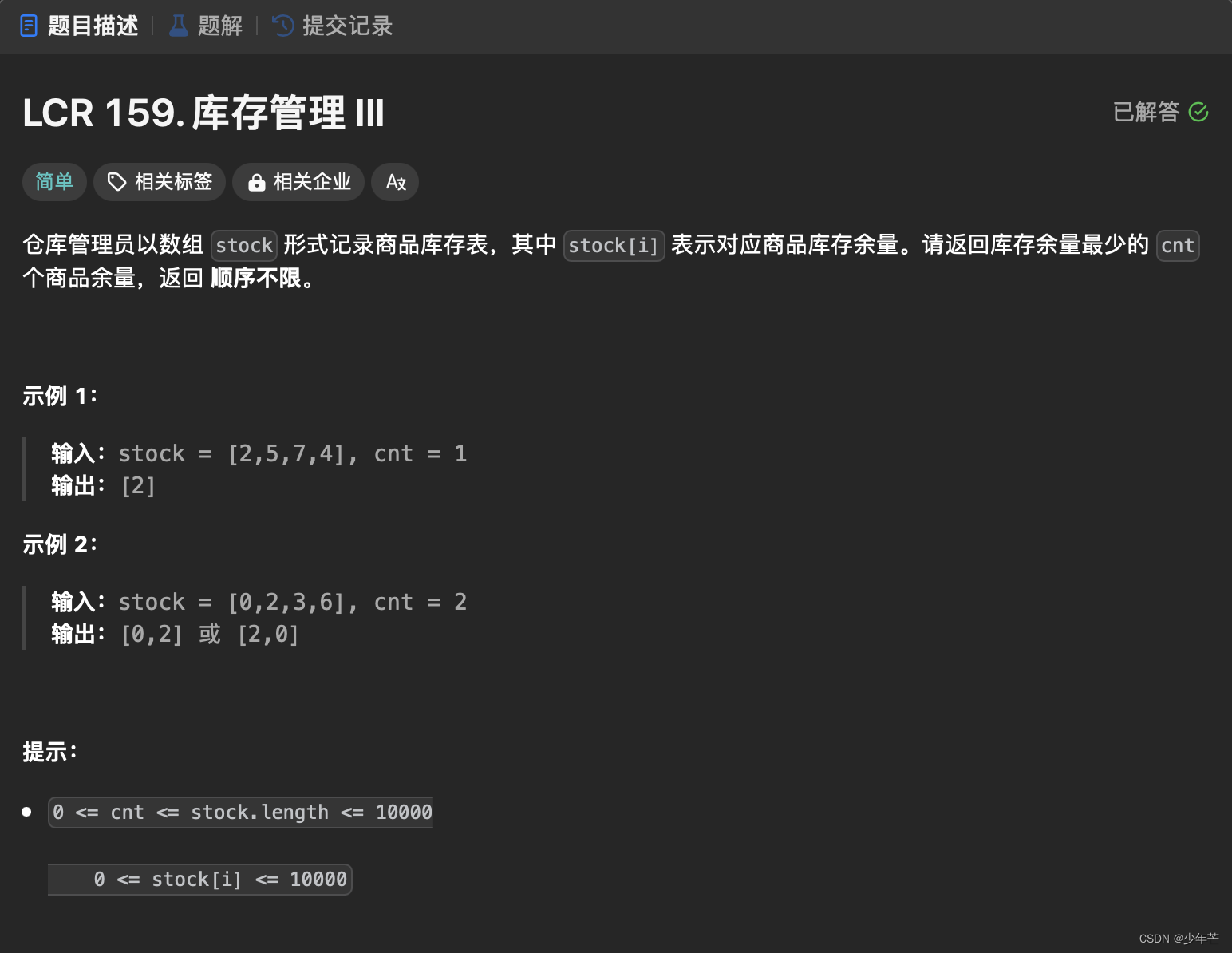

这个部分很长,首先看到的是这个函数,该函数在vins_estimator文件夹下的feature_manager.cpp文件中。其主要功能是根据视差判断其是否为关键帧。

///6.1.6

bool FeatureManager::addFeatureCheckParallax(int frame_count, const map<int, vector<pair<int, Eigen::Matrix<double, 7, 1>>>> &image, double td)

{

ROS_DEBUG("input feature: %d", (int)image.size());

ROS_DEBUG("num of feature: %d", getFeatureCount());

double parallax_sum = 0;

int parallax_num = 0;

last_track_num = 0;

last_average_parallax = 0;

new_feature_num = 0;

long_track_num = 0;

for (auto &id_pts : image)

{

FeaturePerFrame f_per_fra(id_pts.second[0].second, td);

assert(id_pts.second[0].first == 0);

if(id_pts.second.size() == 2)

{

f_per_fra.rightObservation(id_pts.second[1].second);

assert(id_pts.second[1].first == 1);

}

int feature_id = id_pts.first;

auto it = find_if(feature.begin(), feature.end(), [feature_id](const FeaturePerId &it)

{

return it.feature_id == feature_id;

});

if (it == feature.end())

{

feature.push_back(FeaturePerId(feature_id, frame_count));

feature.back().feature_per_frame.push_back(f_per_fra);

new_feature_num++;

}

else if (it->feature_id == feature_id)

{

it->feature_per_frame.push_back(f_per_fra);

last_track_num++;

if( it-> feature_per_frame.size() >= 4)

long_track_num++;

}

}

//if (frame_count < 2 || last_track_num < 20)

//if (frame_count < 2 || last_track_num < 20 || new_feature_num > 0.5 * last_track_num)

if (frame_count < 2 || last_track_num < 20 || long_track_num < 40 || new_feature_num > 0.5 * last_track_num)

return true;

for (auto &it_per_id : feature)

{

if (it_per_id.start_frame <= frame_count - 2 &&

it_per_id.start_frame + int(it_per_id.feature_per_frame.size()) - 1 >= frame_count - 1)

{

parallax_sum += compensatedParallax2(it_per_id, frame_count);

parallax_num++;

}

}

if (parallax_num == 0)

{

return true;

}

else

{

ROS_DEBUG("parallax_sum: %lf, parallax_num: %d", parallax_sum, parallax_num);

ROS_DEBUG("current parallax: %lf", parallax_sum / parallax_num * FOCAL_LENGTH);

last_average_parallax = parallax_sum / parallax_num * FOCAL_LENGTH;

return parallax_sum / parallax_num >= MIN_PARALLAX;

}

}

当然第二行就调用了

///6.1.5

int FeatureManager::getFeatureCount()

{

int cnt = 0;

for (auto &it : feature)// 遍历feature

{

it.used_num = it.feature_per_frame.size(); // 所有特征点被观测到的帧数

// 如果该特征点有4帧以上观测到了

if (it.used_num >= 4)

{

cnt++;// 这个特征点是有效的

}

}

return cnt;

}

实际上这里对有效特征点进行了计数,对所有特征点遍历,在不同观测帧上的观测的次数大于4,认为是有效帧。

接下来是对每一个特征点进行比较

/// _point 每帧的特征点[x,y,z,u,v,vx,vy]和td IMU和cam同步时间差

///const map<int, vector<pair<int, Eigen::Matrix<double, 7, 1>>>> &image

///id_pts.second[0].second可以认为是image的map类型中的第id_pts个点的vector的第0个数的igen::Matrix<double, 7, 1>

FeaturePerFrame f_per_fra(id_pts.second[0].second, td);

这个调用了

class FeaturePerFrame //每一个特征点的在一张图像中属性 point

{

public:

FeaturePerFrame(const Eigen::Matrix<double, 7, 1> &_point, double td) //_point:[x,y,z,u,v,vx,vy]

{

point.x() = _point(0);

point.y() = _point(1);

point.z() = _point(2); //特征点归一化坐标、特征点的像素坐标、特征点的跟踪速度、时间延迟

uv.x() = _point(3);

uv.y() = _point(4);

velocity.x() = _point(5);

velocity.y() = _point(6);

cur_td = td; //td IMU和cam同步时间差

}

double cur_td; //imu-camera的不同步时的相差时间

Vector3d point; //特征点空间坐标

Vector2d uv; //特征点映射到该帧上的图像坐标

Vector2d velocity; //特征点的跟踪速度

double z; // 特征点的深度

bool is_used; // 是否被用了

double parallax; // 视差 ??? not used

MatrixXd A;//变换矩阵

VectorXd b;

double dep_gradient; //not used ???

};

从英文上来看FeaturePerFrame ,每一帧的特征,= 每一个特征点的在一张图像中属性。

///id_pts.second[0].second可以认为是image的map类型中的第id_pts个点的vector的第0个数的Eigen::Matrix<double, 7, 1>