I. Contents

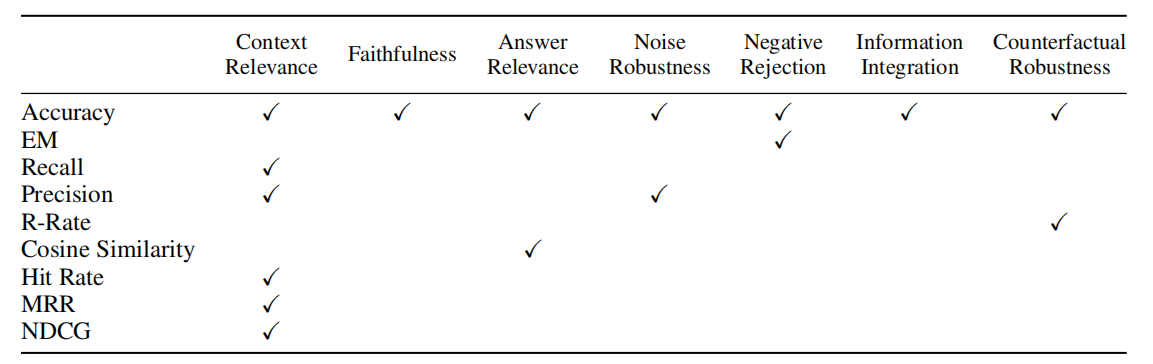

1 Evaluating with the official evaluator

2 Shared OpenAI keys

3 Generating an embedding training set with GPT

4 Fine-tuning the sentence_transformer model

5 Evaluating the sentence_transformer model

II. Implementation

Official notebook: https://github.com/run-llama/finetune-embedding/blob/main/evaluate.ipynb

1. Evaluating with the official evaluator

Dataset format:

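datasets = {
    "corpus": {uuid1: doc1, uuid2: doc2, uuid3: doc3},  # document id -> document text
    "queries": {uuid1: question, uuid2: question, ...},  # query id -> question text
    "relevant_docs": {uuid1: [answer uuid], uuid2: [answer uuid], uuid3: [answer uuid]},  # query id -> ids of the documents that answer it
}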
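To make the format concrete, here is a small sketch that builds a toy dataset in this layout and checks that every query points at a document that exists in the corpus. The ids, the texts, and the helper name `check_dataset` are made up for illustration; they are not part of the official notebook.

```python
def check_dataset(dataset):
    """Return a list of problems found in a {corpus, queries, relevant_docs} dataset."""
    corpus = dataset["corpus"]
    queries = dataset["queries"]
    relevant_docs = dataset["relevant_docs"]

    problems = []
    for query_id in queries:
        doc_ids = relevant_docs.get(query_id)
        if not doc_ids:
            problems.append(f"query {query_id} has no relevant documents")
            continue
        for doc_id in doc_ids:
            if doc_id not in corpus:
                problems.append(f"query {query_id} points at unknown document {doc_id}")
    return problems


# Hypothetical toy data: two text chunks and one question per chunk
toy_dataset = {
    "corpus": {
        "doc-1": "Substations can be monitored remotely through video links.",
        "doc-2": "Document knowledge bases help engineers find procedures.",
    },
    "queries": {
        "q-1": "How can a substation be monitored?",
        "q-2": "What helps engineers find procedures?",
    },
    "relevant_docs": {
        "q-1": ["doc-1"],
        "q-2": ["doc-2"],
    },
}

print(check_dataset(toy_dataset))
```

An empty list means the three dictionaries are consistent with each other, which is what the evaluator and the training loop below both rely on.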

import os

import pandas as pd
from sentence_transformers import SentenceTransformer
from sentence_transformers.evaluation import InformationRetrievalEvaluator


def evaluate_st(
    dataset,
    model_id,
    name,
):
    corpus = dataset['corpus']
    queries = dataset['queries']
    relevant_docs = dataset['relevant_docs']

    # Evaluator that computes retrieval metrics for these queries against the corpus
    evaluator = InformationRetrievalEvaluator(queries, corpus, relevant_docs, name=name)
    model = SentenceTransformer(model_id)  # load the model
    os.makedirs('results', exist_ok=True)  # the evaluator writes its CSV into this folder
    return evaluator(model, output_path='results/')


# val_dataset is the validation dataset loaded in Section 5
bge = "C:/Users/86188/Downloads/bge-small-en"
evaluate_st(val_dataset, bge, name='bge')

bge = "bge"
dfs = pd.read_csv(f"./results/Information-Retrieval_evaluation_{bge}_results.csv")
print(dfs)

Note: see Section 5 below for details.

2. Shared OpenAI keys

Reference: https://blog.csdn.net/qq_32265503/article/details/130471485

The keys listed in the referenced post are not reproduced here. Set your own key through the OPENAI_API_KEY environment variable before running the code below.

3. Generating an embedding training set with GPT (I have not purchased an OpenAI key myself)

import json
import os

from llama_index import SimpleDirectoryReader
from llama_index.node_parser import SimpleNodeParser
from llama_index.schema import MetadataMode


# Load the PDF files and split them into text chunks (nodes)
def load_corpus(files, verbose=False):
    if verbose:
        print(f"Loading files {files}")

    reader = SimpleDirectoryReader(input_files=files)
    docs = reader.load_data()
    if verbose:
        print(f'Loaded {len(docs)} docs')

    parser = SimpleNodeParser.from_defaults()
    nodes = parser.get_nodes_from_documents(docs, show_progress=verbose)
    if verbose:
        print(f'Parsed {len(nodes)} nodes')

    # Map each node id to its text, without metadata
    corpus = {node.node_id: node.get_content(metadata_mode=MetadataMode.NONE) for node in nodes}
    return corpus


TRAIN_FILES = ['C:/Users/86188/Downloads/智能变电站远程可视化运维与无人值守技术研究与实现_栾士岩.pdf']
VAL_FILES = ['C:/Users/86188/Downloads/基于人工智能的核电文档知识管理探索与实践_詹超铭.pdf']

train_corpus = load_corpus(TRAIN_FILES, verbose=True)  # load the training paper
val_corpus = load_corpus(VAL_FILES, verbose=True)  # load the validation paper

# Save the corpora as JSON files
TRAIN_CORPUS_FPATH = './data/train_corpus.json'
VAL_CORPUS_FPATH = './data/val_corpus.json'

os.makedirs('./data', exist_ok=True)  # make sure the output folder exists

with open(TRAIN_CORPUS_FPATH, 'w+') as f:
    json.dump(train_corpus, f)

with open(VAL_CORPUS_FPATH, 'w+') as f:
    json.dump(val_corpus, f)

import os
import re
import uuid

from llama_index.llms import OpenAI
from llama_index.schema import MetadataMode
from tqdm.notebook import tqdm

# Load the saved corpora
with open(TRAIN_CORPUS_FPATH, 'r+') as f:
    train_corpus = json.load(f)

with open(VAL_CORPUS_FPATH, 'r+') as f:
    val_corpus = json.load(f)

# Placeholder: replace with your own key, and never publish a real key
os.environ["OPENAI_API_KEY"] = "<your-openai-api-key>"

# Generate the training set with GPT-3.5
def generate_queries(
    corpus,
    num_questions_per_chunk=2,
    prompt_template=None,
    verbose=False,
):
    """
    Automatically generate hypothetical questions that could be answered with
    doc in the corpus.
    """
    llm = OpenAI(model='gpt-3.5-turbo')

    prompt_template = prompt_template or """\
    Context information is below.

    ---------------------
    {context_str}
    ---------------------

    Given the context information and not prior knowledge.
    generate only questions based on the below query.

    You are a Teacher/ Professor. Your task is to setup \
    {num_questions_per_chunk} questions for an upcoming \
    quiz/examination. The questions should be diverse in nature \
    across the document. Restrict the questions to the \
    context information provided.
    """

    queries = {}
    relevant_docs = {}
    for node_id, text in tqdm(corpus.items()):
        query = prompt_template.format(context_str=text, num_questions_per_chunk=num_questions_per_chunk)
        print(query)
        response = llm.complete(query)  # ask the LLM to write the questions

        # One question per line; strip leading numbering such as "1." or "2)"
        result = str(response).strip().split("\n")
        questions = [
            re.sub(r"^\d+[\).\s]", "", question).strip() for question in result
        ]
        questions = [question for question in questions if len(question) > 0]

        # Each question gets a fresh id and points back at the chunk it came from
        for question in questions:
            question_id = str(uuid.uuid4())
            queries[question_id] = question
            relevant_docs[question_id] = [node_id]
    return queries, relevant_docs
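The parsing step inside generate_queries can be tried without calling the API. The sketch below applies the same split, regex, and empty-line filter to a hard-coded reply; the helper name `parse_questions` and the sample text are hypothetical and only stand in for a real GPT response.

```python
import re


def parse_questions(response_text):
    """Split an LLM reply into questions, dropping list numbering and blank lines."""
    lines = response_text.strip().split("\n")
    # Same pattern as in generate_queries: leading digits followed by ")", "." or whitespace
    questions = [re.sub(r"^\d+[\).\s]", "", line).strip() for line in lines]
    return [question for question in questions if len(question) > 0]


# Hypothetical reply in the shape GPT usually returns for this prompt
sample_response = "1. What is remote monitoring?\n\n2) Why is it useful?"

print(parse_questions(sample_response))
# ['What is remote monitoring?', 'Why is it useful?']
```

Lines that do not start with a number pass through unchanged, so any preamble the model adds before the list would also be stored as a "question"; it is worth spot-checking the generated JSON files for that.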

# Automatically generate the training and validation questions
train_queries, train_relevant_docs = generate_queries(train_corpus)
val_queries, val_relevant_docs = generate_queries(val_corpus)

TRAIN_QUERIES_FPATH = './data/train_queries.json'
TRAIN_RELEVANT_DOCS_FPATH = './data/train_relevant_docs.json'
VAL_QUERIES_FPATH = './data/val_queries.json'
VAL_RELEVANT_DOCS_FPATH = './data/val_relevant_docs.json'

with open(TRAIN_QUERIES_FPATH, 'w+') as f:
    json.dump(train_queries, f)

with open(TRAIN_RELEVANT_DOCS_FPATH, 'w+') as f:
    json.dump(train_relevant_docs, f)

with open(VAL_QUERIES_FPATH, 'w+') as f:
    json.dump(val_queries, f)

with open(VAL_RELEVANT_DOCS_FPATH, 'w+') as f:
    json.dump(val_relevant_docs, f)

# Assemble the generated data into the standard dataset format
TRAIN_DATASET_FPATH = './data/train_dataset.json'
VAL_DATASET_FPATH = './data/val_dataset.json'

train_dataset = {
    'queries': train_queries,
    'corpus': train_corpus,
    'relevant_docs': train_relevant_docs,
}

val_dataset = {
    'queries': val_queries,
    'corpus': val_corpus,
    'relevant_docs': val_relevant_docs,
}

with open(TRAIN_DATASET_FPATH, 'w+') as f:
    json.dump(train_dataset, f)

with open(VAL_DATASET_FPATH, 'w+') as f:
    json.dump(val_dataset, f)

4. Fine-tuning the sentence_transformer model

# Load the model
from sentence_transformers import SentenceTransformer

model_id = "BAAI/bge-small-en"
model = SentenceTransformer(model_id)

import json

from torch.utils.data import DataLoader
from sentence_transformers import InputExample

TRAIN_DATASET_FPATH = './data/train_dataset.json'
VAL_DATASET_FPATH = './data/val_dataset.json'

BATCH_SIZE = 10

with open(TRAIN_DATASET_FPATH, 'r+') as f:
    train_dataset = json.load(f)

with open(VAL_DATASET_FPATH, 'r+') as f:
    val_dataset = json.load(f)

dataset = train_dataset

corpus = dataset['corpus']
queries = dataset['queries']
relevant_docs = dataset['relevant_docs']

# Build one (question, source chunk) training pair per generated question
examples = []
for query_id, query in queries.items():
    node_id = relevant_docs[query_id][0]
    text = corpus[node_id]
    example = InputExample(texts=[query, text])
    examples.append(example)

loader = DataLoader(
    examples, batch_size=BATCH_SIZE
)

from sentence_transformers import losses

loss = losses.MultipleNegativesRankingLoss(model)
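MultipleNegativesRankingLoss only needs positive (question, chunk) pairs because it treats every other chunk in the same batch as a negative. The following is a plain-Python sketch of that idea, not the library's implementation: it assumes the batch similarities are already computed, and the scale of 20 is chosen to match what I understand to be the library default. The function name and the toy matrices are illustrative.

```python
import math


def in_batch_ranking_loss(similarities, scale=20.0):
    """Mean cross-entropy where row i's correct "class" is column i.

    similarities[i][j] is the cosine similarity between question i and chunk j
    of the same batch; chunk i is the positive for question i and all other
    chunks in the row act as negatives.
    """
    losses = []
    for i, row in enumerate(similarities):
        logits = [scale * value for value in row]
        # Numerically stable log-sum-exp over the row
        max_logit = max(logits)
        log_sum_exp = max_logit + math.log(sum(math.exp(x - max_logit) for x in logits))
        losses.append(log_sum_exp - logits[i])
    return sum(losses) / len(losses)


# Toy batch of two pairs: in `good` each question is closest to its own chunk,
# in `bad` each question is closest to the other pair's chunk
good = [[0.9, 0.1], [0.2, 0.8]]
bad = [[0.1, 0.9], [0.8, 0.2]]

print(in_batch_ranking_loss(good))
print(in_batch_ranking_loss(bad))
```

The loss is small when the diagonal dominates each row and large otherwise, which is why a larger BATCH_SIZE gives each question more negatives to be ranked against.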

from sentence_transformers.evaluation import InformationRetrievalEvaluator

# The validation set is used to evaluate the model during training
dataset = val_dataset

corpus = dataset['corpus']
queries = dataset['queries']
relevant_docs = dataset['relevant_docs']

evaluator = InformationRetrievalEvaluator(queries, corpus, relevant_docs)

# Number of training epochs
EPOCHS = 2

# Warm up the learning rate over the first 10% of all training steps
warmup_steps = int(len(loader) * EPOCHS * 0.1)

model.fit(
    train_objectives=[(loader, loss)],
    epochs=EPOCHS,
    warmup_steps=warmup_steps,
    output_path='exp_finetune',  # output folder for the fine-tuned model
    show_progress_bar=True,
    evaluator=evaluator,
    evaluation_steps=50,
)
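As a quick check of the warmup arithmetic, the sketch below reproduces the `warmup_steps` formula for a made-up dataset size. The helper name and the example count of 95 are assumptions for illustration; `len(loader)` equals the number of batches per epoch when the DataLoader keeps the last partial batch, which is its default.

```python
import math


def warmup_steps_for(num_examples, batch_size, epochs, warmup_fraction=0.1):
    """Number of warmup steps, using the same formula as the training code."""
    # Batches per epoch, counting the final partial batch (DataLoader default)
    steps_per_epoch = math.ceil(num_examples / batch_size)
    return int(steps_per_epoch * epochs * warmup_fraction)


# Hypothetical case: 95 generated questions, BATCH_SIZE = 10, EPOCHS = 2
print(warmup_steps_for(num_examples=95, batch_size=10, epochs=2))
```

With so few steps, `evaluation_steps=50` may never trigger inside an epoch, so for a dataset built from one short paper the evaluator may effectively run only at the end of each epoch.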

5. Evaluating the sentence_transformer model

import json
import logging
import os

import pandas as pd
from tqdm.notebook import tqdm

pd.set_option("display.max_columns", None)

logging.basicConfig(level=logging.INFO, format="%(asctime)s-%(filename)s-%(message)s")

from llama_index import ServiceContext, VectorStoreIndex
from llama_index.schema import TextNode
from llama_index.embeddings import OpenAIEmbedding

# Placeholder: replace with your own key, and never publish a real key
os.environ["OPENAI_API_KEY"] = "<your-openai-api-key>"

# Load the datasets
TRAIN_DATASET_FPATH = './data/train_dataset.json'
VAL_DATASET_FPATH = './data/val_dataset.json'

with open(TRAIN_DATASET_FPATH, 'r+') as f:
    train_dataset = json.load(f)

with open(VAL_DATASET_FPATH, 'r+') as f:
    val_dataset = json.load(f)

# Retrieval-based evaluation: is the expected chunk among the top_k results?
def evaluate(
    dataset,
    embed_model,
    top_k=5,
    verbose=False,
):
    corpus = dataset['corpus']  # node id -> chunk text
    queries = dataset['queries']  # query id -> question
    relevant_docs = dataset['relevant_docs']  # query id -> ids of the chunks containing the answer

    # Embedding model used to build the index
    service_context = ServiceContext.from_defaults(embed_model=embed_model)
    nodes = [TextNode(id_=id_, text=text) for id_, text in corpus.items()]  # wrap each chunk in a TextNode
    index = VectorStoreIndex(
        nodes,
        service_context=service_context,
        show_progress=True
    )
    retriever = index.as_retriever(similarity_top_k=top_k)

    eval_results = []
    for query_id, query in tqdm(queries.items()):
        retrieved_nodes = retriever.retrieve(query)  # retrieve the top_k most similar nodes
        retrieved_ids = [node.node.node_id for node in retrieved_nodes]  # ids of the retrieved nodes
        expected_id = relevant_docs[query_id][0]  # ground-truth id
        is_hit = expected_id in retrieved_ids  # assume 1 relevant doc

        eval_result = {
            'is_hit': is_hit,
            'retrieved': retrieved_ids,
            'expected': expected_id,
            'query': query_id,
        }
        eval_results.append(eval_result)
    return eval_results
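The hit rate reported later is simply the share of queries whose expected chunk appears among the retrieved ids. Below is a minimal sketch that recomputes it from records shaped like the ones `evaluate` returns, for any cut-off up to the `top_k` used at retrieval time. The function name and the toy records are hypothetical.

```python
def hit_rate_at_k(eval_results, k):
    """Fraction of queries whose expected id is within the first k retrieved ids."""
    hits = sum(1 for result in eval_results if result["expected"] in result["retrieved"][:k])
    return hits / len(eval_results)


# Hypothetical records in the same shape as the output of evaluate()
toy_results = [
    {"is_hit": True, "retrieved": ["a", "b"], "expected": "a", "query": "q1"},
    {"is_hit": True, "retrieved": ["c", "d"], "expected": "d", "query": "q2"},
    {"is_hit": False, "retrieved": ["e", "f"], "expected": "z", "query": "q3"},
]

print(hit_rate_at_k(toy_results, k=2))  # same value as the mean of is_hit
print(hit_rate_at_k(toy_results, k=1))  # stricter: only the top result counts
```

Comparing the two cut-offs shows how often the right chunk is retrieved but not ranked first, which the single `is_hit` flag hides.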

# Embedding retrieval scored with the sentence_transformers evaluator
from sentence_transformers.evaluation import InformationRetrievalEvaluator
from sentence_transformers import SentenceTransformer


def evaluate_st(
    dataset,
    model_id,
    name,
):
    corpus = dataset['corpus']
    queries = dataset['queries']
    relevant_docs = dataset['relevant_docs']

    # Evaluator that computes retrieval metrics for these queries against the corpus
    evaluator = InformationRetrievalEvaluator(queries, corpus, relevant_docs, name=name)
    model = SentenceTransformer(model_id)  # load the model
    os.makedirs('results', exist_ok=True)  # the evaluator writes its CSV into this folder
    return evaluator(model, output_path='results/')

# Hit rate of OpenAI's own embedding model (left disabled, it needs a paid key)
# ada = OpenAIEmbedding()
# ada_val_results = evaluate(val_dataset, ada)
# df_ada = pd.DataFrame(ada_val_results)
# hit_rate_ada = df_ada['is_hit'].mean()

# Hit rate of bge-small-en retrieval
bge = "local:/home/jiayafei_linux/bge-small-en"
bge_val_results = evaluate(val_dataset, bge)

# Evaluate the retrieval results: hit rate
df_bge = pd.DataFrame(bge_val_results)
hit_rate_bge = df_bge['is_hit'].mean()
print(hit_rate_bge)

# Score the same model with the sentence_transformers evaluator
bge = "C:/Users/86188/Downloads/bge-small-en"
evaluate_st(val_dataset, bge, name='bge')

# Read back the CSV that the evaluator wrote
bge = "bge"
dfs = pd.read_csv(f"./results/Information-Retrieval_evaluation_{bge}_results.csv")
print(dfs)