Causes of steadily growing memory usage during PyTorch training (partial list)

- The code accumulates the loss across steps, but does not call item() on each step's loss

import torch
import torch.nn as nn
from collections import defaultdict

if torch.cuda.is_available():
    device = 'cuda'
else:
    device = 'cpu'

model = nn.Linear(100, 400).to(device)
criterion = nn.L1Loss(reduction='mean').to(device)
optimizer = torch.optim.Adam(model.parameters(), lr=0.001)

train_loss = defaultdict(float)
eval_loss = defaultdict(float)

for i in range(10000):
    model.train()
    x = torch.rand(50, 100, device=device)
    y_pred = model(x)  # shape: 50 x 400
    y_tgt = torch.rand(50, 400, device=device)
    loss = criterion(y_pred, y_tgt)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()
    # Makes memory grow steadily; should be train_loss['loss'] += loss.item()
    train_loss['loss'] += loss

    if i % 100 == 0:
        train_loss = defaultdict(float)
        model.eval()
        x = torch.rand(50, 100, device=device)
        y_pred = model(x)  # shape: 50 x 400
        y_tgt = torch.rand(50, 400, device=device)
        loss = criterion(y_pred, y_tgt)
        # Makes memory grow steadily; should be eval_loss['loss'] += loss.item()
        eval_loss['loss'] += loss

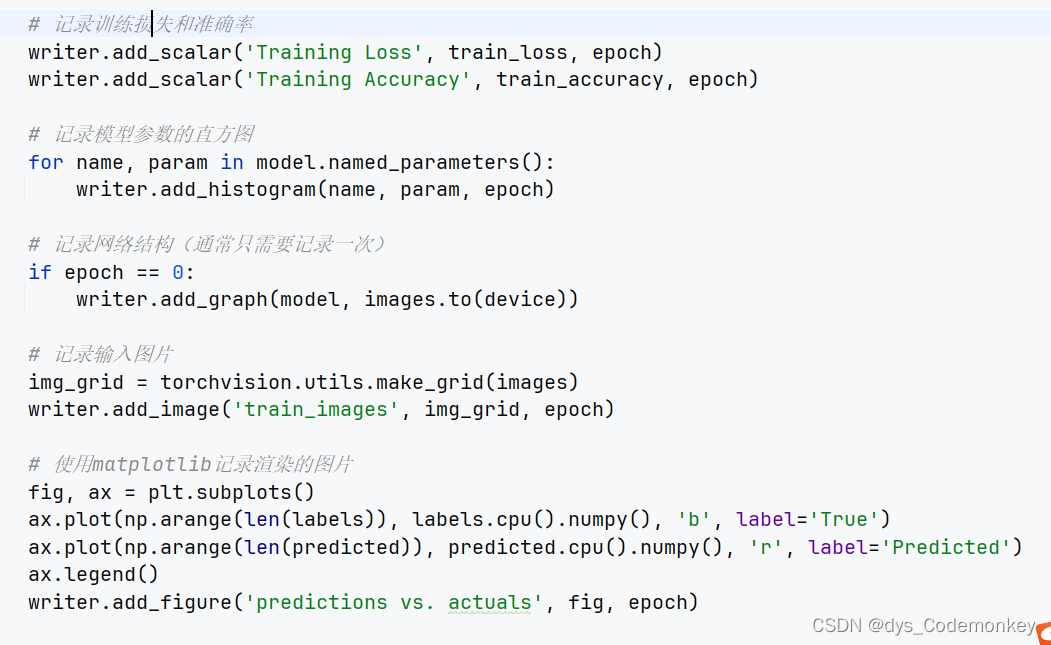

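The code above makes memory usage grow steadily. Each loss is a tensor that is still attached to the autograd graph, so adding it to a running total keeps every step's graph reachable and it is never freed. The fix is to accumulate plain Python numbers instead: train_loss['loss'] += loss.item() and eval_loss['loss'] += loss.item(). Note that to reproduce the growth, the code has to switch between model.train() and model.eval(). train_loss and eval_loss are meant to hold the model's average loss (here, the accumulated loss) so that it can be written to TensorBoard.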
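For reference, here is a minimal sketch of the corrected loop. It keeps the same model and loss as above, but makes a few assumptions that are not in the original: the loop is shortened to 300 steps for illustration, the evaluation pass is wrapped in torch.no_grad() (an optional extra that stops a graph from being built at all during evaluation), and the names probe, probe_has_graph and probe_value are made up here just to show the difference between a loss tensor and its item() value.

```python
from collections import defaultdict

import torch
import torch.nn as nn

device = 'cuda' if torch.cuda.is_available() else 'cpu'

model = nn.Linear(100, 400).to(device)
criterion = nn.L1Loss(reduction='mean')
optimizer = torch.optim.Adam(model.parameters(), lr=0.001)

train_loss = defaultdict(float)
eval_loss = defaultdict(float)

# Hypothetical probe: a raw loss tensor carries a grad_fn (a handle on the
# autograd graph), while .item() returns a plain Python float with no graph.
probe = criterion(model(torch.rand(50, 100, device=device)),
                  torch.rand(50, 400, device=device))
probe_has_graph = probe.grad_fn is not None
probe_value = probe.item()

for i in range(300):  # shortened from 10000 for illustration
    model.train()
    x = torch.rand(50, 100, device=device)
    y_tgt = torch.rand(50, 400, device=device)
    loss = criterion(model(x), y_tgt)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()
    # Accumulate a float, not the tensor, so no graph is kept alive.
    train_loss['loss'] += loss.item()

    if i % 100 == 0:
        train_loss = defaultdict(float)
        model.eval()
        # Assumption: no gradients are needed for evaluation, so skip
        # building the graph entirely.
        with torch.no_grad():
            x = torch.rand(50, 100, device=device)
            y_tgt = torch.rand(50, 400, device=device)
            loss = criterion(model(x), y_tgt)
        eval_loss['loss'] += loss.item()
```

With this version both accumulators hold ordinary floats, so they can be passed straight to a logger. If the running total has to stay a tensor for some reason, loss.detach() is the usual alternative to item().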