5.3.3 Loss Function

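PaddlePaddle already wraps the loss computation, so the loss can be computed with a single call, which is both simpler and faster. We recommend using the paddle.vision.ops.yolo_loss API directly. Its signature and key parameters are as follows: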

paddle.vision.ops.yolo_loss(x, gt_box, gt_label, anchors, anchor_mask, class_num, ignore_thresh, downsample_ratio, gt_score=None, use_label_smooth=True, name=None, scale_x_y=1.0)

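- x: the output feature map.

- gt_box: the ground-truth boxes.

- gt_label: the class labels of the ground-truth boxes.

- ignore_thresh: an IoU threshold. A predicted box whose IoU with a ground-truth box exceeds ignore_thresh is not treated as a negative sample. It is set to 0.7 for the YOLOv3 model.

- downsample_ratio: the downsampling ratio of the feature map. For P0 with the Darknet53 backbone it is 32.

- gt_score: the confidence of the ground-truth boxes, used when the mixup technique is applied.

- use_label_smooth: whether to use label smoothing, a training technique. Set it to False if it is not used.

- name: the name of the layer, for example 'yolov3_loss'. The default is None, and it usually does not need to be set.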
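To make the role of ignore_thresh more concrete, here is a minimal pure-Python sketch of the labeling rule described above. It is not the internal implementation of yolo_loss. The helper names iou_xyxy and objectness_labels, the positive_ids argument, and the sample boxes are all hypothetical, and the sketch assumes the positive boxes have already been chosen.

```python
def iou_xyxy(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2) in continuous coordinates."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def objectness_labels(pred_boxes, gt_boxes, positive_ids, ignore_thresh=0.7):
    """Return 1 for positives, -1 for ignored boxes, and 0 for negatives."""
    labels = []
    for idx, box in enumerate(pred_boxes):
        if idx in positive_ids:  # assumed to be selected beforehand
            labels.append(1)
            continue
        best_iou = max((iou_xyxy(box, gt) for gt in gt_boxes), default=0.0)
        # A box that overlaps a ground-truth box strongly is neither positive
        # nor negative: it is excluded from the objectness loss.
        labels.append(-1 if best_iou > ignore_thresh else 0)
    return labels


# Hypothetical boxes: one ground truth and three predictions
preds = [(0, 0, 10, 10), (1, 1, 10, 10), (20, 20, 30, 30)]
gts = [(0, 0, 10, 10)]
labels = objectness_labels(preds, gts, positive_ids={0})
print(labels)
```

The second prediction has an IoU of 0.81 with the ground-truth box, so it is marked -1 and ignored rather than penalized as background.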

The YOLOv3 loss function is implemented as follows:

def get_loss(num_classes, outputs, gtbox, gtlabel, gtscore=None,
             anchors = [10, 13, 16, 30, 33, 23, 30, 61, 62, 45, 59, 119, 116, 90, 156, 198, 373, 326],
             anchor_masks = [[6, 7, 8], [3, 4, 5], [0, 1, 2]],
             ignore_thresh=0.7,
             use_label_smooth=False):
    """
    Compute the YOLOv3 loss with paddle.vision.ops.yolo_loss
    """
    losses = []
    downsample = 32
    for i, out in enumerate(outputs): # compute the loss for each of the three levels
        anchor_mask_i = anchor_masks[i]
        loss = paddle.vision.ops.yolo_loss(
                x=out,  # out is one of P0, P1, P2
                gt_box=gtbox,  # ground-truth box coordinates
                gt_label=gtlabel,  # ground-truth box classes
                gt_score=gtscore,  # ground-truth box scores, needed for mixup; set to 1 when mixup is not used; same shape as gtlabel
                anchors=anchors,   # anchor sizes [w0, h0, w1, h1, ..., w8, h8] for 9 anchors
                anchor_mask=anchor_mask_i, # mask that selects anchors, e.g. anchor_mask_i=[3, 4, 5] picks the anchors with indices 3, 4, 5 for this level
                class_num=num_classes, # number of classes
                ignore_thresh=ignore_thresh, # when the IoU between a predicted box and a ground-truth box > ignore_thresh, objectness is labeled -1
                downsample_ratio=downsample, # how much the feature map is downscaled relative to the input, e.g. 32 for P0, 16 for P1, 8 for P2
                use_label_smooth=use_label_smooth)      # label smoothing training technique; the default False disables it
        losses.append(paddle.mean(loss))  # average the loss over the images in the batch
        downsample = downsample // 2 # the downsampling ratio halves at the next level
    return sum(losses) # sum over the three levels

5.3.4 Model Training

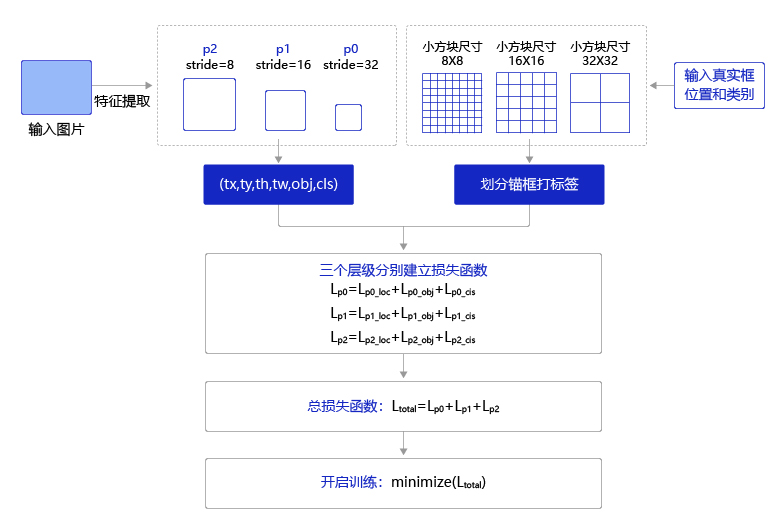

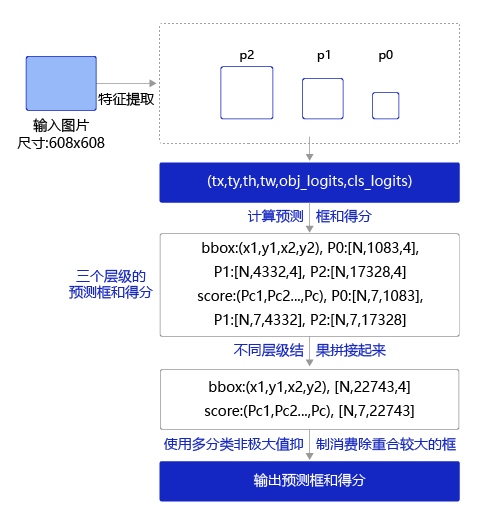

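The training process is shown in Figure 3. The input image goes through feature extraction to produce output feature maps at three levels, P0 (stride=32), P1 (stride=16), and P2 (stride=8). Each level uses square cells of a different size to generate its anchors and predicted boxes, and these anchors are then labeled.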

- The P0 feature map uses cells of size $32\times32$, and three anchors of sizes $[116, 90]$, $[156, 198]$, and $[373, 326]$ are generated at the center of each cell.

- The P1 feature map uses cells of size $16\times16$, and three anchors of sizes $[30, 61]$, $[62, 45]$, and $[59, 119]$ are generated at the center of each cell.

- The P2 feature map uses cells of size $8\times8$, and three anchors of sizes $[10, 13]$, $[16, 30]$, and $[33, 23]$ are generated at the center of each cell.

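The feature maps of the three levels are associated with the labels of their corresponding anchors to build the loss function, and the total loss is the sum of the losses of the three levels. Minimizing this loss drives the end-to-end training process.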
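The mapping from levels to anchors can be sketched in a few lines of Python. The anchor list and masks are the ones used in this section. The helper names anchors_per_level and grid_size, and the 640-pixel input size, are assumptions made only for illustration.

```python
ANCHORS = [10, 13, 16, 30, 33, 23, 30, 61, 62, 45, 59, 119, 116, 90, 156, 198, 373, 326]
ANCHOR_MASKS = [[6, 7, 8], [3, 4, 5], [0, 1, 2]]


def anchors_per_level(anchors, anchor_masks, first_stride=32):
    """Group the flat [w0, h0, w1, h1, ...] list into (w, h) pairs per level."""
    pairs = [(anchors[2 * k], anchors[2 * k + 1]) for k in range(len(anchors) // 2)]
    levels = []
    stride = first_stride
    for mask in anchor_masks:
        levels.append({"stride": stride, "anchors": [pairs[k] for k in mask]})
        stride //= 2  # P0 -> 32, P1 -> 16, P2 -> 8
    return levels


def grid_size(input_size, stride):
    """Number of cells along one side of the feature map."""
    return input_size // stride


levels = anchors_per_level(ANCHORS, ANCHOR_MASKS)
for name, level in zip(["P0", "P1", "P2"], levels):
    cells = grid_size(640, level["stride"])  # 640 is an assumed input size
    print(name, "stride", level["stride"], "anchors", level["anchors"],
          "grid", "{}x{}".format(cells, cells))
```

The coarsest level P0 receives the three largest anchors and the finest level P2 the three smallest, which matches the list above.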

Figure 3: End-to-end training pipeline

The training code is shown below. To keep the running time short, MAX_EPOCH is set to 1 here. Increase it to train a better model.
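The training script builds its learning rate with paddle.optimizer.lr.PiecewiseDecay, using boundaries [10000, 20000] and a decay factor of 0.1. As a rough illustration of what that schedule returns, here is a pure-Python sketch. The function piecewise_lr is hypothetical, and it assumes the schedule is advanced once per training iteration.

```python
def piecewise_lr(step, boundaries=(10000, 20000), base_lr=0.0001, lr_decay=0.1):
    """Learning rate of a piecewise-constant schedule at a given step."""
    # One value per interval: base_lr, base_lr * decay, base_lr * decay^2, ...
    values = [base_lr * lr_decay ** k for k in range(len(boundaries) + 1)]
    for boundary, value in zip(boundaries, values):
        if step < boundary:
            return value
    return values[-1]


for step in (0, 9999, 10000, 25000):
    print(step, piecewise_lr(step))
```

With these settings the learning rate stays at 1e-4 for the first 10000 steps, drops to about 1e-5 until step 20000, and then to about 1e-6.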


############# Run this code on a local machine with caution: it can easily freeze the machine. Use a small MAX_EPOCH in that case. #############
import time
import os
import numpy as np
import paddle

# TrainDataset, YOLOv3 and get_loss are defined in the preceding sections

def get_lr(base_lr = 0.0001, lr_decay = 0.1):
    bd = [10000, 20000]
    lr = [base_lr, base_lr * lr_decay, base_lr * lr_decay * lr_decay]
    learning_rate = paddle.optimizer.lr.PiecewiseDecay(boundaries=bd, values=lr)
    return learning_rate

# MAX_EPOCH = 200
MAX_EPOCH = 1

ANCHORS = [10, 13, 16, 30, 33, 23, 30, 61, 62, 45, 59, 119, 116, 90, 156, 198, 373, 326]

ANCHOR_MASKS = [[6, 7, 8], [3, 4, 5], [0, 1, 2]]

IGNORE_THRESH = .7
NUM_CLASSES = 7

TRAINDIR = '/home/aistudio/work/insects/train'
TESTDIR = '/home/aistudio/work/insects/test'
VALIDDIR = '/home/aistudio/work/insects/val'
paddle.set_device("gpu:0")
# create the dataset objects
train_dataset = TrainDataset(TRAINDIR, mode='train')
valid_dataset = TrainDataset(VALIDDIR, mode='valid')
test_dataset = TrainDataset(VALIDDIR, mode='valid')
# create the data loaders with paddle.io.DataLoader and set batch_size, the number of worker processes num_workers, etc.
train_loader = paddle.io.DataLoader(train_dataset, batch_size=10, shuffle=True, num_workers=0, drop_last=True, use_shared_memory=False)
valid_loader = paddle.io.DataLoader(valid_dataset, batch_size=10, shuffle=False, num_workers=0, drop_last=False, use_shared_memory=False)
model = YOLOv3(num_classes = NUM_CLASSES)  # create the model
learning_rate = get_lr()
opt = paddle.optimizer.Momentum(
             learning_rate=learning_rate,
             momentum=0.9,
             weight_decay=paddle.regularizer.L2Decay(0.0005),
             parameters=model.parameters())  # create the optimizer
# opt = paddle.optimizer.Adam(learning_rate=learning_rate, weight_decay=paddle.regularizer.L2Decay(0.0005), parameters=model.parameters())

if __name__ == '__main__':
    for epoch in range(MAX_EPOCH):
        for i, data in enumerate(train_loader()):
            img, gt_boxes, gt_labels, img_scale = data
            gt_scores = np.ones(gt_labels.shape).astype('float32')
            gt_scores = paddle.to_tensor(gt_scores)
            img = paddle.to_tensor(img)
            gt_boxes = paddle.to_tensor(gt_boxes)
            gt_labels = paddle.to_tensor(gt_labels)
            outputs = model(img)  # forward pass, outputs [P0, P1, P2]
            loss = get_loss(NUM_CLASSES, outputs, gt_boxes, gt_labels, gtscore=gt_scores,
                            anchors = ANCHORS,
                            anchor_masks = ANCHOR_MASKS,
                            ignore_thresh=IGNORE_THRESH,
                            use_label_smooth=False)        # compute the loss

            loss.backward()    # backward pass to compute gradients
            opt.step()  # update the parameters
            opt.clear_grad()
            learning_rate.step()  # advance the schedule once per iteration; otherwise PiecewiseDecay never reaches its boundaries
            if i % 10 == 0:
                timestring = time.strftime("%Y-%m-%d %H:%M:%S",time.localtime(time.time()))
                print('{}[TRAIN]epoch {}, iter {}, output loss: {}'.format(timestring, epoch, i, loss.numpy()))

        # save params of model
        if (epoch % 5 == 0) or (epoch == MAX_EPOCH -1):
            paddle.save(model.state_dict(), 'yolo_epoch{}'.format(epoch))

        # evaluate on the validation set at the end of each epoch
        model.eval()
        for i, data in enumerate(valid_loader()):
            img, gt_boxes, gt_labels, img_scale = data
            gt_scores = np.ones(gt_labels.shape).astype('float32')
            gt_scores = paddle.to_tensor(gt_scores)
            img = paddle.to_tensor(img)
            gt_boxes = paddle.to_tensor(gt_boxes)
            gt_labels = paddle.to_tensor(gt_labels)
            outputs = model(img)
            loss = get_loss(NUM_CLASSES,outputs, gt_boxes, gt_labels, gtscore=gt_scores,
                            anchors = ANCHORS,
                            anchor_masks = ANCHOR_MASKS,
                            ignore_thresh=IGNORE_THRESH,
                            use_label_smooth=False)
            if i % 1 == 0:
                timestring = time.strftime("%Y-%m-%d %H:%M:%S",time.localtime(time.time()))
                print('{}[VALID]epoch {}, iter {}, output loss: {}'.format(timestring, epoch, i, loss.numpy()))
        model.train()

5.3.5 Model Evaluation

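First, define the non-maximum suppression (NMS) introduced in the previous section. When the dataset contains objects of several classes, multi-class NMS is needed. It works on the same principle as NMS, except that NMS is run separately for each class. The implementation is shown in multiclass_nms below.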

import numpy as np

# compute IoU for boxes in xyxy format; this function is saved in box_utils.py
def box_iou_xyxy(box1, box2):
    # top-left and bottom-right coordinates of box1
    x1min, y1min, x1max, y1max = box1[0], box1[1], box1[2], box1[3]
    # area of box1
    s1 = (y1max - y1min + 1.) * (x1max - x1min + 1.)
    # top-left and bottom-right coordinates of box2
    x2min, y2min, x2max, y2max = box2[0], box2[1], box2[2], box2[3]
    # area of box2
    s2 = (y2max - y2min + 1.) * (x2max - x2min + 1.)

    # coordinates of the intersection rectangle
    xmin = np.maximum(x1min, x2min)
    ymin = np.maximum(y1min, y2min)
    xmax = np.minimum(x1max, x2max)
    ymax = np.minimum(y1max, y2max)
    # height, width and area of the intersection rectangle
    inter_h = np.maximum(ymax - ymin + 1., 0.)
    inter_w = np.maximum(xmax - xmin + 1., 0.)
    intersection = inter_h * inter_w
    # area of the union
    union = s1 + s2 - intersection
    # intersection over union
    iou = intersection / union
    return iou

# non-maximum suppression
def nms(bboxes, scores, score_thresh, nms_thresh, pre_nms_topk, i=0, c=0):
    """
    nms
    """
    inds = np.argsort(scores)
    inds = inds[::-1]
    keep_inds = []
    while(len(inds) > 0):
        cur_ind = inds[0]
        cur_score = scores[cur_ind]
        # if score of the box is less than score_thresh, just drop it
        if cur_score < score_thresh:
            break

        keep = True
        for ind in keep_inds:
            current_box = bboxes[cur_ind]
            remain_box = bboxes[ind]
            iou = box_iou_xyxy(current_box, remain_box)
            if iou > nms_thresh:
                keep = False
                break
        if keep:
            keep_inds.append(cur_ind)
        inds = inds[1:]

    return np.array(keep_inds)

# multi-class non-maximum suppression
def multiclass_nms(bboxes, scores, score_thresh=0.01, nms_thresh=0.45, pre_nms_topk=1000, pos_nms_topk=100):
    """
    This is for multiclass_nms
    """
    batch_size = bboxes.shape[0]
    class_num = scores.shape[1]
    rets = []
    for i in range(batch_size):
        bboxes_i = bboxes[i]
        scores_i = scores[i]
        ret = []
        for c in range(class_num):
            scores_i_c = scores_i[c]
            keep_inds = nms(bboxes_i, scores_i_c, score_thresh, nms_thresh, pre_nms_topk, i=i, c=c)
            if len(keep_inds) < 1:
                continue
            keep_bboxes = bboxes_i[keep_inds]
            keep_scores = scores_i_c[keep_inds]
            keep_results = np.zeros([keep_scores.shape[0], 6])
            keep_results[:, 0] = c
            keep_results[:, 1] = keep_scores[:]
            keep_results[:, 2:6] = keep_bboxes[:, :]
            ret.append(keep_results)
        if len(ret) < 1:
            # no box survived: append an empty array so callers can still call .tolist()
            rets.append(np.zeros([0, 6]))
            continue
        ret_i = np.concatenate(ret, axis=0)
        scores_i = ret_i[:, 1]
        if len(scores_i) > pos_nms_topk:
            inds = np.argsort(scores_i)[::-1]
            inds = inds[:pos_nms_topk]
            ret_i = ret_i[inds]

        rets.append(ret_i)

    return rets

Run the following code to start the evaluation. You need to specify the directory that holds the images to evaluate and the model parameters to use. The evaluation results are saved to the file pred_results.json.

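To demonstrate the computation, the code below uses the validation images in "insects/val/images". When submitting competition results, use the test images in "insects/test/images" instead.

The yolo_epoch50.pdparams file provided here contains weights that are not fully trained. For the competition, replace it with the weights you trained yourself.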

import json

import paddle

# YOLOv3, test_data_loader and multiclass_nms are defined in the preceding sections

ANCHORS = [10, 13, 16, 30, 33, 23, 30, 61, 62, 45, 59, 119, 116, 90, 156, 198, 373, 326]
ANCHOR_MASKS = [[6, 7, 8], [3, 4, 5], [0, 1, 2]]
VALID_THRESH = 0.01
NMS_TOPK = 400
NMS_POSK = 100
NMS_THRESH = 0.45

NUM_CLASSES = 7

# evaluate the trained model on the validation set val
TESTDIR = '/home/aistudio/work/insects/val/images' # change this to the directory where you keep the images to evaluate
WEIGHT_FILE = '/home/aistudio/yolo_epoch50.pdparams' # change this to the path of your own trained weights

# evaluate the trained model on the test set test
# TESTDIR = '/home/aistudio/work/insects/test/images'
# WEIGHT_FILE = '/home/aistudio/yolo_epoch50.pdparams'

if __name__ == '__main__':
    model = YOLOv3(num_classes=NUM_CLASSES)
    params_file_path = WEIGHT_FILE
    model_state_dict = paddle.load(params_file_path)
    model.load_dict(model_state_dict)
    model.eval()

    total_results = []
    test_loader = test_data_loader(TESTDIR, batch_size= 1, mode='test')
    for i, data in enumerate(test_loader()):
        img_name, img_data, img_scale_data = data
        img = paddle.to_tensor(img_data)
        img_scale = paddle.to_tensor(img_scale_data)

        outputs = model.forward(img)
        bboxes, scores = model.get_pred(outputs,
                                 im_shape=img_scale,
                                 anchors=ANCHORS,
                                 anchor_masks=ANCHOR_MASKS,
                                 valid_thresh = VALID_THRESH)

        bboxes_data = bboxes.numpy()
        scores_data = scores.numpy()
        result = multiclass_nms(bboxes_data, scores_data,
                      score_thresh=VALID_THRESH,
                      nms_thresh=NMS_THRESH,
                      pre_nms_topk=NMS_TOPK,
                      pos_nms_topk=NMS_POSK)
        for j in range(len(result)):
            result_j = result[j]
            img_name_j = img_name[j]
            total_results.append([img_name_j, result_j.tolist()])
        print('processed {} pictures'.format(len(total_results)))

    print('')
    with open('pred_results.json', 'w') as f:
        json.dump(total_results, f)

The JSON file stores the test results as a list that contains the predictions for all images. Its structure is as follows:

[[img_name, [[label, score, x1, y1, x2, y2], ..., [label, score, x1, y1, x2, y2]]],

[img_name, [[label, score, x1, y1, x2, y2], ..., [label, score, x1, y1, x2, y2]]],

...

[img_name, [[label, score, x1, y1, x2, y2],..., [label, score, x1, y1, x2, y2]]]]

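Each element of the list is the prediction for one image, and the length of the list equals the number of images. The prediction for each image has the format:

[img_name, [[label, score, x1, y1, x2, y2],..., [label, score, x1, y1, x2, y2]]]

The first element is the image name img_name, and the second element is a list of all predicted boxes for that image:

[[label, score, x1, y1, x2, y2],..., [label, score, x1, y1, x2, y2]]

Each element [label, score, x1, y1, x2, y2] of this list describes one predicted box: label is the class label of the box, score is its score, and x1, y1, x2, y2 give the top-left corner (x1, y1) and the bottom-right corner (x2, y2). An image may have many predicted boxes, and all of them are placed in this list.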
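As a small illustration of this layout, the sketch below walks such a list and keeps only the boxes above a score threshold. The sample data, the image names, and the helper filter_predictions are made up for the example. In practice the list would come from json.load on pred_results.json.

```python
import json

# Hypothetical predictions in the [img_name, [[label, score, x1, y1, x2, y2], ...]] layout
sample = [
    ["2642", [[0, 0.92, 10.0, 20.0, 110.0, 220.0], [3, 0.30, 5.0, 5.0, 50.0, 60.0]]],
    ["2643", []],  # an image with no predicted boxes
]


def filter_predictions(results, score_thresh):
    """Map each image name to its boxes whose score exceeds score_thresh."""
    kept = {}
    for img_name, boxes in results:
        kept[img_name] = [box for box in boxes if box[1] > score_thresh]
    return kept


# Round-trip through JSON to mimic reading the saved file
loaded = json.loads(json.dumps(sample))
kept = filter_predictions(loaded, score_thresh=0.5)
for name, boxes in kept.items():
    print(name, len(boxes), "box(es) kept")
```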

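Computing the accuracy metric

Run the following code to compute the final accuracy metric, mAP.

After training, you can compute mAP on the val dataset to check the result, so the code below uses the val annotations in "insects/val/annotations/xmls".

To submit a competition score, mAP has to be computed on the test set. The test set annotations are not available locally, so the only way to see the score is to submit the JSON file to the competition server.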
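The evaluation script uses map_type = '11point'. As a rough sketch of what 11-point interpolated average precision means for a single class, the pure-Python function below averages the best precision reachable at recall levels 0, 0.1, ..., 1.0. It is an illustration under that assumption, not the DetectionMAP implementation, and the function name and sample values are hypothetical.

```python
def average_precision_11point(recalls, precisions):
    """11-point interpolated AP from matching recall/precision lists."""
    ap = 0.0
    for k in range(11):
        threshold = k / 10.0
        # Best precision among the operating points whose recall reaches the threshold
        candidates = [p for r, p in zip(recalls, precisions) if r >= threshold]
        ap += max(candidates) if candidates else 0.0
    return ap / 11.0


# Two ground-truth objects; ranked detections are TP, FP, TP
recalls = [0.5, 0.5, 1.0]
precisions = [1.0, 0.5, 2.0 / 3.0]
ap = average_precision_11point(recalls, precisions)
print("AP = {:.4f}".format(ap))
```

For recall levels up to 0.5 the best precision is 1.0, and for the remaining five levels it is 2/3, so the result is (6 + 5 × 2/3) / 11 = 28/33. mAP is then the mean of the per-class AP values.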

# -*- coding: utf-8 -*-
import os
import json
import numpy as np
import xml.etree.ElementTree as ET
from map_utils import DetectionMAP

# directory that holds the annotations
annotations_dir ="/home/aistudio/work/insects/val/annotations/xmls"
# file that holds the predictions
pred_result_file = "pred_results.json"

# pred_result_file stores the test results as a list with the predictions for all images:
# [[img_name, [[label, score, x1, y1, x2, y2],..., [label, score, x1, y1, x2, y2]]],
#  [img_name, [[label, score, x1, y1, x2, y2],..., [label, score, x1, y1, x2, y2]]],
#   ...
#  [img_name, [[label, score, x1, y1, x2, y2],..., [label, score, x1, y1, x2, y2]]]]
# each element of the list is the prediction for one image; the list length equals the number of images
# the prediction for each image has the format [img_name, [[label, score, x1, y1, x2, y2],..., [label, score, x1, y1, x2, y2]]]
# the first element is the image name img_name, the second element is the list of all predicted boxes
# each element [label, score, x1, y1, x2, y2] of that list describes one predicted box,
# label is the class label of the box and score is its score
# x1, y1, x2, y2 give the top-left corner (x1, y1) and the bottom-right corner (x2, y2)
# an image may have many predicted boxes, all of which are placed in this list

overlap_thresh = 0.5
map_type = '11point'
insect_names = ['Boerner', 'Leconte', 'Linnaeus', 'acuminatus', 'armandi', 'coleoptera', 'linnaeus']

if __name__ == '__main__':
    cname2cid = {}
    for i, item in enumerate(insect_names):
        cname2cid[item] = i
    filename = pred_result_file
    with open(filename) as f:
        results = json.load(f)
    num_classes = len(insect_names)
    detection_map = DetectionMAP(class_num=num_classes,
                        overlap_thresh=overlap_thresh,
                        map_type=map_type,
                        is_bbox_normalized=False,
                        evaluate_difficult=False)
    for result in results:
        image_name = str(result[0])
        bboxes = np.array(result[1]).astype('float32')
        anno_file = os.path.join(annotations_dir, image_name + '.xml')
        tree = ET.parse(anno_file)
        objs = tree.findall('object')
        im_w = float(tree.find('size').find('width').text)
        im_h = float(tree.find('size').find('height').text)
        gt_bbox = np.zeros((len(objs), 4), dtype=np.float32)
        gt_class = np.zeros((len(objs), 1), dtype=np.int32)
        difficult = np.zeros((len(objs), 1), dtype=np.int32)
        for i, obj in enumerate(objs):
            cname = obj.find('name').text
            gt_class[i][0] = cname2cid[cname]
            _difficult = int(obj.find('difficult').text)
            x1 = float(obj.find('bndbox').find('xmin').text)
            y1 = float(obj.find('bndbox').find('ymin').text)
            x2 = float(obj.find('bndbox').find('xmax').text)
            y2 = float(obj.find('bndbox').find('ymax').text)
            # clip the box to the image
            x1 = max(0, x1)
            y1 = max(0, y1)
            x2 = min(im_w - 1, x2)
            y2 = min(im_h - 1, y2)
            gt_bbox[i] = [x1, y1, x2, y2]
            difficult[i][0] = _difficult
        detection_map.update(bboxes, gt_bbox, gt_class, difficult)

    print("Accumulating evaluation results...")
    detection_map.accumulate()

    map_stat = 100. * detection_map.get_map()
    print("mAP({:.2f}, {}) = {:.2f}".format(overlap_thresh,
                            map_type, map_stat))

Accumulating evaluation results...

mAP(0.50, 11point) = 71.97

5.3.6 Model Prediction

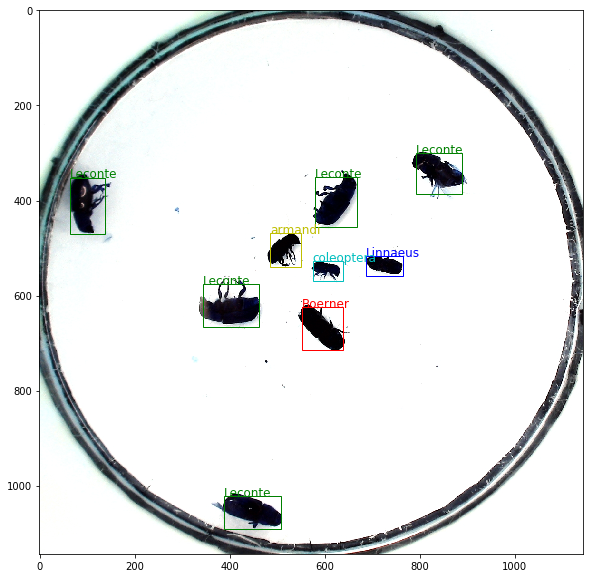

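The prediction process is shown in Figure 4.

Figure 4: Prediction pipeline

The prediction process consists of two steps:

- Use the get_pred function to compute the predicted box locations and the class scores.

- Use non-maximum suppression to remove predicted boxes that overlap heavily.

First, define the plotting functions that draw the predicted boxes. The code is as follows.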

import numpy as np
import matplotlib.pyplot as plt
import matplotlib.patches as patches
from matplotlib.image import imread
import math

# plotting helpers
INSECT_NAMES = ['Boerner', 'Leconte', 'Linnaeus',
                'acuminatus', 'armandi', 'coleoptera', 'linnaeus']

# draw a rectangle
def draw_rectangle(currentAxis, bbox, edgecolor = 'k', facecolor = 'y', fill=False, linestyle='-'):
    # currentAxis: the axes, obtained with plt.gca()
    # bbox: the bounding box, a list of four values [x1, y1, x2, y2]
    # edgecolor: color of the border line
    # facecolor: fill color
    # fill: whether to fill the rectangle
    # linestyle: style of the border line
    # patches.Rectangle takes the top-left corner, the width and the height of the rectangle
    rect=patches.Rectangle((bbox[0], bbox[1]), bbox[2]-bbox[0]+1, bbox[3]-bbox[1]+1, linewidth=1,
                           edgecolor=edgecolor,facecolor=facecolor,fill=fill, linestyle=linestyle)
    currentAxis.add_patch(rect)

# draw the prediction results
def draw_results(result, filename, draw_thresh=0.5):
    plt.figure(figsize=(10, 10))
    # read with the imread imported above; it returns RGB, which is what plt.imshow expects
    # (the original called cv2.imread, but cv2 is not imported in this block and returns BGR)
    im = imread(filename)
    plt.imshow(im)
    currentAxis=plt.gca()
    colors = ['r', 'g', 'b', 'k', 'y', 'c', 'purple']
    for item in result:
        box = item[2:6]
        label = int(item[0])
        name = INSECT_NAMES[label]
        if item[1] > draw_thresh:
            draw_rectangle(currentAxis, box, edgecolor = colors[label])
            plt.text(box[0], box[1], name, fontsize=12, color=colors[label])

Use the test_data_loader function defined in Section 5.3.1.4 to read the specified image, feed it into the network to compute the predicted boxes and scores, and then apply multi-class non-maximum suppression to remove redundant boxes. Finally, plot the result.

import json

import paddle

ANCHORS = [10, 13, 16, 30, 33, 23, 30, 61, 62, 45, 59, 119, 116, 90, 156, 198, 373, 326]
ANCHOR_MASKS = [[6, 7, 8], [3, 4, 5], [0, 1, 2]]
VALID_THRESH = 0.01
NMS_TOPK = 400
NMS_POSK = 100
NMS_THRESH = 0.45

NUM_CLASSES = 7
if __name__ == '__main__':
    image_name = '/home/aistudio/work/insects/test/images/2642.jpeg'
    params_file_path = '/home/aistudio/yolo_epoch50.pdparams'

    model = YOLOv3(num_classes=NUM_CLASSES)
    model_state_dict = paddle.load(params_file_path)
    model.load_dict(model_state_dict)
    model.eval()

    total_results = []
    test_loader = test_data_loader(image_name, mode='test')
    for i, data in enumerate(test_loader()):
        img_name, img_data, img_scale_data = data
        img = paddle.to_tensor(img_data)
        img_scale = paddle.to_tensor(img_scale_data)

        outputs = model.forward(img)
        bboxes, scores = model.get_pred(outputs,
                                 im_shape=img_scale,
                                 anchors=ANCHORS,
                                 anchor_masks=ANCHOR_MASKS,
                                 valid_thresh = VALID_THRESH)

        bboxes_data = bboxes.numpy()
        scores_data = scores.numpy()
        results = multiclass_nms(bboxes_data, scores_data,
                      score_thresh=VALID_THRESH,
                      nms_thresh=NMS_THRESH,
                      pre_nms_topk=NMS_TOPK,
                      pos_nms_topk=NMS_POSK)

    # only one image was read, so take the first (and only) result
    result = results[0]
    draw_results(result, image_name, draw_thresh=0.4)


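The program above shows how to use trained weights to run prediction on an image and visualize the result. In the output image, every insect is detected and marked with its bounding box and class.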

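Ideas for Improvement

The code given here is a baseline version that you can keep improving. Possible improvements include:

1. Use another model, such as Faster R-CNN (difficulty: 5).

2. Use data augmentation, for example by flipping or cropping the original images (difficulty: 3).

3. Change the anchor settings. This tutorial directly uses the settings chosen by the original author for the COCO dataset, so consider whether they should be adjusted for this task (difficulty: 3).

4. Check whether tuning the optimizer, the learning rate schedule, or the regularization coefficient improves the model's accuracy (difficulty: 1).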
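As one example of the data augmentation idea in item 2, the sketch below flips an image horizontally and updates its boxes to match. It is a pure-Python illustration rather than part of the tutorial's data pipeline. The function name hflip is hypothetical, the image is represented as a plain list of pixel rows, and the boxes are assumed to be [x1, y1, x2, y2] in inclusive pixel coordinates.

```python
def hflip(image, boxes):
    """Flip an image (list of pixel rows) and its xyxy boxes left to right."""
    width = len(image[0])
    flipped_image = [row[::-1] for row in image]
    # Column x moves to width - 1 - x, so the old right edge becomes the new left edge
    flipped_boxes = [[width - 1 - x2, y1, width - 1 - x1, y2]
                     for x1, y1, x2, y2 in boxes]
    return flipped_image, flipped_boxes


# A 2x4 toy image with one box covering the two leftmost columns
image = [[1, 2, 3, 4],
         [5, 6, 7, 8]]
boxes = [[0, 0, 1, 1]]
new_image, new_boxes = hflip(image, boxes)
print(new_image)
print(new_boxes)
```

After the flip the box covers the two rightmost columns. Applying the function twice returns the original image and boxes, which is a quick sanity check for this kind of transform.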

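Summary

This chapter systematically introduced the network architectures of computer vision and how they developed, and used two tasks, image classification and object detection, to show how algorithms such as ResNet and YOLOv3 are implemented. We hope readers have not only learned how to build computer vision models but also gained a deeper understanding of how visual features are extracted.

Exercise

Modify the model parameters and compare the model's performance before and after adding multi-scale feature fusion.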