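License plate recognition is a mature technology, but I had never worked with it myself. I had long wanted to try it out, so in my spare time I found a simple example to get started. This post briefly records the process of deploying LPRNet license plate recognition on the RK3588; training follows the official LPRNet code.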

1. Export to ONNX

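Exporting to ONNX is easy: just add the ONNX-saving code to the inference script. Running the exported model with ONNX failed, however, because one operator is not supported during ONNX inference. The unsupported operation turned out to be nn.MaxPool3d(). After some research I found an equivalent formulation, and with nn.MaxPool3d() replaced by it the inference results are identical.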

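import torch
import torch.nn as nn


class maxpool_3d(nn.Module):
    """Replacement for nn.MaxPool3d built from two nn.MaxPool2d layers."""

    def __init__(self, kernel_size, stride):
        super(maxpool_3d, self).__init__()
        assert (len(kernel_size) == 3 and len(stride) == 3)
        # First pool: the spatial (H, W) part of the 3D kernel and stride.
        kernel_size2d1 = kernel_size[-2:]
        stride2d1 = stride[-2:]
        # Second pool: the depth part, applied along the channel axis after it
        # has been moved to the last position. H is left untouched (kernel 1,
        # stride 1). For kernel_size[0] == 1, as used in LPRNet, these values
        # are the same as (kernel_size[0], kernel_size[0]) and
        # (kernel_size[0], stride[0]).
        kernel_size2d2 = (1, kernel_size[0])
        stride2d2 = (1, stride[0])
        self.maxpool1 = nn.MaxPool2d(kernel_size=kernel_size2d1, stride=stride2d1)
        self.maxpool2 = nn.MaxPool2d(kernel_size=kernel_size2d2, stride=stride2d2)

    def forward(self, x):
        x = self.maxpool1(x)   # pool over H and W
        x = x.transpose(1, 3)  # (N, C, H, W) -> (N, W, H, C)
        x = self.maxpool2(x)   # pool (subsample) over C
        x = x.transpose(1, 3)  # back to (N, C, H, W)
        return x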

class small_basic_block(nn.Module):
    def __init__(self, ch_in, ch_out):
        super(small_basic_block, self).__init__()
        self.block = nn.Sequential(
            nn.Conv2d(ch_in, ch_out // 4, kernel_size=1),
            nn.ReLU(),
            nn.Conv2d(ch_out // 4, ch_out // 4, kernel_size=(3, 1), padding=(1, 0)),
            nn.ReLU(),
            nn.Conv2d(ch_out // 4, ch_out // 4, kernel_size=(1, 3), padding=(0, 1)),
            nn.ReLU(),
            nn.Conv2d(ch_out // 4, ch_out, kernel_size=1),
        )

    def forward(self, x):
        return self.block(x)

class LPRNet(nn.Module):
    def __init__(self, lpr_max_len, phase, class_num, dropout_rate):
        super(LPRNet, self).__init__()
        self.phase = phase
        self.lpr_max_len = lpr_max_len
        self.class_num = class_num
        self.backbone = nn.Sequential(
            nn.Conv2d(in_channels=3, out_channels=64, kernel_size=3, stride=1),  # 0
            nn.BatchNorm2d(num_features=64),
            nn.ReLU(),  # 2
            # nn.MaxPool3d(kernel_size=(1, 3, 3), stride=(1, 1, 1)),  # equivalent to the MaxPool2d below
            nn.MaxPool2d(kernel_size=(3, 3), stride=(1, 1)),
            small_basic_block(ch_in=64, ch_out=128),  # *** 4 ***
            nn.BatchNorm2d(num_features=128),
            nn.ReLU(),  # 6
            # nn.MaxPool3d(kernel_size=(1, 3, 3), stride=(2, 1, 2)),
            maxpool_3d(kernel_size=(1, 3, 3), stride=(2, 1, 2)),  # channels 128 -> 64
            small_basic_block(ch_in=64, ch_out=256),  # 8
            nn.BatchNorm2d(num_features=256),
            nn.ReLU(),  # 10
            small_basic_block(ch_in=256, ch_out=256),  # *** 11 ***
            nn.BatchNorm2d(num_features=256),  # 12
            nn.ReLU(),
            # nn.MaxPool3d(kernel_size=(1, 3, 3), stride=(4, 1, 2)),  # 14
            maxpool_3d(kernel_size=(1, 3, 3), stride=(4, 1, 2)),  # 14, channels 256 -> 64
            nn.Dropout(dropout_rate),
            nn.Conv2d(in_channels=64, out_channels=256, kernel_size=(1, 4), stride=1),  # 16
            nn.BatchNorm2d(num_features=256),
            nn.ReLU(),  # 18
            nn.Dropout(dropout_rate),
            nn.Conv2d(in_channels=256, out_channels=class_num, kernel_size=(13, 1), stride=1),  # 20
            nn.BatchNorm2d(num_features=class_num),
            nn.ReLU(),  # *** 22 ***
        )
        self.container = nn.Sequential(
            # 448 = 64 + 128 + 256 channels of the three intermediate feature maps
            nn.Conv2d(in_channels=448 + self.class_num, out_channels=self.class_num, kernel_size=(1, 1), stride=(1, 1)),
            # nn.BatchNorm2d(num_features=self.class_num),
            # nn.ReLU(),
            # nn.Conv2d(in_channels=self.class_num, out_channels=self.lpr_max_len+1, kernel_size=3, stride=2),
            # nn.ReLU(),
        )

    def forward(self, x):
        # Run the backbone and keep the feature maps of selected layers.
        keep_features = list()
        for i, layer in enumerate(self.backbone.children()):
            x = layer(x)
            if i in [2, 6, 13, 22]:  # [2, 4, 8, 11, 22]
                keep_features.append(x)

        # Bring the kept feature maps to the same spatial size and normalize them.
        global_context = list()
        for i, f in enumerate(keep_features):
            if i in [0, 1]:
                f = nn.AvgPool2d(kernel_size=5, stride=5)(f)
            if i in [2]:
                f = nn.AvgPool2d(kernel_size=(4, 10), stride=(4, 2))(f)
            f_pow = torch.pow(f, 2)
            f_mean = torch.mean(f_pow)
            f = torch.div(f, f_mean)  # f / mean(f^2)
            global_context.append(f)

        # Concatenate along the channel axis and average over the height axis.
        x = torch.cat(global_context, 1)
        x = self.container(x)
        logits = torch.mean(x, dim=2)  # (N, class_num, time_steps)
        return logits

def build_lprnet(lpr_max_len=8, phase=False, class_num=66, dropout_rate=0.5):
    Net = LPRNet(lpr_max_len, phase, class_num, dropout_rate)
    if phase == "train":
        return Net.train()
    else:
        return Net.eval()
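
The substitution above relies on the fact that a 3D max pool whose kernel has depth 1 is just a 2D max pool applied to every slice, followed by keeping every `stride[0]`-th slice along the depth axis (which, for LPRNet's 4-D tensors, is the channel axis). The following is a minimal pure-Python sketch of that identity on nested lists. It is not the author's code; the helper names `max_pool_2d`, `max_pool_3d` and `max_pool_3d_via_2d` are made up for illustration, and padding and ceil mode are assumed to be off, as in the model above.

```python
import random


def max_pool_2d(plane, kernel, stride):
    """Max pooling without padding over a [height][width] nested list."""
    kh, kw = kernel
    sh, sw = stride
    rows, cols = len(plane), len(plane[0])
    return [
        [max(plane[r + i][c + j] for i in range(kh) for j in range(kw))
         for c in range(0, cols - kw + 1, sw)]
        for r in range(0, rows - kh + 1, sh)
    ]


def max_pool_3d(volume, kernel, stride):
    """Reference max pooling over a [depth][height][width] nested list."""
    kd, kh, kw = kernel
    sd, sh, sw = stride
    depth, rows, cols = len(volume), len(volume[0]), len(volume[0][0])
    return [
        [[max(volume[d + a][r + i][c + j]
              for a in range(kd) for i in range(kh) for j in range(kw))
          for c in range(0, cols - kw + 1, sw)]
         for r in range(0, rows - kh + 1, sh)]
        for d in range(0, depth - kd + 1, sd)
    ]


def max_pool_3d_via_2d(volume, kernel, stride):
    """Same result for kernel depth 1: pool each slice in 2D, then subsample slices."""
    kd, kh, kw = kernel
    sd, sh, sw = stride
    assert kd == 1, "this shortcut only covers a depth-1 kernel"
    pooled = [max_pool_2d(plane, (kh, kw), (sh, sw)) for plane in volume]
    return pooled[::sd]


random.seed(0)
# 8 "channels" of 6x10 random values stand in for a feature map.
volume = [[[random.random() for _ in range(10)] for _ in range(6)] for _ in range(8)]

# The three kernel/stride combinations used by LPRNet's MaxPool3d layers.
for stride in [(1, 1, 1), (2, 1, 2), (4, 1, 2)]:
    reference = max_pool_3d(volume, (1, 3, 3), stride)
    shortcut = max_pool_3d_via_2d(volume, (1, 3, 3), stride)
    print(stride, reference == shortcut, len(shortcut))
```

With stride `(2, 1, 2)` the 8 input slices shrink to 4, which mirrors how the model's channel count drops from 128 to 64 after the first `maxpool_3d` layer.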

Code to save the ONNX model:

# `lprnet` is the model instance already built, loaded with trained weights
# and moved to the GPU by the inference script.
print("=========== onnx =========== ")
dummy_input = torch.randn(1, 3, 24, 94).cuda()  # N, C, H, W: one 94x24 plate image
input_names = ['image']
output_names = ['output']
torch.onnx.export(lprnet, dummy_input, "./weights/LPRNet_model.onnx", verbose=False, input_names=input_names, output_names=output_names, opset_version=12)
print("======================== convert onnx Finished! .... ")

2. Convert ONNX to RKNN

Code to convert the ONNX model to RKNN:

# -*- coding: utf-8 -*-
import numpy as np
import cv2
from rknn.api import RKNN

# ONNX model from step 1 (the export snippet saved it as
# ./weights/LPRNet_model.onnx, so copy or rename it accordingly).
ONNX_MODEL = './LPRNet.onnx'
RKNN_MODEL = './LPRNet.rknn'
DATASET = './images_list.txt'  # list of calibration images used for quantization
QUANTIZE_ON = True

# Original Chinese label table, disabled because the Chinese characters were
# garbled when drawn onto the result image.
'''
CHARS = ['京', '沪', '津', '渝', '冀', '晋', '蒙', '辽', '吉', '黑',
         '苏', '浙', '皖', '闽', '赣', '鲁', '豫', '鄂', '湘', '粤',
         '桂', '琼', '川', '贵', '云', '藏', '陕', '甘', '青', '宁',
         '新',
         '0', '1', '2', '3', '4', '5', '6', '7', '8', '9',
         'A', 'B', 'C', 'D', 'E', 'F', 'G', 'H', 'J', 'K',
         'L', 'M', 'N', 'P', 'Q', 'R', 'S', 'T', 'U', 'V',
         'W', 'X', 'Y', 'Z', 'I', 'O', '-'
         ]
'''

# Same table with pinyin abbreviations for the province characters.
# Note that 'HB' appears twice (for 冀 and for 鄂), so it is ambiguous.
# The last entry '-' is the blank label.
CHARS = ['BJ', 'SH', 'TJ', 'CQ', 'HB', 'SN', 'NM', 'LN', 'JN', 'HL',
         'JS', 'ZJ', 'AH', 'FJ', 'JX', 'SD', 'HA', 'HB', 'HN', 'GD',
         'GL', 'HI', 'SC', 'GZ', 'YN', 'XZ', 'SX', 'GS', 'QH', 'NX',
         'XJ',
         '0', '1', '2', '3', '4', '5', '6', '7', '8', '9',
         'A', 'B', 'C', 'D', 'E', 'F', 'G', 'H', 'J', 'K',
         'L', 'M', 'N', 'P', 'Q', 'R', 'S', 'T', 'U', 'V',
         'W', 'X', 'Y', 'Z', 'I', 'O', '-'
         ]

def export_rknn_inference(img):
    # Create RKNN object
    rknn = RKNN(verbose=True)

    # pre-process config: input is normalized as (pixel - 0) / 255
    print('--> Config model')
    # quantized_algorithm can also be set to 'mmse'
    rknn.config(mean_values=[[0, 0, 0]], std_values=[[255, 255, 255]], quantized_algorithm='normal', quantized_method='channel', target_platform='rk3588')
    print('done')

    # Load ONNX model
    print('--> Loading model')
    ret = rknn.load_onnx(model=ONNX_MODEL, outputs=['output'])
    if ret != 0:
        print('Load model failed!')
        exit(ret)
    print('done')

    # Build model
    print('--> Building model')
    ret = rknn.build(do_quantization=QUANTIZE_ON, dataset=DATASET, rknn_batch_size=1)
    if ret != 0:
        print('Build model failed!')
        exit(ret)
    print('done')

    # Export RKNN model
    print('--> Export rknn model')
    ret = rknn.export_rknn(RKNN_MODEL)
    if ret != 0:
        print('Export rknn model failed!')
        exit(ret)
    print('done')

    # Init runtime environment (no target given: run on the PC simulator)
    print('--> Init runtime environment')
    ret = rknn.init_runtime()
    # ret = rknn.init_runtime(target='rk3566')
    if ret != 0:
        print('Init runtime environment failed!')
        exit(ret)
    print('done')

    # Inference
    print('--> Running model')
    outputs = rknn.inference(inputs=[img])
    rknn.release()
    print('done')

    return outputs

if __name__ == '__main__':
    print('This is main ...')
    input_w = 94
    input_h = 24

    image_path = './test.jpg'
    origin_image = cv2.imread(image_path)
    if origin_image is None:
        raise FileNotFoundError(image_path)
    image_height, image_width, images_channels = origin_image.shape

    # Resize to the network input size and add a batch dimension: (1, 24, 94, 3)
    img = cv2.resize(origin_image, (input_w, input_h), interpolation=cv2.INTER_LINEAR)
    img = np.expand_dims(img, 0)
    print(img.shape)

    # First output, first batch item: scores of shape (class_num, time_steps)
    preb = export_rknn_inference(img)[0][0]

    # For every time step, pick the class with the highest score
    preb_label = []
    result = []
    for j in range(preb.shape[1]):
        preb_label.append(np.argmax(preb[:, j], axis=0))
    print(preb_label)

    # Greedy decoding: drop repeated labels and the blank label (last entry of CHARS)
    pre_c = preb_label[0]
    if pre_c != len(CHARS) - 1:
        result.append(pre_c)
    for c in preb_label:
        if (pre_c == c) or (c == len(CHARS) - 1):
            if c == len(CHARS) - 1:
                pre_c = c
            continue
        result.append(c)
        pre_c = c

    ptext = ''
    for v in result:
        ptext += CHARS[v]
    print(ptext)

    # Draw the recognized text on a white canvas and stack it below the input image
    zero_image = np.ones((image_height, image_width, images_channels), dtype=np.uint8) * 255
    cv2.putText(zero_image, ptext, (0, int(image_height / 2)), cv2.FONT_HERSHEY_SIMPLEX, 0.5, (0, 0, 255), 2, cv2.LINE_AA)
    combined_image = np.vstack((origin_image, zero_image))
    cv2.imwrite('./test_result.jpg', combined_image)
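
The post-processing at the end of the script is a greedy CTC-style decode: take the best class at every time step, collapse consecutive repeats, and drop the blank label. Below is a small self-contained sketch of the same logic in plain Python, so it can be tried without a model or NumPy. The function names and the tiny four-symbol alphabet are made up for illustration; in the script the blank is the last entry of `CHARS`.

```python
def argmax_per_step(scores):
    """scores[k][t] is the score of class k at time step t; return the best class per step."""
    num_classes = len(scores)
    num_steps = len(scores[0])
    return [max(range(num_classes), key=lambda k: scores[k][t]) for t in range(num_steps)]


def greedy_ctc_decode(frame_labels, blank):
    """Collapse consecutive repeats, then drop blanks."""
    decoded = []
    previous = blank
    for label in frame_labels:
        if label != blank and label != previous:
            decoded.append(label)
        previous = label
    return decoded


# Toy alphabet: the last entry plays the role of the blank label.
chars = ['A', 'B', 'C', '-']
blank = len(chars) - 1

# Per-step labels as they might come out of argmax: A A - A B B - - C
frame_labels = [0, 0, 3, 0, 1, 1, 3, 3, 2]
text = ''.join(chars[i] for i in greedy_ctc_decode(frame_labels, blank))
print(text)  # AABC
```

A blank between two identical labels is what lets a plate contain the same character twice in a row: `A A - A` decodes to `AA`, whereas `A A A` decodes to a single `A`.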

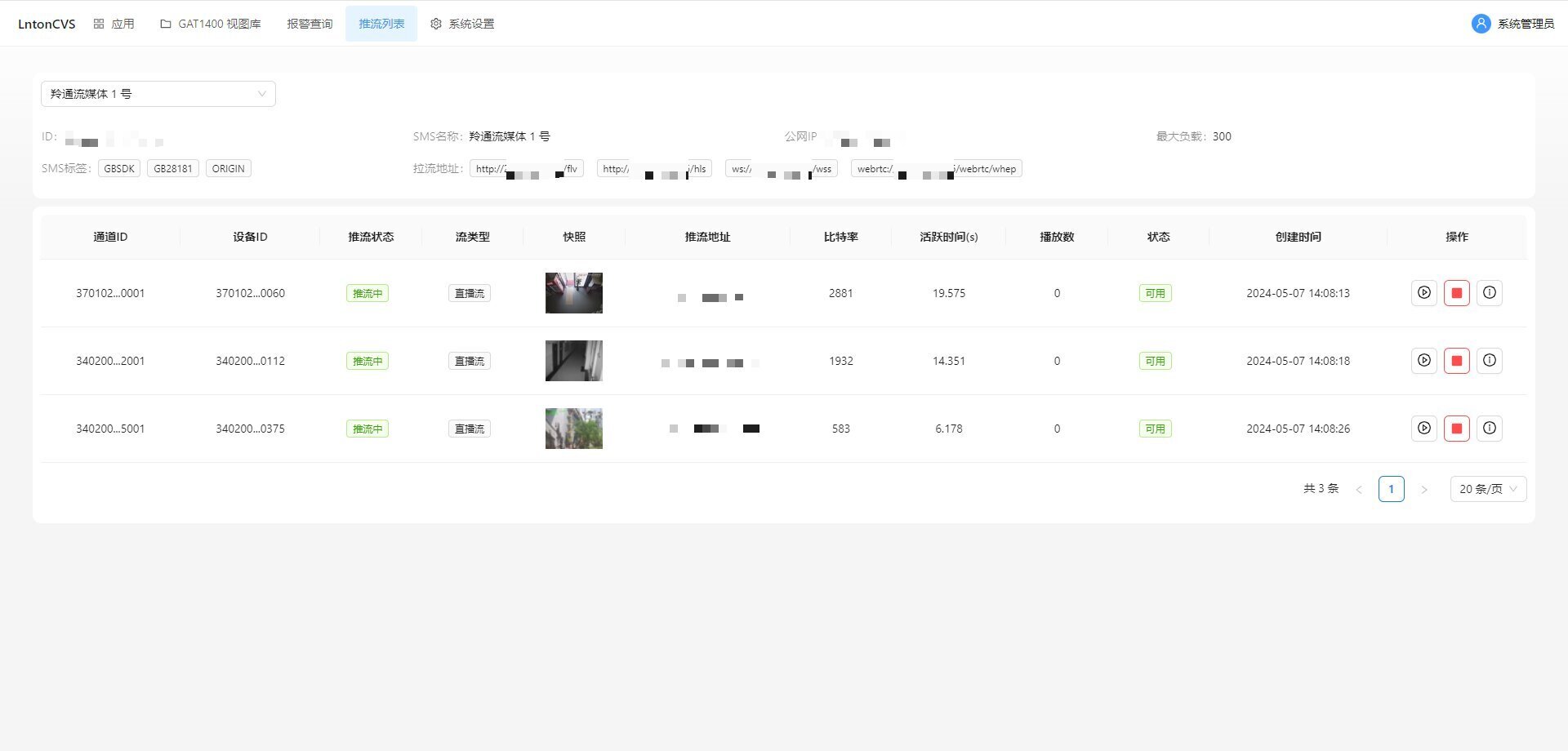

Test result after conversion to RKNN

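Note: because the Chinese characters were garbled when displayed, the sample code sidesteps the problem by replacing them with pinyin abbreviations.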
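
One thing to watch when swapping Chinese characters for abbreviations is that two provinces can end up with the same abbreviation. The sketch below is an illustration rather than part of the original code: it takes the two province tables from the script and reports any abbreviation that is used more than once. The helper name `find_collisions` is made up.

```python
from collections import defaultdict

# Province part of the two CHARS tables from the conversion script.
PROVINCES_ZH = ['京', '沪', '津', '渝', '冀', '晋', '蒙', '辽', '吉', '黑',
                '苏', '浙', '皖', '闽', '赣', '鲁', '豫', '鄂', '湘', '粤',
                '桂', '琼', '川', '贵', '云', '藏', '陕', '甘', '青', '宁',
                '新']
PROVINCES_ASCII = ['BJ', 'SH', 'TJ', 'CQ', 'HB', 'SN', 'NM', 'LN', 'JN', 'HL',
                   'JS', 'ZJ', 'AH', 'FJ', 'JX', 'SD', 'HA', 'HB', 'HN', 'GD',
                   'GL', 'HI', 'SC', 'GZ', 'YN', 'XZ', 'SX', 'GS', 'QH', 'NX',
                   'XJ']


def find_collisions(labels):
    """Return {label: [indices]} for every label that occurs more than once."""
    positions = defaultdict(list)
    for index, label in enumerate(labels):
        positions[label].append(index)
    return {label: idxs for label, idxs in positions.items() if len(idxs) > 1}


assert len(PROVINCES_ZH) == len(PROVINCES_ASCII)
for label, idxs in find_collisions(PROVINCES_ASCII).items():
    print(label, [PROVINCES_ZH[i] for i in idxs])  # HB ['冀', '鄂']
```

With the table as written, `HB` stands for both 冀 and 鄂, so a plate drawn with the abbreviations cannot distinguish the two. Printing the Chinese string to the console alongside the drawn abbreviation is one way to keep the unambiguous result.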

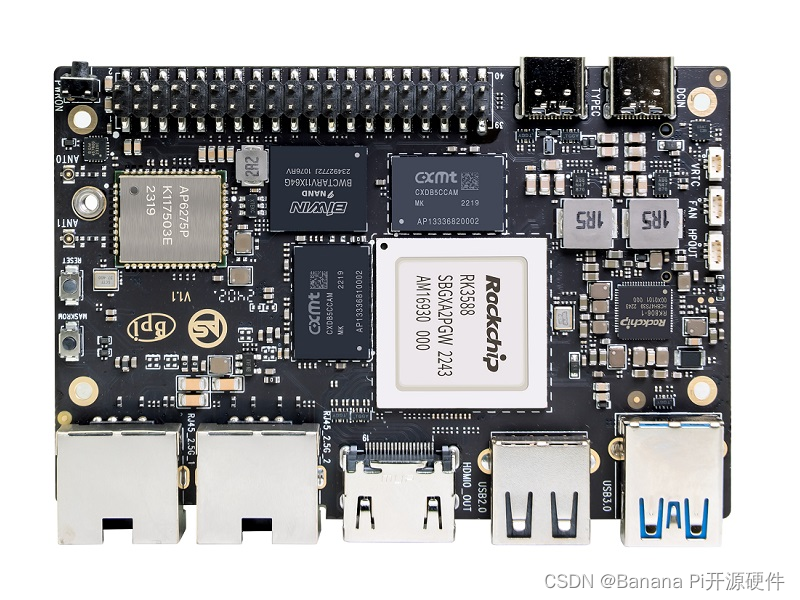
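
Because `QUANTIZE_ON` is enabled, the conversion script also needs the calibration list named by `DATASET` (`./images_list.txt`). As far as I know, RKNN-Toolkit2 expects a plain text file with one image path per line. The sketch below generates such a file from a folder of plate images. The folder name `./calib_images`, the helper name `write_dataset_list` and the limit of 200 images are assumptions for illustration only.

```python
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp"}


def write_dataset_list(image_dir, list_path, limit=None):
    """Write one image path per line to list_path and return the number of entries."""
    paths = sorted(
        p for p in Path(image_dir).iterdir()
        if p.is_file() and p.suffix.lower() in IMAGE_SUFFIXES
    )
    if limit is not None:
        paths = paths[:limit]
    lines = "".join(f"{p.as_posix()}\n" for p in paths)
    Path(list_path).write_text(lines, encoding="utf-8")
    return len(paths)


if __name__ == "__main__":
    calib_dir = Path("./calib_images")  # hypothetical folder of cropped plate images
    if calib_dir.is_dir():
        count = write_dataset_list(calib_dir, "./images_list.txt", limit=200)
        print(f"wrote {count} paths")
```

The calibration images should resemble what the model sees at inference time, that is, cropped plates rather than full camera frames.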

3. Deploy on RK3588

[Complete code] for running on the RK3588.

Inference result and latency on the board.

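For such a small model, the inference latency on the RK3588 is still fairly long, which almost certainly means that some operations fall back to the CPU during inference. If performance matters, the operations that fall back to the CPU can be avoided or replaced. The log printed when converting the model to RKNN shows which operations fall back to the CPU.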
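
When comparing variants of the model (for example before and after replacing an operation that falls back to the CPU), it helps to measure latency the same way each time. The following is a generic timing sketch using only the standard library. `measure_latency` and `fake_infer` are made-up names, and `fake_infer` is a placeholder for whatever call runs one inference on the board.

```python
import time


def measure_latency(fn, warmup=3, runs=20):
    """Call fn repeatedly and return mean/min/max latency in milliseconds."""
    for _ in range(warmup):  # warm-up calls are not timed
        fn()
    samples_ms = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples_ms.append((time.perf_counter() - start) * 1000.0)
    return {
        "mean_ms": sum(samples_ms) / len(samples_ms),
        "min_ms": min(samples_ms),
        "max_ms": max(samples_ms),
    }


def fake_infer():
    # Placeholder workload; replace with the real inference call.
    return sum(i * i for i in range(1000))


stats = measure_latency(fake_infer)
print({name: round(value, 3) for name, value in stats.items()})
```

Timing pre-processing, inference and decoding separately with the same helper shows whether the model itself or the surrounding code dominates the total.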