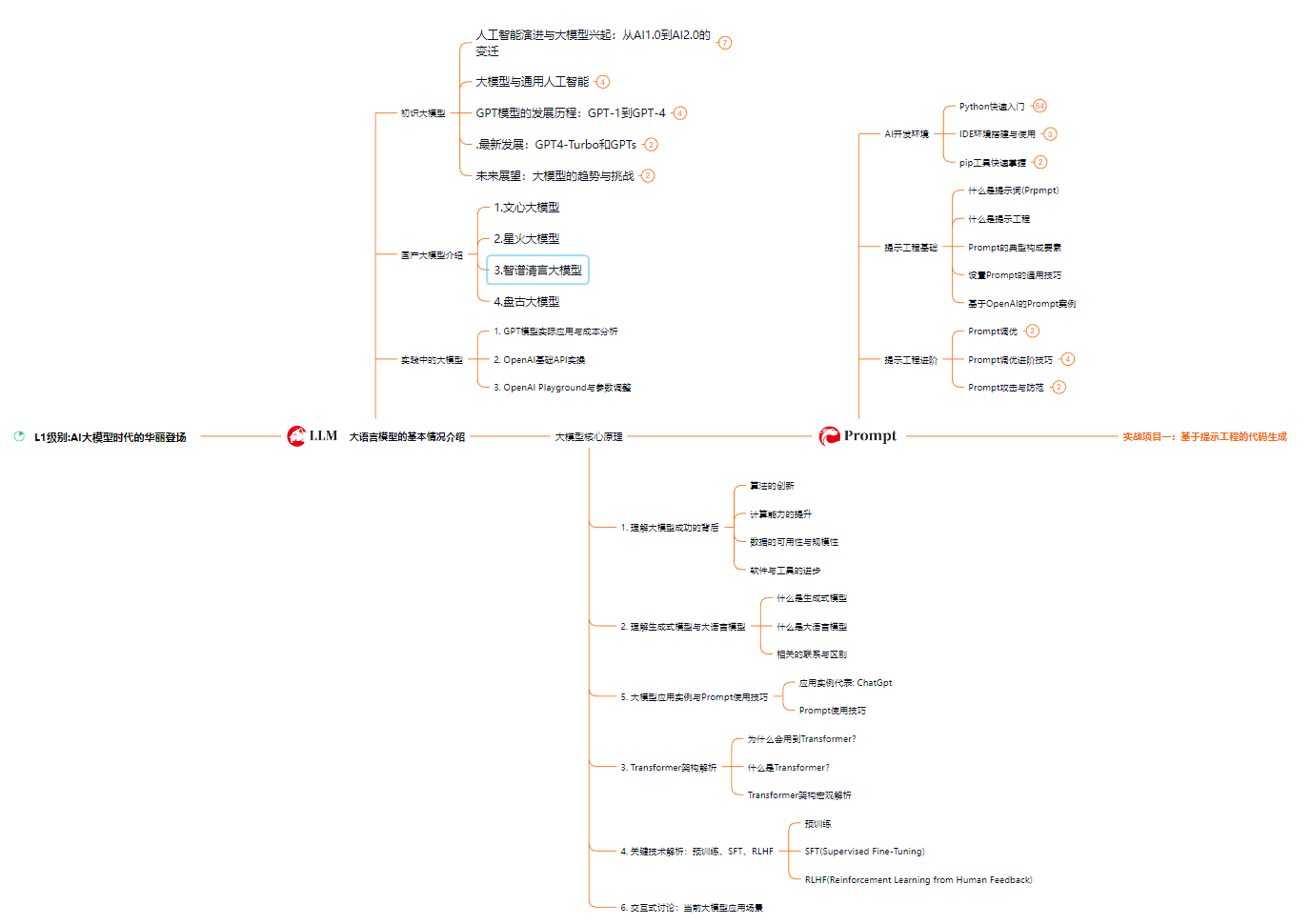

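In recent years, Transformer models have taken center stage in natural language processing (NLP). From Google's BERT to OpenAI's GPT series, the Transformer architecture has delivered strong performance. So today I'll walk you through implementing a simple Transformer model in PyTorch, step by step, so you come away with a deeper understanding of this popular technique.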

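Understanding the basic principles of the Transformer

First, we need to understand how the Transformer works. The model was proposed by Vaswani et al. in 2017, mainly as a replacement for traditional recurrent neural networks (RNNs) and long short-term memory networks (LSTMs). Its core idea is to process the input sequence with self-attention: every position computes a weighted combination of all other positions, which makes it easier to capture long-range dependencies.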
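To make the self-attention idea concrete, here is a minimal sketch of single-head scaled dot-product attention, softmax(QK^T / sqrt(d_k))V. The function name and the tensor sizes are my own illustrative choices, not part of the model we build later.

```python
import math
import torch

def scaled_dot_product_attention(q, k, v):
    # q, k, v: (batch, seq_len, d_k)
    d_k = q.shape[-1]
    # Similarity of every query position with every key position
    scores = q @ k.transpose(-2, -1) / math.sqrt(d_k)  # (batch, seq_len, seq_len)
    # Normalize over the key dimension so each row is a probability distribution
    weights = torch.softmax(scores, dim=-1)
    return weights @ v, weights

torch.manual_seed(0)
x = torch.randn(2, 5, 16)  # self-attention: q, k and v all come from the same input
out, weights = scaled_dot_product_attention(x, x, x)
print(out.shape)            # torch.Size([2, 5, 16])
print(weights.sum(dim=-1))  # each row of weights sums to 1
```

Because every position attends to every other position in a single step, the distance between two tokens does not matter, which is where the long-range advantage comes from.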

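Core components of the Transformer

The Transformer consists of two main parts: the encoder and the decoder. Each of them is a stack of identical layers, and each layer mainly contains the following modules:

- Multi-head self-attention: captures the dependencies between positions in the input sequence.

- Feed-forward network (FFN): applies a non-linear transformation to each position independently.

- Residual connection and layer normalization: help gradients propagate and mitigate the vanishing-gradient problem.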
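As a quick illustration of the last item, the pattern wrapped around each sublayer can be sketched as `LayerNorm(x + Sublayer(x))`. In this sketch a plain `nn.Linear` stands in for the real sublayer (attention or the FFN), and the sizes are arbitrary assumptions.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
embed_size = 8
norm = nn.LayerNorm(embed_size)
sublayer = nn.Linear(embed_size, embed_size)  # stand-in for attention or the FFN

x = torch.randn(2, 4, embed_size)  # (batch, seq_len, embed_size)
y = norm(x + sublayer(x))          # add the residual, then normalize each position

print(y.shape)         # torch.Size([2, 4, 8])
print(y.mean(dim=-1))  # close to 0 for every position after layer normalization
```

The residual path gives gradients a direct route around the sublayer, and the normalization keeps the activations at a stable scale from layer to layer.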

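Implementing the Transformer in PyTorch

Now let's implement a simple Transformer model in PyTorch, step by step. First, make sure PyTorch is installed. If it isn't, you can install it with the following command:

    pip install torch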
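If you want to confirm that the installation worked, a short check like the one below should be enough. It only prints the installed version and whether a CUDA device is visible; the rest of this walkthrough runs fine on CPU.

```python
import torch

# Print the installed PyTorch version
print(torch.__version__)
# True only if a CUDA-capable GPU and a CUDA build of PyTorch are available
print(torch.cuda.is_available())
```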

1. Import the required libraries

    import torch
    import torch.nn as nn
    import torch.optim as optim
    import numpy as np

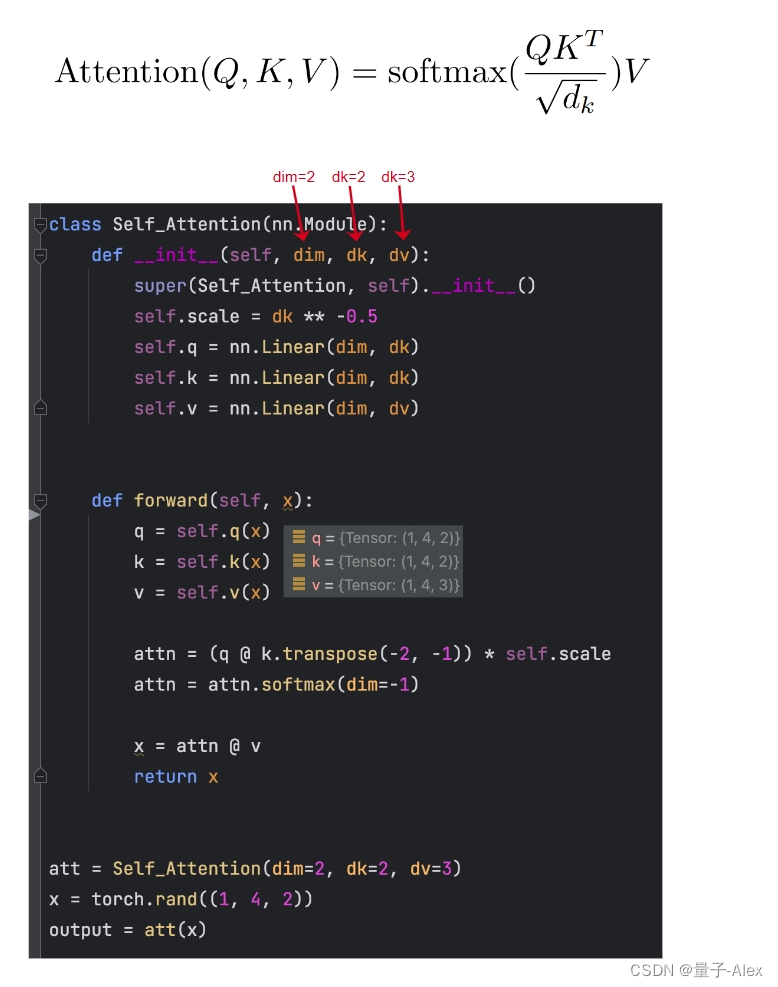

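2. Implement multi-head self-attention

Multi-head self-attention is one of the core components of the Transformer. It lets the model attend in several different subspaces at once, so it can capture more kinds of relationships.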

    class MultiHeadSelfAttention(nn.Module):
        def __init__(self, embed_size, heads):
            super(MultiHeadSelfAttention, self).__init__()
            self.embed_size = embed_size
            self.heads = heads
            self.head_dim = embed_size // heads

            assert (self.head_dim * heads == embed_size), "Embedding size needs to be divisible by heads"

            # Project the full embedding; the result is split into heads in forward()
            self.values = nn.Linear(embed_size, embed_size, bias=False)
            self.keys = nn.Linear(embed_size, embed_size, bias=False)
            self.queries = nn.Linear(embed_size, embed_size, bias=False)
            self.fc_out = nn.Linear(embed_size, embed_size)

        def forward(self, values, keys, query, mask):
            N = query.shape[0]
            value_len, key_len, query_len = values.shape[1], keys.shape[1], query.shape[1]

            # Apply the learned projections, then split the last dimension into heads
            values = self.values(values).reshape(N, value_len, self.heads, self.head_dim)
            keys = self.keys(keys).reshape(N, key_len, self.heads, self.head_dim)
            queries = self.queries(query).reshape(N, query_len, self.heads, self.head_dim)

            # energy: (N, heads, query_len, key_len)
            energy = torch.einsum("nqhd,nkhd->nhqk", [queries, keys])

            if mask is not None:
                # Positions where mask == 0 get a very negative score, so softmax gives them ~0 weight
                energy = energy.masked_fill(mask == 0, float("-1e20"))

            # Scale by sqrt(head_dim), the per-head key dimension, and normalize over the keys
            attention = torch.softmax(energy / (self.head_dim ** 0.5), dim=3)

            # Weighted sum of the values, then concatenate the heads back to embed_size
            out = torch.einsum("nhql,nlhd->nqhd", [attention, values]).reshape(N, query_len, self.embed_size)
            out = self.fc_out(out)
            return out
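The `einsum` strings in `forward` can be hard to read at first. The sketch below, with arbitrary sizes chosen for illustration, checks that `"nqhd,nkhd->nhqk"` is just a batched dot product of every query with every key, computed separately for each head.

```python
import torch

torch.manual_seed(0)
N, seq_len, embed_size, heads = 2, 5, 16, 4
head_dim = embed_size // heads

# Split the embedding dimension into (heads, head_dim), as forward() does
q = torch.randn(N, seq_len, embed_size).reshape(N, seq_len, heads, head_dim)
k = torch.randn(N, seq_len, embed_size).reshape(N, seq_len, heads, head_dim)

# n = batch, q/k = query/key position, h = head, d = head_dim
energy = torch.einsum("nqhd,nkhd->nhqk", q, k)

# The same computation with explicit permutes and a matrix product
reference = q.permute(0, 2, 1, 3) @ k.permute(0, 2, 3, 1)

print(energy.shape)  # torch.Size([2, 4, 5, 5])
print(torch.allclose(energy, reference, atol=1e-4))
```

Each head therefore gets its own `(query_len, key_len)` score matrix, which is what the mask and the softmax operate on.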

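3. Implement the feed-forward network

The feed-forward network is another important component of the Transformer. It applies a non-linear transformation to the representation at each position.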

    class FeedForward(nn.Module):
        def __init__(self, embed_size, ff_hidden):
            super(FeedForward, self).__init__()
            self.fc1 = nn.Linear(embed_size, ff_hidden)
            self.fc2 = nn.Linear(ff_hidden, embed_size)

        def forward(self, x):
            # Expand to ff_hidden, apply ReLU, project back to embed_size
            return self.fc2(torch.relu(self.fc1(x)))
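"Each position" is meant literally: `nn.Linear` acts on the last dimension only, so the same weights are applied to every token independently. The following sketch, which uses an equivalent `nn.Sequential` and arbitrary sizes as assumptions, checks this by comparing a whole-sequence call against a position-by-position loop.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
embed_size, ff_hidden = 8, 32
ffn = nn.Sequential(
    nn.Linear(embed_size, ff_hidden),
    nn.ReLU(),
    nn.Linear(ff_hidden, embed_size),
)

x = torch.randn(2, 5, embed_size)  # (batch, seq_len, embed_size)

# Apply the network to the whole sequence at once
full = ffn(x)
# Apply it to each position separately and stack the results along seq_len
per_position = torch.stack([ffn(x[:, t]) for t in range(x.shape[1])], dim=1)

print(torch.allclose(full, per_position, atol=1e-5))
```

Mixing information between positions is left entirely to the attention sublayer.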

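4. Implement the encoder layer

An encoder layer combines multi-head self-attention with the feed-forward network, and wraps each of them in a residual connection followed by layer normalization.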

    class EncoderLayer(nn.Module):
        def __init__(self, embed_size, heads, ff_hidden, dropout):
            super(EncoderLayer, self).__init__()
            self.norm1 = nn.LayerNorm(embed_size)
            self.norm2 = nn.LayerNorm(embed_size)
            self.attention = MultiHeadSelfAttention(embed_size, heads)
            self.ff = FeedForward(embed_size, ff_hidden)
            self.dropout = nn.Dropout(dropout)

        def forward(self, x, mask):
            # Self-attention: values, keys and queries all come from x
            attention = self.attention(x, x, x, mask)
            # Residual connection, layer normalization, then dropout
            x = self.dropout(self.norm1(attention + x))
            ff_out = self.ff(x)
            x = self.dropout(self.norm2(ff_out + x))
            return x
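The `mask` argument deserves a short illustration. The attention scores have shape `(N, heads, query_len, key_len)`, so a padding mask needs a shape that broadcasts against that. The sketch below assumes a hypothetical padding index of 0 and a `(N, 1, 1, src_len)` mask; this is one common way to build it, not necessarily the one used later in this article.

```python
import torch

pad_idx = 0  # hypothetical padding token id, chosen for this example
src = torch.tensor([[5, 7, 9, 0, 0],
                    [3, 4, 0, 0, 0]])

# True for real tokens, False for padding; shape (N, 1, 1, src_len)
mask = (src != pad_idx).unsqueeze(1).unsqueeze(2)

# Dummy scores of shape (N, heads, query_len, key_len)
energy = torch.zeros(2, 4, 5, 5)
masked = energy.masked_fill(mask == 0, float("-1e20"))
attention = torch.softmax(masked, dim=3)

print(mask.shape)          # torch.Size([2, 1, 1, 5])
print(attention[0, 0, 0])  # about 1/3 on each real token, 0 on padding
```

The two singleton dimensions let the same mask apply to every head and every query position.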

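5. Implement the encoder

The encoder embeds the input tokens and their positions, then passes the result through a stack of encoder layers.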

    class Encoder(nn.Module):
        def __init__(self, src_vocab_size, embed_size, num_layers, heads, ff_hidden, dropout, max_length):
            super(Encoder, self).__init__()
            self.embed_size = embed_size
            self.word_embedding = nn.Embedding(src_vocab_size, embed_size)
            # Learned position embedding: one vector per position up to max_length
            self.position_embedding = nn.Embedding(max_length, embed_size)
            self.layers = nn.ModuleList(
                [EncoderLayer(embed_size, heads, ff_hidden, dropout) for _ in range(num_layers)]
            )
            self.dropout = nn.Dropout(dropout)

        def forward(self, x, mask):
            N, seq_length = x.shape
            # Position ids 0..seq_length-1, repeated for every sequence in the batch
            positions = torch.arange(seq_length, device=x.device).expand(N, seq_length)
            out = self.dropout(self.word_embedding(x) + self.position_embedding(positions))
            for layer in self.layers:
                out = layer(out, mask)
            return out
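Self-attention has no built-in notion of order, which is why the encoder adds a position embedding to the word embedding. Here is a small standalone sketch of that step; the vocabulary size, `max_length` and batch shape are arbitrary assumptions.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
vocab_size, max_length, embed_size = 50, 10, 8
word_embedding = nn.Embedding(vocab_size, embed_size)
position_embedding = nn.Embedding(max_length, embed_size)

x = torch.randint(0, vocab_size, (2, 6))  # (batch, seq_len) of token ids
N, seq_length = x.shape

# Position ids 0..seq_length-1, shared by every sequence in the batch
positions = torch.arange(seq_length, device=x.device).expand(N, seq_length)

out = word_embedding(x) + position_embedding(positions)
print(positions[0])  # tensor([0, 1, 2, 3, 4, 5])
print(out.shape)     # torch.Size([2, 6, 8])
```

Note that with a learned position embedding the sequence length must not exceed `max_length`, otherwise the lookup raises an index error.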

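6. Implement the decoder layer and the decoder

A decoder layer is structured like an encoder layer, but it has one additional attention mechanism that attends to the encoder's output.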

    class DecoderLayer(nn.Module):
        def __init__(self, embed_size, heads, ff_hidden, dropout):
            super(DecoderLayer, self).__init__()
            self.norm1 = nn.LayerNorm(embed_size)
            self.norm2 = nn.LayerNorm(embed_size)
            self.norm3 = nn.LayerNorm(embed_size)
            self.attention = MultiHeadSelfAttention(embed_size, heads)
            # Attention over the encoder output (queries from the decoder, keys/values from the encoder)
            self.cross_attention = MultiHeadSelfAttention(embed_size, heads)
            self.ff = FeedForward(embed_size, ff_hidden)
            self.dropout = nn.Dropout(dropout)

        def forward(self, x, value, key, src_mask, trg_mask):
            # Masked self-attention over the target sequence
            attention = self.attention(x, x, x, trg_mask)
            query = self.dropout(self.norm1(attention + x))
            # Attend to the encoder output
            attention = self.cross_attention(value, key, query, src_mask)
            out = self.dropout(self.norm2(attention + query))
            ff_out = self.ff(out)
            out = self.dropout(self.norm3(ff_out + out))
            return out

    class Decoder(nn.Module):
        def __init__(self, trg_vocab_size, embed_size, num_layers, heads, ff_hidden, dropout, max_length):
            super(Decoder, self).__init__()
            self.embed_size = embed_size
            self.word_embedding = nn.Embedding(trg_vocab_size, embed_size)
            self.position_embedding = nn.Embedding(max_length, embed_size)
            self.layers = nn.ModuleList(
                [DecoderLayer(embed_size, heads, ff_hidden, dropout) for _ in range(num_layers)]
            )
            self.fc_out = nn.Linear(embed_size, trg_vocab_size)
            self.dropout = nn.Dropout(dropout)

        def forward(self, x, enc_out, src_mask, trg_mask):
            N, seq_length = x.shape
            positions = torch.arange(seq_length, device=x.device).expand(N, seq_length)
            x = self.dropout(self.word_embedding(x) + self.position_embedding(positions))
            for layer in self.layers:
                x = layer(x, enc_out, enc_out, src_mask, trg_mask)
            out = self.fc_out(x)
            return out
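The decoder's self-attention takes a `trg_mask` so that a position cannot look at later positions in the target sequence. A common way to build such a causal mask is a lower-triangular matrix. The sketch below is an assumption about how it could be constructed and uses dummy scores with a single head dimension for brevity.

```python
import torch

N, trg_len = 2, 4

# Lower-triangular matrix: position i may attend to positions 0..i only
trg_mask = torch.tril(torch.ones(trg_len, trg_len)).expand(N, 1, trg_len, trg_len)

# Dummy scores of shape (N, 1, query_len, key_len)
energy = torch.zeros(N, 1, trg_len, trg_len)
attention = torch.softmax(energy.masked_fill(trg_mask == 0, float("-1e20")), dim=3)

print(trg_mask[0, 0])   # ones on and below the diagonal, zeros above
print(attention[0, 0])  # row i spreads its weight evenly over positions 0..i
```

With this mask, the prediction for each target position depends only on the tokens before it, which is what makes training on whole sequences at once consistent with step-by-step generation.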

7. Implement the complete Transformer model

Finally, we combine the encoder and the decoder into a complete Transformer model.

    class Transformer(nn.Module):
        def __init__(self, src_vocab_size, trg_vocab_size, src_pad_idx, trg_pad_idx, embed_size=512, num_layers=6, forward_expansion=4, heads=8, dropout=0, max_length=100):
            super(Transformer, self).__init__()
            # forward_expansion is a multiplier: the feed-forward hidden width is forward_expansion * embed_size
            ff_hidden = forward_expansion * embed_size
            self.encoder = Encoder(src_vocab_size, embed_size, num_layers, heads, ff_hidden, dropout, max_length)
            self.decoder = Decoder(trg_vocab_size, embed_size, num_layers, heads, ff_hidden, dropout, max_length)
            self.src_pad_idx = src_pad_idx
            self.trg_pad_idx = trg_pad_idx

        def make_src_mask(self, src):
            src_mask = (src != self