Python web scraping

Setting up the Python environment

Installing and configuring Anaconda

Downloading Anaconda

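Official site: Download Anaconda Distribution | Anaconda

Tsinghua mirror: Index of /anaconda/archive/ | 清华大学开源软件镜像站 | Tsinghua Open Source Mirror. Its servers are in mainland China, so downloads there are faster, but the newest release may not have been mirrored yet.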

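Anaconda installation steps

1. Double-click the Anaconda3.x.exe file and click Next.

2. Click I Agree.

Tip: if free space on the C: drive is limited, avoid installing there. The conda directory keeps growing as packages and environments are added.

3. Keep the default options.

4. Wait for the installation to finish.

5. When installation finishes, the installer recommends PyCharm. This can be ignored.

6. If the environment variables were not added during installation:

Press Windows+R, enter SYSDM.CPL, open Environment Variables, and set them there.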

Verifying that Anaconda installed successfully
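One way to run this check from Python itself is sketched below. It assumes the `conda` executable is reachable through PATH, which is what the environment-variable step is for. The helper name `check_conda` is made up for this illustration.

```python
import shutil
import subprocess
import sys


def check_conda():
    """Report the running Python version and whether conda is on PATH."""
    conda_path = shutil.which("conda")  # None when conda is not on PATH
    conda_version = None
    if conda_path is not None:
        try:
            # `conda --version` prints a single line with the version string
            result = subprocess.run(
                [conda_path, "--version"],
                capture_output=True,
                text=True,
                timeout=30,
            )
            conda_version = result.stdout.strip() or result.stderr.strip()
        except (OSError, subprocess.SubprocessError):
            conda_version = None
    return {
        "python": sys.version.split()[0],
        "conda_path": conda_path,
        "conda_version": conda_version,
    }


if __name__ == "__main__":
    print(check_conda())
```

If `conda_path` comes back as `None`, the PATH entries from step 6 are the first thing to check.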

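Installing PyCharm

Download: http://www.jetbrains.com/pycharm/download/#section=windows

Installation

Double-click the installer -- [Run] -- [Allow this app to make changes to your device]: [Yes] -- [Next] -- [choose the install location] -- [Next] -- [Install] -- [Finish].

Choosing the interpreter

Select the Anaconda interpreter. It already bundles most of the third-party libraries used here, so nothing else needs to be downloaded.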
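To confirm that the selected interpreter really provides the libraries used later in this tutorial, a quick import check can help. This is a minimal sketch. The function name `missing_packages` is an assumption for illustration, and the package list is taken from the scripts below.

```python
import importlib.util


def missing_packages(names):
    """Return the top-level package names that this interpreter cannot import."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    # Packages imported by the scraping scripts in this tutorial
    required = ["requests", "lxml", "pandas"]
    missing = missing_packages(required)
    if missing:
        print("Missing packages:", ", ".join(missing))
    else:
        print("All required packages are available.")
```

Any name reported as missing can be installed into the active environment with `conda install` or `pip install`.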

Goal: scrape the reviews of a popular film on Douban

Building the basic scraper

Extracting data with XPath

import requests
from lxml import etree

# URL of the review page to scrape
url = 'https://movie.douban.com/subject/1889243/comments?start=0&limit=20&status=P&sort=new_score'

# Request headers: a browser User-Agent makes the request look like it comes from a browser
header = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/126.0.0.0 Safari/537.36 Edg/126.0.0.0'
}

# Send the request to the server
response = requests.get(url=url, headers=header)

# Parse the returned HTML so that it can be queried with XPath
html = etree.HTML(response.text)

# Extract the comment text, user name, date, and star-rating label
comments = html.xpath('//div[@id="comments"]/div[@class="comment-item "]/div[@class="comment"]/p[@class=" comment-content"]/span[@class="short"]/text()')
users = html.xpath('//div[@id="comments"]/div[@class="comment-item "]/div[@class="comment"]/h3/span[@class="comment-info"]/a/text()')
dates = html.xpath('//div[@id="comments"]/div[@class="comment-item "]/div[@class="comment"]/h3/span[@class="comment-info"]/span[@class="comment-time "]/@title')
recommends = html.xpath('//div[@id="comments"]/div[@class="comment-item "]/div[@class="comment"]/h3/span[@class="comment-info"]/span[starts-with(@class,"allstar")]/@title')

print(comments)
print(users)
print(dates)
print(recommends)

XPath usage
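The XPath constructs used in this tutorial can be tried on a small in-memory document first. The sketch below uses a made-up HTML fragment, not Douban's real markup, in which the second review deliberately has no star rating. It shows document-wide queries, and then per-item relative queries, which keep each review's fields together even when one of them is missing.

```python
from lxml import etree

# Made-up fragment that loosely mimics a review list (not the real page)
SAMPLE_HTML = """
<div id="comments">
  <div class="comment-item">
    <span class="comment-info">
      <a href="#">alice</a>
      <span class="allstar50 rating" title="Excellent"></span>
      <span class="comment-time" title="2024-07-01 10:00:00">2024-07-01</span>
    </span>
    <p><span class="short">Great film.</span></p>
  </div>
  <div class="comment-item">
    <span class="comment-info">
      <a href="#">bob</a>
      <span class="comment-time" title="2024-07-02 11:30:00">2024-07-02</span>
    </span>
    <p><span class="short">Not for me.</span></p>
  </div>
</div>
"""

html = etree.HTML(SAMPLE_HTML)

# //tag[@attr="value"] selects matching elements anywhere in the document;
# /text() returns their text nodes
users = html.xpath('//span[@class="comment-info"]/a/text()')
# /@attr returns attribute values instead of elements
dates = html.xpath('//span[@class="comment-time"]/@title')
# starts-with() matches on a prefix of the attribute value
ratings = html.xpath('//span[starts-with(@class, "allstar")]/@title')

# Relative queries (starting with ".") run inside one review at a time
rows = []
for item in html.xpath('//div[@class="comment-item"]'):
    user = item.xpath('.//span[@class="comment-info"]/a/text()')
    rating = item.xpath('.//span[starts-with(@class, "allstar")]/@title')
    text = item.xpath('.//span[@class="short"]/text()')
    rows.append((
        user[0] if user else None,
        rating[0] if rating else None,
        text[0] if text else None,
    ))

print(users, dates, ratings)
print(rows)
```

Here the document-wide `ratings` list has one entry while `users` has two, so pairing the lists by position would attach the rating to the wrong review or drop a row. The per-item loop avoids that by recording `None` for the missing rating.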

Scraping multiple pages and working around anti-scraping measures

import requests

import lxml

from lxml import etree

import pandas as pd

import time

import random

# 头部信息

header = {

'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/126.0.0.0 Safari/537.36 Edg/126.0.0.0'

}

# 设置cookies

cookies = {

'cookie': 'll="118186"; _pk_id.100001.4cf6=35caae0a00be0738.1704106999.; bid=Ldx1xzL4-NY; dbcl2="281689738:+ITwYHhRF4A"; push_noty_num=0; push_doumail_num=0; __utmv=30149280.28168; _vwo_uuid_v2=D18303FEFA0A68F1D2C87504D3B634E22|a3877a0965cdb318daaa87e49dda22e6; ck=1S0X; frodotk_db="463aa8caac206a2cd2affa6004613e84"; _pk_ref.100001.4cf6=%5B%22%22%2C%22%22%2C1720312003%2C%22https%3A%2F%2Fcn.bing.com%2F%22%5D; _pk_ses.100001.4cf6=1; __utma=30149280.548300531.1688621674.1720277330.1720312003.11; __utmb=30149280.0.10.1720312003; __utmc=30149280; __utmz=30149280.1720312003.11.6.utmcsr=cn.bing.com|utmccn=(referral)|utmcmd=referral|utmcct=/; __utma=223695111.544466185.1704106999.1720277330.1720312003.9; __utmb=223695111.0.10.1720312003; __utmc=223695111; __utmz=223695111.1720312003.9.5.utmcsr=cn.bing.com|utmccn=(referral)|utmcmd=referral|utmcct=/; ap_v=0,6.0'

}

# 构造函数,循环遍历爬取数据

def get_data(num_pages):

all_data = []

for page in range(num_pages):

start = page * 20

url = f'https://movie.douban.com/subject/1889243/comments?start={start}&limit=20&status=P&sort=new_score'

print(f'正在爬取第{page + 1}页数据.')

# 异常处理

try:

# 模拟浏览器向服务器发送请求

response = requests.get(url, headers=header,cookies=cookies)

response.raise_for_status()

html = etree.HTML(response.text)

# 爬取数据comment,user,date,recommend

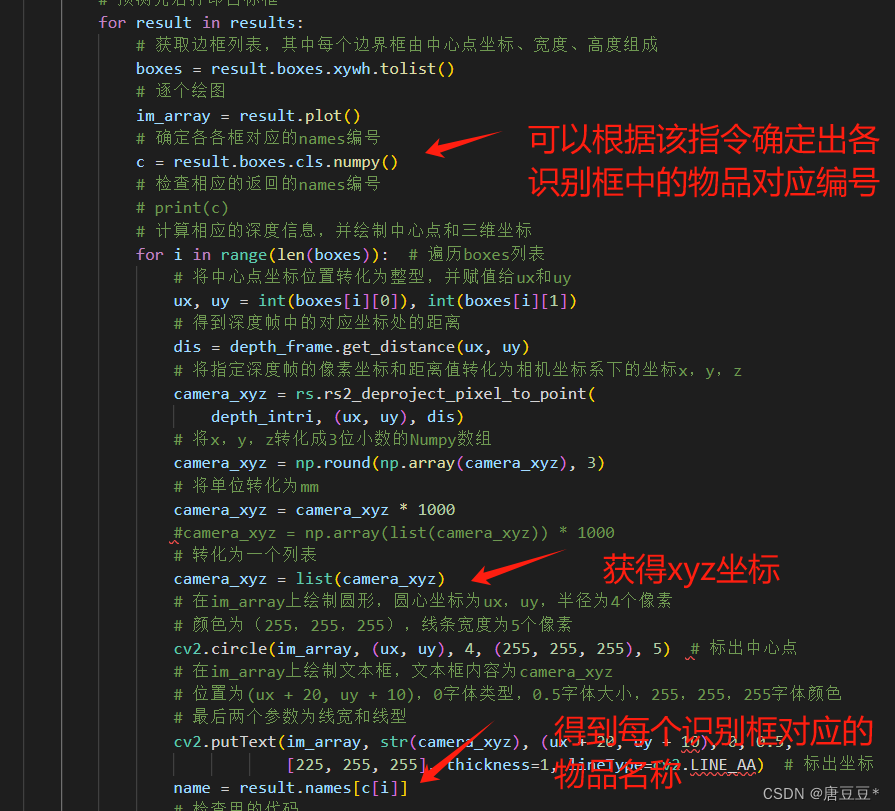

comments = html.xpath('//div[@id="comments"]/div[@class="comment-item "]/div[@class="comment"]/p[@class=" comment-content"]/span[@class="short"]/text()')

users = html.xpath('//div[@id="comments"]/div[@class="comment-item "]/div[@class="comment"]/h3/span[@class="comment-info"]/a/text()')

dates = html.xpath('//div[@id="comments"]/div[@class="comment-item "]/div[@class="comment"]/h3/span[@class="comment-info"]/span[@class="comment-time "]/@title')

recommends = html.xpath('//div[@id="comments"]/div[@class="comment-item "]/div[@class="comment"]/h3/span[@class="comment-info"]/span[starts-with(@class,"allstar")]/@title')

for user, recommend, date, comment in zip(users, recommends, dates, comments):

all_data.append([user, recommend, date, comment])

except requests.exceptions.RequestException as e:

print(f"获取第 {page + 1} 页数据时出错:{e}")

# 设置时间间隔,应对反爬虫

time.sleep(random.uniform(1, 5))

return all_data

num_pages = int(input("输入你要爬取的页数:"))

data = get_data(num_pages)

df = pd.DataFrame(data, columns=['用户名', '推荐', '时间', '评论'])

# 存储为csv文件,编码格式utf-8

df.to_csv('douban_comments.csv', index=False,encoding="utf-8")

print("评论数据已保存到douban_comments.csv文件中")上述增加Cookie和设置请求时间间隔,为了爬取更多数据,目前最多30页,若不加最多11页
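The pagination and pacing logic can also be isolated into small helpers, which makes it easy to inspect the URLs before sending any request. This is only a sketch. The names `page_urls` and `polite_delay` are invented for illustration, and the query parameters are the ones that appear in the URL used above.

```python
import random
from urllib.parse import urlencode

BASE_URL = "https://movie.douban.com/subject/1889243/comments"


def page_urls(num_pages, page_size=20):
    """Build the review-page URLs; page n starts at offset n * page_size."""
    urls = []
    for page in range(num_pages):
        query = urlencode({
            "start": page * page_size,
            "limit": page_size,
            "status": "P",
            "sort": "new_score",
        })
        urls.append(f"{BASE_URL}?{query}")
    return urls


def polite_delay(low=1.0, high=5.0):
    """Pick a random pause in seconds to wait between two requests."""
    return random.uniform(low, high)


if __name__ == "__main__":
    for url in page_urls(3):
        print(url, f"(then wait {polite_delay():.1f}s)")
```

A random interval is used rather than a fixed one so that the requests do not arrive at a perfectly regular rhythm.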

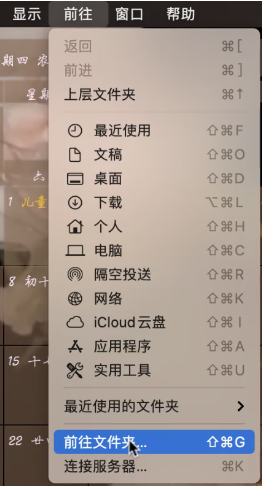
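If the saved CSV is opened in Excel, Chinese text stored as plain UTF-8 is often displayed garbled, because Excel relies on a byte-order mark to detect the encoding. The sketch below writes and reads a file with the `utf-8-sig` encoding using only the standard library. The function names and the sample row are made up for illustration.

```python
import csv
import os
import tempfile


def save_rows(path, rows, header=("user", "rating", "date", "comment")):
    """Write rows to a CSV file with a UTF-8 byte-order mark."""
    with open(path, "w", newline="", encoding="utf-8-sig") as f:
        writer = csv.writer(f)
        writer.writerow(header)
        writer.writerows(rows)


def load_rows(path):
    """Read the CSV file back as a list of rows (header included)."""
    with open(path, newline="", encoding="utf-8-sig") as f:
        return list(csv.reader(f))


if __name__ == "__main__":
    sample = [["alice", "力荐", "2024-07-01 10:00:00", "很好看"]]
    path = os.path.join(tempfile.gettempdir(), "douban_comments_sample.csv")
    save_rows(path, sample)
    print(load_rows(path))
```

With pandas the same effect is obtained by passing `encoding="utf-8-sig"` to `to_csv`.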

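How to obtain the User-Agent and Cookie

Copy both values from the browser (for example, from the request headers shown in its developer tools) and use them as-is.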
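A Cookie value copied from the browser is a single string of `name=value` pairs separated by semicolons. `requests` accepts it either unchanged as the `Cookie` request header or as a name-to-value dict through the `cookies=` argument. The helper below is a minimal sketch of the conversion to a dict. The function name `parse_cookie_string` and the sample values are made up.

```python
def parse_cookie_string(raw):
    """Turn a 'name=value; name2=value2' Cookie string into a dict."""
    cookies = {}
    for part in raw.split(";"):
        part = part.strip()
        if not part or "=" not in part:
            continue  # skip empty or malformed fragments
        # Split on the first "=" only, since values may contain "=" themselves
        name, _, value = part.partition("=")
        cookies[name.strip()] = value.strip()
    return cookies


if __name__ == "__main__":
    raw = "bid=abc123; ck=XYZ; token=a=b"  # placeholder values
    print(parse_cookie_string(raw))
```

The resulting dict can be passed as `requests.get(url, headers=header, cookies=parse_cookie_string(raw))`. When a name occurs more than once in the string, the last occurrence wins. Cookies identify a logged-in session, so they are best kept out of shared or published code.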