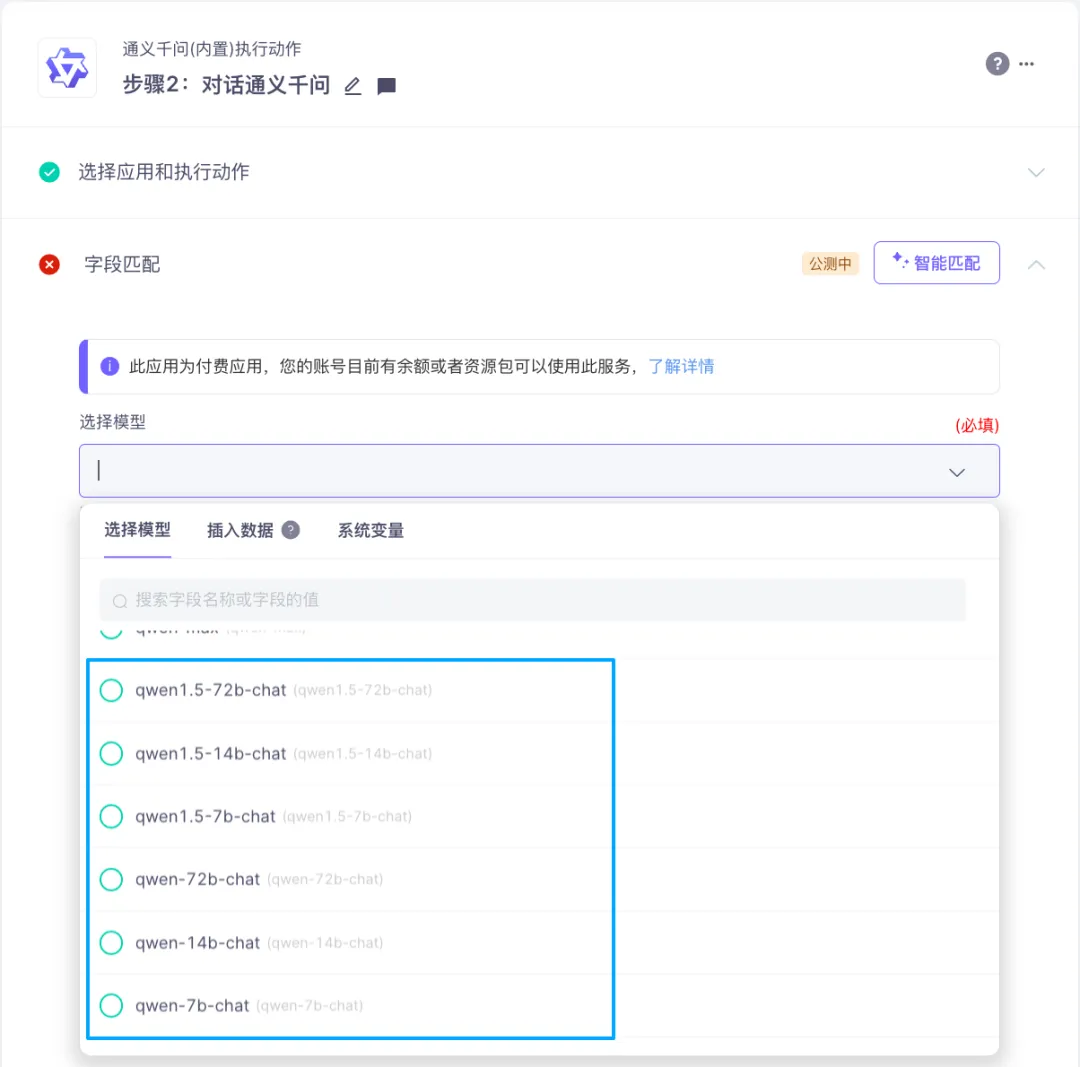

Qwen2 模型介绍

- 5个尺寸的预训练和指令微调模型, 包括 Qwen2-0.5B、Qwen2-1.5B、Qwen2-7B、Qwen2-57B-A14B 以及 Qwen2-72B;

- 在中文英语的基础上,训练数据中增加了27种语言相关的高质量数据;

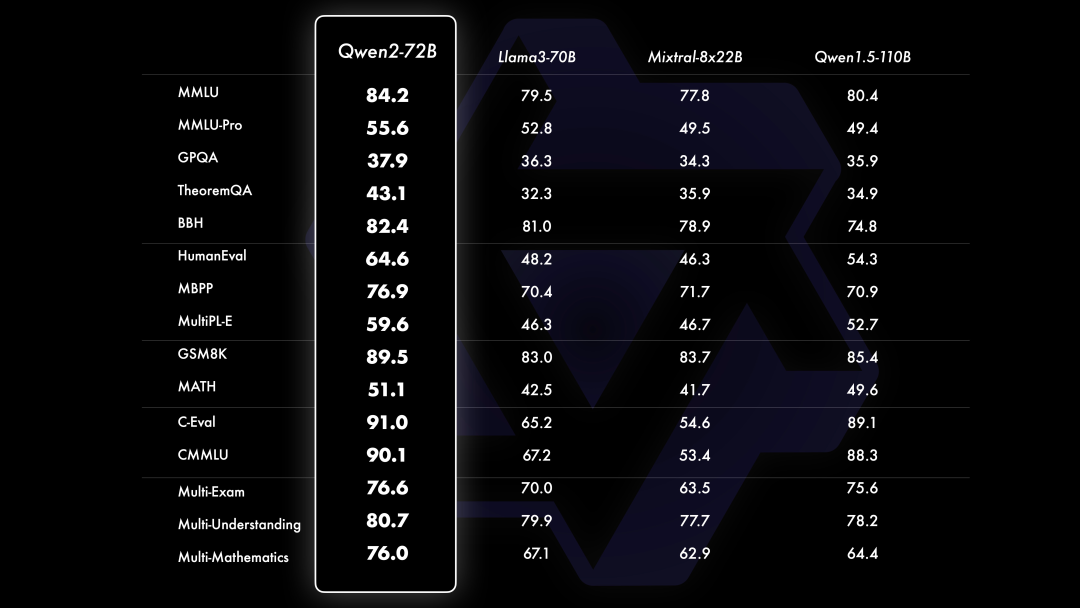

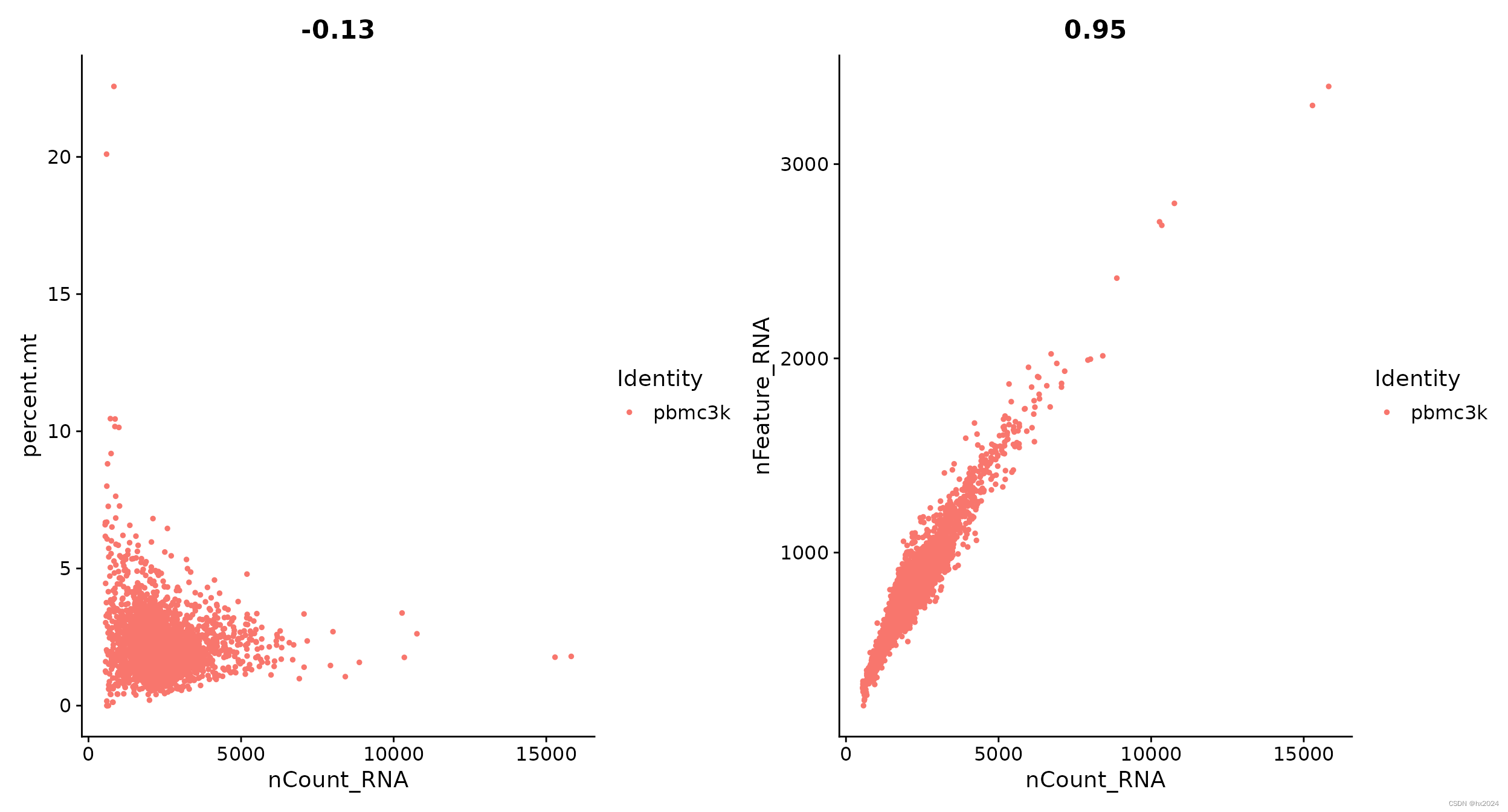

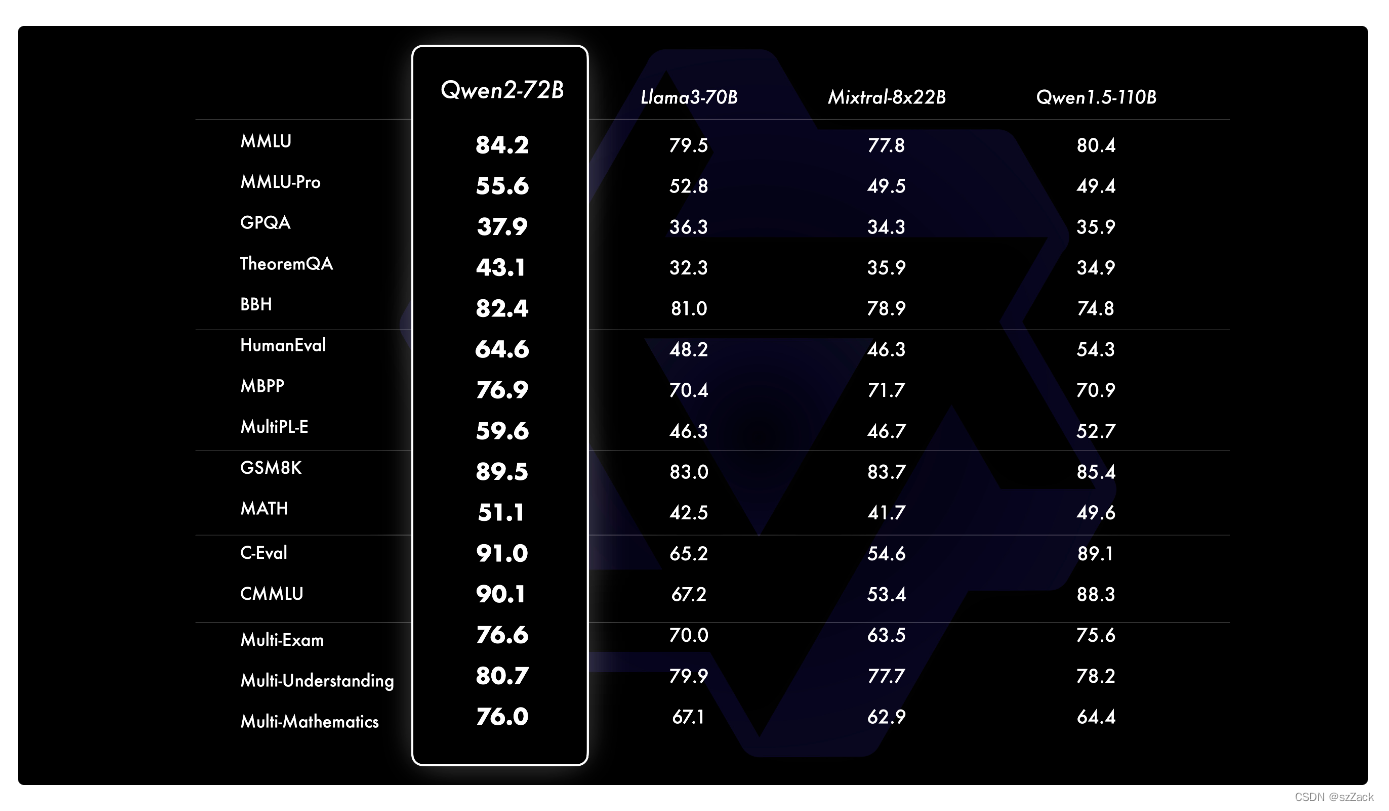

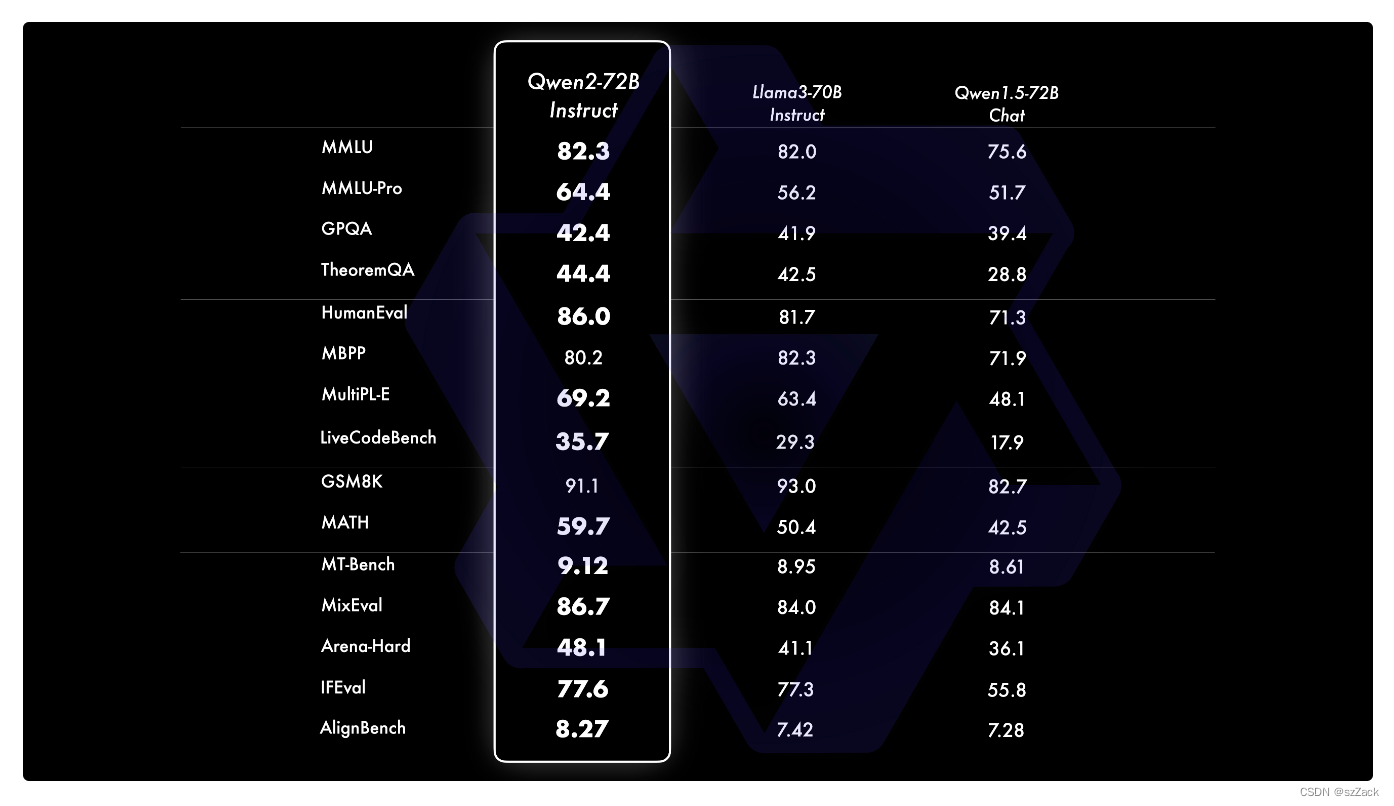

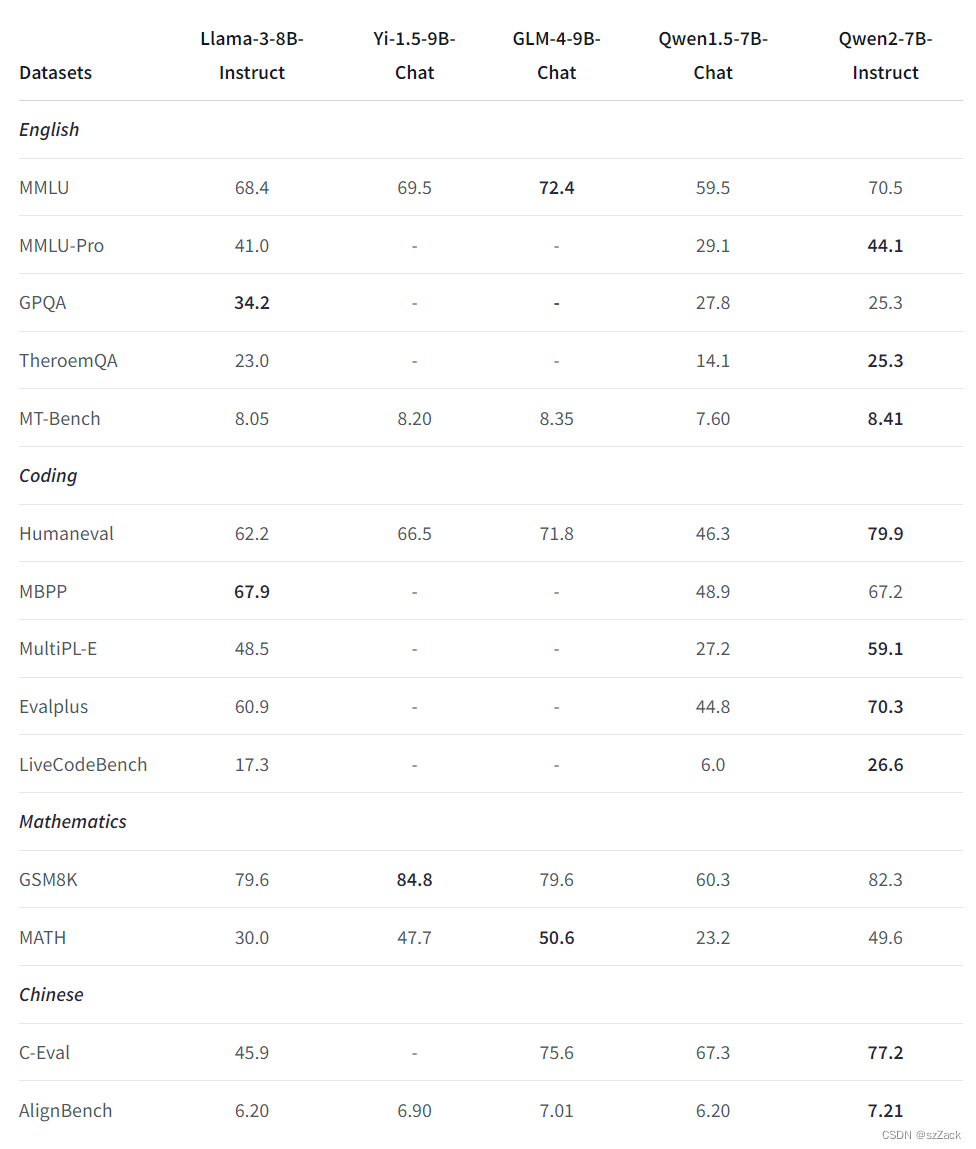

- 多个评测基准上的领先表现;

- 代码和数学能力显著提升;

- 增大了上下文长度支持,最高达到128K tokens(Qwen2-72B-Instruct)。

模型基础信息

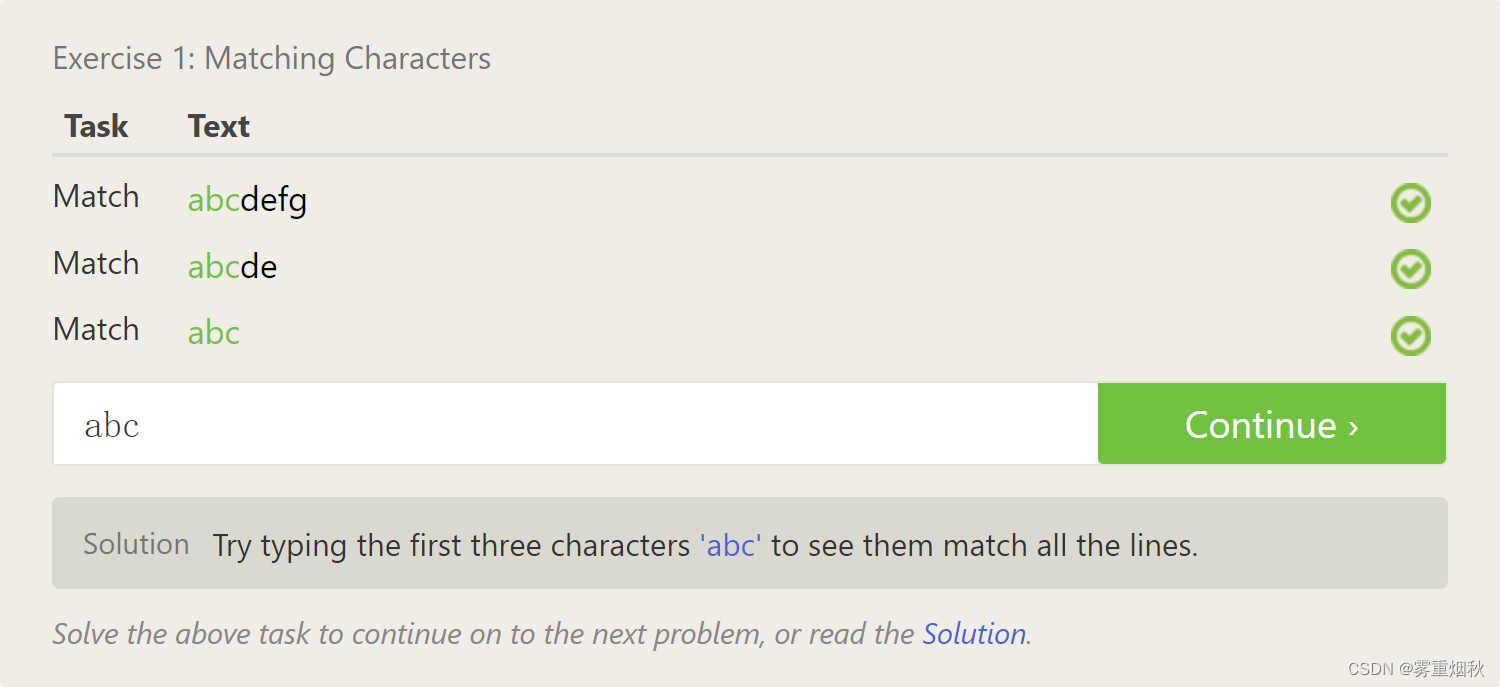

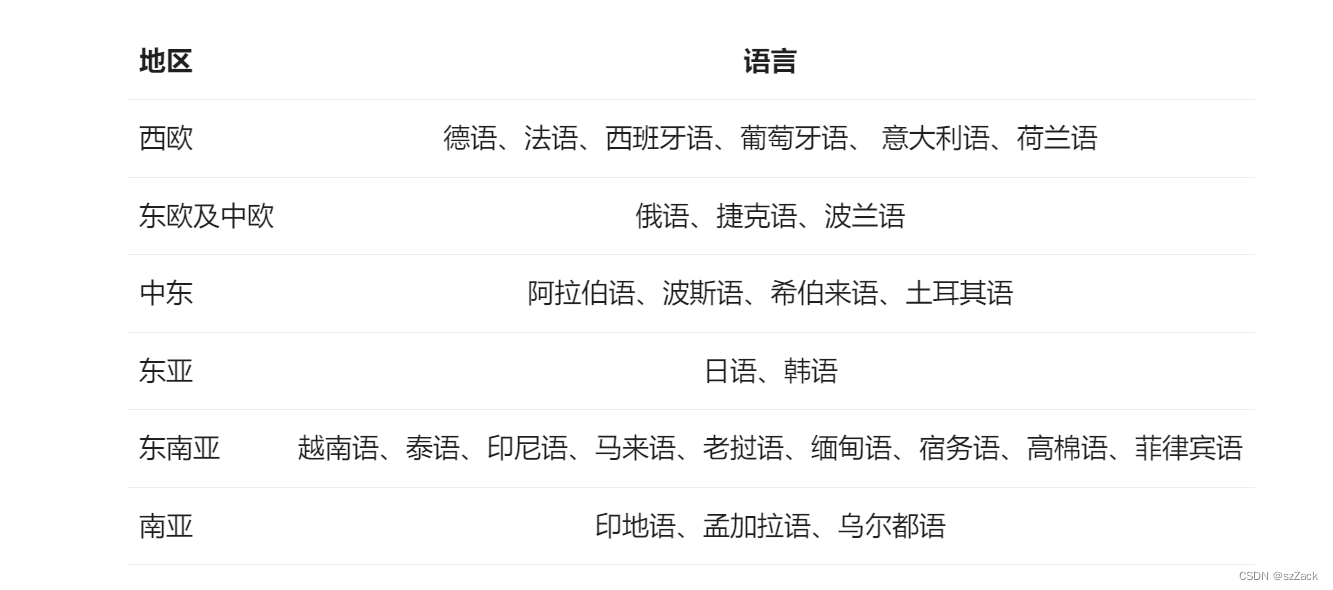

支持语言

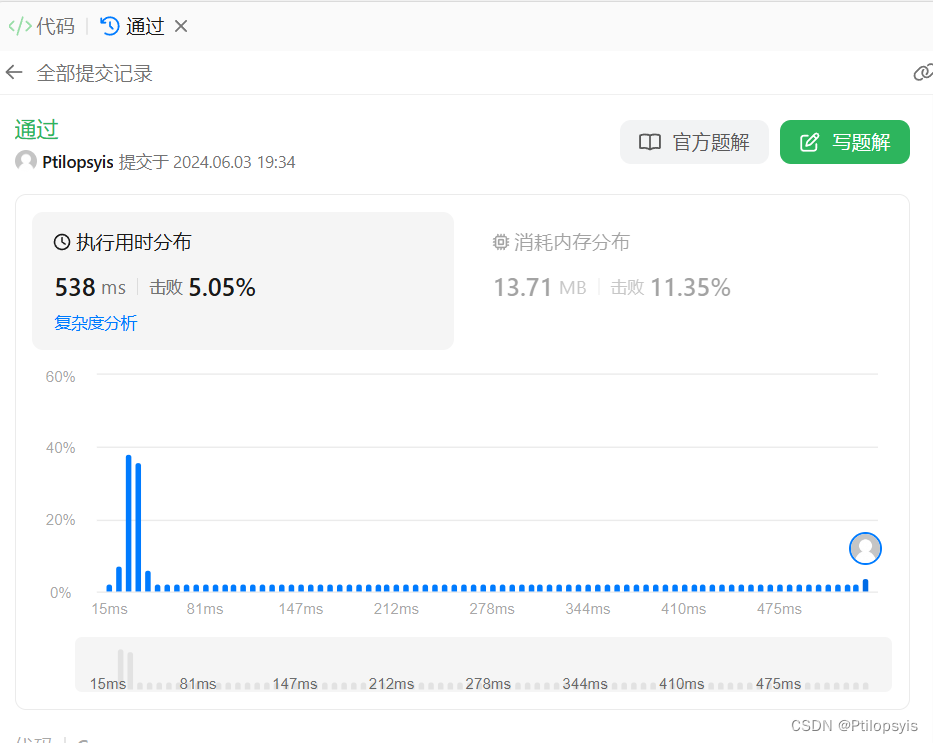

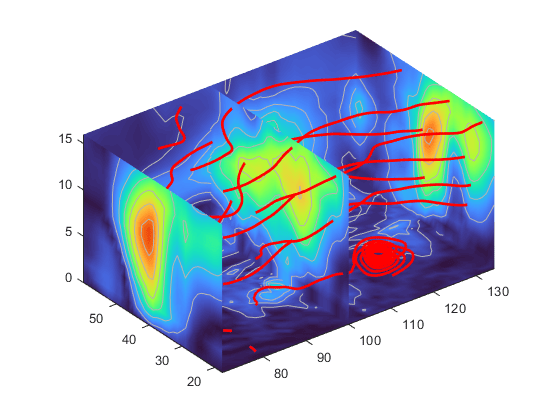

模型测评

- 对比评测

运行模型

使用 transformers 后端进行推理:

要求: transformers>=4.37

from transformers import AutoModelForCausalLM, AutoTokenizer

device = "cuda" # the device to load the model onto

model = AutoModelForCausalLM.from_pretrained(

"Qwen/Qwen2-7B-Instruct",

torch_dtype="auto",

device_map="auto"

)

tokenizer = AutoTokenizer.from_pretrained("Qwen/Qwen2-7B-Instruct")

prompt = "Give me a short introduction to large language model."

messages = [

{"role": "system", "content": "You are a helpful assistant."},

{"role": "user", "content": prompt}

]

text = tokenizer.apply_chat_template(

messages,

tokenize=False,

add_generation_prompt=True

)

model_inputs = tokenizer([text], return_tensors="pt").to(device)

generated_ids = model.generate(

model_inputs.input_ids,

max_new_tokens=512

)

generated_ids = [

output_ids[len(input_ids):] for input_ids, output_ids in zip(model_inputs.input_ids, generated_ids)

]

response = tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]

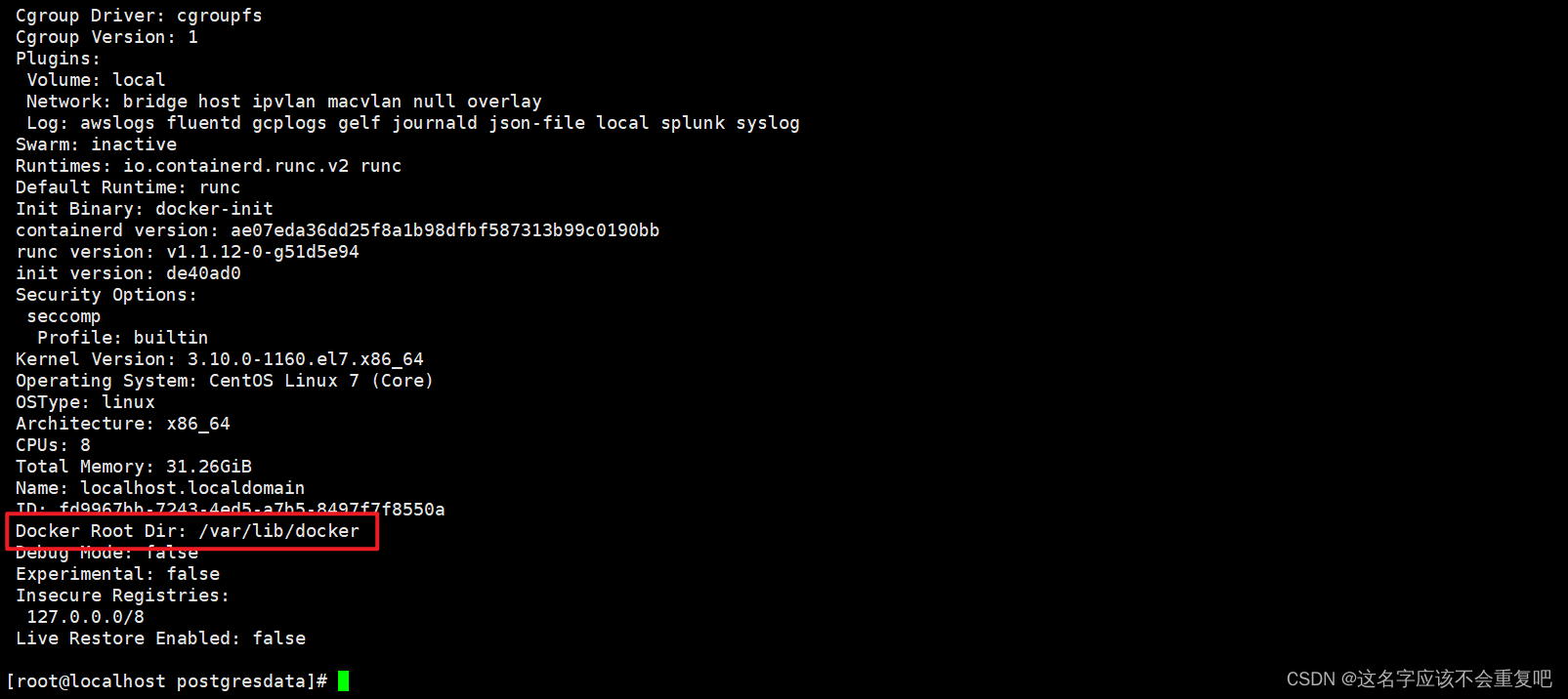

模型部署

- 安装 vllm

pip install "vllm>=0.4.3"

- 修改:config.json

{

"architectures": [

"Qwen2ForCausalLM"

],

// ...

"vocab_size": 152064,

// adding the following snippets

"rope_scaling": {

"factor": 4.0,

"original_max_position_embeddings": 32768,

"type": "yarn"

}

}

- 启动服务:

python -m vllm.entrypoints.openai.api_server --served-model-name Qwen2-7B-Instruct --model path/to/weights

- 测试

curl http://localhost:8000/v1/chat/completions \

-H "Content-Type: application/json" \

-d '{

"model": "Qwen2-7B-Instruct",

"messages": [

{"role": "system", "content": "You are a helpful assistant."},

{"role": "user", "content": "Your Long Input Here."}

]

}'

下载

model_id: THUDM/glm-4-9b-chat-1m

下载地址:[https://hf-mirror.com/Qwen/Qwen2-7B-Instruct) 不需要翻墙

开源协议

查看LICENSE