使用gRPC基于Protobuf传输大文件或数据流

在现代软件开发中,性能通常是关键的考虑因素之一,尤其是在进行大文件传输时。高效的协议和工具可以显著提升传输速度和可靠性。本文详细介绍如何使用gRPC和Protobuf进行大文件传输,并与传统TCP传输进行性能比较。

1. 背景和技术选择

在过去,大文件传输常常使用传统的TCP/IP协议,虽然简单但在处理大规模数据传输时往往速度较慢,尤其在网络条件不佳的环境下更是如此。在最近一个项目中,就有传输大数据文件的需求,用传统方式进行测试发现传输延时无法达到要求,于是在网上查阅资料发现了一个较优的解决方案。

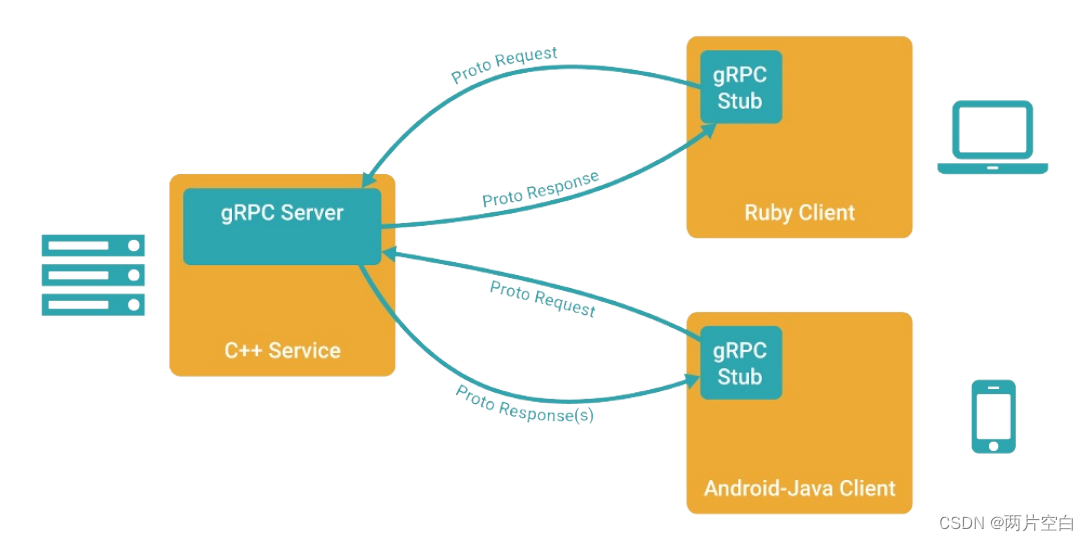

相对于TCP,gRPC提供了一种现代的、高性能的解决方案。gRPC是一个高性能的远程过程调用(RPC)框架,由Google主导开发,使用HTTP/2作为传输层协议,支持多种开发语言,如C++, Java, Python和Go等。Protobuf(Protocol Buffers)则是一种轻量级的数据交换格式,可以高效地序列化结构化数据。

1.1 gRPC的优势

- 高性能: 利用HTTP/2协议,支持多路复用、服务器推送等现代网络技术。

- 跨语言支持: 支持多种编程语言,便于在不同的系统间交互。

- 接口定义: 使用.proto文件定义服务,自动生成服务端和客户端代码,减少重复工作量。

- 流控制: 支持流式传输数据,适合大文件传输和实时数据处理。

1.2 Protocol Buffers的优势

- 高效: 编码和解码迅速,且生成的数据包比XML小3到10倍。

- 灵活: 支持向后兼容性,新旧数据格式可以无缝转换。

- 简洁: 简化了复杂数据结构的处理,易于开发者使用。

2. 项目配置与环境搭建

为了使用gRPC进行项目开发,首先需要在开发环境中安装gRPC及其依赖的库。以下是gRPC安装的步骤,适用于多种操作系统,包括Windows、Linux和macOS。

2.1 安装gRPC和Protocol Buffers

gRPC的安装可以通过多种方式进行,包括使用包管理器或从源代码编译。以下介绍Ubuntu下安装C++版本的gRPC(捆绑了Protocol Buffers)

注:如果gRPC和Protocol Buffers的版本不匹配会有问题,无法正常使用

2.1.1 安装Cmake

sudo apt install -y cmake

2.1.2 设置环境变量

export MY_INSTALL_DIR=$HOME/.local

mkdir -p $MY_INSTALL_DIR

export PATH="$MY_INSTALL_DIR/bin:$PATH" # 为了永久生效可以将该命令写入~/.bashrc文件中

2.1.3 安装必要的依赖

sudo apt install -y build-essential autoconf libtool pkg-config

2.1.4 下载gRPC源码

git clone --recurse-submodules -b v1.62.0 --depth 1 --shallow-submodules https://github.com/grpc/grpc

2.1.5 编译gRPC和 Protocol Buffers

cd grpc

mkdir -p cmake/build

pushd cmake/build

cmake -DgRPC_INSTALL=ON \

-DgRPC_BUILD_TESTS=OFF \

-DCMAKE_INSTALL_PREFIX=$MY_INSTALL_DIR \

../..

make -j 4

make install

popd

2.2 CMake配置详解

2.1.1 通用配置

common.cmake 是一个辅助性的 CMake 模块文件,通常用于存放项目中共用的 CMake 配置,以简化和集中管理 CMakeLists.txt 文件中的代码。这种做法有助于提升项目的可维护性和可读性。

在 gRPC 项目中,示例代码中的common.cmake 包括以下内容:

- 变量设置:定义项目中使用的常见路径和变量,例如 gRPC 和 protobuf 的安装路径,以便在整个项目中重用。

- 库查找:使用

find_package()或find_library()命令来查找和配置项目所需的依赖库,如 gRPC、protobuf、SSL 等。 - 编译器选项:统一设置编译器标志,例如 C++ 版本标准、优化级别、警告处理等。

- 宏定义:创建复用的 CMake 宏或函数,例如用于处理 proto 文件生成相关命令的宏,这有助于避免在

CMakeLists.txt文件中重复相同的代码块。

# Copyright 2018 gRPC authors.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

#

# cmake build file for C++ route_guide example.

# Assumes protobuf and gRPC have been installed using cmake.

# See cmake_externalproject/CMakeLists.txt for all-in-one cmake build

# that automatically builds all the dependencies before building route_guide.

cmake_minimum_required(VERSION 3.8)

if(MSVC)

add_definitions(-D_WIN32_WINNT=0x600)

endif()

find_package(Threads REQUIRED)

if(GRPC_AS_SUBMODULE)

# One way to build a projects that uses gRPC is to just include the

# entire gRPC project tree via "add_subdirectory".

# This approach is very simple to use, but the are some potential

# disadvantages:

# * it includes gRPC's CMakeLists.txt directly into your build script

# without and that can make gRPC's internal setting interfere with your

# own build.

# * depending on what's installed on your system, the contents of submodules

# in gRPC's third_party/* might need to be available (and there might be

# additional prerequisites required to build them). Consider using

# the gRPC_*_PROVIDER options to fine-tune the expected behavior.

#

# A more robust approach to add dependency on gRPC is using

# cmake's ExternalProject_Add (see cmake_externalproject/CMakeLists.txt).

# Include the gRPC's cmake build (normally grpc source code would live

# in a git submodule called "third_party/grpc", but this example lives in

# the same repository as gRPC sources, so we just look a few directories up)

add_subdirectory(../../.. ${CMAKE_CURRENT_BINARY_DIR}/grpc EXCLUDE_FROM_ALL)

message(STATUS "Using gRPC via add_subdirectory.")

# After using add_subdirectory, we can now use the grpc targets directly from

# this build.

set(_PROTOBUF_LIBPROTOBUF libprotobuf)

set(_REFLECTION grpc++_reflection)

set(_ORCA_SERVICE grpcpp_orca_service)

if(CMAKE_CROSSCOMPILING)

find_program(_PROTOBUF_PROTOC protoc)

else()

set(_PROTOBUF_PROTOC $<TARGET_FILE:protobuf::protoc>)

endif()

set(_GRPC_GRPCPP grpc++)

if(CMAKE_CROSSCOMPILING)

find_program(_GRPC_CPP_PLUGIN_EXECUTABLE grpc_cpp_plugin)

else()

set(_GRPC_CPP_PLUGIN_EXECUTABLE $<TARGET_FILE:grpc_cpp_plugin>)

endif()

elseif(GRPC_FETCHCONTENT)

# Another way is to use CMake's FetchContent module to clone gRPC at

# configure time. This makes gRPC's source code available to your project,

# similar to a git submodule.

message(STATUS "Using gRPC via add_subdirectory (FetchContent).")

include(FetchContent)

FetchContent_Declare(

grpc

GIT_REPOSITORY https://github.com/grpc/grpc.git

# when using gRPC, you will actually set this to an existing tag, such as

# v1.25.0, v1.26.0 etc..

# For the purpose of testing, we override the tag used to the commit

# that's currently under test.

GIT_TAG vGRPC_TAG_VERSION_OF_YOUR_CHOICE)

FetchContent_MakeAvailable(grpc)

# Since FetchContent uses add_subdirectory under the hood, we can use

# the grpc targets directly from this build.

set(_PROTOBUF_LIBPROTOBUF libprotobuf)

set(_REFLECTION grpc++_reflection)

set(_PROTOBUF_PROTOC $<TARGET_FILE:protoc>)

set(_GRPC_GRPCPP grpc++)

if(CMAKE_CROSSCOMPILING)

find_program(_GRPC_CPP_PLUGIN_EXECUTABLE grpc_cpp_plugin)

else()

set(_GRPC_CPP_PLUGIN_EXECUTABLE $<TARGET_FILE:grpc_cpp_plugin>)

endif()

else()

# This branch assumes that gRPC and all its dependencies are already installed

# on this system, so they can be located by find_package().

# Find Protobuf installation

# Looks for protobuf-config.cmake file installed by Protobuf's cmake installation.

option(protobuf_MODULE_COMPATIBLE TRUE)

find_package(Protobuf CONFIG REQUIRED)

message(STATUS "Using protobuf ${Protobuf_VERSION}")

set(_PROTOBUF_LIBPROTOBUF protobuf::libprotobuf)

set(_REFLECTION gRPC::grpc++_reflection)

if(CMAKE_CROSSCOMPILING)

find_program(_PROTOBUF_PROTOC protoc)

else()

set(_PROTOBUF_PROTOC $<TARGET_FILE:protobuf::protoc>)

endif()

# Find gRPC installation

# Looks for gRPCConfig.cmake file installed by gRPC's cmake installation.

find_package(gRPC CONFIG REQUIRED)

message(STATUS "Using gRPC ${gRPC_VERSION}")

set(_GRPC_GRPCPP gRPC::grpc++)

if(CMAKE_CROSSCOMPILING)

find_program(_GRPC_CPP_PLUGIN_EXECUTABLE grpc_cpp_plugin)

else()

set(_GRPC_CPP_PLUGIN_EXECUTABLE $<TARGET_FILE:gRPC::grpc_cpp_plugin>)

endif()

endif()

2.2.2 项目配置

这个配置文件包括了从proto文件生成C++代码的命令,以及编译这些生成的源代码文件为库和可执行文件的命令。利用CMake,我们能够确保项目在不同环境中具有可重复构建的能力。

cmake_minimum_required(VERSION 3.15)

project(grpcDemo)

set(CMAKE_CXX_STANDARD 17)

include(common.cmake)

# Proto file

get_filename_component(transfer_proto "transferfile.proto" ABSOLUTE)

get_filename_component(transfer_proto_path "${transfer_proto}" PATH)

# Generated sources

set(transfer_proto_srcs "${CMAKE_CURRENT_BINARY_DIR}/transferfile.pb.cc")

set(transfer_proto_hdrs "${CMAKE_CURRENT_BINARY_DIR}/transferfile.pb.h")

set(transfer_grpc_srcs "${CMAKE_CURRENT_BINARY_DIR}/transferfile.grpc.pb.cc")

set(transfer_grpc_hdrs "${CMAKE_CURRENT_BINARY_DIR}/transferfile.grpc.pb.h")

add_custom_command(

OUTPUT "${transfer_proto_srcs}" "${transfer_proto_hdrs}" "${transfer_grpc_srcs}" "${transfer_grpc_hdrs}"

COMMAND ${_PROTOBUF_PROTOC}

ARGS --grpc_out "${CMAKE_CURRENT_BINARY_DIR}"

--cpp_out "${CMAKE_CURRENT_BINARY_DIR}"

-I "${transfer_proto_path}"

--plugin=protoc-gen-grpc="${_GRPC_CPP_PLUGIN_EXECUTABLE}"

"${transfer_proto}"

DEPENDS "${transfer_proto}")

# Include generated *.pb.h files

include_directories("${CMAKE_CURRENT_BINARY_DIR}")

# transfer_grpc_proto

add_library(transfer_grpc_proto

${transfer_grpc_srcs}

${transfer_grpc_hdrs}

${transfer_proto_srcs}

${transfer_proto_hdrs})

target_link_libraries(transfer_grpc_proto

${_REFLECTION}

${_GRPC_GRPCPP}

${_PROTOBUF_LIBPROTOBUF})

# Targets greeter_[async_](client|server)

foreach(_target

transfer_client transfer_server)

add_executable(${_target} "${_target}.cpp")

target_link_libraries(${_target}

transfer_grpc_proto

${_REFLECTION}

${_GRPC_GRPCPP}

${_PROTOBUF_LIBPROTOBUF})

endforeach()

2.3 服务定义与proto文件

在gRPC中,服务和消息的定义是通过.proto文件进行的。例如,定义一个文件传输服务,可以在transferfile.proto中如下定义:

syntax = "proto3";

package filetransfer;

service FileTransferService {

rpc Upload(stream FileChunk) returns (UploadStatus) {}

}

message FileChunk {

bytes content = 1;

}

message UploadStatus {

bool success = 1;

string message = 2;

}

这里定义了一个FileTransferService服务,包含了一个Upload方法,该方法接受一个FileChunk类型的流,并返回一个UploadStatus状态。

3. 客户端和服务端的实现

客户端和服务端的实现是通过gRPC框架生成的接口进行的,这些接口基于前面定义的.proto文件。

3.1 gRPC客户端实现

客户端的主要职责是打开文件,读取数据,然后以流的形式发送到服务端。实现代码如下:

#include <iostream>

#include <string>

#include <fstream>

#include <chrono>

#include <grpcpp/grpcpp.h>

#include <grpcpp/channel.h>

#include <grpcpp/client_context.h>

#include <grpcpp/create_channel.h>

#include <grpcpp/security/credentials.h>

#include "transferfile.grpc.pb.h"

using grpc::Channel;

using grpc::ClientContext;

using grpc::ClientWriter;

using grpc::Status;

using transferfile::FileChunk;

using transferfile::FileUploadStatus;

using transferfile::TransferFile;

#define CHUNK_SIZE 3 * 1024 * 1024 // 3MB

class TransferClient

{

public:

TransferClient(std::shared_ptr<Channel> channel) : stub_(TransferFile::NewStub(channel)) {}

void uploadFile(const std::string &filename);

private:

std::unique_ptr<TransferFile::Stub> stub_;

};

void TransferClient::uploadFile(const std::string &filename)

{

FileChunk chunk;

char *buffer = new char[CHUNK_SIZE];

FileUploadStatus status;

ClientContext context;

std::ifstream infile;

unsigned long len = 0;

auto start = std::chrono::steady_clock::now();

infile.open(filename, std::ios::binary | std::ios::in);

if (!infile.is_open())

{

std::cerr << "Error: File not found" << std::endl;

return;

}

std::unique_ptr<ClientWriter<FileChunk>> writer(stub_->UploadFile(&context, &status)); // Create a writer to send chunks of file

while (!infile.eof())

{

infile.read(buffer, CHUNK_SIZE);

chunk.set_buffer(buffer, infile.gcount());

if (!writer->Write(chunk))

{

std::cerr << "Error: Failed to write chunk" << std::endl;

break;

}

len += infile.gcount();

}

infile.close();

delete[] buffer;

writer->WritesDone();

Status status1 = writer->Finish();

if (status1.ok() && len != status.length())

{

auto end = std::chrono::steady_clock::now();

std::cout << "File uploaded successfully" << std::endl;

std::cout << "Time taken: " << std::chrono::duration_cast<std::chrono::milliseconds>(end - start).count() << "ms" << std::endl;

std::cout << "File size: " << len << " bytes" << std::endl;

auto speed = (len / std::chrono::duration_cast<std::chrono::milliseconds>(end - start).count()) * 1000 / 1024 / 1024;

std::cout << "Speed: " << speed << " MB/s" << std::endl;

}

else

{

std::cerr << "Error: " << status1.error_message() << "len: " << len << " status.length(): " << status.length()

<< std::endl;

}

}

int main(int argc, char *argv[])

{

if (argc < 2)

{

std::cerr << "Usage: " << argv[0] << " <filename> [server_ip]" << std::endl;

return 1;

}

std::string filename(argv[1]);

std::string server_ip = "localhost";

if (argc == 3)

{

server_ip = argv[2];

}

TransferClient client(grpc::CreateChannel(server_ip + ":50051", grpc::InsecureChannelCredentials()));

client.uploadFile(filename);

return 0;

}

客户端代码展示了如何创建一个gRPC客户端,如何打开文件,如何将文件切割成块,并且如何将这些块通过网络发送到服务端。

3.2 gRPC服务端实现

服务端的实现则负责接收来自客户端的数据块,并将其写入到服务器上的文件中。服务端代码如下:

#include <iostream>

#include <string>

#include <fstream>

#include <grpcpp/grpcpp.h>

#include <grpcpp/server.h>

#include <grpcpp/server_builder.h>

#include <grpcpp/server_context.h>

#include <grpcpp/security/server_credentials.h>

#include "transferfile.grpc.pb.h"

#include <sys/resource.h>

#include <unistd.h>

#include <chrono>

using grpc::Server;

using grpc::ServerBuilder;

using grpc::ServerContext;

using grpc::ServerReader;

using grpc::Status;

using transferfile::FileChunk;

using transferfile::FileUploadStatus;

using transferfile::TransferFile;

using namespace std::chrono;

#define CHUNK_SIZE 3 * 1024 * 1024 // 3MB

class TransferServiceImpl final : public TransferFile::Service

{

public:

Status UploadFile(ServerContext *context, ServerReader<FileChunk> *reader, FileUploadStatus *status) override;

protected:

void printUsage(const struct rusage &start, const struct rusage &end)

{

std::cout << "CPU Usage: User time: " << (end.ru_utime.tv_sec - start.ru_utime.tv_sec) + (end.ru_utime.tv_usec - start.ru_utime.tv_usec) / 1000000.0

<< "s, System time: " << (end.ru_stime.tv_sec - start.ru_stime.tv_sec) + (end.ru_stime.tv_usec - start.ru_stime.tv_usec) / 1000000.0 << "s" << std::endl;

std::cout << "Max resident set size: " << (end.ru_maxrss - start.ru_maxrss) << " KB" << std::endl;

}

};

Status TransferServiceImpl::UploadFile(ServerContext *context, ServerReader<FileChunk> *reader, FileUploadStatus *status)

{

FileChunk chunk;

std::ofstream outfile;

const char *data;

struct rusage usage_start, usage_end;

getrusage(RUSAGE_SELF, &usage_start);

auto start = high_resolution_clock::now();

outfile.open("output.bin", std::ios::binary | std::ios::out | std::ios::trunc);

if (!outfile.is_open())

{

std::cerr << "Error: Failed to open file" << std::endl;

return Status::CANCELLED;

}

while (reader->Read(&chunk))

{

data = chunk.buffer().c_str();

outfile.write(data, chunk.buffer().length());

}

long pos = outfile.tellp();

status->set_length(pos);

outfile.close();

auto end = high_resolution_clock::now();

getrusage(RUSAGE_SELF, &usage_end);

auto duration = duration_cast<milliseconds>(end - start);

printUsage(usage_start, usage_end);

std::cout << "Total transmission time: " << duration.count() << " ms" << std::endl;

std::cout << "Total data transmitted: " << pos << " bytes" << std::endl;

std::cout << "Transmission rate: " << (pos * 1000.0 / duration.count()) / 1024 / 1024 << " MB/s" << std::endl;

return Status::OK;

}

void RunServer()

{

std::string server_address("0.0.0.0:50051");

TransferServiceImpl service;

ServerBuilder builder;

builder.AddListeningPort(server_address, grpc::InsecureServerCredentials());

builder.RegisterService(&service);

std::unique_ptr<Server> server(builder.BuildAndStart());

std::cout << "Server listening on " << server_address << std::endl;

server->Wait();

}

int main(int argc, char **argv)

{

RunServer();

return 0;

}

服务端代码展示了如何创建一个gRPC服务端,如何接收客户端发送的数据块,以及如何将这些数据块写入到磁盘文件中。

3.3 TCP客户端实现

功能同gRPC客户端

#include <iostream>

#include <fstream>

#include <sys/socket.h>

#include <arpa/inet.h>

#include <unistd.h>

#include <string>

#include <cstring>

int main(int argc, char *argv[])

{

const char *server_ip = "127.0.0.1";

int server_port = 8888;

std::string file_path = argv[1];

// Create socket

int sock = socket(AF_INET, SOCK_STREAM, 0);

if (sock < 0)

{

std::cerr << "Socket creation failed." << std::endl;

return 1;

}

// Define the server address

struct sockaddr_in server_address;

memset(&server_address, 0, sizeof(server_address));

server_address.sin_family = AF_INET;

server_address.sin_port = htons(server_port);

inet_pton(AF_INET, server_ip, &server_address.sin_addr);

// Connect to the server

if (connect(sock, (struct sockaddr *)&server_address, sizeof(server_address)) < 0)

{

std::cerr << "Connection to server failed." << std::endl;

close(sock);

return 1;

}

std::ifstream file(file_path, std::ios::binary);

if (!file.is_open())

{

std::cerr << "Failed to open file: " << file_path << std::endl;

close(sock);

return 1;

}

// Send file contents

char *buffer = new char[1024];

while (file.read(buffer, sizeof(buffer)) || file.gcount())

{

if (send(sock, buffer, file.gcount(), 0) < 0)

{

std::cerr << "Failed to send data." << std::endl;

break;

}

}

delete[] buffer;

std::cout << "File sent successfully." << std::endl;

file.close();

close(sock);

return 0;

}

3.4 TCP服务端实现

功能同gRPC服务端

#include <iostream>

#include <fstream>

#include <sys/socket.h>

#include <arpa/inet.h>

#include <unistd.h>

#include <cstring>

#include <string>

#include <sys/resource.h>

#include <unistd.h>

#include <chrono>

#define CHUNK_SIZE 3 * 1024 * 1024 // 3MB

using namespace std::chrono;

void printUsage(const struct rusage &start, const struct rusage &end)

{

std::cout << "CPU Usage: User time: " << (end.ru_utime.tv_sec - start.ru_utime.tv_sec) + (end.ru_utime.tv_usec - start.ru_utime.tv_usec) / 1000000.0

<< "s, System time: " << (end.ru_stime.tv_sec - start.ru_stime.tv_sec) + (end.ru_stime.tv_usec - start.ru_stime.tv_usec) / 1000000.0 << "s" << std::endl;

std::cout << "Max resident set size: " << (end.ru_maxrss - start.ru_maxrss) << " KB" << std::endl;

}

int main()

{

int server_port = 8888;

const char *output_file = "output_received_file";

// Create socket

int server_sock = socket(AF_INET, SOCK_STREAM, 0);

if (server_sock < 0)

{

std::cerr << "Socket creation failed." << std::endl;

return 1;

}

// Bind socket to IP / port

struct sockaddr_in server_address;

memset(&server_address, 0, sizeof(server_address));

server_address.sin_family = AF_INET;

server_address.sin_port = htons(server_port);

server_address.sin_addr.s_addr = INADDR_ANY;

if (bind(server_sock, (struct sockaddr *)&server_address, sizeof(server_address)) < 0)

{

std::cerr << "Bind failed." << std::endl;

close(server_sock);

return 1;

}

// Listen

if (listen(server_sock, 10) < 0)

{

std::cerr << "Listen failed." << std::endl;

close(server_sock);

return 1;

}

std::cout << "Server is listening on port " << server_port << std::endl;

// Accept connection

struct sockaddr_in client_address;

socklen_t client_len = sizeof(client_address);

int client_sock = accept(server_sock, (struct sockaddr *)&client_address, &client_len);

if (client_sock < 0)

{

std::cerr << "Accept failed." << std::endl;

close(server_sock);

return 1;

}

struct rusage usage_start, usage_end;

getrusage(RUSAGE_SELF, &usage_start);

auto start = high_resolution_clock::now();

std::ofstream file(output_file, std::ios::binary);

if (!file.is_open())

{

std::cerr << "Failed to open file for writing." << std::endl;

close(client_sock);

close(server_sock);

return 1;

}

// Receive data

char *buffer = new char[CHUNK_SIZE];

int bytes_received;

while ((bytes_received = recv(client_sock, buffer, sizeof(buffer), 0)) > 0)

{

file.write(buffer, bytes_received);

}

if (bytes_received < 0)

{

std::cerr << "Error in recv()." << std::endl;

}

else

{

std::cout << "File received successfully." << std::endl;

long pos = file.tellp();

auto end = high_resolution_clock::now();

getrusage(RUSAGE_SELF, &usage_end);

auto duration = duration_cast<milliseconds>(end - start);

printUsage(usage_start, usage_end);

std::cout << "Total transmission time: " << duration.count() << " ms" << std::endl;

std::cout << "Total data transmitted: " << pos << " bytes" << std::endl;

std::cout << "Transmission rate: " << (pos * 1000.0 / duration.count()) / 1024 / 1024 << " MB/s" << std::endl;

}

delete[] buffer;

file.close();

close(client_sock);

close(server_sock);

return 0;

}

4. 性能测试与分析

为了验证gRPC与Protobuf的效率,我设置了一个基准测试,比较使用gRPC和传统TCP直接传输大文件的性能差异。

4.1 测试方法

测试方法包括:

- 准备一定大小的测试文件,这里随机生成了2GB的文件。(

fallocate -l 2G 2GBfile.txt) - 分别使用gRPC和TCP传输此文件,记录所需的总时间和CPU、内存等资源的使用情况。

- 重复测试,确保数据的准确性。

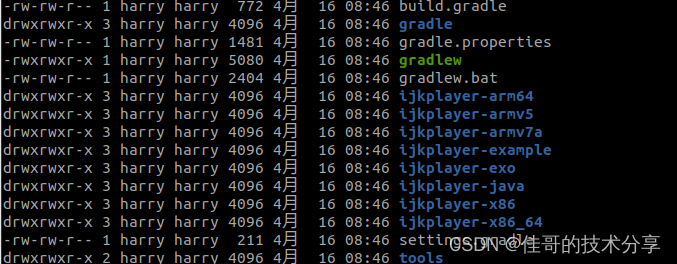

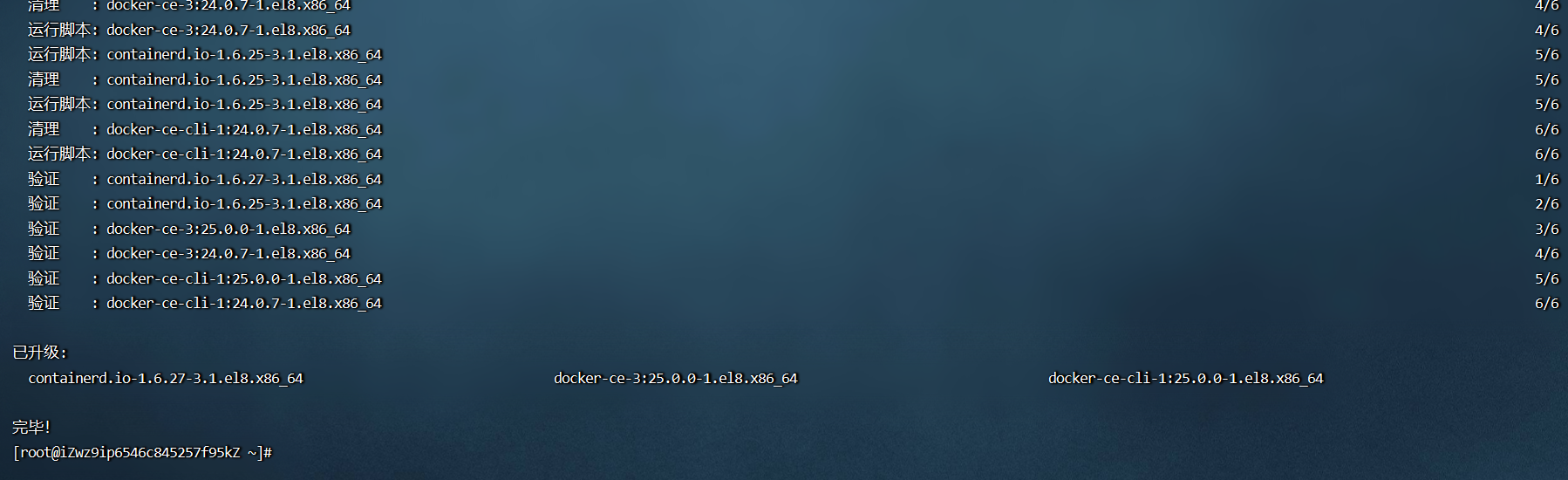

4.1.1 gPRC测试结果

4.1.2 TCP测试结果

4.1.3 多次测试结果

| User time | System time | Max resident set size | Transmission time | Transmission rate | |

|---|---|---|---|---|---|

| gRPC | 0.837348 s | 3.61289 s | 118196 KB | 3.313 s | 618.171 MB/s |

| TCP | 15.0924 s | 64.0648 s | 0 KB | 79.672 s | 25.7054 MB/s |

| gRPC | 0.782648 s | 8.72327 s | 42992 KB | 8.792 s | 232.939 MB/s |

| TCP | 14.2556 s | 61.0553 s | 0 KB | 75.441 s | 27.147 MB/s |

| gRPC | 0.921911 s | 2.25723 s | 44548 KB | 2.340 s | 875.214 MB/s |

| TCP | 14.1333 s | 61.6136 s | 0 KB | 76.090 s | 26.9155 MB/s |

| gRPC | 0.8371 s | 3.73357 s | 130056 KB | 3.565 s | 574.474 MB/s |

| TCP | 14.6751 s | 65.7286 s | 0 KB | 80.590 s | 25.4126 MB/s |

| gRPC | 0.942197 s | 2.74693 s | 36912 KB | 2.601 s | 787.389 MB/s |

| TCP | 18.8037 s | 73.1375 s | 0 KB | 92.526 s | 22.1343 MB/s |

4.2 性能比较结果

4.2.1 CPU 使用

gRPC在CPU使用上明显低于传统的TCP socket方法。这主要因为gRPC内部使用了更现代的HTTP/2协议,它支持多路复用、服务器推送等高效的数据传输机制,而不需要像TCP那样对每个文件传输任务建立单独的连接。此外,gRPC的实现中可能包含了更优化的数据处理路径,减少了上下文切换和系统调用的开销。

4.2.2 内存复用

Max resident set size(最大常驻集大小)表示进程在内存中占用的最大空间。TCP方式的最大常驻集大小一直是0KB,而gRPC分配的比较多,这是由于gRPC框架本身的内存需求,以及可能的内存缓冲机制,这有助于提高数据处理的速率和效率。

4.2.3 传输时间和速率

gRPC在传输速度上极大超过了TCP socket。这种巨大的差异主要来自于gRPC使用HTTP/2的优势,如头部压缩、二进制帧传输和连接复用。HTTP/2的二进制帧结构使得传输更加高效,并减少了因为文本解析带来的开销。此外,连接复用允许在单一连接上并行交换消息,从而显著提升了数据传输效率,减少了因建立和关闭多个TCP连接所产生的延迟和资源消耗。

测试结果显示,使用gRPC和Protobuf传输大文件在多个方面均优于传统TCP方法:

- 传输速度: gRPC利用HTTP/2的多路复用功能,可以在一个连接中并行传输多个文件,显著提升了传输效率。

- 资源利用率: gRPC的传输过程中CPU利用率较低,内存复用率较高,更适合长时间运行的应用场景。

- 错误处理: gRPC内置的错误处理机制能有效地管理网络问题和数据传输错误,保证数据的完整性。

- 高效的数据序列化: Protobuf非常高效,生成的数据包体积小,通常比相等的XML小3到10倍。这意味着在网络上传输相同的数据量时,Protobuf需要的带宽更少。

- 快速序列化与反序列化: Protobuf提供了非常快的数据序列化和反序列化能力,这对于性能要求高的应用尤其重要,可以显著减少数据处理时间。

- 明确的结构定义: 使用Protobuf可以让数据结构更加清晰和严格,有助于团队内部的沟通和后期的维护。

- 避免手动解析:与自定义的二进制格式相比,Protobuf避免了手动解析数据的错误和复杂性,因为解析工作是自动化的,由工具链支持。

5. 结论

使用gRPC和Protobuf传输大文件,不仅提高了传输速度,而且确保了更高的可靠性和更低的资源消耗。这使得gRPC成为大规模数据处理和分布式系统中的理想选择。未来,随着技术的进一步成熟和优化,预计gRPC在更多场景中将显示出其优越性。

希望本文对于那些寻求改进大文件传输性能的开发者有所帮助,并能够启发更多的技术创新和应用。