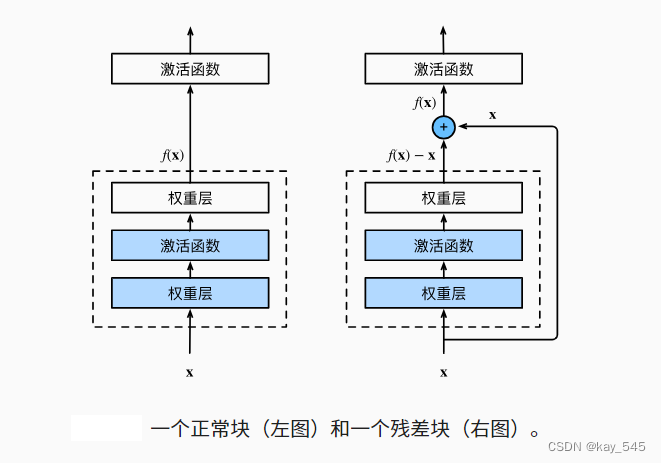

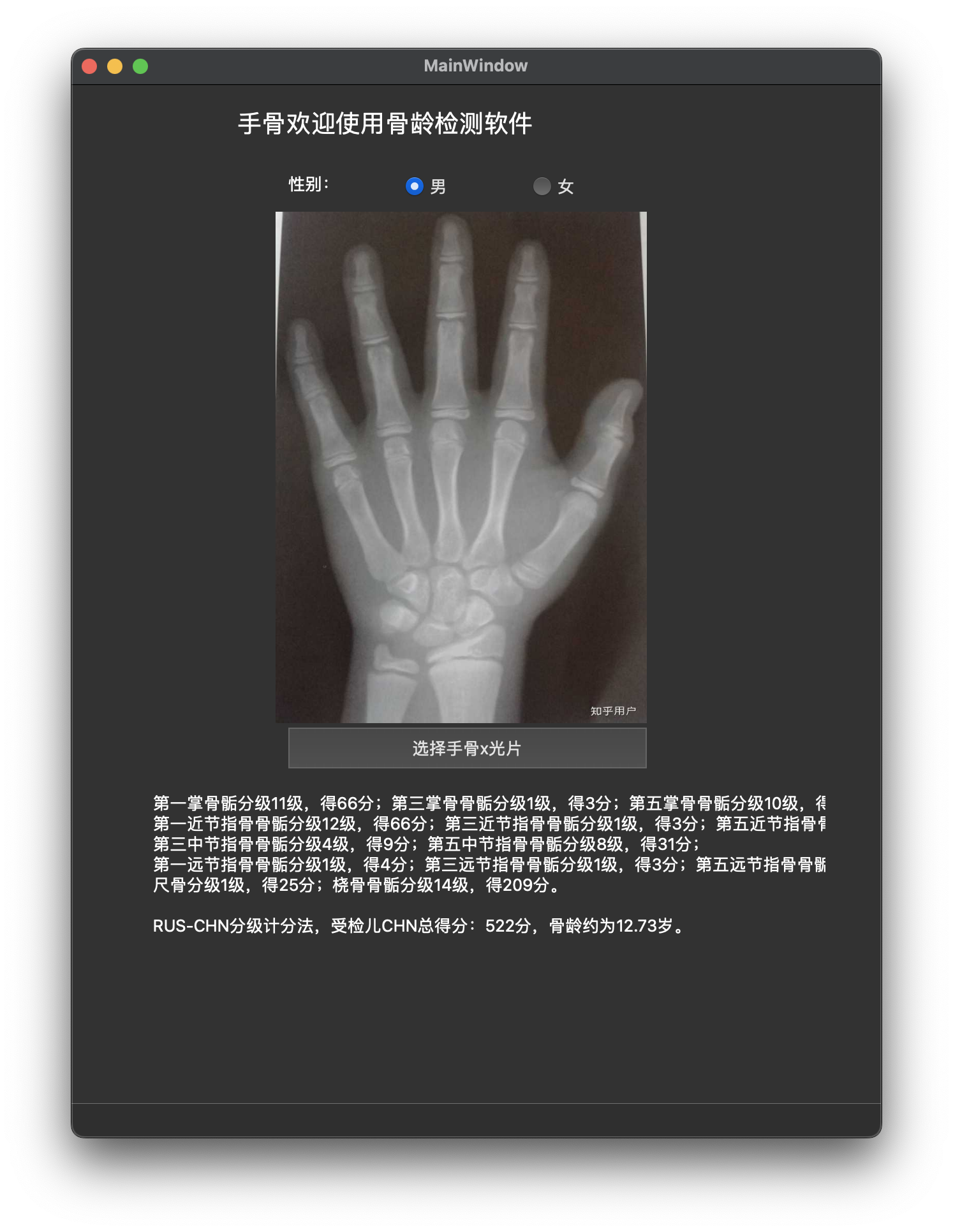

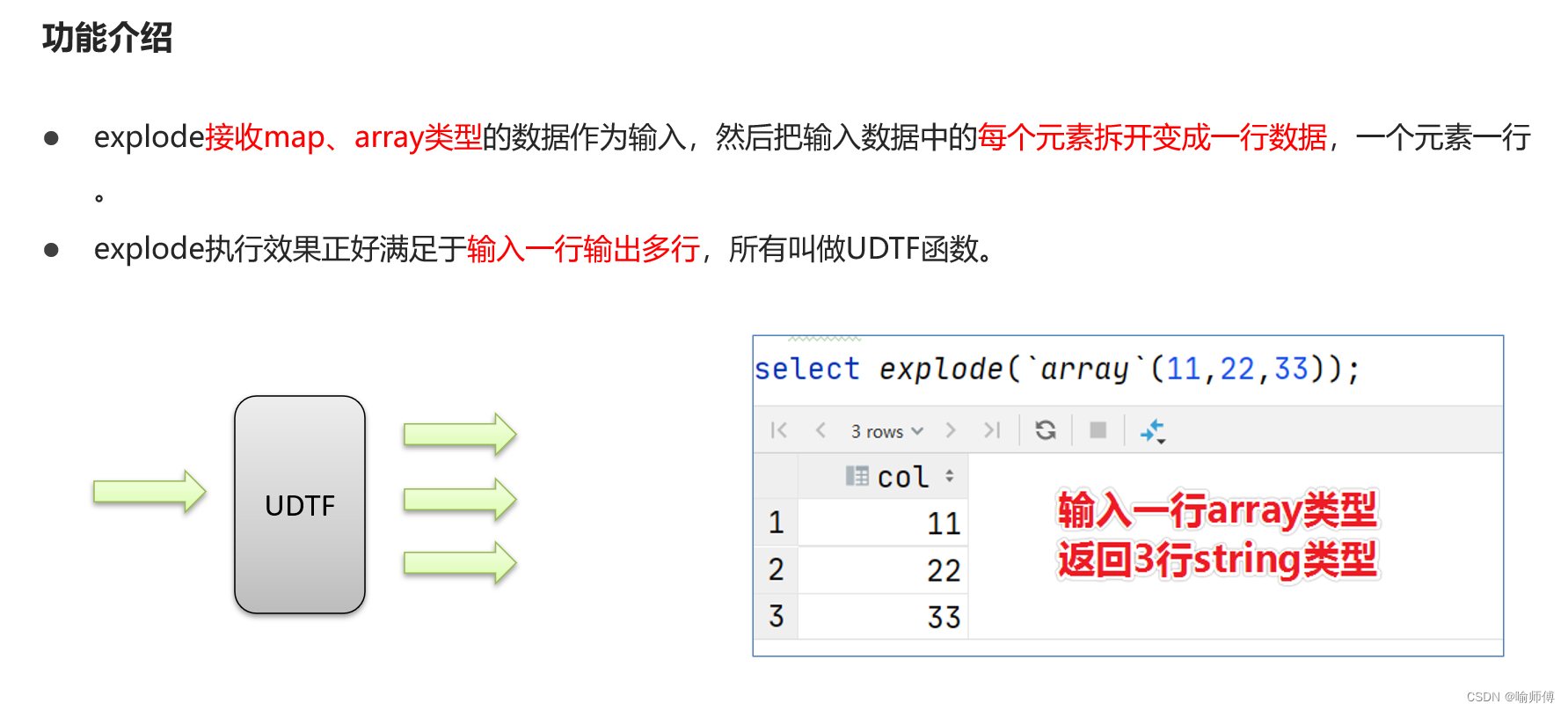

Schematic:

Implementation:

Define the residual block:

import torch
from torch import nn
from torch.nn import functional as F

class Residual(nn.Module):
    """Residual block: two 3x3 convolutions plus a skip connection.

    Example:
        blk = Residual(3, 3)
        X = torch.rand(4, 3, 6, 6)  # (batch size, channels, height, width)
    """

    def __init__(self, input_channels, num_channels, use_1x1conv=False, strides=1):
        super().__init__()
        # input_channels = 3, num_channels = 3: H2 = (6 - 3 + 2*1)/1 + 1 = 6, W2 = 6
        # [4, 3, 6, 6] => [4, 3, 6, 6]
        self.conv1 = nn.Conv2d(input_channels, num_channels, kernel_size=3, padding=1, stride=strides)
        # H2 = (6 - 3 + 2*1)/1 + 1 = 6, W2 = 6
        # [4, 3, 6, 6] => [4, 3, 6, 6]
        self.conv2 = nn.Conv2d(num_channels, num_channels, kernel_size=3, padding=1)
        # Optional 1x1 convolution on the skip path
        if use_1x1conv:
            # H2 = (6 - 1)/1 + 1 = 6, W2 = 6
            # [4, 3, 6, 6] => [4, 3, 6, 6]
            self.conv3 = nn.Conv2d(input_channels, num_channels, kernel_size=1, stride=strides)
        else:
            self.conv3 = None
        self.bn1 = nn.BatchNorm2d(num_channels)
        self.bn2 = nn.BatchNorm2d(num_channels)
        self.relu = nn.ReLU(inplace=True)

    def forward(self, x):
        # Main path: conv -> BN -> ReLU -> conv -> BN
        Y = F.relu(self.bn1(self.conv1(x)))
        Y = self.bn2(self.conv2(Y))
        # Skip path: transform x only when the 1x1 convolution is enabled
        if self.conv3 is not None:
            x = self.conv3(x)
        Y += x
        return F.relu(Y)
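
The shape comments in the code above all use the standard convolution output-size formula, floor((n - k + 2p) / s) + 1. A minimal sketch to check them without PyTorch; the helper name `conv_out_size` is made up for this illustration:

```python
def conv_out_size(n, kernel_size, padding=0, stride=1):
    """Spatial output size of a convolution: floor((n - k + 2p) / s) + 1."""
    return (n - kernel_size + 2 * padding) // stride + 1

# 3x3 convolution, padding 1, stride 1 on a 6x6 input (conv1 and conv2)
same_3x3 = conv_out_size(6, kernel_size=3, padding=1, stride=1)
# 1x1 convolution, no padding, stride 1 (conv3)
same_1x1 = conv_out_size(6, kernel_size=1, padding=0, stride=1)
# With strides=2, conv1 and conv3 both halve the 6x6 input
half_3x3 = conv_out_size(6, kernel_size=3, padding=1, stride=2)
half_1x1 = conv_out_size(6, kernel_size=1, padding=0, stride=2)

print(same_3x3, same_1x1, half_3x3, half_1x1)  # 6 6 3 3
```

Because conv1 and conv3 receive the same stride, the main path and the skip path always end up with the same height and width.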

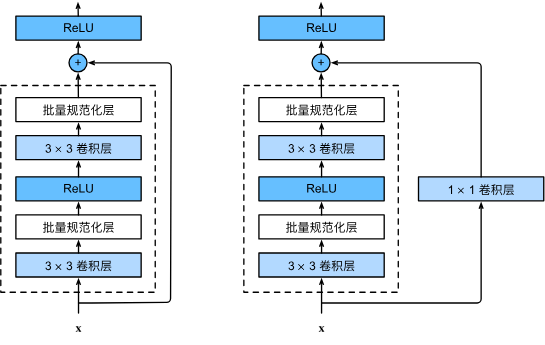

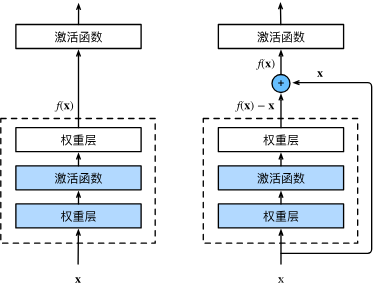

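This code implements two variants of the block: in one, x is passed through the skip connection unchanged; in the other, x goes through a 1x1 convolution before it is added to the main path. The two variants are shown in the schematic.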
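
A small sketch of why the second variant is needed, using plain `nn.Conv2d` layers rather than the `Residual` class. The channel counts (3 to 6) and stride 2 are example values chosen for illustration: once the main path changes the channel count or the resolution, x can no longer be added directly, and a 1x1 convolution with the same output channels and stride restores matching shapes.

```python
import torch
from torch import nn

X = torch.rand(4, 3, 6, 6)  # (batch size, channels, height, width)

# Main path of a downsampling block: 3 -> 6 channels, stride 2
main = nn.Conv2d(3, 6, kernel_size=3, padding=1, stride=2)
Y = main(X)  # shape [4, 6, 3, 3]

# Identity skip: X is still [4, 3, 6, 6], so the addition fails
try:
    _ = Y + X
    identity_ok = True
except RuntimeError:
    identity_ok = False

# 1x1 convolution on the skip path with matching channels and stride
skip = nn.Conv2d(3, 6, kernel_size=1, stride=2)
out = Y + skip(X)  # shape [4, 6, 3, 3]

print(tuple(Y.shape), identity_ok, tuple(out.shape))
```

When input and output shapes already agree (same channels, stride 1), the plain identity skip is enough and the 1x1 convolution can be left out.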

Define the ResNet model:

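# resnet_block(input_channels, num_channels, num_residuals, first_block) must be
# defined beforehand; it returns a list of Residual blocks for one stage.

# Stem: 7x7 convolution, batch norm, ReLU, 3x3 max pooling
b1 = nn.Sequential(nn.Conv2d(1, 64, kernel_size=7, stride=2, padding=3),
                   nn.BatchNorm2d(64), nn.ReLU(),
                   nn.MaxPool2d(kernel_size=3, stride=2, padding=1))
# Four stages of two residual blocks each
b2 = nn.Sequential(*resnet_block(64, 64, 2, first_block=True))
b3 = nn.Sequential(*resnet_block(64, 128, 2))
b4 = nn.Sequential(*resnet_block(128, 256, 2))
b5 = nn.Sequential(*resnet_block(256, 512, 2))
# Head: global average pooling, then a 10-class linear layer
net = nn.Sequential(b1, b2, b3, b4, b5,
                    nn.AdaptiveAvgPool2d((1, 1)),
                    nn.Flatten(), nn.Linear(512, 10))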
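
The model code calls `resnet_block`, which these notes never define. The sketch below is an assumption about what it looks like, modeled on the helper of the same name in the d2l textbook: the first block of every stage except the first halves the resolution and switches on the 1x1 convolution. The `Residual` class is repeated in condensed form only so the snippet runs on its own, and the 96x96 input matches the `resize=96` used for training.

```python
import torch
from torch import nn
from torch.nn import functional as F

class Residual(nn.Module):
    """Condensed copy of the Residual block defined earlier."""
    def __init__(self, input_channels, num_channels, use_1x1conv=False, strides=1):
        super().__init__()
        self.conv1 = nn.Conv2d(input_channels, num_channels, kernel_size=3,
                               padding=1, stride=strides)
        self.conv2 = nn.Conv2d(num_channels, num_channels, kernel_size=3, padding=1)
        self.conv3 = (nn.Conv2d(input_channels, num_channels, kernel_size=1,
                                stride=strides) if use_1x1conv else None)
        self.bn1 = nn.BatchNorm2d(num_channels)
        self.bn2 = nn.BatchNorm2d(num_channels)

    def forward(self, x):
        Y = F.relu(self.bn1(self.conv1(x)))
        Y = self.bn2(self.conv2(Y))
        if self.conv3 is not None:
            x = self.conv3(x)
        return F.relu(Y + x)

def resnet_block(input_channels, num_channels, num_residuals, first_block=False):
    """Assumed helper: build one stage as a list of Residual blocks."""
    blk = []
    for i in range(num_residuals):
        if i == 0 and not first_block:
            # First block of a later stage: halve H/W and change the channel count
            blk.append(Residual(input_channels, num_channels,
                                use_1x1conv=True, strides=2))
        else:
            blk.append(Residual(num_channels, num_channels))
    return blk

b1 = nn.Sequential(nn.Conv2d(1, 64, kernel_size=7, stride=2, padding=3),
                   nn.BatchNorm2d(64), nn.ReLU(),
                   nn.MaxPool2d(kernel_size=3, stride=2, padding=1))
b2 = nn.Sequential(*resnet_block(64, 64, 2, first_block=True))
b3 = nn.Sequential(*resnet_block(64, 128, 2))
b4 = nn.Sequential(*resnet_block(128, 256, 2))
b5 = nn.Sequential(*resnet_block(256, 512, 2))
net = nn.Sequential(b1, b2, b3, b4, b5,
                    nn.AdaptiveAvgPool2d((1, 1)),
                    nn.Flatten(), nn.Linear(512, 10))

# Trace the output shape after each top-level layer for a 96x96 input
X = torch.rand(2, 1, 96, 96)
shapes = []
for layer in net:
    X = layer(X)
    shapes.append((layer.__class__.__name__, tuple(X.shape)))
for name, shape in shapes:
    print(name, shape)
```

With this definition, each stage after b2 doubles the channels and halves the resolution (24, 12, 6, 3 for a 96x96 input), so the global average pooling sees a 512-channel 3x3 feature map.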

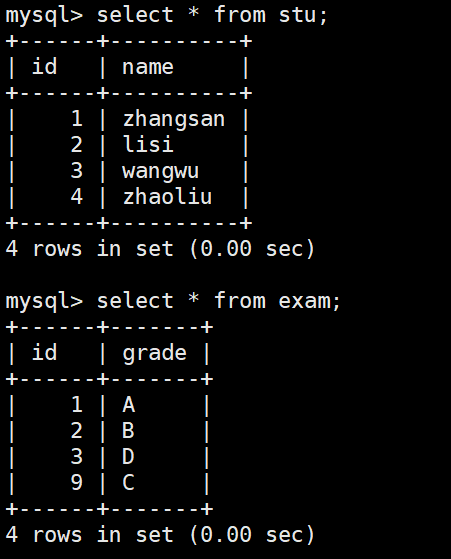

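Run the training:

from d2l import torch as d2l

# Learning rate, number of epochs, batch size
lr, num_epochs, batch_size = 0.05, 10, 256
# Fashion-MNIST, with images resized to 96x96
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size, resize=96)
d2l.train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())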
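
`d2l.train_ch6` hides the training loop. As far as I understand the d2l helper, it applies Xavier initialization, then trains with SGD and cross-entropy loss; the sketch below shows a single step under that assumption and is not the helper's actual code. The tiny linear model and the random batch are stand-ins so the snippet runs quickly; in these notes they would be `net` and a batch from `train_iter`.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Hypothetical stand-in model and random data (not the ResNet or Fashion-MNIST)
model = nn.Sequential(nn.Flatten(), nn.Linear(96 * 96, 10))
X = torch.rand(8, 1, 96, 96)
y = torch.randint(0, 10, (8,))

def init_weights(m):
    # Assumed to mirror the d2l helper: Xavier init for linear and conv layers
    if isinstance(m, (nn.Linear, nn.Conv2d)):
        nn.init.xavier_uniform_(m.weight)

model.apply(init_weights)
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
model.to(device)
X, y = X.to(device), y.to(device)

optimizer = torch.optim.SGD(model.parameters(), lr=0.05)
loss_fn = nn.CrossEntropyLoss()

# One training step: forward, loss, backward, parameter update
model.train()
optimizer.zero_grad()
y_hat = model(X)
loss = loss_fn(y_hat, y)
loss.backward()
optimizer.step()

# Batch accuracy, computed from the predictions made before the update
with torch.no_grad():
    train_acc = (y_hat.argmax(dim=1) == y).float().mean().item()
print(f"loss {loss.item():.3f}, train acc {train_acc:.3f}")
```

A full run repeats this step over every batch in `train_iter` for `num_epochs` epochs and evaluates on `test_iter` after each epoch.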

Output:

loss 0.013, train acc 0.997, test acc 0.917

936.3 examples/sec on cuda:0