InternVL 运行 InternVL 1.5-8bit教程

InternVL 官网仓库及教程

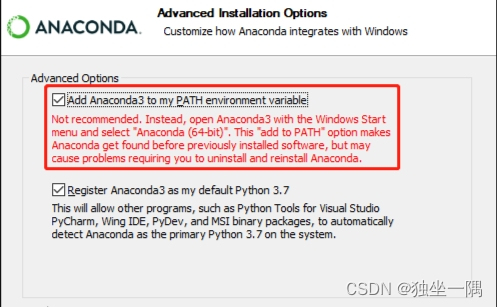

1. 设置最小环境

conda create --name internvl python=3.10 -y

conda activate internvl

conda install pytorch==2.2.2 torchvision pytorch-cuda=11.8 -c pytorch -c nvidia -y

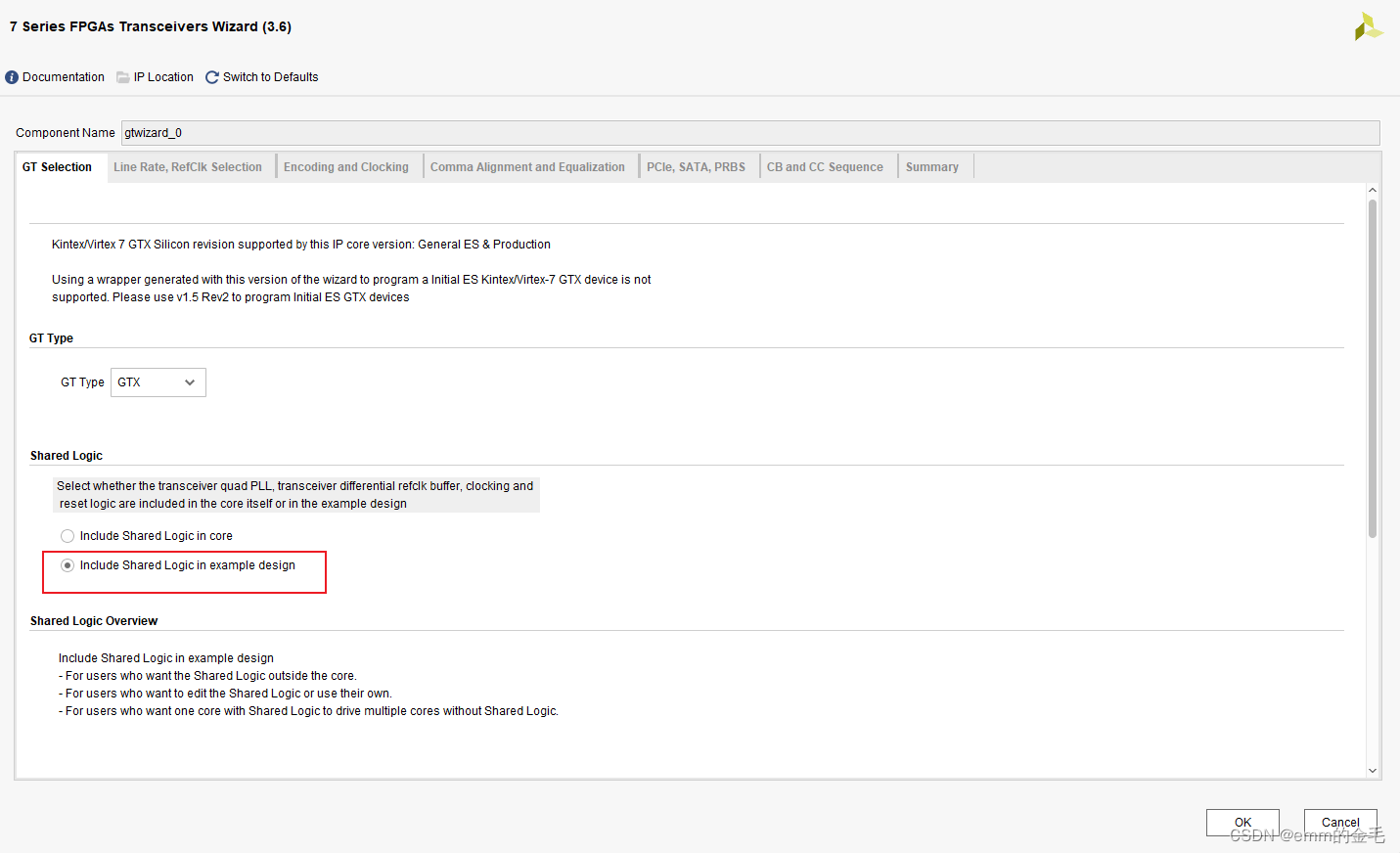

pip install transformers sentencepiece peft einops bitsandbytes accelerate timm ninja packaging protobuf 2.更改模型的cfg文件(config.json)

OpenGVLab/InternVL-Chat-V1-5-Int8 里面包含了config.json文件

- 设置

use_flash_attnfalse - 设置

attn_implementationeager

3.准备脚本 inter.py

from transformers import AutoTokenizer, AutoModel

import torch

import torchvision.transforms as T

from PIL import Image

from torchvision.transforms.functional import InterpolationMode

IMAGENET_MEAN = (0.485, 0.456, 0.406)

IMAGENET_STD = (0.229, 0.224, 0.225)

def build_transform(input_size):

MEAN, STD = IMAGENET_MEAN, IMAGENET_STD

transform = T.Compose([

T.Lambda(lambda img: img.convert('RGB') if img.mode != 'RGB' else img),

T.Resize((input_size, input_size), interpolation=InterpolationMode.BICUBIC),

T.ToTensor(),

T.Normalize(mean=MEAN, std=STD)

])

return transform

def find_closest_aspect_ratio(aspect_ratio, target_ratios, width, height, image_size):

best_ratio_diff = float('inf')

best_ratio = (1, 1)

area = width * height

for ratio in target_ratios:

target_aspect_ratio = ratio[0] / ratio[1]

ratio_diff = abs(aspect_ratio - target_aspect_ratio)

if ratio_diff < best_ratio_diff:

best_ratio_diff = ratio_diff

best_ratio = ratio

elif ratio_diff == best_ratio_diff:

if area > 0.5 * image_size * image_size * ratio[0] * ratio[1]:

best_ratio = ratio

return best_ratio

def dynamic_preprocess(image, min_num=1, max_num=6, image_size=448, use_thumbnail=False):

orig_width, orig_height = image.size

aspect_ratio = orig_width / orig_height

# calculate the existing image aspect ratio

target_ratios = set(

(i, j) for n in range(min_num, max_num + 1) for i in range(1, n + 1) for j in range(1, n + 1) if

i * j <= max_num and i * j >= min_num)

target_ratios = sorted(target_ratios, key=lambda x: x[0] * x[1])

# find the closest aspect ratio to the target

target_aspect_ratio = find_closest_aspect_ratio(

aspect_ratio, target_ratios, orig_width, orig_height, image_size)

# calculate the target width and height

target_width = image_size * target_aspect_ratio[0]

target_height = image_size * target_aspect_ratio[1]

blocks = target_aspect_ratio[0] * target_aspect_ratio[1]

# resize the image

resized_img = image.resize((target_width, target_height))

processed_images = []

for i in range(blocks):

box = (

(i % (target_width // image_size)) * image_size,

(i // (target_width // image_size)) * image_size,

((i % (target_width // image_size)) + 1) * image_size,

((i // (target_width // image_size)) + 1) * image_size

)

# split the image

split_img = resized_img.crop(box)

processed_images.append(split_img)

assert len(processed_images) == blocks

if use_thumbnail and len(processed_images) != 1:

thumbnail_img = image.resize((image_size, image_size))

processed_images.append(thumbnail_img)

return processed_images

def load_image(image_file, input_size=448, max_num=6):

image = Image.open(image_file).convert('RGB')

transform = build_transform(input_size=input_size)

images = dynamic_preprocess(image, image_size=input_size, use_thumbnail=True, max_num=max_num)

pixel_values = [transform(image) for image in images]

pixel_values = torch.stack(pixel_values)

return pixel_values

path = "./share_model/InternVL-Chat-V1-5-Int8"

model = AutoModel.from_pretrained(

path,

torch_dtype=torch.bfloat16,

low_cpu_mem_usage=True,

trust_remote_code=True,

load_in_8bit=True).eval()

tokenizer = AutoTokenizer.from_pretrained(path, trust_remote_code=True)

# set the max number of tiles in `max_num`

pixel_values = load_image("misc/dog.jpg", max_num=6).to(torch.bfloat16).cuda()

generation_config = dict(

num_beams=1,

max_new_tokens=512,

do_sample=False,

)

# single-round single-image conversation

question = "请详细描述图片" # Please describe the picture in detail

response = model.chat(tokenizer, pixel_values, question, generation_config)

print(question, response)4.检查结果

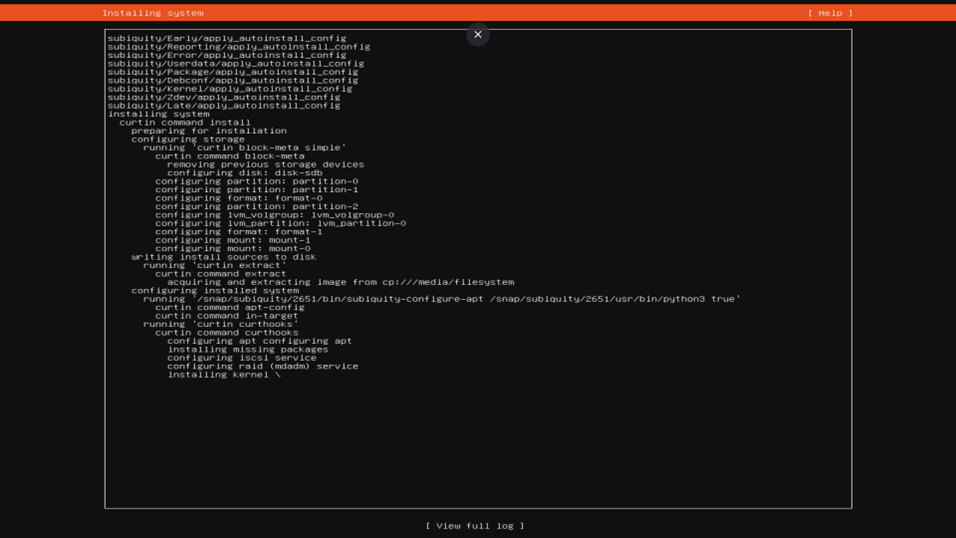

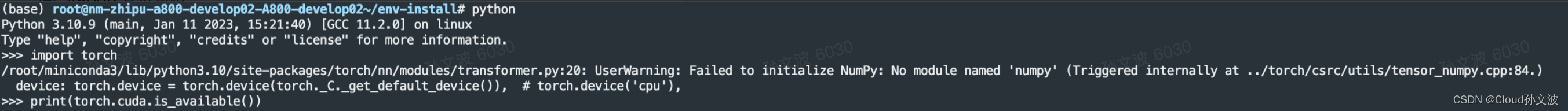

(internvl) /home/temp # python test_invl.py

FlashAttention is not installed.

The `load_in_4bit` and `load_in_8bit` arguments are deprecated and will be removed in the future versions. Please, pass a `BitsAndBytesConfig` object in `quantization_config` argument instead.

Unused kwargs: ['quant_method']. These kwargs are not used in <class 'transformers.utils.quantization_config.BitsAndBytesConfig'>.

Loading checkpoint shards: 100%|███████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 6/6 [00:52<00:00, 8.77s/it]

Special tokens have been added in the vocabulary, make sure the associated word embeddings are fine-tuned or trained.

dynamic ViT batch size: 5

请详细描述图片 这张图片展示了一只金毛寻回犬幼犬坐在一片开满橙色花朵的草地上。幼犬的毛发是金黄色,看起来非常柔软和蓬松。它的眼睛是深色的,嘴巴张开,似乎在微笑或者是在喘气,显得非常活泼和快乐。背景是一片模糊的绿色草地,可能是由于使用了浅景深拍摄技术,使得焦点集中在幼犬身上,而背景则显得柔和模糊。整体上,这张图片传达了一种温馨和快乐的氛围,幼犬看起来非常健康和快乐。