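4. Using the API

How to call this project was covered in detail in the previous article, "Just Released! TensorRT C# API: Deploying Deep Learning Models with C# and TensorRT". Below, the updated interface is demonstrated step by step using a Yolov8-cls model.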

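4.1 Creating and Configuring the C# Project

First, create a simple C# project and then configure it.

Start by adding a reference to the TensorRT C# API project, as shown in the figure below: simply reference the DLL built from the C# project described above.

Next, add OpenCvSharp, which can be installed from NuGet. Only two packages are needed: OpenCvSharp4.Extensions and OpenCvSharp4.Windows.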

Once the project is configured, its project file looks like this:

```xml
<Project Sdk="Microsoft.NET.Sdk">
  <PropertyGroup>
    <OutputType>Exe</OutputType>
    <TargetFramework>net6.0</TargetFramework>
    <RootNamespace>TensorRT_CSharp_API_demo</RootNamespace>
    <ImplicitUsings>enable</ImplicitUsings>
    <Nullable>enable</Nullable>
  </PropertyGroup>
  <ItemGroup>
    <PackageReference Include="OpenCvSharp4.Extensions" Version="4.9.0.20240103" />
    <PackageReference Include="OpenCvSharp4.Windows" Version="4.9.0.20240103" />
  </ItemGroup>
  <ItemGroup>
    <Reference Include="TensorRtSharp">
      <HintPath>E:\GitSpace\TensorRT-CSharp-API\src\TensorRtSharp\bin\Release\net6.0\TensorRtSharp.dll</HintPath>
    </Reference>
  </ItemGroup>
</Project>
```

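4.2 Adding the Inference Code

This section walks through a simple image classification project, using Yolov8-cls as the example.

(1) Converting the model to an engine

When converting a model with dynamic inputs, you must specify the range of input shapes the engine will support: minShapes is the smallest shape supported at inference time, optShapes is the shape the engine is optimized for, and maxShapes is the largest supported shape. Conversion takes a fair amount of time; once it succeeds, an .engine file with the same name is generated in the same directory as the model.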

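```csharp
// Shape range (N, C, H, W) of the dynamic "images" input: batch size 1 to 20
Dims minShapes = new Dims(1, 3, 224, 224);
Dims optShapes = new Dims(10, 3, 224, 224);
Dims maxShapes = new Dims(20, 3, 224, 224);
// onnxPath: path to the exported Yolov8-cls ONNX model
Nvinfer.OnnxToEngine(onnxPath, 20, "images", minShapes, optShapes, maxShapes);
```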
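At inference time, every input shape set on the engine must lie between minShapes and maxShapes in each dimension; optShapes does not restrict anything, it is the shape TensorRT tunes its kernels for. The sketch below illustrates that rule with plain `int[]` arrays instead of the library's `Dims` type; `ShapeProfile` and `IsWithinProfile` are hypothetical helpers, not part of TensorRtSharp.

```csharp
using System;

// Hypothetical helper: models one optimization profile as plain arrays.
public static class ShapeProfile
{
    // Returns true if every dimension of 'shape' lies in [min[d], max[d]].
    public static bool IsWithinProfile(int[] shape, int[] min, int[] max)
    {
        if (shape.Length != min.Length || shape.Length != max.Length)
            return false;
        for (int d = 0; d < shape.Length; d++)
        {
            if (shape[d] < min[d] || shape[d] > max[d])
                return false;
        }
        return true;
    }
}
```

With the profile above, a batch of 5 images, shape (5, 3, 224, 224), is accepted, while 21 images would exceed maxShapes and have to be split into smaller batches.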

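(2) Defining the prediction method

The code below defines the prediction method for the Yolov8-cls model. It supports dynamic-batch inference: the input batch size is set automatically from the number of images passed in, and inference is then run.

Compared with the code in the previous article, the main addition is the line predictor.SetBindingDimensions("images", new Dims(batchNum, 3, 224, 224));, which sets the actual input shape for the current batch. In addition, the maximum batch size is set to 20 at initialization, matching the value used during model conversion above.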
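The input buffer passed to `LoadInferenceData` is a flat float array in NCHW order, so image k of a batch occupies indices k·3·224·224 up to (k+1)·3·224·224. The following is a small sketch of that layout arithmetic; `NchwLayout` is a hypothetical helper used only for illustration.

```csharp
using System;

// Hypothetical helper illustrating flat NCHW indexing for a batch of images.
public static class NchwLayout
{
    // Flat index of element (n, c, h, w) in a tensor of shape (N, C, H, W).
    public static int Index(int n, int c, int h, int w, int channels, int height, int width)
        => ((n * channels + c) * height + h) * width + w;

    // Length of the flat buffer needed for 'batch' images of shape (C, H, W).
    public static int BufferLength(int batch, int channels, int height, int width)
        => batch * channels * height * width;
}
```

Because offsets are relative to the first image of the current batch, a buffer of `BufferLength(batchNum, 3, 224, 224)` floats is exactly what the shape set by SetBindingDimensions expects.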

```csharp
// Needed in addition to the project's ImplicitUsings; also import the
// TensorRtSharp namespace that exposes Nvinfer and Dims.
using System.Runtime.InteropServices;
using OpenCvSharp;
using OpenCvSharp.Dnn;

public class Yolov8Cls
{
    // Maximum batch size; must match maxShapes used when building the engine.
    private const int MaxBatch = 20;

    public Dims InputDims;
    public int BatchNum;
    private Nvinfer predictor;

    public Yolov8Cls(string enginePath)
    {
        predictor = new Nvinfer(enginePath, MaxBatch);
        InputDims = predictor.GetBindingDimensions("images");
    }

    public void Predict(List<Mat> images)
    {
        // Put all images in one batch, but never more than the engine supports.
        BatchNum = Math.Min(images.Count, MaxBatch);
        for (int begImgNo = 0; begImgNo < images.Count; begImgNo += BatchNum)
        {
            DateTime start = DateTime.Now;
            int endImgNo = Math.Min(images.Count, begImgNo + BatchNum);
            int batchNum = endImgNo - begImgNo;   // actual size of this batch
            int imageLen = 3 * 224 * 224;
            // Flat NCHW buffer sized for the actual batch
            float[] inputData = new float[batchNum * imageLen];
            for (int ino = begImgNo; ino < endImgNo; ino++)
            {
                // Resize to 224x224, scale to [0, 1], swap BGR->RGB, no crop -> 1x3x224x224 blob
                using Mat inputMat = CvDnn.BlobFromImage(images[ino], 1.0 / 255.0, new OpenCvSharp.Size(224, 224), 0, true, false);
                // Offset is relative to the first image of this batch
                Marshal.Copy(inputMat.Ptr(0), inputData, (ino - begImgNo) * imageLen, imageLen);
            }
            // Set the real input shape for this batch, then upload the data
            predictor.SetBindingDimensions("images", new Dims(batchNum, 3, 224, 224));
            predictor.LoadInferenceData("images", inputData);
            DateTime end = DateTime.Now;
            Console.WriteLine("[ INFO ] Input image data processing time: " + (end - start).TotalMilliseconds + " ms.");

            predictor.infer();   // first run is not timed (warm-up)
            start = DateTime.Now;
            predictor.infer();
            end = DateTime.Now;
            Console.WriteLine("[ INFO ] Model inference time: " + (end - start).TotalMilliseconds + " ms.");

            start = DateTime.Now;
            float[] outputData = predictor.GetInferenceResult("output0");
            for (int i = 0; i < batchNum; ++i)
            {
                Console.WriteLine(string.Format("[ INFO ] Classification Top {0} result : ", 2));
                // 1000 class scores per image
                float[] data = new float[1000];
                Array.Copy(outputData, i * 1000, data, 0, 1000);
                List<int> sortResult = Argsort(new List<float>(data));
                for (int j = 0; j < 2; ++j)
                {
                    string msg = "";
                    msg += ("index: " + sortResult[j] + "\t");
                    msg += ("score: " + data[sortResult[j]] + "\t");
                    Console.WriteLine("[ INFO ] " + msg);
                }
            }
            end = DateTime.Now;
            Console.WriteLine("[ INFO ] Inference result processing time: " + (end - start).TotalMilliseconds + " ms.\n");
        }
    }

    // Returns the indices of 'array' sorted by descending value.
    public static List<int> Argsort(List<float> array)
    {
        int arrayLen = array.Count;
        List<float[]> newArray = new List<float[]>();
        for (int i = 0; i < arrayLen; i++)
        {
            newArray.Add(new float[] { array[i], i });   // (value, index) pairs
        }
        newArray.Sort((a, b) => b[0].CompareTo(a[0]));
        List<int> arrayIndex = new List<int>();
        foreach (float[] item in newArray)
        {
            arrayIndex.Add((int)item[1]);
        }
        return arrayIndex;
    }
}
```
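The `Argsort` helper sorts all 1000 scores even though only the top two are printed. As a hedged alternative, the k best indices can be selected directly with a small insertion loop; `TopKSelector` and `TopK` are hypothetical names, not part of the project.

```csharp
using System;

public static class TopKSelector
{
    // Returns the indices of the k largest scores, highest first.
    // Runs in O(n * k), which is cheap for small k such as 2 or 5.
    public static int[] TopK(float[] scores, int k)
    {
        k = Math.Max(0, Math.Min(k, scores.Length));
        int[] best = new int[k];
        int count = 0;
        for (int i = 0; i < scores.Length; i++)
        {
            // Find the insertion position among the current best (descending order).
            int pos = count;
            while (pos > 0 && scores[best[pos - 1]] < scores[i])
                pos--;
            if (pos >= k)
                continue;   // not better than the current k-th best
            // Shift lower-ranked entries down, dropping the last one if full.
            int last = Math.Min(count, k - 1);
            for (int j = last; j > pos; j--)
                best[j] = best[j - 1];
            best[pos] = i;
            if (count < k) count++;
        }
        return best;
    }
}
```

For one image, `TopKSelector.TopK(data, 2)` returns the two highest-scoring class indices in descending order of score, without sorting or allocating all 1000 entries.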

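(3) Calling the prediction method

The code below calls the prediction method defined above. To compare performance at different batch sizes, several images are loaded and predicted in groups of increasing size: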

```csharp
Yolov8Cls yolov8Cls = new Yolov8Cls("E:\\Model\\yolov8\\yolov8s-cls_b.engine");
Mat image1 = Cv2.ImRead("E:\\ModelData\\image\\demo_4.jpg");
Mat image2 = Cv2.ImRead("E:\\ModelData\\image\\demo_5.jpg");
Mat image3 = Cv2.ImRead("E:\\ModelData\\image\\demo_6.jpg");
Mat image4 = Cv2.ImRead("E:\\ModelData\\image\\demo_7.jpg");
Mat image5 = Cv2.ImRead("E:\\ModelData\\image\\demo_8.jpg");
// Batch sizes 2, 3, 4 and 5
yolov8Cls.Predict(new List<Mat> { image1, image2 });
yolov8Cls.Predict(new List<Mat> { image1, image2, image3 });
yolov8Cls.Predict(new List<Mat> { image1, image2, image3, image4 });
yolov8Cls.Predict(new List<Mat> { image1, image2, image3, image4, image5 });
```
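The engine in this example accepts at most 20 images per inference, so a larger set of images has to be split into consecutive batches of at most 20. A minimal sketch, assuming .NET 6 or later (which provides `Enumerable.Chunk`); `Batching.SplitIntoBatches` is a hypothetical helper, not part of the project.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class Batching
{
    // Splits items into consecutive batches of at most maxBatch elements
    // using Enumerable.Chunk (available since .NET 6).
    public static List<List<T>> SplitIntoBatches<T>(IReadOnlyList<T> items, int maxBatch)
    {
        if (maxBatch <= 0)
            throw new ArgumentOutOfRangeException(nameof(maxBatch));
        return items.Chunk(maxBatch).Select(chunk => chunk.ToList()).ToList();
    }
}
```

Each resulting list can be passed straight to the prediction method, e.g. `foreach (var batch in Batching.SplitIntoBatches(images, 20)) yolov8Cls.Predict(batch);`.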

4.3 Project Demo

After configuring the project and writing the code, run it. The output is as follows:

```
[ INFO ] Input image data processing time: 5.5277 ms.
[ INFO ] Model inference time: 1.3685 ms.
[ INFO ] Classification Top 2 result :
[ INFO ] index: 386 score: 0.8754883
[ INFO ] index: 385 score: 0.08013916
[ INFO ] Classification Top 2 result :
[ INFO ] index: 293 score: 0.89160156
[ INFO ] index: 276 score: 0.05480957
[ INFO ] Inference result processing time: 3.0823 ms.

[ INFO ] Input image data processing time: 2.7356 ms.
[ INFO ] Model inference time: 1.4435 ms.
[ INFO ] Classification Top 2 result :
[ INFO ] index: 386 score: 0.8754883
[ INFO ] index: 385 score: 0.08013916
[ INFO ] Classification Top 2 result :
[ INFO ] index: 293 score: 0.89160156
[ INFO ] index: 276 score: 0.05480957
[ INFO ] Classification Top 2 result :
[ INFO ] index: 14 score: 0.99853516
[ INFO ] index: 88 score: 0.0006980896
[ INFO ] Inference result processing time: 1.5137 ms.

[ INFO ] Input image data processing time: 3.7277 ms.
[ INFO ] Model inference time: 1.5285 ms.
[ INFO ] Classification Top 2 result :
[ INFO ] index: 386 score: 0.8754883
[ INFO ] index: 385 score: 0.08013916
[ INFO ] Classification Top 2 result :
[ INFO ] index: 293 score: 0.89160156
[ INFO ] index: 276 score: 0.05480957
[ INFO ] Classification Top 2 result :
[ INFO ] index: 14 score: 0.99853516
[ INFO ] index: 88 score: 0.0006980896
[ INFO ] Classification Top 2 result :
[ INFO ] index: 294 score: 0.96533203
[ INFO ] index: 269 score: 0.0124435425
[ INFO ] Inference result processing time: 2.7328 ms.

[ INFO ] Input image data processing time: 4.063 ms.
[ INFO ] Model inference time: 1.6947 ms.
[ INFO ] Classification Top 2 result :
[ INFO ] index: 386 score: 0.8754883
[ INFO ] index: 385 score: 0.08013916
[ INFO ] Classification Top 2 result :
[ INFO ] index: 293 score: 0.89160156
[ INFO ] index: 276 score: 0.05480957
[ INFO ] Classification Top 2 result :
[ INFO ] index: 14 score: 0.99853516
[ INFO ] index: 88 score: 0.0006980896
[ INFO ] Classification Top 2 result :
[ INFO ] index: 294 score: 0.96533203
[ INFO ] index: 269 score: 0.0124435425
[ INFO ] Classification Top 2 result :
[ INFO ] index: 127 score: 0.9008789
[ INFO ] index: 128 score: 0.07745361
[ INFO ] Inference result processing time: 3.5664 ms.
```

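As the output shows, the model inference time for batch sizes 2 to 5 only grows from 1.3685 ms to 1.6947 ms, so the inference cost per image drops considerably as the batch size increases.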
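Dividing each logged inference time by its batch size makes this gain explicit. The numbers below are copied from the output above; `PerImageLatency` is only an illustrative calculation.

```csharp
using System;

public static class PerImageLatency
{
    // Batch sizes and model inference times (ms) from the log above.
    public static readonly int[] BatchSizes = { 2, 3, 4, 5 };
    public static readonly double[] InferenceMs = { 1.3685, 1.4435, 1.5285, 1.6947 };

    // Average inference time per image for each batch size.
    public static double[] Compute()
    {
        var result = new double[BatchSizes.Length];
        for (int i = 0; i < BatchSizes.Length; i++)
            result[i] = InferenceMs[i] / BatchSizes[i];
        return result;
    }
}
```

This gives roughly 0.68, 0.48, 0.38 and 0.34 ms per image for batches of 2, 3, 4 and 5 images. These are single-run timings, so small fluctuations between runs are to be expected.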

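5. Summary

In this project, we extended the TensorRT C# API to support models with dynamic inputs, and used a classification deployment workflow to show how the TensorRT C# API is used, so that you can get started quickly.

To make things easier for developers, a set of companion demo projects has also been developed. They are based on Yolov8 and cover object detection, instance segmentation, human pose (keypoint) estimation, image classification, and oriented (rotated) object detection. All of them support dynamic-input models, so any number of images can be inferred at once.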

- Yolov8 Det (object detection) source code: https://github.com/guojin-yan/TensorRT-CSharp-API-Samples/blob/master/model_samples/yolov8_custom_dynamic/Yolov8Det.cs
- Yolov8 Seg (instance segmentation) source code: https://github.com/guojin-yan/TensorRT-CSharp-API-Samples/blob/master/model_samples/yolov8_custom_dynamic/Yolov8Seg.cs
- Yolov8 Pose (human keypoint detection) source code: https://github.com/guojin-yan/TensorRT-CSharp-API-Samples/blob/master/model_samples/yolov8_custom_dynamic/Yolov8Pose.cs
- Yolov8 Cls (image classification) source code: https://github.com/guojin-yan/TensorRT-CSharp-API-Samples/blob/master/model_samples/yolov8_custom_dynamic/Yolov8Cls.cs
- Yolov8 Obb (oriented object detection) source code: https://github.com/guojin-yan/TensorRT-CSharp-API-Samples/blob/master/model_samples/yolov8_custom_dynamic/Yolov8Obb.cs

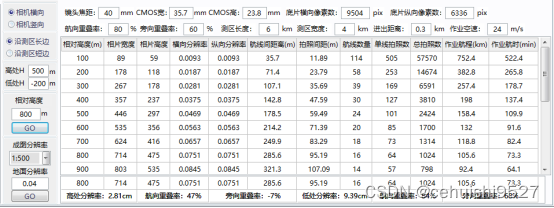

The demo programs were also timed. The times below come from a single run of each program. Test environment:

- CPU: i7-165G7
- CUDA version: 12.2
- cuDNN: 8.9.3
- TensorRT: 8.6.1.6

| Model | Batch | Preprocessing (ms) | Inference (ms) | Postprocessing (ms) |
|---|---|---|---|---|
| Yolov8s-Det | 1 | 16.6 | 4.6 | 13.1 |
| Yolov8s-Det | 4 | 38.0 | 12.4 | 32.4 |
| Yolov8s-Det | 8 | 70.5 | 23.0 | 80.1 |
| Yolov8s-Obb | 1 | 28.7 | 8.9 | 17.7 |
| Yolov8s-Obb | 4 | 81.7 | 25.9 | 67.4 |
| Yolov8s-Obb | 8 | 148.4 | 44.6 | 153.0 |
| Yolov8s-Seg | 1 | 15.4 | 5.4 | 67.4 |
| Yolov8s-Seg | 4 | 37.3 | 15.5 | 220.6 |
| Yolov8s-Seg | 8 | 78.7 | 26.9 | 433.6 |
| Yolov8s-Pose | 1 | 15.1 | 5.2 | 8.7 |
| Yolov8s-Pose | 4 | 39.2 | 13.2 | 14.3 |
| Yolov8s-Pose | 8 | 67.8 | 23.1 | 27.7 |
| Yolov8s-Cls | 1 | 9.9 | 1.3 | 1.9 |
| Yolov8s-Cls | 4 | 14.7 | 1.5 | 2.3 |
| Yolov8s-Cls | 8 | 22.6 | 2.0 | 2.9 |

Finally, if you run into any problems while using it, feel free to contact me.

![19-指针[下]](https://img-blog.csdnimg.cn/12ce5c693ef54ea2bc20eb718851e9fa.jpg)