This post continues from the previous article, Machine Learning - Neural Network Classification.

First, move the data from NumPy arrays into PyTorch tensors.

import torch

# Move the data from NumPy arrays into PyTorch tensors (torch.float is float32, PyTorch's default dtype)
X = torch.from_numpy(X).type(torch.float)
y = torch.from_numpy(y).type(torch.float)
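Why the `.type(torch.float)` call matters: NumPy creates float64 arrays by default and `torch.from_numpy` keeps that dtype, while layers such as `nn.Linear` hold float32 parameters by default, so feeding them float64 input raises a dtype error. The labels are converted too, because `BCEWithLogitsLoss` (used later) expects floating-point targets. A small sketch, where `X_np` and `y_np` are hypothetical stand-ins for the dataset from the previous article:

```python
import numpy as np
import torch

# Hypothetical stand-in data: NumPy defaults to float64 for random floats
X_np = np.random.rand(4, 2)
y_np = np.array([0, 1, 1, 0], dtype=np.int64)  # integer class labels

# torch.from_numpy keeps the NumPy dtype ...
print(torch.from_numpy(X_np).dtype)  # torch.float64

# ... so convert explicitly to float32 (torch.float), as in the article
X_t = torch.from_numpy(X_np).type(torch.float)
y_t = torch.from_numpy(y_np).type(torch.float)
print(X_t.dtype, y_t.dtype)  # torch.float32 torch.float32
```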

Next, split the data into a training set and a test set.

from sklearn.model_selection import train_test_split

# Hold out 20% of the samples for testing; random_state makes the split reproducible
X_train, X_test, y_train, y_test = train_test_split(X,
                                                    y,
                                                    test_size=0.2,
                                                    random_state=42)

print(f"Length of X_train: {len(X_train)}")
print(f"Length of X_test: {len(X_test)}")
print(f"Length of y_train: {len(y_train)}")
print(f"Length of y_test: {len(y_test)}")

# Output:
Length of X_train: 800
Length of X_test: 200
Length of y_train: 800
Length of y_test: 200
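The 800/200 lengths follow from `test_size=0.2`: 20% of the 1000 samples (1000 × 0.2 = 200) go to the test set and the remaining 800 to the training set. The sketch below reproduces that arithmetic with a manual shuffle-and-slice on hypothetical random data; it only illustrates the idea and is not how `train_test_split` is implemented internally.

```python
import torch

torch.manual_seed(42)

# Hypothetical dataset: 1000 samples, 2 features, binary float labels
X = torch.rand(1000, 2)
y = torch.randint(0, 2, (1000,)).type(torch.float)

test_size = 0.2
n_test = int(len(X) * test_size)   # 1000 * 0.2 = 200 test samples
perm = torch.randperm(len(X))      # shuffled indices 0..999

test_idx, train_idx = perm[:n_test], perm[n_test:]
X_train, X_test = X[train_idx], X[test_idx]
y_train, y_test = y[train_idx], y[test_idx]

print(len(X_train), len(X_test), len(y_train), len(y_test))  # 800 200 800 200
```

Shuffling before slicing matters: without it, any ordering in the original data (for example all samples of one class first) would leak into the split.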

Now we can build the model.

# Create a model
import torch
from torch import nn

# 1. Construct a model class that subclasses nn.Module
class CircleModelV0(nn.Module):
    def __init__(self):
        super().__init__()
        # 2. Create 2 nn.Linear layers that match the input shape of X and the output shape of y
        # layer_1 takes in 2 features (X) and produces 5; the 5 is the number of hidden units (neurons)
        self.layer_1 = nn.Linear(in_features=2, out_features=5)
        # layer_2 takes in 5 features and produces 1 feature (y)
        self.layer_2 = nn.Linear(in_features=5, out_features=1)

    # 3. Define a forward method containing the forward pass computation
    def forward(self, x):
        # x goes through layer_1 first, then layer_1's output goes through layer_2;
        # the result has one value per sample, shape (N, 1)
        return self.layer_2(self.layer_1(x))

model_0 = CircleModelV0().to("cpu")
print(model_0)

# Output:
CircleModelV0(
  (layer_1): Linear(in_features=2, out_features=5, bias=True)
  (layer_2): Linear(in_features=5, out_features=1, bias=True)
)
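To see how data flows through the two layers, here is a sketch of the same 2 -> 5 -> 1 architecture written with `nn.Sequential` (an alternative to subclassing `nn.Module`, not the article's class), fed a hypothetical batch of 8 random samples:

```python
import torch
from torch import nn

# Same layer sizes as CircleModelV0, expressed with nn.Sequential
model_seq = nn.Sequential(
    nn.Linear(in_features=2, out_features=5),  # 2 input features -> 5 hidden units
    nn.Linear(in_features=5, out_features=1),  # 5 hidden units -> 1 output
)

x = torch.rand(8, 2)          # hypothetical batch: 8 samples, 2 features each
hidden = model_seq[0](x)      # output of the first layer only
out = model_seq(x)            # output of the full model (raw logits)

print(hidden.shape)  # torch.Size([8, 5])
print(out.shape)     # torch.Size([8, 1])
```

For a simple stack of layers applied one after another, `nn.Sequential` is shorter; subclassing `nn.Module` is more flexible when `forward` needs custom logic.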

The number of hidden units in a layer is a hyperparameter (a value you set yourself), and there is no fixed value you have to use.

More hidden units generally give the model more capacity to learn patterns, but there is such a thing as too many (for example, slower training or overfitting). The right number depends on the type of model and the dataset you are working with.
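One way to treat the hidden-unit count as a tunable hyperparameter is to pass it into the constructor. The class below is a hypothetical variant of `CircleModelV0` (the name `CircleModelHidden` and the `hidden_units` argument are illustrative, not from the article). Counting parameters shows how capacity grows with the width h: layer_1 has 2h weights plus h biases, layer_2 has h weights plus 1 bias, so 4h + 1 in total.

```python
from torch import nn

class CircleModelHidden(nn.Module):
    """Hypothetical variant of CircleModelV0 with a configurable hidden layer width."""
    def __init__(self, hidden_units: int = 5):
        super().__init__()
        self.layer_1 = nn.Linear(in_features=2, out_features=hidden_units)
        self.layer_2 = nn.Linear(in_features=hidden_units, out_features=1)

    def forward(self, x):
        return self.layer_2(self.layer_1(x))

# Count trainable parameters for a few hidden-unit settings
param_counts = {}
for h in (5, 10, 50):
    model = CircleModelHidden(hidden_units=h)
    param_counts[h] = sum(p.numel() for p in model.parameters())

print(param_counts)  # {5: 21, 10: 41, 50: 201}
```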

Setting up the loss function and optimizer

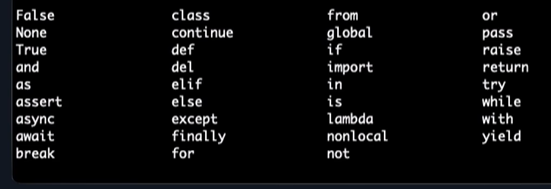

| Loss function/Optimizer | Problem type | PyTorch code |
|---|---|---|
| Stochastic Gradient Descent (SGD) optimizer | Classification, regression, many others | torch.optim.SGD() |
| Adam optimizer | Classification, regression, many others | torch.optim.Adam() |
| Binary cross entropy loss | Binary classification | torch.nn.BCEWithLogitsLoss or torch.nn.BCELoss |
| Cross entropy loss | Multi-class classification | torch.nn.CrossEntropyLoss |
| Mean absolute error (MAE) or L1 loss | Regression | torch.nn.L1Loss |
| Mean squared error (MSE) or L2 loss | Regression | torch.nn.MSELoss |
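As a quick check of the two regression losses in the table, take hypothetical predictions [1, 2, 3] against targets [1, 2, 5]: only the last prediction is off, by 2. L1Loss averages the absolute errors, (0 + 0 + 2) / 3 ≈ 0.667, while MSELoss averages the squared errors, (0 + 0 + 4) / 3 ≈ 1.333, so MSE penalizes large errors more heavily. Both average over elements by default (`reduction='mean'`).

```python
import torch
from torch import nn

preds = torch.tensor([1.0, 2.0, 3.0])    # hypothetical predictions
targets = torch.tensor([1.0, 2.0, 5.0])  # hypothetical targets

l1 = nn.L1Loss()(preds, targets)    # mean(|preds - targets|)
mse = nn.MSELoss()(preds, targets)  # mean((preds - targets) ** 2)

print(l1.item(), mse.item())
```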

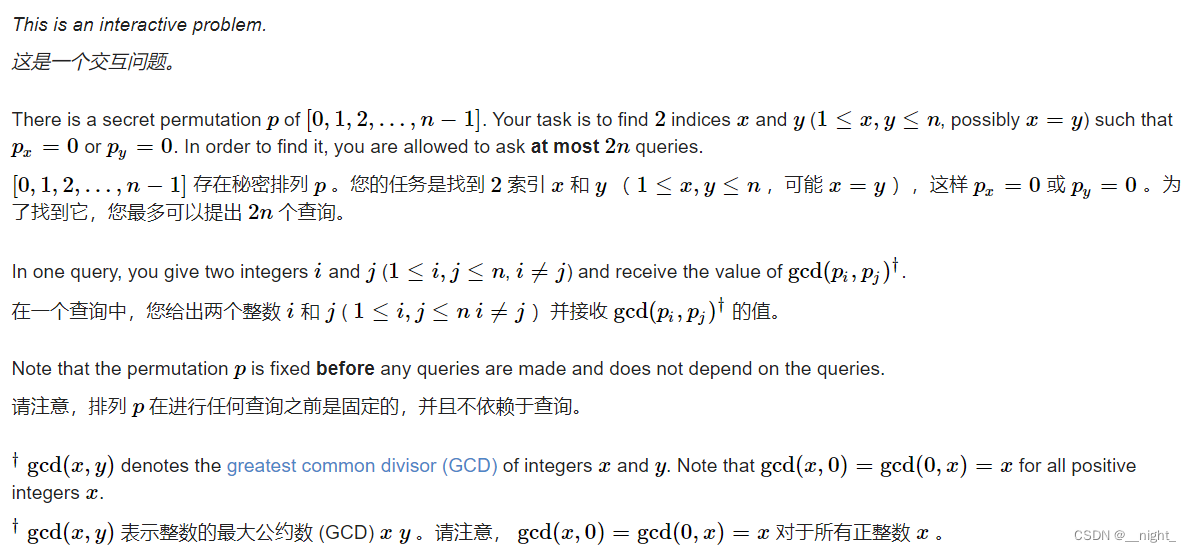

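PyTorch has two binary cross entropy implementations:

torch.nn.BCELoss(): creates a loss function that measures the binary cross entropy between the target (label) and the input (the model's predicted probabilities, which must already lie between 0 and 1).

torch.nn.BCEWithLogitsLoss(): the same as above, except that it has a sigmoid layer (nn.Sigmoid) built in, so it takes raw logits as input. Combining the two steps in one class is also more numerically stable than an nn.Sigmoid followed by a separate nn.BCELoss.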
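The relationship between the two can be checked numerically. With made-up logits and labels, `BCEWithLogitsLoss` on the raw logits gives the same value as `BCELoss` on `sigmoid(logits)`, and both match the binary cross entropy formula -mean(y·log(p) + (1 - y)·log(1 - p)) computed by hand:

```python
import torch
from torch import nn

logits = torch.tensor([2.0, -1.0, 0.5, -3.0])  # hypothetical raw model outputs
y = torch.tensor([1.0, 0.0, 0.0, 1.0])         # hypothetical float labels

loss_with_logits = nn.BCEWithLogitsLoss()(logits, y)  # sigmoid applied internally
probs = torch.sigmoid(logits)                          # map logits to (0, 1)
loss_plain = nn.BCELoss()(probs, y)                    # expects probabilities

# Binary cross entropy written out by hand
manual = -(y * torch.log(probs) + (1 - y) * torch.log(1 - probs)).mean()

print(torch.allclose(loss_with_logits, loss_plain))  # True
print(torch.allclose(loss_with_logits, manual))      # True
```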

Next, create the loss function and the optimizer.

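# Create a loss function (sigmoid built in, so the model can output raw logits)
loss_fn = nn.BCEWithLogitsLoss()

# Create an optimizer that updates model_0's parameters with a learning rate of 0.1
optimizer = torch.optim.SGD(params=model_0.parameters(),
                            lr=0.1)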
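To see what `lr=0.1` means in practice, the sketch below performs a single optimization step on a hypothetical one-layer model with random data, then checks that plain SGD (PyTorch's defaults: no momentum, no weight decay) moved each weight by exactly -lr × gradient. The `zero_grad` / `backward` / `step` sequence is the standard PyTorch pattern; the model and data are made up for illustration.

```python
import torch
from torch import nn

torch.manual_seed(42)

# Hypothetical tiny model and data, just to observe one SGD update
model = nn.Linear(2, 1)
loss_fn = nn.BCEWithLogitsLoss()
optimizer = torch.optim.SGD(params=model.parameters(), lr=0.1)

X = torch.rand(4, 2)
y = torch.tensor([0.0, 1.0, 1.0, 0.0])

before = model.weight.detach().clone()

optimizer.zero_grad()             # clear any old gradients
logits = model(X).squeeze(1)      # (4, 1) -> (4,) so the shape matches y
loss = loss_fn(logits, y)
loss.backward()                   # compute gradients of the loss
grad = model.weight.grad.detach().clone()
optimizer.step()                  # apply the update

# Plain SGD: w_new = w_old - lr * grad
print(torch.allclose(model.weight.detach(), before - 0.1 * grad))  # True
```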

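# Accuracy: percentage of predictions that exactly match the true labels
def accuracy_fn(y_true, y_pred):
    correct = torch.eq(y_true, y_pred).sum().item()  # number of matching elements
    acc = (correct / len(y_pred)) * 100
    return acc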
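`accuracy_fn` compares labels, but a model trained with `BCEWithLogitsLoss` outputs raw logits. One common way to turn them into 0/1 labels, assumed here, is to apply `torch.sigmoid` and round at 0.5. A usage sketch with made-up values (`accuracy_fn` is repeated so the snippet runs on its own):

```python
import torch

def accuracy_fn(y_true, y_pred):
    correct = torch.eq(y_true, y_pred).sum().item()
    return (correct / len(y_pred)) * 100

# Hypothetical raw model outputs (logits) and true labels
logits = torch.tensor([2.0, -1.0, 0.5, -3.0])
y_true = torch.tensor([1.0, 0.0, 0.0, 0.0])

probs = torch.sigmoid(logits)        # squash logits into (0, 1)
y_pred = torch.round(probs)          # threshold at 0.5 -> tensor([1., 0., 1., 0.])
print(accuracy_fn(y_true, y_pred))   # 75.0
```

Three of the four predictions match the labels, so the accuracy is 3 / 4 × 100 = 75.0.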
