PartUploadUtils

```java
package org.demo.apptest1.service;

import lombok.Data;
import lombok.extern.slf4j.Slf4j;
import org.apache.http.HttpEntity;
import org.apache.http.client.methods.CloseableHttpResponse;
import org.apache.http.client.methods.HttpPost;
import org.apache.http.entity.ContentType;
import org.apache.http.entity.mime.MultipartEntityBuilder;
import org.apache.http.impl.client.CloseableHttpClient;
import org.apache.http.impl.client.HttpClients;
import org.apache.http.util.EntityUtils;
import org.springframework.util.StringUtils;

import java.io.*;
import java.math.BigDecimal;
import java.math.RoundingMode;
import java.nio.charset.StandardCharsets;
import java.util.Map;

/**
 * Splits a large file into chunks and uploads them to the specified server.
 *
 * @author zhe.xiao
 * @date 2021-06-04 11:33
 * @description
 */
@Slf4j
@Data
public class PartUploadUtils implements Closeable {

    /**
     * Chunk size used to split large files: 2 MB per chunk.
     */
    private long pieceSize = 2 * 1024 * 1024;

    private File file;

    private long totalSize;

    private int totalChunk;

    RandomAccessFile randomAccessFile = null;

    CloseableHttpClient httpClient = null;

    public PartUploadUtils(File file) {
        this.file = file;
    }

    public void initProperty() throws FileNotFoundException {
        this.totalSize = file.length();
        this.totalChunk = this.calcTotalChunk();
        // Open the file for random, read-only access
        randomAccessFile = new RandomAccessFile(this.file, "r");
        // Create the HttpClient instance shared by all chunk uploads
        httpClient = HttpClients.createDefault();
    }

    /**
     * Reads the bytes of one chunk.
     *
     * @param currentChunk 1-based chunk index (the first chunk is 1)
     * @return the chunk's bytes, or null if the file is missing, empty, or unreadable
     */
    public byte[] readChunkBytes(int currentChunk) {
        long beginPos = (currentChunk - 1) * pieceSize;
        long actualSize = pieceSize;
        if (currentChunk == totalChunk) {
            // The last chunk holds whatever is left and may be shorter than pieceSize
            actualSize = totalSize - beginPos;
        }
        log.info("chunk={}, beginPos={}, actualSize={}", currentChunk, beginPos, actualSize);
        return this.seekData(beginPos, actualSize);
    }

    /**
     * Seeks to beginPos and reads exactly actualSize bytes.
     *
     * @param beginPos   byte offset of the chunk in the file
     * @param actualSize number of bytes to read
     * @return the bytes read, or null on error
     */
    private byte[] seekData(long beginPos, long actualSize) {
        try {
            if (!this.file.exists() || this.file.length() == 0) {
                return null;
            }
            randomAccessFile.seek(beginPos);
            byte[] bytes = new byte[(int) actualSize];
            // read() may return fewer bytes than requested; readFully() fills the whole buffer
            randomAccessFile.readFully(bytes);
            return bytes;
        } catch (Exception e) {
            log.error("seekData error", e);
            return null;
        }
    }

    /**
     * Calculates the total number of chunks: totalSize / pieceSize, rounded up.
     */
    private int calcTotalChunk() {
        return new BigDecimal(totalSize).divide(new BigDecimal(pieceSize), RoundingMode.UP).intValue();
    }

    @Override
    public void close() throws IOException {
        log.info("PartUploadUtils close start.");
        if (randomAccessFile != null) {
            randomAccessFile.close();
            log.info("PartUploadUtils close randomAccessFile.");
        }
        if (httpClient != null) {
            httpClient.close();
            log.info("PartUploadUtils close httpClient.");
        }
    }

    public void uploadChunk(String url, byte[] bytes, Map<String, String> param) throws IOException {
        // Create the HttpPost instance
        HttpPost httpPost = new HttpPost(url);

        // Add the bearer token, if one was supplied
        String authToken = param.getOrDefault("authToken", "");
        if (StringUtils.hasText(authToken)) {
            httpPost.addHeader("Authorization", "Bearer " + authToken);
        }

        // Build the multipart entity: the chunk bytes plus the other parameters
        MultipartEntityBuilder multipartEntity = MultipartEntityBuilder.create();
        // "file" is the form field name
        multipartEntity.addBinaryBody("file", bytes, ContentType.DEFAULT_BINARY, param.get("filename"));
        // Add the remaining parameters as UTF-8 text fields; the token already travels in the header
        ContentType text = ContentType.TEXT_PLAIN.withCharset(StandardCharsets.UTF_8);
        param.forEach((key, value) -> {
            if (!"authToken".equals(key)) {
                multipartEntity.addTextBody(key, value, text);
            }
        });
        HttpEntity httpEntity = multipartEntity.build();

        // Set the request entity
        httpPost.setEntity(httpEntity);

        // Execute the request; try-with-resources closes the response even if reading it fails
        try (CloseableHttpResponse response = httpClient.execute(httpPost)) {
            String responseString = EntityUtils.toString(response.getEntity());
            log.info("chunk upload response: {}", responseString);
        }
    }
}
```
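The chunk arithmetic in calcTotalChunk() and readChunkBytes() can be checked without any file or network I/O. The class below is a hypothetical stand-alone helper (ChunkPlan is not part of the original code). It swaps the BigDecimal division for integer ceiling division, which gives the same result as RoundingMode.UP for non-negative sizes.

```java
// Hypothetical helper, not part of the original code: reproduces the chunk
// arithmetic of calcTotalChunk() and readChunkBytes() without any I/O.
final class ChunkPlan {
    private ChunkPlan() {}

    /** Number of chunks: ceil(totalSize / pieceSize), like RoundingMode.UP. */
    static int totalChunk(long totalSize, long pieceSize) {
        return (int) ((totalSize + pieceSize - 1) / pieceSize);
    }

    /** Byte offset of a 1-based chunk. */
    static long beginPos(int currentChunk, long pieceSize) {
        return (currentChunk - 1) * pieceSize;
    }

    /** Size of a 1-based chunk; only the last one can be shorter than pieceSize. */
    static long actualSize(int currentChunk, long totalSize, long pieceSize) {
        long remaining = totalSize - beginPos(currentChunk, pieceSize);
        return Math.min(pieceSize, remaining);
    }

    public static void main(String[] args) {
        long pieceSize = 2L * 1024 * 1024; // 2 MB, as in PartUploadUtils
        long totalSize = 5L * 1024 * 1024; // example: a 5 MB file
        int n = totalChunk(totalSize, pieceSize);
        for (int i = 1; i <= n; i++) {
            System.out.printf("chunk=%d, beginPos=%d, actualSize=%d%n",
                    i, beginPos(i, pieceSize), actualSize(i, totalSize, pieceSize));
        }
    }
}
```

For a 5 MB file this yields three chunks starting at offsets 0, 2097152 and 4194304; the first two are 2097152 bytes and the last one is 1048576 bytes (1 MB).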

PartUploadClient

package org.demo.apptest1.service;

import lombok.extern.slf4j.Slf4j;

import org.apache.commons.codec.binary.Hex;

import org.springframework.stereotype.Service;

import java.io.File;

import java.security.MessageDigest;

import java.util.HashMap;

@Slf4j

@Service

public class PartUploadClient {

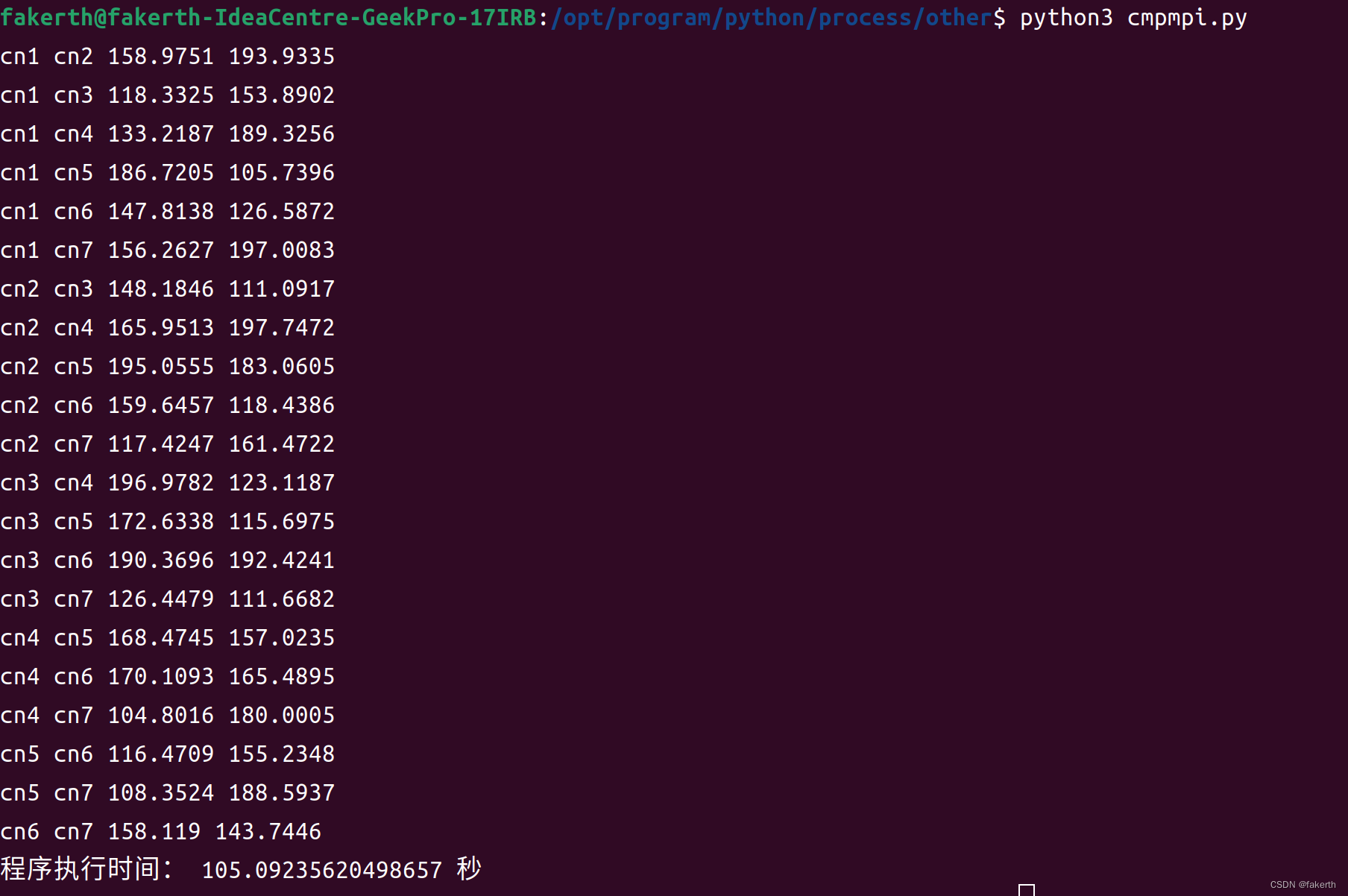

public void uploadFile() {

File file = new File("D:/zzzz.txt");

try (PartUploadUtils uploadUtils = new PartUploadUtils(file)) {

uploadUtils.initProperty();

int totalChunk = uploadUtils.getTotalChunk();

for (int i = 1; i <= totalChunk; i++) {

log.info("第{}片", i);

byte[] bytes = uploadUtils.readChunkBytes(i);

//上传数据

HashMap<String, String> map = new HashMap<>();

map.put("filename", file.getName());

map.put("md5", readMd5(bytes));

map.put("totalSize", String.valueOf(uploadUtils.getTotalSize()));

map.put("totalChunk", String.valueOf(totalChunk));

map.put("currentChunk", String.valueOf(i));

map.put("authToken", "eyJ0eXAiOiJKV1QiLCJhbGciOiJIUzI1NiJ9");

uploadUtils.uploadChunk("http://localhost:13000/uploadData", bytes, map);

}

} catch (Exception e) {

log.error("error", e);

}

}

private String readMd5(byte[] bytes) {

try {

MessageDigest md = MessageDigest.getInstance("MD5");

md.update(bytes);

byte[] md5Bytes = md.digest();

return Hex.encodeHexString(md5Bytes);

} catch (Exception e) {

log.error("error", e);

return "";

}

}

public static void main(String[] args) {

PartUploadClient client = new PartUploadClient();

client.uploadFile();

}

}

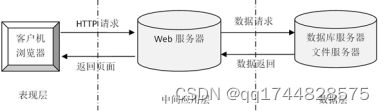
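readMd5() depends on commons-codec only for the hex encoding. If that dependency is unwanted, the same lowercase 32-character string can be produced with the JDK alone. The sketch below (Md5Hex is a hypothetical name) swaps Hex.encodeHexString for a manual nibble-to-hex conversion. Unlike readMd5(), it throws instead of returning an empty string, so a failure cannot silently send an empty md5 field.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;

// Hypothetical JDK-only alternative to readMd5(); no commons-codec needed.
final class Md5Hex {
    private Md5Hex() {}

    static String md5Hex(byte[] bytes) {
        try {
            byte[] digest = MessageDigest.getInstance("MD5").digest(bytes);
            StringBuilder sb = new StringBuilder(digest.length * 2);
            for (byte b : digest) {
                // High nibble, then low nibble, as lowercase hex (same format as Hex.encodeHexString)
                sb.append(Character.forDigit((b >> 4) & 0xF, 16));
                sb.append(Character.forDigit(b & 0xF, 16));
            }
            return sb.toString();
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("MD5 not available", e);
        }
    }

    public static void main(String[] args) {
        // RFC 1321 test vector: MD5("abc")
        System.out.println(md5Hex("abc".getBytes(StandardCharsets.US_ASCII)));
    }
}
```

Running main prints 900150983cd24fb0d6963f7d28e17f72, the RFC 1321 digest of "abc".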

On the server side, the chunks can then be received as an ordinary MultipartFile. The receiving object is as follows:

```java
import lombok.Data;
import lombok.experimental.Accessors;
import org.springframework.web.multipart.MultipartFile;

/**
 * @author zhe.xiao
 * @date 2023-02-03 13:50
 * @description
 **/
@Data
@Accessors(chain = true)
public class EcgPartUploadDto {

    // The file data, which can take two forms (a file handle or raw bytes)
    private MultipartFile file;

    // File name
    private String filename;

    // Total size of the file, in bytes
    private long totalSize;

    // Total number of chunks the file was split into
    private int totalChunk;

    // Index of the current chunk (the first chunk is 1)
    private int currentChunk;

    // MD5 checksum of the current chunk
    private String md5;

    // Destination directory
    private String destDir;
}
```
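The article does not show how the server stores the chunks. One possible approach, sketched here under stated assumptions, is to recompute each chunk's MD5, compare it with the md5 field, and write the bytes at offset (currentChunk - 1) * pieceSize in the target file. The DTO does not carry the chunk size, so the sketch assumes the server is configured with the same value the client uses (2 MB in PartUploadUtils). ChunkWriter and writeChunk are hypothetical names. In a Spring controller, data could come from dto.getFile().getBytes() and target from destDir plus filename.

```java
import java.io.IOException;
import java.io.RandomAccessFile;
import java.math.BigInteger;
import java.nio.file.Files;
import java.nio.file.Path;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;

// Hypothetical server-side helper, not from the article: verifies and stores one chunk.
final class ChunkWriter {
    private ChunkWriter() {}

    /**
     * Writes a 1-based chunk at offset (currentChunk - 1) * pieceSize in target.
     * Returns false, without writing anything, if the MD5 does not match.
     * Assumption: pieceSize equals the chunk size the client used (2 MB there).
     */
    static boolean writeChunk(Path target, long pieceSize, int currentChunk,
                              byte[] data, String expectedMd5) throws IOException {
        if (!md5Hex(data).equalsIgnoreCase(expectedMd5)) {
            return false; // corrupted chunk: the client should resend it
        }
        Path parent = target.toAbsolutePath().getParent();
        if (parent != null) {
            Files.createDirectories(parent); // e.g. the destDir from the DTO
        }
        // "rw" creates the file if needed; writing past EOF extends it,
        // so chunks may arrive in any order
        try (RandomAccessFile raf = new RandomAccessFile(target.toFile(), "rw")) {
            raf.seek((currentChunk - 1) * pieceSize);
            raf.write(data);
        }
        return true;
    }

    /** Lowercase, zero-padded 32-digit hex MD5: the same format the client sends. */
    static String md5Hex(byte[] data) {
        try {
            byte[] digest = MessageDigest.getInstance("MD5").digest(data);
            return String.format("%032x", new BigInteger(1, digest));
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("MD5 not available", e);
        }
    }
}
```

Because every chunk lands at a fixed offset, the order of arrival does not matter. The server still needs its own bookkeeping (for example, the set of currentChunk values received so far) to know when all totalChunk chunks are present; that part is not sketched here.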