Index of the dependencies used in Flink pom files

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>org.example</groupId>

<artifactId>untitled</artifactId>

<version>1.0-SNAPSHOT</version>

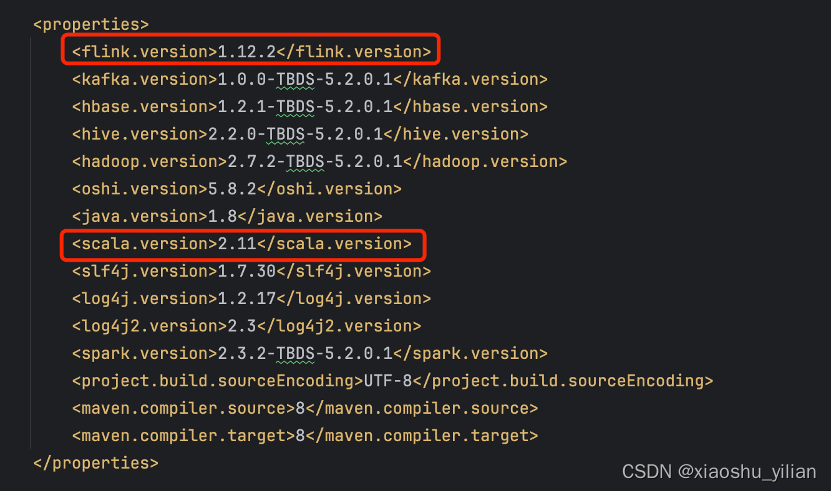

<properties>

<flink.version>1.14.0</flink.version>

<scala.version>2.12</scala.version>

<hive.version>3.1.2</hive.version>

<mysqlconnect.version>5.1.47</mysqlconnect.version>

<clickhouse.version>0.3.2</clickhouse.version>

<hdfs.version>3.1.3</hdfs.version>

<spark.version>3.1.1</spark.version>

<hbase.version>2.2.3</hbase.version>

<kafka.version>2.4.1</kafka.version>

<lang3.version>3.9</lang3.version>

<flink-connector-redis.verion>1.1.5</flink-connector-redis.verion>

</properties>

<dependencies>

<dependency>

<groupId>org.apache.commons</groupId>

<artifactId>commons-lang3</artifactId>

<version>${lang3.version}</version>

</dependency>

<!-- MySQL connector -->

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>${mysqlconnect.version}</version>

</dependency>

<!-- Spark for offline (batch) processing -->

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql_${scala.version}</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-hive_${scala.version}</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>com.google.guava</groupId>

<artifactId>guava</artifactId>

<version>27.0-jre</version>

</dependency>

<!-- <dependency>-->

<!-- <groupId>org.apache.hive</groupId>-->

<!-- <artifactId>hive-exec</artifactId>-->

<!-- <version>2.3.4</version>-->

<!-- </dependency>-->

<!-- kafka -->

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka_${scala.version}</artifactId>

<version>${kafka.version}</version>

</dependency>

<!-- Flink for real-time processing -->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-runtime-web_${scala.version}</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-clients_${scala.version}</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-scala_${scala.version}</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-kafka_${scala.version}</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table-planner_${scala.version}</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-json</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table-api-scala-bridge_${scala.version}</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-redis_2.11</artifactId>

<exclusions>

<exclusion>

<groupId>org.apache.flink</groupId>

<artifactId>flink-shaded-hadoop2</artifactId>

</exclusion>

<exclusion>

<groupId>org.apache.commons</groupId>

<artifactId>commons-lang3</artifactId>

</exclusion>

</exclusions>

<version>${flink-connector-redis.verion}</version>

</dependency>

<dependency>

<groupId>org.apache.commons</groupId>

<artifactId>commons-lang3</artifactId>

<version>${lang3.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-hive_${scala.version}</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-hbase-2.2_${scala.version}</artifactId>

<version>${flink.version}</version>

</dependency>

<!-- Hadoop dependencies -->

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>${hdfs.version}</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-auth</artifactId>

<version>${hdfs.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-mllib_${scala.version}</artifactId>

<version>${spark.version}</version>

</dependency>

<!-- https: -->

<dependency>

<groupId>dom4j</groupId>

<artifactId>dom4j</artifactId>

<version>1.6.1</version>

</dependency>

<dependency>

<groupId>org.jdom</groupId>

<artifactId>jdom2</artifactId>

<version>2.0.6</version>

</dependency>

<dependency>

<groupId>jaxen</groupId>

<artifactId>jaxen</artifactId>

<version>1.1.6</version>

</dependency>

<dependency>

<groupId>xalan</groupId>

<artifactId>xalan</artifactId>

<version>2.7.2</version>

</dependency>

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>4.11</version>

<scope>test</scope>

</dependency>

<!-- clickhouse -->

<!-- Driver package required to connect to ClickHouse -->

<dependency>

<groupId>ru.yandex.clickhouse</groupId>

<artifactId>clickhouse-jdbc</artifactId>

<version>${clickhouse.version}</version>

<!-- Exclude packages that conflict with Spark -->

<exclusions>

<exclusion>

<groupId>com.fasterxml.jackson.core</groupId>

<artifactId>jackson-databind</artifactId>

</exclusion>

<exclusion>

<groupId>net.jpountz.lz4</groupId>

<artifactId>lz4</artifactId>

</exclusion>

</exclusions>

</dependency>

<!-- hudi -->

<!-- https: -->

<dependency>

<groupId>org.apache.hudi</groupId>

<artifactId>hudi-spark3.1-bundle_2.12</artifactId>

<version>0.12.0</version>

</dependency>

</dependencies>

</project>
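
The Scala-suffixed artifact IDs in this pom rely on Maven property interpolation: each `${...}` placeholder is replaced with the matching value from `<properties>` before the dependency is resolved. The fragment below is a minimal sketch of that pattern in isolation, reusing only names and values that already appear above. It is meant as an illustration, not a complete pom.

```xml
<properties>
    <!-- Values copied from the pom above -->
    <flink.version>1.14.0</flink.version>
    <scala.version>2.12</scala.version>
</properties>

<dependencies>
    <dependency>
        <groupId>org.apache.flink</groupId>
        <!-- Resolves to flink-clients_2.12 -->
        <artifactId>flink-clients_${scala.version}</artifactId>
        <!-- Resolves to 1.14.0 -->
        <version>${flink.version}</version>
    </dependency>
</dependencies>
```

Each placeholder has to stay on one line with no whitespace inside or after the braces. A line break inside `${...}` or a trailing space in an `<artifactId>` would most likely keep Maven from resolving the intended artifact.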