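Background:

Alibaba Cloud DTS does not support migrating keys by prefix match.

Investigation showed that RedisShake can migrate keys by prefix match.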

https://github.com/tair-opensource/RedisShake

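Proxy mode

Alibaba Cloud Redis cluster instances use proxy mode by default, which does not support incremental migration.

To support incremental migration, direct connection mode must be enabled on the Redis cluster. (Alibaba confirmed that enabling or disabling direct connection mode has no impact on existing applications.)

After checking with the developers, the business does not need incremental migration, so incremental data can be ignored.

Because incremental migration is not available, rump mode was used.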

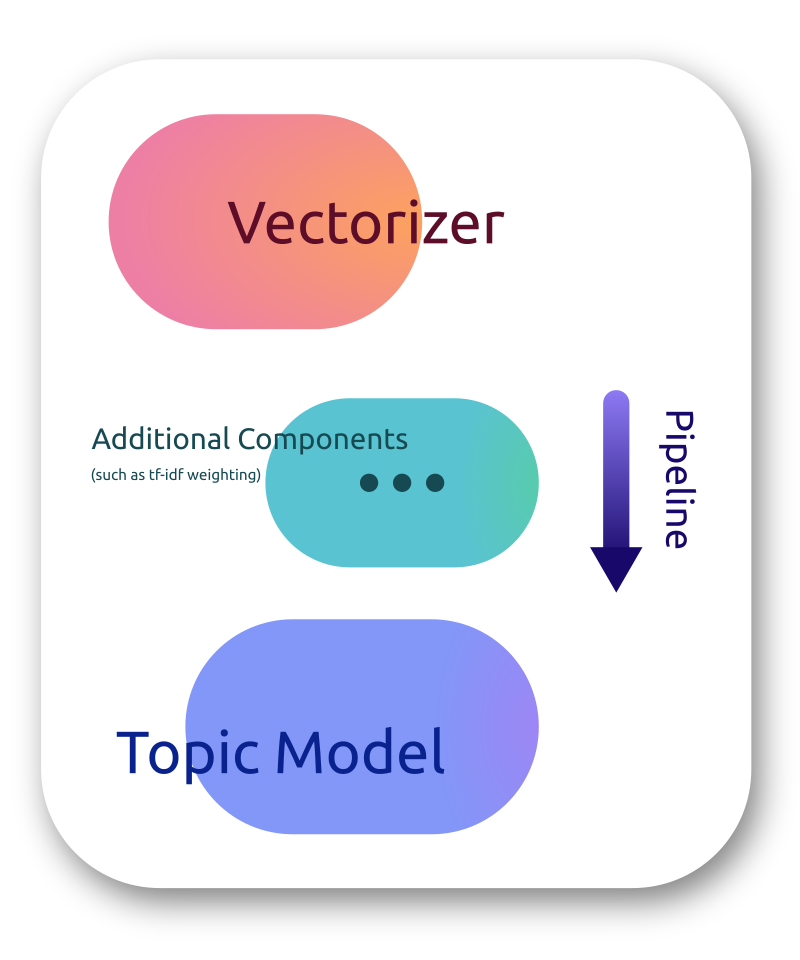

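Restore (restore): restores an RDB file to the target Redis database.

Backup (dump): backs up the full data of the source Redis as an RDB file.

Decode (decode): reads an RDB file, parses it, and stores it in JSON format.

Sync (sync): synchronizes data between the source Redis and the target Redis. It supports both full and incremental migration, from on-premises to Alibaba Cloud as well as between different on-premises environments, and between standalone, master-slave, and cluster editions.

Sync (rump): synchronizes data between the source Redis and the target Redis, full migration only. It migrates data with the scan and restore commands and supports migration across different cloud vendors and Redis versions.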
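The scan-and-restore approach that rump mode is described as using can be sketched roughly as follows. This is a minimal illustration, not RedisShake's actual implementation: `rump_copy` is a hypothetical helper, and `source` and `target` are assumed to be redis-py style clients (for example `redis.Redis`, created without `decode_responses`) that expose `scan`, `dump`, `pttl`, and `restore`.

```python
from typing import Iterable


def rump_copy(source, target, prefixes: Iterable[bytes] = (), count: int = 100,
              replace: bool = True) -> int:
    """Copy keys from source to target with SCAN + DUMP + RESTORE (full data only).

    source/target are assumed to be redis-py style clients; prefixes is a
    hypothetical whitelist of key prefixes (empty means copy every key).
    """
    prefixes = tuple(prefixes)
    copied = 0
    cursor = 0
    while True:
        # SCAN walks the keyspace in batches and returns the next cursor.
        cursor, keys = source.scan(cursor=cursor, count=count)
        for key in keys:
            if prefixes and not key.startswith(prefixes):
                continue  # key does not match any whitelisted prefix
            payload = source.dump(key)
            if payload is None:
                continue  # key expired or was deleted between SCAN and DUMP
            ttl_ms = source.pttl(key)
            # RESTORE takes a TTL in milliseconds; 0 means no expiry.
            target.restore(key, ttl_ms if ttl_ms > 0 else 0, payload, replace=replace)
            copied += 1
        if cursor == 0:  # a cursor of 0 means the scan is complete
            return copied
```

Writes that reach the source after a key has been scanned are not picked up, which is why this style of copy covers full data only.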

Key parameters for rump mode

conf.version = 1

id = redis-shake

log.file = /xxxx/redis-shake/logs/redis-shake.log

log.level = info

system_profile = 9310

http_profile = 9320

parallel = 5

source.type = proxy

source.address = r-xxx.redis.rds.aliyuncs.com:6379

source.password_raw = xxx

source.auth_type = auth

target.type = proxy

target.address = r-ddd.redis.rds.aliyuncs.com:6379

target.password_raw = xxx

target.auth_type = auth

target.db = -1

#key_exists = none

key_exists = rewrite

filter.db.whitelist = 0

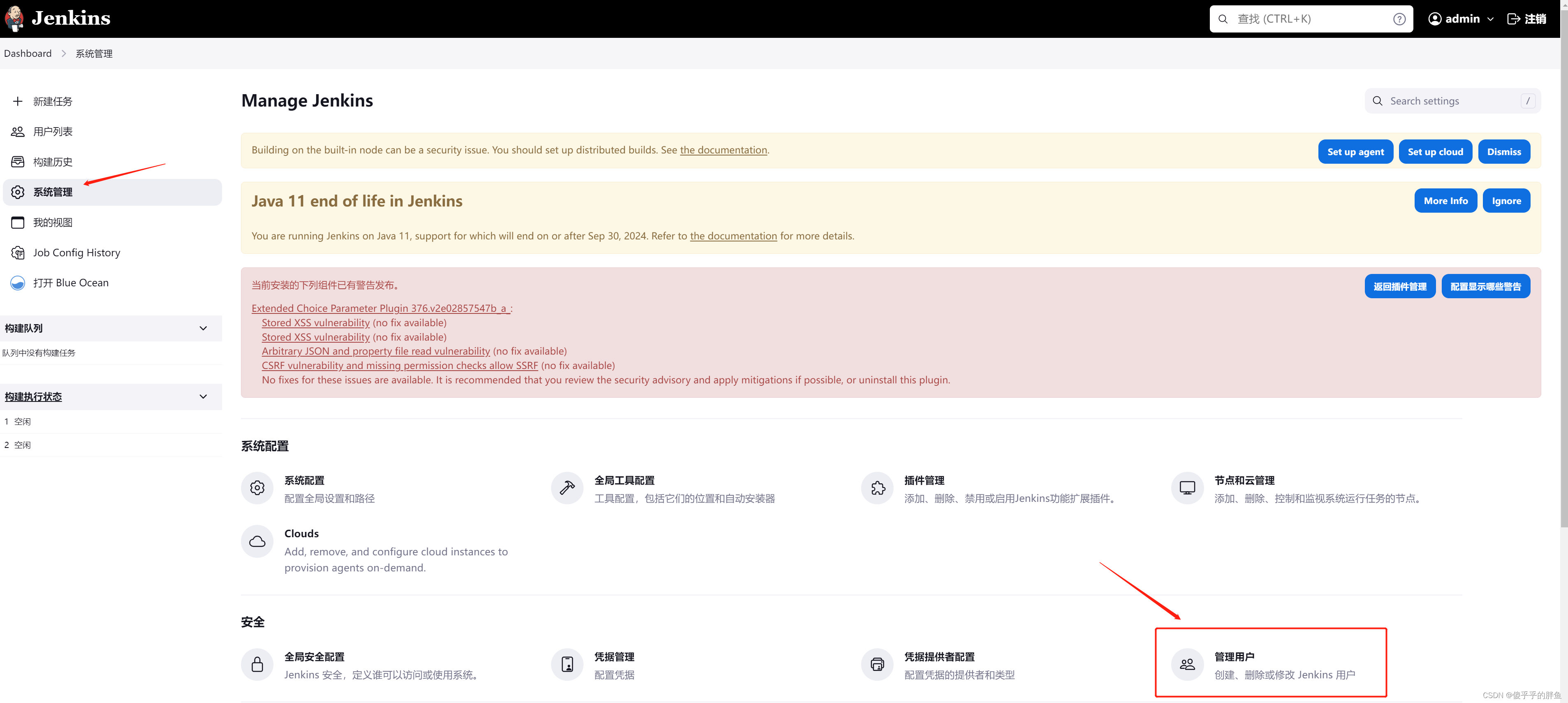

# Configure the key prefix whitelist

filter.key.whitelist = YUEYUE_LEOPARD_ADS_showURLV1_

keep_alive = 0

# Configure the QPS limit for pulling data

qps = 10000

Start command

./redis-shake.linux -conf ./redis-shake.conf -type rump

Migration time

Scanned 10 million keys and filtered 12,000 entries.

7 minutes
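As a rough sanity check, the figures above work out to an average scan rate of a little under 24,000 keys per second. The short calculation below only restates the two reported numbers; the variable names are illustrative.

```python
scanned_keys = 10_000_000   # keys scanned, as reported above
elapsed_seconds = 7 * 60    # 7 minutes

scan_rate = scanned_keys / elapsed_seconds
print(f"average scan rate: {scan_rate:,.0f} keys/s")
```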

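filter.key.blacklist: excluding prefixes

Filters keys by prefix: keys with the given prefixes are not passed. Separate multiple prefixes with semicolons. For example, specifying abc blocks abc, abc1, and abcxxx.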

filter.key.blacklist =
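The prefix filtering described here can be illustrated with a small sketch. It is an assumption-laden model of the documented behavior, not RedisShake's code: `parse_prefixes` and `key_passes` are hypothetical helpers, and the rule that at most one of the whitelist and blacklist may be set is taken from the comments in the default configuration file.

```python
from typing import Sequence


def parse_prefixes(value: str) -> list[str]:
    """Split a semicolon-separated option value such as "abc;bzz" into prefixes."""
    return [prefix for prefix in value.split(";") if prefix]


def key_passes(key: str, whitelist: Sequence[str] = (),
               blacklist: Sequence[str] = ()) -> bool:
    """Return True if the key would be migrated under the given prefix filters."""
    if whitelist and blacklist:
        # The default configuration allows at most one of the two lists.
        raise ValueError("set at most one of whitelist and blacklist")
    if whitelist:
        return key.startswith(tuple(whitelist))
    if blacklist:
        return not key.startswith(tuple(blacklist))
    return True  # no filter configured: every key passes


blocked = parse_prefixes("abc")
for key in ("abc", "abc1", "abcxxx", "xyz"):
    print(key, key_passes(key, blacklist=blocked))
```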

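Online real-time incremental synchronization

The source Alibaba Cloud Redis instance must have a direct connection address enabled. The Alibaba direct connection address is a load balancer (LB), but redis-shake needs master or slave addresses that it can connect to directly (in Alibaba's direct connection mode, only master nodes are assigned a VIP).

There are three ways to obtain these addresses. 1. Ask Alibaba Cloud to look them up in their backend; they are not visible in the console. 2. Put a direct connection address in the configuration file and run redis-shake; it fails with the error below, and the IP addresses in the message are the VIP addresses of the master nodes. 3. Connect to the direct connection address from the command line and run CLUSTER NODES; the addresses shown are the master VIP addresses.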

[root@xxxredis-shake]# ./redis-shake.linux -conf ./redis-shake.conf -type sync

Conf.Options check failed: mode[sync] parse address failed[[source] redis address should be all masters or all slaves, master:[[172.18.1.134:6379 172.18.1.135:6379 172.18.1.136:6379 172.18.1.137:6379 172.18.1.138:6379 172.18.1.139:6379 172.18.1.140:6379 172.18.1.141:6379 172.18.1.142:6379 172.18.1.143:6379 172.18.1.144:6379 172.18.1.145:6379 172.18.1.146:6379 172.18.1.147:6379 172.18.1.148:6379 172.18.1.149:6379]], slave[[]]]
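Since the error message already lists every master VIP, the addresses can be pulled out and joined with semicolons, the separator the default configuration uses for multiple nodes in `source.address`. The sketch below assumes the message keeps the `master:[[...]]` layout shown above; `master_addresses` and `to_source_address` are hypothetical helpers, and the sample string is a shortened copy of the real message.

```python
import re

# Matches ip:port pairs such as 172.18.1.134:6379.
ADDRESS_RE = re.compile(r"\b\d{1,3}(?:\.\d{1,3}){3}:\d+\b")


def master_addresses(error_text: str) -> list[str]:
    """Extract the master ip:port list from the 'check failed' message."""
    match = re.search(r"master:\[\[(.*?)\]\]", error_text)
    if match is None:
        return []
    return ADDRESS_RE.findall(match.group(1))


def to_source_address(addresses: list[str]) -> str:
    """Join node addresses with ';' as used for multi-node source.address values."""
    return ";".join(addresses)


# Shortened sample of the message above (only two of the masters kept).
sample = ("redis address should be all masters or all slaves, "
          "master:[[172.18.1.134:6379 172.18.1.135:6379]], slave[[]]]")
print("source.address =", to_source_address(master_addresses(sample)))
```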

Error

2024/01/12 16:16:12 [INFO] DbSyncer[0] psync connect '172.18.1.134:6379' with auth type[auth] OK!

2024/01/12 16:16:12 [PANIC] repl listening-port failed[-ERR command replconf not support for your account]

[stack]:

2 github.com/alibaba/RedisShake/redis-shake/common/utils.go:141

github.com/alibaba/RedisShake/redis-shake/common.SendPSyncListeningPort

1 github.com/alibaba/RedisShake/redis-shake/dbSync/syncBegin.go:41

github.com/alibaba/RedisShake/redis-shake/dbSync.(*DbSyncer).sendPSyncCmd

0 github.com/alibaba/RedisShake/redis-shake/dbSync/dbSyncer.go:113

github.com/alibaba/RedisShake/redis-shake/dbSync.(*DbSyncer).Sync

Default configuration file

# This file is the configuration of redis-shake.

# If you have any problems, please check the FAQ first: https://github.com/alibaba/RedisShake/wiki/FAQ

# Current configuration version; do not modify this value.

conf.version = 1

# id

id = redis-shake

# The log file name. If left blank, logs are printed to stdout;

# otherwise they are written to the specified file.

# for example:

# log.file =

# log.file = /var/log/redis-shake.log

log.file =

# log level: "none", "error", "warn", "info", "debug".

# default is "info".

log.level = info

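# Directory where the process (pid) file is stored; if left blank, the current directory is used.

# Note that this is a directory; the pid file actually generated is {pid_path}/{id}.pid

# For example: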

# pid_path = ./

# pid_path = /var/run/

pid_path =

# pprof port.

system_profile = 9310

# restful port used to view metrics; -1 disables it. In `restore` mode RedisShake exits after it finishes restoring the RDB only if

# this value is -1; otherwise it waits forever.

# http://127.0.0.1:9320/conf shows the configuration redis-shake is using

# http://127.0.0.1:9320/metric shows the synchronization status of redis-shake

http_profile = 9320

# Number of parallel routines used to sync one RDB file. Default is 64.

parallel = 32

# source redis configuration.

# used in `dump`, `sync` and `rump`.

# source redis type, e.g. "standalone" (default), "sentinel" or "cluster".

# 1. "standalone": standalone db mode.

# 2. "sentinel": the redis address is read from sentinel.

# 3. "cluster": the source redis has several db.

# 4. "proxy": the proxy address, currently, only used in "rump" mode.

# used in `dump`, `sync` and `rump`.

# Source Redis type, one of: standalone, sentinel, cluster, proxy.

# Note: proxy is only used in rump mode.

source.type = standalone

# ip:port

# the source address can be the following:

# 1. single db address. for "standalone" type.

# 2. ${sentinel_master_name}:${master or slave}@sentinel single/cluster address, e.g., mymaster:master@127.0.0.1:26379;127.0.0.1:26380, or @127.0.0.1:26379;127.0.0.1:26380. for "sentinel" type.

# 3. cluster that has several db nodes split by semicolon(;). for "cluster" type. e.g., 10.1.1.1:20331;10.1.1.2:20441.

# 4. proxy address(used in "rump" mode only). for "proxy" type.

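# Source Redis address. For sentinel or open source cluster mode, the input format is "master name:role to pull from (master or slave)@sentinel address". For other cluster

# architectures such as codis, twemproxy, or aliyun proxy, configure the db addresses of all masters or all slaves.

# Source Redis address examples:

# 1. standalone mode: configure ip:port, e.g., 10.1.1.1:20331

# 2. cluster mode: configure the ip:port of all nodes, e.g., source.address = 10.1.1.1:20331;10.1.1.2:20441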

source.address = 127.0.0.1:20441

# Source password; leave blank for no password.

source.password_raw =

# auth type, don't modify it

source.auth_type = auth

# tls enable, true or false. Currently, only support standalone.

# open source redis does NOT support tls so far, but some cloud versions do.

source.tls_enable = false

# Whether to verify the validity of the redis certificate, true means verification, false means no verification

source.tls_skip_verify = false

# input RDB file.

# used in `decode` and `restore`.

# if the input is list split by semicolon(;), redis-shake will restore the list one by one.

# For decode or restore, this parameter is the RDB file to read. A list is supported, e.g., rdb.0;rdb.1;rdb.2

# redis-shake restores them one by one.

source.rdb.input =

# the concurrence of RDB syncing, default is len(source.address) or len(source.rdb.input).

# used in `dump`, `sync` and `restore`. 0 means default.

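# This has no effect when source.type isn't cluster or when there is only one input RDB.

# Pull concurrency: for `dump` or `sync` the default is the number of dbs in source.address; for `restore` it is len(source.rdb.input).

# For example, with 5 db nodes/input RDBs and source.rdb.parallel = 3, only the full data of 3 dbs is pulled

# concurrently; the RDB of the 4th db node is pulled only after one db finishes its RDB and enters the incremental stage,

# and so on. In the end, len(source.address) or len(source.rdb.input) incremental threads exist at the same time.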

source.rdb.parallel = 0

# for special cloud vendor: ucloud

# used in `decode` and `restore`.

# RDB files from the ucloud cluster edition carry a slot prefix, which is stripped as a special case: ucloud_cluster.

source.rdb.special_cloud =

# target redis configuration. used in `restore`, `sync` and `rump`.

# the type of target redis can be "standalone", "proxy" or "cluster".

# 1. "standalone": standalone db mode.

# 2. "sentinel": the redis address is read from sentinel.

# 3. "cluster": open source cluster (not supported currently).

# 4. "proxy": proxy layer ahead redis. Data will be inserted in a round-robin way if more than 1 proxy given.

# Target Redis type; four modes are supported: standalone, sentinel, cluster, and proxy.

target.type = standalone

# ip:port

# the target address can be the following:

# 1. single db address. for "standalone" type.

# 2. ${sentinel_master_name}:${master or slave}@sentinel single/cluster address, e.g., mymaster:master@127.0.0.1:26379;127.0.0.1:26380, or @127.0.0.1:26379;127.0.0.1:26380. for "sentinel" type.

# 3. cluster that has several db nodes split by semicolon(;). for "cluster" type.

# 4. proxy address. for "proxy" type.

target.address = 127.0.0.1:6379

# Target password; leave blank for no password.

target.password_raw =

# auth type, don't modify it

target.auth_type = auth

# all the data will be written into this db. < 0 means disable.

target.db = -1

# Format: 0-5;1-3 means that the data of source db0 is written to target db5, and

# the data of source db1 is written to target db3.

# Note: when target.db is specified, target.dbmap does not take effect.

target.dbmap =

# tls enable, true or false. Currently, only support standalone.

# open source redis does NOT support tls so far, but some cloud versions do.

target.tls_enable = false

# Whether to verify the validity of the redis certificate, true means verification, false means no verification

target.tls_skip_verify = false

# output RDB file prefix.

# used in `decode` and `dump`.

# For decode or dump, this parameter is the prefix of the output RDB files. For example, with 3 input dbs the dumps are:

# ${output_rdb}.0, ${output_rdb}.1, ${output_rdb}.2

target.rdb.output = local_dump

# some redis proxy like twemproxy doesn't support to fetch version, so please set it here.

# e.g., target.version = 4.0

target.version =

# Used for expiring keys: set the time gap when the source and target timestamps are not the same;

# the target side adds this value.

fake_time =

# How to handle a key that already exists on the target during restore.

# used in `restore`, `sync` and `rump`.

# 1. rewrite: the source overwrites the target.

# 2. none: panic; the process exits as soon as a conflict occurs.

# 3. ignore: keep the target key and skip the source key. Not effective in rump mode.

key_exists = none

# filter db, key, slot, lua.

# filter db.

# used in `restore`, `sync` and `rump`.

# e.g., "0;5;10" means match db0, db5 and db10.

# at most one of `filter.db.whitelist` and `filter.db.blacklist` parameters can be given.

# if the filter.db.whitelist is not empty, the given db list will be passed while others filtered.

# if the filter.db.blacklist is not empty, the given db list will be filtered while others passed.

# all dbs will be passed if no condition given.

# The given dbs are passed: e.g., 0;5;10 passes db0, db5, and db10, and the others are filtered.

filter.db.whitelist =

# The given dbs are filtered: e.g., 0;5;10 filters db0, db5, and db10, and the others are passed.

filter.db.blacklist =

# filter key with prefix string. multiple keys are separated by ';'.

# e.g., "abc;bzz" matches "abc", "abc1", "abcxxx", "bzz" and "bzzwww".

# used in `restore`, `sync` and `rump`.

# at most one of `filter.key.whitelist` and `filter.key.blacklist` parameters can be given.

# if the filter.key.whitelist is not empty, the given keys will be passed while others filtered.

# if the filter.key.blacklist is not empty, the given keys will be filtered while others passed.

# all the namespace will be passed if no condition given.

# Filter keys by prefix: only keys with the given prefixes are passed; separate prefixes with semicolons. E.g., abc passes abc, abc1, abcxxx

filter.key.whitelist =

# Filter keys by prefix: keys with the given prefixes are not passed; separate prefixes with semicolons. E.g., abc blocks abc, abc1, abcxxx

filter.key.blacklist =

# filter given slot, multiple slots are separated by ';'.

# e.g., 1;2;3

# used in `sync`.

# Filter by slot: only the given slots are passed.

filter.slot =

# filter given commands. multiple commands are separated by ';'.

# e.g., "flushall;flushdb".

# used in `sync`.

# at most one of `filter.command.whitelist` and `filter.command.blacklist` parameters can be given.

# if the filter.command.whitelist is not empty, the given commands will be passed while others filtered.

# if the filter.command.blacklist is not empty, the given commands will be filtered.

# besides, the other config caused filters also effect as usual, e.g. filter.lua = true would filter lua commands.

# all the commands, except the other config caused filtered commands, will be passed if no condition given.

# Only the given commands are passed; separate them with semicolons.

filter.command.whitelist =

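# The given commands are not passed; separate them with semicolons.

# Besides these commands, filters caused by other options still apply as usual, e.g., enabling filter.lua filters lua-related commands.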

filter.command.blacklist =

# filter lua script. true means not pass. However, in redis 5.0, the lua

# converts to transaction(multi+{commands}+exec) which will be passed.

# Controls whether lua scripts are blocked; true means they are not passed.

filter.lua = false

# big key threshold, the default is 500 * 1024 * 1024 bytes. A key of normal size is written to the target directly

# with restore. If the value is bigger than this threshold, all the fields are split and written into the target

# one by one, in order. If the target Redis type is Codis, this should be set to 1; please check the FAQ for

# the reason. Setting it to 1 is also recommended if the target major version is lower than the source's.

big_key_threshold = 524288000

# Whether to enable metric.

# used in `sync`.

metric = true

# Whether to print metric in the log.

metric.print_log = false

# sender information.

# sender flush buffer size of byte.

# used in `sync`.

# Byte size of the send buffer; once this threshold is exceeded the buffer is force-flushed and sent.

sender.size = 104857600

# sender flush buffer size of oplog number.

# used in `sync`. flush sender buffer when bigger than this threshold.

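# Number of messages in the send buffer; once this threshold is exceeded the buffer is force-flushed and sent. When the target is a cluster,

# increasing this value uses additional memory.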

sender.count = 4095

# delay channel size. once one oplog is sent to target redis, the oplog id and timestamp will also

# stored in this delay queue. this timestamp will be used to calculate the time delay when receiving

# ack from target redis.

# used in `sync`.

# Queue used to compute latency for metric statistics.

sender.delay_channel_size = 65535

# Enable the TCP keep_alive option when connecting to redis.

# The unit is seconds.

# 0 means disabled.

keep_alive = 0

# used in `rump`.

# Number of keys fetched by each scan. Default is 100 if not set.

scan.key_number = 50

# used in `rump`.

# we support some special redis types that don't use default `scan` command like alibaba cloud and tencent cloud.

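# Some versions use a special format that differs from the ordinary scan command, so special adaptations were made. Currently supported: Tencent Cloud cluster edition "tencent_cluster"

# and Alibaba Cloud cluster edition "aliyun_cluster". Note that master-slave editions do not need this setting; it applies only to cluster editions.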

scan.special_cloud =

# used in `rump`.

# Fetching data from a given file that lists the keys is supported.

# Some cloud versions support neither sync/psync nor scan, so the full key list can be read from a file and fetched: one key per line.

scan.key_file =

# limit the rate of transmission. Only used in `rump` currently.

# e.g., qps = 1000 means pass 1000 keys per second. default is 500,000(0 means default)

qps = 200000

# Switch to enable resuming from a break point, please visit xxx to see more details.

resume_from_break_point = false

# ----------------splitter----------------

# below variables are useless for current open source version so don't set.

# replace hash tag.

# used in `sync`.

replace_hash_tag = false
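The comments in the file above say that at most one of each whitelist/blacklist pair may be set. A quick pre-flight check for that rule might look like the sketch below. It is a hypothetical helper, not part of redis-shake, and it assumes the simple `key = value` layout with `#` comments shown in this file.

```python
def parse_conf(text: str) -> dict[str, str]:
    """Parse 'key = value' lines, skipping blank lines and '#' comments."""
    options = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if sep:
            options[key.strip()] = value.strip()
    return options


# Option pairs of which, per the config comments, at most one may be given.
EXCLUSIVE_PAIRS = [
    ("filter.db.whitelist", "filter.db.blacklist"),
    ("filter.key.whitelist", "filter.key.blacklist"),
    ("filter.command.whitelist", "filter.command.blacklist"),
]


def conflicting_filters(options: dict[str, str]) -> list[tuple[str, str]]:
    """Return every whitelist/blacklist pair in which both options are non-empty."""
    return [pair for pair in EXCLUSIVE_PAIRS
            if options.get(pair[0]) and options.get(pair[1])]


example = """
# both key filters set by mistake
filter.key.whitelist = abc
filter.key.blacklist = xyz
filter.db.whitelist = 0
"""
print(conflicting_filters(parse_conf(example)))
```

Running a check like this before starting a migration catches a conflicting filter pair without having to launch redis-shake first.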

Data verification

The RedisFullCheck tool can verify data consistency.

https://github.com/tair-opensource/RedisFullCheck

References:

https://developer.aliyun.com/article/783138

https://zhuanlan.zhihu.com/p/597319519

https://zhuanlan.zhihu.com/p/404215310

https://tair-opensource.github.io/RedisShake/zh/guide/introduction.html

https://support.huaweicloud.com/migration-dcs/dcs-migrate-demo02.html