//通过stap_a模式发送sps和pps包给对端。

int32_t yang_push_h264_package_stap_a(void *psession,

YangPushH264Rtp *rtp, YangFrame *videoFrame) {

int err = Yang_Ok;

YangRtcSession *session=(YangRtcSession*)psession;

//重置rtpPacket的字段

yang_reset_rtpPacket(&rtp->videoStapPacket);

//设置payloadType为h264

rtp->videoStapPacket.header.payload_type = YangH264PayloadType;

//设置ssrc为videoSsrc

rtp->videoStapPacket.header.ssrc = rtp->videoSsrc;

//设置帧类型为Video

rtp->videoStapPacket.frame_type = YangFrameTypeVideo;

//设置naluType为StapA,此模式下一个nalu包可以通过多个rtp包发送,此时多个rtp包的时间戳相同。

rtp->videoStapPacket.nalu_type = (YangAvcNaluType) kStapA;

//设置marker为false

rtp->videoStapPacket.header.marker = false;

//设置sequence为videoSeq

rtp->videoStapPacket.header.sequence = rtp->videoSeq++;

//设置timestamp为视频帧的显示时间戳

rtp->videoStapPacket.header.timestamp = videoFrame->pts;

//设置视频的payload_type为StapA的模式

rtp->videoStapPacket.payload_type = YangRtspPacketPayloadTypeSTAP;

//重置stapA的data

yang_reset_h2645_stap(&rtp->stapData);

//创建sps和pps的结构体变量

YangSample sps_sample;

YangSample pps_sample;

//从视频帧的payload中取出sps和pps

yang_decodeMetaH264(videoFrame->payload, videoFrame->nb, &sps_sample,&pps_sample);

//从sps中取出NaluType赋值给stapData的nri

uint8_t header = (uint8_t) sps_sample.bytes[0];

rtp->stapData.nri = (YangAvcNaluType) header;

//将sps和pps分别插入stapData.nalus中。

yang_insert_YangSampleVector(&rtp->stapData.nalus, &sps_sample);

yang_insert_YangSampleVector(&rtp->stapData.nalus, &pps_sample);

//调用yang_push_h264_encodeVideo方法编码rtp的视频buffer,发送给p2p对端。

if ((err = yang_push_h264_encodeVideo(session, rtp, &rtp->videoStapPacket))

!= Yang_Ok) {

return yang_error_wrap(err, "encode packet");

}

return err;

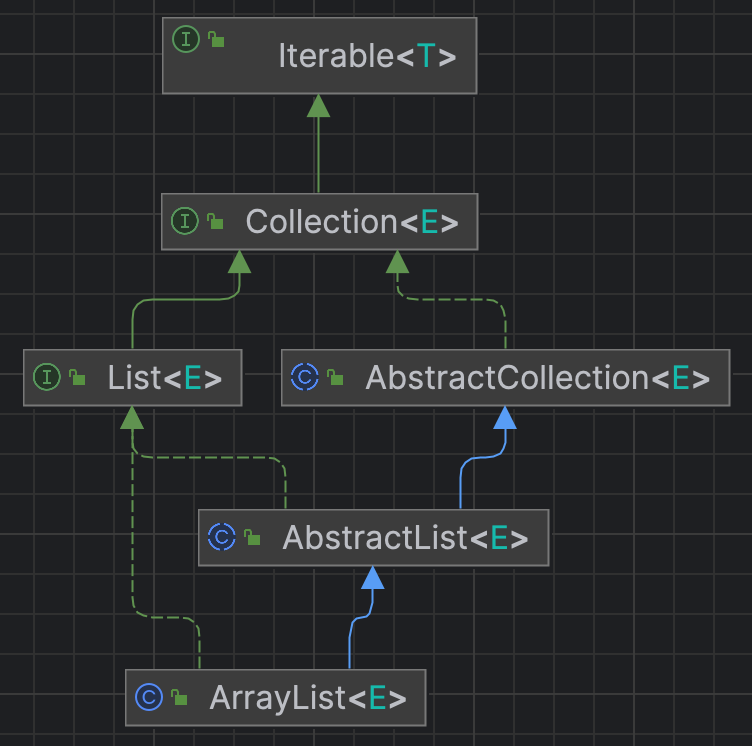

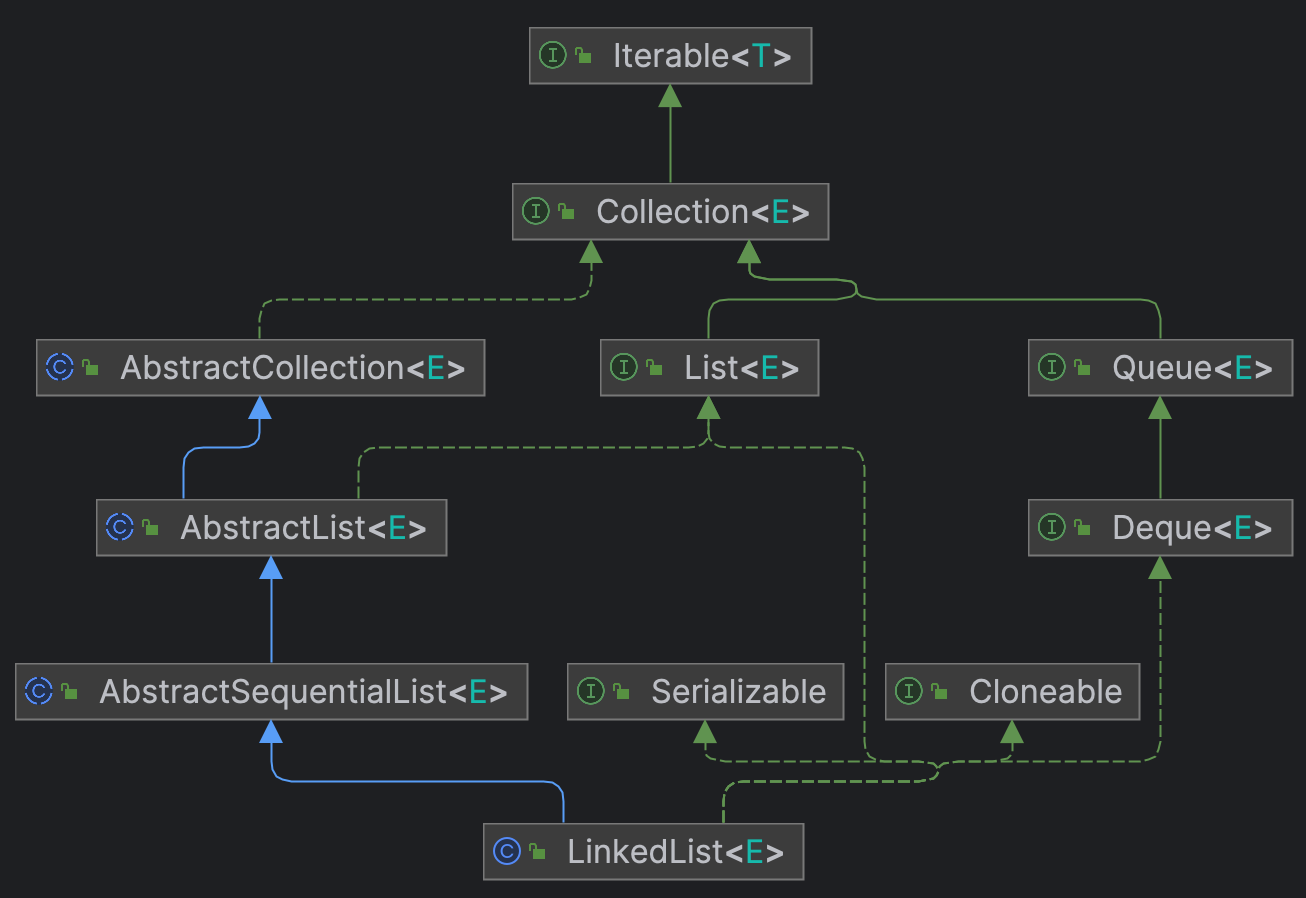

}ArrayList源码阅读