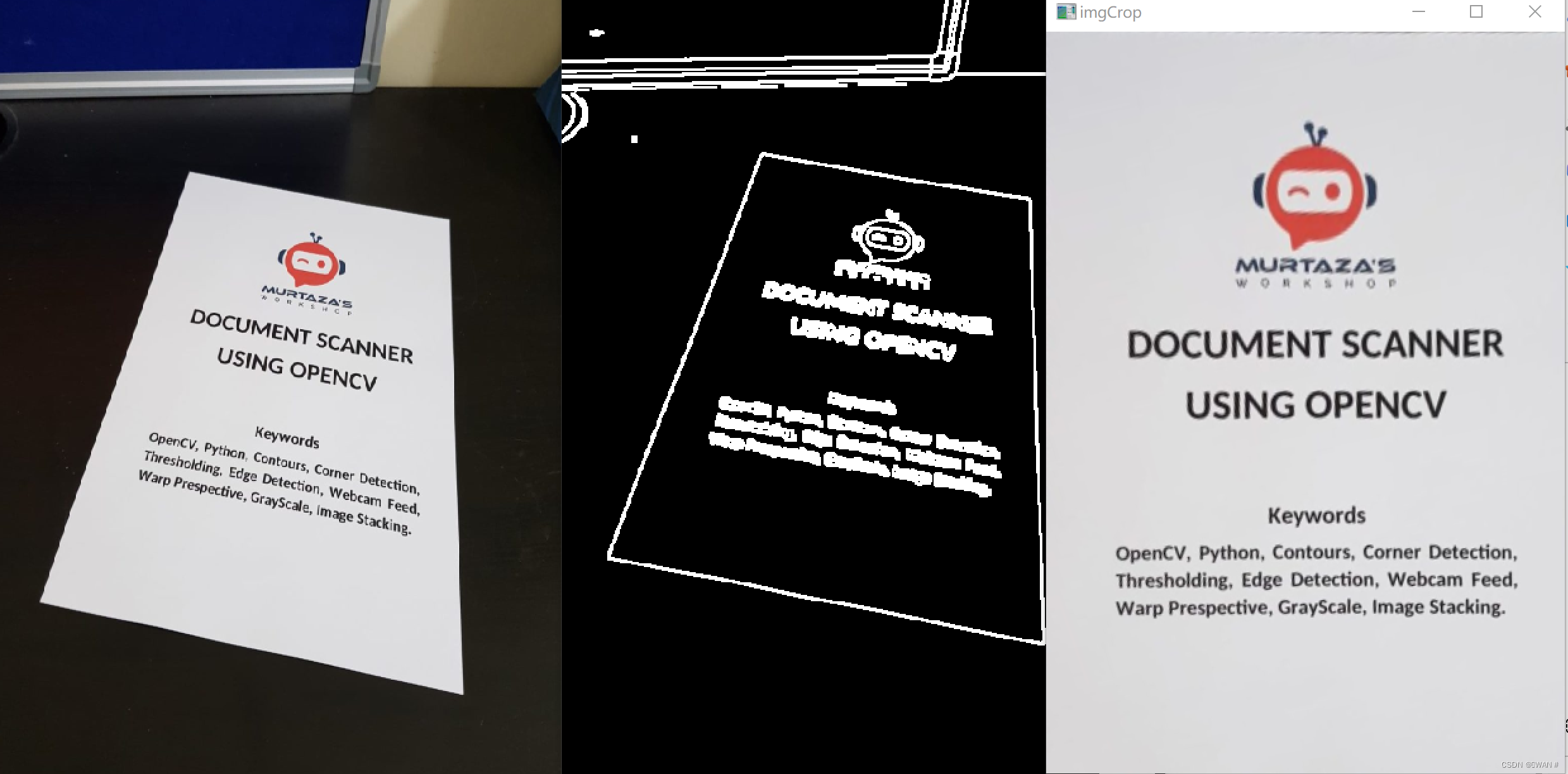

As usual, here is the result first:

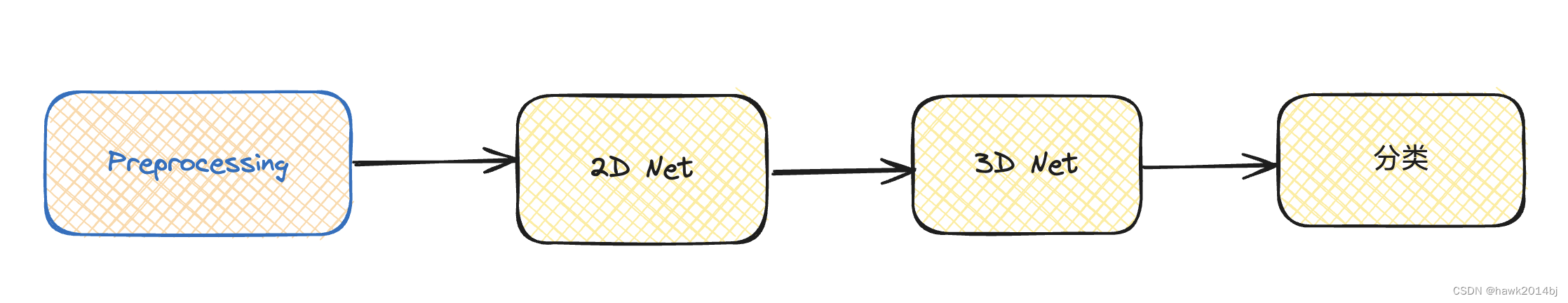

This article presents one approach to extracting a text document from a photo:

1. First, preprocess the image: grayscale conversion, Gaussian blur, edge detection, and dilation.
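As background for the last of these steps: edge maps produced by Canny often contain one-pixel gaps, and dilating with a 3x3 rectangular kernel thickens the edges so that such gaps close and the page outline becomes a connected contour. The sketch below illustrates this on a plain binary grid, without OpenCV. `Grid` and `dilate3x3` are hypothetical names used only for illustration, and clipping the neighbourhood at the border is an assumption of this sketch.

```cpp
#include <vector>

// 1 = edge pixel, 0 = background (illustrative type, not an OpenCV type)
using Grid = std::vector<std::vector<int>>;

// Dilate a binary grid with a 3x3 rectangular kernel: an output pixel is 1
// if any pixel in its 3x3 neighbourhood (clipped at the border) is 1.
Grid dilate3x3(const Grid& in) {
    const int rows = static_cast<int>(in.size());
    const int cols = rows ? static_cast<int>(in[0].size()) : 0;
    Grid out(rows, std::vector<int>(cols, 0));
    for (int r = 0; r < rows; ++r) {
        for (int c = 0; c < cols; ++c) {
            // Stop scanning the neighbourhood as soon as one set pixel is found
            for (int dr = -1; dr <= 1 && !out[r][c]; ++dr) {
                for (int dc = -1; dc <= 1; ++dc) {
                    const int rr = r + dr, cc = c + dc;
                    if (rr >= 0 && rr < rows && cc >= 0 && cc < cols && in[rr][cc]) {
                        out[r][c] = 1;
                        break;
                    }
                }
            }
        }
    }
    return out;
}
```

For a row such as `1 1 0 1 1`, the gap in the middle is filled after one pass, which is the effect the `dilate` call relies on.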

Mat preProcessing(Mat img)

{

cvtColor(img, imgGray, COLOR_BGR2GRAY);

GaussianBlur(imgGray, imgBlur, Size(3, 3), 3, 0);

Canny(imgBlur, imgCanny, 25, 75);

Mat kernel = getStructuringElement(MORPH_RECT, Size(3, 3));

dilate(imgCanny, imgDil, kernel);

//erode(imgDil, imgErode, kernel);

return imgDil;

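}

2. After preprocessing, extract the contours, find the largest rectangle (four-cornered contour) among them, and get its corner coordinates.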
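Before the OpenCV version, the selection rule can be sketched on its own: among all candidate polygons, keep only those with exactly four vertices and an area above a threshold, and return the largest. This is a simplified illustration, not the author's code. `Pt`, `Polygon`, `polygonArea`, and `largestQuadIndex` are hypothetical names, and the area here is computed on the polygon itself with the shoelace formula, whereas the listing below measures the raw contour with `contourArea`.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

struct Pt { double x, y; };          // illustrative point type
using Polygon = std::vector<Pt>;     // vertices in order around the outline

// Area of a simple polygon via the shoelace formula.
double polygonArea(const Polygon& p) {
    double twice = 0.0;
    for (std::size_t i = 0; i < p.size(); ++i) {
        const Pt& a = p[i];
        const Pt& b = p[(i + 1) % p.size()];
        twice += a.x * b.y - b.x * a.y;
    }
    return std::fabs(twice) / 2.0;
}

// Index of the largest 4-vertex polygon whose area exceeds minArea,
// or -1 if there is no such polygon.
int largestQuadIndex(const std::vector<Polygon>& polys, double minArea) {
    int best = -1;
    double bestArea = minArea;
    for (std::size_t i = 0; i < polys.size(); ++i) {
        if (polys[i].size() != 4) continue;   // not a quadrilateral
        const double area = polygonArea(polys[i]);
        if (area > bestArea) {
            bestArea = area;
            best = static_cast<int>(i);
        }
    }
    return best;
}
```

Returning -1 when nothing qualifies makes the "no document found" case explicit, so a caller can check for it before using the corners.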

vector<Point> getContours(Mat Dil) {

vector<vector<Point>> contours;

vector<Vec4i> hierarchy;

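// contours is a vector<vector<Point>>: each inner vector holds the consecutive points of one contour, so contours has one element per contour found.

/* hierarchy holds a 4-element array for each contour: [Next, Previous, First Child, Parent]

Next: the next contour on the same hierarchy level as the current one.

For example, in the earlier figure the next contour on the same level as contour 0 is contour 1, so Next = 1; likewise Next = 2 for contour 1. Contour 2 has no later contour on its level, so Next = -1.

Previous: the previous contour on the same hierarchy level as the current one.

As before, Previous = 0 for contour 1 and Previous = 1 for contour 2. Contour 2a has no earlier contour on its level, so Previous = -1.

First Child: the first child contour of the current contour.

For contour 2 the first child is contour 2a, so First Child = 2a; for contour 3, First Child = 3a.

Parent: the parent contour of the current contour.

The parent of 2a is contour 2, so Parent = 2; contour 2 has no parent, so Parent = -1. */

// RETR_EXTERNAL

// This mode retrieves only the top-level contours, i.e. the outermost ones.

// CHAIN_APPROX_SIMPLE: stores only the corner points of each contour in contours; the points along the straight segments between corners are dropped.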

findContours(Dil, contours, hierarchy, RETR_EXTERNAL, CHAIN_APPROX_SIMPLE);

//drawContours(img, contours, -1, Scalar(255, 0, 255),2);

vector<vector<Point>>conPoly(contours.size());

vector<Rect>boundRect(contours.size());

vector<Point> biggest;

int maxArea = 0;

// Filter out noise

for (int i = 0; i < contours.size(); i++) {

// Compute the contour area

int area = contourArea(contours[i]);

string objectType;

//cout << area <<" ";

if (area > 1000 ) {

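// arcLength(curve, closed) computes the perimeter of a contour.

// curve (InputArray): the input 2D points (contour vertices), given as a std::vector or a Mat.

// closed (bool): flag indicating whether the curve is closed; usually true.

float peri = arcLength(contours[i], true);

// Approximate the contour with a polygon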

approxPolyDP(contours[i], conPoly[i], 0.02 * peri, true);

//cout << area << endl;

if (area > maxArea && conPoly[i].size()==4 ) {

// Draw the contour

//drawContours(imgOriginal, conPoly, i, Scalar(255, 0, 255), 2);

biggest = {conPoly[i][0],conPoly[i][1], conPoly[i][2], conPoly[i][3]};

maxArea = area;

//cout << maxArea << endl;

}

// Draw the bounding rectangle

//rectangle(imgOriginal, boundRect[i].tl(), boundRect[i].br(), Scalar(0, 255, 0), 5);

}

}

return biggest;

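}

After obtaining the coordinates, a perspective warp (getPerspectiveTransform and warpPerspective in the full code) extracts the text region. The corners, however, come back in the order 0, 3, 1, 2 (numbering the rectangle's corners left to right, top to bottom) and must be reordered to 0, 1, 2, 3 before warping. See the companion CSDN blog post 轮廓提取、矩形标记时,点的位置需要重标 ("Point positions need to be relabeled when extracting contours and marking rectangles").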
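The reordering can be illustrated without OpenCV. In image coordinates (y grows downward), the top-left corner has the smallest x + y, the bottom-right the largest x + y, the top-right the largest x - y, and the bottom-left the smallest x - y. The sketch below applies that rule; `Pt` and `reorderCorners` are hypothetical names for illustration, and the approach assumes the page is not rotated so far that two corners tie or swap roles under these sums and differences.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <vector>

struct Pt { int x, y; };  // illustrative point type

// Return the four corners in the order: top-left, top-right,
// bottom-left, bottom-right.
std::array<Pt, 4> reorderCorners(const std::vector<Pt>& pts) {
    assert(pts.size() == 4);  // exactly four corners are expected
    auto bySum  = [](const Pt& a, const Pt& b) { return a.x + a.y < b.x + b.y; };
    auto byDiff = [](const Pt& a, const Pt& b) { return a.x - a.y < b.x - b.y; };

    const Pt topLeft     = *std::min_element(pts.begin(), pts.end(), bySum);
    const Pt topRight    = *std::max_element(pts.begin(), pts.end(), byDiff);
    const Pt bottomLeft  = *std::min_element(pts.begin(), pts.end(), byDiff);
    const Pt bottomRight = *std::max_element(pts.begin(), pts.end(), bySum);

    return std::array<Pt, 4>{{topLeft, topRight, bottomLeft, bottomRight}};
}
```

This output order matches destination points laid out as (0, 0), (w, 0), (0, h), (w, h), which is what the warp step expects.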

Full implementation (the drawing calls can be uncommented as needed):

#include <opencv2/imgcodecs.hpp>

#include <opencv2/highgui.hpp>

#include <opencv2/imgproc.hpp>

#include <opencv2/objdetect.hpp>

#include <iostream>

using namespace std;

using namespace cv;

// Document Scanner

Mat imgOriginal, imgGray, imgCanny, imgDil, imgThre, imgBlur, imgWarp, imgCrop;

vector<Point>initialPoints, docPoints;

float w = 420, h = 596;

Mat preProcessing(Mat img)

{

cvtColor(img, imgGray, COLOR_BGR2GRAY);

GaussianBlur(imgGray, imgBlur, Size(3, 3), 3, 0);

Canny(imgBlur, imgCanny, 25, 75);

Mat kernel = getStructuringElement(MORPH_RECT, Size(3, 3));

dilate(imgCanny, imgDil, kernel);

//erode(imgDil, imgErode, kernel);

return imgDil;

}

vector<Point> getContours(Mat Dil) {

vector<vector<Point>> contours;

vector<Vec4i> hierarchy;

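// contours is a vector<vector<Point>>: each inner vector holds the consecutive points of one contour, so contours has one element per contour found.

/* hierarchy holds a 4-element array for each contour: [Next, Previous, First Child, Parent]

Next: the next contour on the same hierarchy level as the current one.

For example, in the earlier figure the next contour on the same level as contour 0 is contour 1, so Next = 1; likewise Next = 2 for contour 1. Contour 2 has no later contour on its level, so Next = -1.

Previous: the previous contour on the same hierarchy level as the current one.

As before, Previous = 0 for contour 1 and Previous = 1 for contour 2. Contour 2a has no earlier contour on its level, so Previous = -1.

First Child: the first child contour of the current contour.

For contour 2 the first child is contour 2a, so First Child = 2a; for contour 3, First Child = 3a.

Parent: the parent contour of the current contour.

The parent of 2a is contour 2, so Parent = 2; contour 2 has no parent, so Parent = -1. */

// RETR_EXTERNAL

// This mode retrieves only the top-level contours, i.e. the outermost ones.

// CHAIN_APPROX_SIMPLE: stores only the corner points of each contour in contours; the points along the straight segments between corners are dropped.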

findContours(Dil, contours, hierarchy, RETR_EXTERNAL, CHAIN_APPROX_SIMPLE);

//drawContours(img, contours, -1, Scalar(255, 0, 255),2);

vector<vector<Point>>conPoly(contours.size());

vector<Rect>boundRect(contours.size());

vector<Point> biggest;

int maxArea = 0;

// Filter out noise

for (int i = 0; i < contours.size(); i++) {

// Compute the contour area

int area = contourArea(contours[i]);

string objectType;

//cout << area <<" ";

if (area > 1000 ) {

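// arcLength(curve, closed) computes the perimeter of a contour.

// curve (InputArray): the input 2D points (contour vertices), given as a std::vector or a Mat.

// closed (bool): flag indicating whether the curve is closed; usually true.

float peri = arcLength(contours[i], true);

// Approximate the contour with a polygon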

approxPolyDP(contours[i], conPoly[i], 0.02 * peri, true);

//cout << area << endl;

if (area > maxArea && conPoly[i].size()==4 ) {

// Draw the contour

//drawContours(imgOriginal, conPoly, i, Scalar(255, 0, 255), 2);

biggest = {conPoly[i][0],conPoly[i][1], conPoly[i][2], conPoly[i][3]};

maxArea = area;

//cout << maxArea << endl;

}

// Draw the bounding rectangle

//rectangle(imgOriginal, boundRect[i].tl(), boundRect[i].br(), Scalar(0, 255, 0), 5);

}

}

return biggest;

}

void drawPoints(vector<Point>points, Scalar color)

{

for (int i = 0; i < points.size(); i++)

{

circle(imgOriginal, points[i], 10, color, FILLED);

putText(imgOriginal, to_string(i), points[i], FONT_HERSHEY_PLAIN, 4, color,4);

}

}

vector<Point> reorder(vector<Point> points)

{

vector<Point> newPoints;

vector<int> sumPoints, subPoints;

for (int i = 0; i < points.size(); i++) {

cout << points[i].x << ", " << points[i].y << endl;

sumPoints.push_back(points[i].x + points[i].y);

cout << sumPoints[i] << endl;

}

for (int i = 0; i < points.size(); i++) {

subPoints.push_back(points[i].x - points[i].y);

cout << subPoints[i] << endl;

}

// Bubble-sort implementation (disabled):

///*for (int j = 0; j < sumPoints.size(); j++) {

// for (int i = 1; i < sumPoints.size(); i++) {

// if (sumPoints[j] > sumPoints[i]) {

// newPoints = points[i];

// points[i] = points[j];

// points[j] = newPoints;

// }

// }

//}

//if (points[1].x - points[0].x < points[2].x - points[0].x) {

// Point p;

// p = points[1];

// points[1] = points[2];

// points[2] = p;

//}*/

newPoints.push_back(points[min_element(sumPoints.begin(),sumPoints.end()) - sumPoints.begin()]);

newPoints.push_back(points[max_element(subPoints.begin(), subPoints.end()) - subPoints.begin()]);

newPoints.push_back(points[min_element(subPoints.begin(), subPoints.end()) - subPoints.begin()]);

newPoints.push_back(points[max_element(sumPoints.begin(), sumPoints.end()) - sumPoints.begin()]);

return newPoints;

}

Mat getWarp(Mat img, vector<Point> points, float w, float h) {

Point2f src[4] = { points[0], points[1], points[2], points[3]};

Point2f dst[4] = { {0.0f,0.0f},{w,0.0f},{0.0f,h},{w,h} };

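// Perspective transform: projects the image onto a new viewing plane (also called projective mapping)

// src: the four corner points in the input image; dst: the four corresponding points in the output image

Mat matrix = getPerspectiveTransform(src, dst);

// warpPerspective arguments: img = source image, imgWarp = output image, matrix = transform matrix, Size(w, h) = output width and height

warpPerspective(img, imgWarp, matrix, Size(w, h));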

return imgWarp;

}

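int main() {

string path = "Learn-OpenCV-cpp-in-4-Hours-main\\Resources\\paper.jpg";

imgOriginal = imread(path);

// Stop early if the image could not be loaded

if (imgOriginal.empty()) { cerr << "Could not read image: " << path << endl; return -1; }

resize(imgOriginal, imgOriginal, Size(), 0.5, 0.5);

// Preprocessing

imgThre = preProcessing(imgOriginal);

// Get Contours - Biggest

initialPoints = getContours(imgThre);

// Stop if no four-cornered contour was found: reorder() and getWarp() need exactly four points

if (initialPoints.size() != 4) { cerr << "No document outline found" << endl; return -1; }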

//drawPoints(initialPoints, Scalar(255, 0, 0));

docPoints = reorder(initialPoints);

//drawPoints(docPoints, Scalar(0, 255, 0));

// warp

imgWarp = getWarp(imgOriginal, docPoints, w, h);

// Crop

Rect roi(5, 5, w - (2 * 5), h - (2 * 5));

imgCrop = imgWarp(roi);

namedWindow("Image",WINDOW_FREERATIO);

namedWindow("imgdilation", WINDOW_FREERATIO);

imshow("Image", imgOriginal);

imshow("imgdilation", imgThre);

//imshow("imgWarp", imgWarp);

imshow("imgCrop", imgCrop);

waitKey(0);

destroyAllWindows();

}
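The final crop in main trims a 5-pixel border from the warped image, presumably to drop leftover edge pixels along the page boundary. A minimal sketch of that inset computation follows, with a guard for margins that would consume the whole image. `RectI` and `insetRect` are hypothetical names for illustration and are not part of OpenCV.

```cpp
struct RectI { int x, y, width, height; };  // illustrative rectangle type

// Shrink a width x height image by `margin` pixels on every side.
// Returns an empty rectangle if the margin is negative or leaves no area.
RectI insetRect(int width, int height, int margin) {
    const int w = width - 2 * margin;
    const int h = height - 2 * margin;
    if (margin < 0 || w <= 0 || h <= 0) {
        return RectI{0, 0, 0, 0};
    }
    return RectI{margin, margin, w, h};
}
```

With the values used above (w = 420, h = 596, margin 5), this gives the same region as `Rect roi(5, 5, w - (2 * 5), h - (2 * 5))`, namely x = 5, y = 5, width = 410, height = 586.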