1. Standardized log output format

2. Added colored log output

3. Supports pushing logs to Kafka asynchronously

4. Log file compression

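You don't need to manage the Logback version yourself. Only the Spring Boot version matters, because the Spring Boot parent project already manages Logback and the starters bring it in. Spring Boot can print logs out of the box, so why standardize logging at all?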

Uniform logs are easier to read and manage.

Log archiving.

Log persistence.

Distributed log viewing (ELK), which makes logs easy to search and browse.

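We'll skip the introduction to Logback and go straight to the code. This article covers the following features:

Redefining the log output format.

Setting custom log levels for specific packages.

Logging by module.

Pushing logs to Kafka asynchronously.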

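POM file

Add the following two dependencies. The configuration below needs them even if you don't push logs to Kafka: the KAFKA appender is declared unconditionally, and the <if condition> block requires janino.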

<properties>

<logback-kafka-appender.version>0.2.0-RC1</logback-kafka-appender.version>

<janino.version>2.7.8</janino.version>

</properties>

<!-- Send logs to Kafka -->

<dependency>

<groupId>com.github.danielwegener</groupId>

<artifactId>logback-kafka-appender</artifactId>

<version>${logback-kafka-appender.version}</version>

<scope>runtime</scope>

</dependency>

<!-- Required for <if condition> in the Logback XML -->

<dependency>

<groupId>org.codehaus.janino</groupId>

<artifactId>janino</artifactId>

<version>${janino.version}</version>

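</dependency>

Configuration files

The project's resources folder contains three configuration files: logback-spring.xml at the root of resources (Spring Boot picks it up automatically), plus logback-defaults.xml and logback-pattern.xml in the logging subfolder, matching the include paths below: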

logback-defaults.xml

logback-pattern.xml

logback-spring.xml

logback-spring.xml

<?xml version="1.0" encoding="UTF-8"?>

<configuration>

<include resource="logging/logback-pattern.xml"/>

<include resource="logging/logback-defaults.xml"/>

</configuration>

logback-defaults.xml

<?xml version="1.0" encoding="UTF-8"?>

<included>

<!-- Spring Boot's default log file -->

<property name="LOG_FILE" value="${LOG_FILE:-${LOG_PATH:-${LOG_TEMP:-${java.io.tmpdir:-/tmp}}}/spring.log}"/>

<!-- Output directory for the log files -->

<property name="LOG_HOME" value="${LOG_PATH:-/tmp}"/>

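<!--

Append logs to the console using the CONSOLE appender that ships with Spring Boot.

<logger> elements (see logback-pattern.xml) set the log level of a package or of a single class.

-->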

<include resource="org/springframework/boot/logging/logback/console-appender.xml"/>

<include resource="logging/logback-pattern.xml"/>

<!-- Maximum size of a single log file; a larger file is rolled over and compressed into the archive -->

<property name="INFO_MAX_FILE_SIZE" value="100MB"/>

<property name="ERROR_MAX_FILE_SIZE" value="100MB"/>

<property name="TRACE_MAX_FILE_SIZE" value="100MB"/>

<property name="WARN_MAX_FILE_SIZE" value="100MB"/>

<!-- Maximum number of days to keep archived log files -->

<property name="INFO_MAX_HISTORY" value="9"/>

<property name="ERROR_MAX_HISTORY" value="9"/>

<property name="TRACE_MAX_HISTORY" value="9"/>

<property name="WARN_MAX_HISTORY" value="9"/>

<!-- Maximum total size of all archived log files; once exceeded, the oldest archives are deleted -->

<property name="INFO_TOTAL_SIZE_CAP" value="5GB"/>

<property name="ERROR_TOTAL_SIZE_CAP" value="5GB"/>

<property name="TRACE_TOTAL_SIZE_CAP" value="5GB"/>

<property name="WARN_TOTAL_SIZE_CAP" value="5GB"/>

<!-- Roll the log file daily (and whenever it reaches the size limit) -->

<appender name="INFO_FILE" class="ch.qos.logback.core.rolling.RollingFileAppender">

<!-- Current log file -->

<file>${LOG_HOME}/info.log</file>

<!-- Compressed archive settings -->

<rollingPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedRollingPolicy">

<fileNamePattern>${LOG_HOME}/backup/info/info.%d{yyyy-MM-dd}.%i.log.gz</fileNamePattern>

<maxHistory>${INFO_MAX_HISTORY}</maxHistory>

<maxFileSize>${INFO_MAX_FILE_SIZE}</maxFileSize>

<totalSizeCap>${INFO_TOTAL_SIZE_CAP}</totalSizeCap>

</rollingPolicy>

<encoder class="ch.qos.logback.classic.encoder.PatternLayoutEncoder">

<pattern>${FILE_LOG_PATTERN}</pattern>

</encoder>

<filter class="ch.qos.logback.classic.filter.ThresholdFilter">

<level>INFO</level>

</filter>

</appender>

<appender name="WARN_FILE" class="ch.qos.logback.core.rolling.RollingFileAppender">

<!-- Current log file -->

<file>${LOG_HOME}/warn.log</file>

<!-- Compressed archive settings -->

<rollingPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedRollingPolicy">

<fileNamePattern>${LOG_HOME}/backup/warn/warn.%d{yyyy-MM-dd}.%i.log.gz</fileNamePattern>

<maxHistory>${WARN_MAX_HISTORY}</maxHistory>

<maxFileSize>${WARN_MAX_FILE_SIZE}</maxFileSize>

<totalSizeCap>${WARN_TOTAL_SIZE_CAP}</totalSizeCap>

</rollingPolicy>

<encoder class="ch.qos.logback.classic.encoder.PatternLayoutEncoder">

<pattern>${FILE_LOG_PATTERN}</pattern>

</encoder>

<filter class="ch.qos.logback.classic.filter.LevelFilter">

<level>WARN</level>

<onMatch>ACCEPT</onMatch>

<onMismatch>DENY</onMismatch>

</filter>

</appender>

<appender name="ERROR_FILE" class="ch.qos.logback.core.rolling.RollingFileAppender">

<!-- Current log file -->

<file>${LOG_HOME}/error.log</file>

<!-- Compressed archive settings -->

<rollingPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedRollingPolicy">

<fileNamePattern>${LOG_HOME}/backup/error/error.%d{yyyy-MM-dd}.%i.log.gz</fileNamePattern>

<maxHistory>${ERROR_MAX_HISTORY}</maxHistory>

<maxFileSize>${ERROR_MAX_FILE_SIZE}</maxFileSize>

<totalSizeCap>${ERROR_TOTAL_SIZE_CAP}</totalSizeCap>

</rollingPolicy>

<encoder class="ch.qos.logback.classic.encoder.PatternLayoutEncoder">

<pattern>${FILE_LOG_PATTERN}</pattern>

</encoder>

<filter class="ch.qos.logback.classic.filter.LevelFilter">

<level>ERROR</level>

<onMatch>ACCEPT</onMatch>

<onMismatch>DENY</onMismatch>

</filter>

</appender>

<!-- Kafka的appender -->

<appender name="KAFKA" class="com.github.danielwegener.logback.kafka.KafkaAppender">

<encoder class="ch.qos.logback.classic.encoder.PatternLayoutEncoder">

<pattern>${FILE_LOG_PATTERN}</pattern>

</encoder>

<topic>${kafka_env}applog_${spring_application_name}</topic>

<keyingStrategy class="com.github.danielwegener.logback.kafka.keying.HostNameKeyingStrategy" />

<deliveryStrategy class="com.github.danielwegener.logback.kafka.delivery.AsynchronousDeliveryStrategy" />

<producerConfig>bootstrap.servers=${kafka_broker}</producerConfig>

<!-- don't wait for a broker to ack the reception of a batch. -->

<producerConfig>acks=0</producerConfig>

<!-- wait up to 1000ms and collect log messages before sending them as a batch -->

<producerConfig>linger.ms=1000</producerConfig>

<!-- even if the producer buffer runs full, do not block the application but start to drop messages -->

<producerConfig>max.block.ms=0</producerConfig>

<!-- Optional parameter to use a fixed partition -->

<partition>8</partition>

</appender>

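<appender name="KAFKA_ASYNC" class="ch.qos.logback.classic.AsyncAppender">

<!-- FILE_LOG_PATTERN prints %method and %L; AsyncAppender does not collect caller data by default, so these would otherwise print as "?" -->

<includeCallerData>true</includeCallerData>

<appender-ref ref="KAFKA" />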

</appender>

<root level="INFO">

<appender-ref ref="CONSOLE"/>

<appender-ref ref="INFO_FILE"/>

<appender-ref ref="WARN_FILE"/>

<appender-ref ref="ERROR_FILE"/>

<if condition='"true".equals(property("kafka_enabled"))'>

<then>

<appender-ref ref="KAFKA_ASYNC"/>

</then>

</if>

</root>

</included>

Notes:

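The <partition>8</partition> setting above fixes the partition that messages are sent to. Partition numbers start at 0, so if your topic has partitions 0 to 7, partition 8 does not exist and sending fails. Either remove this property or set it to a valid partition.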

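HostNameKeyingStrategy determines how the message key is generated. Kafka uses the key to decide which partition a message goes to (when no fixed <partition> is configured). This strategy uses the host name as the key, so all logs from one server end up in the same partition, which keeps that server's logs in chronological order. The default, NoKeyKeyingStrategy, sends messages without a key, so they are spread across partitions and their chronological order is not preserved. This is not recommended for logs.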
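To make the keying argument concrete, here is a minimal, self-contained Java sketch. It is a simplification: Kafka's default partitioner hashes the key bytes with murmur2 and takes the result modulo the partition count, whereas the hypothetical KeyPartitionSketch class below substitutes Arrays.hashCode for murmur2, so its partition numbers will not match Kafka's. What carries over is the property that matters here: the same host name always maps to the same partition, and a fixed partition is only valid from 0 up to the partition count minus 1.

```java
import java.nio.charset.StandardCharsets;
import java.util.Arrays;

// Simplified model of keyed partitioning. ASSUMPTION: Arrays.hashCode stands in for
// Kafka's murmur2 hash, so the concrete partition numbers differ from Kafka's.
class KeyPartitionSketch {

    // Same key and same partition count always give the same partition.
    static int partitionFor(String key, int numPartitions) {
        byte[] keyBytes = key.getBytes(StandardCharsets.UTF_8);
        // Clear the sign bit so the modulo result is never negative
        int positiveHash = Arrays.hashCode(keyBytes) & 0x7fffffff;
        return positiveHash % numPartitions;
    }

    // A fixed <partition> must lie in [0, numPartitions)
    static boolean isValidPartition(int partition, int numPartitions) {
        return partition >= 0 && partition < numPartitions;
    }

    public static void main(String[] args) {
        int numPartitions = 8; // a topic with partitions 0..7
        // Hypothetical host names; app-server-1 appears twice and gets the same partition both times
        for (String host : new String[] {"app-server-1", "app-server-2", "app-server-1"}) {
            System.out.println(host + " -> partition " + partitionFor(host, numPartitions));
        }
        // 8 is outside 0..7, so this prints "partition 8 valid: false"
        System.out.println("partition 8 valid: " + isValidPartition(8, numPartitions));
    }
}
```

Because the mapping depends on the partition count, adding partitions to an existing topic can move a host's logs to a different partition from that point on.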

logback-pattern.xml

<?xml version="1.0" encoding="UTF-8"?>

<included>

<!-- Display rules such as colored output and exception formatting -->

<conversionRule conversionWord="clr" converterClass="org.springframework.boot.logging.logback.ColorConverter" />

<conversionRule conversionWord="wex" converterClass="org.springframework.boot.logging.logback.WhitespaceThrowableProxyConverter" />

<conversionRule conversionWord="wEx" converterClass="org.springframework.boot.logging.logback.ExtendedWhitespaceThrowableProxyConverter" />

<!-- Custom conversion rules -->

<conversionRule conversionWord="ip" converterClass="com.ryan.utils.IPAddressConverter" />

<conversionRule conversionWord="module" converterClass="com.ryan.utils.ModuleConverter" />

<!-- Context properties -->

<springProperty scope="context" name="spring_application_name" source="spring.application.name" />

<springProperty scope="context" name="server_port" source="server.port" />

<!-- Kafka properties -->

<springProperty scope="context" name="kafka_enabled" source="ryan.web.logging.kafka.enabled"/>

<springProperty scope="context" name="kafka_broker" source="ryan.web.logging.kafka.broker"/>

<springProperty scope="context" name="kafka_env" source="ryan.web.logging.kafka.env"/>

<!-- Log output format: -->

<!-- appID | module | dateTime | level | requestID | traceID | requestIP | userIP | serverIP | serverPort | processID | thread | location | detailInfo-->

<!-- CONSOLE_LOG_PATTERN is referenced by console-appender.xml -->

<property name="CONSOLE_LOG_PATTERN" value="%clr(${spring_application_name}){cyan}|%clr(%module){blue}|%clr(%d{ISO8601}){faint}|%clr(%p)|%X{requestId}|%X{X-B3-TraceId:-}|%X{requestIp}|%X{userIp}|%ip|${server_port}|${PID}|%clr(%t){faint}|%clr(%.40logger{39}){cyan}.%clr(%method){cyan}:%L|%m%n${LOG_EXCEPTION_CONVERSION_WORD:-%wEx}"/>

<!-- FILE_LOG_PATTERN is referenced by logback-defaults.xml -->

<property name="FILE_LOG_PATTERN" value="${spring_application_name}|%module|%d{ISO8601}|%p|%X{requestId}|%X{X-B3-TraceId:-}|%X{requestIp}|%X{userIp}|%ip|${server_port}|${PID}|%t|%.40logger{39}.%method:%L|%m%n${LOG_EXCEPTION_CONVERSION_WORD:-%wEx}"/>

<!--

Default loggers copied from org/springframework/boot/logging/logback/defaults.xml

-->

<logger name="org.apache.catalina.startup.DigesterFactory" level="ERROR"/>

<logger name="org.apache.catalina.util.LifecycleBase" level="ERROR"/>

<logger name="org.apache.coyote.http11.Http11NioProtocol" level="WARN"/>

<logger name="org.apache.sshd.common.util.SecurityUtils" level="WARN"/>

<logger name="org.apache.tomcat.util.net.NioSelectorPool" level="WARN"/>

<logger name="org.eclipse.jetty.util.component.AbstractLifeCycle" level="ERROR"/>

<logger name="org.hibernate.validator.internal.util.Version" level="WARN"/>

</included>

Custom module converter

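package com.ryan.utils;

import ch.qos.logback.classic.pattern.ClassicConverter;

import ch.qos.logback.classic.spi.ILoggingEvent;

/**

* <b>Function: </b> Gets the log module name

*

* @program: ModuleConverter

* @Package: com.ryan.utils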

* @author: songjianlin

* @date: 2024/04/17

* @version: 1.0

* @Copyright: 2024 www.kingbal.com Inc. All rights reserved.

*/

public class ModuleConverter extends ClassicConverter {

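// Logger names longer than this (e.g. fully qualified class names) are not treated as modules

private static final int MAX_LENGTH = 20;

@Override

public String convert(ILoggingEvent event) {

// A short logger name is an explicit topic such as @Slf4j(topic = "LogbackController")

if (event.getLoggerName().length() > MAX_LENGTH) {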

return "";

} else {

return event.getLoggerName();

}

}

}

Custom IP converter

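package com.ryan.utils;

import ch.qos.logback.classic.pattern.ClassicConverter;

import ch.qos.logback.classic.spi.ILoggingEvent;

import lombok.extern.slf4j.Slf4j;

import java.net.InetAddress;

import java.net.UnknownHostException;

/**

* <b>Function: </b> Gets the server's IP address

*

* @program: IPAddressConverter

* @Package: com.ryan.utils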

* @author: songjianlin

* @date: 2024/04/17

* @version: 1.0

* @Copyright: 2024 www.kingbal.com Inc. All rights reserved.

*/

@Slf4j

public class IPAddressConverter extends ClassicConverter {

// Resolved once when the class is loaded and reused for every log event

private static String ipAddress;

static {

try {

ipAddress = InetAddress.getLocalHost().getHostAddress();

} catch (UnknownHostException e) {

log.error("fetch localhost host address failed", e);

ipAddress = "UNKNOWN";

}

}

@Override

public String convert(ILoggingEvent event) {

return ipAddress;

}

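}

Logging by module

Add a topic to @Slf4j. Lombok then names the logger after the topic instead of the fully qualified class name, and ModuleConverter prints that short name in the module field.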
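The rule ModuleConverter applies can be tried out without Logback. The sketch below (ModuleRuleSketch and moduleOf are hypothetical names) copies its length check: a logger created with @Slf4j(topic = "LogbackController") is named LogbackController and is printed as the module, while a plain @Slf4j logger is named after the fully qualified class name, which usually exceeds 20 characters and leaves the module field empty.

```java
// Stand-alone copy of ModuleConverter's rule (hypothetical class, no Logback needed)
class ModuleRuleSketch {

    // Same threshold as ModuleConverter.MAX_LENGTH
    private static final int MAX_LENGTH = 20;

    // Short logger names (explicit topics) become the module; long ones print as empty
    static String moduleOf(String loggerName) {
        return loggerName.length() > MAX_LENGTH ? "" : loggerName;
    }

    public static void main(String[] args) {
        // @Slf4j(topic = "LogbackController") -> logger name "LogbackController" (17 chars)
        System.out.println("[" + moduleOf("LogbackController") + "]"); // prints [LogbackController]
        // Plain @Slf4j -> fully qualified class name (hypothetical package shown here)
        System.out.println("[" + moduleOf("com.ryan.web.LogbackController") + "]"); // prints []
    }
}
```

Keeping topics at 20 characters or fewer is therefore what makes a module name appear in the log line.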

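import lombok.extern.slf4j.Slf4j;

import org.springframework.web.bind.annotation.RequestMapping;

import org.springframework.web.bind.annotation.RestController;

/**

* <b>Function: </b> Demonstrates logging by module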

*

* @program: LogbackController

* @Package: com.kingbal.king.dmp

* @author: songjianlin

* @date: 2024/04/17

* @version: 1.0

* @Copyright: 2024 www.kingbal.com Inc. All rights reserved.

*/

@RestController

@RequestMapping(value = "/portal")

@Slf4j(topic = "LogbackController")

public class LogbackController {

@RequestMapping(value = "/gohome")

public void m1() {

log.info("buddy,we go home~");

}

}

Custom log levels

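To print SQL statements, set the log level of the DAO package to debug. logging.path sets the log directory; Spring Boot passes it to Logback as LOG_PATH, which logback-defaults.xml uses for LOG_HOME (newer Spring Boot versions name this property logging.file.path).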
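Setting the level on the dao package is enough because Logback loggers form a hierarchy by dot-separated name: a logger without its own level inherits the level of its nearest configured ancestor, and finally that of root. The Java sketch below is a simplified model of that lookup, not Logback's implementation. EffectiveLevelSketch and AccountMapper are hypothetical names, and it assumes the SQL is logged by loggers named after classes in that package (MyBatis, for example, logs statements under the mapper's name). Map.of requires Java 9 or later.

```java
import java.util.Map;

// Simplified model of Logback's effective-level lookup (hypothetical class)
class EffectiveLevelSketch {

    // Walk up the dot-separated logger name until a configured ancestor is found
    static String effectiveLevel(String loggerName, Map<String, String> configured, String rootLevel) {
        String name = loggerName;
        while (true) {
            String level = configured.get(name);
            if (level != null) {
                return level;
            }
            int dot = name.lastIndexOf('.');
            if (dot < 0) {
                return rootLevel; // no configured ancestor: inherit from root
            }
            name = name.substring(0, dot); // move to the parent logger
        }
    }

    public static void main(String[] args) {
        // Mirrors logging.level.com.ryan.trading.account.dao = debug with <root level="INFO">
        Map<String, String> configured = Map.of("com.ryan.trading.account.dao", "DEBUG");
        // Hypothetical mapper inside the dao package: prints DEBUG
        System.out.println(effectiveLevel("com.ryan.trading.account.dao.AccountMapper", configured, "INFO"));
        // Hypothetical service outside the dao package: prints INFO
        System.out.println(effectiveLevel("com.ryan.trading.account.service.AccountService", configured, "INFO"));
    }
}
```

Note that the match is per name segment, not a plain string prefix: a package such as com.ryan.trading.account.daox would not pick up the dao setting.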

logging.path = /tmp

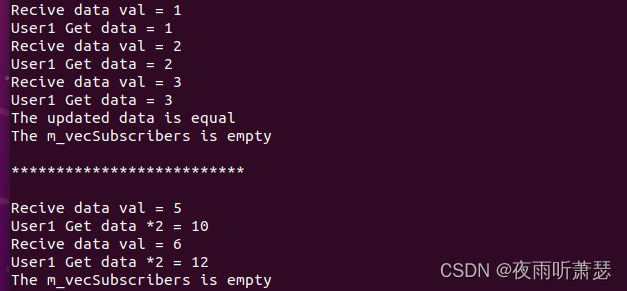

logging.level.com.ryan.trading.account.dao = debug

Pushing to Kafka

ryan.web.logging.kafka.enabled=true

# Separate multiple brokers with commas

ryan.web.logging.kafka.broker=127.0.0.1:9092

# Prefix of the Kafka topic name; with env=test the KAFKA appender writes to testapplog_${spring.application.name}

ryan.web.logging.kafka.env=test
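On the consuming side (for example, a Kafka consumer feeding ELK), each record written with FILE_LOG_PATTERN can be split back into the fields listed in logback-pattern.xml. The sketch below is a hypothetical helper, not part of logback-kafka-appender. It assumes the record has had its trailing newline removed and that no field except the last contains |. Splitting with a limit of 14 keeps any | inside the message, and any stack trace that follows it in the same record, in detailInfo.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical parser for lines produced by FILE_LOG_PATTERN
class LogLineParserSketch {

    // Field order from the comment in logback-pattern.xml
    static final String[] FIELDS = {
        "appID", "module", "dateTime", "level", "requestID", "traceID", "requestIP",
        "userIP", "serverIP", "serverPort", "processID", "thread", "location", "detailInfo"
    };

    static Map<String, String> parse(String line) {
        // limit = FIELDS.length keeps '|' inside the message in the last field
        // and keeps empty fields (e.g. MDC values that were not set) in place
        String[] parts = line.split("\\|", FIELDS.length);
        if (parts.length != FIELDS.length) {
            throw new IllegalArgumentException(
                    "expected " + FIELDS.length + " fields but got " + parts.length);
        }
        Map<String, String> result = new LinkedHashMap<>();
        for (int i = 0; i < FIELDS.length; i++) {
            result.put(FIELDS[i], parts[i]);
        }
        return result;
    }

    public static void main(String[] args) {
        // Hypothetical record; requestID, traceID, requestIP and userIP are empty here
        String line = "demo-app|LogbackController|2024-04-17 10:15:30,123|INFO|||||"
                + "192.168.1.10|8080|12345|http-nio-8080-exec-1|LogbackController.m1:21|buddy,we go home~";
        parse(line).forEach((field, value) -> System.out.println(field + " = " + value));
    }
}
```

In a real pipeline this step is often done by Logstash or an ingest pipeline instead; the point here is only that the fixed field order makes each record machine-readable.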