
K8S HA Deployment

1. Machine Plan

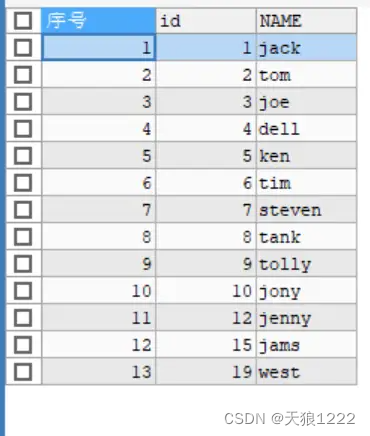

| IP | Hostname | Role |
|---|---|---|
| 10.83.195.6 | master1 | master |
| 10.83.195.7 | master2 | master |
| 10.83.195.8 | master3 | master |
| 10.83.195.9 | node1 | node |
| 10.83.195.10 | node2 | node |
| 10.83.195.250 | - | VIP (keepalived virtual IP) |

2. Preparation

2.1 Install Ansible

# On master1

yum install -y ansible
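Every ansible command in this article passes `-i /opt/ansible/nodes` and targets the `all` and `master` groups, but the inventory file itself is not shown. A minimal sketch of what it could contain, assuming a `[master]` group for the three control-plane hosts and a `[node]` group (an assumed name) for the workers; `write_inventory` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write an Ansible INI inventory for the hosts in section 1.
# "master" is the group the commands in this article target; "node" is an assumed name.
write_inventory() {
  local path="${1:-/opt/ansible/nodes}"
  mkdir -p "$(dirname "$path")"
  cat > "$path" <<'EOF'
[master]
10.83.195.6
10.83.195.7
10.83.195.8

[node]
10.83.195.9
10.83.195.10
EOF
}
```

Usage: `write_inventory /opt/ansible/nodes`, then `ansible -i /opt/ansible/nodes all -m ping` once SSH access (2.3) is in place.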

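2.2 Set the hostname

# Set each machine's hostname (master1 ... node2, as in the table above)

hostnamectl set-hostname xxx

# Configure /etc/hosts

# Keep the "127.0.0.1 localhost ..." and "::1 localhost6 ..." lines, otherwise the calico pods report errors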

ansible -i /opt/ansible/nodes all -m shell -a "cat >> /etc/hosts<<EOF

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost6 localhost6.localdomain6 localhost6.localdomain

10.83.195.6 master1

10.83.195.7 master2

10.83.195.8 master3

10.83.195.9 node1

10.83.195.10 node2

EOF

"

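The heredoc above appends the whole block every time it runs, so running it twice duplicates the entries. A sketch of an idempotent variant, assuming an entry should only be added when its hostname does not already appear on a non-comment line; `add_host_entry` and `add_cluster_hosts` are hypothetical helpers, and the file path is a parameter so the logic can be tried on a copy first.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append "IP HOSTNAME" only if HOSTNAME is not already
# present as a whole word on a non-comment line of the hosts file.
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  if ! grep -v '^[[:space:]]*#' "$file" | grep -qw -- "$name"; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# The cluster hosts from the table in section 1.
add_cluster_hosts() {
  local file="${1:-/etc/hosts}"
  add_host_entry "$file" 10.83.195.6  master1
  add_host_entry "$file" 10.83.195.7  master2
  add_host_entry "$file" 10.83.195.8  master3
  add_host_entry "$file" 10.83.195.9  node1
  add_host_entry "$file" 10.83.195.10 node2
}
```

To run it on every host, one option is to save the functions plus a call `add_cluster_hosts /etc/hosts` as a script and use `ansible -i /opt/ansible/nodes all -m script -a ./hosts.sh`; Ansible's built-in `lineinfile` module is the more idiomatic alternative.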
2.3 Passwordless SSH

# Generate an SSH key pair

ssh-keygen

# Allow root login over SSH

ansible -i /opt/ansible/nodes all -m shell -a "sudo sed -i 's/PermitRootLogin no/PermitRootLogin yes/' /etc/ssh/sshd_config && sudo grep PermitRootLogin /etc/ssh/sshd_config && sudo systemctl restart sshd"

# ssh-copy-id from master1

ssh-copy-id 10.83.195.6

# Optionally copy master1's key pair to master2 and master3 as well, so they can log in without a password too
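`ssh-copy-id 10.83.195.6` only installs the key on master1 itself, while ansible needs key-based access to every host. A minimal sketch that loops over all five machines, assuming root logins; `copy_keys` is a hypothetical helper and `DRY_RUN=1` only prints the commands so the list can be checked first.

```shell
#!/usr/bin/env bash
# Sketch: push master1's public key to every host from section 1.
# Set DRY_RUN=1 to print the commands instead of running them.
HOSTS=(10.83.195.6 10.83.195.7 10.83.195.8 10.83.195.9 10.83.195.10)

copy_keys() {
  local user="${1:-root}" ip
  for ip in "${HOSTS[@]}"; do
    if [ "${DRY_RUN:-0}" = 1 ]; then
      echo "ssh-copy-id ${user}@${ip}"
    else
      # Prompts once for each host's password.
      ssh-copy-id "${user}@${ip}"
    fi
  done
}
```

Usage: `DRY_RUN=1 copy_keys root` to review, then `copy_keys root`.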

2.4 Time synchronization

ansible -i /opt/ansible/nodes all -m shell -a "yum install chrony -y"

ansible -i /opt/ansible/nodes all -m shell -a "systemctl start chronyd && systemctl enable chronyd && chronyc sources"

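2.5 System settings

# Turn swap off for the current boot: kubelet refuses to start while swap is enabled (it fails on swap by default), so swap must be off

# Check with free that swap now shows 0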

ansible -i /opt/ansible/nodes all -m shell -a 'sudo swapoff -a && free'

# Disable permanently (comment out the swap entries in /etc/fstab)

ansible -i /opt/ansible/nodes all -m shell -a "sudo sed -i 's/.*swap.*/#&/' /etc/fstab"
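The sed above prefixes `#` to every line that merely contains "swap", including lines that are already commented out. A slightly tighter sketch, assuming swap entries carry `swap` as their third (filesystem type) field, comments out only active entries; `disable_swap_in_fstab` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Comment out active swap entries (3rd field == swap) in an fstab-format file.
# Lines that are already commented out are skipped by the /^#/! address.
disable_swap_in_fstab() {
  local fstab="${1:-/etc/fstab}"
  sed -i -E '/^[[:space:]]*#/!s/^([^[:space:]]+[[:space:]]+[^[:space:]]+[[:space:]]+swap[[:space:]])/#\1/' "$fstab"
}
```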

# Disable SELinux

# For the current boot

ansible -i /opt/ansible/nodes all -m shell -a "setenforce 0"

# Permanently (takes effect after a reboot)

ansible -i /opt/ansible/nodes all -m shell -a "sed -i 's/^SELINUX=enforcing$/SELINUX=disabled/' /etc/selinux/config"
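The sed above only matches `SELINUX=enforcing`, so a host that was already switched to `permissive` keeps that setting after a reboot. A hedged variant that handles both values; `disable_selinux_config` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Set SELINUX=disabled whether the config currently says enforcing or permissive.
# Commented lines and SELINUXTYPE= are left untouched.
disable_selinux_config() {
  local cfg="${1:-/etc/selinux/config}"
  sed -i -E 's/^SELINUX=(enforcing|permissive)[[:space:]]*$/SELINUX=disabled/' "$cfg"
}
```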

# Stop and disable the firewall

ansible -i /opt/ansible/nodes all -m shell -a "systemctl stop firewalld && systemctl disable firewalld"

# Let iptables see bridged traffic

ansible -i /opt/ansible/nodes all -m shell -a "sudo modprobe br_netfilter && lsmod | grep br_netfilter"

ansible -i /opt/ansible/nodes all -m shell -a "sudo cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf

overlay

br_netfilter

EOF"

ansible -i /opt/ansible/nodes all -m shell -a "sudo modprobe overlay && sudo modprobe br_netfilter"

# Set the required sysctl parameters; they persist across reboots

ansible -i /opt/ansible/nodes all -m shell -a "sudo cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.ipv4.ip_forward = 1

EOF"

ansible -i /opt/ansible/nodes all -m shell -a "echo 1|sudo tee /proc/sys/net/ipv4/ip_forward"

# Apply the sysctl parameters without rebooting

ansible -i /opt/ansible/nodes all -m shell -a "sudo sysctl --system"
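To confirm the three parameters are actually active (the `net.bridge.*` keys only exist once `br_netfilter` is loaded), a small check can read them back from `/proc/sys`. A sketch; `check_k8s_sysctl` is a hypothetical helper, and its root-directory argument exists only so it can be exercised against a fake tree.

```shell
#!/usr/bin/env bash
# Check that the kernel parameters from /etc/sysctl.d/k8s.conf are set to 1.
# Argument: sysctl root (default /proc/sys); a key a.b.c maps to <root>/a/b/c.
check_k8s_sysctl() {
  local root="${1:-/proc/sys}" key path val rc=0
  for key in net.bridge.bridge-nf-call-iptables net.bridge.bridge-nf-call-ip6tables net.ipv4.ip_forward; do
    path="$root/${key//.//}"
    if [ -r "$path" ]; then
      val=$(cat "$path")
    else
      val=missing   # e.g. br_netfilter not loaded
    fi
    if [ "$val" != 1 ]; then
      echo "NOT OK: $key=$val" >&2
      rc=1
    fi
  done
  return "$rc"
}
```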

2.6 Install Docker

# centos7

ansible -i /opt/ansible/nodes all -m shell -a "wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo"

# centos8

# wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-8.repo

# Install yum-utils, which provides yum-config-manager

ansible -i /opt/ansible/nodes all -m shell -a "sudo yum -y install yum-utils"

# Add the Docker CE yum repository (Aliyun mirror)

ansible -i /opt/ansible/nodes all -m shell -a "sudo yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo"

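# Move Docker's image storage to /data/docker via a symlink; do this before installing Docker, while /var/lib/docker does not exist yet

ansible -i /opt/ansible/nodes all -m shell -a "sudo mkdir -p /data/docker && sudo ln -s /data/docker /var/lib/docker"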

# Install docker-ce

ansible -i /opt/ansible/nodes all -m shell -a "sudo yum install -y docker-ce"

# Start Docker and enable it at boot

ansible -i /opt/ansible/nodes all -m shell -a "sudo systemctl start docker && sudo systemctl enable docker && sudo docker --version"

# Show the version number

# sudo docker --version

# Show detailed version information

# sudo docker version

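# Configure a Docker registry mirror and the systemd cgroup driver (kubelet as configured by kubeadm 1.23 also uses systemd, and the two must match)

# Edit /etc/docker/daemon.json (create it if it does not exist)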

ansible -i /opt/ansible/nodes all -m shell -a 'sudo cat <<EOF | sudo tee /etc/docker/daemon.json

{

"registry-mirrors": ["https://ogeydad1.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"]

}

EOF

'

# Restart Docker so daemon.json takes effect (exec-opts such as the cgroup driver are not applied by a reload)

ansible -i /opt/ansible/nodes all -m shell -a "sudo systemctl restart docker && sudo systemctl status docker"

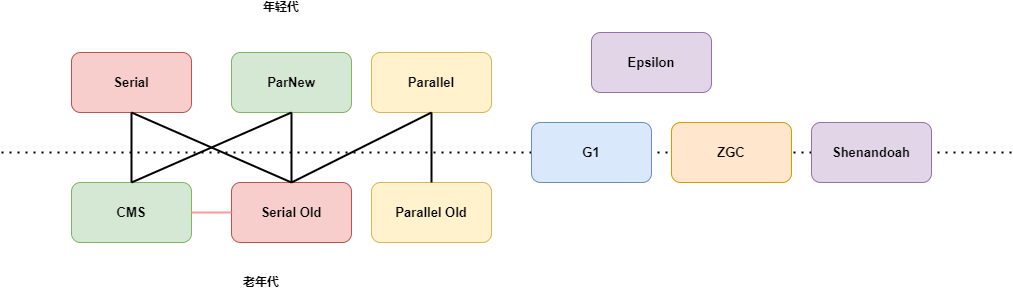
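After the restart, it is worth confirming that Docker really picked up the systemd cgroup driver, since a mismatch with kubelet typically only surfaces later as kubelet failing to start. A sketch built on `docker info --format '{{.CgroupDriver}}'`; `check_cgroup_driver` is a hypothetical helper that accepts the value as an argument so it can be tested without Docker.

```shell
#!/usr/bin/env bash
# Verify Docker's cgroup driver is systemd. With no argument, ask docker;
# with an argument, check that value instead (handy for testing).
check_cgroup_driver() {
  local driver="$1"
  if [ -z "$driver" ]; then
    driver=$(docker info --format '{{.CgroupDriver}}' 2>/dev/null)
  fi
  if [ "$driver" = systemd ]; then
    echo "cgroup driver OK: systemd"
  else
    echo "cgroup driver is '${driver:-unknown}', expected systemd; check /etc/docker/daemon.json" >&2
    return 1
  fi
}
```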

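2.7 Deploy HAProxy + Keepalived

K8S master HA is provided by HAProxy + Keepalived: Keepalived floats the VIP 10.83.195.250 across the three masters, and HAProxy on every master listens on port 16443 and balances TCP connections across the three kube-apiservers on port 6443.

# Run on the 3 master nodes

ansible -i /opt/ansible/nodes master -m shell -a "yum install keepalived haproxy -y"

Edit the haproxy.cfg configuration

# vim /etc/haproxy/haproxy.cfg and append the following configuration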

frontend k8s-master

bind 0.0.0.0:16443

mode tcp

option tcplog

tcp-request inspect-delay 5s

default_backend k8s-master

backend k8s-master

mode tcp

option tcplog

option tcp-check

balance roundrobin

default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100

server master1 10.83.195.6:6443 check inter 10000 fall 2 rise 2 weight 100

server master2 10.83.195.7:6443 check inter 10000 fall 2 rise 2 weight 100

server master3 10.83.195.8:6443 check inter 10000 fall 2 rise 2 weight 100
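The appended section is identical on all three masters, so it can be generated from the list of master IPs instead of typed by hand. A sketch; `gen_haproxy_k8s` is a hypothetical generator that reproduces the options above and assumes every kube-apiserver listens on 6443.

```shell
#!/usr/bin/env bash
# Hypothetical generator for the frontend/backend section appended to haproxy.cfg.
# Args: frontend port, then the master IPs (kube-apiserver assumed on 6443).
gen_haproxy_k8s() {
  local port="$1"; shift
  local i=1 ip
  cat <<EOF
frontend k8s-master
    bind 0.0.0.0:${port}
    mode tcp
    option tcplog
    tcp-request inspect-delay 5s
    default_backend k8s-master

backend k8s-master
    mode tcp
    option tcplog
    option tcp-check
    balance roundrobin
    default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100
EOF
  for ip in "$@"; do
    echo "    server master${i} ${ip}:6443 check inter 10000 fall 2 rise 2 weight 100"
    i=$((i + 1))
  done
}
```

Usage: `gen_haproxy_k8s 16443 10.83.195.6 10.83.195.7 10.83.195.8 >> /etc/haproxy/haproxy.cfg`, then `haproxy -c -f /etc/haproxy/haproxy.cfg` to validate the file before starting the service.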

# Distribute to the other masters

ansible -i /opt/ansible/nodes master -m copy -a "src=/etc/haproxy/haproxy.cfg dest=/etc/haproxy/haproxy.cfg"

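Edit the keepalived.conf configuration

# vim /etc/keepalived/keepalived.conf and replace its contents

# state: MASTER on the primary node, BACKUP on the others

# interface: the NIC name, as shown by ifconfig

# priority: 101 on the MASTER, 100 on the BACKUPs; when the check script fails, weight -2 lowers the MASTER to 99, below the BACKUPs

# master (master1)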

! Configuration File for keepalived

global_defs {

script_user root

enable_script_security

router_id LVS_DEVEL

}

vrrp_script check_apiserver {

script "/etc/keepalived/check_k8s.sh"

interval 3

weight -2

fall 2

rise 2

}

vrrp_instance VI_1 {

# MASTER on the primary node, BACKUP on the others

state MASTER

# NIC name

interface ens192

virtual_router_id 51

# 101 on the MASTER, 100 on the BACKUPs

priority 101

authentication {

auth_type PASS

auth_pass admin

}

virtual_ipaddress {

# VIP

10.83.195.250

}

track_script {

check_apiserver

}

}

# backup (master2, master3)

! Configuration File for keepalived

global_defs {

script_user root

enable_script_security

router_id LVS_DEVEL

}

vrrp_script check_apiserver {

script "/etc/keepalived/check_k8s.sh"

interval 3

weight -2

fall 2

rise 2

}

vrrp_instance VI_1 {

# MASTER on the primary node, BACKUP on the others

state BACKUP

# NIC name

interface ens192

virtual_router_id 51

# 101 on the MASTER, 100 on the BACKUPs

priority 100

authentication {

auth_type PASS

auth_pass admin

}

virtual_ipaddress {

# VIP

10.83.195.250

}

track_script {

check_apiserver

}

}
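The MASTER and BACKUP files differ only in `state` and `priority`, and the name listed under `track_script` has to match the `vrrp_script` name exactly, otherwise keepalived does not track the check. A sketch that renders either variant from one template; `gen_keepalived_conf` is a hypothetical generator and uses a single variable for the script name so the two references cannot drift apart.

```shell
#!/usr/bin/env bash
# Hypothetical generator for /etc/keepalived/keepalived.conf on one master.
# Args: state (MASTER|BACKUP), priority, interface, VIP.
gen_keepalived_conf() {
  local state="$1" priority="$2" iface="$3" vip="$4"
  local check=check_apiserver   # used for both vrrp_script and track_script
  cat <<EOF
! Configuration File for keepalived
global_defs {
    script_user root
    enable_script_security
    router_id LVS_DEVEL
}
vrrp_script ${check} {
    script "/etc/keepalived/check_k8s.sh"
    interval 3
    weight -2
    fall 2
    rise 2
}
vrrp_instance VI_1 {
    state ${state}
    interface ${iface}
    virtual_router_id 51
    priority ${priority}
    authentication {
        auth_type PASS
        auth_pass admin
    }
    virtual_ipaddress {
        ${vip}
    }
    track_script {
        ${check}
    }
}
EOF
}
```

Usage: on master1 `gen_keepalived_conf MASTER 101 ens192 10.83.195.250 > /etc/keepalived/keepalived.conf`; on master2 and master3 the same with `BACKUP 100`.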

Health-check script /etc/keepalived/check_k8s.sh

#!/bin/bash

# Succeed (return 0) as soon as a kube-apiserver process is found;

# retry up to 5 times, 2 s apart, before giving up (return 1)

function check_k8s() {

for ((i=0;i<5;i++));do

if pgrep kube-apiserver >/dev/null;then

return 0

fi

sleep 2

done

return 1

}

# kube-apiserver is not running: stop keepalived so the VIP moves to another master

if ! check_k8s;then

/usr/bin/systemctl stop keepalived

exit 1

fi

exit 0

# Distribute

ansible -i /opt/ansible/nodes master -m copy -a "src=/etc/keepalived/check_k8s.sh dest=/etc/keepalived/"

ansible -i /opt/ansible/nodes master -m shell -a "chmod +x /etc/keepalived/check_k8s.sh"

# Start

ansible -i /opt/ansible/nodes master -m shell -a "systemctl enable --now keepalived haproxy"

# Check which master holds the VIP

ip a

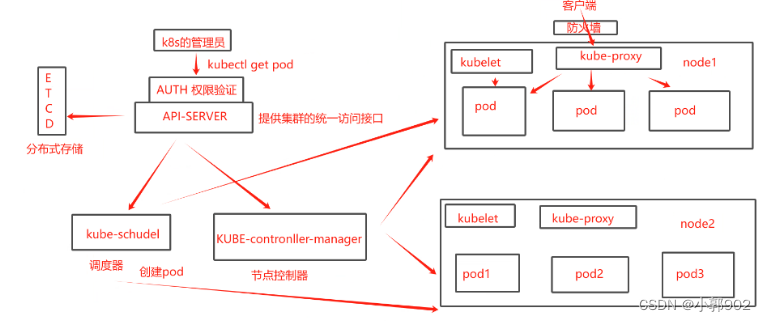
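Exactly one master should hold the VIP at a time, and scanning the full `ip a` output on each host is error-prone. A sketch that reads the one-line-per-address output of `ip -o -4 addr show` on stdin; `vip_present` is a hypothetical helper. Keep in mind that check_k8s.sh, as written, stops keepalived whenever it finds no kube-apiserver process, which is the case until section 3.2 has run, so re-checking the VIP right before `kubeadm init` is a cheap way to catch a VIP that has disappeared.

```shell
#!/usr/bin/env bash
# Read `ip -o -4 addr show` output on stdin; succeed if the VIP is bound here.
vip_present() {
  local vip="$1"
  # -F: match the address literally; the trailing "/" avoids prefix matches.
  grep -qF "inet ${vip}/"
}
```

Usage: `ansible -i /opt/ansible/nodes master -m shell -a "ip -o -4 addr show"` for an overview, or locally `ip -o -4 addr show | vip_present 10.83.195.250 && echo "VIP is on $(hostname)"`.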

3. Deploy K8S

3.1 Install the Kubernetes packages

# On all nodes

ansible -i /opt/ansible/nodes all -m shell -a "sudo cat <<EOF | sudo tee /etc/yum.repos.d/kubernetes.repo

[k8s]

name=k8s

enabled=1

gpgcheck=0

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

EOF

"

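# --disableexcludes=kubernetes ignores the exclude= list of the repo whose id is kubernetes; the repo above is named [k8s] and has no exclude line, so here the flag has no effect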

ansible -i /opt/ansible/nodes all -m shell -a "yum install -y kubelet-1.23.6 kubeadm-1.23.6 kubectl-1.23.6 --disableexcludes=kubernetes"
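`--disableexcludes=kubernetes` only matters when a repo with the id `kubernetes` defines an `exclude=` list. A sketch of a repo file laid out the way the upstream kubeadm install guide used to for yum, assuming the same Aliyun baseurl and `gpgcheck=0` as above: the three packages are excluded so a routine `yum update` cannot upgrade the cluster by accident, and the install command lifts the exclusion explicitly. `write_k8s_repo` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Write a kubernetes.repo whose id matches --disableexcludes=kubernetes.
# exclude= keeps a plain "yum update" from upgrading kubelet/kubeadm/kubectl.
write_k8s_repo() {
  local path="${1:-/etc/yum.repos.d/kubernetes.repo}"
  cat > "$path" <<'EOF'
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=0
exclude=kubelet kubeadm kubectl
EOF
}
```

Usage: `write_k8s_repo && yum install -y kubelet-1.23.6 kubeadm-1.23.6 kubectl-1.23.6 --disableexcludes=kubernetes`. The default path is the same file as above, so it replaces the `[k8s]` definition rather than adding a second repo.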

# Check the installed version

# Use --client: without a cluster, a plain "kubectl version" fails with "Unable to connect to the server: dial tcp: lookup localhost on 10.82.26.252:53: no such host", and the && would then skip yum info

ansible -i /opt/ansible/nodes all -m shell -a "sudo kubectl version --client && sudo yum info kubeadm"

# Enable kubelet at boot and start it now (--now); it keeps restarting until kubeadm init/join has run, which is expected

ansible -i /opt/ansible/nodes all -m shell -a "sudo systemctl enable --now kubelet && sudo systemctl status kubelet"

3.2 Initialize the cluster

# Run on master1

# --control-plane-endpoint: VIP:16443, the HAProxy frontend behind the keepalived VIP

# --pod-network-cidr=192.168.0.0/16 must match CALICO_IPV4POOL_CIDR in calico.yaml

kubeadm init --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.23.6 --pod-network-cidr=192.168.0.0/16 --control-plane-endpoint 10.83.195.250:16443 --upload-certs
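The same init flags can also be kept in a kubeadm config file, which is easier to review and reuse on a redeploy. A sketch using the `kubeadm.k8s.io/v1beta3` config API (the one kubeadm 1.23 reads), with only the fields that correspond to the flags above; `write_kubeadm_config` and the file name `kubeadm-config.yaml` are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: the kubeadm init flags above as a ClusterConfiguration.
write_kubeadm_config() {
  local path="${1:-kubeadm-config.yaml}"
  cat > "$path" <<'EOF'
apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
kubernetesVersion: v1.23.6
imageRepository: registry.aliyuncs.com/google_containers
controlPlaneEndpoint: "10.83.195.250:16443"
networking:
  podSubnet: 192.168.0.0/16
EOF
}
```

Usage: `write_kubeadm_config kubeadm-config.yaml`, optionally `kubeadm config images pull --config kubeadm-config.yaml` to pre-pull images, then `kubeadm init --config kubeadm-config.yaml --upload-certs`.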

# Your Kubernetes control-plane has initialized successfully!

# To start using your cluster, you need to run the following as a regular user:

# mkdir -p $HOME/.kube

# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

# sudo chown $(id -u):$(id -g) $HOME/.kube/config

# Alternatively, if you are the root user, you can run:

# export KUBECONFIG=/etc/kubernetes/admin.conf

# You should now deploy a pod network to the cluster.

# Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

# https://kubernetes.io/docs/concepts/cluster-administration/addons/

# You can now join any number of the control-plane node running the following command on each as root:

# kubeadm join 10.83.195.250:16443 --token 6z1jge.6hue81vruwh8msdl \

# --discovery-token-ca-cert-hash sha256:a3db8061e0b570e897b2d0e7c243ef7342c51299d04ef649737187e50aee8ea6 \

# --control-plane --certificate-key 35e73eae794acd9275445902cfd8d545a0e3b8e017f8d5960bd2e6796f74c386

# Please note that the certificate-key gives access to cluster sensitive data, keep it secret!

# As a safeguard, uploaded-certs will be deleted in two hours; If necessary, you can use

# "kubeadm init phase upload-certs --upload-certs" to reload certs afterward.

# Then you can join any number of worker nodes by running the following on each as root:

# kubeadm join 10.83.195.250:16443 --token 6z1jge.6hue81vruwh8msdl \

# --discovery-token-ca-cert-hash sha256:a3db8061e0b570e897b2d0e7c243ef7342c51299d04ef649737187e50aee8ea6

3.3 Add the other master nodes

# You can now join any number of the control-plane node running the following command on each as root:

kubeadm join 10.83.195.250:16443 --token 6z1jge.6hue81vruwh8msdl \

--discovery-token-ca-cert-hash sha256:a3db8061e0b570e897b2d0e7c243ef7342c51299d04ef649737187e50aee8ea6 \

--control-plane --certificate-key 35e73eae794acd9275445902cfd8d545a0e3b8e017f8d5960bd2e6796f74c386
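The token in the init output expires after 24 h and the uploaded certificates after 2 h, so the command above stops working if master2/3 join later. A sketch that rebuilds it on master1: `kubeadm token create --print-join-command` prints a fresh worker join command, and `kubeadm init phase upload-certs --upload-certs` re-uploads the certificates and prints a new key. Taking the key from the last output line is an assumption, so compare it with the printed output. `make_cp_join` and `print_cp_join` are hypothetical helpers.

```shell
#!/usr/bin/env bash
# Turn a worker join command into a control-plane join command.
make_cp_join() {
  local worker_join="$1" cert_key="$2"
  printf '%s --control-plane --certificate-key %s\n' "$worker_join" "$cert_key"
}

# Run on master1 with a working kubeconfig. Assumes the certificate key is the
# last line printed by "kubeadm init phase upload-certs --upload-certs".
print_cp_join() {
  local join key
  join=$(kubeadm token create --print-join-command) || return 1
  key=$(kubeadm init phase upload-certs --upload-certs | tail -n 1) || return 1
  make_cp_join "$join" "$key"
}
```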

# On all 3 master nodes

# Current shell only (lost when you log out and back in)

export KUBECONFIG=/etc/kubernetes/admin.conf

# Persistent (recommended)

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile && source ~/.bash_profile

# To redeploy from scratch

# kubeadm reset

# rm -rf $HOME/.kube && rm -rf /etc/cni/net.d && rm -rf /etc/kubernetes/*

# then run the kubeadm init command again

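3.4 Add the worker nodes

# Then you can join any number of worker nodes by running the following on each as root:

# If the token has expired (default lifetime 24 h), print a new join command on master1 with: kubeadm token create --print-join-command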

kubeadm join 10.83.195.250:16443 --token 6z1jge.6hue81vruwh8msdl \

--discovery-token-ca-cert-hash sha256:a3db8061e0b570e897b2d0e7c243ef7342c51299d04ef649737187e50aee8ea6
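Nodes report `NotReady` until a CNI plugin is running (section 3.5), so node status is best checked after that step. A sketch that reads `kubectl get nodes --no-headers` on stdin and fails if any node's STATUS column is not exactly `Ready` (so `Ready,SchedulingDisabled` also counts as not ready); `all_nodes_ready` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Read `kubectl get nodes --no-headers` on stdin; fail if any node is not Ready
# or if no nodes were listed at all.
all_nodes_ready() {
  awk '
    { total++ }
    $2 != "Ready" { print "not ready: " $1 " (" $2 ")" > "/dev/stderr"; bad++ }
    END { exit (total == 0 || bad > 0) }
  '
}
```

Usage: `kubectl get nodes --no-headers | all_nodes_ready && echo "all nodes Ready"`.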

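3.5 Install the CNI plugin

# On master1

# (Alternative CNI, not used here) kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

# Download the calico manifest; this may time out depending on the network

# This URL answers with a redirect; -L makes curl follow it

curl -L https://docs.projectcalico.org/manifests/calico.yaml -O

# or download v3.25 directly

curl https://calico-v3-25.netlify.app/archive/v3.25/manifests/calico.yaml -O

# Before applying, edit calico.yaml:

# set CALICO_IPV4POOL_CIDR to the same CIDR as --pod-network-cidr in kubeadm init

# set the NIC name for IP_AUTODETECTION_METHOD

# remove the docker.io/ image prefix, so slow pulls do not make the deployment fail

# sed -i 's#docker.io/##g' calico.yaml

kubectl apply -f calico.yaml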
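The calico.yaml edits for the pool CIDR and the NIC can be scripted, to run after downloading the manifest and before `kubectl apply -f calico.yaml`. A sketch that assumes the stock manifest layout, namely the commented pair `# - name: CALICO_IPV4POOL_CIDR` / `#   value: "192.168.0.0/16"` and an `- name: IP` entry with `value: "autodetect"`; `patch_calico` is a hypothetical helper, `ens192` comes from the keepalived config, and running it twice adds the autodetection variable twice.

```shell
#!/usr/bin/env bash
# Sketch: patch calico.yaml in place before "kubectl apply".
#  - uncomment CALICO_IPV4POOL_CIDR and set it to the kubeadm pod CIDR
#  - add IP_AUTODETECTION_METHOD=interface=<iface> after the IP=autodetect entry
patch_calico() {
  local file="$1" cidr="$2" iface="$3" tmp
  tmp=$(mktemp) || return 1
  awk -v cidr="$cidr" -v iface="$iface" '
    /^[ \t]*# - name: CALICO_IPV4POOL_CIDR/ { sub(/# /, ""); print; fix = 1; next }
    fix && /^[ \t]*#[ \t]*value:/ {
      # Reuse the comment indentation plus two spaces for the value line.
      match($0, /^[ \t]*/)
      printf "%s  value: \"%s\"\n", substr($0, 1, RLENGTH), cidr
      fix = 0; next
    }
    { print }
    /^[ \t]*value: "autodetect"/ && iface != "" {
      match($0, /^[ \t]*/); ind = substr($0, 1, RLENGTH)
      printf "%s- name: IP_AUTODETECTION_METHOD\n", substr(ind, 3)
      printf "%svalue: \"interface=%s\"\n", ind, iface
    }
  ' "$file" > "$tmp" && cat "$tmp" > "$file" && rm -f "$tmp"
}
```

Usage: `patch_calico calico.yaml 192.168.0.0/16 ens192`, then review with `grep -A1 -E 'CALICO_IPV4POOL_CIDR|IP_AUTODETECTION_METHOD' calico.yaml` before applying.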

3.6 Check pod status

kubectl get pods -A
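With calico, coredns and the control-plane pods across five nodes, the `kubectl get pods -A` list gets long. A sketch that reads `kubectl get pods -A --no-headers` on stdin (columns NAMESPACE, NAME, READY, STATUS, ...) and prints only pods that are neither Running with all containers ready nor Completed; `unhealthy_pods` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Read `kubectl get pods -A --no-headers` on stdin; print namespace/name, READY
# and STATUS of every unhealthy pod, and fail if there is at least one.
unhealthy_pods() {
  awk '
    {
      split($3, r, "/")   # READY column, e.g. 1/1
      if (!(($4 == "Running" && r[1] == r[2]) || $4 == "Completed")) {
        print $1 "/" $2 " " $3 " " $4; bad++
      }
    }
    END { exit (bad > 0) }
  '
}
```

Usage: `kubectl get pods -A --no-headers | unhealthy_pods && echo "all pods healthy"`.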

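3.7 Configure IPVS

Purpose: make ClusterIPs (and Service names) answer ping from inside the cluster. In the default iptables mode a ClusterIP exists only as NAT rules for the Service ports and does not answer ICMP; in IPVS mode kube-proxy binds every ClusterIP to the kube-ipvs0 dummy interface, so it does.

# Load the ip_vs kernel modules (on kernels 4.19 and later, nf_conntrack_ipv4 has been merged into nf_conntrack)

ansible -i /opt/ansible/nodes all -m shell -a "sudo modprobe -- ip_vs && sudo modprobe -- ip_vs_sh && sudo modprobe -- ip_vs_rr && sudo modprobe -- ip_vs_wrr && sudo modprobe -- nf_conntrack_ipv4"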

# Verify that the ipvs modules are loaded

ansible -i /opt/ansible/nodes all -m shell -a "sudo lsmod |grep ip_vs"

# Install ipset and the ipvsadm tool

ansible -i /opt/ansible/nodes all -m shell -a "sudo yum install ipset ipvsadm -y"
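The modprobe calls above only last until the next reboot. A sketch that lists the modules in a modules-load.d file so systemd loads them at boot, in the same way `/etc/modules-load.d/k8s.conf` is used for `br_netfilter` in 2.5. Because `nf_conntrack_ipv4` was merged into `nf_conntrack` in Linux 4.19, the helper picks whichever name `modinfo` knows; `conntrack_module` and `write_ipvs_modules` are hypothetical helpers.

```shell
#!/usr/bin/env bash
# Pick the conntrack module name available on this kernel.
conntrack_module() {
  if modinfo nf_conntrack_ipv4 >/dev/null 2>&1; then
    echo nf_conntrack_ipv4   # e.g. the CentOS 7 3.10 kernel
  else
    echo nf_conntrack        # newer kernels
  fi
}

# Write the IPVS module list, one module per line, for systemd-modules-load.
write_ipvs_modules() {
  local path="${1:-/etc/modules-load.d/ipvs.conf}"
  printf '%s\n' ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh "$(conntrack_module)" > "$path"
}
```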

# Edit the kube-proxy ConfigMap and set mode to "ipvs"

kubectl edit configmap -n kube-system kube-proxy

# List the kube-proxy pods first

kubectl get pod -n kube-system | grep kube-proxy

# Delete them; the DaemonSet recreates them with the new configuration

kubectl get pod -n kube-system | grep kube-proxy |awk '{system("kubectl delete pod "$1" -n kube-system")}'

# List them again

kubectl get pod -n kube-system | grep kube-proxy

# Show the IPVS forwarding rules

ipvsadm -Ln
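The interactive `kubectl edit` step can also be done non-interactively. kubeadm leaves `mode: ""` in the kube-proxy configuration, which kube-proxy treats as iptables on Linux. A sketch of a stdin filter that rewrites that line, assuming it is the only `mode: ""` line in the ConfigMap; `set_ipvs_mode` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Rewrite an empty kube-proxy mode to ipvs in YAML read from stdin,
# keeping the line's indentation; any other mode value is left as-is.
set_ipvs_mode() {
  sed -E 's/^([[:space:]]*)mode: ""[[:space:]]*$/\1mode: "ipvs"/'
}
```

Usage: `kubectl get configmap kube-proxy -n kube-system -o yaml | set_ipvs_mode | kubectl apply -f -`, then restart kube-proxy with `kubectl -n kube-system rollout restart daemonset kube-proxy` (or `kubectl delete pod -n kube-system -l k8s-app=kube-proxy`) and check `ipvsadm -Ln` again.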

![image 4](https://img-blog.csdnimg.cn/direct/a2d0a8a0eef1412c81fd333c766b2df9.png)