1. RTP protocol header

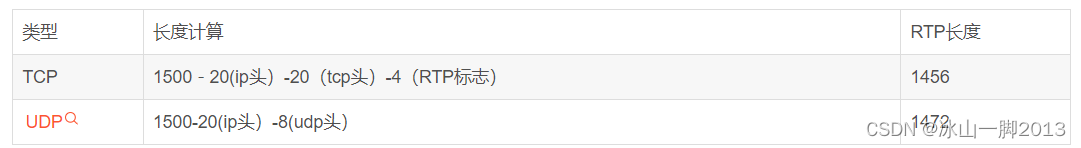
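
To make the header layout concrete, here is a minimal sketch that decodes the 12-byte fixed RTP header as defined in RFC 3550. The struct RtpHeader and the function rtp_parse_header are hypothetical names chosen for illustration; they are not part of GStreamer. The sketch ignores CSRC entries and header extensions.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical container for the fixed RTP header fields (RFC 3550). */
typedef struct {
  uint8_t version;       /* 2 bits, must be 2 */
  uint8_t padding;       /* 1 bit */
  uint8_t extension;     /* 1 bit */
  uint8_t csrc_count;    /* 4 bits */
  uint8_t marker;        /* 1 bit */
  uint8_t payload_type;  /* 7 bits */
  uint16_t seq;          /* sequence number, big-endian on the wire */
  uint32_t timestamp;    /* media timestamp, big-endian on the wire */
  uint32_t ssrc;         /* synchronization source identifier */
} RtpHeader;

/* Decodes the fixed header from buf.
 * Returns 0 on success, -1 if the buffer is shorter than 12 bytes
 * or the version field is not 2. */
int
rtp_parse_header (const uint8_t * buf, size_t len, RtpHeader * out)
{
  if (len < 12)
    return -1;

  out->version = buf[0] >> 6;
  out->padding = (buf[0] >> 5) & 0x01;
  out->extension = (buf[0] >> 4) & 0x01;
  out->csrc_count = buf[0] & 0x0f;
  out->marker = buf[1] >> 7;
  out->payload_type = buf[1] & 0x7f;
  out->seq = (uint16_t) ((buf[2] << 8) | buf[3]);
  out->timestamp = ((uint32_t) buf[4] << 24) | ((uint32_t) buf[5] << 16) |
      ((uint32_t) buf[6] << 8) | (uint32_t) buf[7];
  out->ssrc = ((uint32_t) buf[8] << 24) | ((uint32_t) buf[9] << 16) |
      ((uint32_t) buf[10] << 8) | (uint32_t) buf[11];

  return out->version == 2 ? 0 : -1;
}
```

The payload handed to a depayloader starts after these 12 bytes plus 4 bytes per CSRC entry and any header extension.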

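2. RTP can be carried over TCP or UDP

When carried over TCP, each RTP packet is preceded by a 4-byte framing header. In RTSP interleaved mode (RFC 2326, section 10.12) this header is a '$' (0x24) byte, a 1-byte channel identifier, and a 2-byte big-endian payload length.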
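
As an illustration, assuming the 4-byte prefix refers to RTSP interleaved framing (RFC 2326, section 10.12), the prefix can be decoded as sketched below. InterleavedHeader and interleaved_parse are hypothetical names used only for this example.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical view of the 4-byte RTSP interleaved frame header. */
typedef struct {
  uint8_t channel;   /* interleaved channel identifier */
  uint16_t length;   /* size of the RTP packet that follows */
} InterleavedHeader;

/* Decodes '$' | channel | 16-bit big-endian length.
 * Returns 0 on success, -1 if fewer than 4 bytes are available
 * or the magic '$' byte is missing. */
int
interleaved_parse (const uint8_t * buf, size_t len, InterleavedHeader * out)
{
  if (len < 4 || buf[0] != 0x24)
    return -1;

  out->channel = buf[1];
  out->length = (uint16_t) ((buf[2] << 8) | buf[3]);
  return 0;
}
```

A receiver would read 4 bytes, call the parser, then read exactly length more bytes to obtain one complete RTP packet from the TCP stream.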

3. rtph264depay registers gst_rtp_h264_depay_process as its packet-parsing function; the implementation follows RFC 3984 (since obsoleted by RFC 6184).

    static void
    gst_rtp_h264_depay_class_init (GstRtpH264DepayClass * klass)
    {
      GObjectClass *gobject_class;
      GstElementClass *gstelement_class;
      GstRTPBaseDepayloadClass *gstrtpbasedepayload_class;

      gobject_class = (GObjectClass *) klass;
      gstelement_class = (GstElementClass *) klass;
      gstrtpbasedepayload_class = (GstRTPBaseDepayloadClass *) klass;

      gobject_class->finalize = gst_rtp_h264_depay_finalize;

      gobject_class->set_property = gst_rtp_h264_depay_set_property;
      gobject_class->get_property = gst_rtp_h264_depay_get_property;

      /**
       * GstRtpH264Depay:wait-for-keyframe:
       *
       * Wait for the next keyframe after packet loss,
       * meaningful only when outputting access units
       *
       * Since: 1.20
       */
      g_object_class_install_property (gobject_class, PROP_WAIT_FOR_KEYFRAME,
          g_param_spec_boolean ("wait-for-keyframe", "Wait for Keyframe",
              "Wait for the next keyframe after packet loss, meaningful only when "
              "outputting access units",
              DEFAULT_WAIT_FOR_KEYFRAME,
              G_PARAM_READWRITE | G_PARAM_STATIC_STRINGS));

      /**
       * GstRtpH264Depay:request-keyframe:
       *
       * Request new keyframe when packet loss is detected
       *
       * Since: 1.20
       */
      g_object_class_install_property (gobject_class, PROP_REQUEST_KEYFRAME,
          g_param_spec_boolean ("request-keyframe", "Request Keyframe",
              "Request new keyframe when packet loss is detected",
              DEFAULT_REQUEST_KEYFRAME,
              G_PARAM_READWRITE | G_PARAM_STATIC_STRINGS));

      gst_element_class_add_static_pad_template (gstelement_class,
          &gst_rtp_h264_depay_src_template);
      gst_element_class_add_static_pad_template (gstelement_class,
          &gst_rtp_h264_depay_sink_template);

      gst_element_class_set_static_metadata (gstelement_class,
          "RTP H264 depayloader", "Codec/Depayloader/Network/RTP",
          "Extracts H264 video from RTP packets (RFC 3984)",
          "Wim Taymans <wim.taymans@gmail.com>");
      gstelement_class->change_state = gst_rtp_h264_depay_change_state;

      /* process_rtp_packet is the method the base class calls for each RTP packet */
      gstrtpbasedepayload_class->process_rtp_packet = gst_rtp_h264_depay_process;
      gstrtpbasedepayload_class->set_caps = gst_rtp_h264_depay_setcaps;
      gstrtpbasedepayload_class->handle_event = gst_rtp_h264_depay_handle_event;
    }

4. Implementation of gst_rtp_h264_depay_process
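
As orientation for this function: the first payload byte carries the F, NRI, and Type fields, and the Type value decides which branch of the switch runs. The following minimal sketch mirrors that decoding and the grouping of type values used in the switch. The enum H264PacketKind and the helper names are hypothetical and exist only in this example.

```c
#include <stdint.h>

/* Hypothetical classification mirroring the switch cases. */
typedef enum {
  H264_PKT_UNDEFINED,   /* types 0, 30, 31 */
  H264_PKT_SINGLE_NAL,  /* types 1-23 */
  H264_PKT_STAP_A,      /* type 24 */
  H264_PKT_STAP_B,      /* type 25 */
  H264_PKT_MTAP,        /* types 26 (MTAP16), 27 (MTAP24) */
  H264_PKT_FU           /* types 28 (FU-A), 29 (FU-B) */
} H264PacketKind;

/* NRI: bits 1-2 of the first payload byte. */
uint8_t
nal_ref_idc (uint8_t first_byte)
{
  return (uint8_t) ((first_byte & 0x60) >> 5);
}

/* Type: the low 5 bits of the first payload byte. */
uint8_t
nal_unit_type (uint8_t first_byte)
{
  return (uint8_t) (first_byte & 0x1f);
}

/* Maps the type field to the packet kind handled by each switch case. */
H264PacketKind
h264_classify (uint8_t first_byte)
{
  uint8_t type = nal_unit_type (first_byte);

  if (type == 0 || type >= 30)
    return H264_PKT_UNDEFINED;
  if (type <= 23)
    return H264_PKT_SINGLE_NAL;
  if (type == 24)
    return H264_PKT_STAP_A;
  if (type == 25)
    return H264_PKT_STAP_B;
  if (type <= 27)
    return H264_PKT_MTAP;
  return H264_PKT_FU;
}
```

For example, a first byte of 0x65 (NRI 3, type 5) is a single NAL unit packet, while 0x7C (NRI 3, type 28) is an FU-A fragment.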

    static GstBuffer *
    gst_rtp_h264_depay_process (GstRTPBaseDepayload * depayload, GstRTPBuffer * rtp)
    {
      GstRtpH264Depay *rtph264depay;
      GstBuffer *outbuf = NULL;
      guint8 nal_unit_type;

      rtph264depay = GST_RTP_H264_DEPAY (depayload);

      if (!rtph264depay->merge)
        rtph264depay->waiting_for_keyframe = FALSE;

      /* on a discontinuity (e.g. packet loss), flush pending data and reset
       * the fragmentation state */
      if (GST_BUFFER_IS_DISCONT (rtp->buffer)) {
        gst_adapter_clear (rtph264depay->adapter);
        rtph264depay->wait_start = TRUE;
        rtph264depay->current_fu_type = 0;
        rtph264depay->last_fu_seqnum = 0;

        if (rtph264depay->merge && rtph264depay->wait_for_keyframe) {
          rtph264depay->waiting_for_keyframe = TRUE;
        }

        if (rtph264depay->request_keyframe)
          gst_pad_push_event (GST_RTP_BASE_DEPAYLOAD_SINKPAD (depayload),
              gst_video_event_new_upstream_force_key_unit (GST_CLOCK_TIME_NONE,
                  TRUE, 0));
      }

      {
        gint payload_len;
        guint8 *payload;
        guint header_len;
        guint8 nal_ref_idc;
        GstMapInfo map;
        guint outsize, nalu_size;
        GstClockTime timestamp;
        gboolean marker;

        timestamp = GST_BUFFER_PTS (rtp->buffer);

        payload_len = gst_rtp_buffer_get_payload_len (rtp);
        payload = gst_rtp_buffer_get_payload (rtp);
        marker = gst_rtp_buffer_get_marker (rtp);

        GST_DEBUG_OBJECT (rtph264depay, "receiving %d bytes", payload_len);

        if (payload_len == 0)
          goto empty_packet;

        /* +---------------+
         * |0|1|2|3|4|5|6|7|
         * +-+-+-+-+-+-+-+-+
         * |F|NRI|  Type   |
         * +---------------+
         *
         * F must be 0.
         */
        nal_ref_idc = (payload[0] & 0x60) >> 5;
        nal_unit_type = payload[0] & 0x1f;

        /* at least one byte header with type */
        header_len = 1;

        GST_DEBUG_OBJECT (rtph264depay, "NRI %d, Type %d %s", nal_ref_idc,
            nal_unit_type, marker ? "marker" : "");

        /* If FU unit was being processed, but the current nal is of a different
         * type.  Assume that the remote payloader is buggy (didn't set the end bit
         * when the FU ended) and send out what we gathered thusfar */
        if (G_UNLIKELY (rtph264depay->current_fu_type != 0 &&
                nal_unit_type != rtph264depay->current_fu_type))
          gst_rtp_h264_finish_fragmentation_unit (rtph264depay);

        switch (nal_unit_type) {
          case 0:
          case 30:
          case 31:
            /* undefined */
            goto undefined_type;
          case 25:
            /* STAP-B    Single-time aggregation packet     5.7.1 */
            /* 2 byte extra header for DON */
            header_len += 2;
            /* fallthrough */
          case 24:
          {
            /* strip headers */
            payload += header_len;
            payload_len -= header_len;

            rtph264depay->wait_start = FALSE;

            /* STAP-A    Single-time aggregation packet     5.7.1 */
            while (payload_len > 2) {
              gboolean last = FALSE;

              /*                      1
               *  0 1 2 3 4 5 6 7 8 9 0 1 2 3 4 5
               * +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+
               * |         NALU Size             |
               * +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+
               */
              nalu_size = (payload[0] << 8) | payload[1];

              /* don't include nalu_size */
              if (nalu_size > (payload_len - 2))
                nalu_size = payload_len - 2;

              outsize = nalu_size + sizeof (sync_bytes);
              outbuf = gst_buffer_new_and_alloc (outsize);

              gst_buffer_map (outbuf, &map, GST_MAP_WRITE);
              if (rtph264depay->byte_stream) {
                memcpy (map.data, sync_bytes, sizeof (sync_bytes));
              } else {
                map.data[0] = map.data[1] = 0;
                map.data[2] = payload[0];
                map.data[3] = payload[1];
              }

              /* strip NALU size */
              payload += 2;
              payload_len -= 2;

              memcpy (map.data + sizeof (sync_bytes), payload, nalu_size);
              gst_buffer_unmap (outbuf, &map);

              gst_rtp_copy_video_meta (rtph264depay, outbuf, rtp->buffer);

              if (payload_len - nalu_size <= 2)
                last = TRUE;

              gst_rtp_h264_depay_handle_nal (rtph264depay, outbuf, timestamp,
                  marker && last);

              payload += nalu_size;
              payload_len -= nalu_size;
            }
            break;
          }
          case 26:
            /* MTAP16    Multi-time aggregation packet      5.7.2 */
            // header_len = 5;
            /* fallthrough, not implemented */
          case 27:
            /* MTAP24    Multi-time aggregation packet      5.7.2 */
            // header_len = 6;
            goto not_implemented;
            break;
          case 28:
          case 29:
          {
            /* FU-A      Fragmentation unit                 5.8 */
            /* FU-B      Fragmentation unit                 5.8 */
            gboolean S, E;

            /* +---------------+
             * |0|1|2|3|4|5|6|7|
             * +-+-+-+-+-+-+-+-+
             * |S|E|R|  Type   |
             * +---------------+
             *
             * R is reserved and always 0
             */
            S = (payload[1] & 0x80) == 0x80;
            E = (payload[1] & 0x40) == 0x40;

            GST_DEBUG_OBJECT (rtph264depay, "S %d, E %d", S, E);

            if (rtph264depay->wait_start && !S)
              goto waiting_start;

            if (S) {
              /* NAL unit starts here */
              guint8 nal_header;

              /* If a new FU unit started, while still processing an older one.
               * Assume that the remote payloader is buggy (doesn't set the end
               * bit) and send out what we've gathered thusfar */
              if (G_UNLIKELY (rtph264depay->current_fu_type != 0))
                gst_rtp_h264_finish_fragmentation_unit (rtph264depay);

              rtph264depay->current_fu_type = nal_unit_type;
              rtph264depay->fu_timestamp = timestamp;
              rtph264depay->last_fu_seqnum = gst_rtp_buffer_get_seq (rtp);

              rtph264depay->wait_start = FALSE;

              /* reconstruct NAL header */
              nal_header = (payload[0] & 0xe0) | (payload[1] & 0x1f);

              /* strip type header, keep FU header, we'll reuse it to reconstruct
               * the NAL header. */
              payload += 1;
              payload_len -= 1;

              nalu_size = payload_len;
              outsize = nalu_size + sizeof (sync_bytes);
              outbuf = gst_buffer_new_and_alloc (outsize);

              gst_buffer_map (outbuf, &map, GST_MAP_WRITE);
              memcpy (map.data + sizeof (sync_bytes), payload, nalu_size);
              map.data[sizeof (sync_bytes)] = nal_header;
              gst_buffer_unmap (outbuf, &map);

              gst_rtp_copy_video_meta (rtph264depay, outbuf, rtp->buffer);

              GST_DEBUG_OBJECT (rtph264depay, "queueing %d bytes", outsize);

              /* and assemble in the adapter */
              gst_adapter_push (rtph264depay->adapter, outbuf);
            } else {
              if (rtph264depay->current_fu_type == 0) {
                /* previous FU packet missing start bit? */
                GST_WARNING_OBJECT (rtph264depay, "missing FU start bit on an "
                    "earlier packet. Dropping.");
                gst_adapter_clear (rtph264depay->adapter);
                return NULL;
              }
              if (gst_rtp_buffer_compare_seqnum (rtph264depay->last_fu_seqnum,
                      gst_rtp_buffer_get_seq (rtp)) != 1) {
                /* jump in sequence numbers within an FU is cause for discarding */
                GST_WARNING_OBJECT (rtph264depay, "Jump in sequence numbers from "
                    "%u to %u within Fragmentation Unit. Data was lost, dropping "
                    "stored.", rtph264depay->last_fu_seqnum,
                    gst_rtp_buffer_get_seq (rtp));
                gst_adapter_clear (rtph264depay->adapter);
                return NULL;
              }
              rtph264depay->last_fu_seqnum = gst_rtp_buffer_get_seq (rtp);

              /* strip off FU indicator and FU header bytes */
              payload += 2;
              payload_len -= 2;

              outsize = payload_len;
              outbuf = gst_buffer_new_and_alloc (outsize);
              gst_buffer_fill (outbuf, 0, payload, outsize);

              gst_rtp_copy_video_meta (rtph264depay, outbuf, rtp->buffer);

              GST_DEBUG_OBJECT (rtph264depay, "queueing %d bytes", outsize);

              /* and assemble in the adapter */
              gst_adapter_push (rtph264depay->adapter, outbuf);
            }

            outbuf = NULL;
            rtph264depay->fu_marker = marker;

            /* if NAL unit ends, flush the adapter */
            if (E)
              gst_rtp_h264_finish_fragmentation_unit (rtph264depay);
            break;
          }
          default:
          {
            rtph264depay->wait_start = FALSE;

            /* 1-23   NAL unit  Single NAL unit packet per H.264   5.6 */
            /* the entire payload is the output buffer */
            nalu_size = payload_len;
            outsize = nalu_size + sizeof (sync_bytes);
            outbuf = gst_buffer_new_and_alloc (outsize);

            gst_buffer_map (outbuf, &map, GST_MAP_WRITE);
            if (rtph264depay->byte_stream) {
              memcpy (map.data, sync_bytes, sizeof (sync_bytes));
            } else {
              map.data[0] = map.data[1] = 0;
              map.data[2] = nalu_size >> 8;
              map.data[3] = nalu_size & 0xff;
            }
            memcpy (map.data + sizeof (sync_bytes), payload, nalu_size);
            gst_buffer_unmap (outbuf, &map);

            gst_rtp_copy_video_meta (rtph264depay, outbuf, rtp->buffer);

            gst_rtp_h264_depay_handle_nal (rtph264depay, outbuf, timestamp, marker);
            break;
          }
        }
      }

      return NULL;

      /* ERRORS */
    empty_packet:
      {
        GST_DEBUG_OBJECT (rtph264depay, "empty packet");
        return NULL;
      }
    undefined_type:
      {
        GST_ELEMENT_WARNING (rtph264depay, STREAM, DECODE,
            (NULL), ("Undefined packet type"));
        return NULL;
      }
    waiting_start:
      {
        GST_DEBUG_OBJECT (rtph264depay, "waiting for start");
        return NULL;
      }
    not_implemented:
      {
        GST_ELEMENT_ERROR (rtph264depay, STREAM, FORMAT,
            (NULL), ("NAL unit type %d not supported yet", nal_unit_type));
        return NULL;
      }
    }
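
The FU-A branch is the least obvious part of the function, so here is a simplified, GStreamer-free sketch of the same idea: the first fragment rebuilds the NAL header from the FU indicator and FU header, later fragments are appended, and the end bit completes the unit. FuAssembler, fu_reset, and fu_push are hypothetical names invented for this example. Unlike the real element, the sketch does not check RTP sequence numbers, does not prepend sync bytes, discards (rather than flushes) an unfinished unit when a new start fragment arrives, and uses a fixed-size buffer instead of a GstAdapter.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define FU_MAX_NAL 4096         /* arbitrary capacity chosen for the sketch */

/* Hypothetical reassembly state for one fragmented NAL unit. */
typedef struct {
  uint8_t nal[FU_MAX_NAL];      /* reconstructed NAL unit */
  size_t len;                   /* bytes gathered so far */
  int active;                   /* non-zero while a unit is in progress */
} FuAssembler;

void
fu_reset (FuAssembler * a)
{
  a->len = 0;
  a->active = 0;
}

/* Feeds one FU-A payload (FU indicator, FU header, fragment data).
 * Returns 1 when a->nal holds a complete NAL unit of a->len bytes,
 * 0 when more fragments are needed, -1 when the fragment was dropped. */
int
fu_push (FuAssembler * a, const uint8_t * payload, size_t size)
{
  int start, end;

  /* need both header bytes, and the indicator must announce FU-A (28) */
  if (size < 2 || (payload[0] & 0x1f) != 28)
    return -1;

  start = (payload[1] & 0x80) != 0;     /* S bit */
  end = (payload[1] & 0x40) != 0;       /* E bit */

  if (start) {
    /* F and NRI come from the FU indicator, the type from the FU header */
    a->nal[0] = (uint8_t) ((payload[0] & 0xe0) | (payload[1] & 0x1f));
    a->len = 1;
    a->active = 1;
  } else if (!a->active) {
    return -1;                  /* continuation without a start fragment */
  }

  if (size - 2 > sizeof (a->nal) - a->len) {
    fu_reset (a);               /* would overflow the buffer: drop the unit */
    return -1;
  }

  /* append the fragment data that follows the two header bytes */
  memcpy (a->nal + a->len, payload + 2, size - 2);
  a->len += size - 2;

  if (end) {
    a->active = 0;
    return 1;
  }
  return 0;
}
```

Feeding the three fragments 7C 85 AA BB, 7C 05 CC, and 7C 45 DD in order yields the NAL unit 65 AA BB CC DD: the header 0x65 is 0x7C & 0xE0 combined with 0x85 & 0x1F.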