一、概述

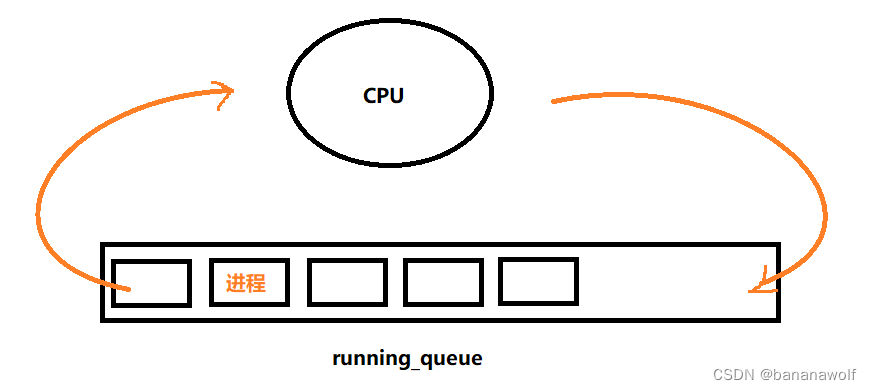

CFS调度器目标是实现各进程完全公平调度。就绪队列维护一颗红黑树,并采用虚拟运行时间来实现时间片的分配。动态计算每个进程的虚拟运行时间,从而实现公平性和高效性。

二、权重与vruntime关系

2.1 权重计算

struct task_struct {

/*

进程执行时,它会根据具体情况改变状态,Linux 中的进程主要有如下状态

TASK_RUNNING 可运行

TASK_INTERRUPTIBLE 可中断的等待状态

TASK_UNINTERRUPTIBLE 不可中断的等待状态

TASK_ZOMBIE 僵死

TASK_STOPPED 暂停

*/

volatile long state; /* -1 unrunnable, 0 runnable, >0 stopped */

int prio; /*动态优先级,根据静态优先级及其他条件计算而来*/

int static_prio; /*普通进程静态优先级,取值范围是100(优先级最高)~139(优先级最低),分别对应nice value的-20 ~ 19。*/

int normal_prio; /*调度器综合考虑各种因素,例如调度策略,nice value、scheduling priority等,把这些factor全部考虑进来,归一化成一个数轴上的number,以此来表示其优先级,这就是normalized priority。对于一个线程,其normalized priority的number越小,其优先级越大。*/

unsigned int rt_priority; /*实时进程优先级*/

unsigned int policy; /*调度策略*/

const struct sched_class *sched_class; /*调度类*/

struct sched_entity se; /*普通进程调度实体*/

struct sched_rt_entity rt; /*实时进程调度实体*/

struct sched_dl_entity dl; /*deadline进程调度实体*/

};

struct load_weight {

unsigned long weight;

u32 inv_weight;

};

struct sched_entity {

/* For load-balancing: */

struct load_weight load;

struct rb_node run_node;

struct list_head group_node;

unsigned int on_rq;

u64 exec_start;

u64 sum_exec_runtime;

u64 vruntime;

u64 prev_sum_exec_runtime;

u64 nr_migrations;

struct sched_statistics statistics;

......

}

// 普通进程nice值与权重转换表,如nice值为0,对应权重为1024

const int sched_prio_to_weight[40] = {

7527 /* -20 */ 88761, 71755, 56483, 46273, 36291,

7528 /* -15 */ 29154, 23254, 18705, 14949, 11916,

7529 /* -10 */ 9548, 7620, 6100, 4904, 3906,

7530 /* -5 */ 3121, 2501, 1991, 1586, 1277,

7531 /* 0 */ 1024, 820, 655, 526, 423,

7532 /* 5 */ 335, 272, 215, 172, 137,

7533 /* 10 */ 110, 87, 70, 56, 45,

7534 /* 15 */ 36, 29, 23, 18, 15,

7535

};进程静态优先级:0~139,其中0~99为实时进程优先级,100~139为普通进程的优先级(对应的nice值为-20~19)

普通进程的权重:参考普通进程nice值与权重转换表,如nice值为0,对应权重为1024。数组的值可以看作是公式:weight = 1024 / 1.25^nice计算得到。公式中的1.25取值依据是:进程每降低一个nice值,将多获得10% cpu的时间。

分配给进程的时间: 总的cpu时间 * 进程的权重/就绪队列(runqueue)所有进程权重之和。

虚拟运行时间:vruntime = delta_exec * nice_0_weight / weight,由于vruntime计算会涉及浮点数计算,为了提高计算效率,采用乘法与移位计算方式,vruntime = (delta_exec * nice_0_weight * multiplier / weight)>> 32 (delta_exec:实际运行时间 multiplier:2的32次幂)。

2.2 vruntime核心代码实现

static u64 __calc_delta(u64 delta_exec, unsigned long weight, struct load_weight *lw)

{

u64 fact = scale_load_down(weight);

u32 fact_hi = (u32)(fact >> 32);

int shift = WMULT_SHIFT;

int fs;

__update_inv_weight(lw);

if (unlikely(fact_hi)) {

fs = fls(fact_hi);

shift -= fs;

fact >>= fs;

}

fact = mul_u32_u32(fact, lw->inv_weight);

fact_hi = (u32)(fact >> 32);

if (fact_hi) {

fs = fls(fact_hi);

shift -= fs;

fact >>= fs;

}

return mul_u64_u32_shr(delta_exec, fact, shift);

}2.3 实际运行时间

static void update_curr(struct cfs_rq *cfs_rq)

{

struct sched_entity *curr = cfs_rq->curr;

u64 now = rq_clock_task(rq_of(cfs_rq));

u64 delta_exec;

if (unlikely(!curr))

return;

// 上一次在cpu上的执行时间

delta_exec = now - curr->exec_start;

if (unlikely((s64)delta_exec <= 0))

return;

curr->exec_start = now;

if (schedstat_enabled()) {

struct sched_statistics *stats;

stats = __schedstats_from_se(curr);

__schedstat_set(stats->exec_max,

max(delta_exec, stats->exec_max));

}

// 总的运行时间

curr->sum_exec_runtime += delta_exec;

schedstat_add(cfs_rq->exec_clock, delta_exec);

// 计算虚拟运行时间

curr->vruntime += calc_delta_fair(delta_exec, curr);

update_deadline(cfs_rq, curr);

// 更新cfs就绪队列中最小的vruntime

update_min_vruntime(cfs_rq);

if (entity_is_task(curr)) {

struct task_struct *curtask = task_of(curr);

trace_sched_stat_runtime(curtask, delta_exec, curr->vruntime);

cgroup_account_cputime(curtask, delta_exec);

account_group_exec_runtime(curtask, delta_exec);

}

account_cfs_rq_runtime(cfs_rq, delta_exec);

}三、进程创建

3.1 计算新进程的vruntime

新创建的进程会被加入调度队列,导致cfs队列权重发生变化,需要一个惩罚值:

static u64 sched_slice(struct cfs_rq *cfs_rq, struct sched_entity *se)

{

unsigned int nr_running = cfs_rq->nr_running;

u64 slice;

if (sched_feat(ALT_PERIOD))

nr_running = rq_of(cfs_rq)->cfs.h_nr_running;

slice = __sched_period(nr_running + !se->on_rq);

for_each_sched_entity(se) {

struct load_weight *load;

struct load_weight lw;

cfs_rq = cfs_rq_of(se);

load = &cfs_rq->load;

if (unlikely(!se->on_rq)) {

lw = cfs_rq->load;

update_load_add(&lw, se->load.weight);

load = &lw;

}

slice = __calc_delta(slice, se->load.weight, load);

}

if (sched_feat(BASE_SLICE))

slice = max(slice, (u64)sysctl_sched_min_granularity);

return slice;

}新创建的进程vruntime = max(调度实体的实际虚拟时间, min_vruntime)。

3.2 加入调度器

执行wake_up_new_task函数加入到调度器中:

void wake_up_new_task(struct task_struct *p)

{

struct rq_flags rf;

struct rq *rq;

trace_android_rvh_wake_up_new_task(p);

raw_spin_lock_irqsave(&p->pi_lock, rf.flags);

p->state = TASK_RUNNING;

#ifdef CONFIG_SMP

/*

* Fork balancing, do it here and not earlier because:

* - cpus_ptr can change in the fork path

* - any previously selected CPU might disappear through hotplug

*

* Use __set_task_cpu() to avoid calling sched_class::migrate_task_rq,

* as we're not fully set-up yet.

*/

p->recent_used_cpu = task_cpu(p);

rseq_migrate(p);

__set_task_cpu(p, select_task_rq(p, task_cpu(p), SD_BALANCE_FORK, 0));

#endif

rq = __task_rq_lock(p, &rf);

update_rq_clock(rq);

post_init_entity_util_avg(p);

trace_android_rvh_new_task_stats(p);

activate_task(rq, p, ENQUEUE_NOCLOCK);

trace_sched_wakeup_new(p);

check_preempt_curr(rq, p, WF_FORK);

#ifdef CONFIG_SMP

if (p->sched_class->task_woken) {

/*

* Nothing relies on rq->lock after this, so its fine to

* drop it.

*/

rq_unpin_lock(rq, &rf);

p->sched_class->task_woken(rq, p);

rq_repin_lock(rq, &rf);

}

#endif

task_rq_unlock(rq, p, &rf);

}3.3 加入到红黑树

加入到就绪队列的红黑树中:

static void __enqueue_entity(struct cfs_rq *cfs_rq, struct sched_entity *se)

{

struct rb_node **link = &cfs_rq->tasks_timeline.rb_root.rb_node;

struct rb_node *parent = NULL;

struct sched_entity *entry;

bool leftmost = true;

trace_android_rvh_enqueue_entity(cfs_rq, se);

/*

* Find the right place in the rbtree:

*/

while (*link) {

parent = *link;

entry = rb_entry(parent, struct sched_entity, run_node);

/*

* We dont care about collisions. Nodes with

* the same key stay together.

*/

if (entity_before(se, entry)) {

link = &parent->rb_left;

} else {

link = &parent->rb_right;

leftmost = false;

}

}

b_link_node(&se->run_node, parent, link);

rb_insert_color_cached(&se->run_node,

&cfs_rq->tasks_timeline, leftmost);

}3.4 负载计算

runnable_sum = running time + 等待时间;

runnable_period = 在系统中总时间;

runnable_avg_sum:可运行状态总的衰减时间;

runnable_avg_period = 在系统中总的衰减时间;

load_avg_contrib:进程平均负载贡献度;

load_avg_contrib = runnable_avg_sum * weight / runnable_avg_period;

runnable_load_avg:就绪队列上所有调度实体的load_avg_contrib总和;

一个调度实体负载总和:L = L0 + L1 * y1 + L2 * y2 + ...... + L32 * y32 + ......,yn = y*y*...*y(共n个);

static const u32 pelt32_runnable_avg_yN_inv[] __maybe_unused = {

0xffffffff, 0xfa83b2da, 0xf5257d14, 0xefe4b99a, 0xeac0c6e6, 0xe5b906e6,

0xe0ccdeeb, 0xdbfbb796, 0xd744fcc9, 0xd2a81d91, 0xce248c14, 0xc9b9bd85,

0xc5672a10, 0xc12c4cc9, 0xbd08a39e, 0xb8fbaf46, 0xb504f333, 0xb123f581,

0xad583ee9, 0xa9a15ab4, 0xa5fed6a9, 0xa2704302, 0x9ef5325f, 0x9b8d39b9,

0x9837f050, 0x94f4efa8, 0x91c3d373, 0x8ea4398a, 0x8b95c1e3, 0x88980e80,

0x85aac367, 0x82cd8698,

};计算第n个周期的衰减值:

static u64 decay_load(u64 val, u64 n)

{

unsigned int local_n;

if (unlikely(n > LOAD_AVG_PERIOD * 63))

return 0;

/* after bounds checking we can collapse to 32-bit */

local_n = n;

/*

* As y^PERIOD = 1/2, we can combine

* y^n = 1/2^(n/PERIOD) * y^(n%PERIOD)

* With a look-up table which covers y^n (n<PERIOD)

*

* To achieve constant time decay_load.

*/

if (unlikely(local_n >= LOAD_AVG_PERIOD)) {

val >>= local_n / LOAD_AVG_PERIOD;

local_n %= LOAD_AVG_PERIOD;

}

val = mul_u64_u32_shr(val, pelt_runnable_avg_yN_inv[local_n], 32);

return val;

}四、进程调度

4.1 选择被调度的task

struct task_struct *

pick_next_task_fair(struct rq *rq, struct task_struct *prev, struct rq_flags *rf)

{

struct cfs_rq *cfs_rq = &rq->cfs;

struct sched_entity *se;

struct task_struct *p;

int new_tasks;

again:

if (!sched_fair_runnable(rq))

goto idle;

#ifdef CONFIG_FAIR_GROUP_SCHED

if (!prev || prev->sched_class != &fair_sched_class)

goto simple;

/*

* Because of the set_next_buddy() in dequeue_task_fair() it is rather

* likely that a next task is from the same cgroup as the current.

*

* Therefore attempt to avoid putting and setting the entire cgroup

* hierarchy, only change the part that actually changes.

*/

do {

struct sched_entity *curr = cfs_rq->curr;

/*

* Since we got here without doing put_prev_entity() we also

* have to consider cfs_rq->curr. If it is still a runnable

* entity, update_curr() will update its vruntime, otherwise

* forget we've ever seen it.

*/

if (curr) {

if (curr->on_rq)

update_curr(cfs_rq);

else

curr = NULL;

/*

* This call to check_cfs_rq_runtime() will do the

* throttle and dequeue its entity in the parent(s).

* Therefore the nr_running test will indeed

* be correct.

*/

if (unlikely(check_cfs_rq_runtime(cfs_rq))) {

cfs_rq = &rq->cfs;

if (!cfs_rq->nr_running)

goto idle;

goto simple;

}

}

se = pick_next_entity(cfs_rq);

cfs_rq = group_cfs_rq(se);

} while (cfs_rq);

p = task_of(se);

/*

* Since we haven't yet done put_prev_entity and if the selected task

* is a different task than we started out with, try and touch the

* least amount of cfs_rqs.

*/

if (prev != p) {

struct sched_entity *pse = &prev->se;

while (!(cfs_rq = is_same_group(se, pse))) {

int se_depth = se->depth;

int pse_depth = pse->depth;

if (se_depth <= pse_depth) {

put_prev_entity(cfs_rq_of(pse), pse);

pse = parent_entity(pse);

}

if (se_depth >= pse_depth) {

set_next_entity(cfs_rq_of(se), se);

se = parent_entity(se);

}

}

put_prev_entity(cfs_rq, pse);

set_next_entity(cfs_rq, se);

}

goto done;

simple:

#endif

if (prev)

put_prev_task(rq, prev);

do {

se = pick_next_entity(cfs_rq);

set_next_entity(cfs_rq, se);

cfs_rq = group_cfs_rq(se);

} while (cfs_rq);

p = task_of(se);

done: __maybe_unused;

#ifdef CONFIG_SMP

/*

* Move the next running task to the front of

* the list, so our cfs_tasks list becomes MRU

* one.

*/

list_move(&p->se.group_node, &rq->cfs_tasks);

#endif

if (hrtick_enabled_fair(rq))

hrtick_start_fair(rq, p);

update_misfit_status(p, rq);

sched_fair_update_stop_tick(rq, p);

return p;

idle:

if (!rf)

return NULL;

new_tasks = newidle_balance(rq, rf);

/*

* Because newidle_balance() releases (and re-acquires) rq->lock, it is

* possible for any higher priority task to appear. In that case we

* must re-start the pick_next_entity() loop.

*/

if (new_tasks < 0)

return RETRY_TASK;

if (new_tasks > 0)

goto again;

/*

* rq is about to be idle, check if we need to update the

* lost_idle_time of clock_pelt

*/

update_idle_rq_clock_pelt(rq);

return NULL;

}4.2 进程上下文切换

prev:将要被换出的进程;

next:将要被执行的进程;

static __always_inline struct rq *

context_switch(struct rq *rq, struct task_struct *prev,

struct task_struct *next, struct rq_flags *rf)

{

prepare_task_switch(rq, prev, next);

/*

* For paravirt, this is coupled with an exit in switch_to to

* combine the page table reload and the switch backend into

* one hypercall.

*/

arch_start_context_switch(prev);

/*

* kernel -> kernel lazy + transfer active

* user -> kernel lazy + mmgrab_lazy_tlb() active

*

* kernel -> user switch + mmdrop_lazy_tlb() active

* user -> user switch

*

* switch_mm_cid() needs to be updated if the barriers provided

* by context_switch() are modified.

*/

if (!next->mm) { // to kernel

enter_lazy_tlb(prev->active_mm, next);

next->active_mm = prev->active_mm;

if (prev->mm) // from user

mmgrab_lazy_tlb(prev->active_mm);

else

prev->active_mm = NULL;

} else {

// 将新进程的页表基地址设置到页目录表基地址的寄存器 // to user

membarrier_switch_mm(rq, prev->active_mm, next->mm);

/*

* sys_membarrier() requires an smp_mb() between setting

* rq->curr / membarrier_switch_mm() and returning to userspace.

*

* The below provides this either through switch_mm(), or in

* case 'prev->active_mm == next->mm' through

* finish_task_switch()'s mmdrop().

*/

switch_mm_irqs_off(prev->active_mm, next->mm, next);

lru_gen_use_mm(next->mm);

if (!prev->mm) { // from kernel

/* will mmdrop_lazy_tlb() in finish_task_switch(). */

rq->prev_mm = prev->active_mm;

prev->active_mm = NULL;

}

}

/* switch_mm_cid() requires the memory barriers above. */

switch_mm_cid(rq, prev, next);

prepare_lock_switch(rq, next, rf);

/* Here we just switch the register state and the stack. */

// 新旧进程的堆栈切换

switch_to(prev, next, prev);

barrier();

return finish_task_switch(prev);

}设置页表基地址到寄存器,并设置context ID:

// kernel/arch/arm/mm/proc-v7-2level.S

ENTRY(cpu_v7_switch_mm)

#ifdef CONFIG_MMU

mmid r1, r1 @ get mm->context.id

ALT_SMP(orr r0, r0, #TTB_FLAGS_SMP)

ALT_UP(orr r0, r0, #TTB_FLAGS_UP)

#ifdef CONFIG_PID_IN_CONTEXTIDR

mrc p15, 0, r2, c13, c0, 1 @ read current context ID

lsr r2, r2, #8 @ extract the PID

bfi r1, r2, #8, #24 @ insert into new context ID

#endif

#ifdef CONFIG_ARM_ERRATA_754322

dsb

#endif

mcr p15, 0, r1, c13, c0, 1 @ set context ID

isb

mcr p15, 0, r0, c2, c0, 0 @ set TTB 0

isb

#endif

bx lr

ENDPROC(cpu_v7_switch_mm)切换新旧进程的栈空间:

r0:旧进程的task_struct

r1:旧进程的thread_info结构体

r2:新进程的thread_info结构体

将旧进程的寄存器上下文信息保存到thread_info->cpu_context,将新进程的thread_info->cpu_context设置到cpu的寄存器中,实现进程的堆栈切换。

// kernel/arch/arm/kernel/entry-armv.S

ENTRY(__switch_to)

UNWIND(.fnstart )

UNWIND(.cantunwind )

add ip, r1, #TI_CPU_SAVE

ARM( stmia ip!, {r4 - sl, fp, sp, lr} ) @ Store most regs on stack

THUMB( stmia ip!, {r4 - sl, fp} ) @ Store most regs on stack

THUMB( str sp, [ip], #4 )

THUMB( str lr, [ip], #4 )

ldr r4, [r2, #TI_TP_VALUE]

ldr r5, [r2, #TI_TP_VALUE + 4]

#ifdef CONFIG_CPU_USE_DOMAINS

mrc p15, 0, r6, c3, c0, 0 @ Get domain register

str r6, [r1, #TI_CPU_DOMAIN] @ Save old domain register

ldr r6, [r2, #TI_CPU_DOMAIN]

#endif

switch_tls r1, r4, r5, r3, r7

#if defined(CONFIG_STACKPROTECTOR) && !defined(CONFIG_SMP)

ldr r7, [r2, #TI_TASK]

ldr r8, =__stack_chk_guard

.if (TSK_STACK_CANARY > IMM12_MASK)

add r7, r7, #TSK_STACK_CANARY & ~IMM12_MASK

.endif

ldr r7, [r7, #TSK_STACK_CANARY & IMM12_MASK]

#endif

#ifdef CONFIG_CPU_USE_DOMAINS

mcr p15, 0, r6, c3, c0, 0 @ Set domain register

#endif

mov r5, r0

add r4, r2, #TI_CPU_SAVE

ldr r0, =thread_notify_head

mov r1, #THREAD_NOTIFY_SWITCH

bl atomic_notifier_call_chain

#if defined(CONFIG_STACKPROTECTOR) && !defined(CONFIG_SMP)

str r7, [r8]

#endif

THUMB( mov ip, r4 )

mov r0, r5

ARM( ldmia r4, {r4 - sl, fp, sp, pc} ) @ Load all regs saved previously

THUMB( ldmia ip!, {r4 - sl, fp} ) @ Load all regs saved previously

THUMB( ldr sp, [ip], #4 )

THUMB( ldr pc, [ip] )

UNWIND(.fnend )

ENDPROC(__switch_to)五、调度tick

CFS调度器的tick是一个固定的时间间隔,通常为1毫秒。每当tick发生时,调度器会检查当前正在运行的进程是否已经运行了足够的时间,并且是否需要进行调度。

tick的作用有两个主要方面:

1)周期性的任务切换:CFS调度器会周期性地检查运行的进程,并确保每个进程在公平共享CPU时间片。通过将运行时间切割为较小的时间片,调度器可以尽量保证各个进程以相等的机会获得CPU资源。

2)决策调度点:tick也是CFS调度器进行重要决策的时间点。在每个tick中,调度器会评估进程的优先级和运行时间,并根据这些信息做出决策,比如选择下一个需要运行的进程。

void scheduler_tick(void)

{

int cpu = smp_processor_id();

struct rq *rq = cpu_rq(cpu);

struct task_struct *curr = rq->curr;

struct rq_flags rf;

unsigned long thermal_pressure;

arch_scale_freq_tick();

sched_clock_tick();

rq_lock(rq, &rf);

trace_android_rvh_tick_entry(rq);

// 更新cpu就绪队列中的时钟计数

update_rq_clock(rq);

thermal_pressure = arch_scale_thermal_pressure(cpu_of(rq));

update_thermal_load_avg(rq_clock_thermal(rq), rq, thermal_pressure);

curr->sched_class->task_tick(rq, curr, 0);

calc_global_load_tick(rq);

psi_task_tick(rq);

rq_unlock(rq, &rf);

perf_event_task_tick();

#ifdef CONFIG_SMP

rq->idle_balance = idle_cpu(cpu);

trigger_load_balance(rq);

#endif

trace_android_vh_scheduler_tick(rq);

}static void task_tick_fair(struct rq *rq, struct task_struct *curr, int queued)

{

struct cfs_rq *cfs_rq;

struct sched_entity *se = &curr->se;

for_each_sched_entity(se) {

cfs_rq = cfs_rq_of(se);

entity_tick(cfs_rq, se, queued);

}

if (static_branch_unlikely(&sched_numa_balancing))

task_tick_numa(rq, curr);

update_misfit_status(curr, rq);

update_overutilized_status(task_rq(curr));

}六、组调度

组调度结构体:

struct task_group {

struct cgroup_subsys_state css;

#ifdef CONFIG_FAIR_GROUP_SCHED

/* schedulable entities of this group on each CPU */

struct sched_entity **se;

/* runqueue "owned" by this group on each CPU */

struct cfs_rq **cfs_rq;

unsigned long shares;

......

}创建一个组调度:

struct task_group *sched_create_group(struct task_group *parent)

{

struct task_group *tg;

tg = kmem_cache_alloc(task_group_cache, GFP_KERNEL | __GFP_ZERO);

if (!tg)

return ERR_PTR(-ENOMEM);

if (!alloc_fair_sched_group(tg, parent))

goto err;

if (!alloc_rt_sched_group(tg, parent))

goto err;

alloc_uclamp_sched_group(tg, parent);

return tg;

err:

sched_free_group(tg);

return ERR_PTR(-ENOMEM);

}int alloc_fair_sched_group(struct task_group *tg, struct task_group *parent)

{

struct sched_entity *se;

struct cfs_rq *cfs_rq;

int i;

tg->cfs_rq = kcalloc(nr_cpu_ids, sizeof(cfs_rq), GFP_KERNEL);

if (!tg->cfs_rq)

goto err;

tg->se = kcalloc(nr_cpu_ids, sizeof(se), GFP_KERNEL);

if (!tg->se)

goto err;

tg->shares = NICE_0_LOAD;

init_cfs_bandwidth(tg_cfs_bandwidth(tg));

for_each_possible_cpu(i) {

cfs_rq = kzalloc_node(sizeof(struct cfs_rq),

GFP_KERNEL, cpu_to_node(i));

if (!cfs_rq)

goto err;

se = kzalloc_node(sizeof(struct sched_entity),

GFP_KERNEL, cpu_to_node(i));

if (!se)

goto err_free_rq;

init_cfs_rq(cfs_rq);

init_tg_cfs_entry(tg, cfs_rq, se, i, parent->se[i]);

init_entity_runnable_average(se);

}

return 1;

err_free_rq:

kfree(cfs_rq);

err:

return 0;

}

void init_tg_cfs_entry(struct task_group *tg, struct cfs_rq *cfs_rq,

struct sched_entity *se, int cpu,

struct sched_entity *parent)

{

struct rq *rq = cpu_rq(cpu);

cfs_rq->tg = tg;

cfs_rq->rq = rq;

init_cfs_rq_runtime(cfs_rq);

tg->cfs_rq[cpu] = cfs_rq;

tg->se[cpu] = se;

/* se could be NULL for root_task_group */

if (!se)

return;

if (!parent) {

se->cfs_rq = &rq->cfs;

se->depth = 0;

} else {

se->cfs_rq = parent->my_q;

se->depth = parent->depth + 1;

}

se->my_q = cfs_rq;

/* guarantee group entities always have weight */

update_load_set(&se->load, NICE_0_LOAD);

se->parent = parent;

}进程加入到组调度:

static void cpu_cgroup_attach(struct cgroup_taskset *tset)

{

struct task_struct *task;

struct cgroup_subsys_state *css;

cgroup_taskset_for_each(task, css, tset)

// 将进程加入到组调度中

sched_move_task(task);

trace_android_rvh_cpu_cgroup_attach(tset);

}

void sched_move_task(struct task_struct *tsk)

{

int queued, running, queue_flags =

DEQUEUE_SAVE | DEQUEUE_MOVE | DEQUEUE_NOCLOCK;

struct rq_flags rf;

struct rq *rq;

rq = task_rq_lock(tsk, &rf);

update_rq_clock(rq);

running = task_current(rq, tsk);

queued = task_on_rq_queued(tsk);

if (queued)

dequeue_task(rq, tsk, queue_flags);

if (running)

put_prev_task(rq, tsk);

sched_change_group(tsk, TASK_MOVE_GROUP);

if (queued)

enqueue_task(rq, tsk, queue_flags);

if (running) {

set_next_task(rq, tsk);

/*

* After changing group, the running task may have joined a

* throttled one but it's still the running task. Trigger a

* resched to make sure that task can still run.

*/

resched_curr(rq);

}

task_rq_unlock(rq, tsk, &rf);

}组调度实现原理总结:

1)创建组调度tg后,需要为每个cpu创建创建组调度内部的就绪队列

2)组调度以一个调度实体加入cpu的就绪队列

3)某个进程加入组调度的就绪队列后,需要从cpu的就绪队列中移除

4)选择被调度的实体是一个组调度时,需要再遍历组调度中的调度实体

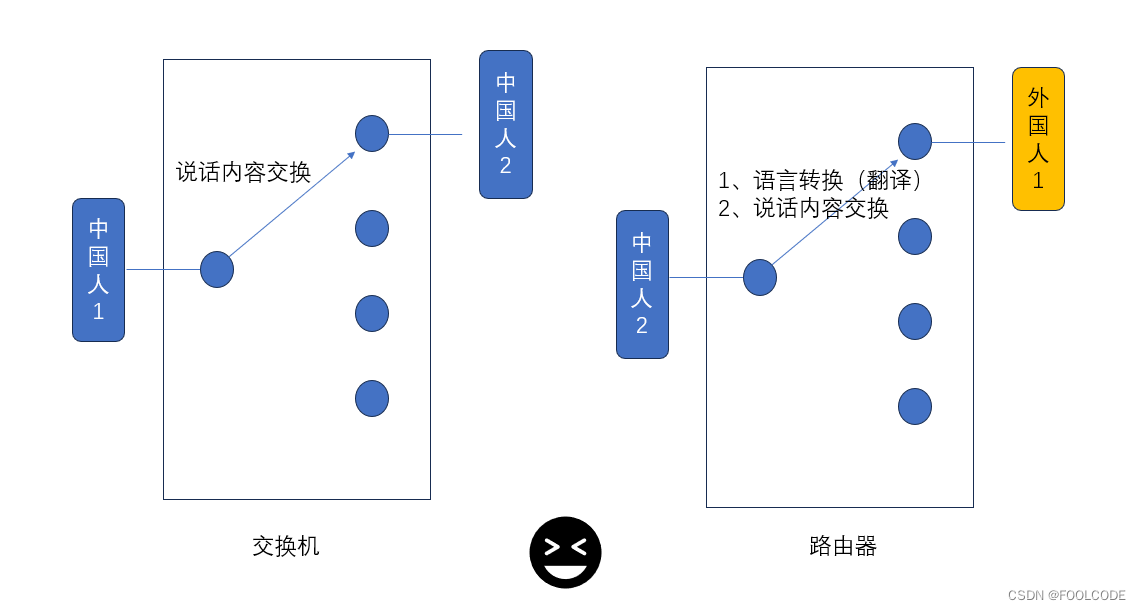

七、调度类&调度实体&就绪队列

在Linux中,将调度器公共的部分抽象,使用struct sched_class结构体描述一个具体的调度类。系统核心调度代码会通过struct sched_class结构体的成员调用具体调度类的核心算法。

struct sched_class {

const struct sched_class *next; //指向下一个调度类,按照优先级

void (*enqueue_task) (struct rq *rq, struct task_struct *p, int flags); //向该调度类的runqueue(就绪队列)中添加进程

void (*dequeue_task) (struct rq *rq, struct task_struct *p, int flags); //向该调度类的runqueue(就绪队列)中删除进程

void (*check_preempt_curr)(struct rq *rq, struct task_struct *p, int flags); //当一个进程被唤醒或者创建的时候,需要检查当前进程是否可以抢占当前cpu上正在运行的进程,如果可以抢占需要标记TIF_NEED_RESCHED flag

//从runqueue中选择一个最适合运行的task

struct task_struct * (*pick_next_task)(struct rq *rq, struct task_struct *prev,

struct rq_flags *rf);

......

}

static inline struct task_struct *pick_next_task(struct rq *rq,

struct task_struct *prev, struct rq_flags *rf)

{

const struct sched_class *class;

struct task_struct *p;

/* 按照优先级顺序便利所有的调度类,通过next指针便利单链表 */

for_each_class(class) {

p = class->pick_next_task(rq, prev, rf);

if (p)

return p;

}

}调度类 |

描述 |

调度策略 |

dl_sched_class |

deadline调度器 |

SCHED_DEADLINE |

rt_sched_class |

实时调度器 |

SCHED_FIFO、SCHED_RR |

fair_sched_class |

完全公平调度器 |

SCHED_NORMAL、SCHED_BATCH |

idle_sched_class |

Idle task调度器 |

SCHED_IDLE |

调度实体:

struct sched_entity {

struct load_weight load;

struct rb_node run_node;

unsigned int on_rq;

u64 sum_exec_runtime;

u64 vruntime;

}; 就绪队列:

struct rq {

struct cfs_rq cfs;

struct rt_rq rt;

struct dl_rq dl;

};

struct rb_root_cached {

struct rb_root rb_root; //红黑树的根节点

struct rb_node *rb_leftmost; //红黑树的最左边节点

};

struct cfs_rq {

struct load_weight load; //就绪队列管理的所有调度实体权重之和

unsigned int nr_running; //调度实体的个数

u64 min_vruntime; //就绪队列上的最小虚拟时间

struct rb_root_cached tasks_timeline; //就绪队列红黑树的信息

};八、调度延迟

调度延迟是保证每一个可运行进程都至少运行一次的时间间隔,也称作调度周期。

例如,每个进程都运行10ms,系统中总共有2个进程,那么调度延迟就是20ms。如果有5个进程,那么调度延迟就是50ms。如果现在保证调度延迟不变,固定是6ms,那么系统中如果有2个进程,那么每个进程运行3ms。如果有6个进程,那么每个进程运行1ms。如果有100个进程,那么每个进程分配到的时间就是0.06ms。随着进程的增加,每个进程分配的时间在减少,进程调度过于频繁,上下文切换时间开销就会变大。因此,CFS调度器的调度延迟时间的设定并不是固定的。当系统处于就绪态的进程少于一个定值(默认值8)的时候,调度延迟也是固定一个值不变(默认值6ms)。当系统就绪态进程个数超过这个值时,我们保证每个进程至少运行一定的时间才让出cpu。这个“至少一定的时间”被称为最小粒度时间。在CFS默认设置中,最小粒度时间是0.75ms。用变量sysctl_sched_min_granularity记录。因此,调度周期是一个动态变化的值。调度周期计算函数是__sched_period()

static u64 __sched_period(unsigned long nr_running)

{

// sched_nr_latency为系统处于就绪态的进程数量(默认值8)

if (unlikely(nr_running > sched_nr_latency))

return nr_running * sysctl_sched_min_granularity;

else

return sysctl_sched_latency;

}