PPO(Proximal Policy Optimization)

最好先看一下策略梯度优化,再看这篇文章,不然公式推不明白

PPO是Openai默认的强化学习策略

- On-policy:学习的agent和与环境交互的agent是同一个

∇ R ˉ θ = E τ ∼ p θ ( τ ) [ R ( τ ) ∇ log p θ ( τ ) ] \nabla \bar{R}_\theta = E_{\tau \sim p_\theta(\tau)}[R(\tau) \nabla \log p_\theta(\tau)] ∇Rˉθ=Eτ∼pθ(τ)[R(τ)∇logpθ(τ)]

- Off-policy:学习的agent和与环境交互的agent不同

这里的agent可以理解为上文的 actor

∇ R ˉ θ = E τ ∼ p θ ′ ( τ ) [ p θ ( τ ) p θ ′ ( τ ) R ( τ ) ∇ log p θ ( τ ) ] \nabla \bar{R}_\theta = E_{\tau \sim p_{\theta'}(\tau)}\left[\frac{p_\theta(\tau)}{p_{\theta'}(\tau)} R(\tau) \nabla \log p_\theta(\tau)\right] ∇Rˉθ=Eτ∼pθ′(τ)[pθ′(τ)pθ(τ)R(τ)∇logpθ(τ)]

为什么要off-policy ?

因为在on-policy时,你每一次采样后的 τ \tau τ 只能用一次,在更新 θ \theta θ 参数后就得重新采样

变成off-policy的好处就是一次采样多次使用,不在原始分布 p ( x ) p(x) p(x) 上采样,而是在伪分布 q ( x ) q(x) q(x) 上采样,然后来训练 p ( x ) p(x) p(x) 分布的参数 θ \theta θ

在做 off-policy 时,我们补充下述方法和公式推导

Importance Sampling

E x ∼ p [ f ( x ) ] ≈ 1 N ∑ i = 1 N f ( x i ) = ∫ f ( x ) p ( x ) d x = ∫ f ( x ) p ( x ) q ( x ) q ( x ) d x = E x ∼ q [ f ( x ) p ( x ) q ( x ) ] E_{x \sim p}[f(x)] \approx \frac{1}{N} \sum_{i=1}^N f(x^i)= \int f(x) p(x) \, dx = \int f(x) \frac{p(x)}{q(x)} q(x) \, dx = E_{x \sim q}\left[f(x) \frac{p(x)}{q(x)}\right] Ex∼p[f(x)]≈N1i=1∑Nf(xi)=∫f(x)p(x)dx=∫f(x)q(x)p(x)q(x)dx=Ex∼q[f(x)q(x)p(x)]

- 原来 x i x_i xi 是从 p ( x ) p(x) p(x) 分布中去抓取,但是通过上述推导,我们可以从 q ( x ) q(x) q(x) 分布中抓取 x i x_i xi

- p ( x ) p(x) p(x) 分布是实际分布, q ( x ) q(x) q(x) 分布是模仿实际分布的伪分布

在有伪分布 q ( x ) q(x) q(x) 后,我们就可以从中抽取 θ ′ \theta^{'} θ′ 来训练 θ \theta θ ,由于 θ ′ \theta^{'} θ′ 是固定的,所以可以重复使用,不用再像之前的 θ \theta θ 一样每次都得更新。

但importance sampling 也有一些问题:方差会不一样

方差公式: V a r [ X ] = E [ X 2 ] − ( E [ X ] ) 2 Var[X] = E[X^2]-(E[X])^2 Var[X]=E[X2]−(E[X])2

Var x ∼ p [ f ( x ) ] = E x ∼ p [ f ( x ) 2 ] − ( E x ∼ p [ f ( x ) ] ) 2 \text{Var}_{x \sim p}[f(x)] = E_{x \sim p}[f(x)^2] - (E_{x \sim p}[f(x)])^2 Varx∼p[f(x)]=Ex∼p[f(x)2]−(Ex∼p[f(x)])2

Var x ∼ q [ f ( x ) p ( x ) q ( x ) ] = E x ∼ q [ ( f ( x ) p ( x ) q ( x ) ) 2 ] − ( E x ∼ q [ f ( x ) p ( x ) q ( x ) ] ) 2 = ∫ q ( x ) f ( x ) 2 p ( x ) 2 q ( x ) 2 d x − ∫ q ( x ) 2 f ( x ) 2 p ( x ) 2 q ( x ) 2 d x = E x ∼ p [ f ( x ) 2 p ( x ) q ( x ) ] − ( E x ∼ p [ f ( x ) ] ) 2 \text{Var}_{x \sim q}\left[f(x) \frac{p(x)}{q(x)}\right] = E_{x \sim q}\left[\left(f(x) \frac{p(x)}{q(x)}\right)^2\right] - \left(E_{x \sim q}\left[f(x) \frac{p(x)}{q(x)}\right]\right)^2 \\ = \int q(x)f(x)^2\frac{p(x)^2}{q(x)^2}dx - \int q(x)^2f(x)^2\frac{p(x)^2}{q(x)^2}dx \\= E_{x \sim p}[f(x)^2\frac{p(x)}{q(x)}] - \left(E_{x \sim p}[f(x)]\right)^2 Varx∼q[f(x)q(x)p(x)]=Ex∼q[(f(x)q(x)p(x))2]−(Ex∼q[f(x)q(x)p(x)])2=∫q(x)f(x)2q(x)2p(x)2dx−∫q(x)2f(x)2q(x)2p(x)2dx=Ex∼p[f(x)2q(x)p(x)]−(Ex∼p[f(x)])2

观察上述两个方差等式我们可以看到,如果 p ( x ) p(x) p(x) 分布和 q ( x ) q(x) q(x) 分布一样,需要满足下述等式

E x ∼ p [ f ( x ) ] = E x ∼ q [ f ( x ) p ( x ) q ( x ) ] E_{x \sim p}[f(x)] = E_{x \sim q}\left[f(x) \frac{p(x)}{q(x)}\right] Ex∼p[f(x)]=Ex∼q[f(x)q(x)p(x)]

如果 p ( x ) q ( x ) \frac{p(x)}{q(x)} q(x)p(x) 差距很大,即实际分布和伪分布相差很多,那么实际方差与估计方差就会有很大的差距

理想状态下,只要有足够多的采样,上述问题就不是问题。但是实际情况是我们无法做到采样足够多的样本,所以 E x ∼ p [ f ( x ) ] = E x ∼ q [ f ( x ) p ( x ) q ( x ) ] E_{x \sim p}[f(x)] = E_{x \sim q}\left[f(x) \frac{p(x)}{q(x)}\right] Ex∼p[f(x)]=Ex∼q[f(x)q(x)p(x)] 的左右两侧会产生很大的差别

梯度更新

gradient for update = E ( s t , a t ) ∼ π θ [ A θ ( s t , a t ) ∇ log p θ ( a t ∣ s t ) ] = E ( s t , a t ) ∼ π θ ′ [ p θ ( s t , a t ) p θ ′ ( s t , a t ) A θ ( s t , a t ) ∇ log p θ ( a t ∣ s t ) ] = E ( s t , a t ) ∼ π θ ′ [ p θ ( a t ∣ s t ) p θ ( s t ) p θ ′ ( a t ∣ s t ) p θ ′ ( s t ) A θ ( s t , a t ) ∇ log p θ ( a t ∣ s t ) ] \text{gradient for update} = E_{(s_t,a_t) \sim \pi_\theta} \left[ A^\theta(s_t, a_t) \nabla \log p_\theta(a_t | s_t) \right] \\= E_{(s_t,a_t) \sim \pi_{\theta'}} \left[ \frac{p_\theta(s_t, a_t)}{p_{\theta'}(s_t, a_t)} A^\theta(s_t, a_t) \nabla \log p_\theta(a_t | s_t) \right] \\ = E_{(s_t,a_t) \sim \pi_{\theta'}} \left[ \frac{p_\theta(a_t | s_t) p_\theta(s_t)}{p_{\theta'}(a_t | s_t) p_{\theta'}(s_t)} A^\theta(s_t, a_t) \nabla \log p_\theta(a_t | s_t) \right] gradient for update=E(st,at)∼πθ[Aθ(st,at)∇logpθ(at∣st)]=E(st,at)∼πθ′[pθ′(st,at)pθ(st,at)Aθ(st,at)∇logpθ(at∣st)]=E(st,at)∼πθ′[pθ′(at∣st)pθ′(st)pθ(at∣st)pθ(st)Aθ(st,at)∇logpθ(at∣st)]

其中 p θ ( s t ) p θ ′ ( s t ) \frac{p_\theta(s_t)}{p_{\theta'}(s_t)} pθ′(st)pθ(st) 默认相等然后约掉(因为不好算,所以认为他们近似等价并约分)

有推导公式 ∇ f ( x ) = f ( x ) ∇ log f ( x ) \nabla f(x) = f(x) \nabla \log f(x) ∇f(x)=f(x)∇logf(x) ,观察上述梯度等式可以发现,

f ( x ) = p θ ( a t ∣ s t ) ∇ log f ( x ) = ∇ log p θ ( a t ∣ s t ) f(x) =p_\theta(a_t | s_t)\\ \nabla \log f(x)=\nabla \log p_\theta(a_t | s_t) f(x)=pθ(at∣st)∇logf(x)=∇logpθ(at∣st)

所以

∇ f ( x ) = f ( x ) ∇ log f ( x ) = p θ ( a t ∣ s t ) ∇ log p θ ( a t ∣ s t ) = ∇ p θ ( a t ∣ s t ) \nabla f(x)=f(x) \nabla \log f(x) =p_\theta(a_t | s_t)\nabla \log p_\theta(a_t | s_t)=\nabla p_\theta(a_t | s_t) ∇f(x)=f(x)∇logf(x)=pθ(at∣st)∇logpθ(at∣st)=∇pθ(at∣st)

即梯度为

∇ = E ( s t , a t ) ∼ π θ ′ [ ∇ p θ ( a t ∣ s t ) p θ ′ ( a t ∣ s t ) A θ ′ ( s t , a t ) ] \nabla = E_{(s_t,a_t) \sim \pi_{\theta'}} \left[ \frac{\nabla p_\theta(a_t | s_t)}{p_{\theta'}(a_t | s_t) } A^{\theta'}(s_t, a_t) \right] ∇=E(st,at)∼πθ′[pθ′(at∣st)∇pθ(at∣st)Aθ′(st,at)]

由梯度可以推出关于 θ ′ \theta' θ′ 的奖励目标函数

J θ ′ ( θ ) = E ( s t , a t ) ∼ π θ ′ [ p θ ( a t ∣ s t ) p θ ′ ( a t ∣ s t ) A θ ′ ( s t , a t ) ] J^{\theta'}(\theta) = E_{(s_t, a_t) \sim \pi_{\theta'}} \left[ \frac{p_\theta(a_t | s_t)}{p_{\theta'}(a_t | s_t)} A^{\theta'}(s_t, a_t) \right] Jθ′(θ)=E(st,at)∼πθ′[pθ′(at∣st)pθ(at∣st)Aθ′(st,at)]

PPO算法

J P P O k ( θ ) = J θ k ( θ ) − β K L ( θ , θ k ) J θ k ( θ ) ≈ ∑ ( s t , a t ) p θ ( a t ∣ s t ) p θ k ( a t ∣ s t ) A θ k ( s t , a t ) If K L ( θ , θ k ) ≥ K L max , increase β If K L ( θ , θ k ) ≤ K L min , decrease β J^{k}_{PPO}(\theta) = J^{\theta^k}(\theta) - \beta KL(\theta, \theta^k)\\ J^{\theta^k}(\theta) \approx \sum_{(s_t, a_t)} \frac{p_\theta(a_t | s_t)}{p_{\theta^k}(a_t | s_t)} A^{\theta^k}(s_t, a_t)\\ \text{If } KL(\theta, \theta^k) \geq KL_{\text{max}}, \text{ increase } \beta \\ \text{If } KL(\theta, \theta^k) \leq KL_{\text{min}}, \text{ decrease } \beta \\ JPPOk(θ)=Jθk(θ)−βKL(θ,θk)Jθk(θ)≈(st,at)∑pθk(at∣st)pθ(at∣st)Aθk(st,at)If KL(θ,θk)≥KLmax, increase βIf KL(θ,θk)≤KLmin, decrease β

在后面加上了KL散度

- 初始化策略参数 θ 0 \theta^0 θ0。

- 在每次迭代中:

- 使用 θ k \theta^k θk 与环境交互,收集 { s t , a t } \{s_t, a_t\} {st,at},并计算优势 A θ k ( s t , a t ) A^{\theta^k}(s_t, a_t) Aθk(st,at)。

- 找到优化 J P P O ( θ ) J_{PPO}(\theta) JPPO(θ)的 θ \theta θ。

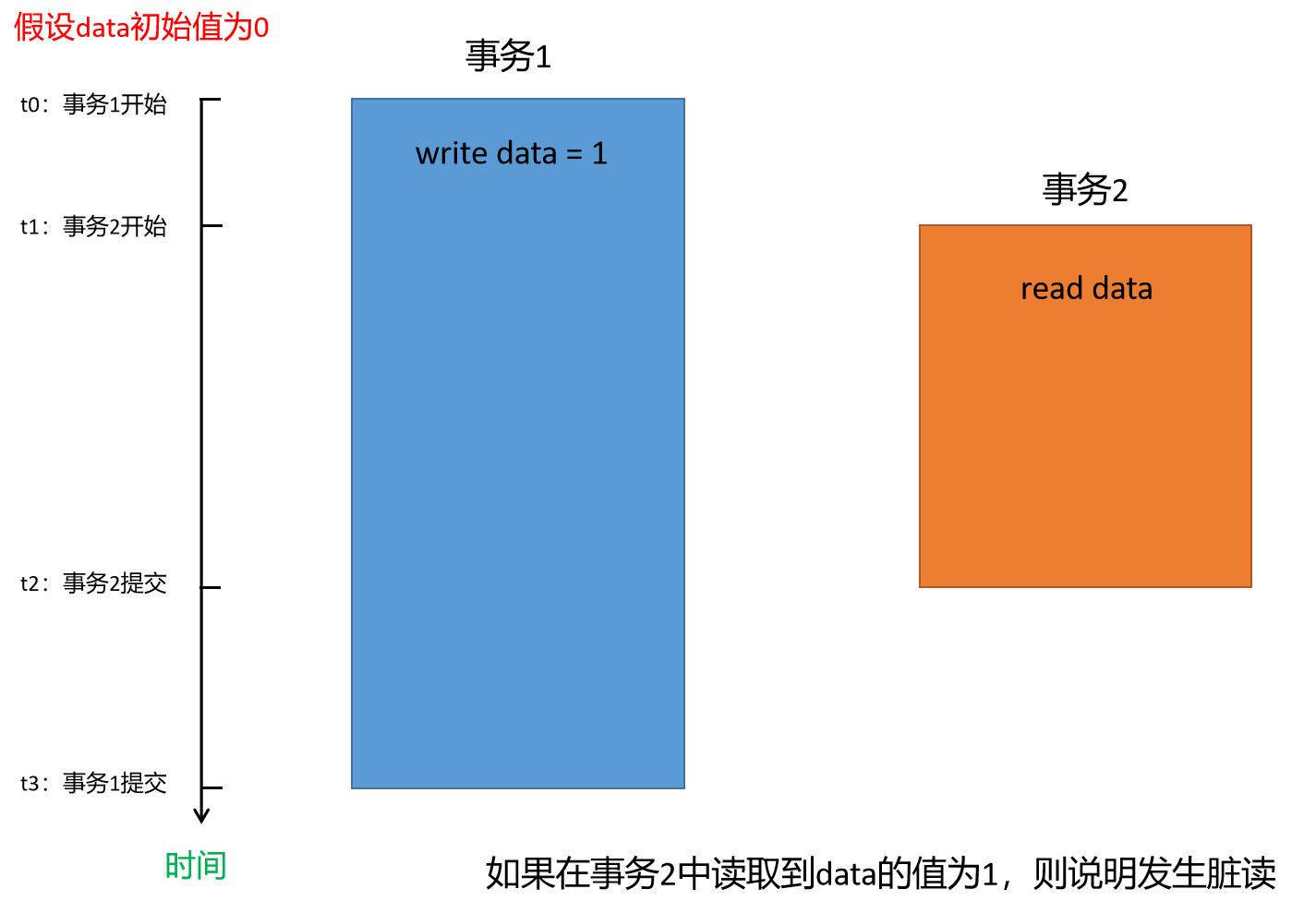

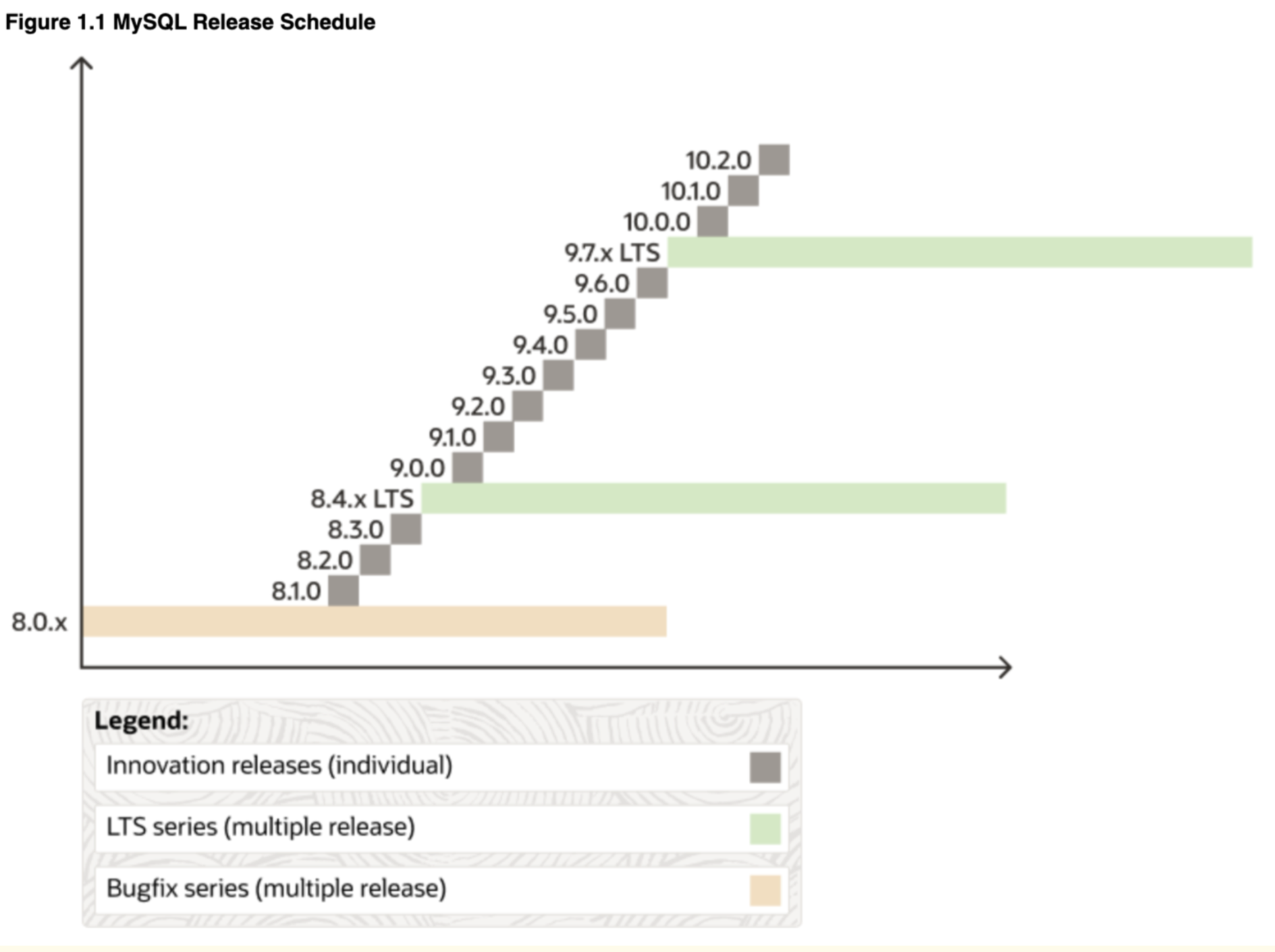

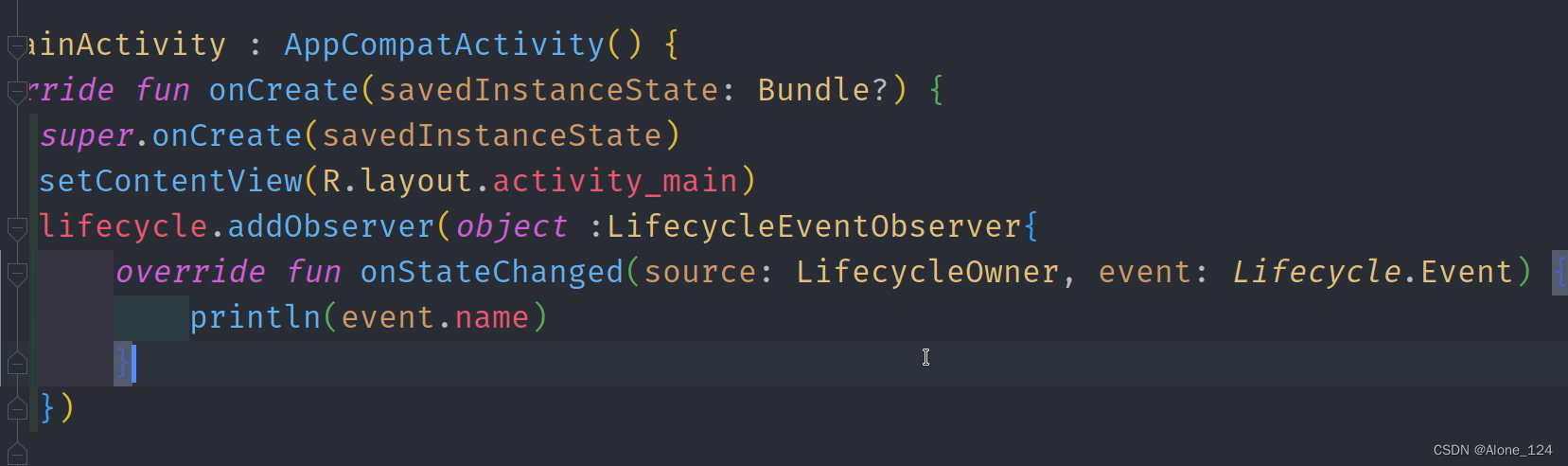

PPO的第二种方式

J P P O 2 θ k ( θ ) ≈ ∑ ( s t , a t ) min ( p θ ( a t ∣ s t ) p θ k ( a t ∣ s t ) A θ k ( s t , a t ) , clip ( p θ ( a t ∣ s t ) p θ k ( a t ∣ s t ) , 1 − ξ , 1 + ξ ) A θ k ( s t , a t ) ) J_{PPO2}^{\theta^k}(\theta) \approx \sum_{(s_t, a_t)} \min \left( \frac{p_\theta(a_t | s_t)}{p_{\theta^k}(a_t | s_t)} A^{\theta^k}(s_t, a_t), \text{clip}\left(\frac{p_\theta(a_t | s_t)}{p_{\theta^k}(a_t | s_t)}, 1 - \xi, 1 + \xi\right) A^{\theta^k}(s_t, a_t) \right) JPPO2θk(θ)≈(st,at)∑min(pθk(at∣st)pθ(at∣st)Aθk(st,at),clip(pθk(at∣st)pθ(at∣st),1−ξ,1+ξ)Aθk(st,at))

clip是一个选择函数

当 p θ ( a t ∣ s t ) p θ k ( a t ∣ s t ) < 1 − ξ \frac{p_\theta(a_t | s_t)}{p_{\theta^k}(a_t | s_t)} < 1 -\xi pθk(at∣st)pθ(at∣st)<1−ξ 时选 1 − ξ 1 -\xi 1−ξ

当 p θ ( a t ∣ s t ) p θ k ( a t ∣ s t ) > 1 + ξ \frac{p_\theta(a_t | s_t)}{p_{\theta^k}(a_t | s_t)} > 1 +\xi pθk(at∣st)pθ(at∣st)>1+ξ 时选 1 + ξ 1 +\xi 1+ξ

当 1 − ϵ < p θ ( a t ∣ s t ) p θ k ( a t ∣ s t ) < 1 + ξ 1 -\epsilon < \frac{p_\theta(a_t | s_t)}{p_{\theta^k}(a_t | s_t)} < 1 +\xi 1−ϵ<pθk(at∣st)pθ(at∣st)<1+ξ 时选 p θ ( a t ∣ s t ) p θ k ( a t ∣ s t ) \frac{p_\theta(a_t | s_t)}{p_{\theta^k}(a_t | s_t)} pθk(at∣st)pθ(at∣st)

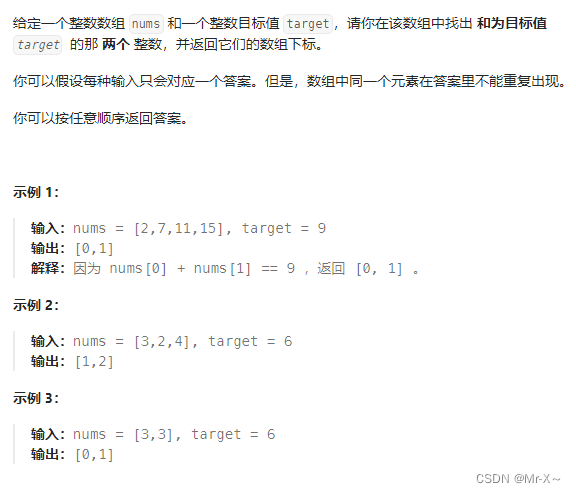

绿线是 p θ ( a t ∣ s t ) p θ k ( a t ∣ s t ) A θ k ( s t , a t ) \frac{p_\theta(a_t | s_t)}{p_{\theta^k}(a_t | s_t)} A^{\theta^k}(s_t, a_t) pθk(at∣st)pθ(at∣st)Aθk(st,at)的范围

蓝线是clip选择后的范围

x轴是 p θ ( a t ∣ s t ) p θ k ( a t ∣ s t ) \frac{p_\theta(a_t | s_t)}{p_{\theta^k}(a_t | s_t)} pθk(at∣st)pθ(at∣st) 的比值

我们不希望真实分布 p θ ( x ) p_\theta(x) pθ(x) 和伪分布 p θ ′ ( x ) p_{\theta'}(x) pθ′(x) 相差太大,也就是这个比值最好是在1左右

这里尝试对上图进行解释:

- 当 A > 0 A > 0 A>0 时,说明 s 和 a 获得的奖励是好的,说明此时 p θ ( a t ∣ s t ) p_\theta(a_t | s_t) pθ(at∣st) 做出的决策是正确的,我们希望它越大越好,此时目标函数(红线)会把概率往上推,但只能推到一定的程度

- 当 A < 0 A < 0 A<0 时,说明 s 和 a 获得的奖励是好的,说明此时 p θ ( a t ∣ s t ) p_\theta(a_t | s_t) pθ(at∣st) 做出的决策是错误的,我们希望它越小越好,此时目标函数(红线)就把概率往下推,但也不会推的很小

![[华为od]C 卷 园区某部门举办了Family Day,邀请员工及其家属参加](https://img-blog.csdnimg.cn/direct/2c0da3731a744d48bd8d9e8be9466cbf.png)