1 meaning and purpose

- Scatter/gather mappings are a special type of streaming DMA mapping that transfers several buffer regions in a single operation, instead of mapping each buffer individually and transferring them one by one.

- Consider a scenario in which several buffers, which might not be physically contiguous, all need to be transferred to or from the device at the same time. A scatter/gather mapping handles this case.

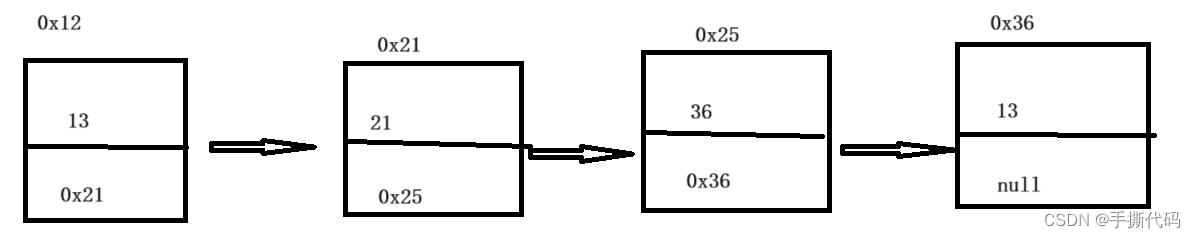

- The two most important structures are scatterlist and sg_table. A scatterlist entry describes one buffer region (its page, offset, length, and DMA address). An sg_table holds a pointer to the first scatterlist entry together with the entry counts.

struct scatterlist {
    unsigned long page_link;
    unsigned int offset;
    unsigned int length;
    dma_addr_t dma_address;
};

struct sg_table {
    struct scatterlist *sgl;    /* the list */
    unsigned int nents;         /* number of mapped entries */
    unsigned int orig_nents;    /* original size of list */
};

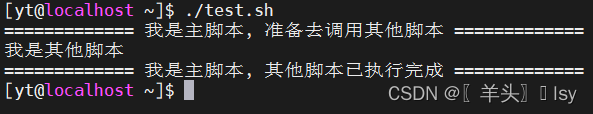
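To make the relationship between the two structures concrete, here is a minimal user-space sketch. The names `demo_sg`, `demo_sg_table`, `demo_total_len`, and `demo_three_buffers` are hypothetical simplifications, not kernel API: the model stores a plain buffer pointer where the kernel stores `page_link`, and it leaves out the DMA address entirely.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical user-space stand-in for struct scatterlist: one buffer region. */
struct demo_sg {
    void *buf;            /* start of the region (the kernel keeps a page pointer) */
    unsigned int offset;  /* offset of the data inside the region */
    unsigned int length;  /* number of bytes to transfer */
};

/* Hypothetical stand-in for struct sg_table: points at the first entry. */
struct demo_sg_table {
    struct demo_sg *sgl;  /* the list */
    unsigned int nents;   /* number of entries */
};

/* Sum the lengths of all entries, i.e. the size of the whole transfer. */
static size_t demo_total_len(const struct demo_sg_table *t)
{
    size_t total = 0;

    assert(t != NULL);
    for (unsigned int i = 0; i < t->nents; i++)
        total += t->sgl[i].length;
    return total;
}

/* Describe three separate buffers with one table and return the total size. */
static size_t demo_three_buffers(void)
{
    static char a[4096], b[8192], c[512];
    struct demo_sg list[3] = {
        { a, 0, sizeof(a) },
        { b, 0, sizeof(b) },
        { c, 0, sizeof(c) },
    };
    struct demo_sg_table table = { list, 3 };

    return demo_total_len(&table);
}
```

Calling `demo_three_buffers()` from a test program returns the combined size of the three regions, which is what a single scatter/gather transfer would cover.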

2 construct the sg_table and scatterlist

2.1 construct sg_table

- Allocate the sg_table: sg_alloc_table()

- Fill each entry of the sg_table: sg_set_page()

#define SG_CHAIN    0x01UL
#define SG_END      0x02UL

/*
 * We overload the LSB of the page pointer to indicate whether it's
 * a valid sg entry, or whether it points to the start of a new scatterlist.
 */
#define SG_PAGE_LINK_MASK (SG_CHAIN | SG_END)

static inline unsigned int __sg_flags(struct scatterlist *sg)
{
    return sg->page_link & SG_PAGE_LINK_MASK;
}

static inline struct scatterlist *sg_chain_ptr(struct scatterlist *sg)
{
    return (struct scatterlist *)(sg->page_link & ~SG_PAGE_LINK_MASK);
}

static inline bool sg_is_chain(struct scatterlist *sg)
{
    return __sg_flags(sg) & SG_CHAIN;
}

static inline bool sg_is_last(struct scatterlist *sg)
{
    return __sg_flags(sg) & SG_END;
}

struct scatterlist *sg_next(struct scatterlist *sg)
{
    if (sg_is_last(sg))
        return NULL;

    sg++;
    if (unlikely(sg_is_chain(sg)))
        sg = sg_chain_ptr(sg);

    return sg;
}

/* Loop over each sg element, following the pointer to a new list if necessary */
#define for_each_sg(sglist, sg, nr, __i) \
    for (__i = 0, sg = (sglist); __i < (nr); __i++, sg = sg_next(sg))

#define sg_dma_address(sg)  ((sg)->dma_address)
#define sg_dma_len(sg)      ((sg)->length)

static inline struct page *sg_page(struct scatterlist *sg)
{
    return (struct page *)((sg)->page_link & ~SG_PAGE_LINK_MASK);
}

static inline void sg_assign_page(struct scatterlist *sg, struct page *page)
{
    unsigned long page_link = sg->page_link & (SG_CHAIN | SG_END);

    /*
     * In order for the low bit stealing approach to work, pages
     * must be aligned at a 32-bit boundary as a minimum.
     */
    BUG_ON((unsigned long)page & SG_PAGE_LINK_MASK);
    sg->page_link = page_link | (unsigned long) page;
}

/**
 * sg_set_page - Set sg entry to point at given page
 * @sg: SG entry
 * @page: The page
 * @len: Length of data
 * @offset: Offset into page
 *
 * Description:
 * Use this function to set an sg entry pointing at a page, never assign
 * the page directly. We encode sg table information in the lower bits
 * of the page pointer. See sg_page() for looking up the page belonging
 * to an sg entry.
 *
 **/
static inline void sg_set_page(struct scatterlist *sg, struct page *page,
                               unsigned int len, unsigned int offset)
{
    sg_assign_page(sg, page);
    sg->offset = offset;
    sg->length = len;
}
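The helpers above rely on the two low bits of an aligned pointer always being zero, so those bits can carry flags. The following user-space sketch illustrates that idea only. `demo_entry`, `demo_assign`, `demo_ptr`, `demo_flags`, and `demo_roundtrip` are hypothetical names, and the sketch assumes the target object is at least 4-byte aligned, which holds for a `long` on common ABIs.

```c
#include <assert.h>
#include <stdint.h>

#define DEMO_CHAIN 0x01UL
#define DEMO_END   0x02UL
#define DEMO_MASK  (DEMO_CHAIN | DEMO_END)

/* Hypothetical entry: pointer and flags share one word, like page_link. */
struct demo_entry {
    uintptr_t link;
};

/* Store a pointer while keeping whatever flag bits are already set. */
static void demo_assign(struct demo_entry *e, void *p)
{
    uintptr_t flags = e->link & DEMO_MASK;

    /* The two low bits must be free, so p must be at least 4-byte aligned. */
    assert(((uintptr_t)p & DEMO_MASK) == 0);
    e->link = flags | (uintptr_t)p;
}

/* Recover the pointer by masking the flag bits off. */
static void *demo_ptr(const struct demo_entry *e)
{
    return (void *)(e->link & ~(uintptr_t)DEMO_MASK);
}

/* Recover the flags by masking the pointer bits off. */
static unsigned int demo_flags(const struct demo_entry *e)
{
    return (unsigned int)(e->link & DEMO_MASK);
}

/* Returns 1 if the pointer and the END flag both survive, 0 otherwise. */
static int demo_roundtrip(void)
{
    static long target;                  /* word-aligned on common ABIs */
    struct demo_entry e = { DEMO_END };  /* entry already marked as last */

    demo_assign(&e, &target);
    return demo_ptr(&e) == (void *)&target && demo_flags(&e) == DEMO_END;
}
```

This is why the kernel comment says never to assign the page directly: a plain assignment to the shared word would wipe the flag bits that mark the end of a list or a chain link.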

static struct sg_table *i915_gem_map_dma_buf(struct dma_buf_attachment *attach,
                                             enum dma_data_direction dir)
{
    struct drm_i915_gem_object *obj = dma_buf_to_obj(attach->dmabuf);
    struct sg_table *sgt;
    struct scatterlist *src, *dst;
    int ret, i;

    /*
     * Make a copy of the object's sgt, so that we can make an independent
     * mapping
     */
    sgt = kmalloc(sizeof(*sgt), GFP_KERNEL);
    if (!sgt) {
        ret = -ENOMEM;
        goto err;
    }

    ret = sg_alloc_table(sgt, obj->mm.pages->orig_nents, GFP_KERNEL);
    if (ret)
        goto err_free;

    dst = sgt->sgl;

    /*
     * #define for_each_sg(sglist, sg, nr, __i) \
     *     for (__i = 0, sg = (sglist); __i < (nr); __i++, sg = sg_next(sg))
     */
    for_each_sg(obj->mm.pages->sgl, src, obj->mm.pages->orig_nents, i) {
        sg_set_page(dst, sg_page(src), src->length, 0);
        dst = sg_next(dst);
    }

    ret = dma_map_sgtable(attach->dev, sgt, dir, DMA_ATTR_SKIP_CPU_SYNC);
    if (ret)
        goto err_free_sg;

    return sgt;

err_free_sg:
    sg_free_table(sgt);
err_free:
    kfree(sgt);
err:
    return ERR_PTR(ret);
}
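The function above follows a fixed sequence: allocate the table structure, allocate its entries, copy each source entry, then unwind in reverse order on failure. The sketch below mirrors that sequence in user space under loud assumptions: every `demo_*` name is hypothetical, `calloc()` stands in for `sg_alloc_table()`, and the DMA mapping step is left out because it has no user-space counterpart.

```c
#include <assert.h>
#include <stdlib.h>

/* Hypothetical simplified entry and table (not the kernel structures). */
struct demo_sg { void *buf; unsigned int length; };
struct demo_sg_table { struct demo_sg *sgl; unsigned int nents; };

/* Stand-in for sg_alloc_table(): allocate zeroed entries. Returns 0 or -1. */
static int demo_alloc_table(struct demo_sg_table *t, unsigned int nents)
{
    t->sgl = calloc(nents, sizeof(*t->sgl));
    if (!t->sgl)
        return -1;
    t->nents = nents;
    return 0;
}

/* Stand-in for sg_free_table(): release the entries, not the table itself. */
static void demo_free_table(struct demo_sg_table *t)
{
    free(t->sgl);
    t->sgl = NULL;
    t->nents = 0;
}

/* Build an independent copy of src; returns NULL on allocation failure. */
static struct demo_sg_table *demo_copy_table(const struct demo_sg_table *src)
{
    struct demo_sg_table *copy;

    assert(src != NULL && src->nents > 0);

    copy = malloc(sizeof(*copy));
    if (!copy)
        goto err;

    if (demo_alloc_table(copy, src->nents))
        goto err_free;

    /* Same buffer regions, but the entries belong to the copy. */
    for (unsigned int i = 0; i < src->nents; i++) {
        copy->sgl[i].buf = src->sgl[i].buf;
        copy->sgl[i].length = src->sgl[i].length;
    }
    return copy;

err_free:
    free(copy);
err:
    return NULL;
}

/* Copy a two-entry table and check the result. Returns 1 on success. */
static int demo_copy_check(void)
{
    char a[16], b[32];
    struct demo_sg list[2] = { { a, sizeof(a) }, { b, sizeof(b) } };
    struct demo_sg_table src = { list, 2 };
    struct demo_sg_table *copy = demo_copy_table(&src);
    int ok;

    if (!copy)
        return 0;
    ok = copy->sgl != src.sgl &&
         copy->nents == 2 &&
         copy->sgl[1].buf == (void *)b &&
         copy->sgl[1].length == sizeof(b);
    demo_free_table(copy);
    free(copy);
    return ok;
}
```

The labels are ordered so that each failure point jumps to the cleanup for exactly the steps that already succeeded, which is the same layout as `err_free_sg`, `err_free`, and `err` in the kernel function.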

3 parse the sg_table and scatterlist

We use the unsigned long page_link field in the scatterlist struct to place the page pointer AND encode information about the sg table as well. The two lower bits are reserved for this information.

If bit 0 is set, then the page_link contains a pointer to the next sg table list. Otherwise the next entry is at sg + 1.

If bit 1 is set, then this sg entry is the last element in a list.

see sg_next()
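The two flag bits can be exercised in a small user-space model. The sketch below is an illustration under assumptions, not kernel code: `demo_sg`, `demo_next`, and `demo_chained_total` are hypothetical names, and two local arrays stand in for two separately allocated scatterlist chunks. The last slot of the first array is a chain entry whose pointer leads to the second array.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define DEMO_CHAIN 0x01UL
#define DEMO_END   0x02UL
#define DEMO_MASK  (DEMO_CHAIN | DEMO_END)

/* Hypothetical entry: link holds a pointer plus the two flag bits. */
struct demo_sg {
    uintptr_t link;
    unsigned int length;
};

/* Same decision order as sg_next(): END first, then step, then follow a chain. */
static struct demo_sg *demo_next(struct demo_sg *sg)
{
    if (sg->link & DEMO_END)
        return NULL;

    sg++;
    if (sg->link & DEMO_CHAIN)
        sg = (struct demo_sg *)(sg->link & ~(uintptr_t)DEMO_MASK);

    return sg;
}

/* Walk two chained arrays and return the sum of all data entry lengths. */
static unsigned int demo_chained_total(void)
{
    /* Two data entries followed by one slot reserved for the chain link. */
    struct demo_sg first[3] = { { 0, 100 }, { 0, 200 }, { 0, 0 } };
    /* Two data entries; the second one carries the END flag. */
    struct demo_sg second[2] = { { 0, 300 }, { DEMO_END, 400 } };
    unsigned int total = 0;

    /* The low bits of the target address must be free for the flags. */
    assert(((uintptr_t)second & DEMO_MASK) == 0);
    first[2].link = (uintptr_t)second | DEMO_CHAIN;

    for (struct demo_sg *sg = first; sg; sg = demo_next(sg))
        total += sg->length;

    return total;
}
```

The chain slot itself is never visited as a data entry: the walk steps onto it, sees bit 0, and immediately jumps to the first entry of the next array.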

3.1 scenario 1: importing the pages of a dma-buf

static int i915_gem_object_get_pages_dmabuf(struct drm_i915_gem_object *obj)
{
    struct sg_table *pages;
    unsigned int sg_page_sizes;

    /* Ends up in i915_gem_map_dma_buf() when the exporter is i915. */
    pages = dma_buf_map_attachment(obj->base.import_attach, DMA_BIDIRECTIONAL);
    if (IS_ERR(pages))
        return PTR_ERR(pages);

    /*
     * Walk every scatterlist entry in the sg_table and collect the
     * segment sizes in use (the lengths are OR-ed into a bitmask,
     * not summed).
     */
    sg_page_sizes = i915_sg_page_sizes(pages->sgl);

    /*
     * Store the sg_table in obj. Among other things this does:
     *
     * obj->mm.get_page.sg_pos = pages->sgl;
     * obj->mm.get_page.sg_idx = 0;
     * obj->mm.pages = pages;
     */
    __i915_gem_object_set_pages(obj, pages, sg_page_sizes);

    return 0;
}

3.2 scenario 2: looking up the DMA address of a page

dma_addr_t
i915_gem_object_get_dma_address(struct drm_i915_gem_object *obj,
                                unsigned long n)
{
    struct scatterlist *sg;
    unsigned int offset;

    sg = i915_gem_object_get_sg(obj, n, &offset);
    return sg_dma_address(sg) + (offset << PAGE_SHIFT);
}

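struct scatterlist *
i915_gem_object_get_sg(struct drm_i915_gem_object *obj,
                       unsigned int n,
                       unsigned int *offset)
{
    struct i915_gem_object_page_iter *iter = &obj->mm.get_page;
    struct scatterlist *sg;
    unsigned int idx, count;

    /* Start from the position stored in the object's page iterator. */
    sg = iter->sg_pos;
    idx = iter->sg_idx;
    count = __sg_page_count(sg);

    /* Skip whole entries until the one that contains page n is reached. */
    while (idx + count <= n) {
        idx += count;
        sg = ____sg_next(sg);
        count = __sg_page_count(sg);
    }

    /*
     * This excerpt leaves out the locking and lookup caching of the
     * full function. The result is the entry plus the page index
     * inside that entry.
     */
    *offset = n - idx;
    return sg;
}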
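The lookup reduces to two steps: find the entry whose page range contains page n, then add the page offset inside that entry to the entry's DMA address. The sketch below shows that arithmetic in user space. It is a simplified model with hypothetical names (`demo_sg`, `demo_get_sg`, `demo_get_dma_address`, `demo_lookup_page5`), it assumes 4 KiB pages, and it scans a flat array from the start on every call instead of resuming from a stored position.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define DEMO_PAGE_SHIFT 12   /* assumption: 4 KiB pages */

/* Hypothetical mapped entry: bus address plus length in bytes. */
struct demo_sg {
    uint64_t dma_address;    /* address of the first byte of the segment */
    unsigned int length;     /* bytes, a multiple of the page size */
};

/* Number of pages covered by one entry. */
static unsigned int demo_page_count(const struct demo_sg *sg)
{
    return sg->length >> DEMO_PAGE_SHIFT;
}

/*
 * Find the entry that contains page n. On success *offset is the page
 * index inside that entry. Returns NULL if n is past the last page.
 */
static const struct demo_sg *demo_get_sg(const struct demo_sg *list,
                                         size_t nents, unsigned int n,
                                         unsigned int *offset)
{
    unsigned int idx = 0;    /* index of the first page of the current entry */

    assert(list != NULL && offset != NULL);
    for (size_t i = 0; i < nents; i++) {
        unsigned int count = demo_page_count(&list[i]);

        if (n < idx + count) {
            *offset = n - idx;
            return &list[i];
        }
        idx += count;
    }
    return NULL;
}

/* DMA address of page n: entry base plus the page offset in bytes. */
static uint64_t demo_get_dma_address(const struct demo_sg *list,
                                     size_t nents, unsigned int n)
{
    unsigned int offset;
    const struct demo_sg *sg = demo_get_sg(list, nents, n, &offset);

    assert(sg != NULL);
    return sg->dma_address + ((uint64_t)offset << DEMO_PAGE_SHIFT);
}

/* Three segments of 2, 4, and 1 pages; look up page 5. */
static uint64_t demo_lookup_page5(void)
{
    const struct demo_sg list[] = {
        { 0x10000, 2 << DEMO_PAGE_SHIFT },
        { 0x80000, 4 << DEMO_PAGE_SHIFT },
        { 0x40000, 1 << DEMO_PAGE_SHIFT },
    };

    return demo_get_dma_address(list, 3, 5);
}
```

In this example page 5 is the fourth page of the second segment (pages 2 to 5), so the result is that segment's base address plus three pages.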